Analyzing customer feedback using machine learning

Prabhat Jha

CTO, Wootric

This guest post explains how Wootric's platform uses the Google Cloud Natural Language API to complement its own machine learning and save on infrastructure and engineering costs.

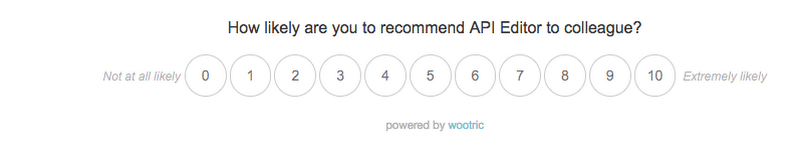

Wootric is a customer feedback management platform that allows businesses to gauge and quantify customer loyalty through proven feedback metrics such as Net Promoter Score (NPS), Customer Satisfaction (CSAT) and Customer Effort Score (CES).

For example, here's an NPS survey that we present in-app (we also support mobile, email and SMS) that usually takes a user less than 30 seconds to complete.

Step 1: Quantitative Feedback

As you can see, the question above is very specific and objective. Applying simple arithmetic to the scores from your customer base (the percentage of promoters minus the percentage of detractors) gives you your Net Promoter Score, and allows you to sort your customers into sets of {Promoters, Passives and Detractors}.
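The arithmetic can be sketched in a few lines. This is a minimal illustration that assumes the standard NPS convention on a 0-10 scale (9-10 are promoters, 7-8 are passives, 0-6 are detractors); the function names are our own for this example and are not part of any Wootric API.

```python
from collections import Counter


def nps_segment(score: int) -> str:
    """Map a 0-10 survey score to its standard NPS segment."""
    if not 0 <= score <= 10:
        raise ValueError(f"NPS scores must be between 0 and 10, got {score}")
    if score >= 9:
        return "Promoter"
    if score >= 7:
        return "Passive"
    return "Detractor"


def net_promoter_score(scores: list[int]) -> float:
    """Return % promoters minus % detractors, a value from -100 to 100."""
    if not scores:
        raise ValueError("At least one score is required")
    counts = Counter(nps_segment(s) for s in scores)
    return 100.0 * (counts["Promoter"] - counts["Detractor"]) / len(scores)


# Illustrative scores only: 4 promoters, 3 passives, 3 detractors.
sample_scores = [10, 9, 8, 7, 6, 3, 10, 9, 5, 8]
print(net_promoter_score(sample_scores))
```

Passives count toward the total number of responses but toward neither side of the subtraction, which is why a large passive group pulls the score toward zero.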

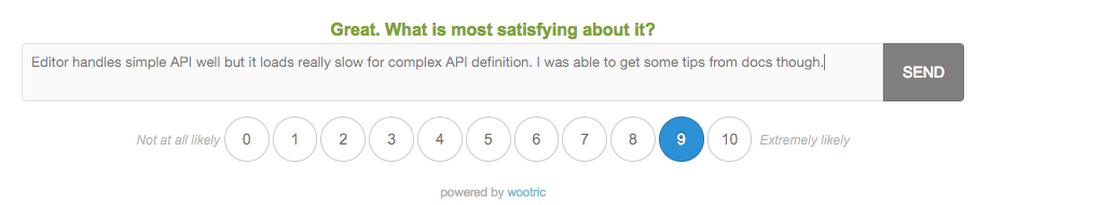

Step 2: Qualitative Feedback

While the NPS is important, it's the open-ended follow-up feedback in Step 2 that provides real insight. Open-ended feedback is unstructured, however, and difficult to make sense of programmatically. This is where we use machine learning in the form of Natural Language Processing (NLP) to extract deeper meaning.

Specifically, we use machine learning and the Cloud Natural Language API in the following ways:

- To detect anomalies and inconsistencies in sentiment between score and feedback

- To automatically classify and route unstructured feedback

- To detect entities such as people or companies

Anomaly detection using sentiment analysis

Typically, the sentiment of the feedback from detractors and most promoters is consistent with their score, reducing the need for sentiment analysis for these data sets. However, our data sets show a significant number of passives (and even promoters) with inconsistencies that suggest they may become detractors if their feedback is not addressed in a timely manner. Because the NL API returns both a sentiment score and a magnitude, it helps to surface problematic feedback by further sub-grouping these responses into {Passive Negative, Passive Positive, Promoter Negative, Promoter Positive}. Moreover, by running a parity check between NPS and sentiment, we can flag these responses appropriately so that the customer relations team can proactively reach out to the customers who wrote them. This is especially helpful for organizations that receive an unmanageable amount of feedback on a weekly basis.
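A parity check of this kind might look like the sketch below. It assumes a sentiment score in the range -1.0 to 1.0 and a non-negative magnitude, as the Natural Language API returns; the cutoffs (`score_cutoff`, `min_magnitude`), the extra "Neutral" label and the function names are illustrative assumptions, not Wootric's production values.

```python
def nps_segment(score: int) -> str:
    """Standard NPS buckets on a 0-10 scale."""
    if score >= 9:
        return "Promoter"
    if score >= 7:
        return "Passive"
    return "Detractor"


def sentiment_label(score: float, magnitude: float,
                    score_cutoff: float = 0.25,
                    min_magnitude: float = 0.5) -> str:
    """Collapse score and magnitude into a coarse label.

    Weak or low-magnitude sentiment is treated as Neutral (assumed cutoffs).
    """
    if magnitude < min_magnitude or abs(score) < score_cutoff:
        return "Neutral"
    return "Positive" if score > 0 else "Negative"


def classify_response(nps_score: int, sentiment_score: float,
                      magnitude: float) -> dict:
    """Sub-group a response and flag score/sentiment mismatches."""
    segment = nps_segment(nps_score)
    label = sentiment_label(sentiment_score, magnitude)
    # Parity check: a high score with negative text, or a low score with
    # positive text, is inconsistent and worth a closer look.
    mismatch = (
        (segment in ("Promoter", "Passive") and label == "Negative")
        or (segment == "Detractor" and label == "Positive")
    )
    return {"subgroup": f"{segment} {label}", "needs_follow_up": mismatch}


# A passive score (8) paired with clearly negative text gets flagged.
print(classify_response(8, -0.6, 1.2))
```

Using magnitude as a gate keeps short, low-emotion comments from being flagged on the strength of a slightly negative score alone.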

Automatic tagging and routing using syntax analysis

To understand the benefits of automatic tagging and routing of customer feedback, let’s first think about how our customers manually tag and classify the open-ended feedback they receive. An enterprise SaaS company, for example, usually has tags like UX, Documentation, Performance, Usability, Cost, Feature Request, etc. Accordingly, feedback for each of these classifications gets routed to product managers, marketers, support, sales, etc. With thousands of survey responses coming in each week, the company needs a dedicated team to read each piece of feedback, compare it to the NPS score and route it to the right team for resolution. This process is laborious and expensive, has a long turnaround time and does not scale.

To automatically tag feedback with high confidence and route it to the relevant team through email or Slack channels, we use the Natural Language API's syntax analysis method. The JSON response below shows what the syntax method returns for the word “imaginable”:

We use the lemma (the root form of a word), the Part of Speech (PoS) tag and the dependency tree in our routing algorithm. In addition, because we have huge volumes of manually tagged data from before we implemented auto-tagging, we're currently complementing the Natural Language API output with some of our own machine learning algorithms based on n-grams, LDA and word2vec to build a best-of-breed customer feedback classification system. Combining syntax and sentiment lets us give our customers insight into the average feedback sentiment for each product. Here's an example chart that combines tags and sentiment to highlight which area needs the most attention (in this example, pricing).
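The kind of aggregation behind such a tag-and-sentiment view can be sketched as follows. The token dictionaries mirror the `lemma` and `partOfSpeech.tag` fields of a syntax analysis response, but the `LEMMA_TO_TAG` lookup, the tag names and the sample responses are hypothetical; a real routing algorithm would also use the dependency tree, which this sketch ignores.

```python
from collections import defaultdict

# Hypothetical lemma-to-tag lookup; a real system would learn or curate this.
LEMMA_TO_TAG = {
    "price": "Pricing",
    "cost": "Pricing",
    "expensive": "Pricing",
    "slow": "Performance",
    "documentation": "Documentation",
}

CONTENT_POS = {"NOUN", "ADJ", "VERB"}


def tags_for(tokens: list[dict]) -> set[str]:
    """Return the tags whose trigger lemmas appear among content words."""
    tags = set()
    for token in tokens:
        if token["partOfSpeech"]["tag"] in CONTENT_POS:
            tag = LEMMA_TO_TAG.get(token["lemma"].lower())
            if tag:
                tags.add(tag)
    return tags


def average_sentiment_by_tag(responses: list[dict]) -> dict[str, float]:
    """Average the per-response sentiment score for each detected tag."""
    totals = defaultdict(lambda: [0.0, 0])  # tag -> [sum, count]
    for response in responses:
        for tag in tags_for(response["tokens"]):
            totals[tag][0] += response["sentiment"]
            totals[tag][1] += 1
    return {tag: total / count for tag, (total, count) in totals.items()}


def tok(lemma: str, pos: str) -> dict:
    """Build a token shaped like one entry of a syntax analysis response."""
    return {"lemma": lemma, "partOfSpeech": {"tag": pos}}


# Made-up sample data for illustration.
responses = [
    {"tokens": [tok("price", "NOUN"), tok("be", "VERB"), tok("high", "ADJ")],
     "sentiment": -0.5},
    {"tokens": [tok("cost", "NOUN"), tok("too", "ADV")], "sentiment": -0.25},
    {"tokens": [tok("documentation", "NOUN"), tok("great", "ADJ")],
     "sentiment": 0.5},
]
print(average_sentiment_by_tag(responses))
```

Matching on lemmas rather than surface words means "prices", "priced" and "price" all map to the same tag without listing each variant.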

Entity detection of people or companies

For surveys that are triggered as a follow-up to support ticket resolution (such as Customer Effort Score), responses usually mention the name of someone on the support team. Routing this feedback to the customer support team provides a helpful performance metric for that team. Here's an example output of our engine:
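As a rough illustration of the idea (not Wootric's actual engine or its output), matching detected people against a support roster might look like the sketch below. The entity dictionaries use the `name` and `type` fields found in an entity analysis response; the roster, the sample names and the function name are hypothetical.

```python
def support_agents_mentioned(entities: list[dict],
                             roster: list[str]) -> list[str]:
    """Return roster members mentioned as PERSON entities, in first-seen order."""
    roster_lookup = {name.lower(): name for name in roster}
    found = []
    for entity in entities:
        if entity.get("type") != "PERSON":
            continue  # skip organizations, locations, and so on
        canonical = roster_lookup.get(entity["name"].strip().lower())
        if canonical and canonical not in found:
            found.append(canonical)
    return found


# Made-up entities for a response such as "Dana from Acme fixed it fast."
entities = [
    {"name": "Dana", "type": "PERSON"},
    {"name": "Acme", "type": "ORGANIZATION"},
    {"name": "dana", "type": "PERSON"},
]
print(support_agents_mentioned(entities, roster=["Dana", "Lee"]))
```

Exact, case-insensitive matching is the simplest possible choice here; first names, nicknames and misspellings would need fuzzier matching in practice.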

We've been using the Natural Language API since its beta launch in October 2016. We're continuously adapting our implementation to take advantage of new features such as sentence-level sentiment breakdown and new language support. Without Google Cloud, getting to this point would have cost a small engineering team like ours months of developer time and expensive infrastructure. We're very happy about the progress we've made and feel confident that we're on track to be one of the best customer feedback management platforms.