Announcing the 2022 Accelerate State of DevOps Report: A deep dive into security

Derek DeBellis

DORA Research Lead

Claire Peters

DORA Research Lead

In 2021, more than 22 billion records were exposed because of data breaches, with several huge companies falling victim. Between that and other malicious attacks, security continues to be top of mind for organizations as they work to keep customer data safe and their businesses up and running.

With this in mind, Google Cloud’s DevOps Research and Assessment (DORA) team decided to focus on security for the 2022 Accelerate State of DevOps Report, which is out today.

Over the past eight years, more than 33,000 professionals around the world have taken part in the Accelerate State of DevOps survey, making it the largest and longest-running research of its kind. Year after year, Accelerate State of DevOps Reports provide data-driven industry insights that examine the capabilities and practices that drive software delivery, as well as operational and organizational performance.

Securing the software supply chain

To analyze the relationship between security and DevOps, we explored the topic of software supply chain security, which the survey only touched upon lightly in previous years. To do this, we used the Supply-chain Levels for Secure Artifacts (SLSA) framework, as well as NIST’s Secure Software Development Framework (SSDF). Together, these two frameworks allowed us to explore both the technical and non-technical aspects that influence how an organization implements and thinks about software security practices.

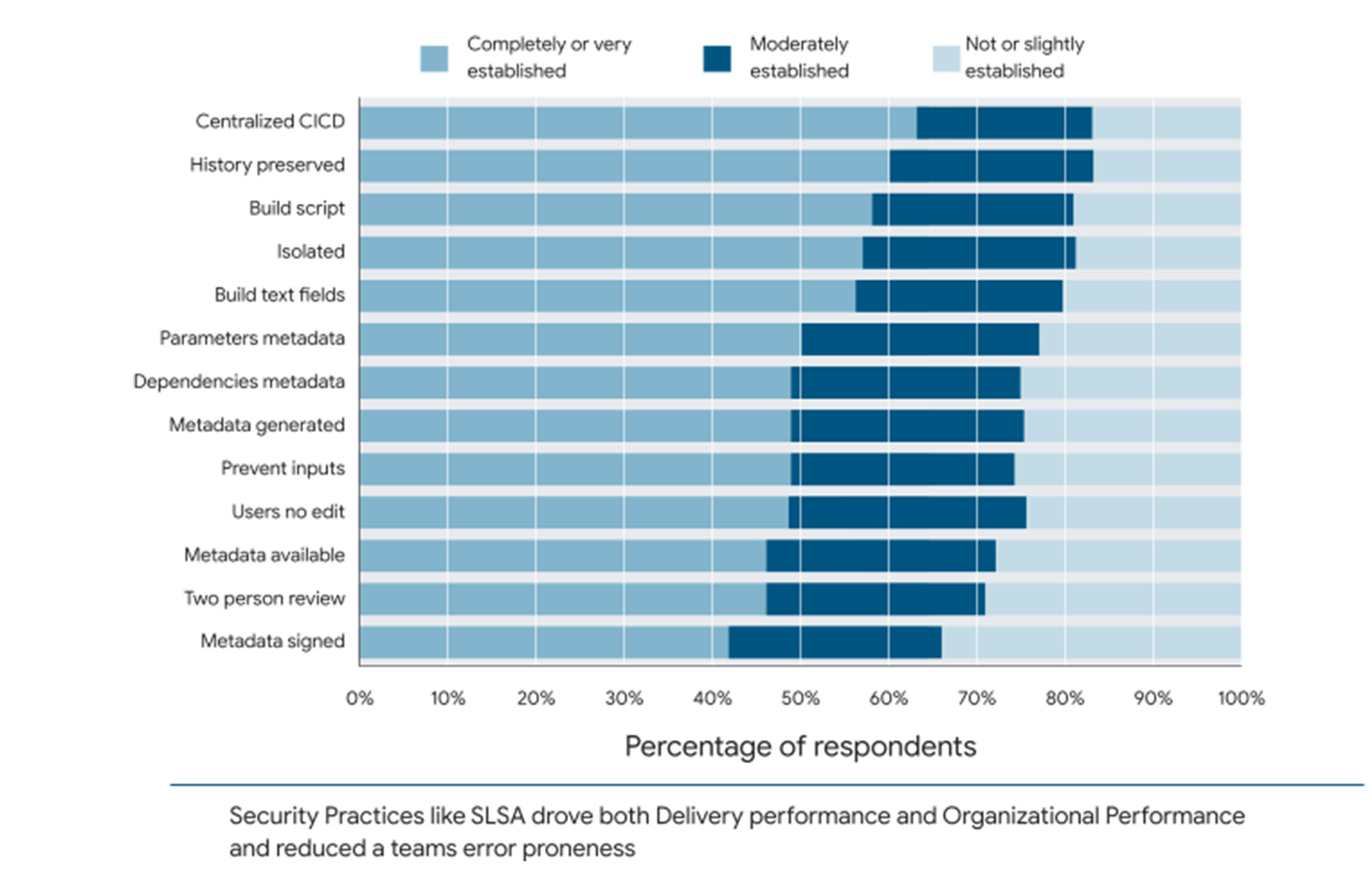

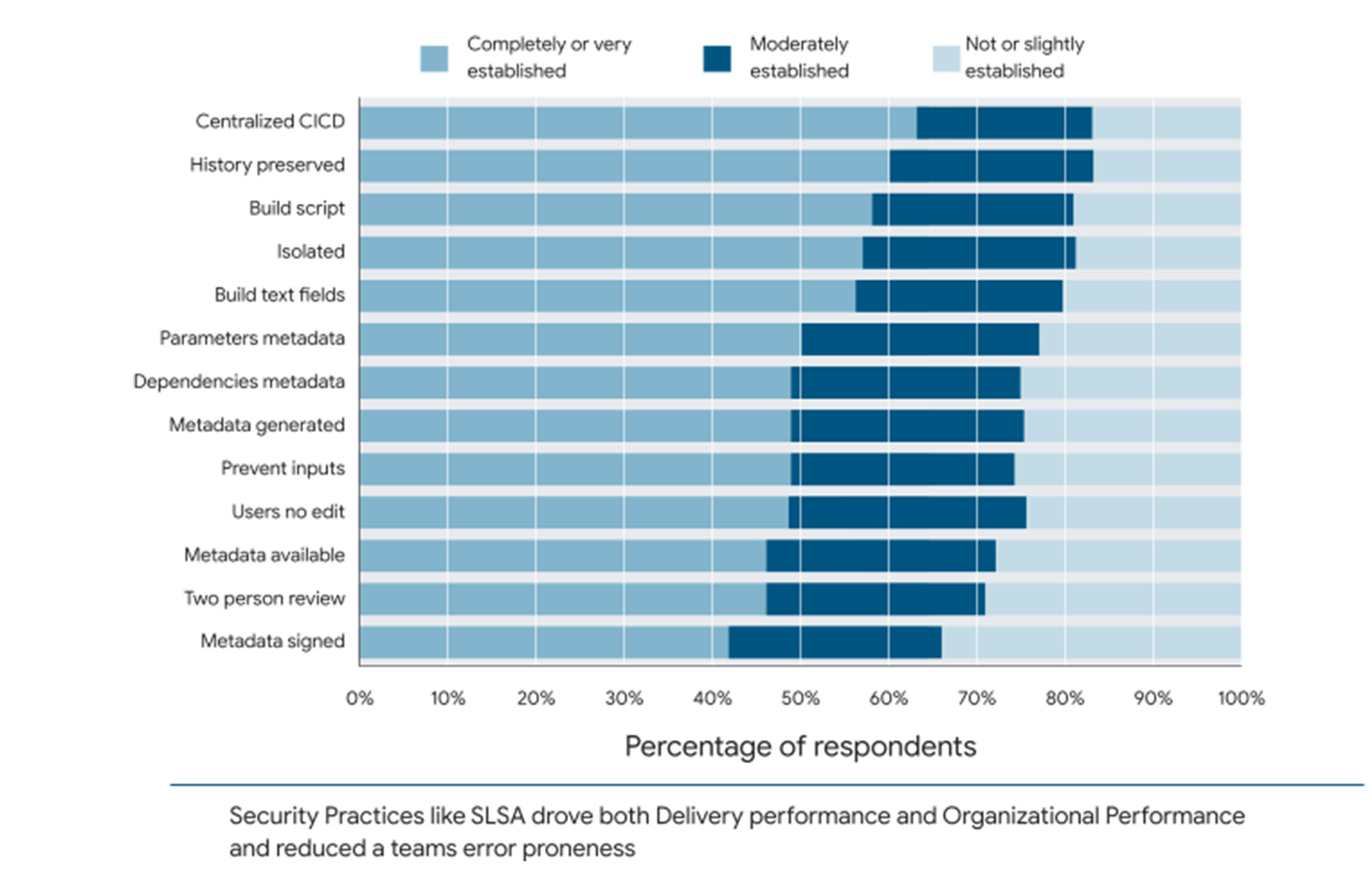

Overall, we found surprisingly broad adoption of emerging security practices, with a majority of respondents reporting at least partial adoption of every practice we asked about. Among all the practices that SLSA and NIST SSDF promote, using application-level security scanning as part of continuous integration/continuous delivery (CI/CD) systems for production releases was the most common practice, with 63% of respondents saying this was “very” or “completely” established. Preserving code history and using build scripts are also highly established, while signing metadata and requiring a two-person review process have the most room for growth.

One thing we found surprising was that the biggest predictor of an organization's software security practices was cultural, not technical: high-trust, low-blame cultures focused on performance (as defined by Westrum) were significantly more likely to adopt emerging security practices than low-trust, high-blame cultures focused on power or rules. Not only that, survey results indicate that teams who focus on establishing these security practices have reduced developer burnout and are more likely to recommend their team to someone else. To that end, the data indicate that organizational culture and modern development processes (such as continuous integration) are the biggest drivers of an organization’s software security and are the best place to start for organizations looking to improve their security posture.

What else is new in 2022?

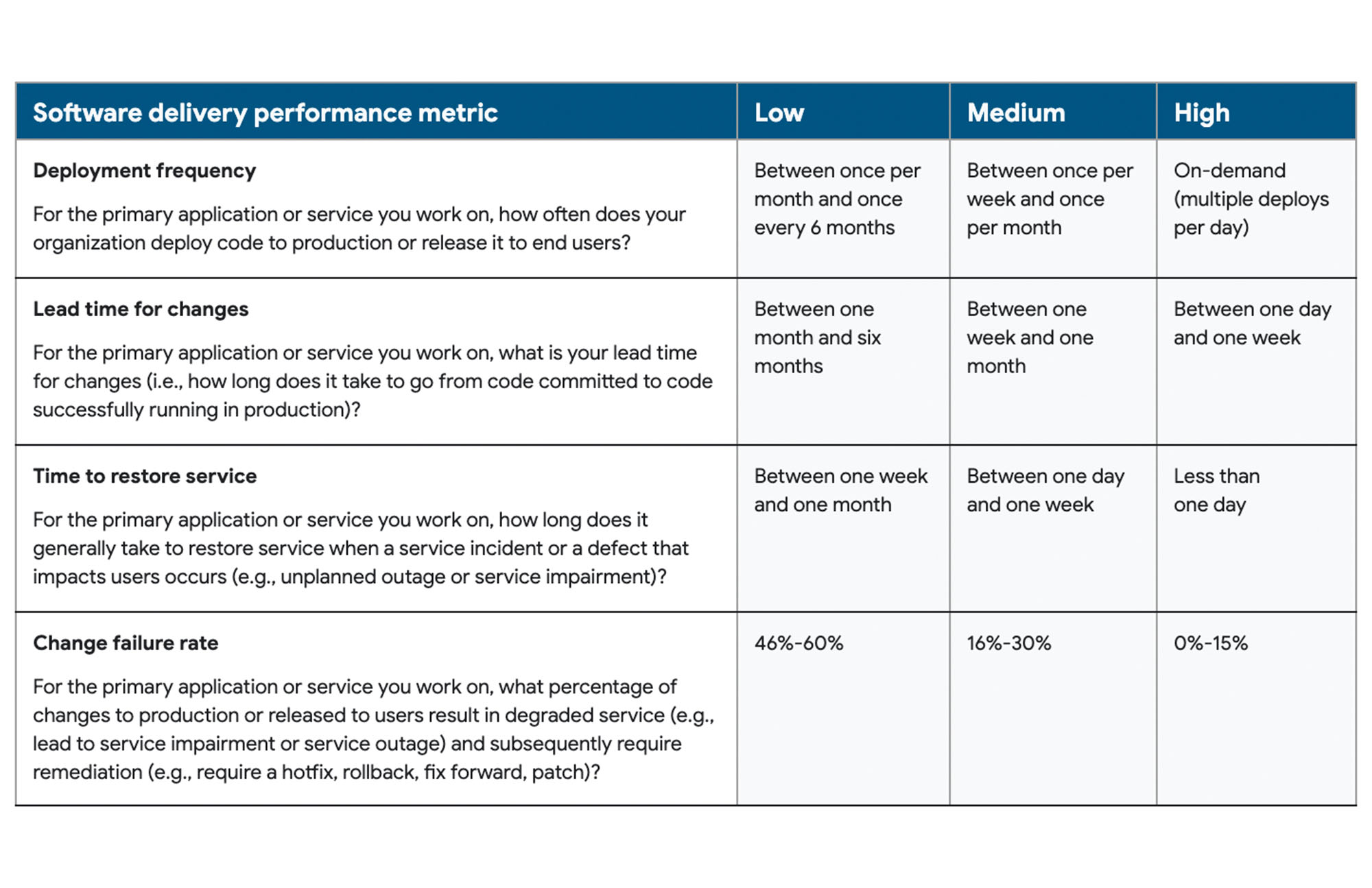

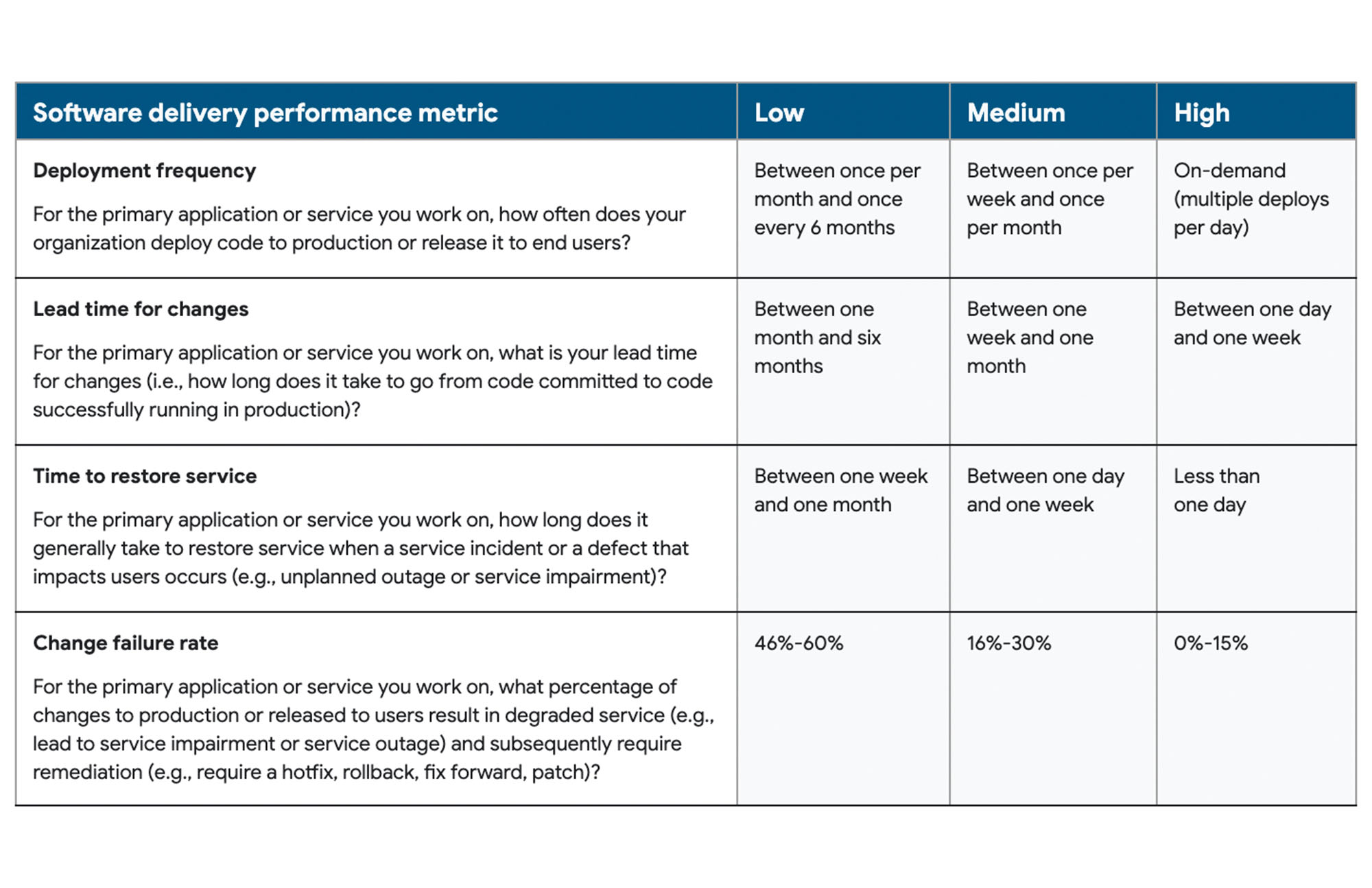

This year’s focus on security didn’t stop us from exploring software delivery and operational performance. We classify DevOps teams using four key metrics: deployment frequency, lead time for changes, mean time to restore, and change failure rate, as well as a fifth metric that we introduced last year, reliability.
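To make the four software delivery metrics concrete, here is a minimal sketch of how a team might compute them from its own deployment history. The `Deployment` record, its field names, and the `delivery_metrics` helper are hypothetical and invented for illustration; the report itself gathers these metrics through survey questions, not through code like this.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical record of one production deployment."""
    committed_at: datetime                  # when the change was committed
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it degrade service?
    restored_at: Optional[datetime] = None  # when service was restored


def delivery_metrics(deployments, window_days):
    """Summarize the four delivery metrics over a reporting window."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")

    lead_seconds = [
        (d.deployed_at - d.committed_at).total_seconds() for d in deployments
    ]
    failures = [d for d in deployments if d.failed]
    restore_seconds = [
        (d.restored_at - d.deployed_at).total_seconds()
        for d in failures
        if d.restored_at is not None
    ]

    return {
        # Deployment frequency: deployments per day in the window.
        "deployments_per_day": len(deployments) / window_days,
        # Lead time for changes: median commit-to-production time.
        "median_lead_time_hours": median(lead_seconds) / 3600,
        # Change failure rate: share of deployments that degraded service.
        "change_failure_rate": len(failures) / len(deployments),
        # Mean time to restore: average recovery time for failed deployments.
        "mean_time_to_restore_hours": (
            sum(restore_seconds) / len(restore_seconds) / 3600
            if restore_seconds
            else None
        ),
    }


# Made-up sample data: four deployments in one week, two of which failed.
start = datetime(2022, 1, 3, 9, 0)
example = [
    Deployment(start, start + timedelta(hours=2)),
    Deployment(start + timedelta(days=1), start + timedelta(days=1, hours=4),
               failed=True, restored_at=start + timedelta(days=1, hours=5)),
    Deployment(start + timedelta(days=2), start + timedelta(days=2, hours=6)),
    Deployment(start + timedelta(days=3), start + timedelta(days=3, hours=8),
               failed=True, restored_at=start + timedelta(days=3, hours=11)),
]
metrics = delivery_metrics(example, window_days=7)
```

Reliability, the fifth metric, is left out of this sketch because it depends on how a team defines and tracks its own reliability targets.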

Software delivery performance

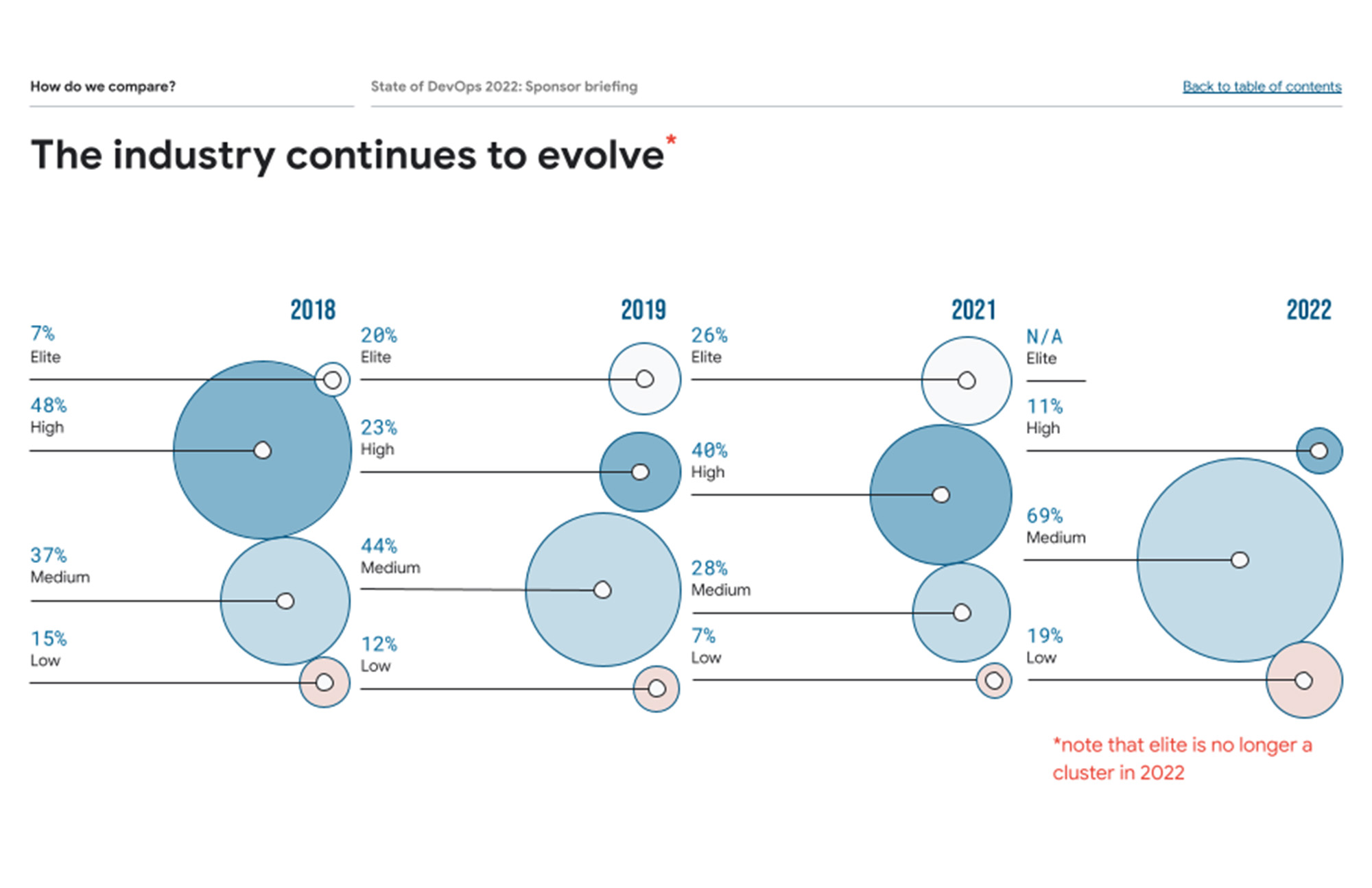

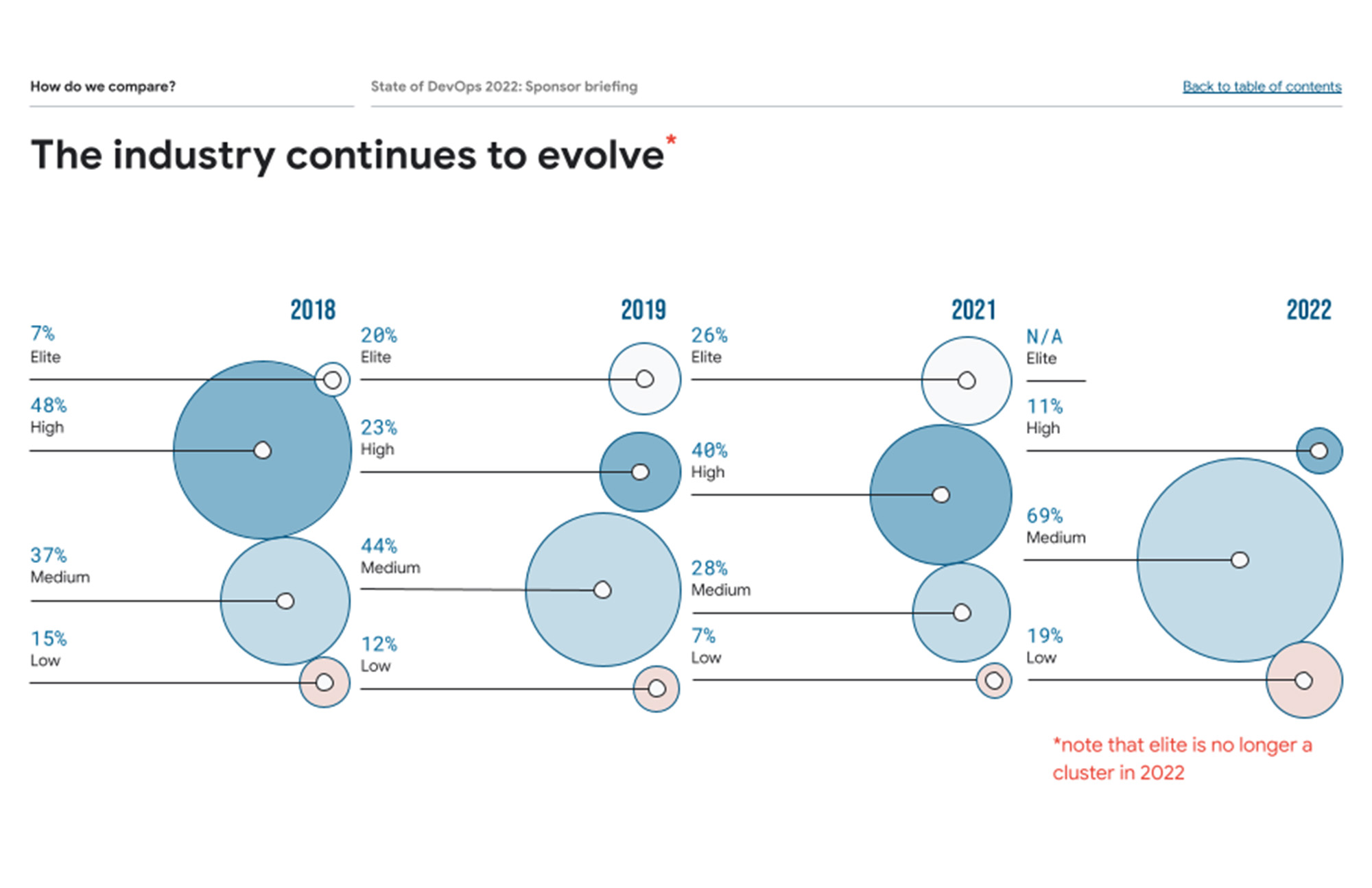

Looking at these five metrics, respondents fell into three clusters – High, Medium and Low. Unlike in years past, there was no evidence of an ‘Elite’ cluster. When it came to software delivery performance, this year’s High cluster is a blend of last year’s High and Elite clusters.

As shown in the percentage breakdowns in the table below, High performers are at a four-year low and Low performers rose dramatically from 7% in 2021 to 19% in 2022! The Medium cluster, however, swelled to 69% of respondents. That said, if you compare this year's Low, Medium, and High clusters with last year’s, you’ll see that there is a shift toward slightly higher software delivery performance overall. This year's High performers are performing better: their performance is a blend of last year's High and Elite. Low performers are also performing better than last year: this year's Low performers are a blend of last year's Low and Medium.

We plan to conduct further research that will help us better understand this shift, but for now, our hypothesis is that the ongoing pandemic may have hampered teams' ability to share knowledge, collaborate, and innovate, contributing to a decrease in the number of High performers and an increase in the number of Low performers.

Operational performance

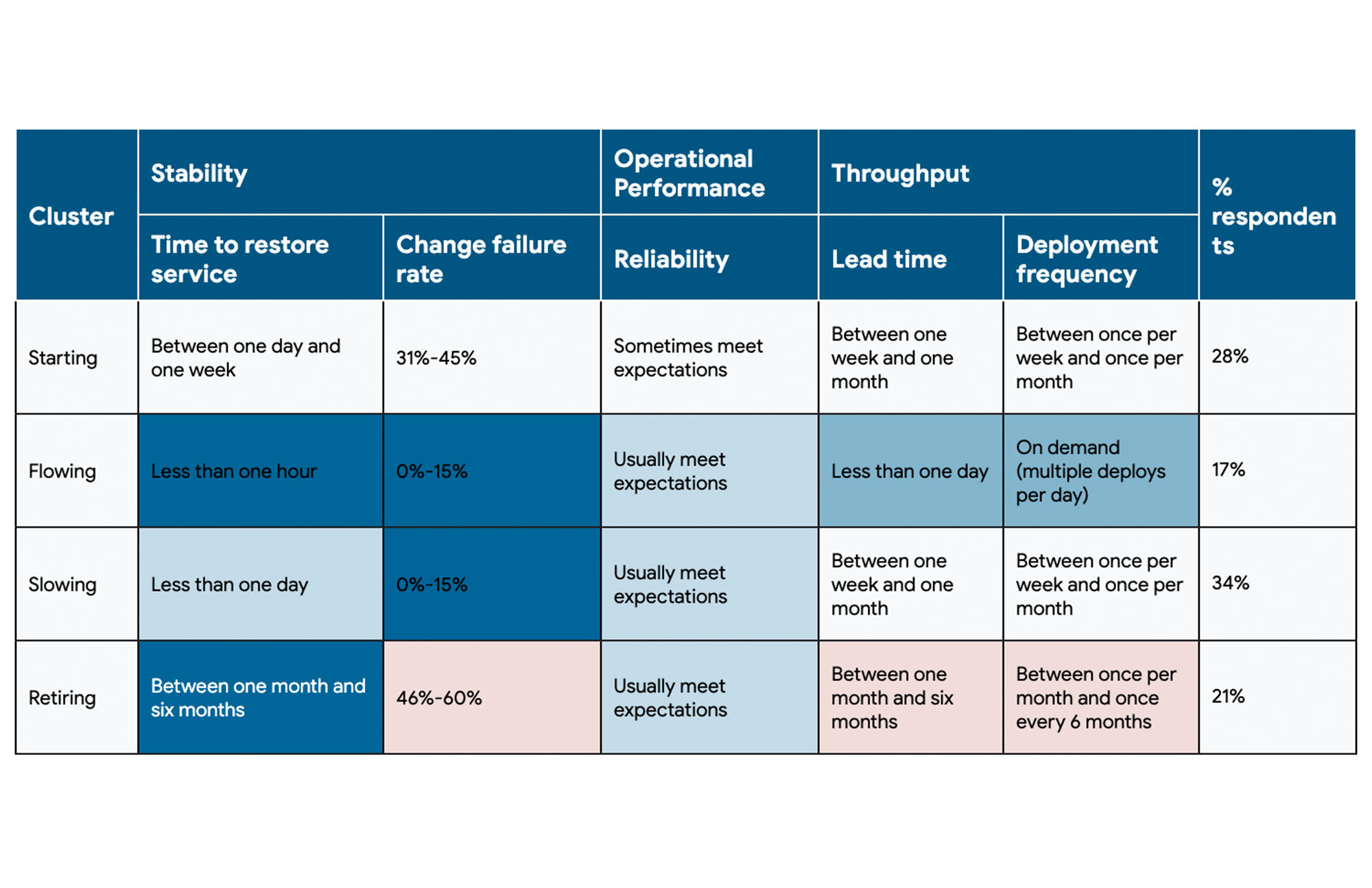

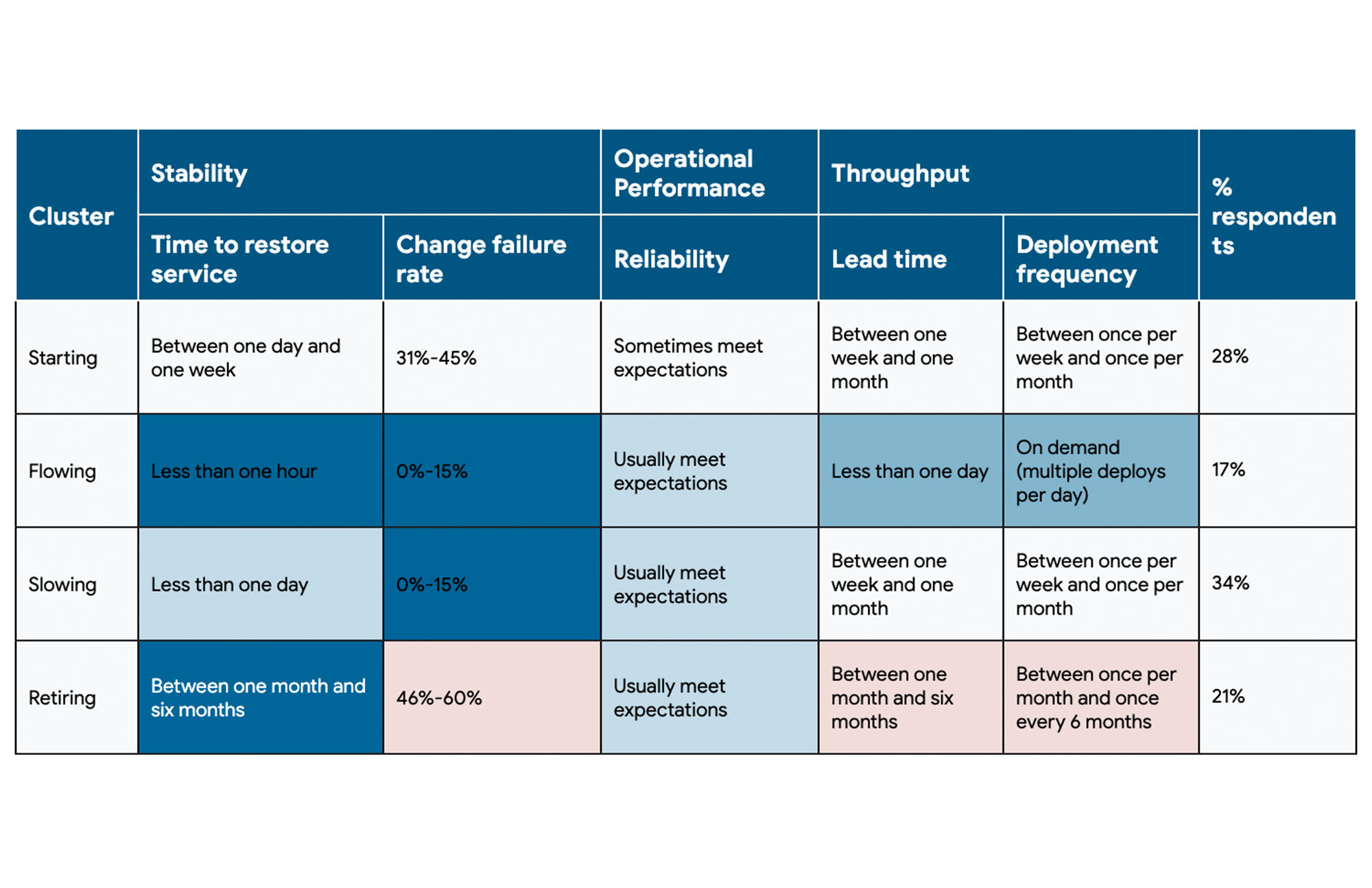

When it comes to DevOps, software delivery performance isn’t the whole picture — it can also contribute to the organization’s overall operational performance. To dive deeper, we performed a cluster analysis on the three categories the five metrics are designed to represent: throughput (a composite of lead time of code changes and deployment frequency), stability (a composite of time to restore a service and change failure rate) and operational performance (reliability).
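One way to picture the three categories is as composite scores built from the five underlying metrics. The sketch below is illustrative only and is not the analysis behind the report: it assumes each metric is standardized across teams (a z-score), flipped where lower is better, and averaged within its category. The team names, field names, and numbers are all made up.

```python
from statistics import mean, pstdev


def zscores(values, higher_is_better=True):
    """Standardize values so that a larger score always means 'better'."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    sign = 1.0 if higher_is_better else -1.0
    return [sign * (v - mu) / sigma for v in values]


def composite_scores(teams):
    """Combine five per-team metrics into three category scores."""
    names = list(teams)

    def column(key, higher_is_better):
        return zscores([teams[n][key] for n in names], higher_is_better)

    deploys = column("deploys_per_week", True)
    lead = column("lead_time_hours", False)         # shorter is better
    restore = column("restore_hours", False)        # shorter is better
    failure = column("change_failure_rate", False)  # lower is better
    reliability = column("reliability", True)

    return {
        name: {
            # Throughput: deployment frequency plus lead time for changes.
            "throughput": (deploys[i] + lead[i]) / 2,
            # Stability: time to restore plus change failure rate.
            "stability": (restore[i] + failure[i]) / 2,
            # Operational performance: reliability alone.
            "operational": reliability[i],
        }
        for i, name in enumerate(names)
    }


# Hypothetical teams with made-up numbers.
teams = {
    "a": {"deploys_per_week": 20, "lead_time_hours": 4, "restore_hours": 1,
          "change_failure_rate": 0.05, "reliability": 4.5},
    "b": {"deploys_per_week": 5, "lead_time_hours": 48, "restore_hours": 8,
          "change_failure_rate": 0.15, "reliability": 3.5},
    "c": {"deploys_per_week": 1, "lead_time_hours": 200, "restore_hours": 2,
          "change_failure_rate": 0.10, "reliability": 4.0},
}
scores = composite_scores(teams)
```

In this made-up data, team `c` has the lowest throughput score but a better stability score than team `b`, which shows why looking at the three categories separately can reveal patterns that a single ranking would hide.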

Through our data analysis, four distinct types of DevOps organizations emerged; these clusters differ notably in their practices and technical capabilities, so we broke them down a bit further:

Starting: This cluster performs neither well nor poorly across any of our dimensions. This cluster may be in the early stages of their product, feature, or service’s development. They may be less focused on reliability because they’re focusing on getting feedback, understanding their product-market fit and more generally, exploring.

Flowing: This cluster performs well across all characteristics: high reliability, high stability, high throughput. Only 17% of respondents achieve this flow state.

Slowing: Respondents in this cluster do not deploy too often, but when they do, they are likely to succeed. Over a third of responses fall into this cluster, making it the most representative of our sample. This pattern is likely typical of (though far from exclusive to) a team that is incrementally improving while it and its customers are mostly happy with the current state of the application or product.

Retiring: And finally, this cluster looks like a team that is working on a service or application that is still valuable to them and their customers, but no longer under active development.

Are you in the Flowing cohort? While previous respondents followed this guidance to help them achieve Elite status, teams aiming for the Flowing cohort should focus on loosely-coupled architectures, CI/CD, version control and providing workplace flexibility. Be sure to check out our technical capabilities articles, which go into more detail on these competencies and how to implement them.

Show us how you use DORA

The State of DevOps Report is a great place to begin learning about some ways your team can improve its DevOps performance, but it is also helpful to see how other organizations are already using the report to make a meaningful impact throughout their organizations. Last year we launched the inaugural Google Cloud DevOps Awards, and this year we are excited to share the DevOps Awards Ebook, which includes 13 case studies from last year’s winning companies. Learn how companies like Deloitte, Lowe’s, and Virgin Media successfully implemented DORA practices in their organizations. And be sure to apply to the 2022 DevOps Awards to share your organization’s transformation story!

Thanks to everyone who took our 2022 survey. We hope the Accelerate State of DevOps Report helps organizations of all sizes, industries, and regions improve their DevOps capabilities, and we look forward to hearing your thoughts and feedback. To learn more about the report and implementing DevOps with Google Cloud:

Find out more about how your organization stacks up against others in your industry with the DevOps Quick Check

Learn more about how you can implement DORA practices in your organization with our Enterprise Guidebook

Model your organization around the DevOps capabilities of high-performing teams