Introducing Spark 3 and Hadoop 3 on Dataproc image version 2.0

Christopher Crosbie

Product Manager, Data Analytics

Igor Dvorzhak

Software Engineer

Dataproc makes open source data and analytics processing fast, easy, and more secure in the cloud. Dataproc provides fully configured autoscaling clusters in around 90 seconds on custom machine types. This makes Dataproc an ideal way to experiment with and test the latest functionality from the open source ecosystem.

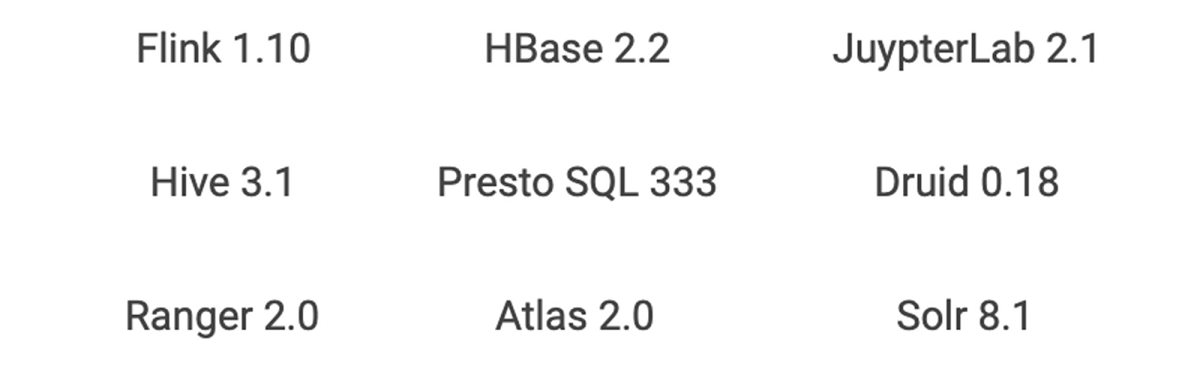

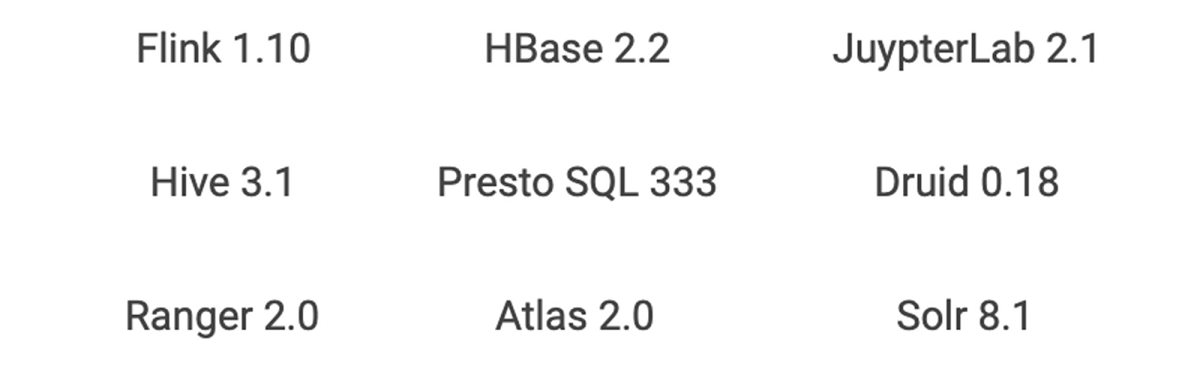

Dataproc provides image versions that align with bundles of core software that typically come on Hadoop and Spark clusters. Dataproc optional components can extend this bundle to include other popular open source technologies, including Anaconda, Druid, HBase, Jupyter, Presto, Ranger, Solr, Zeppelin, and ZooKeeper. You can customize the cluster even further with your own configurations, deployed via initialization actions. Check out the Dataproc initialization actions GitHub repository, a collection of scripts that can help you get started with installing software such as Kafka.
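
As a minimal sketch of how these pieces combine at cluster creation time, the hypothetical Python helper below assembles the `--optional-components` and `--initialization-actions` flags for `gcloud dataproc clusters create`. The component IDs and the `goog-dataproc-initialization-actions-us-central1` bucket path for the Kafka script are assumptions; check them against the Dataproc documentation and the initialization actions repository for your image version and region.

```python
import shlex


def cluster_extension_flags(components, init_actions):
    """Hypothetical helper: build gcloud flags for optional components
    and initialization actions."""
    flags = []
    if components:
        # gcloud takes a comma-separated list of component IDs.
        flags.append("--optional-components=" + ",".join(c.upper() for c in components))
    if init_actions:
        # Each entry is a Cloud Storage URI of a script run on every node.
        flags.append("--initialization-actions=" + ",".join(init_actions))
    return flags


# Assumed bucket layout for the public initialization actions.
KAFKA_INIT = "gs://goog-dataproc-initialization-actions-us-central1/kafka/kafka.sh"

flags = cluster_extension_flags(["zookeeper", "jupyter"], [KAFKA_INIT])
print(shlex.join(flags))
```

The flags are meant to be appended to a cluster create command; keeping them as a list avoids shell-quoting surprises when the command is run with `subprocess`.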

Dataproc image version 2.0 is the latest set of open source software that is ready for testing. (It’s a preview image, the Dataproc term for a new image version offered within a generally available service.) It provides a step-function increase in OSS functionality over past images and is the first new version track for Dataproc since it became a generally available service in early 2016. Let’s look at some of the highlights of Dataproc image version 2.0.

You can use Spark 3 in preview

Apache Spark 3 is the highly anticipated next iteration of Apache Spark. It is not yet recommended for production workloads and remains in a preview state in the open source community. However, if you’re eager to take advantage of Spark 3’s improvements, you can start migrating jobs using isolated clusters on Dataproc image version 2.0.

The headline of Spark 3 is “performance.” Under-the-hood changes to Spark’s processing bring many speed and performance gains. Examples of performance optimizations include:

Adaptive queries: Spark can now optimize a query plan while the query is executing. This will be a big gain for data lake queries, which often lack proper statistics before query processing begins.

Dynamic partition pruning: Avoiding unnecessary data scans is critical in queries that resemble data warehouse queries, which join a single fact table with many dimension tables. Spark 3 brings this pruning technique to Spark, using filters on the dimension tables to skip fact table partitions that cannot match.

GPU acceleration: NVIDIA has been collaborating with the open source community to bring GPUs into Spark’s native processing. This allows Spark to hand off processing to GPUs where appropriate.
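
The toy model below illustrates the idea behind dynamic partition pruning with plain Python data structures. It is not Spark’s implementation, and the table and column names are made up: the filter runs on the small dimension table first, and the surviving join keys decide which fact table partitions are scanned at all.

```python
# Toy fact table partitioned by date_key: partition value -> rows.
sales_partitions = {
    20200101: [{"date_key": 20200101, "store": 1, "amount": 10}],
    20200102: [{"date_key": 20200102, "store": 2, "amount": 25}],
    20200103: [{"date_key": 20200103, "store": 1, "amount": 5}],
}

# Small dimension table carrying the filter column.
dates = [
    {"date_key": 20200101, "is_holiday": True},
    {"date_key": 20200102, "is_holiday": False},
    {"date_key": 20200103, "is_holiday": False},
]


def join_with_pruning(fact_partitions, dim_rows, predicate):
    # 1. Filter the dimension side first; it is small and cheap to scan.
    matching_dim = [d for d in dim_rows if predicate(d)]
    # 2. Turn the surviving join keys into a partition filter.
    keys = {d["date_key"] for d in matching_dim}
    # 3. Read only the fact partitions whose partition value can match.
    scanned = [k for k in fact_partitions if k in keys]
    rows = [
        {**f, **d}
        for k in scanned
        for f in fact_partitions[k]
        for d in matching_dim
        if f["date_key"] == d["date_key"]
    ]
    return rows, scanned


rows, scanned = join_with_pruning(sales_partitions, dates, lambda d: d["is_holiday"])
print(f"scanned {len(scanned)} of {len(sales_partitions)} partitions")
```

In Spark 3 itself, dynamic partition pruning is controlled by `spark.sql.optimizer.dynamicPartitionPruning.enabled` and adaptive query execution by `spark.sql.adaptive.enabled`; on Dataproc, Spark properties like these can be set at cluster creation with the `spark:` prefix.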

Beyond performance, Spark 3 advances Spark on Kubernetes with shuffle improvements that enable dynamic scaling. This makes running Dataproc jobs on Google Kubernetes Engine (GKE) a preferred migration option for many teams moving jobs to Spark 3.

As is often the case with major version overhauls, upgrades come with deprecations, and Spark 3 is no exception. However, some of these deprecations bring gains of their own.

MLlib (the Resilient Distributed Dataset, or RDD, version of ML) has been deprecated. While most of the functionality is still there, it will no longer be worked on or tested, so it’s a good idea to move away from MLlib as you migrate to Spark 3. Moving off MLlib is also an opportunity to evaluate whether a deep learning model makes sense instead. Spark 3 will have better bridges from ML pipelines to deep learning models that run on GPUs.

GraphX will be deprecated in favor of a new graphing component, SparkGraph, based on Cypher, a much richer graph language than previously offered by GraphX.

The DataSource API will become DataSource V2, which offers a unified way to write to various data sources, push operations down to those sources, and use a data catalog within Spark.

Python 2.7 support is being dropped in favor of Python 3.

Hadoop 3 is now available

Another major version upgrade on the Dataproc image version 2.0 track is Hadoop 3, whose core components include HDFS and YARN.

Many on-prem Hadoop deployments have benefited from 3.0 features such as HDFS federation, multiple standby NameNodes, HDFS erasure coding, and a global scheduler for YARN. Cloud-based Hadoop deployments tend to rely less on HDFS and YARN. In most situations, Cloud Storage is used in place of HDFS storage. YARN is still used for scheduling resources within a cluster, but in the cloud, Hadoop customers start to think about job and resource management at the cluster or VM level. Dataproc offers job-scoped clusters that are right-sized for the task at hand, instead of limiting you to configuring a single cluster’s YARN queues with complex workload management policies.
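
Because Dataproc clusters include the Cloud Storage connector, Hadoop and Spark jobs can read and write `gs://` paths directly, so moving off HDFS often starts with repointing job paths. A minimal sketch, assuming the data was copied into a bucket with the same directory layout it had in HDFS; `hdfs_to_gcs` and the bucket name are hypothetical.

```python
from urllib.parse import urlparse


def hdfs_to_gcs(uri: str, bucket: str) -> str:
    """Hypothetical helper: map an hdfs:// URI onto a Cloud Storage bucket,
    keeping the path and dropping the NameNode address."""
    parsed = urlparse(uri)
    if parsed.scheme != "hdfs":
        return uri  # leave gs://, file://, and other schemes untouched
    # parsed.netloc is the NameNode host:port, which has no meaning in GCS.
    return f"gs://{bucket}{parsed.path}"


print(hdfs_to_gcs("hdfs://namenode:8020/warehouse/sales/2020", "my-data-lake"))
```

A mapping like this suits the gradual approach described below: jobs can be repointed one at a time while the rest keep running against HDFS.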

However, if you don’t want to overhaul your architectures before moving to Google Cloud, you can lift and shift your on-prem Hadoop 3 infrastructure to Dataproc image version 2.0 and keep all your current tooling and processes in place. New cloud methodologies can then gradually be introduced for the right workloads over time.

While migrating to cloud technology may relegate many Hadoop 3 features to niche use cases, a couple of useful Hadoop 3 features will still appeal to many existing Dataproc customers:

Native support for GPUs in the YARN scheduler

This makes it possible for YARN to identify the right nodes to use when GPUs are needed, properly isolate the GPU resources on a shared cluster, and autodiscover the GPUs available (previously, administrators needed to configure the GPUs). The GPU information will even show up in the YARN UI, which is easily accessed via the Dataproc Component Gateway.
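
For reference, the Apache Hadoop 3 “Using GPU On YARN” guide enables this feature with NodeManager properties in `yarn-site.xml` and registers the `yarn.io/gpu` resource type through `yarn.resource-types` in `resource-types.xml`. The sketch below renders those settings in Hadoop’s XML format. Dataproc may already apply equivalent settings on GPU clusters, and GPU isolation through cgroups needs further settings not shown, so treat the property list as an assumption to verify.

```python
import xml.etree.ElementTree as ET

# yarn-site.xml properties named in the Hadoop 3 GPU-on-YARN guide.
GPU_PROPERTIES = {
    # Enable the GPU resource plugin on each NodeManager.
    "yarn.nodemanager.resource-plugins": "yarn.io/gpu",
    # "auto" lets the NodeManager discover GPUs itself (via nvidia-smi)
    # instead of an administrator listing devices by hand.
    "yarn.nodemanager.resource-plugins.gpu.allowed-gpu-devices": "auto",
}

# resource-types.xml entry that registers GPUs as a schedulable resource.
RESOURCE_TYPES = {"yarn.resource-types": "yarn.io/gpu"}


def to_hadoop_xml(props: dict) -> str:
    """Render a dict as a Hadoop-style <configuration> document."""
    root = ET.Element("configuration")
    for name, value in props.items():
        prop = ET.SubElement(root, "property")
        ET.SubElement(prop, "name").text = name
        ET.SubElement(prop, "value").text = value
    return ET.tostring(root, encoding="unicode")


print(to_hadoop_xml(GPU_PROPERTIES))
print(to_hadoop_xml(RESOURCE_TYPES))
```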

YARN containerization

Modern open source components like Spark and Flink have native support for Kubernetes, which offers production-grade container orchestration. However, there are still many legacy Hadoop components that have not yet been ported away from YARN and into Kubernetes. Hadoop 3’s YARN containerization can help manage those components using Docker containers and today’s CI/CD pipelines. This feature will be very useful for applications such as HBase that need to stay up and would benefit from additional software isolation.
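
YARN’s Docker runtime is selected per container through environment variables such as `YARN_CONTAINER_RUNTIME_TYPE` and `YARN_CONTAINER_RUNTIME_DOCKER_IMAGE`; the Hadoop documentation illustrates this with Spark on YARN. The sketch below builds those Spark properties, assuming the NodeManagers already allow the `docker` runtime (via `yarn.nodemanager.runtime.linux.allowed-runtimes`) and that the image, whose name here is hypothetical, can be pulled on every node.

```python
def docker_on_yarn_conf(image: str) -> dict:
    """Spark properties asking YARN to run the application master and
    executors inside the given Docker image."""
    env = {
        "YARN_CONTAINER_RUNTIME_TYPE": "docker",
        "YARN_CONTAINER_RUNTIME_DOCKER_IMAGE": image,
    }
    conf = {}
    for key, value in env.items():
        # Both the application master and the executors need the variables.
        conf[f"spark.yarn.appMasterEnv.{key}"] = value
        conf[f"spark.executorEnv.{key}"] = value
    return conf


# Hypothetical image name.
conf = docker_on_yarn_conf("gcr.io/my-project/spark-py3:latest")
args = []
for key, value in sorted(conf.items()):
    args += ["--conf", f"{key}={value}"]  # spark-submit style arguments
print(" ".join(args))
```

Non-Spark YARN applications opt in the same way, by setting these variables in their container launch environment.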

Other software upgrades on Dataproc image version 2.0

Dataproc image version 2.0 also includes various other upgrades:

Software libraries

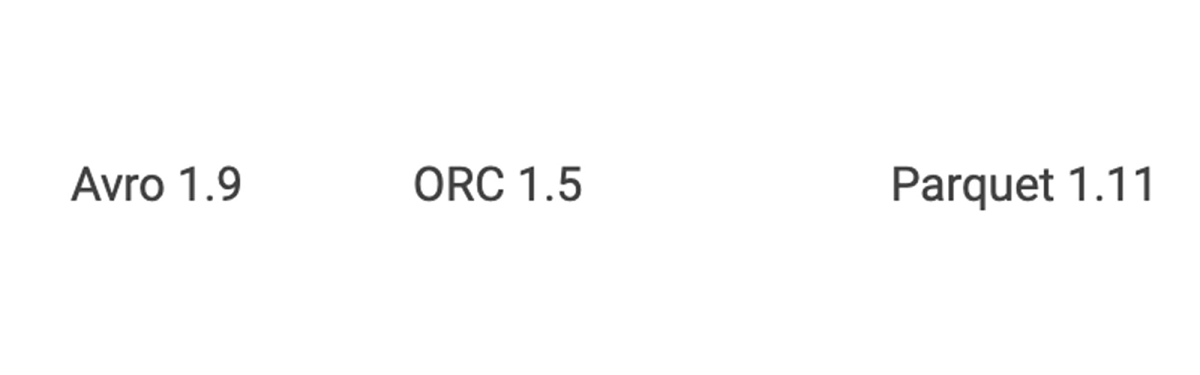

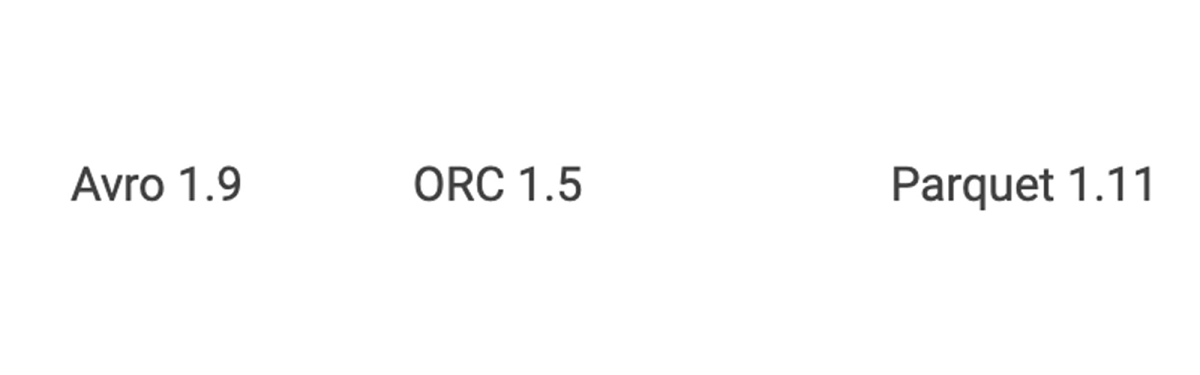

Shared libraries

In conjunction with the component upgrades, other shared libraries will also be upgraded to prevent runtime incompatibilities and offer the full features of the new OSS offerings.

You may also find that Dataproc image version 2.0 changes many previous configuration settings to optimize the OSS software for Google Cloud.

Getting started

To get started with Spark 3 and Hadoop 3, simply run the following command to create a Dataproc image version 2.0 cluster:
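
The cluster-create step can be sketched in Python, which makes the arguments easy to inspect before running them. `spark3-test` and `us-central1` are placeholders, and the `2.0` image version string is an assumption to confirm against the Dataproc image version list, since preview images may use a different identifier.

```python
import shlex


def create_cluster_cmd(name: str, region: str, image_version: str = "2.0") -> list:
    """Build a gcloud command that creates a Dataproc cluster on the
    given image version (the default version string is an assumption)."""
    return [
        "gcloud", "dataproc", "clusters", "create", name,
        f"--region={region}",
        f"--image-version={image_version}",
    ]


# Hypothetical cluster name and region.
cmd = create_cluster_cmd("spark3-test", "us-central1")
print(shlex.join(cmd))
# prints: gcloud dataproc clusters create spark3-test --region=us-central1 --image-version=2.0
# To actually create the cluster: subprocess.run(cmd, check=True)
```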

When you are ready to move from development to production, check out these 7 best practices for running Cloud Dataproc in production.