Introducing container-native load balancing on Google Kubernetes Engine

Neha Pattan

Staff Software Engineer

Minhan Xia

Software Engineer

In the past few years, developers have moved en masse to containers for their ease of use, portability, and performance. Today, we’re excited to announce that Google Cloud Platform (GCP) now offers container-native load balancing for applications running on Google Kubernetes Engine (GKE) and Kubernetes on Compute Engine, reaffirming containers as first-class citizens on GCP.

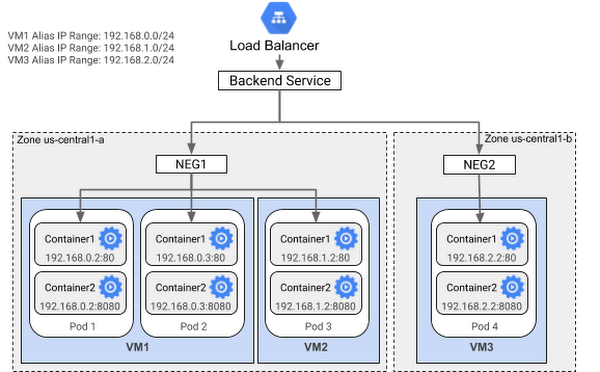

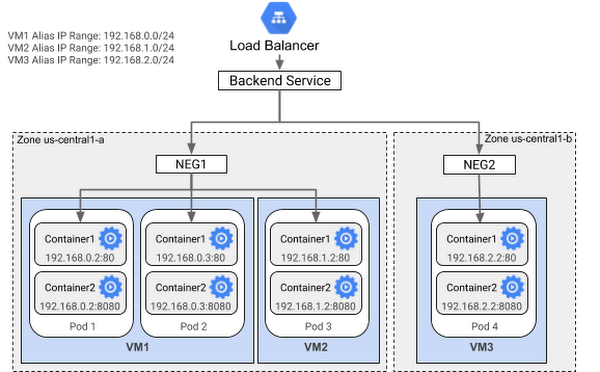

With today’s announcement, you can now use network endpoint groups (NEGs) to program load balancers with arbitrary network endpoints, expressed as IP:port pairs, and load balance directly to containers. This new data model abstraction on GCP gives you native health checking as well as more accurate, even load balancing to pods.
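At its core, a NEG is a named set of IP:port endpoints that a load balancer can target, for example one entry per pod. The following is a minimal Python sketch of that data model only, not the GCP API; the class and method names (`NetworkEndpoint`, `NetworkEndpointGroup`, `attach`, `detach`) and the pod IPs are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class NetworkEndpoint:
    """One backend endpoint: an IP address and a port (e.g. a single pod)."""
    ip: str
    port: int


@dataclass
class NetworkEndpointGroup:
    """A named collection of IP:port endpoints a load balancer can target."""
    name: str
    endpoints: set[NetworkEndpoint] = field(default_factory=set)

    def attach(self, ip: str, port: int) -> None:
        # Adding the same IP:port twice is a no-op because endpoints is a set.
        self.endpoints.add(NetworkEndpoint(ip, port))

    def detach(self, ip: str, port: int) -> None:
        # Removing an endpoint that is not present is also a no-op.
        self.endpoints.discard(NetworkEndpoint(ip, port))


# Hypothetical pod IPs, each pod serving on port 8080.
neg = NetworkEndpointGroup("web-neg")
neg.attach("10.4.0.12", 8080)
neg.attach("10.4.0.13", 8080)
neg.detach("10.4.0.12", 8080)
print(sorted(e.ip for e in neg.endpoints))  # ['10.4.0.13']
```

Because endpoints are individual IP:port pairs rather than VMs, adding or removing a pod changes the load balancer's backend set directly.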

How container-native load balancing works

Load balancers were originally built to distribute traffic across virtual machines, helping make workloads highly available. When containers and container orchestrators started to take off, users adapted these VM-focused load balancers to their needs, even though performance was suboptimal. Container orchestrators like GKE performed load balancing with existing network functions that operated at VM granularity. Because there was no way to define a group of pods as backends, the load balancer used instance groups to group VMs as backends. Ingress support in GKE used those instance groups with the HTTP(S) load balancer to send traffic to the nodes in the cluster, and the nodes then followed iptables rules to route each request to one of the pods backing the load-balanced application. Load balancing to the nodes was the only option, since the load balancer didn’t recognize pods or containers as backends; the result was imbalanced load and a suboptimal data path with extra traffic hops between nodes.

The new NEG abstraction layer that enables container-native load balancing is integrated with the Kubernetes Ingress controller running on GCP. If you have a multi-tiered deployment and want to expose one or more services to the internet using GKE, you can create an Ingress object, which provisions an HTTP(S) load balancer and lets you configure path-based or host-based routing to your backend services.
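To make the path-based and host-based routing concrete, here is a small Python sketch that builds an Ingress manifest as JSON, which `kubectl apply -f` accepts as well as YAML. It assumes the current `networking.k8s.io/v1` Ingress schema (newer than this announcement); the helper `make_ingress`, the hostname, and the Service names are hypothetical.

```python
import json


def make_ingress(name, path_routes, default_service, host=None, port=80):
    """Build a networking.k8s.io/v1 Ingress routing URL path prefixes to
    backend Services; `host` optionally restricts the rule to one hostname."""
    paths = [
        {
            "path": path,
            "pathType": "Prefix",
            "backend": {"service": {"name": svc, "port": {"number": port}}},
        }
        for path, svc in path_routes.items()
    ]
    rule = {"http": {"paths": paths}}
    if host is not None:
        rule["host"] = host  # host-based routing
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "Ingress",
        "metadata": {"name": name},
        "spec": {
            # Requests that match no rule go to this Service.
            "defaultBackend": {
                "service": {"name": default_service, "port": {"number": port}}
            },
            "rules": [rule],
        },
    }


# Hypothetical names: /api goes to api-svc, everything else to frontend-svc.
ingress = make_ingress("web-ingress", {"/api": "api-svc"}, "frontend-svc",
                       host="shop.example.com")
print(json.dumps(ingress, indent=2))
```

Saving the printed JSON to a file and running `kubectl apply -f` on it would ask the GKE Ingress controller to provision the HTTP(S) load balancer.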

The benefits of using container-native load balancing

Container-native load balancing has several important advantages over the earlier iptables-based approach:

Optimal load balancing

Previously, the Google load balancing system distributed requests evenly across the nodes in the backend instance groups, without any knowledge of the backend containers. Each node then routed the request to a randomly chosen healthy pod, which resulted in uneven traffic distribution among the backends actually serving the application. With container-native load balancing, traffic is distributed evenly among the available healthy backends in an endpoint group, following a user-defined load balancing algorithm.
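A back-of-the-envelope model shows how balancing across nodes can skew per-pod load. The imbalance is easiest to see when each node forwards only to its own local pods, as kube-proxy does for a Service with `externalTrafficPolicy: Local`; that forwarding rule, the even per-node split, and the pod layout below are assumptions of this sketch, not a description of GCP internals.

```python
from fractions import Fraction


def node_level_shares(pods_per_node):
    """Old model (simplified): the LB splits traffic evenly across nodes, and
    each node splits its share evenly across its own local pods."""
    node_share = Fraction(1, len(pods_per_node))
    shares = {}
    for pods in pods_per_node.values():
        for pod in pods:
            shares[pod] = node_share / len(pods)
    return shares


def endpoint_level_shares(pods_per_node):
    """NEG model: the LB splits traffic evenly across all pod endpoints."""
    all_pods = [pod for pods in pods_per_node.values() for pod in pods]
    return {pod: Fraction(1, len(all_pods)) for pod in all_pods}


# Hypothetical layout: one pod on node-a, three pods on node-b.
layout = {"node-a": ["pod-1"], "node-b": ["pod-2", "pod-3", "pod-4"]}
old = node_level_shares(layout)      # pod-1 gets 1/2, pods 2-4 get 1/6 each
new = endpoint_level_shares(layout)  # every pod gets 1/4
for pod in old:
    print(pod, old[pod], new[pod])
```

With cluster-wide random forwarding instead, the per-pod split evens out in expectation, but many requests take an extra hop to a pod on another node.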

Native support for health checking

Being aware of the actual backends allows the Google load balancing system to health-check pods directly, rather than sending the health check to a node, which then forwards it to a random pod (possibly on a different node). As a result, health check results more accurately reflect the health of the backends. You can specify a variety of health checks (TCP, HTTP(S), or HTTP/2), all of which probe the backend containers (pods) directly rather than the nodes.
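The difference is what gets probed: each pod's own IP:port rather than a node. The following Python sketch illustrates per-endpoint HTTP health checking; treating HTTP 200 as healthy matches how HTTP health checks are commonly configured, while the `/healthz` path, the helper names, and the pod IPs are hypothetical.

```python
import http.client
from typing import Callable, Iterable


def http_probe(ip: str, port: int, path: str = "/healthz",
               timeout: float = 2.0) -> bool:
    """Send one HTTP GET straight to a pod endpoint; healthy means status 200."""
    conn = http.client.HTTPConnection(ip, port, timeout=timeout)
    try:
        conn.request("GET", path)
        return conn.getresponse().status == 200
    except (OSError, http.client.HTTPException):
        # Refused, timed out, or malformed response: count as unhealthy.
        return False
    finally:
        conn.close()


def check_endpoints(endpoints: Iterable[tuple[str, int]],
                    probe: Callable[[str, int], bool] = http_probe) -> dict:
    """Probe every (ip, port) endpoint itself, not the node it runs on."""
    return {(ip, port): probe(ip, port) for ip, port in endpoints}


# Offline usage with a stand-in probe: pretend only 10.4.0.13 answers.
result = check_endpoints([("10.4.0.12", 8080), ("10.4.0.13", 8080)],
                         probe=lambda ip, port: ip == "10.4.0.13")
print(result)  # {('10.4.0.12', 8080): False, ('10.4.0.13', 8080): True}
```

Injecting the probe keeps the endpoint-level logic testable without a live cluster.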

Graceful termination

When a pod is removed, the load balancer natively drains existing connections to that endpoint, according to the connection draining period configured on the load balancer’s backend service.
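Connection draining can be modeled as two rules: a removed endpoint gets no new connections, and it is fully dropped once its open connections finish or the draining period expires, whichever comes first. This toy Python model is only an illustration of that behavior under those assumptions; the class, its methods, and the endpoint strings are hypothetical.

```python
class DrainingBackend:
    """Toy model of connection draining for a set of endpoints."""

    def __init__(self, draining_timeout: float):
        self.draining_timeout = draining_timeout        # seconds
        self.connections: dict[str, int] = {}           # endpoint -> open conns
        self.draining_since: dict[str, float] = {}      # endpoint -> removal time

    def add(self, endpoint: str) -> None:
        self.connections.setdefault(endpoint, 0)

    def remove(self, endpoint: str, now: float) -> None:
        # Pod deleted: stop sending new connections here, start the drain clock.
        self.draining_since[endpoint] = now

    def eligible(self) -> list[str]:
        """Endpoints that may receive new connections."""
        return [e for e in self.connections if e not in self.draining_since]

    def open_connection(self, endpoint: str) -> None:
        if endpoint in self.draining_since:
            raise ValueError(f"{endpoint} is draining; no new connections")
        self.connections[endpoint] += 1

    def close_connection(self, endpoint: str) -> None:
        self.connections[endpoint] -= 1

    def reap(self, now: float) -> list[str]:
        """Drop draining endpoints that are idle or past the draining period."""
        done = [e for e, t in self.draining_since.items()
                if self.connections[e] == 0 or now - t >= self.draining_timeout]
        for e in done:
            del self.connections[e]
            del self.draining_since[e]
        return done


lb = DrainingBackend(draining_timeout=30.0)
lb.add("10.4.0.12:8080")
lb.add("10.4.0.13:8080")
lb.open_connection("10.4.0.12:8080")
lb.remove("10.4.0.12:8080", now=0.0)
print(lb.eligible())      # ['10.4.0.13:8080']
print(lb.reap(now=10.0))  # [] : one connection still open, period not over
print(lb.reap(now=30.0))  # ['10.4.0.12:8080'] : draining period expired
```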

Optimal data path

With the ability to load balance directly to containers, the extra hop through the nodes disappears, since load balancing is performed in a single step rather than two.

Increased visibility and security

Container-native load balancing helps you troubleshoot your services at the pod level. It preserves the source IP to make it easier to trace back to the source of the traffic. Since the container sees the packets arrive from the load balancer rather than through a source NAT from another node, you can now create firewall rules using node-level network policy.
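Because packets reach the pod from the load balancer rather than through source NAT on another node, a rule can admit traffic by its source range. The Python sketch below shows that check with the standard `ipaddress` module. The two ranges are the ones Google documents for its HTTP(S) load balancer proxies and health checkers, but treat them as an assumption to verify against current GCP documentation; `allowed_to_pod` is a hypothetical helper.

```python
import ipaddress

# Assumed load balancer / health check source ranges; verify before use.
LB_SOURCE_RANGES = [ipaddress.ip_network(cidr)
                    for cidr in ("130.211.0.0/22", "35.191.0.0/16")]


def allowed_to_pod(source_ip: str) -> bool:
    """Admit a packet only if its source address is in a load balancer range."""
    addr = ipaddress.ip_address(source_ip)
    return any(addr in net for net in LB_SOURCE_RANGES)


print(allowed_to_pod("35.191.10.7"))  # True: from the load balancer range
print(allowed_to_pod("10.128.0.5"))   # False: e.g. another VM in the VPC
```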

Getting started with container-native load balancing

You can use container-native load balancing in several scenarios. For example, you can create an Ingress using NEGs with VPC-native GKE clusters created using Alias IPs. This gives native support for pod IP routing and enables advertising prefixes.

For detailed instructions on how to set up an Ingress with native load-balancing support for containers, refer to the documentation.
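In GKE, a Service opts into NEGs through the `cloud.google.com/neg` annotation with the value `{"ingress": true}`. The Python sketch below builds such a Service manifest as JSON for `kubectl apply -f`; the Service name, selector, and ports are hypothetical, and whether your GKE version accepts a `ClusterIP` Service here (rather than `NodePort`) should be confirmed in the documentation.

```python
import json

service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {
        "name": "web-svc",  # hypothetical
        # Ask the GKE Ingress controller to back this Service with NEGs,
        # so the HTTP(S) load balancer targets pod IP:port pairs directly.
        "annotations": {"cloud.google.com/neg": json.dumps({"ingress": True})},
    },
    "spec": {
        "type": "ClusterIP",
        "selector": {"app": "web"},  # hypothetical pod label
        "ports": [{"port": 80, "targetPort": 8080, "protocol": "TCP"}],
    },
}

print(json.dumps(service, indent=2))
```

An Ingress that references `web-svc` as a backend would then send traffic straight to the pods selected by `app: web`.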

In addition, if you use Alias IPs to create secondary IPs on the network interfaces of your VMs, you can now also use NEGs to load balance to your alias IPs, using either HTTP(S) or TCP/SSL Proxy-based load balancing. For detailed instructions on using NEGs for load balancing to VMs, refer to the documentation.
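As an illustration, the Python sketch below builds a request body listing alias IPs of one VM as NEG endpoints. The field names (`networkEndpoints`, `instance`, `ipAddress`, `port`) follow the Compute Engine `networkEndpointGroups.attachNetworkEndpoints` request body as we understand it, so check them against the API reference; the VM name, alias range, and port are hypothetical.

```python
import ipaddress
import json
from itertools import islice


def alias_ip_endpoints(instance: str, alias_range: str, port: int,
                       count: int) -> list[dict]:
    """NEG endpoint entries for the first `count` host addresses of a VM's
    alias IP range (fewer if the range is smaller than `count`)."""
    hosts = islice(ipaddress.ip_network(alias_range).hosts(), count)
    return [{"instance": instance, "ipAddress": str(ip), "port": port}
            for ip in hosts]


# Hypothetical VM "vm-1" with alias range 10.8.0.0/28, serving on port 8080.
body = {"networkEndpoints": alias_ip_endpoints("vm-1", "10.8.0.0/28", 8080, 3)}
print(json.dumps(body, indent=2))
```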

As more organizations build applications using containers and Kubernetes, it’s important for core network infrastructure services to support that deployment model. Now, with container-native load balancing and NEGs on GCP, you get optimal performance, native health checks and improved visibility. Drop us a line about how you use NEGs, and other networking features you’d like to see on GCP.