Get in sync: Consistent Kubernetes with new Anthos Config Management features

Jeff Reed

VP, Product Management, Cloud Security

Try Google Cloud

Start building on Google Cloud with $300 in free credits and 20+ always free products.

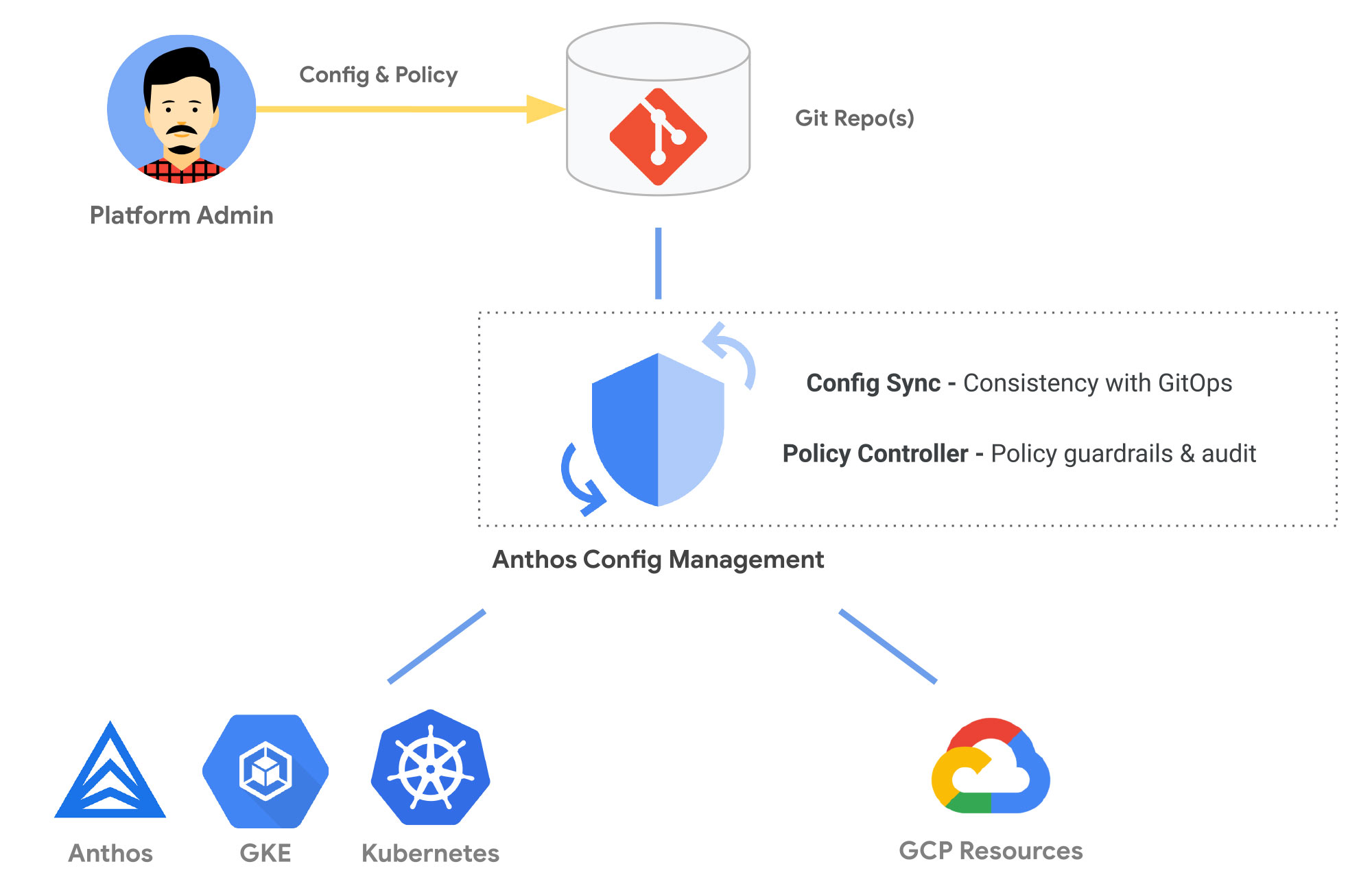

From large digital-native powerhouses to midsized manufacturing firms, every company today is creating and deploying more software to more places more often. Anthos Config Management lets you set and enforce consistent configurations and policies for your Kubernetes resources, wherever you build and run them, and manage Google Cloud services the same way.

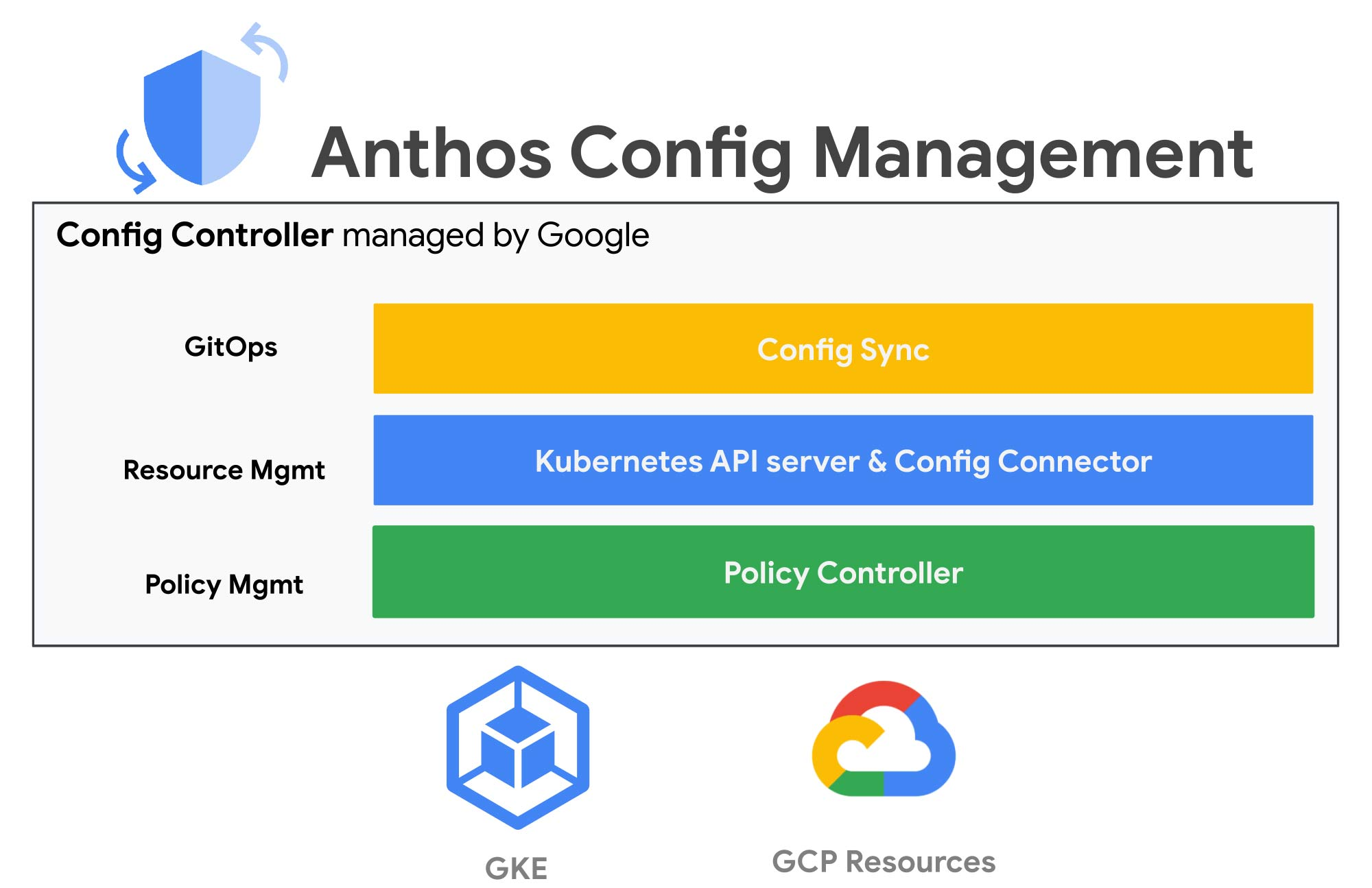

Today, as a part of Anthos Config Management, we are introducing Config Controller, a hosted service to provision and orchestrate Google Cloud resources. This service offers an API endpoint that can provision, actuate, and orchestrate Google Cloud resources the same way it manages Kubernetes resources. You don’t have to install or manage the components—or be an expert in Kubernetes resource management or GitOps—because Google Cloud will manage them for you.

Today, we’re also announcing that Anthos Config Management, already used for hybrid and multicloud deployments, is now available for Google Kubernetes Engine (GKE) as a standalone service. GKE customers can now take advantage of config and policy automation in Google Cloud at a low incremental per-cluster cost.

These announcements deliver a whole new approach to config and policy management—one that’s descriptive or declarative, rather than procedural or imperative. Let’s take a closer look.

Let Kubernetes automate your configs and policies

Development teams need stable and secure environments to build apps quickly and deploy them easily. Today, platform teams often scramble to provision and configure the necessary infrastructure components, apps, and cloud services the same way—in many different places—and keep them all up-to-date, patched, and secure.

The struggle is real, and it’s not new. Platform administrators have been hand-crafting and partially automating configuration with new infrastructure-as-code languages and tools for years. We can spin up new containerized dev environments in minutes in the cloud and on-prem. We can push code to production hundreds of times a day with automated CI/CD processes. So why do configurations drift and fall out of sync with production?

Because it takes time and toil to develop a consistent and automated way to describe what we want, create what we need, and repair what we break. The declarative Kubernetes Resource Model (KRM) reduces this toil with a consistent way to define and update resources: describe what you want and Kubernetes makes it happen. Anthos Config Management (ACM) makes it even easier by adding pre-built, opinionated config and policy automations called blueprints, such as one that creates a secure landing zone or one that provisions a GKE cluster. Blueprints help platform teams configure both Kubernetes and Google Cloud services the same way every time.

Describe your intent with a single resource model

The Kubernetes API server includes controllers that make sure your container infrastructure state always matches the state you declare in YAML. For example, Kubernetes can ensure that a load balancer and service proxy are always created, connected to the right pods, and configured properly. But KRM can manage more than just container infrastructure. You can use KRM to deploy and manage resources such as cloud databases, storage, and networks. It can also manage your custom-developed apps and services using custom resource definitions.
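The declare-then-reconcile idea can be sketched in a few lines of Python. This is only an illustration of the pattern, not how Kubernetes controllers are implemented: the `Action`, `plan`, and `reconcile` names are hypothetical, and resources are modeled as plain dictionaries keyed by name.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    verb: str  # "create", "update", or "delete"
    name: str  # name of the resource the action applies to


def plan(desired: dict[str, dict], actual: dict[str, dict]) -> list[Action]:
    """Compare declared state with observed state and list the needed actions."""
    actions = []
    for name, spec in sorted(desired.items()):
        if name not in actual:
            actions.append(Action("create", name))
        elif actual[name] != spec:
            actions.append(Action("update", name))
    for name in sorted(actual):
        if name not in desired:
            actions.append(Action("delete", name))
    return actions


def reconcile(desired: dict[str, dict], actual: dict[str, dict]) -> dict[str, dict]:
    """Apply the plan to a copy of the observed state and return the result."""
    result = dict(actual)
    for action in plan(desired, actual):
        if action.verb == "delete":
            del result[action.name]
        else:
            result[action.name] = desired[action.name]
    return result


if __name__ == "__main__":
    declared = {"web": {"replicas": 3}, "db": {"tier": "small"}}
    observed = {"web": {"replicas": 1}, "old-job": {}}
    for step in plan(declared, observed):
        print(step.verb, step.name)
```

Running `plan` again on the reconciled state returns an empty list, which is the property that makes the model declarative: the same declaration can be applied repeatedly without side effects.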

Create what you need from a single source of truth

With Anthos Config Management, you declare and set configurations once and forget them. You don’t have to be an expert in KRM or GitOps-style configuration because the hosted Config Controller service takes care of it. Config Controller provisions infrastructure, apps, and cloud services; configures them to meet your desired intent; monitors them for configuration drift; and applies changes every time you push a new resource declaration to your Git repository. Config changes are as easy as a git push—and easily integrate with your development workflows.

Anthos Config Management uses Config Sync to continuously reconcile the state of your registered clusters and resources—that means any GKE, Anthos, or other registered cluster—and makes sure unvetted changes are never pushed to live clusters. Anthos Config Management reduces the risk of dev or ops teams making any changes outside the Git source of truth by requiring code reviews and rolling back any breaking changes to a good working state. In short, using Anthos Config Management both encourages and enforces best practices.

Repair what breaks for automated compliance

Anthos Config Management’s Policy Controller makes it easier to create and enforce fully programmable policies across all connected clusters. Policies act as guardrails to prevent any changes to configuration from violating your custom security, operational, or compliance controls. For example, you can set policies to actively block any non-compliant API requests, require every namespace to have a label, prevent pods from running privileged containers, restrict the types of storage volumes a container can mount, and more.
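To make two of those guardrails concrete, here is a minimal Python sketch of an admission-style check. It is an analogy only: Policy Controller does not evaluate Python, and the function names `missing_labels`, `privileged_containers`, and `admit` are hypothetical. The only parts taken from Kubernetes are the manifest fields `metadata.labels` and `spec.containers[].securityContext.privileged`.

```python
def missing_labels(namespace: dict, required: list[str]) -> list[str]:
    """Return the required label keys that the namespace does not carry."""
    labels = (namespace.get("metadata") or {}).get("labels") or {}
    return [key for key in required if key not in labels]


def privileged_containers(pod: dict) -> list[str]:
    """Return the names of containers that request privileged mode.

    Only spec.containers is inspected; init containers are ignored
    to keep the sketch short.
    """
    containers = (pod.get("spec") or {}).get("containers") or []
    return [
        c.get("name", "<unnamed>")
        for c in containers
        if (c.get("securityContext") or {}).get("privileged") is True
    ]


def admit(resource: dict, required_labels: list[str]) -> tuple[bool, list[str]]:
    """Decide whether a request is allowed, and explain why if it is not."""
    violations: list[str] = []
    kind = resource.get("kind")
    if kind == "Namespace":
        for key in missing_labels(resource, required_labels):
            violations.append(f"namespace is missing required label '{key}'")
    elif kind == "Pod":
        for name in privileged_containers(resource):
            violations.append(f"container '{name}' must not run privileged")
    return (not violations, violations)


if __name__ == "__main__":
    request = {"kind": "Namespace", "metadata": {"name": "payments"}}
    allowed, reasons = admit(request, ["owner"])
    print(allowed, reasons)
```

A guardrail of this shape rejects the request before it is persisted, which is what distinguishes blocking enforcement from after-the-fact auditing.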

Policy Controller is based on the open source Open Policy Agent Gatekeeper project, which Google Cloud augments with a ready-to-use library of pre-built policies for the most common security and compliance controls. You can establish a secure baseline without deep expertise, and ACM applies policies to a single cluster (for example, GKE) or to a distributed set of Anthos clusters on-prem or on other cloud platforms. You can also add your own custom policies: your security experts write constraint templates, which anyone can then invoke in different dev or production environments without learning how to write or manage policy code. The built-in audit functionality lets platform admins review all violations, simplifying compliance reviews.
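The split between a constraint template (written once by a policy expert) and a constraint (a parameterized use of that template) can be illustrated with a small Python model. This is a sketch under loud assumptions: real Gatekeeper templates are written in Rego and applied as Kubernetes resources, and the classes and functions below (`ConstraintTemplate`, `Constraint`, `volume_type_rule`, `audit`) are hypothetical stand-ins that only mirror the concept.

```python
from dataclasses import dataclass, field
from typing import Callable

# A rule takes (resource, parameters) and returns violation messages.
Rule = Callable[[dict, dict], list[str]]


@dataclass
class ConstraintTemplate:
    name: str
    rule: Rule  # the policy logic, written once


@dataclass
class Constraint:
    name: str
    template: ConstraintTemplate
    kinds: list[str]  # resource kinds this constraint applies to
    parameters: dict = field(default_factory=dict)

    def violations(self, resource: dict) -> list[str]:
        if resource.get("kind") not in self.kinds:
            return []
        return self.template.rule(resource, self.parameters)


def volume_type_rule(resource: dict, parameters: dict) -> list[str]:
    """Flag pod volumes whose type is not in parameters['allowed']."""
    allowed = set(parameters.get("allowed", []))
    messages = []
    for volume in (resource.get("spec") or {}).get("volumes") or []:
        for volume_type in volume:
            if volume_type != "name" and volume_type not in allowed:
                messages.append(
                    f"volume '{volume.get('name')}' uses disallowed type '{volume_type}'"
                )
    return messages


def audit(resources: list[dict], constraints: list[Constraint]) -> list[tuple[str, str, str]]:
    """Scan existing resources and report (constraint, resource, message) findings."""
    findings = []
    for resource in resources:
        resource_name = (resource.get("metadata") or {}).get("name", "<unnamed>")
        for constraint in constraints:
            for message in constraint.violations(resource):
                findings.append((constraint.name, resource_name, message))
    return findings
```

In this model, a team that wants stricter volume rules in production creates a second `Constraint` with a shorter `allowed` list and reuses the same template, without touching the rule logic.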

Configure and control every cluster consistently

The hosted service, Config Controller, which runs Config Connector, Config Sync, and Policy Controller for you, is available in Preview. Config Controller leverages Config Connector, which lets you manage Google Cloud resources the same way you manage other Kubernetes resources, with continuous monitoring and self-healing. For example, you can ask Config Connector to create a Cloud SQL instance and a database. Config Connector can manage more than 60 Google Cloud resources, including Bigtable, BigQuery, Pub/Sub, Spanner, Cloud Storage, and Cloud Load Balancer.
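As a rough illustration of the Cloud SQL example, the Python sketch below builds two KRM-style resource declarations and prints them as a JSON list that could be committed to a repository. The `apiVersion`, `kind`, and `spec` field names follow the Config Connector resource reference as best recalled and should be verified against it before use; the helper names, resource names, region, database version, and tier are all illustrative assumptions.

```python
import json

# Assumption: API group/version used by Config Connector for Cloud SQL kinds.
SQL_API_VERSION = "sql.cnrm.cloud.google.com/v1beta1"


def sql_instance(name: str, region: str, database_version: str, tier: str) -> dict:
    """Declare a Cloud SQL instance (field names to be checked against the docs)."""
    return {
        "apiVersion": SQL_API_VERSION,
        "kind": "SQLInstance",
        "metadata": {"name": name},
        "spec": {
            "region": region,
            "databaseVersion": database_version,
            "settings": {"tier": tier},
        },
    }


def sql_database(name: str, instance_name: str) -> dict:
    """Declare a database that refers to its instance by resource name."""
    return {
        "apiVersion": SQL_API_VERSION,
        "kind": "SQLDatabase",
        "metadata": {"name": name},
        "spec": {"instanceRef": {"name": instance_name}},
    }


def as_manifest_list(*resources: dict) -> str:
    """Bundle resources into one JSON document of kind List."""
    bundle = {"apiVersion": "v1", "kind": "List", "items": list(resources)}
    return json.dumps(bundle, indent=2)


if __name__ == "__main__":
    instance = sql_instance("orders-instance", "us-central1", "POSTGRES_13", "db-custom-1-3840")
    database = sql_database("orders", instance["metadata"]["name"])
    print(as_manifest_list(instance, database))
```

The database declaration points at the instance by name rather than by any provisioning step, so the order of creation is left to the controller rather than to a script.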

Once you’ve embraced a consistent resource model and are using ACM to enforce configuration and policy automatically for individual resources, take the next step with blueprints. A blueprint is a package of config and policy that documents an opinionated solution to deploy and manage multiple resources at once. Blueprints capture best practices and policy guardrails, package them together, and let you deploy them as a complete solution to any Kubernetes cluster using Config Controller. Use blueprints to manage multiple resources at once, or to create customized landing zones: compliant, properly networked and secured, and easily duplicated environments that meet your own best practice guidelines.

Vienna Insurance Group uses Anthos Config Management at its viesure innovation center and credits it with improving its compliance posture.

"Google's Landing Zones and Config Controller equipped us with an extensive set of tools to set up our Google Cloud infrastructure quickly and securely. Their policy controllers are a powerful instrument for ensuring compliance for all our Google Cloud resources." —Rene Schakmann, Head of Technology at viesure innovation center GmbH

Get started today

Anthos Config Management on GKE is generally available today, and GKE customers can use it at a low incremental per-cluster cost. By making it available to GKE customers, and offering it as a hosted, managed service for everyone, we’re making it easier than ever for you to use “KRM as a service” to simplify and secure Kubernetes resource management from the data center to the cloud.

To learn more about the technical details behind ACM, check out this recent episode of the Kubernetes Podcast from Google with Max Smythe, the tech lead for Policy Controller.