Expanding our AI-optimized infrastructure portfolio: Introducing Cloud TPU v5e and announcing A3 GA

Amin Vahdat

VP/GM ML, Systems, and Cloud AI, Google

Mark Lohmeyer

VP & GM, Compute and AI Infrastructure

We’re at a once-in-a-generation inflection point in computing. The traditional ways of designing and building computing infrastructure are no longer adequate for the exponentially growing demands of workloads like generative AI and LLMs. In fact, the number of parameters in LLMs has increased by 10x per year over the past five years. As a result, customers need AI-optimized infrastructure that is both cost effective and scalable.

For two decades, Google has built some of the industry’s leading AI capabilities: from the creation of Google’s Transformer architecture that makes gen AI possible, to our AI-optimized infrastructure, which is built to deliver the global scale and performance required by Google products that serve billions of users like YouTube, Gmail, Google Maps, Google Play, and Android. We are excited to bring decades of innovation and research to Google Cloud customers as they pursue transformative opportunities in AI. We offer a complete solution for AI, from computing infrastructure optimized for AI to the end-to-end software and services that support the full lifecycle of model training, tuning, and serving at global scale.

Google Cloud offers leading AI infrastructure technologies, TPUs and GPUs, and today, we are proud to announce significant enhancements to both product portfolios. First, we are expanding our AI-optimized infrastructure portfolio with Cloud TPU v5e, the most cost-efficient, versatile, and scalable Cloud TPU to date, now available in preview. TPU v5e offers integration with Google Kubernetes Engine (GKE), Vertex AI, and leading frameworks such as PyTorch, JAX, and TensorFlow so you can get started with easy-to-use, familiar interfaces. We’re also delighted to announce that our A3 VMs, based on NVIDIA H100 GPUs and delivered as a GPU supercomputer, will be generally available next month to power your large-scale AI models.

TPU v5e: The performance and cost efficiency sweet spot

Cloud TPU v5e is purpose-built to bring the cost-efficiency and performance required for medium- and large-scale training and inference. TPU v5e delivers up to 2x higher training performance per dollar and up to 2.5x inference performance per dollar for LLMs and gen AI models compared to Cloud TPU v4. At less than half the cost of TPU v4, TPU v5e makes it possible for more organizations to train and deploy larger, more complex AI models.
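As a rough illustration of what a performance-per-dollar gain means for a budget, the sketch below converts the "up to 2x" training and "up to 2.5x" inference figures quoted above into the cost of running a fixed workload. The baseline cost and the function name are hypothetical, chosen only for the example; actual savings depend on the model and configuration.

```python
def cost_at_gain(baseline_cost: float, perf_per_dollar_gain: float) -> float:
    """Cost of the same workload after a performance-per-dollar improvement.

    If each dollar buys `perf_per_dollar_gain` times more work, a fixed
    amount of work costs `baseline_cost / perf_per_dollar_gain`.
    """
    if perf_per_dollar_gain <= 0:
        raise ValueError("perf_per_dollar_gain must be positive")
    return baseline_cost / perf_per_dollar_gain


# Hypothetical cost of a workload on the baseline hardware (assumption).
BASELINE_COST_USD = 10_000.0

# Best-case gains quoted in the text ("up to" figures, not guarantees).
training_cost = cost_at_gain(BASELINE_COST_USD, 2.0)
inference_cost = cost_at_gain(BASELINE_COST_USD, 2.5)

print(f"Training:  ${training_cost:,.0f} instead of ${BASELINE_COST_USD:,.0f}")
print(f"Inference: ${inference_cost:,.0f} instead of ${BASELINE_COST_USD:,.0f}")
```

Because the quoted gains are upper bounds, the results are best read as a floor on cost rather than an estimate.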

But you’re not sacrificing performance or flexibility to get these cost benefits: we balance performance, flexibility, and efficiency with TPU v5e pods, allowing up to 256 chips to be interconnected with an aggregate bandwidth of more than 400 Tb/s and 100 petaOps of INT8 performance. TPU v5e is also incredibly versatile, with support for eight different virtual machine (VM) configurations, ranging from one chip to more than 250 chips within a single slice. This allows customers to choose the right configurations to serve a wide range of LLM and gen AI model sizes.
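To put the pod-level figures in per-chip terms, the following sketch divides the aggregate numbers quoted above by the 256 chips in a pod. It assumes the aggregates split evenly across chips, which is a simplification, and it treats "more than 400 Tb/s" as a lower bound. The constant and function names are illustrative.

```python
# Pod-level figures as quoted in the text.
POD_CHIPS = 256
POD_INT8_PETAOPS = 100.0     # aggregate INT8 performance
POD_BANDWIDTH_TBPS = 400.0   # "more than 400 Tb/s", so a lower bound


def per_chip(aggregate: float, chips: int) -> float:
    """Evenly divide an aggregate figure across chips (simplifying assumption)."""
    if chips <= 0:
        raise ValueError("chips must be positive")
    return aggregate / chips


# 1 petaOps = 1,000 teraOps.
int8_teraops_per_chip = per_chip(POD_INT8_PETAOPS * 1000, POD_CHIPS)
bandwidth_tbps_per_chip = per_chip(POD_BANDWIDTH_TBPS, POD_CHIPS)

print(f"INT8 per chip:      ~{int8_teraops_per_chip:.1f} teraOps")
print(f"Bandwidth per chip: at least ~{bandwidth_tbps_per_chip:.2f} Tb/s")
```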

“Cloud TPU v5e consistently delivered up to 4X greater performance per dollar than comparable solutions in the market for running inference on our production ASR model. The Google Cloud software stack is well-suited for production AI workloads and we are able to take full advantage of the TPU v5e hardware, which is purpose-built for running advanced deep-learning models. This powerful combination of hardware and software dramatically accelerated our ability to provide cost-effective AI solutions to our customers.” — Domenic Donato, VP of Technology, AssemblyAI

“Our speed benchmarks are demonstrating a 5X increase in the speed of AI models when training and running on Google Cloud TPU v5e. We are also seeing a tremendous improvement in the scale of our inference metrics: we can now process 1000 seconds in one real-time second for in-house speech-to-text and emotion prediction models — a 6x improvement.” — Wonkyum Lee, Head of Machine Learning, Gridspace

TPU v5e: Easy to use, versatile, and scalable

Orchestrating large-scale AI workloads with scale-out infrastructure has historically required manual effort to handle failures, logging, monitoring, and other foundational operations. Today we’re making it simpler to operate TPUs, with the general availability of Cloud TPUs in GKE, the most scalable leading Kubernetes service in the industry today [1]. Customers can now improve AI development productivity by leveraging GKE to manage large-scale AI workload orchestration on Cloud TPU v5e, as well as on Cloud TPU v4.

And for organizations that prefer the simplicity of managed services, Vertex AI now supports training with various frameworks and libraries using Cloud TPU VMs.

Cloud TPU v5e also provides built-in support for leading AI frameworks such as JAX, PyTorch, and TensorFlow, along with popular open-source tools like Hugging Face’s Transformers and Accelerate, PyTorch Lightning, and Ray. Today we’re pleased to share that we are further strengthening our support for PyTorch with the upcoming PyTorch/XLA 2.1 release, which includes Cloud TPU v5e support along with new features such as model and data parallelism for large-scale model training.

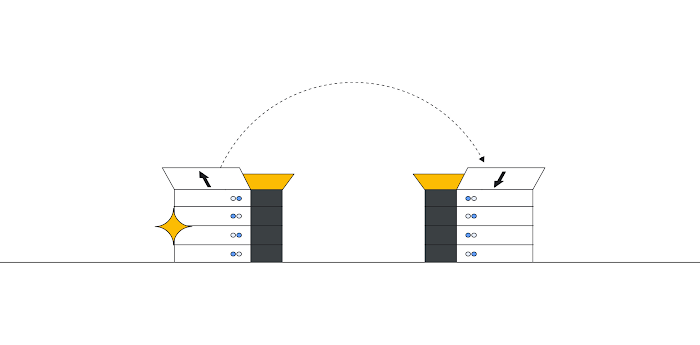

Finally, to make it easier to scale up training jobs, we’re introducing Multislice technology in preview, which allows users to easily scale AI models beyond the boundaries of physical TPU pods — up to tens of thousands of Cloud TPU v5e or TPU v4 chips. Until now, training jobs using TPUs were limited to a single slice of TPU chips, capping the size of the largest jobs at a maximum slice size of 3,072 chips for TPU v4. With Multislice, developers can scale workloads up to tens of thousands of chips over inter-chip interconnect (ICI) within a single pod, or across multiple pods over a data center network (DCN). Multislice technology powered the creation of our state-of-the-art PaLM models; now we are pleased to make this innovation available to Google Cloud customers.
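The scaling idea described above can be sketched as a simple planning calculation: a job that fits within the maximum slice size runs on one slice, and a larger job is spread over several slices. This is only an illustration, not the Multislice API. The `SlicePlan` and `plan_slices` names are hypothetical, the sketch assumes chips inside a slice communicate over ICI while slices communicate over DCN, and it ignores which slice shapes are actually offered.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class SlicePlan:
    """Hypothetical description of a multi-slice job layout."""
    num_slices: int       # slices, assumed to be linked over the DCN
    chips_per_slice: int  # chips within a slice, assumed to be linked over ICI

    @property
    def total_chips(self) -> int:
        return self.num_slices * self.chips_per_slice


def plan_slices(target_chips: int, max_slice_chips: int) -> SlicePlan:
    """Choose the fewest full-size slices that provide at least target_chips."""
    if target_chips <= 0 or max_slice_chips <= 0:
        raise ValueError("chip counts must be positive")
    if target_chips <= max_slice_chips:
        # The job fits in a single slice, so no cross-slice traffic is needed.
        return SlicePlan(num_slices=1, chips_per_slice=target_chips)
    num_slices = math.ceil(target_chips / max_slice_chips)
    return SlicePlan(num_slices=num_slices, chips_per_slice=max_slice_chips)


# Slice limits taken from the text: 256 chips for TPU v5e, 3,072 for TPU v4.
v5e_plan = plan_slices(target_chips=4096, max_slice_chips=256)
v4_plan = plan_slices(target_chips=10_000, max_slice_chips=3072)

print(v5e_plan, v5e_plan.total_chips)
print(v4_plan, v4_plan.total_chips)
```

Rounding up to whole slices means the plan can provide more chips than requested, as in the TPU v4 example, where four slices are needed to cover 10,000 chips.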

“Easily one of the most exciting features for our development team is the unified toolset. The amount of wasted time and hassle they can avoid by not having to make different tools fit together will streamline the process of taking AI from idea to training to deployment. For example, configuring and deploying your AI models on Google Kubernetes Engine and Google Compute Engine along with Google Cloud TPU infrastructure enables our team to speed up the training and inference of the latest foundation models at scale, while enjoying support for autoscaling, workload orchestration, and automatic upgrades.” — Yoav HaCohen, Core Generative AI Team Lead, Lightricks

We’re thrilled to make our TPUs more accessible than ever. In the video below, you can get a rare peek under the hood at the physical data center infrastructure that makes all of this possible.

A3 VMs: Powering GPU supercomputers for gen AI workloads

To empower customers to take advantage of the rapid advancements in AI, Google Cloud partners closely with NVIDIA, delivering new AI cloud infrastructure, developing cutting-edge open-source tools for NVIDIA GPUs, and building workload-optimized end-to-end solutions specifically for gen AI. Google Cloud, along with NVIDIA, aims to make AI more accessible for a broad set of workloads and that vision is now being realized. For example, earlier this year, Google Cloud was the first cloud provider to offer NVIDIA L4 Tensor Core GPUs with the launch of the G2 VM.

Today, we’re thrilled to announce that A3 VMs will be generally available next month. Powered by NVIDIA’s H100 Tensor Core GPUs, which feature the Transformer Engine to address trillion-parameter models, A3 VMs are purpose-built to train and serve especially demanding gen AI workloads and LLMs. Combining NVIDIA GPUs with Google Cloud’s leading infrastructure technologies provides massive scale and performance and is a huge leap forward in supercomputing capabilities, with 3x faster training and 10x greater networking bandwidth compared to the prior generation. A3 is also able to operate at scale, enabling users to scale models to tens of thousands of NVIDIA H100 GPUs.

The A3 VM features dual 4th Gen Intel Xeon Scalable processors, eight NVIDIA H100 GPUs per VM, and 2TB of host memory. Built on the latest NVIDIA HGX H100 platform, the A3 VM delivers 3.6 TB/s bisectional bandwidth between the eight GPUs via fourth-generation NVIDIA NVLink technology. Improvements in the A3 networking bandwidth are powered by our Titanium network adapter and NVIDIA Collective Communications Library (NCCL) optimizations. In all, A3 represents a huge boost for AI innovators and enterprises looking to build the most advanced AI models.

“Midjourney is an independent research lab exploring new mediums of thought and expanding the imaginative powers of the human species. Our platform is powered by Google Cloud’s latest G2 and A3 GPUs. G2 gives us a 15% efficiency improvement over T4s, and A3 now generates 2x faster than A100s. This allows our users the ability to stay in a flow state as they explore and create.” — David Holz, Founder and CEO, Midjourney

Flexibility of choice with Google Cloud AI infrastructure

There’s no one-size-fits-all when it comes to AI workloads — innovative customers like Anthropic, Character.AI, and Midjourney consistently demand not just improved performance, price, scale, and ease of use, but also the flexibility to choose the infrastructure optimized for precisely their workloads. That’s why, together with our industry hardware partners like NVIDIA, Intel, AMD, Arm, and others, we provide customers with a wide range of AI-optimized compute options across TPUs, GPUs, and CPUs for training and serving the most compute-intensive models.

“Anthropic is an AI safety and research company focused on building reliable, interpretable, and steerable AI systems. We’re excited to be working with Google Cloud, with whom we have been collaborating to efficiently train, deploy and share our models. Google Kubernetes Engine (GKE) allows us to run and optimize our GPU and TPU infrastructure at scale, while Vertex AI will enable us to distribute our models to customers via the Vertex AI Model Garden. Google’s next-generation AI infrastructure powered by A3 and TPU v5e with Multislice will bring price-performance benefits for our workloads as we continue to build the next wave of AI.” — Tom Brown, Co-Founder, Anthropic

To request access to Cloud TPU v5e, reach out to your Google Cloud account manager. To express interest in the A3 VMs, register your interest with Google Cloud.

1. As of August 2023.