Introducing security configuration for gRPC apps with Traffic Director

Megan Yahya

Product Manager, Cloud IDS

Sanjay Pujare

Tech Lead, Proxyless gRPC Security

Developers use the gRPC RPC framework for use cases like backend service-to-service communications or client-server communications between web, mobile and cloud. In July 2020, we announced support for proxyless gRPC services to reduce operational complexity and improve performance of service meshes with Traffic Director, our managed control plane for application networking. Then, in August, we added support for advanced traffic management features such as route matching and traffic splitting. And now, gRPC services can be configured via the Traffic Director control plane to use TLS and mutual TLS to establish secure communication with one another. In this blog post we will talk about why this feature is important and the steps needed to get started.

Why gRPC Services?

gRPC is a general-purpose RPC framework, developed as an open-source, HTTP/2-based version of a communication protocol that was used internally at Google. Today, gRPC is used by more than one thousand organizations worldwide.

gRPC supports a variety of use cases and deployment models, from the communication between backend services mentioned above to clients of all kinds accessing Google Cloud services. gRPC also supports multi-language environments; for example, it enables a Java client to talk to a server written in Go.

gRPC is implemented on top of HTTP/2 and mainly uses protobuf as the serialization/deserialization mechanism (but can use alternative mechanisms). The combination of the two provides organizations with a solid RPC framework that addresses their emerging problems as they scale:

Support for multiple languages

Support for automatic client library generation, which increases agility by reducing the amount of boilerplate code

Protobuf support for evolving message definitions (backward compatibility) and providing a single source of truth

HTTP/2's binary framing for efficiency and performance

HTTP/2’s support for concurrent streams and bi-directional streaming

Proxyless gRPC services

gRPC has an important role to play in today’s microservices-based architectures. When big monolithic applications are broken down into smaller microservices, in-process calls between components of the monolith become network calls between microservices. gRPC makes it easy for services to communicate, both because of the benefits described in the previous section and because of the support for the xDS protocol that we added last year.

Microservices-based applications require a service-mesh framework to provide load balancing, traffic management and security. By adding xDS support to gRPC, we enabled any control plane that supports xDS protocols to talk directly to a gRPC service, to provide it with service mesh policies.

Our first launch, in the summer of 2020, enabled gRPC clients to process and apply policies for traffic management and load balancing, with Traffic Director officially supported as the control plane. Because load balancing happens on the client side, you don’t need to change your gRPC servers at all. The second launch, in August 2020, added fine-grained traffic routing and weight-based traffic splitting.
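To make weight-based traffic splitting concrete, here is a minimal Python sketch of the idea: a client picks a destination for each call with probability proportional to a configured weight. This is only an illustration of the concept, not how gRPC or Traffic Director implement it; the backend names and the 90/10 weights are hypothetical.

```python
import random
from collections import Counter

# Hypothetical split: roughly 90% of calls go to v1, 10% to v2.
SPLIT = [("helloworld-v1", 90), ("helloworld-v2", 10)]


def pick_backend(weighted_backends, rng=random):
    """Pick one backend name with probability proportional to its weight."""
    names = [name for name, _ in weighted_backends]
    weights = [weight for _, weight in weighted_backends]
    return rng.choices(names, weights=weights, k=1)[0]


def simulate(num_calls, seed=0):
    """Count how many of num_calls land on each backend."""
    rng = random.Random(seed)  # seeded so the simulation is repeatable
    return Counter(pick_backend(SPLIT, rng) for _ in range(num_calls))
```

Calling `simulate(10000)` should send about a tenth of the calls to the second backend, which is the behavior you would want when canarying a new version.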

But how do we make the network calls as secure as intra-process calls? We would need to use gRPC over TLS or mTLS. That’s where security in service mesh comes in. The latest version of gRPC allows you to configure policies for encrypted service-to-service communications.
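The difference between TLS and mTLS comes down to who has to present a certificate. As a rough sketch, using Python's standard `ssl` module rather than any gRPC or Traffic Director API, a server-side context for the two modes might look like this. The function name and its parameters are hypothetical, and the file paths are left optional so the sketch can run without real certificates.

```python
import ssl


def server_context(require_client_cert, cert_file=None, key_file=None, ca_file=None):
    """Build a server-side TLS context.

    require_client_cert=False -> plain TLS: only the server presents a certificate.
    require_client_cert=True  -> mutual TLS: the client must present one too.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    if cert_file and key_file:
        # The server's own identity (hypothetical paths supplied by the caller).
        ctx.load_cert_chain(cert_file, key_file)
    if require_client_cert:
        ctx.verify_mode = ssl.CERT_REQUIRED
        if ca_file:
            # Trust bundle used to validate client certificates.
            ctx.load_verify_locations(cafile=ca_file)
    else:
        ctx.verify_mode = ssl.CERT_NONE
    return ctx
```

With the feature described in this post, this choice is expressed as a policy in the control plane instead of being hard-coded in each service.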

Security in service mesh

Historically, enterprises have used web application firewalls (WAFs) and firewalls at their perimeters to protect their services, databases and devices against external threats. As they have migrated workloads to the cloud, running VMs and containers and using cloud services, their security requirements have evolved as well.

In a defense-in-depth model, organizations maintain their perimeter protection using firewalls and web application firewalls for the whole app. Today, on top of that, organizations want a “zero trust” architecture where additional security policy decisions are made and enforced as close to resources as possible. When we talk about resources, we are talking about all enterprise assets—everything in an organization’s data center.

Organizations now want to be able to enforce policies, not just at the edge, but also between their trusted backend services. They want to be able to apply security rules to enable encryption for all the communications and also rules to control access based on service identities and Layer-7 headers and attributes.

The solution we are proposing today solves the most common pain points for establishing security between services, including:

Managing and installing certs on clients and servers

Managing trust bundles

Tying identities and issuing certificates from a trusted CA

Rotating certificates

Modifying code to read and use the certificates
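To give a sense of the last two pain points, here is a small Python sketch of the kind of code a service might otherwise carry just to notice that a certificate file has been rotated and re-read it. The class name and the file layout are hypothetical; this is not the mechanism gRPC itself uses.

```python
import os


class ReloadingCert:
    """Re-reads a certificate file whenever its modification time changes."""

    def __init__(self, path):
        self.path = path
        self._mtime_ns = None
        self._pem = None

    def current(self):
        """Return the latest file contents, reloading only after a change."""
        mtime_ns = os.stat(self.path).st_mtime_ns
        if mtime_ns != self._mtime_ns:
            with open(self.path, "rb") as f:
                self._pem = f.read()
            self._mtime_ns = mtime_ns
        return self._pem
```

Every service would need something like this, plus error handling and wiring into its TLS stack, which is exactly the toil a managed approach is meant to remove.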

TLS/mTLS for gRPC proxyless services

Imagine a world where your infrastructure provides workload identities, CAs and certificate management tied to those workload identities. In addition, workload libraries are able to use these facilities in their service mesh communication to provide service-to-service TLS/mTLS and authorization. Just think how much time your developers and administrators would save!

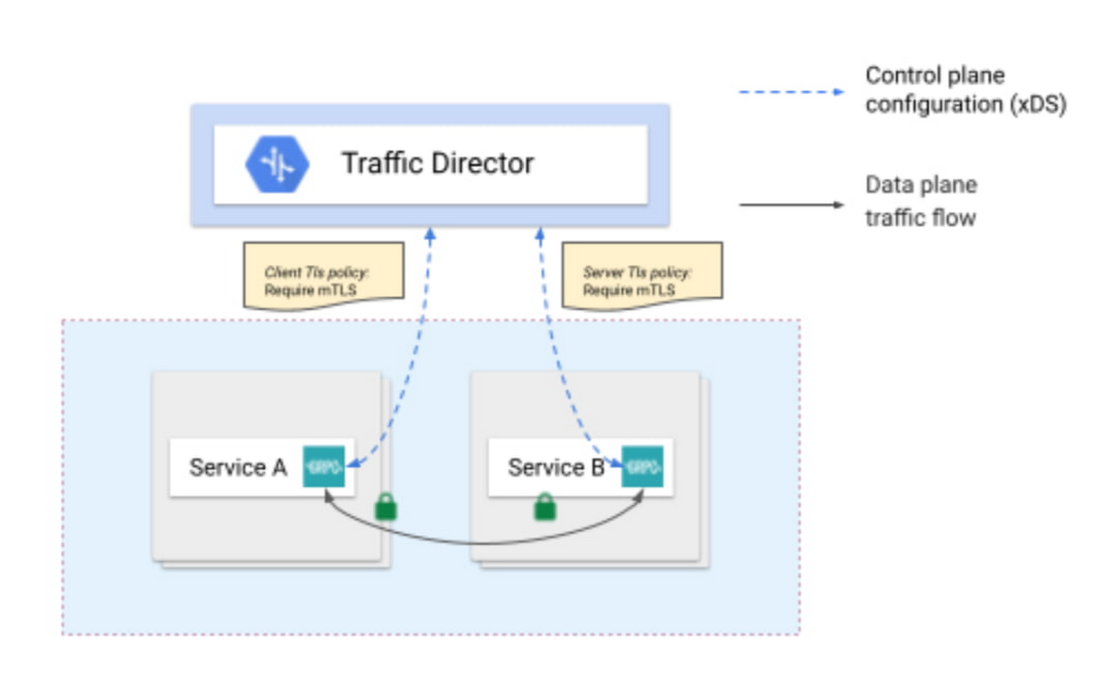

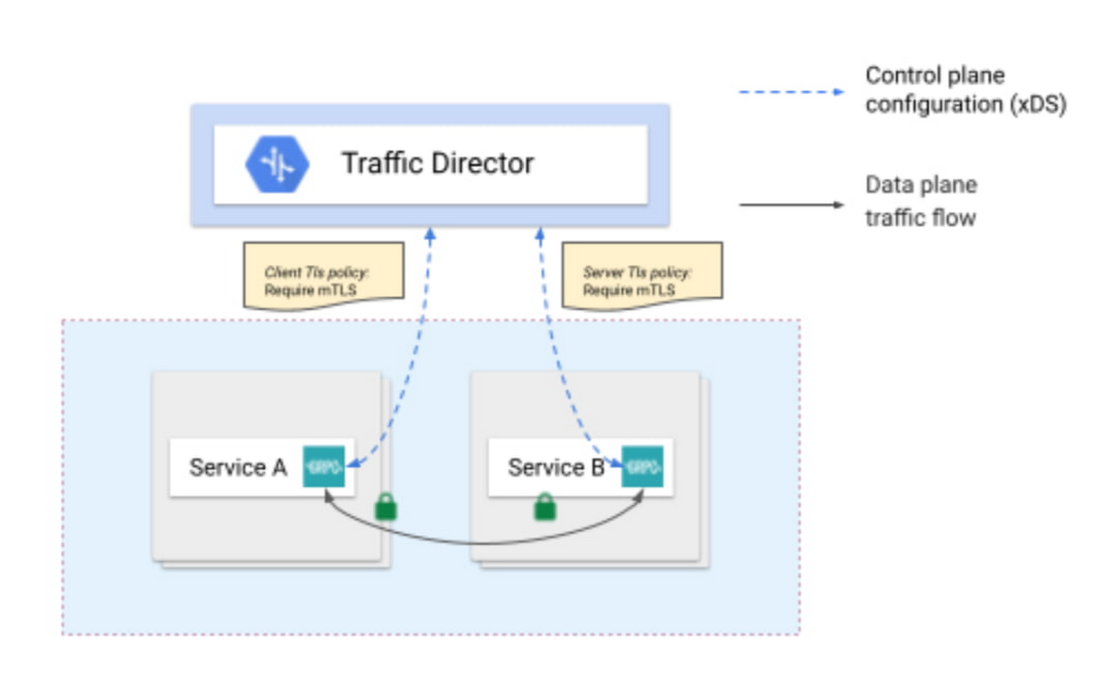

By configuring gRPC services via Traffic Director, you have all the necessary tools to establish security in an automated fashion between your services. Simply configure a security policy for your client and server, and Traffic Director distributes it to your services. A GKE workload certificate service generates the appropriate SPIFFE ID and sends a CSR (Certificate Signing Request) to the Google Cloud Certificate Authority (CA) Service, a managed private CA. CA Service signs the CSR and issues the certificates. Finally, GKE’s certificate provider makes the signed certificates available to the gRPC application via a “certificate volume”, and the application uses those certificates to encrypt and authenticate the traffic between your services.
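A SPIFFE ID is a URI that names a workload within a trust domain. The Python sketch below builds and parses one, assuming a `spiffe://TRUST_DOMAIN/ns/NAMESPACE/sa/SERVICE_ACCOUNT` layout. That path layout, the helper names and the example values are assumptions made for illustration; this post does not spell out the exact format GKE uses.

```python
from urllib.parse import urlparse


def build_spiffe_id(trust_domain, namespace, service_account):
    """Compose a SPIFFE ID under the assumed ns/<namespace>/sa/<account> layout."""
    return f"spiffe://{trust_domain}/ns/{namespace}/sa/{service_account}"


def parse_spiffe_id(spiffe_id):
    """Split a SPIFFE ID into its parts, rejecting anything outside the assumed layout."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    parts = parsed.path.strip("/").split("/")
    if len(parts) != 4 or parts[0] != "ns" or parts[2] != "sa":
        raise ValueError(f"unexpected SPIFFE path: {parsed.path!r}")
    return {
        "trust_domain": parsed.netloc,
        "namespace": parts[1],
        "service_account": parts[3],
    }
```

For example, `parse_spiffe_id(build_spiffe_id("example.test", "default", "payments"))` returns the three components again, which is the information a peer would check when authenticating the other side of an mTLS connection.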

In summary, using the new security features, you get the following benefits:

Toil-free provisioning of keys and certificates to all services in your mesh

Added security because of automatic rotation of keys and certificates

Integration with GKE, including capabilities such as deployment descriptors and labels

High availability of Google managed services such as Traffic Director and CA Service for your service mesh

Security tied to Google Identity & Access Management, for service authorization based on authorized Google service accounts

Seamless interoperability with Envoy-based service meshes; for example, a service can be behind an Envoy proxy but the client uses gRPC proxyless service mesh security or vice versa

What’s next?

Next up, look for us to take security one step further by adding authorization features for service-to-service communications for gRPC proxyless services, and by supporting other deployment models where proxyless gRPC services run somewhere other than GKE, for example Compute Engine. We hope you'll join us, check out the setup guide and give us feedback.