TensorFlow Enterprise makes accessing data on Google Cloud faster and easier

Yu-Han Liu

Developer Programs Engineer, Google Cloud AI

Data is at the heart of all AI initiatives. Put simply, you need to collect and store a lot of it to train a deep learning model. And as accelerators such as GPUs and Cloud TPUs become more capable and more widely available, the speed at which data moves from its storage location to the training process is increasingly important.

TensorFlow, one of the most popular machine learning frameworks, was open sourced by Google in 2015. Although it caters to every user, those deploying it on Google Cloud can benefit from enterprise-grade support and performance from the creators of TensorFlow. That’s why we recently launched TensorFlow Enterprise—for AI-enabled businesses on Google Cloud.

In this post, we look at the improvements TensorFlow Enterprise offers for accessing data stored on Google Cloud. If you use Cloud Storage to store training data, jump to the Cloud Storage reader improvements section to see how TensorFlow Enterprise doubles data throughput from Cloud Storage. If you use BigQuery to store data, jump to the BigQuery reader section to learn how TensorFlow Enterprise lets you access BigQuery data directly in TensorFlow with high throughput.

Cloud Storage reader improvements

TensorFlow Enterprise introduces some improvements in the way TensorFlow Dataset reads data from Cloud Storage. To measure the effect of these improvements, we will run the same TensorFlow code with TensorFlow 1.14 and with TensorFlow Enterprise, and compare the average number of examples per second read from Cloud Storage. The code we run simply reads TFRecord files and prints the number of examples read per second.
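As a rough sketch of what such a benchmark can look like (not the exact code behind the measurements in this post), the snippet below builds a tf.data pipeline over TFRecord files with parallel_interleave and times how quickly batches arrive. It assumes eager execution, which is the default in TensorFlow 2.x; in 1.x you would call tf.enable_eager_execution() first. The helper names, the batch size, and the cycle_length value are illustrative choices, and the `train-*` glob is an assumption about how the files in the bucket are named.

```python
import time


def examples_per_second(batches, batch_size, clock=time.perf_counter):
    """Consume an iterable of batches; return (examples_read, examples_per_second)."""
    start = clock()
    num_batches = sum(1 for _ in batches)
    elapsed = clock() - start
    examples = num_batches * batch_size
    rate = examples / elapsed if elapsed > 0 else float("inf")
    return examples, rate


def make_dataset(file_pattern, batch_size, cycle_length=8):
    """Build a tf.data pipeline that interleaves reads across TFRecord files."""
    # Imported here so the timing helper above has no TensorFlow dependency.
    import tensorflow as tf

    files = tf.data.Dataset.list_files(file_pattern)
    records = files.apply(
        tf.data.experimental.parallel_interleave(
            tf.data.TFRecordDataset, cycle_length=cycle_length))
    # drop_remainder keeps every batch full, so batches * batch_size is exact.
    return records.batch(batch_size, drop_remainder=True)


def main():
    # Assumed glob: the post only names the bucket directory, not the files.
    pattern = "gs://cloud-samples-data/ai-platform/fake_imagenet/train-*"
    batch_size = 1024  # illustrative value
    dataset = make_dataset(pattern, batch_size).take(100)
    examples, rate = examples_per_second(dataset, batch_size)
    print("read %d examples at %.0f examples/sec" % (examples, rate))
```

Calling `main()` on a VM that can read the bucket prints a single throughput figure. Timing a fixed number of batches keeps runs comparable between TensorFlow versions.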

The output looks something like this:

The dataset we use for this experiment is the fake ImageNet data, a copy of which can be found at gs://cloud-samples-data/ai-platform/fake_imagenet.

We will run the same code on a Compute Engine VM, first with TensorFlow 1.14 and then with TensorFlow Enterprise, and compare the average number of examples per second. In our experiments we ran the code on a VM with 8 CPUs and 64 GB of memory, reading from a regional Cloud Storage bucket in the same region as the VM.

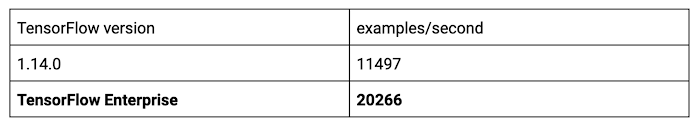

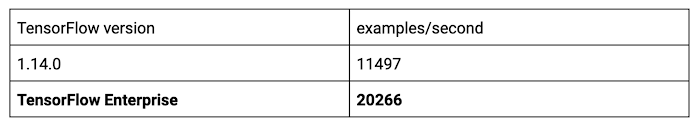

We see significant improvement:

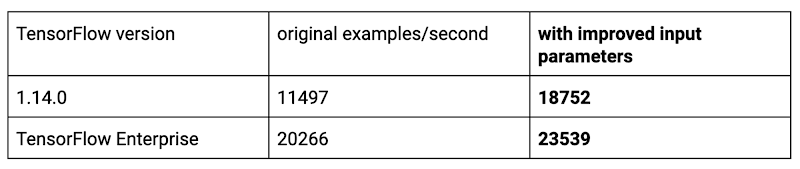

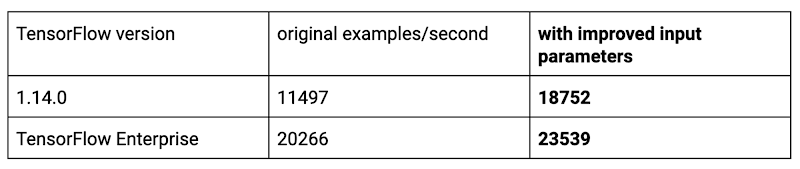

You can further improve data reading speed from Cloud Storage by adjusting various parameters TensorFlow provides. For example, when we replace the parallel_interleave call above with the following code:
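A minimal sketch of this kind of tuning, assuming illustrative values rather than the settings behind the numbers in this post: it raises the number of files read concurrently, allows out-of-order (sloppy) reads, enlarges the per-file read buffer, and prefetches batches. The function name, the constants, and the values are hypothetical; the parameters themselves belong to tf.data.experimental.parallel_interleave and tf.data.TFRecordDataset.

```python
# Illustrative values only; good settings depend on the VM and the dataset.
INTERLEAVE_OPTIONS = {
    "cycle_length": 16,             # number of files read concurrently
    "block_length": 1,              # records taken from a file before moving on
    "sloppy": True,                 # allow out-of-order records for throughput
    "prefetch_input_elements": 16,  # files opened ahead of time
}
READ_BUFFER_BYTES = 16 * 1024 * 1024  # per-file read buffer
PREFETCH_BATCHES = 2                  # batches prepared ahead of the consumer


def make_tuned_dataset(file_pattern, batch_size):
    """Build a TFRecord pipeline with explicit interleave and buffering settings."""
    import tensorflow as tf  # imported lazily; requires TensorFlow to be installed

    files = tf.data.Dataset.list_files(file_pattern)
    records = files.apply(
        tf.data.experimental.parallel_interleave(
            lambda path: tf.data.TFRecordDataset(
                path, buffer_size=READ_BUFFER_BYTES),
            **INTERLEAVE_OPTIONS))
    return records.batch(batch_size).prefetch(PREFETCH_BATCHES)
```

With sloppy reads enabled, record order is no longer deterministic, which is usually acceptable for training but worth keeping in mind when you need reproducible runs.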

We see further improvement:

There are other factors that affect reading speed from Cloud Storage. One common mistake is to store many small TFRecord files on Cloud Storage rather than fewer, larger ones. To achieve high throughput when TensorFlow reads data from Cloud Storage, you should group the data so that each file is more than 150 MB.
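To make the grouping guideline concrete, here is a small sketch that plans how existing small files could be merged into larger shards. It only produces the plan (which source files go into which shard) and does no I/O; the function name and the greedy strategy are illustrative assumptions, not part of TensorFlow Enterprise.

```python
MIN_SHARD_BYTES = 150 * 1024 * 1024  # the 150 MB guideline from the text


def plan_shards(files, min_shard_bytes=MIN_SHARD_BYTES):
    """Greedily group (name, size_in_bytes) pairs into shards.

    A shard is closed as soon as its total size reaches min_shard_bytes.
    Leftover files are folded into the last shard so that no shard ends up
    undersized (unless the whole input is smaller than the threshold).
    """
    shards, current, current_bytes = [], [], 0
    for name, size in files:
        current.append(name)
        current_bytes += size
        if current_bytes >= min_shard_bytes:
            shards.append(current)
            current, current_bytes = [], 0
    if current:
        if shards:
            shards[-1].extend(current)
        else:
            shards.append(current)
    return shards


MB = 1024 * 1024
small_files = [("a", 100 * MB), ("b", 60 * MB), ("c", 100 * MB),
               ("d", 80 * MB), ("e", 10 * MB)]
print(plan_shards(small_files))
# [['a', 'b'], ['c', 'd', 'e']]
```

Each inner list names the source files whose records would be rewritten into one output TFRecord file.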

BigQuery reader

TensorFlow Enterprise introduces the BigQuery reader that allows you to read data directly from BigQuery. For example:
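A minimal sketch of such a read, assuming the BigQuery reader from the tensorflow_io package and the public bigquery-public-data.samples.wikipedia table. The selected columns, the stream count, the batch size, and the helper name are illustrative, and `my-project` is a hypothetical ID for the project that is billed for the read session.

```python
from collections import OrderedDict

# Column name -> TensorFlow dtype name. An illustrative subset of the table.
SELECTED_COLUMNS = OrderedDict([
    ("title", "string"),
    ("id", "int64"),
    ("num_characters", "int64"),
])


def make_bigquery_dataset(billing_project, batch_size, requested_streams=4):
    """Return a tf.data.Dataset of batches read directly from BigQuery."""
    # Imported lazily; requires tensorflow and tensorflow_io to be installed.
    import tensorflow as tf
    from tensorflow_io.bigquery import BigQueryClient

    client = BigQueryClient()
    read_session = client.read_session(
        "projects/" + billing_project,  # parent: project billed for the session
        "bigquery-public-data",         # project that owns the table
        "wikipedia",                    # table ID
        "samples",                      # dataset ID
        list(SELECTED_COLUMNS),         # selected fields
        [tf.dtypes.as_dtype(name) for name in SELECTED_COLUMNS.values()],
        requested_streams=requested_streams)
    # Rows from the requested streams are read in parallel and then batched.
    return read_session.parallel_read_rows().batch(batch_size)


def main():
    dataset = make_bigquery_dataset("my-project", batch_size=32)  # hypothetical ID
    for batch in dataset.take(1):
        print(list(batch.keys()))
```

Running `main()` requires Google Cloud credentials with permission to create BigQuery read sessions in the billing project.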

The BigQuery reader uses BigQuery’s Storage API for parallelized data access to allow high data throughput. The output looks like the following:

With the code above, each batch of examples is structured as a Python OrderedDict whose keys are the column names specified in the read_session call, and whose values are TensorFlow tensors ready to be consumed by a model. For instance:
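One common way to consume such a batch is to split off a label column before handing the rest to a model. The sketch below uses a hypothetical helper and illustrative column names, with plain Python lists standing in for tensors so that it runs without TensorFlow.

```python
from collections import OrderedDict


def split_features_and_label(batch, label_column):
    """Split a batch (an OrderedDict of column -> values) into (features, label)."""
    features = OrderedDict(batch)  # shallow copy, so the input batch is untouched
    label = features.pop(label_column)
    return features, label


# Plain lists stand in for the tensors a real batch would hold.
batch = OrderedDict([
    ("title", ["Alpha", "Beta"]),
    ("num_characters", [120, 3400]),
])
features, label = split_features_and_label(batch, "num_characters")
print(list(features))  # ['title']
print(label)           # [120, 3400]
```

Because the helper only rearranges dictionary entries, the same function should also work when passed to `dataset.map`, which is one way to produce the (features, label) pairs that training APIs typically expect.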

Conclusion

The speed of getting data from its storage location to the machine learning training process is increasingly critical to the productivity of deep learning model builders. TensorFlow Enterprise can help by providing optimized performance and easy access to data sources, and continues to work with users to introduce improvements that make TensorFlow workloads more efficient on GCP.

For more information, please see our summary blog post on TensorFlow Enterprise. To get started, visit TensorFlow Enterprise.