The Prompt: Takeaways from hundreds of conversations about generative AI — part 1

Donna Schut

Head of Technical Solutions, Applied AI Engineering

Kevin Tsai

Head of Solutions Architecture, Applied AI Engineering

Business leaders are buzzing about generative AI. To help you keep up with this fast-moving, transformative topic, each week in “The Prompt,” we’ll bring you observations from our work with customers and partners, as well as the newest AI happenings at Google. In this edition, we look at major themes and lessons from hundreds of customer conversations.

Generative AI has the potential to profoundly impact organizations. More than ever, artificial intelligence (AI) is becoming accessible for different functions, whether someone has a machine learning (ML) background or not. Many leaders are thinking about how they can leverage generative AI to create seamless conversational experiences, get insights from complex data, and generate content at the click of a button.

We’ve spoken to hundreds of leaders across industries over the past year, and in this edition of “The Prompt,” part of a special two-part series, we’d like to share some of the major themes, use cases, and focus areas we’ve observed, to help you chart a course for your organization.

A new era

Generative AI is a type of machine learning (ML) that learns patterns and structures in training data and can then be used to generate new content or data and to support analysis tasks. Generative AI can create text, images, videos, audio, and more. Many generative AI use cases involve large language models (LLMs), which are ML algorithms that can recognize, predict, and generate human language.

LLMs are characterized by their multitask abilities, which let them perform well on a range of natural language processing tasks. For example, consider a model that's been pre-trained on a large, task-agnostic text corpus. The model uses a canonical language modeling objective and has been instruction-tuned. This model can perform well on many downstream tasks, such as question-answering and summarization.

Large models can even acquire abilities without having been explicitly pre-trained on them, a phenomenon known as emergent abilities. Modern models with built-in multi-task abilities are both more accessible and more extensible than those developed with previous methods of ML, where models are typically purpose-built for a specific use case. In this article, we refer to these previous methods of ML as traditional ML.

Executives across industries are exploring how generative AI can impact their business, opening opportunities for technical leaders to drive strategic imperatives and making partnerships between business leaders and IT more important than ever. Organizations are putting together task forces to define use cases and evaluate technologies. Although generative AI can create value for certain use cases, other needs, such as predicting customer lifetime value, might still be best addressed by traditional ML methods or even database queries. To maximize return on its investment in AI, your organization should prioritize use cases and decide where generative AI can deliver the most value on top of traditional ML and other methods.

Trending use cases

The leaders we spoke to came primarily from financial services, retail and CPG, healthcare, media, and manufacturing. They identified a number of use cases where they see generative AI as a suitable approach, headlined by customer service and support, document search and synthesis, content generation, content discovery, and code development. These use cases are summarized below; for more information and architectural patterns, see the Generative AI on Google Cloud page.

Customer service and support

Generative AI can help your business streamline operations, reduce costs, and improve customer experiences by more effectively servicing requests. Use cases include answering customer questions intuitively through a chatbot, and assisting support agents by summarizing cases, indicating next steps, and transcribing calls. You can also use generative AI to produce FAQ documentation based on transcripts and other internal knowledge sources that can power an assistive chatbot or internal search.

Document search and synthesis

Scores of organizations want to harness generative AI so employees can easily find the most relevant documents through improved search results and summaries. For example, your organization can reduce the time it takes employees to find answers to common HR- and process-related questions. Internal manuals and sites are often poorly indexed, so deploying a conversational AI-based employee search tool can offload work so your HR teams can focus on other tasks.

Content generation

If your organization includes creative teams, you can use generative AI to empower them to quickly create images, ad copy, articles, and website content for marketing campaigns. Marketing teams might want to generate advertising content based on audience attributes, enabling personalization at scale. Or they might want to create social media content for trends, which can be spotted by summarizing news articles using LLMs. These uses can improve marketing ROI, improve employee productivity, and accelerate time to market.

Content discovery

Generative AI can help both your internal and external users find the most relevant product or content. For example, a consumer could shop on a retailer’s site through a conversational agent by posing a few questions that prompt generative AI to present search results. Helping shoppers find what they’re looking for more quickly can ultimately boost conversion rates and online sales, as well as provide a more intuitive user experience.

Developer efficiency

With generative AI, your organization can also improve developer productivity via code generation and code completion capabilities, as well as chatbots that can answer code-related questions, in effect offering pair programming and troubleshooting assistance. Our generative AI customers have seen higher-quality code produced with higher efficiency, helping them to release features or launch products more quickly.

Shift toward democratization of AI

The way we work will change as generative-AI-powered applications become more widely available and best practices for leveraging generative AI become more refined. You might use out-of-the-box (OOTB) product functionality to quickly build immersive conversational experiences. You might adapt foundation models for your own needs in order to differentiate your offerings from those of the competition. You might even train and build your own large models by using specialized AI infrastructure to innovate and customize for your business. Depending on your business needs and your team's skill sets, you might opt for one of these approaches or for a mix.

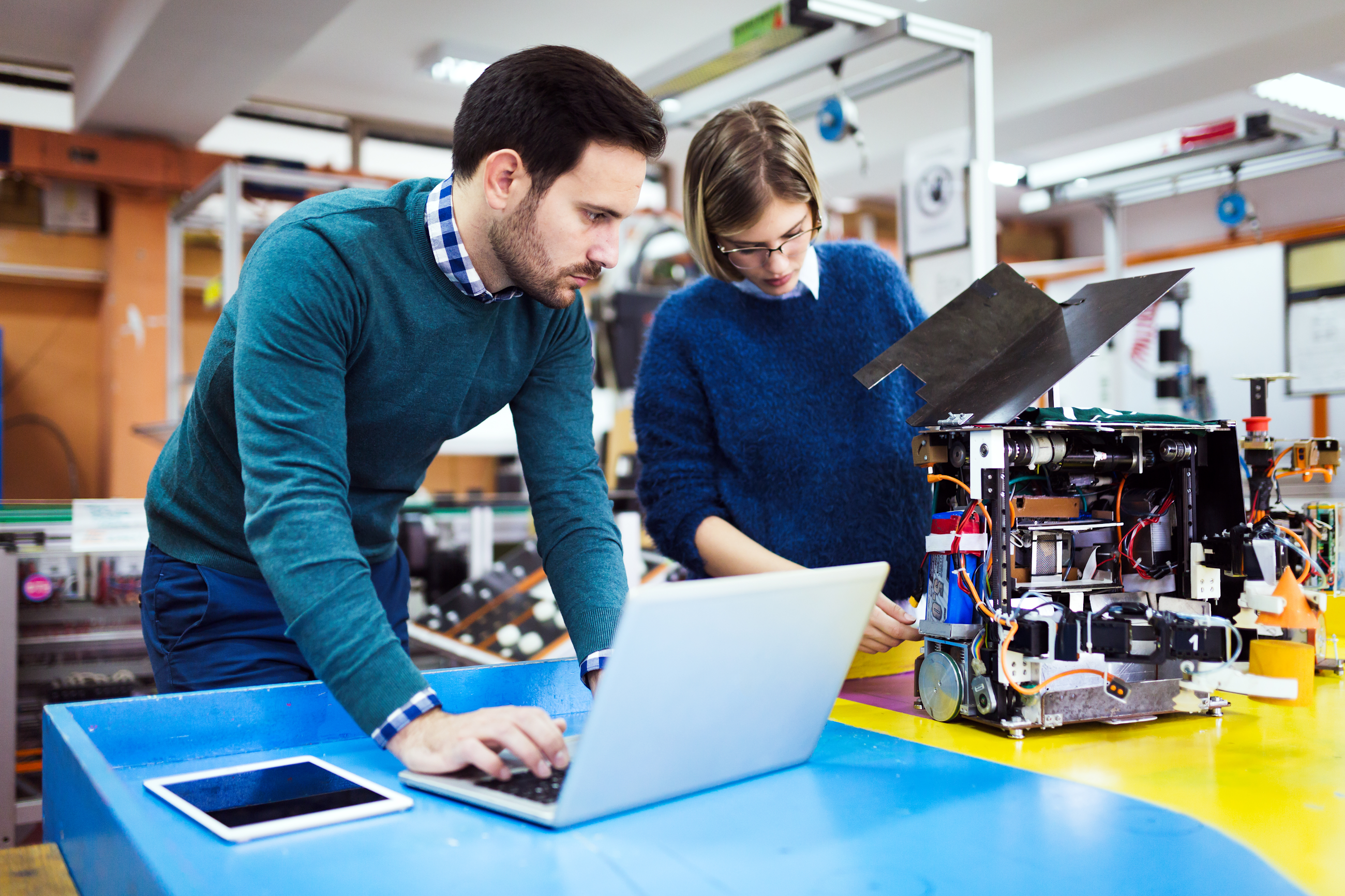

No matter what approach you use, to realize the value of generative AI, you need to train employees, hire for the right roles, and sponsor a culture of experimentation. Although application developers can work with OOTB functionality, adapting foundation models typically requires data science expertise. Building your own large models requires highly skilled researchers and ML engineers. Depending on the approach or the mix of approaches you choose, your team composition across these roles may vary.

With generative AI, teams can create proofs of concept in days instead of months. But to prototype with LLMs, teams need to be able to assess whether LLMs can power their product vision. They also need to think through how they can build their product by using LLMs, and they must iterate on the product in a tight feedback loop. This is why we believe a culture of experimentation is pivotal.

Responsible AI programs

Quick and easy experimentation can help your organization get value from AI faster than ever by minimizing the overhead of traditional ML. However, with this power and the ability to reach beyond how organizations have used AI previously, anyone who uses AI must be responsible in their approach.

With any new technology, evolving capabilities and uses create the potential for risks and complexities. As outlined in "Why we focus on AI," these risks can manifest in many ways, from generating or worsening information hazards, to creating or amplifying unfair biases, to creating new cybersecurity risks.

To help meet these complexities, our approach to AI is grounded in a commitment to be bold and responsible. This approach includes applying our AI principles intentionally in all our products, innovating based on rigorous scientific methods, and collaborating with multidisciplinary experts. We're constantly listening, learning, and improving based on user and community feedback, as well as feedback from experts, governments, and representatives of disproportionately affected communities. We also recently launched a conceptual framework to help collaboratively secure AI technology.

There are some well-known risks that organizations need to think through. One example is hallucination: a response from an LLM that seems coherent and is confidently presented but is not factual. It occurs when the response is not grounded in training data or real-world information.

Hallucinations can be reduced but are currently very difficult to eliminate altogether. You might choose to build a workflow that includes human experts in the loop to mitigate risks. For example, for brand safety, you might want to review generated ads before they go live. If a problem is deterministic, such as determining the best type of bank account for a potential customer, a rules-based approach is safer. Another approach is to ground your models by augmenting LLMs with information retrieval.
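To make the idea of grounding more concrete, here is a minimal Python sketch, not a production design. It assumes a tiny in-memory set of policy snippets and a simple word-overlap ranking; a real system would more likely use a search index or vector store, and every name below (DOCUMENTS, retrieve, build_grounded_prompt) is hypothetical. The sketch only builds the prompt and leaves the actual model call to whichever LLM service you use.

```python
import re
from collections import Counter
from typing import List, Optional

# Hypothetical knowledge base, made up for illustration only.
DOCUMENTS = [
    "Employees accrue 1.5 vacation days per month of service.",
    "Expense reports must be submitted within 30 days of purchase.",
    "The office is closed on national public holidays.",
]


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def retrieve(question: str, documents: List[str], top_k: int = 1) -> List[str]:
    """Return up to top_k documents ranked by tokens shared with the question."""
    question_counts = Counter(tokenize(question))
    scored = []
    for doc in documents:
        overlap = sum((question_counts & Counter(tokenize(doc))).values())
        scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop documents that share no tokens with the question.
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_grounded_prompt(question: str, documents: List[str]) -> Optional[str]:
    """Build a prompt that restricts the model to the retrieved context.

    Returns None when nothing relevant is found, so the caller can
    route the question to a human expert instead of the model.
    """
    passages = retrieve(question, documents)
    if not passages:
        return None
    context = "\n".join(f"- {passage}" for passage in passages)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


if __name__ == "__main__":
    print(build_grounded_prompt(
        "How many vacation days do employees accrue?", DOCUMENTS))
```

Word overlap is a deliberately naive ranking (it does not even ignore common words such as "the"), but it shows the shape of the approach: retrieve first, then constrain the model to what was retrieved, and fall back to a person when retrieval comes up empty.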

Another example of risk is toxicity and bias. LLMs might perpetuate stereotypes and social biases or use toxic language that can cause offense. There are ways to mitigate (although not eliminate) this risk, including developing technical guardrails like content safety filters or content moderation models. As this field progresses, we’ll keep evolving our tools to further reduce these risks.
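As a rough illustration of a technical guardrail, the Python sketch below checks generated text against a blocklist and sends anything flagged to human review rather than publishing it. This is an assumption-laden toy: the placeholder terms, the ModerationResult type, and the function names are all hypothetical, and a real content safety filter would typically rely on a trained moderation model rather than exact word matching.

```python
from dataclasses import dataclass

# Placeholder terms for illustration only; not a real blocklist.
BLOCKED_TERMS = frozenset({"blockedterm", "offensiveword"})


@dataclass
class ModerationResult:
    allowed: bool
    reason: str


def moderate(text: str, blocked_terms: frozenset = BLOCKED_TERMS) -> ModerationResult:
    """Flag text that contains any blocked term (case-insensitive, whole words)."""
    words = {word.strip(".,!?;:\"'").lower() for word in text.split()}
    hits = sorted(words & blocked_terms)
    if hits:
        return ModerationResult(False, "blocked terms: " + ", ".join(hits))
    return ModerationResult(True, "ok")


def publish_or_escalate(generated_text: str) -> str:
    """Publish clean text; send flagged text to a human reviewer."""
    result = moderate(generated_text)
    return "publish" if result.allowed else "human_review"


if __name__ == "__main__":
    print(publish_or_escalate("A friendly ad for running shoes."))
    print(publish_or_escalate("This contains an OffensiveWord!"))
```

Exact matching misses paraphrases and subtle bias, which is one reason such filters mitigate the risk without eliminating it, and why pairing them with human review is a common choice.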

Stay tuned for part 2

Stay tuned next week for more learnings from hundreds of conversations about generative AI, when we will shift our focus to more technical insights. Until then, check out our recent edition of “The Prompt” about generative AI’s impact on knowledge work.