How to Understand and Action Mandiant's Intelligence on Information Operations

Mandiant

Written by: Daniel Kapellmann Zafra, Ryan Serabian, Sam Riddell, Nathan Brubaker

Defenders positioned across a wide range of roles and industries are engaged in identifying and exposing different types of malicious online influence activity. Cyber security researchers, government entities, academics, trust and safety departments within organizations, news outlets, and commercial entities each have unique roles based on their respective threat profiles and areas of coverage. This blog post introduces Mandiant’s approach to information operations (IO) and explains how organizations can benefit from access to our threat intelligence.

Mandiant tracks a range of activity across the online influence spectrum to generate intelligence that contributes to the exposure and mitigation of threats that result from IO. Mandiant's focus related to malicious online influence is to identify, analyze, and expose politically motivated efforts to manipulate target information environments using deceptive tactics. At times, this includes investigating financially motivated activity that may have political or commercial impact.

For years, we have identified and reported on IO campaigns that we judged to be operating in support of or on behalf of non-state and state actors, including but not limited to Belarusian, Russian, Chinese, and Iranian campaigns. Through the identification and exposure of these campaigns, we aid organizations and governments in mitigating the potential negative impacts of IO threat activity.

Note: See the Appendix for key definitions used in this blog post.

IO Threat Intelligence to Anticipate Cyber and Physical Risks

IO poses a threat to the security of nations, organizations, and individuals. This activity enables actors to covertly manipulate target audiences in order to influence real world decisions. IO threat intelligence can help both private and public institutions to gather context surrounding cyber and physical risks. IO can be used to support a variety of malicious objectives, for example:

- Damaging the reputation of private and governmental entities

- Encouraging harm to organizations, individuals, and marginalized groups

- Influencing democratic elections and exacerbating political unrest

- Destabilizing societal dynamics in regions undergoing conflict

Figure 1 describes some of the main use cases for IO threat intelligence.

Figure 1: Example IO threat intelligence use cases for commercial organizations, governmental institutions, and service providers or platforms

IO Defenders Must Adapt to Constantly Evolving Threat Activity

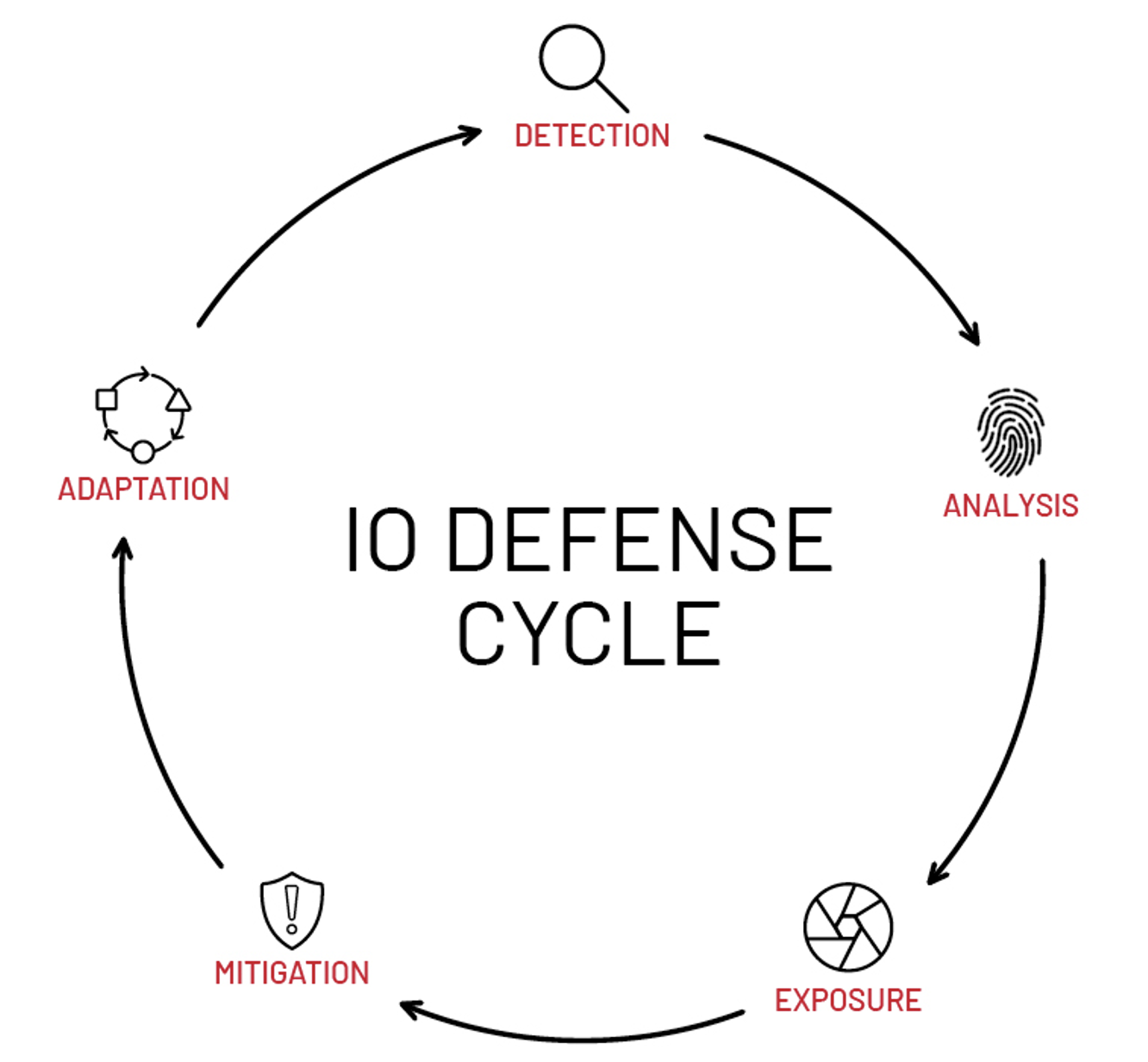

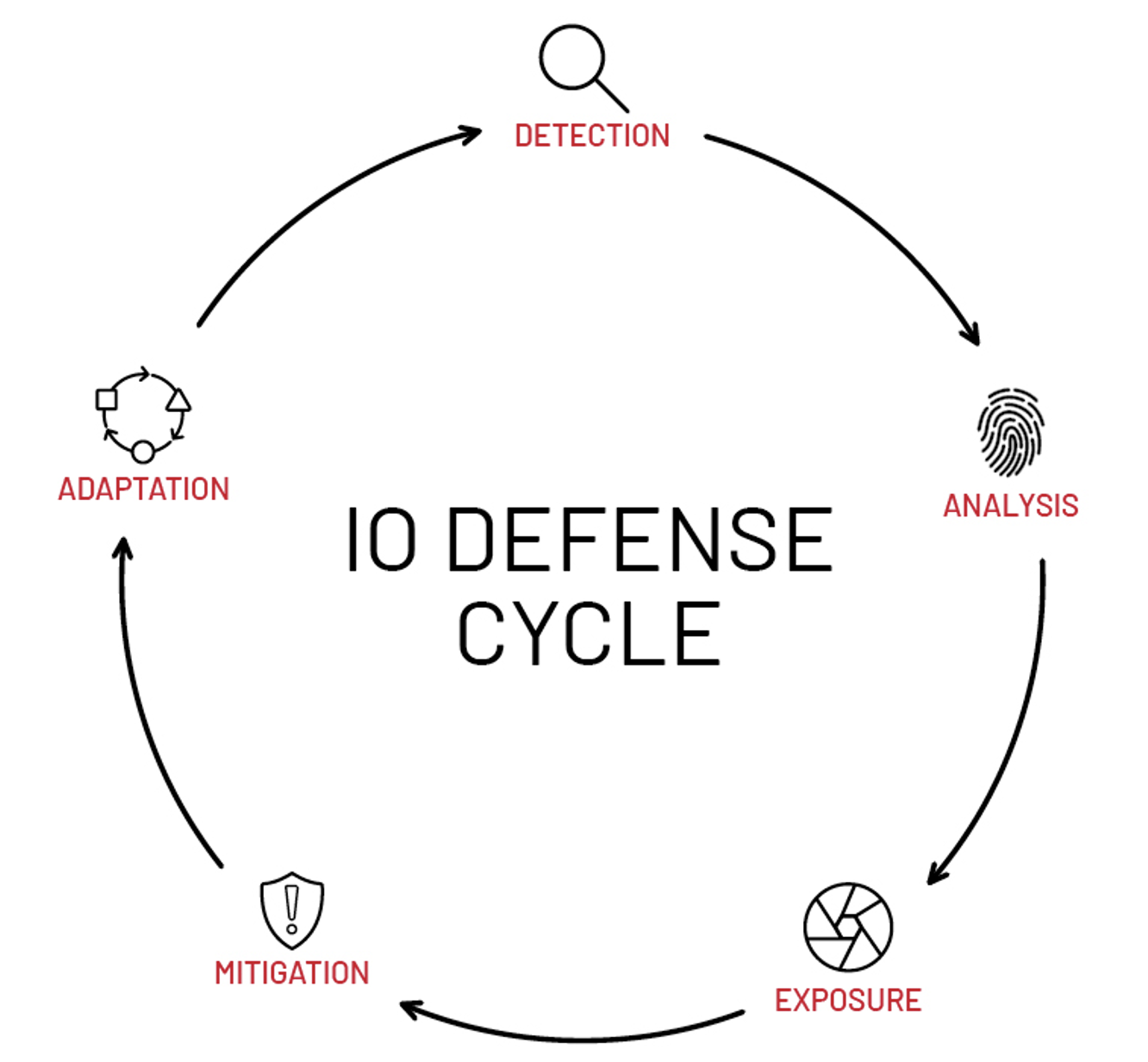

Different stakeholders are involved in the IO defense domain. Governments and service providers attempt to protect information environments by limiting the spread of concerted disinformation. When IO finds its way across the initial lines of defense, public and private organizations also employ their own defense mechanisms. Detection and response by defenders, and the adaptation it prompts from actors, form a cycle of activity: actors constantly adapt their tactics, techniques, and procedures (TTPs) to bypass frontline protections, while defenders must adapt their strategies to mitigate the impact of that activity (Figure 2).

Mandiant detects, analyzes, and exposes IO threat activity to provide organizations with context to mitigate its impact and remain aware of the evolving threat landscape. Where feasible, Mandiant also attributes threat activity to specific actors, providing insight into their underlying motivations and helping to track malicious information flows based on known TTPs and behaviors.

Figure 2: Stages in the IO defense cycle

Government-Aligned IO Is Conducted on a Spectrum of State Affiliation

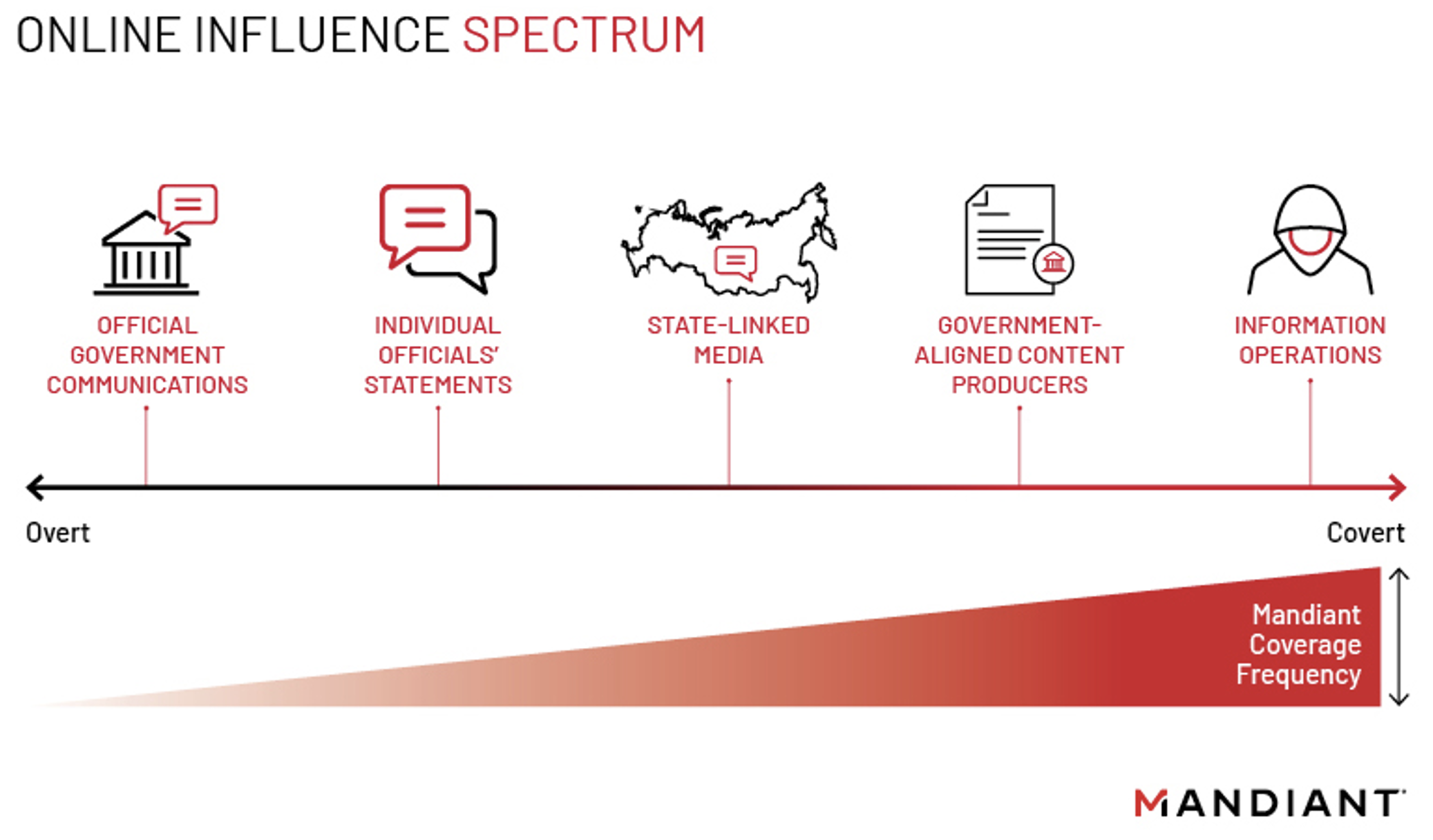

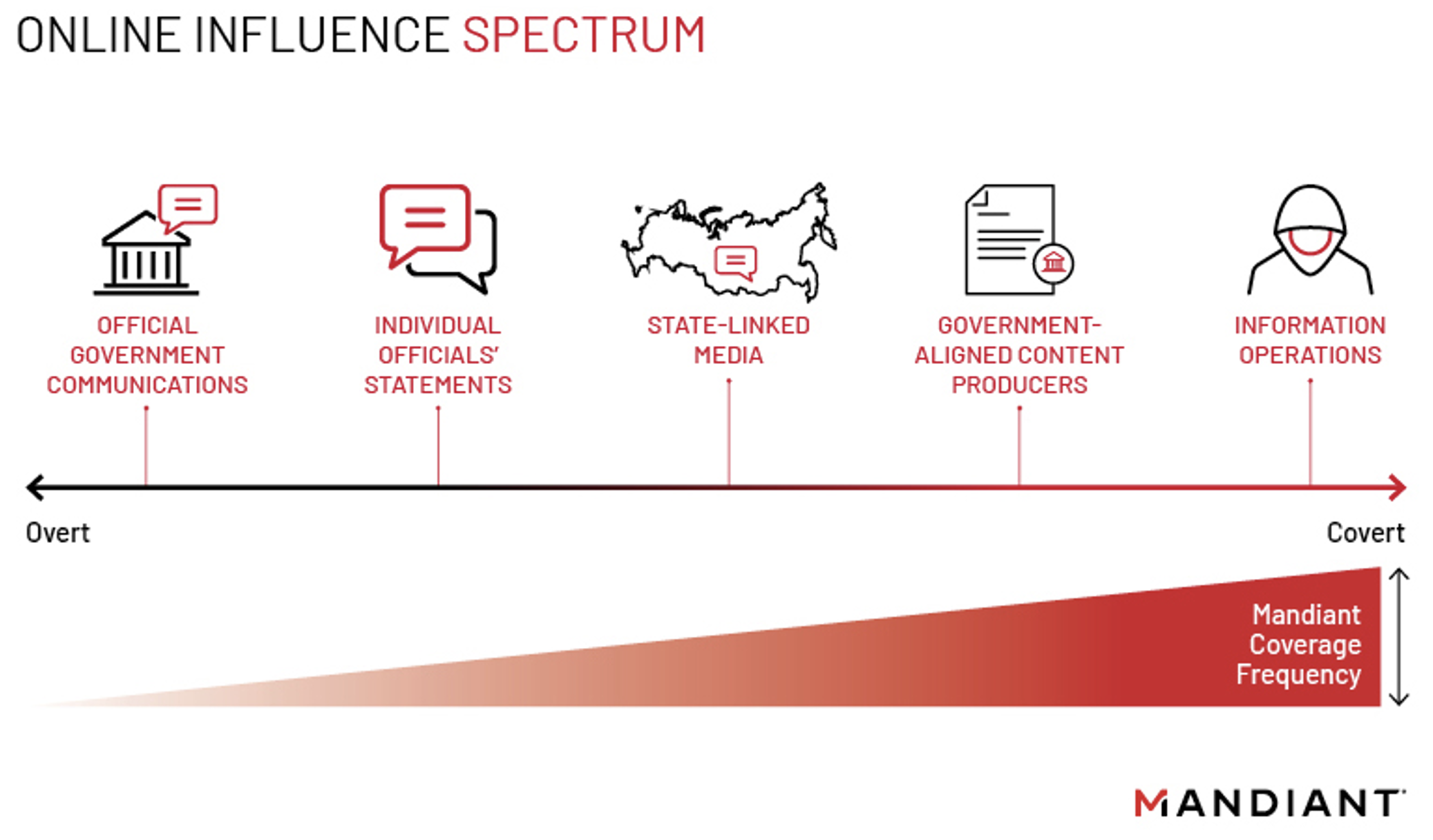

Online information environments can be targeted by a spectrum of state-aligned influence activity. Activity within the spectrum ranges from overt communications via official and public channels to covert activity leveraging deceptive tactics and assets in order to obfuscate ties to the original sponsor. IO actors may seek to amplify the impact of their messaging by operating in parallel on different sections of the online influence spectrum (Figure 3).

Mandiant focuses on uncovering IO where actors use covert methods to disseminate or promote content containing desired narratives. Although actors often use disinformation techniques to influence their target audiences, some of the most effective operations also involve strategically spreading true or partially true information.

Mandiant does not cover cases of misinformation, where false information is published or amplified via overt channels without the intent to deceive the audience.

Figure 3: A sample of activity placed on the Online Influence Spectrum

- Official government communications: Press releases, indictments, and posts by accounts belonging to official government entities such as government bureaus or ministries. This public messaging may include both factual information and disinformation aimed at swaying public opinion.

- Individual officials' statements: Posts or statements made by individuals actively employed by or directly affiliated with a government. These statements may vary from official policies and positions to rogue statements.

- State-linked media: Outlets that are directly or indirectly controlled or funded by a government (e.g., articles published to state media outlets' webpages, television programs, and social media posts by the outlets or their employees).

- Government-aligned content producers: Individuals or entities whose motives or objectives align with those of a government. Such entities may have varying or unknown degrees of affiliation with the government.

- Information operations: Politically motivated efforts to manipulate target information environments using deceptive tactics, such as through coordinated and inauthentic online assets.

Classifying IO Activity

Mandiant classifies IO activity and determines whether it falls within our scope of coverage by considering motivation, scale, the underlying veracity of shared content, affiliation with state actors, potential impact, and the use of deceptive tactics, including coordinated inauthentic behavior (CIB). We use precise language to track the IO activity we observe, as depicted in Figure 4.

Figure 4: How Mandiant classifies networks, operations, and campaigns

- When we assess multiple assets to be operating in coordination, we refer to the group of assets as a network.

- We refer to single instances of IO threat activity as operations, for example, a network of accounts promoting content related to a specific topic.

- Multiple operations or instances of threat activity that we assess to be linked are designated as campaigns.

When we have sufficient evidence to assess the entities or individuals that are conducting campaigns (e.g., Roaming Mayfly, Ghostwriter, DRAGONBRIDGE), we attribute those campaigns to known entities that we refer to as "actors" (e.g., Russia's Internet Research Agency). In some cases, we identify activity that can be attributed to private sector entities such as marketing firms.
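The terminology above (assets, networks, operations, campaigns, and actors) can be read as a simple containment hierarchy. The following is a minimal, illustrative sketch of that hierarchy in Python. It is not Mandiant's internal data model: every class name, field name, and example value is hypothetical, and the choice to attach networks to operations is an assumption drawn only from the example given in the list above.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass(frozen=True)
class Asset:
    """An actor-controlled online resource, such as an account or domain."""
    identifier: str
    kind: str  # hypothetical labels, e.g. "social_account" or "domain"


@dataclass
class Network:
    """Multiple assets assessed to be operating in coordination."""
    name: str
    assets: List[Asset]

    def __post_init__(self) -> None:
        # A network is defined as a group, so require more than one asset.
        if len(self.assets) < 2:
            raise ValueError("a network groups multiple coordinated assets")


@dataclass
class Operation:
    """A single instance of IO threat activity on a specific topic."""
    topic: str
    networks: List[Network] = field(default_factory=list)


@dataclass
class Campaign:
    """Multiple operations assessed to be linked."""
    name: str
    operations: List[Operation] = field(default_factory=list)
    # Left as None unless there is sufficient evidence for attribution.
    attributed_actor: Optional[str] = None

    def assets(self) -> Set[Asset]:
        """Collect every distinct asset seen across the linked operations."""
        return {
            asset
            for operation in self.operations
            for network in operation.networks
            for asset in network.assets
        }


# Placeholder data only; these names do not refer to real activity.
accounts = [Asset(f"account-{i}", "social_account") for i in range(3)]
network = Network("example-network", accounts)
operation = Operation("example topic", [network])
campaign = Campaign("EXAMPLE-CAMPAIGN", [operation])
```

Keeping `attributed_actor` optional mirrors the distinction drawn here: a campaign can be tracked and named well before there is enough evidence to tie it to a known actor.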

At times, known cyber threat actors tracked for activity in other domains—such as cyber crime or espionage—overlap with observed IO activity. Some examples of such overlaps include actors using hack-and-leak operations or compromised websites and accounts to disseminate content. For instance, we have assessed that actor UNC1151, an intrusion group we have linked to the Belarusian Government, provides technical support to the Ghostwriter influence campaign.

Mixed Techniques to Uncover IO Threat Activity

Threat actors conducting IO use a variety of human and technology-based strategies to avoid being detected and exposed by defenders. These strategies may include using artificial intelligence (AI)-generated deepfake images, manipulating audiovisual content, developing personas to pose as real individuals, and leveraging inauthentic networks of websites to promote strategic narratives.

Given the enormous volume of content flowing through the same channels used to conduct IO, and threat actors' constant evolution of TTPs, defenders must continually explore different techniques and leverage both subject matter expertise and technical capabilities to filter and uncover malicious activity.

Reach out to Mandiant for more information about how IO threat intelligence can help your organization.

Appendix: Glossary

CIB (Coordinated inauthentic behavior): Groups of online assets that work together to mislead others about who they are and what they are doing (modified from Meta's definition of CIB).

Deepfake: Synthetic media, such as images or videos, generated using machine learning, which IO actors may use to deceive target audiences.

Disinformation: The intentional seeding or amplification of false information.

Generative Adversarial Network (GAN): A deep learning model architecture that consists of two sub-models, a generator and a discriminator. The generator learns to create synthetic samples based on an original dataset, with the goal of producing visuals realistic enough that the discriminator cannot distinguish real from synthetic.

Hack and Leak: Intrusion and subsequent release of extracted data with intent to influence.

Impersonator: An attempt to pose as another individual or entity.

Inauthentic Account: A general term for an account that dishonestly presents its identity. May be human operated or automated.

Information Operations (The Tactic): The use of coordinated, inauthentic online assets and/or deceptive tactics to influence target audiences.

Information Operation (The Activity): A specific instance of an actor's deliberate use of deceptive tactics to manipulate a target information environment regarding a given topic or incident.

Influence Campaign / Information Operations Campaign: Multiple information operations assessed to comprise part of a larger effort; these are typically executed over an extended period.

IO Assets: Actor-controlled online resources, including, for example, social accounts, domains, or email addresses used to support IO activity.

Misinformation: Unintentional spreading of false information.

Network: A group of individual assets that we assess to be acting in a coordinated and inauthentic manner.

Online Influence Activity: Intentional efforts to manipulate target information environments online in the interest of a threat actor or state sponsor. May use a range of tactics including those mentioned in IO, public messaging through diplomats or state media, and national-level content censorship by authoritarian regimes.

Propaganda: The dissemination of one-sided information in an effort to influence public information. Usually comes from nation-states or parties. Carries a dishonest connotation.

Public Messaging: Efforts from governments to communicate with foreign publics. Typically involves counter messaging to perceived adversary messaging and attempts to spread true information.