Subatomic particles and big data: Google joins CERN openlab

Kevin Kissell

Technical Director, Office of the CTO

Today we are excited to announce that Google has signed an agreement to join CERN openlab. Together, we will be working to explore possibilities for joint research and development projects in cloud computing, machine learning, and quantum computing.

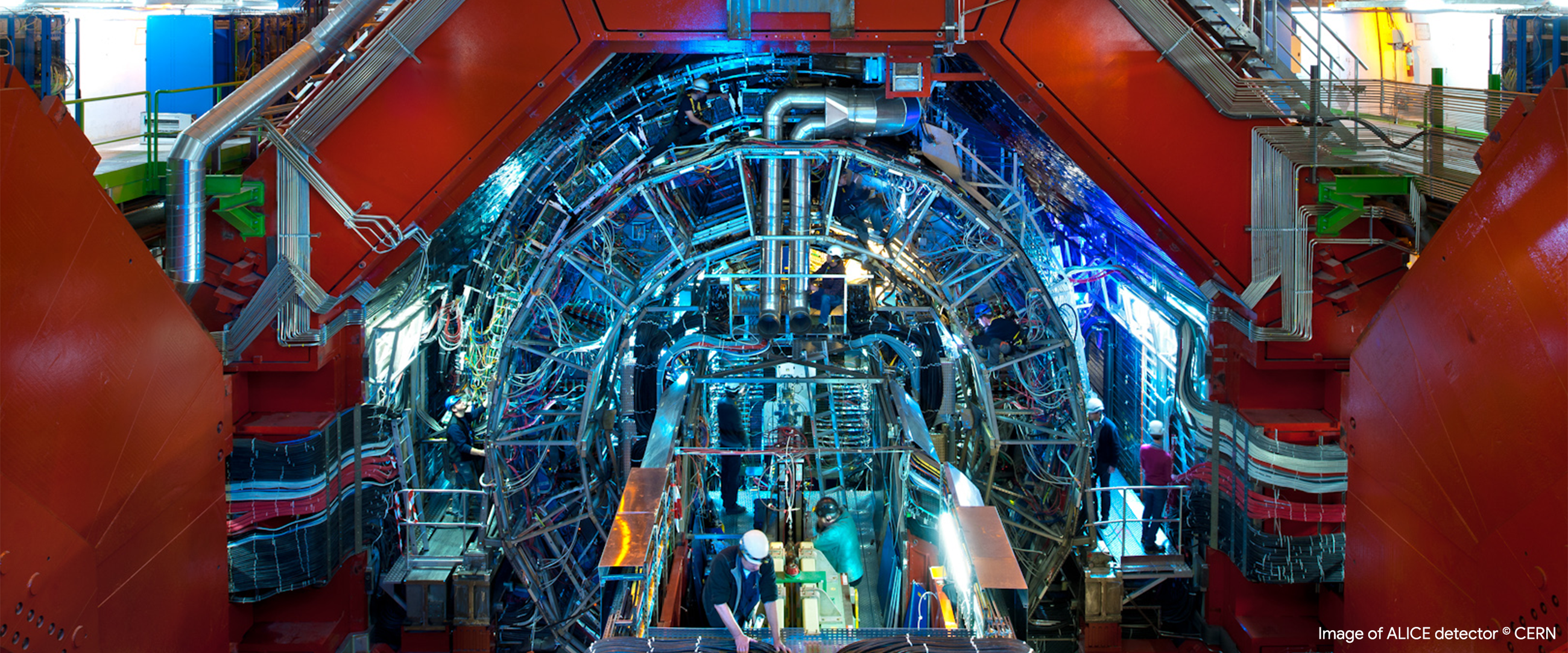

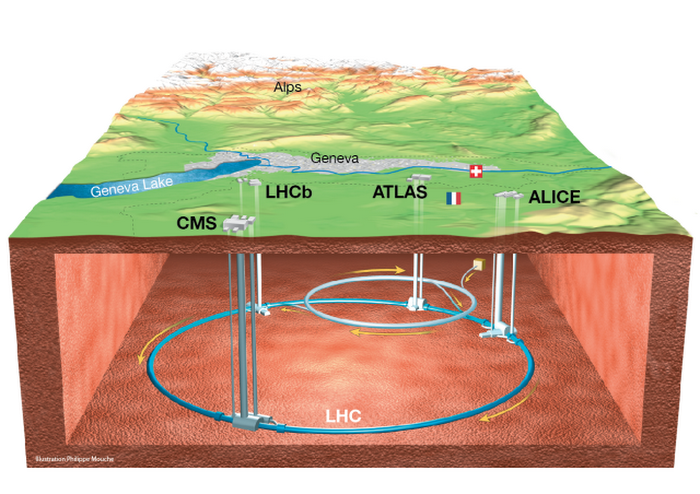

CERN, the European Organization for Nuclear Research, is one of the world’s most renowned institutions for physics research, and operates the world’s largest particle physics laboratory. Many people know CERN for the Large Hadron Collider, or LHC, the world’s largest and most powerful particle collider, which was used to discover the long-hypothesized Higgs boson particle in 2012.

Image © CERN

But CERN is also the birthplace of the World Wide Web: Tim Berners-Lee and Robert Cailliau of CERN invented HTTP and created the first website in 1991 to enable sharing and collaborative analysis of experimental data. So in a real sense, Google owes its existence to CERN.

CERN created CERN openlab in 2001 as a framework for collaboration between CERN and leading companies in information and computing technologies. There are currently over 20 active CERN openlab projects, spread across four R&D topics: data center technology and infrastructure, computing performance and software, machine learning and data analytics, and interdisciplinary applications.

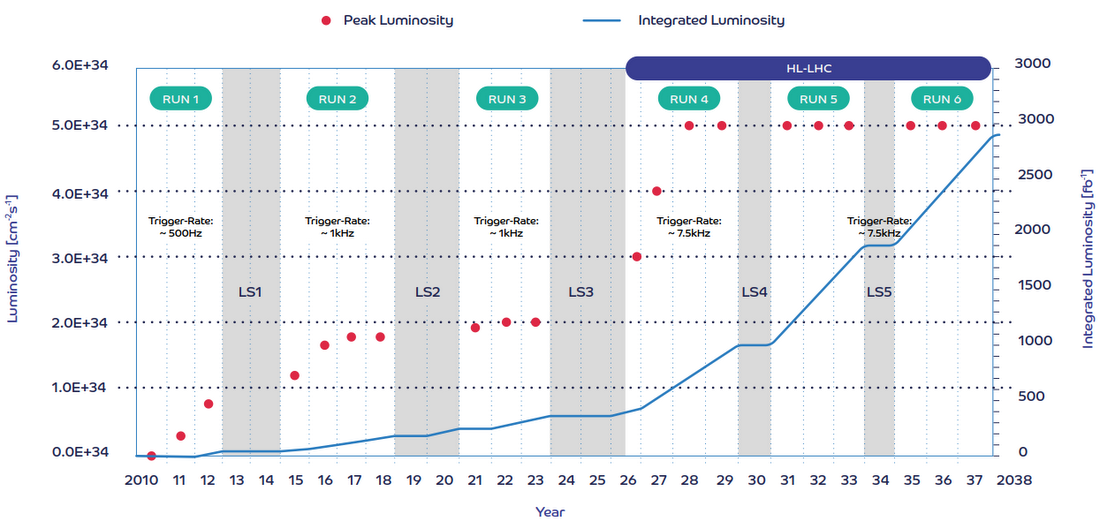

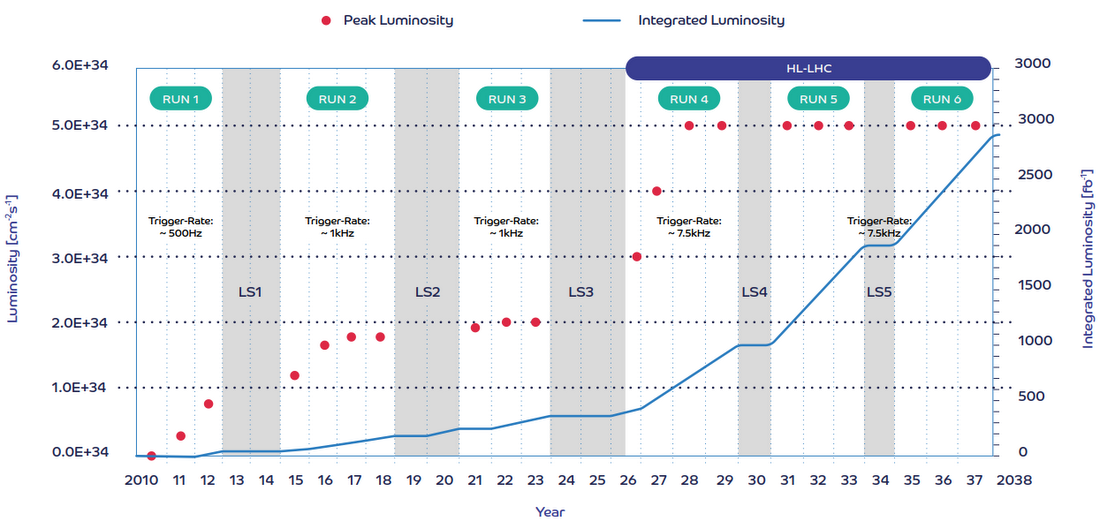

In the coming years, the LHC at CERN will undergo a series of upgrades that will increase its “luminosity”, generating more particle collisions and vastly increasing researchers’ visibility into the fundamental nature of matter. The upgraded machine, known as the “High-Luminosity LHC” or HL-LHC, will come online around 2026. With current software, hardware, and analysis techniques, the computing capacity required is estimated to be around 50-100 times higher than today. Data storage needs are expected to be on the order of exabytes by then, an order of magnitude higher than today.

This creates the sorts of daunting problems in data management, analysis, and processing that Google finds exciting and challenging. Google has already been engaged with Fermilab and the Brookhaven National Laboratory (BNL), the two US “Tier 1” sites in the global computing grid used to store and analyse data from the LHC experiments. Working with Fermilab, we demonstrated as early as 2016 the ability to use Google Compute Engine at the scale of hundreds of thousands of cores to process data from the CMS detector at the LHC.

The data challenges for the 2021-2023 and 2026-2029 runs of the LHC will need to be addressed by more than just cloud scale-out of computing using off-the-shelf services. Accordingly, Google is joining CERN openlab to collaborate with the CERN community and expand the frontiers of what is possible in computation, in storage, in machine learning, and in quantum computation. Working together, we can create better Google technology and help researchers at CERN to do better science.

“CERN has an ambitious upgrade programme for the Large Hadron Collider, which will result in a wide range of new computing challenges,” says Alberto Di Meglio, head of CERN openlab. “Overcoming these will play a key role in ensuring physicists are able to make new groundbreaking discoveries about our universe. We believe that working with Google can help us to successfully tackle some of these challenges, as well as producing technical breakthroughs that can have impact beyond our research community.”

“We look forward to finalising our joint plans soon and beginning R&D activities through this exciting new collaboration in the near future,” continues Di Meglio. “We are confident this endeavour will be a great success, with significant mutual benefit for both parties.”

This marks the beginning of a long journey. Watch this space for postcards from along the road.