Reduce latency with Cloud Spanner multi-region leader placement

Mark Donsky

Product Manager, Cloud Spanner

Neha Deodhar

Software Engineering Manager, Cloud Spanner

Cloud Spanner is Google’s fully managed relational database that offers unlimited scale, strong consistency, and up to 99.999% availability.

When you set up a database instance in Spanner, you have the option of deploying it in a regional configuration or a multi-regional configuration:

Regional configurations, as the name suggests, are deployed within a single region, offer protection against zone outages, and deliver 99.99% availability.

Multi-regional configurations, by comparison, are deployed in at least three regions, offer protection against regional outages, and deliver 99.999% availability.

Google currently offers 13 multi-regional configurations that span the world. Each multi-regional configuration spans at least three regions: the default leader region, the secondary read-write region, and the witness region. Some configurations also include one or more regions with read-only replicas.

As described in Demystifying Cloud Spanner multi-region configurations, all client write requests first go to the default leader, which logs the write and then sends it to the other voting replicas. Consequently, to minimize latency, it’s important to select a multi-regional configuration whose default leader is as close to the client application as possible.

But what happens if you have identified a multi-regional configuration that generally suits your needs, but your application client is located closer to the secondary read-write region than it is to the default leader?

Let’s look at an example. Suppose you have a database named salesdb. Most of the traffic for this database is from Belgium (europe-west1) and you want to make sure your database can survive a regional failure.

In this example, to minimize latency, you’d want to select a multi-regional configuration that has Belgium (europe-west1) as the default leader, with read-write replicas in a nearby region such as London (europe-west2).

You might want to use eur5 as your configuration. On closer examination, however, eur5’s default leader is in London and its secondary read-write region is in Belgium. To minimize latency, you’d like it to be the other way around.

Fortunately, with leader placement, you can simply swap the default leader and secondary read-write region for salesdb in a couple of clicks.

To swap the default leader for salesdb, follow these steps:

Go to the Spanner Instances page in the Cloud Console.

Click the name of the instance that contains the database whose leader region you want to change, select the database (in this case, salesdb), and click the pencil icon next to Default leader region.

Run the following DDL:

ALTER DATABASE salesdb SET OPTIONS(default_leader='europe-west1');
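If you prefer to generate this statement in a script rather than type it by hand, the following Python sketch shows one way to do it. It is only an illustration: the helper name `build_default_leader_ddl` is hypothetical, the eur5 region list is taken from the description in this post, and the check that the new leader must be one of the read-write regions is an assumption based on the swap described above. The sketch only builds the statement text and does not submit it to Spanner.

```python
import re

# Read-write regions of eur5 as described in this post (assumed input;
# check your instance configuration for the authoritative list).
EUR5_READ_WRITE_REGIONS = ("europe-west2", "europe-west1")


def build_default_leader_ddl(database_id, region, read_write_regions):
    """Return the ALTER DATABASE statement that sets the default leader."""
    if region not in read_write_regions:
        raise ValueError(
            f"{region!r} is not one of the read-write regions {read_write_regions!r}"
        )
    # Quote the database ID with backticks when it is not a plain identifier
    # (for example, when it contains a hyphen).
    if re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", database_id):
        name = database_id
    else:
        name = f"`{database_id}`"
    return f"ALTER DATABASE {name} SET OPTIONS(default_leader='{region}');"


print(build_default_leader_ddl("salesdb", "europe-west1", EUR5_READ_WRITE_REGIONS))
```

Rejecting a region outside the read-write list before sending anything to Spanner keeps a typo from turning into a failed schema update.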

You can confirm that your update was successful on the updated database overview page.

Once you’ve completed these steps, europe-west1 will be the default leader for salesdb.
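Besides checking the console, you may be able to confirm the setting with a query. The sketch below assumes that the option is exposed through `INFORMATION_SCHEMA.DATABASE_OPTIONS` with `OPTION_NAME` and `OPTION_VALUE` columns; verify this against the Spanner documentation for your database dialect. The helper `default_leader_from_rows` is hypothetical and simply picks the value out of result rows, however you fetch them.

```python
# Query against Spanner's INFORMATION_SCHEMA that lists database options.
# Assumption: the default leader appears as the 'default_leader' option.
DEFAULT_LEADER_QUERY = """
SELECT s.OPTION_NAME, s.OPTION_VALUE
FROM INFORMATION_SCHEMA.DATABASE_OPTIONS s
WHERE s.OPTION_NAME = 'default_leader'
"""


def default_leader_from_rows(rows):
    """Return the configured default leader, or None if the option is unset."""
    for option_name, option_value in rows:
        if option_name == "default_leader":
            return option_value
    return None


# Rows shaped like the query result; the values here are illustrative only.
sample_rows = [("default_leader", "europe-west1")]
print(default_leader_from_rows(sample_rows))
```

An empty result would suggest that no default leader has been set explicitly, in which case the configuration's own default leader region applies.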

Use Cases

Leader placement is particularly useful for two scenarios:

If most of your application traffic comes from one geographic region, you can align the default leader region with it to minimize latency.

In an active-passive application architecture, after a planned or unplanned application failover across regions, you can co-locate Cloud Spanner’s default leader region with your application’s active instance to avoid cross-region requests and minimize latency.

Below are some examples of how you can use configurable leader placement to improve application latency.

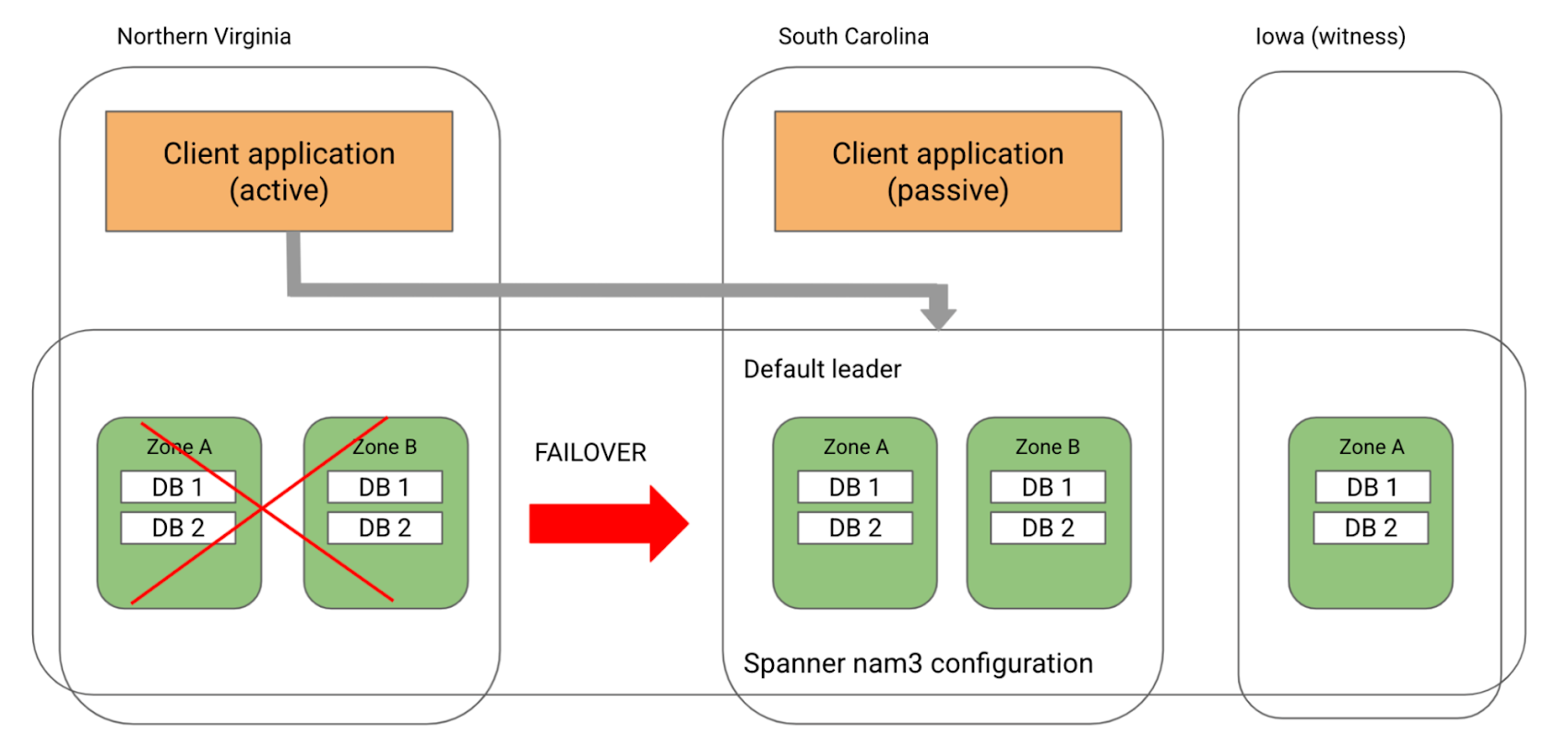

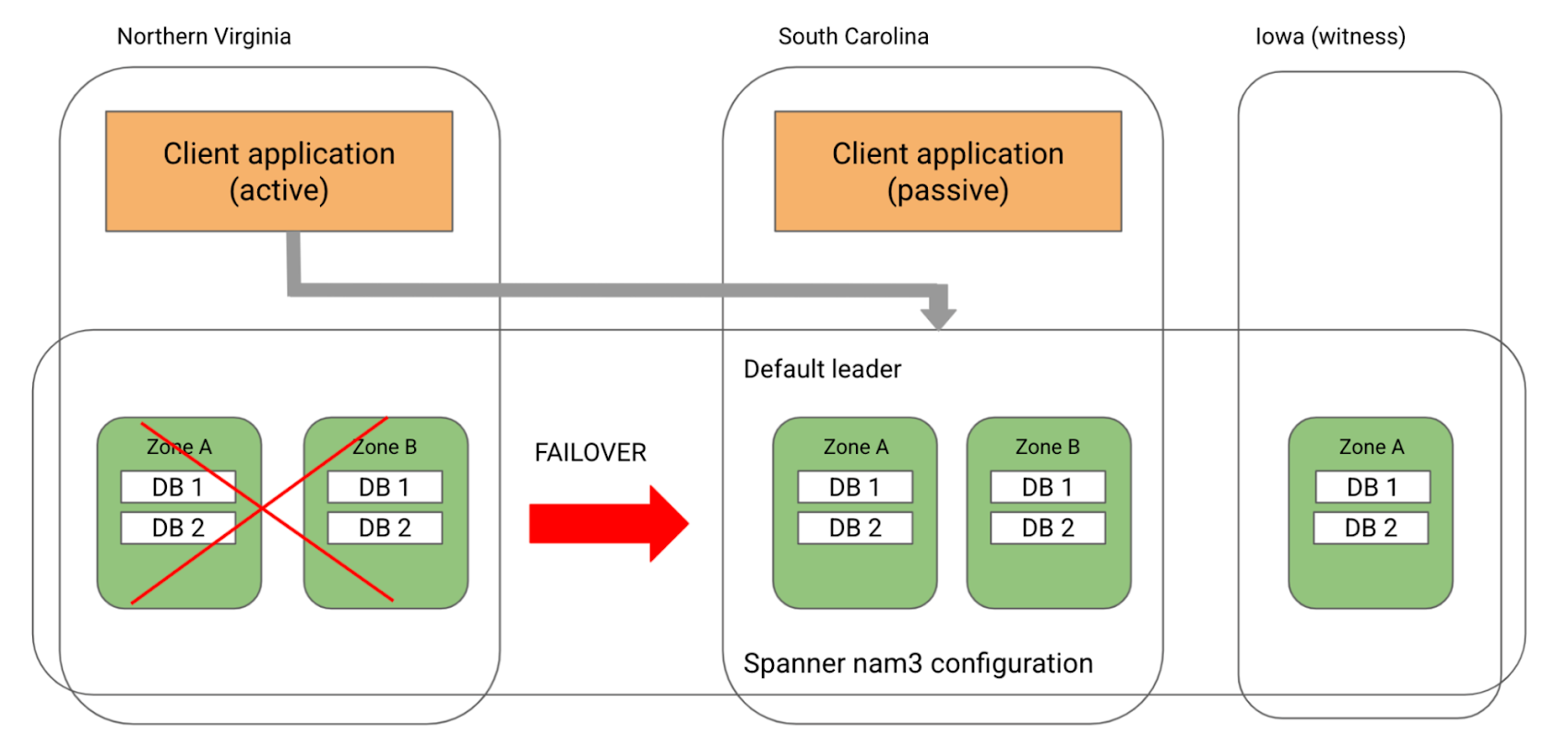

Active-passive application architecture

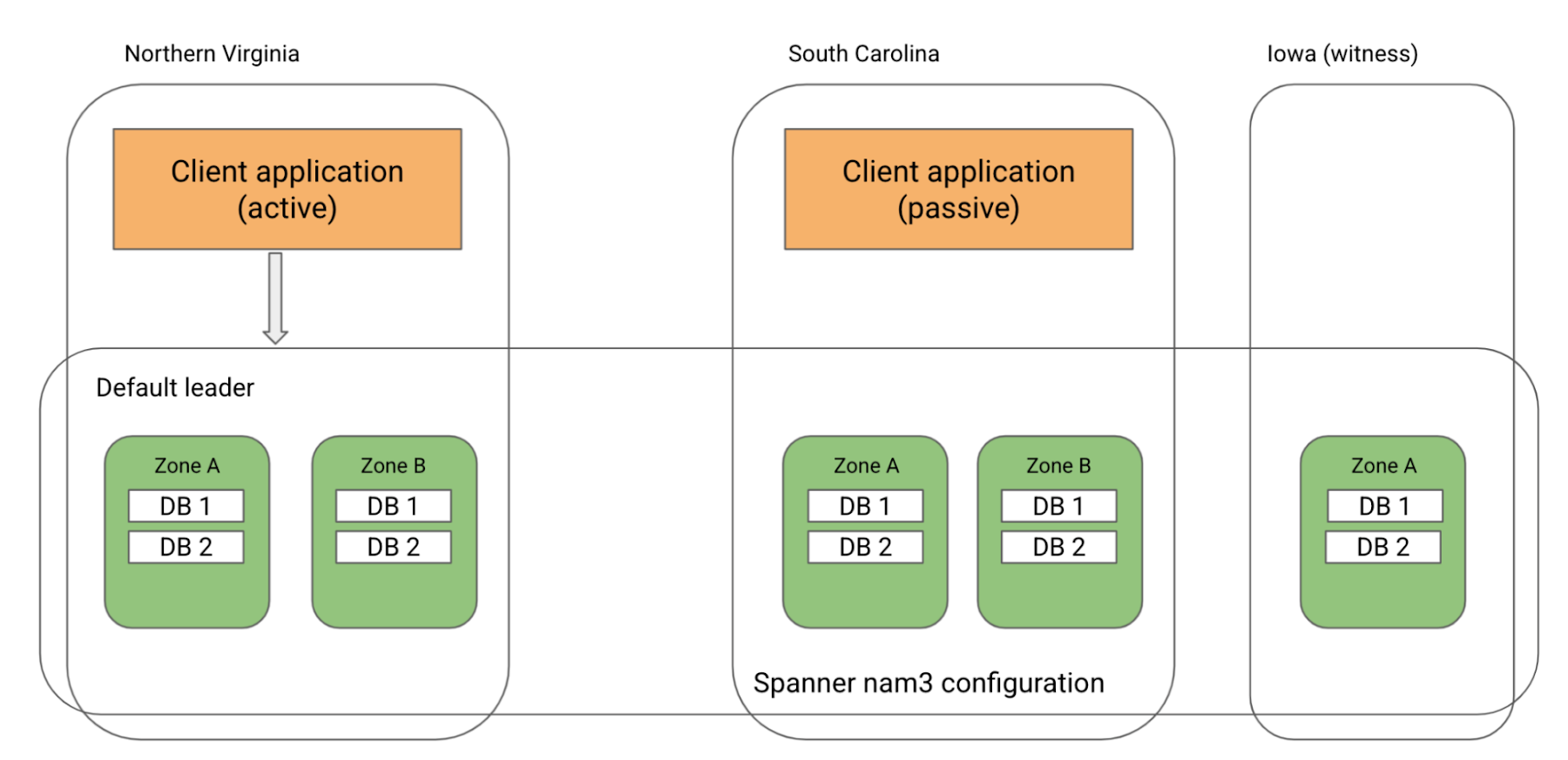

A common multi-region application architecture for high availability is an active-passive model, where the application instance in one region is the active instance and serves all the traffic. An application instance in a second region is on standby and takes over all the traffic if the active region fails. Such applications can benefit from Cloud Spanner’s multi-region configurations and leader placement.

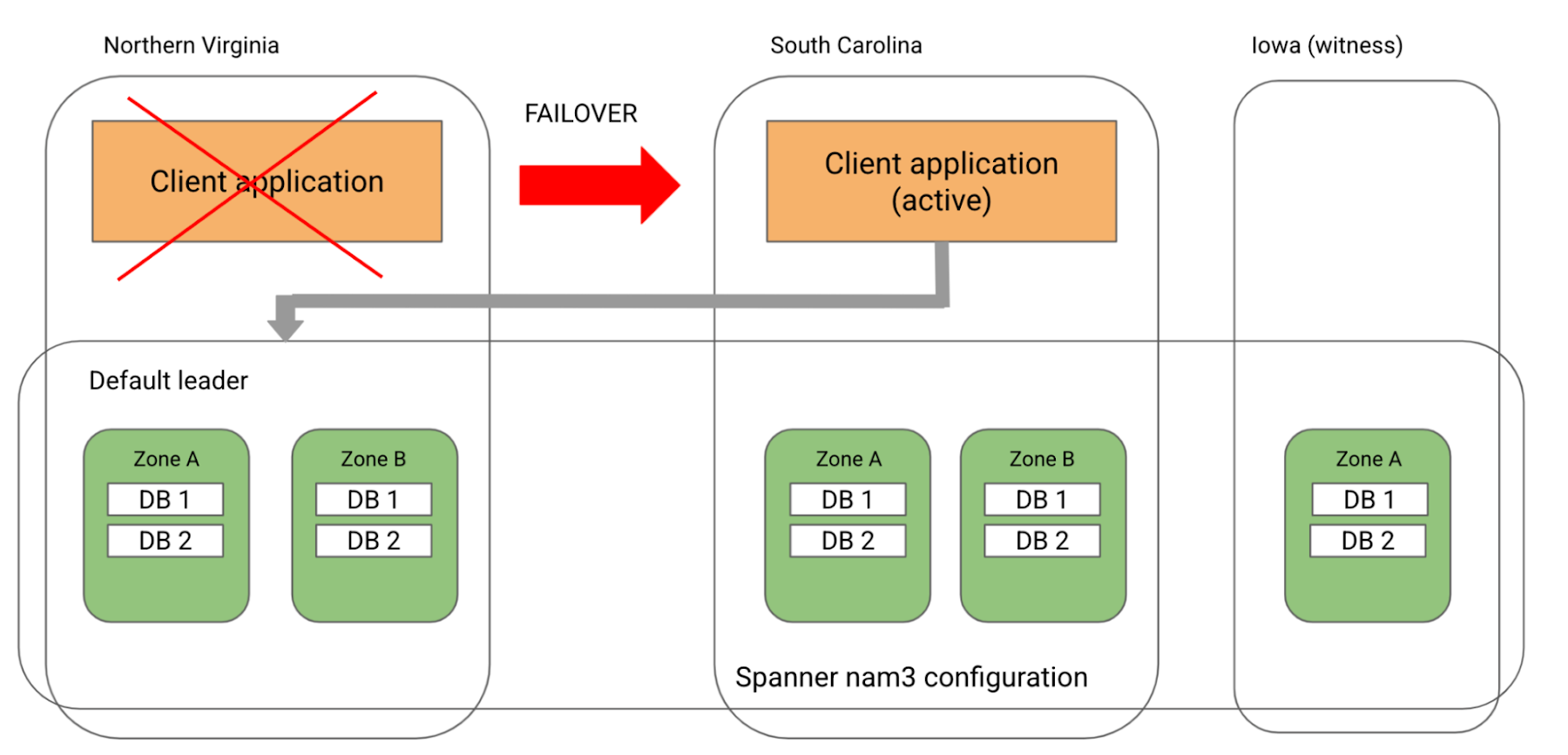

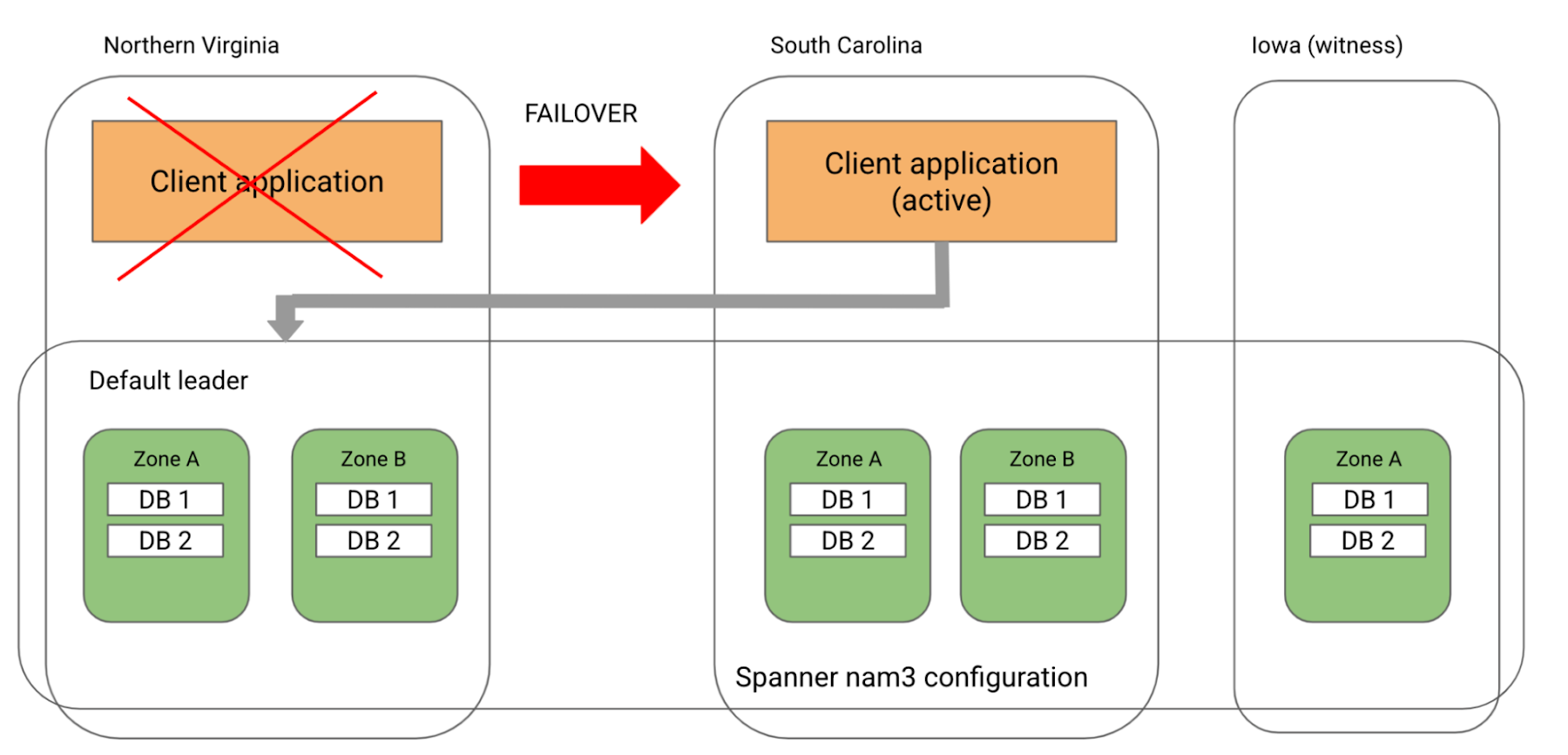

As an example, consider that you have an application with an active instance in Northern Virginia and a passive instance in South Carolina. To meet your application’s high availability needs, you can use Cloud Spanner’s nam3 multi-region configuration with the default leader region as Northern Virginia. Under normal circumstances, your application’s active region aligns with Cloud Spanner’s default leader region and you see low read and write latencies.

Now, let’s consider some potential failure scenarios.

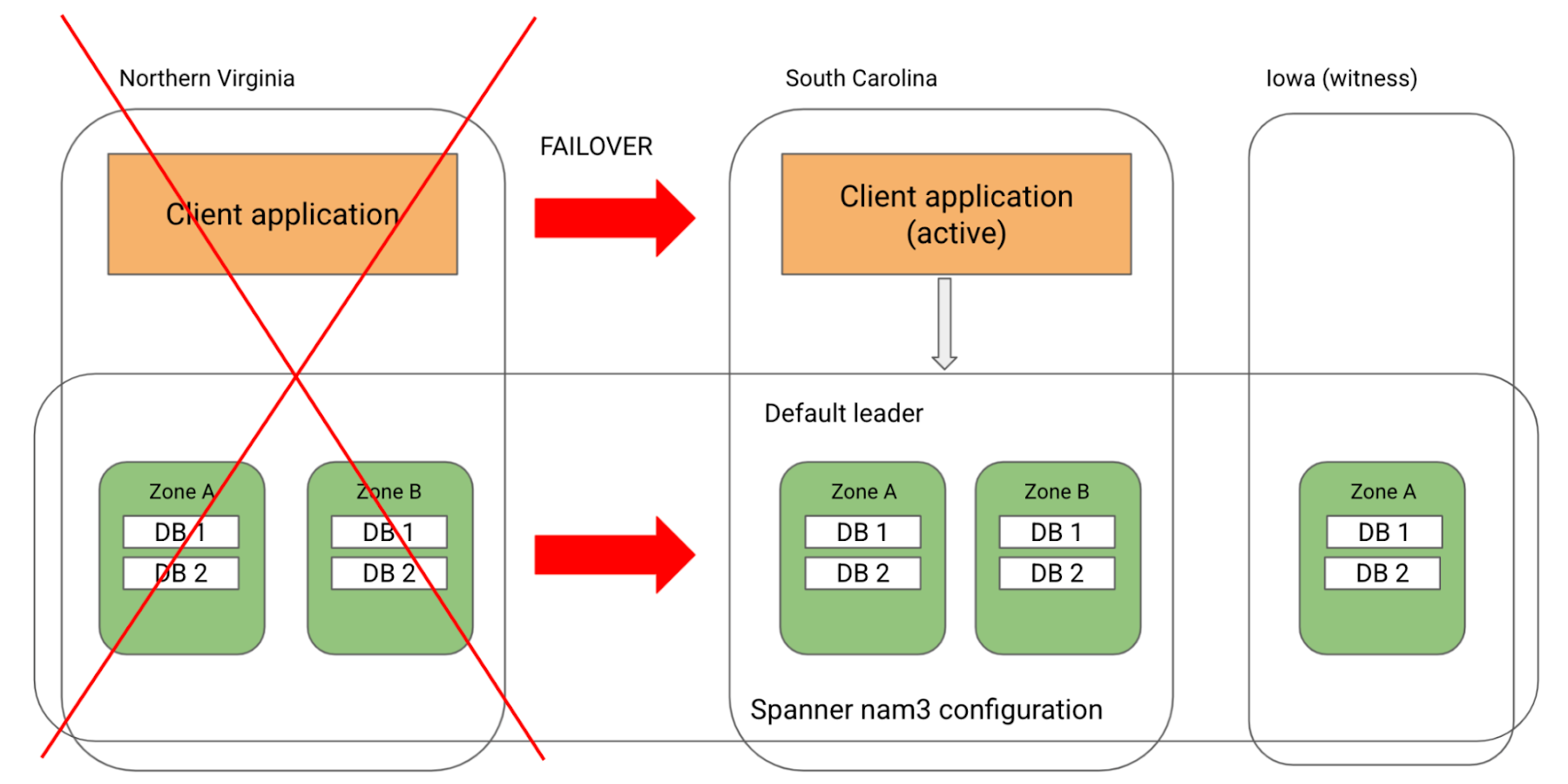

#1. Only your application instance fails in Northern Virginia

Here, your application will fail over to South Carolina. The application’s region will no longer be aligned with Cloud Spanner’s default leader region, and your application will see higher latencies because every write request will be a cross-region request to Northern Virginia.

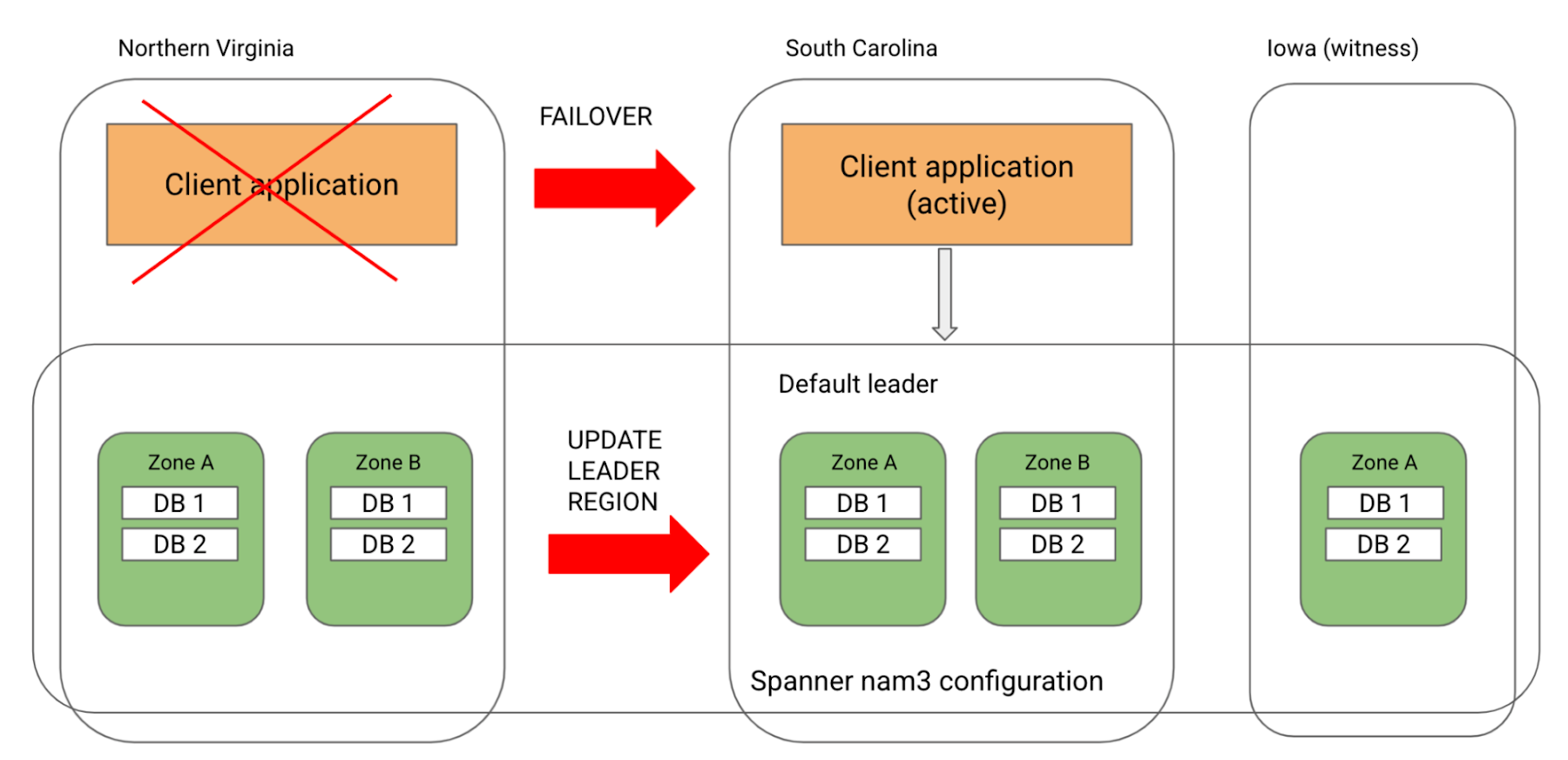

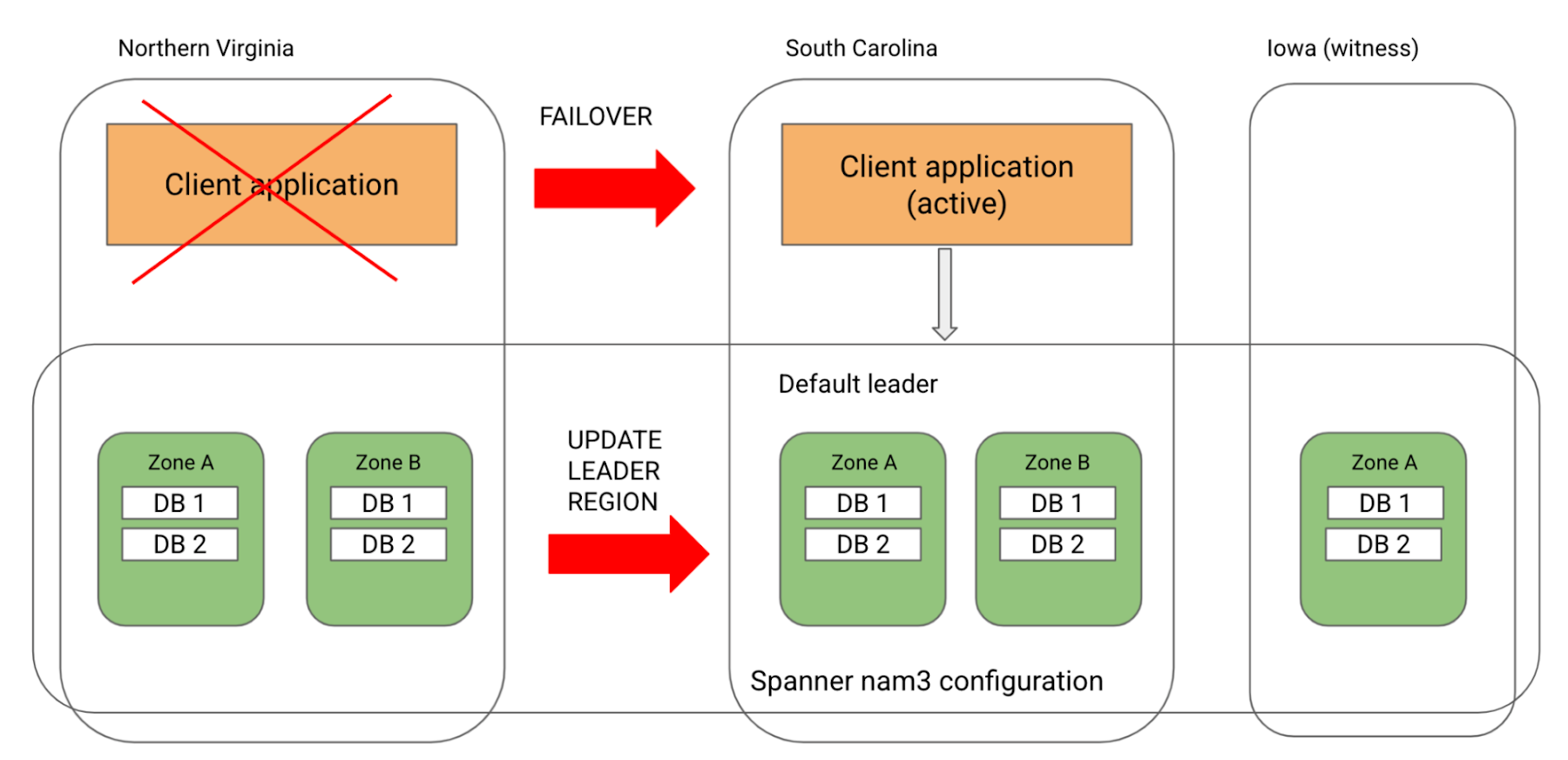

To improve latency, you can use leader placement to update Cloud Spanner’s default leader region to South Carolina. With this change, your application and Cloud Spanner’s leader region will be co-located in South Carolina and your latency will be low. Note that updating the default leader region does not cause any downtime for your application.

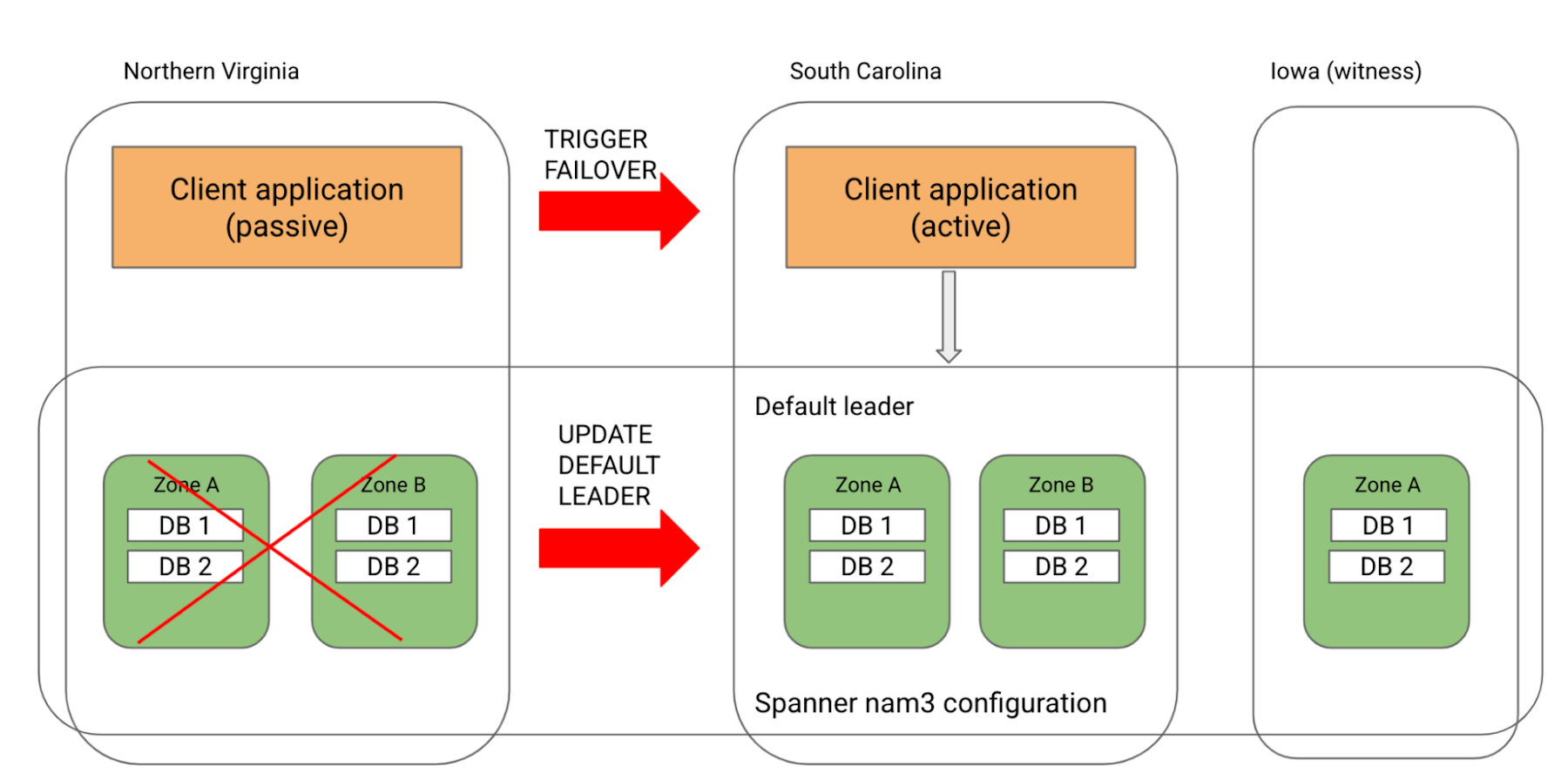

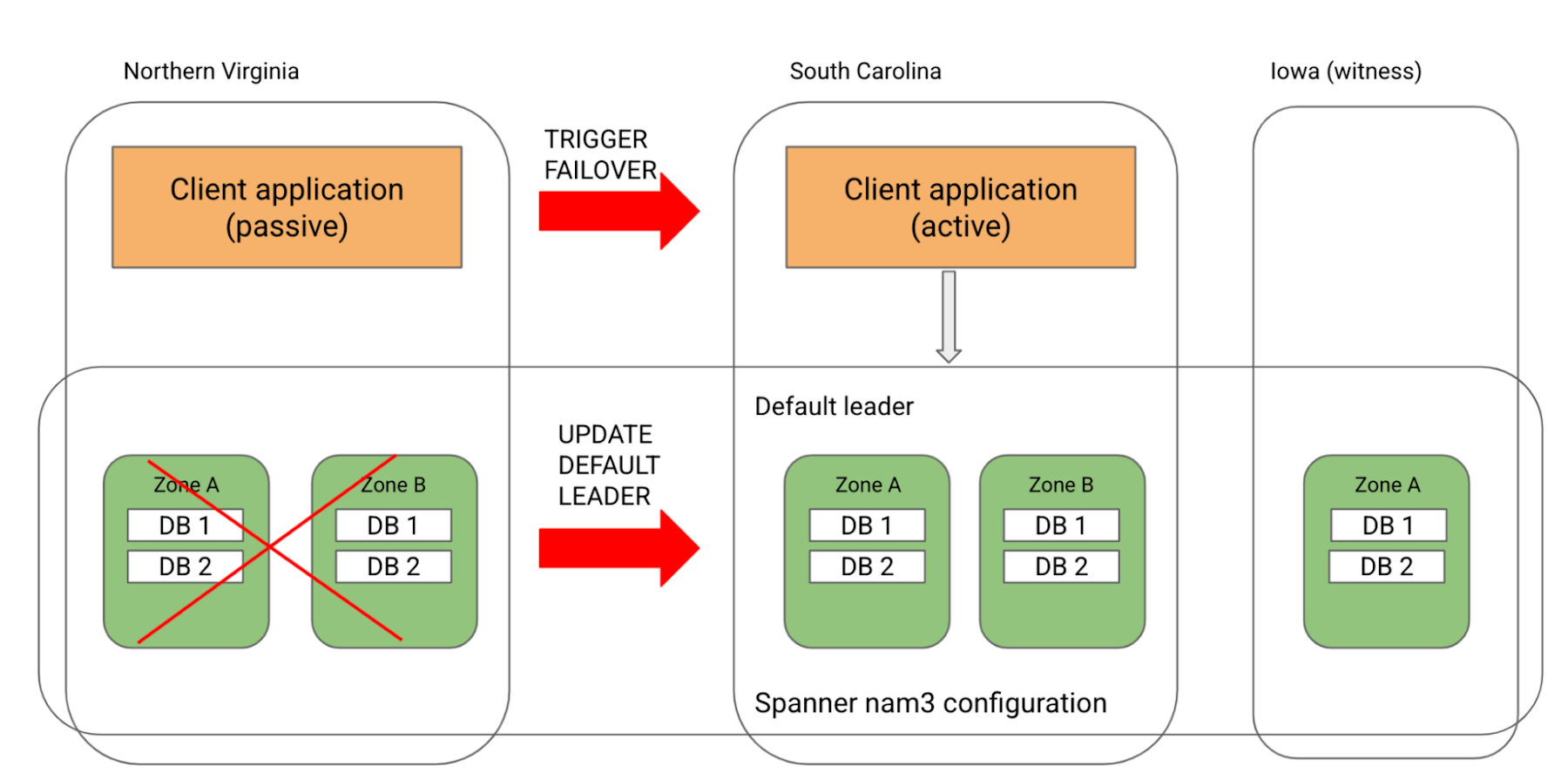

#2. Only Cloud Spanner fails in Northern Virginia

In this case, Cloud Spanner will automatically move the leaders to South Carolina. Note that the RPO and RTO are both 0 in this scenario. Since the application’s active instance remains in Northern Virginia, your application will see higher latencies.

In the case of an extended outage, you can improve performance by failing over your application to South Carolina and keeping it aligned with Cloud Spanner’s leader region. You can also explicitly update Cloud Spanner’s default leader region to South Carolina to prevent the leaders from moving back to Northern Virginia when the region comes back up.

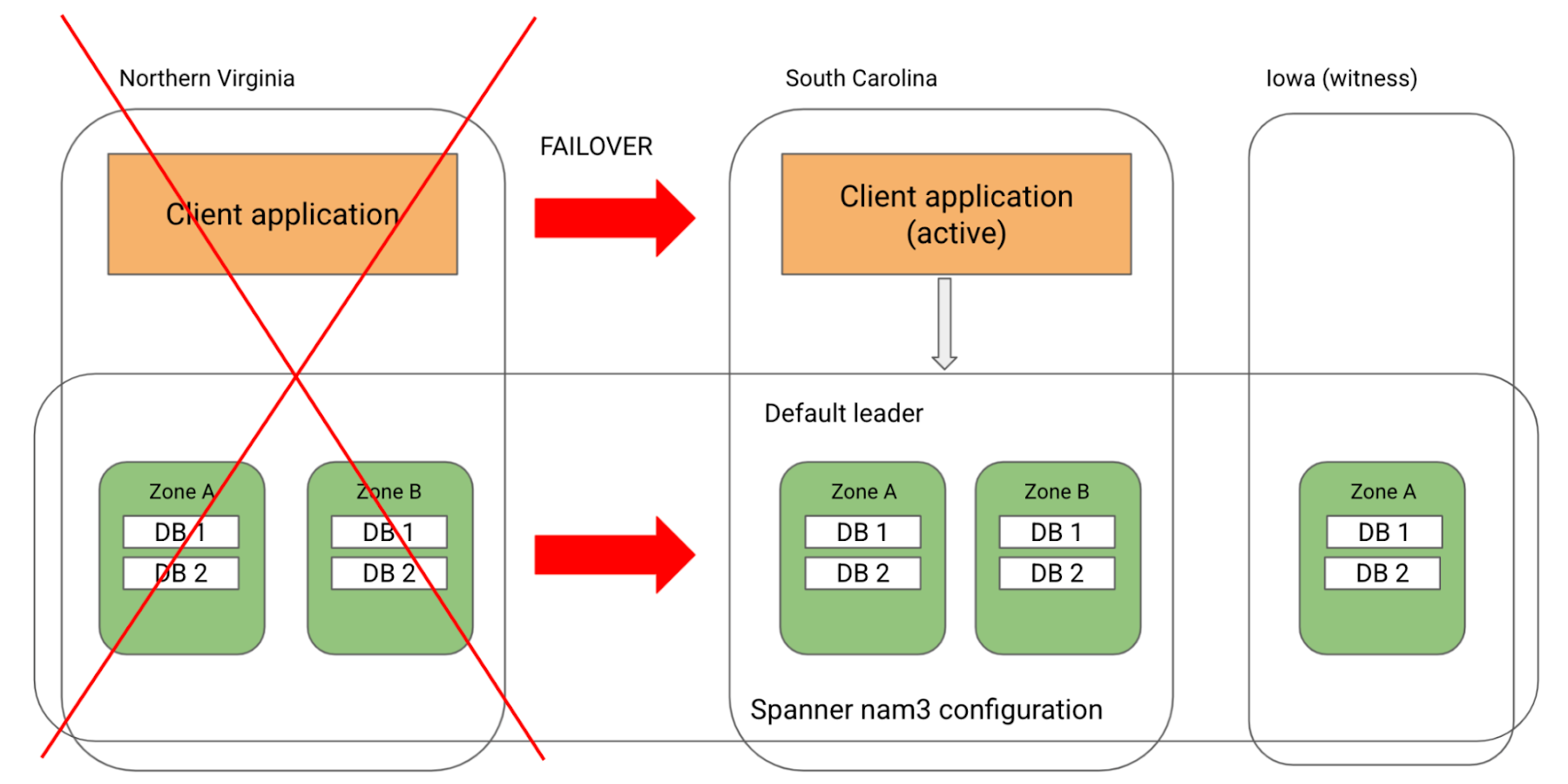

#3. All of Northern Virginia fails, bringing down both the application’s active instance and Cloud Spanner in Northern Virginia

In such an event, Cloud Spanner will automatically move its leaders to South Carolina without any impact to the application. Similarly, the application will fail over to South Carolina. Your application and your database both remain available, surviving a region failure. Furthermore, the database’s new leader region is aligned with the application’s failover region, ensuring that your application continues to see low latencies.

Note that when Northern Virginia comes back up, Cloud Spanner will automatically move its leaders back to Northern Virginia. To avoid that and to continue using South Carolina as the leader region aligned with the application’s active instance, you can explicitly update Cloud Spanner’s default leader region to South Carolina.
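The three scenarios can be summarized with a small toy model. This is a sketch for reasoning only, not Spanner’s actual leader election logic: it assumes that leaders sit in the default leader region whenever that region is healthy and otherwise in the other read-write region, as described above. The function names are hypothetical; the region IDs are us-east4 for Northern Virginia and us-east1 for South Carolina.

```python
NORTHERN_VIRGINIA = "us-east4"
SOUTH_CAROLINA = "us-east1"
READ_WRITE_REGIONS = (NORTHERN_VIRGINIA, SOUTH_CAROLINA)


def effective_leader(default_leader, healthy_spanner_regions):
    """Toy model: use the default leader region while it is healthy,
    otherwise fall back to another healthy read-write region."""
    if default_leader in healthy_spanner_regions:
        return default_leader
    for region in READ_WRITE_REGIONS:
        if region in healthy_spanner_regions:
            return region
    raise RuntimeError("no healthy read-write region")


def writes_cross_region(app_region, default_leader, healthy_spanner_regions):
    """True when the active application instance and the leaders are apart."""
    return app_region != effective_leader(default_leader, healthy_spanner_regions)


both = {NORTHERN_VIRGINIA, SOUTH_CAROLINA}
only_sc = {SOUTH_CAROLINA}

# Scenario 1: the application fails over, Spanner is healthy everywhere.
print(writes_cross_region(SOUTH_CAROLINA, NORTHERN_VIRGINIA, both))  # before update
print(writes_cross_region(SOUTH_CAROLINA, SOUTH_CAROLINA, both))     # after update

# Scenario 2: Spanner loses Northern Virginia, the application stays put.
print(writes_cross_region(NORTHERN_VIRGINIA, NORTHERN_VIRGINIA, only_sc))

# Scenario 3: both fail over; then Northern Virginia recovers.
print(writes_cross_region(SOUTH_CAROLINA, NORTHERN_VIRGINIA, only_sc))
print(writes_cross_region(SOUTH_CAROLINA, NORTHERN_VIRGINIA, both))  # leaders moved back
```

In every case where the model reports cross-region writes, the remedy from the text is the same: either move the application to the leaders or set the default leader region to wherever the application is active.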

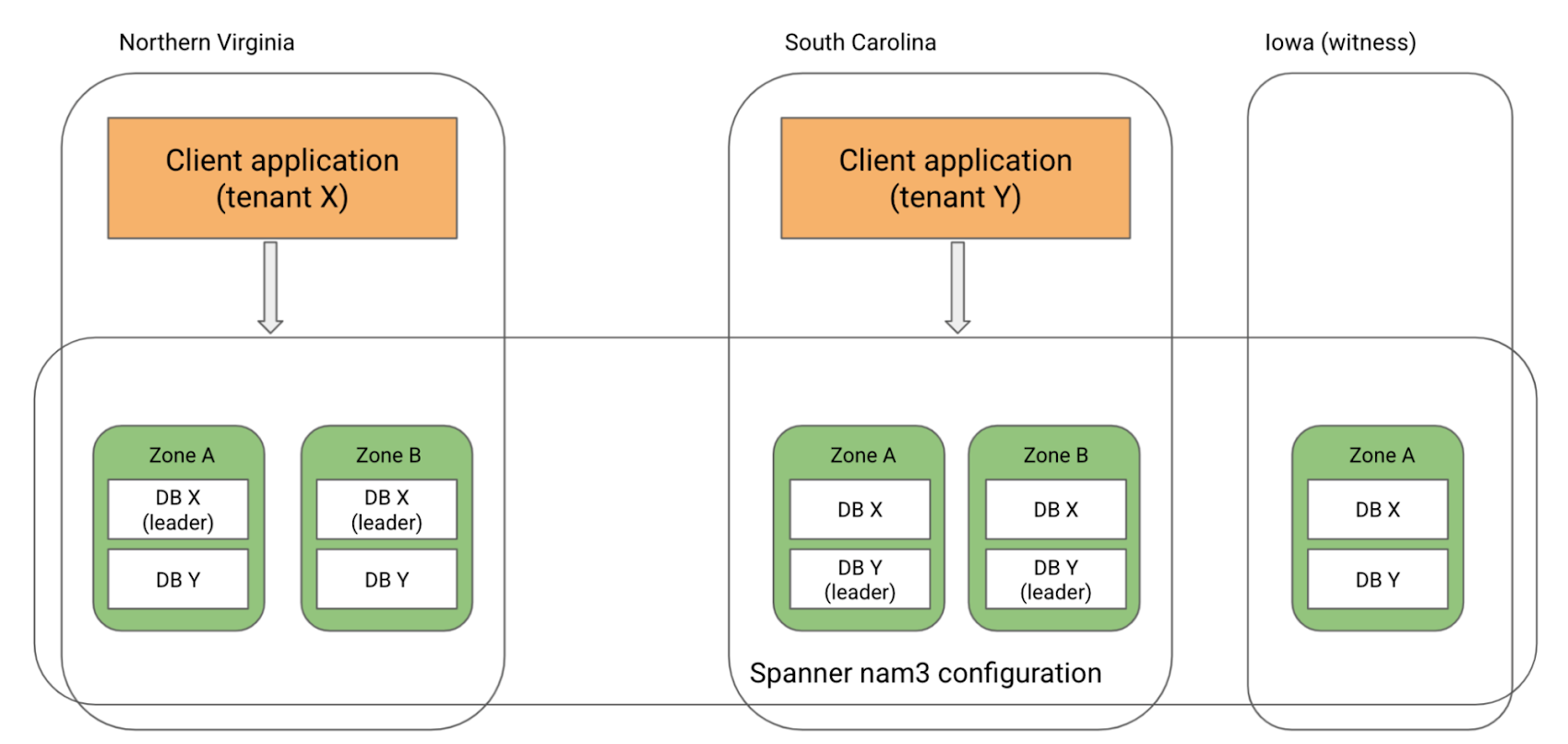

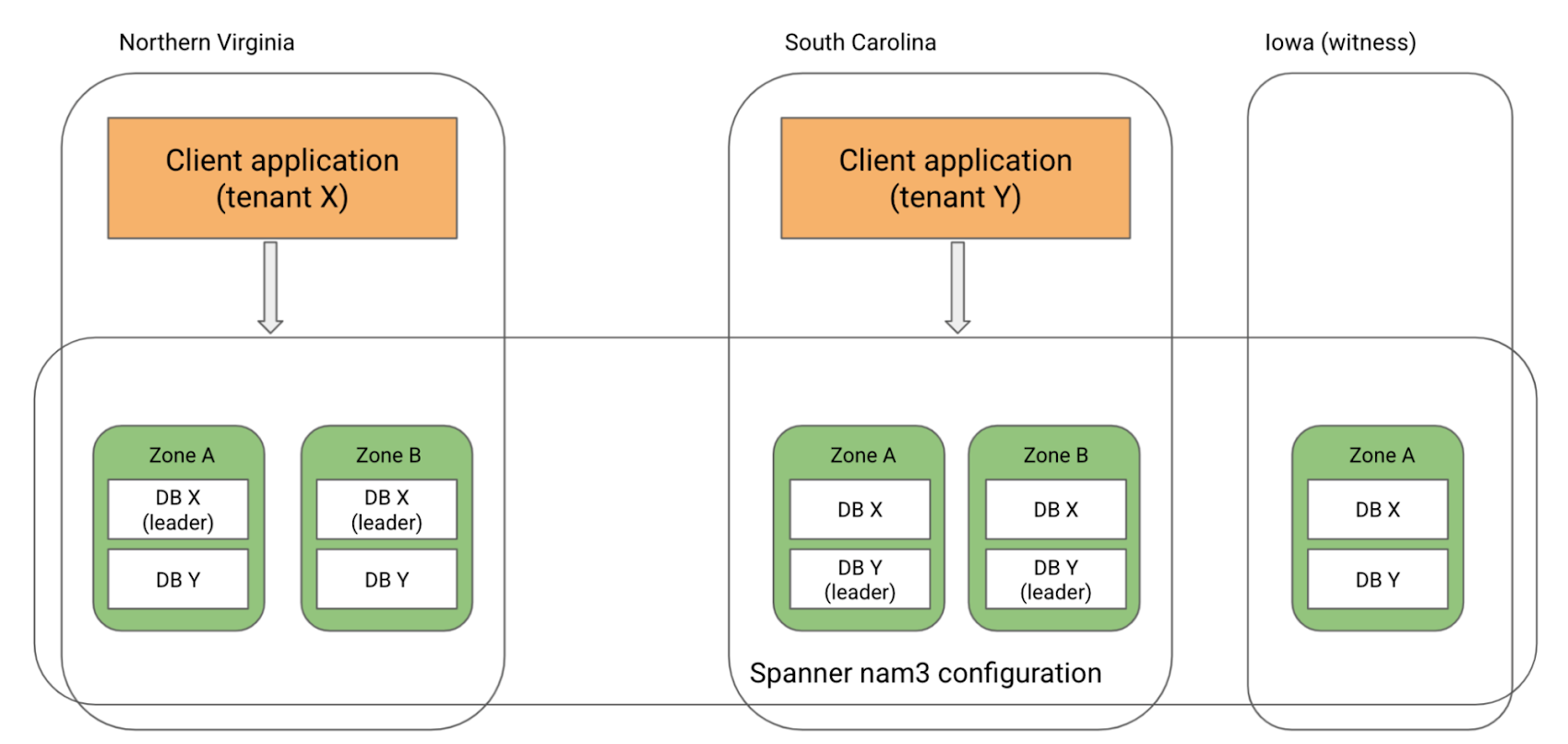

Multi-tenant architecture

If you have a multi-tenant application model that uses a separate database per tenant, you can use leader placement to configure the leader region per database to align with each tenant’s access patterns. For example, consider a multi-tenant application using the nam3 configuration. You could set the leader region to South Carolina for databases of tenants based in South Carolina and to Northern Virginia for databases of tenants based in Northern Virginia, thereby achieving low latencies for tenants from both regions.
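As a rough illustration of the per-tenant setup, the sketch below generates one `ALTER DATABASE` statement per tenant database. The tenant database names are made up for the example, and the mapping from tenant to region is something you would supply from your own records; the statements follow the same form as the salesdb example earlier in this post.

```python
# Hypothetical tenant databases mapped to the region where each tenant is based.
TENANT_REGIONS = {
    "tenant_acme": "us-east1",    # South Carolina
    "tenant_globex": "us-east4",  # Northern Virginia
}


def leader_ddl_per_tenant(tenant_regions):
    """Return one ALTER DATABASE statement per tenant database, sorted by name."""
    return [
        f"ALTER DATABASE {database_id} SET OPTIONS(default_leader='{region}');"
        for database_id, region in sorted(tenant_regions.items())
    ]


for statement in leader_ddl_per_tenant(TENANT_REGIONS):
    print(statement)
```

Each statement applies to its own database, so tenants sharing one nam3 instance can still have different leader regions.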

To Conclude

Cloud Spanner’s leader placement feature lets you achieve the best write latencies by aligning the default leader region with your application’s traffic. For more information on how to configure the default leader region, see https://cloud.google.com/spanner/docs/instance-configurations#configuring_the_default_leader_region

Learn more

To get started with Spanner, create an instance or try it out with a Spanner Qwik Start.

To learn more about Spanner’s regional and multi-regional configurations, read Demystifying Cloud Spanner multi-region configurations.