Intro to deep learning to track deforestation in supply chains

Alexandrina Garcia-Verdin

Geo for Environment Developer Advocate

Introduction

In my experience, machine learning often means surrendering to trial and error: you experiment with many different algorithms until you get the desired result. My peers and I at Google have a People and Planet AI YouTube series where we talk about how to train and host a model for environmental purposes using Google Cloud and Google Earth Engine. Our focus is inspiring people to use deep learning, and if we could rename the series, we would call it AI for Minimalists, since we recommend artificial neural networks for most of our use cases. In this episode we give an overview of what deep learning is and how you can use it for tracking deforestation in supply chains. This blog also includes a summary of the architecture and products you can use, which I presented at the 2022 Geo For Good Summit. For those of you interested in diving even deeper into code, please visit our end-to-end sample (click “open in colab” at the bottom of the screen to view the tutorial in notebook format).

What’s included in this article

What is Deep Learning?

Measuring deforestation in extractive supply chains with ML

When to build a custom model outside of Earth Engine?

How to build a model with Google Cloud & Earth Engine?

Try it out!

What is Deep Learning?

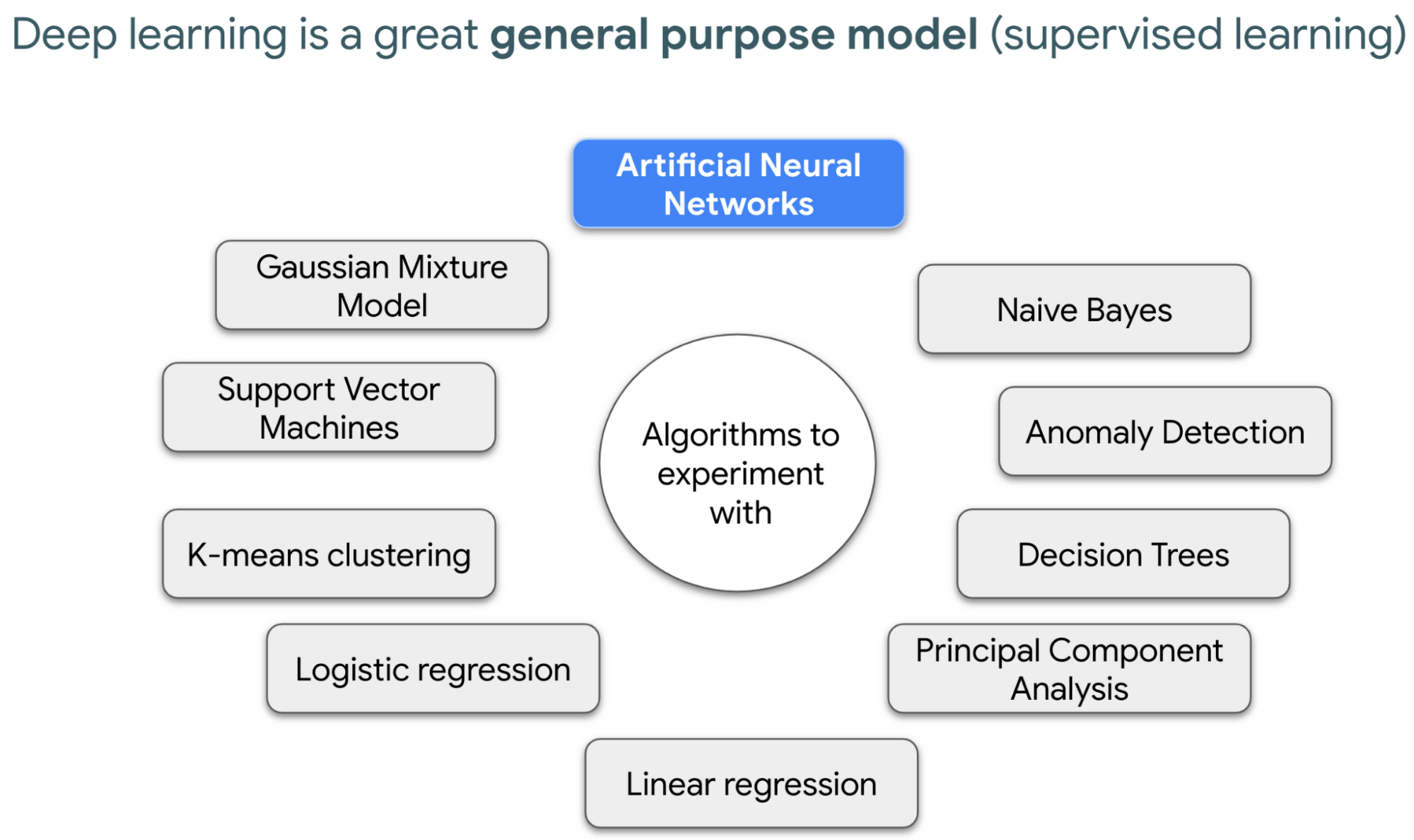

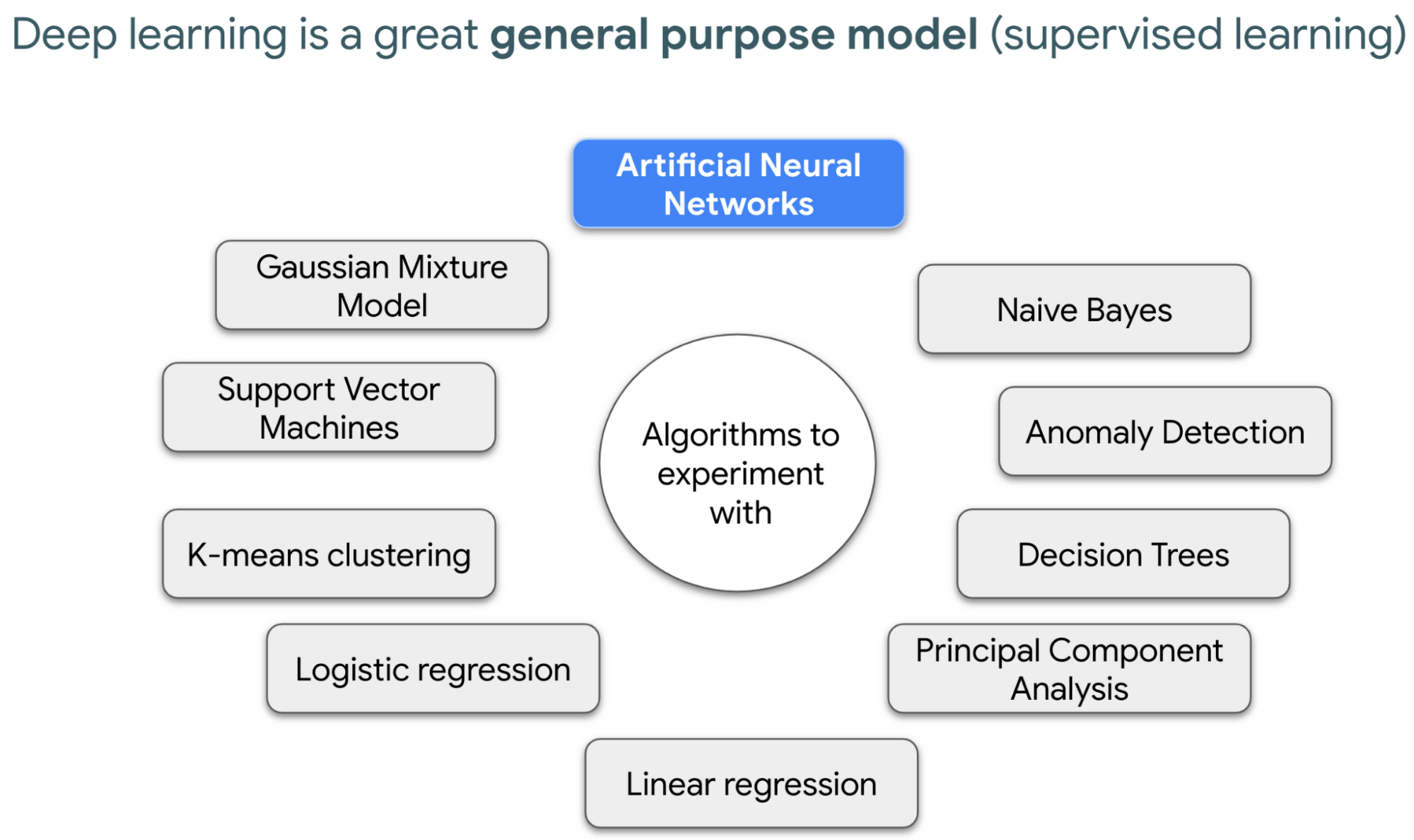

Out of the many ML algorithms out there, I’m happy to share that deep learning (artificial neural networks) is a technique that can be used for almost any supervised learning job.

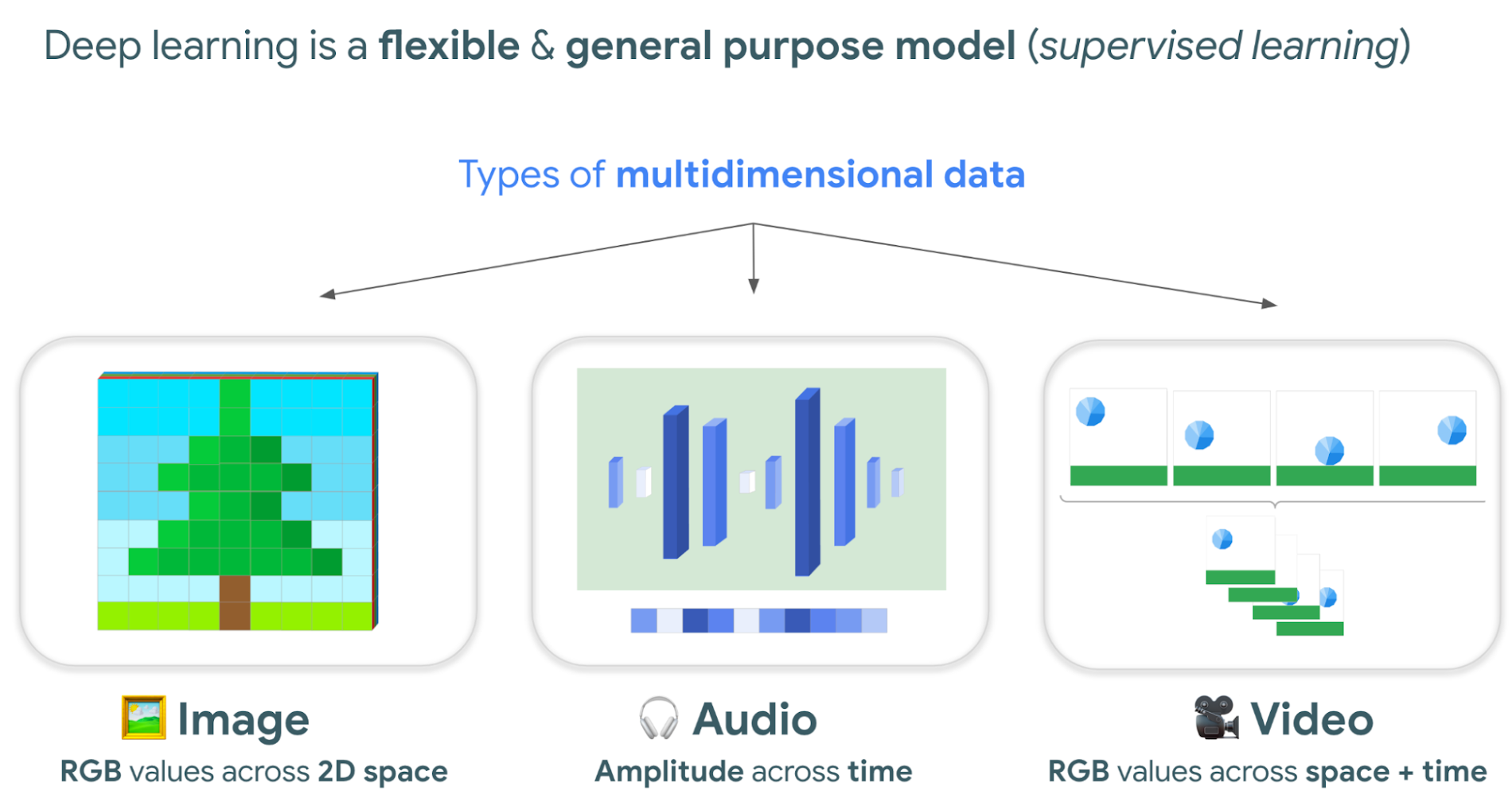

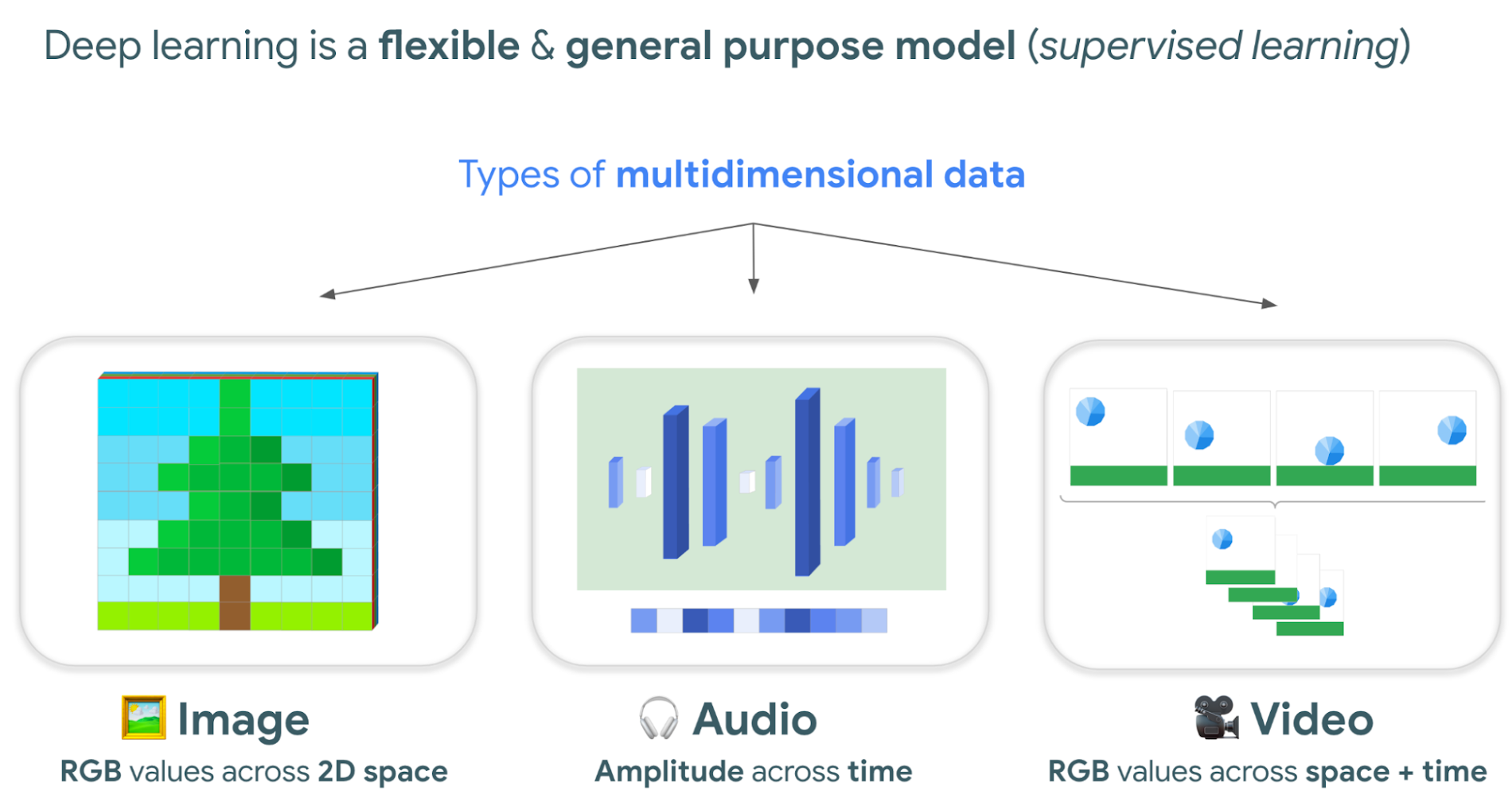

In supervised learning, you tell a computer the right answers to look for through examples. Deep learning is very flexible and is a great go-to algorithm, especially for images, audio, or video files, which are types of multidimensional data: each of these data types has one or more dimensions with specific values at each point.

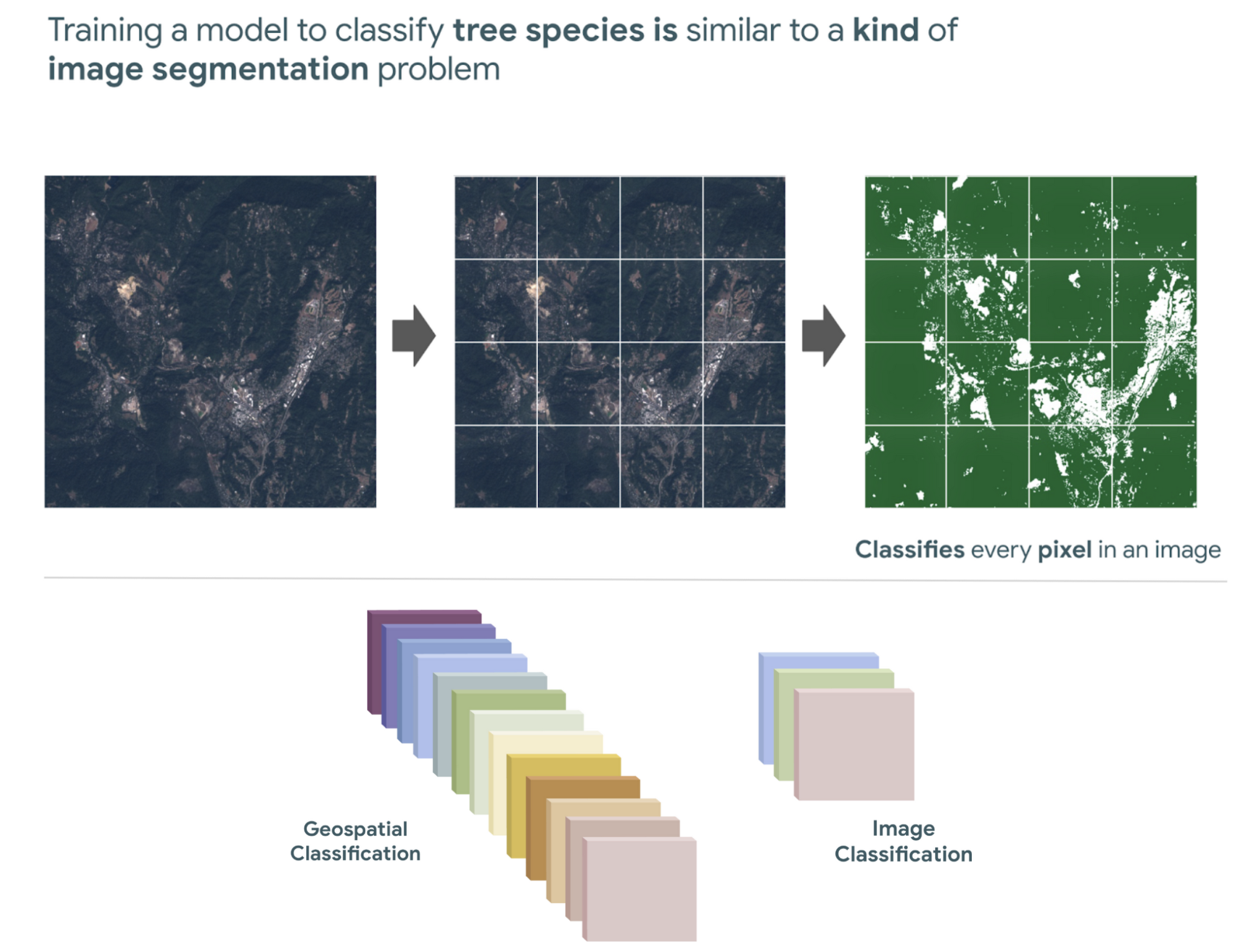

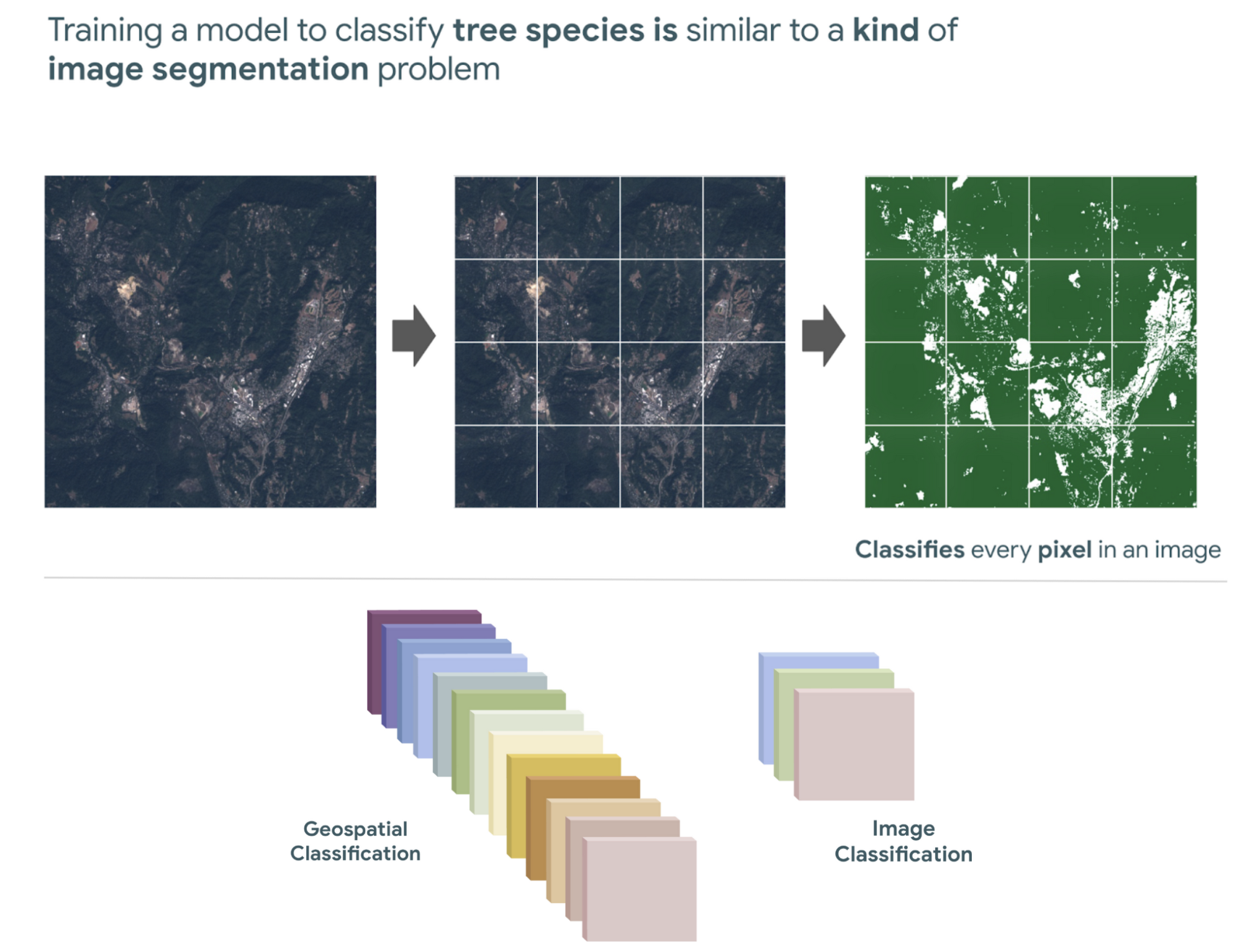

And training a model to classify tree species using satellite images is essentially an image segmentation problem, where every pixel in the image is classified.

Deep learning approaches problems differently

David Cavazos, Developer Programs Engineer

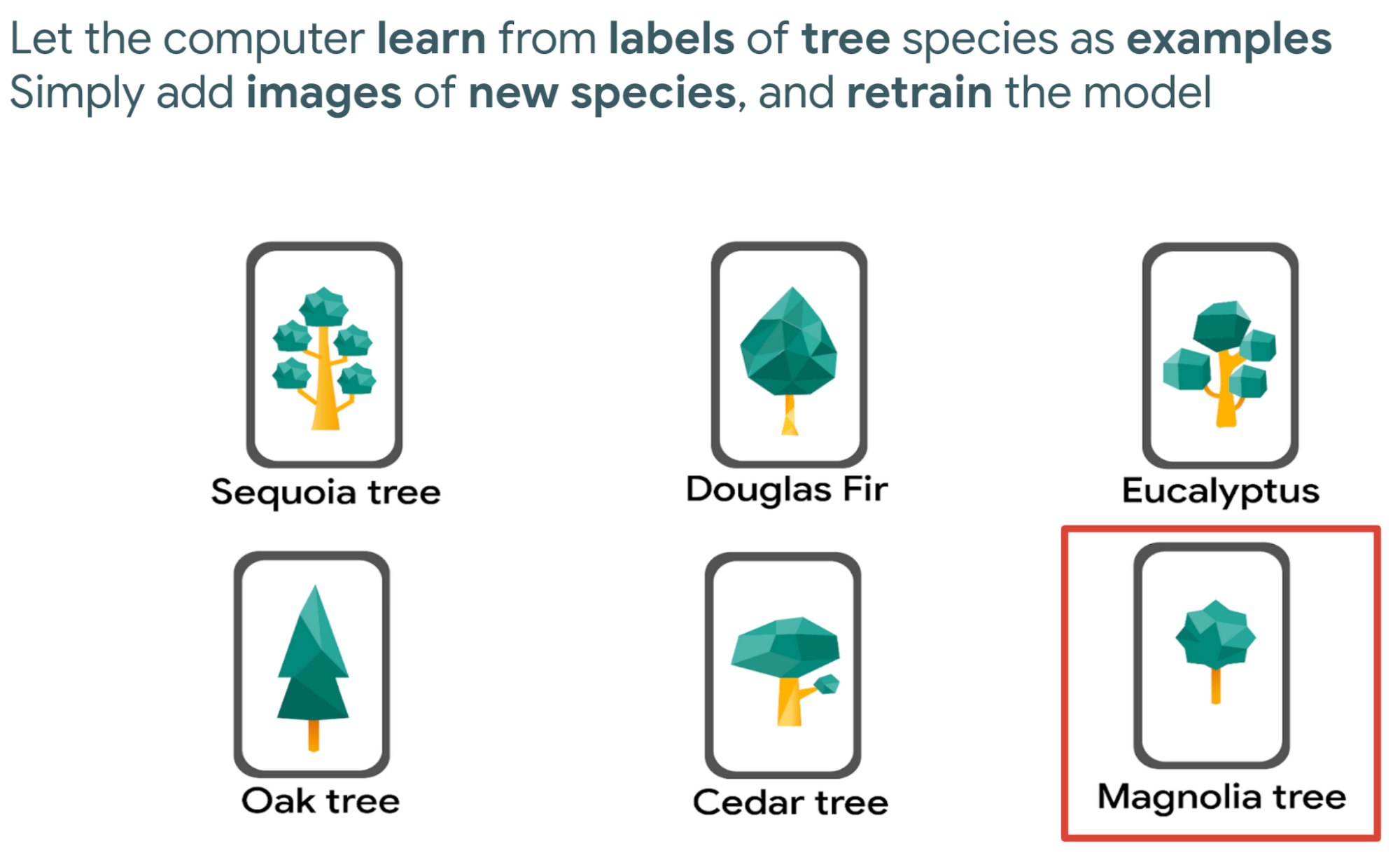

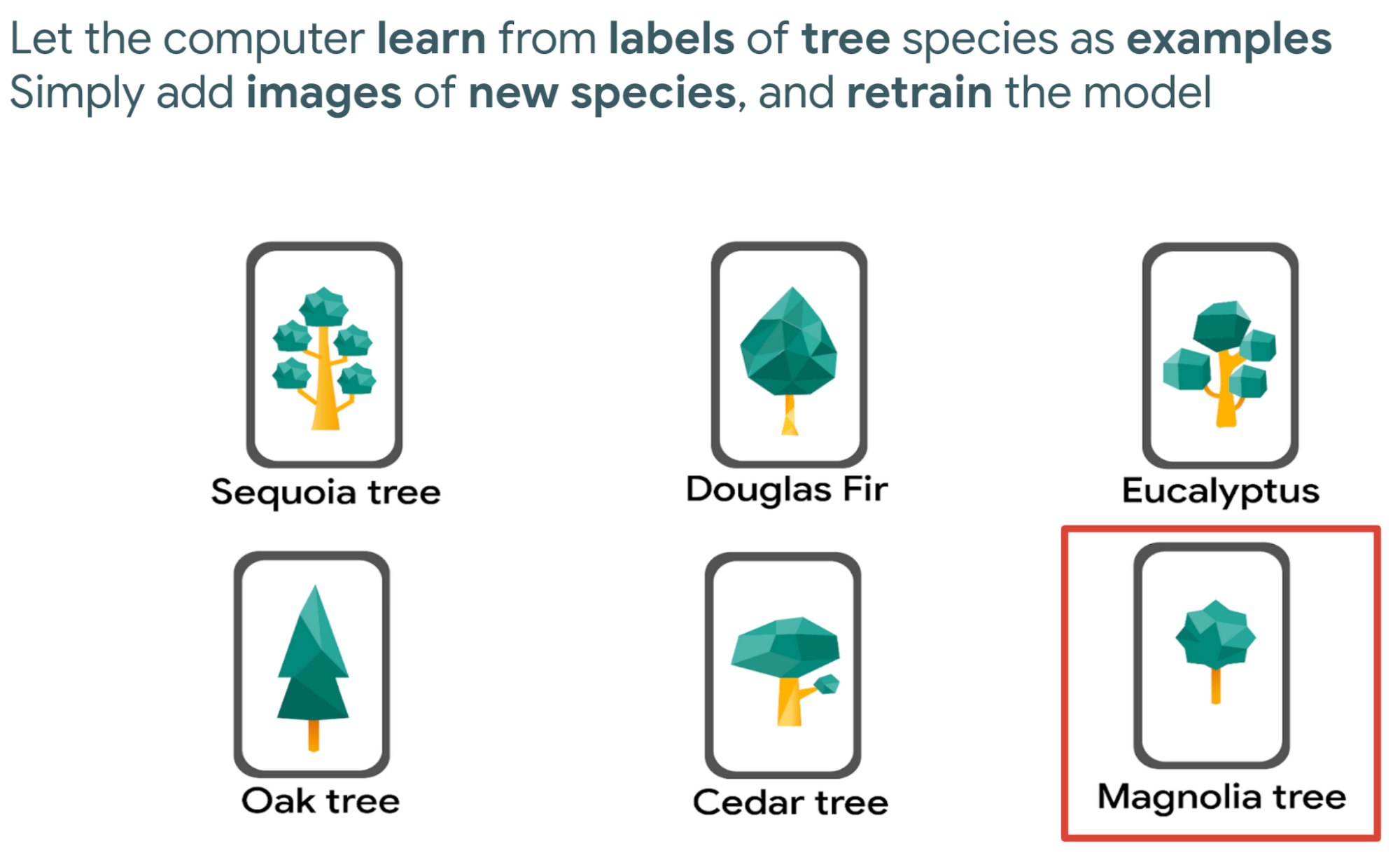

There’s no writing a function with explicit & sequential steps that reviews every single pixel one by one for every image, as traditional software development does. Let’s say you wish to build a model that classifies tree species. You don’t spend time coding all the instructions, but instead give a computer examples of images with tree species labels, and let it learn from these examples. And when you want to add more species, it’s as simple as adding new images of that species to retrain the model.

Measuring deforestation in extractive supply chains with ML

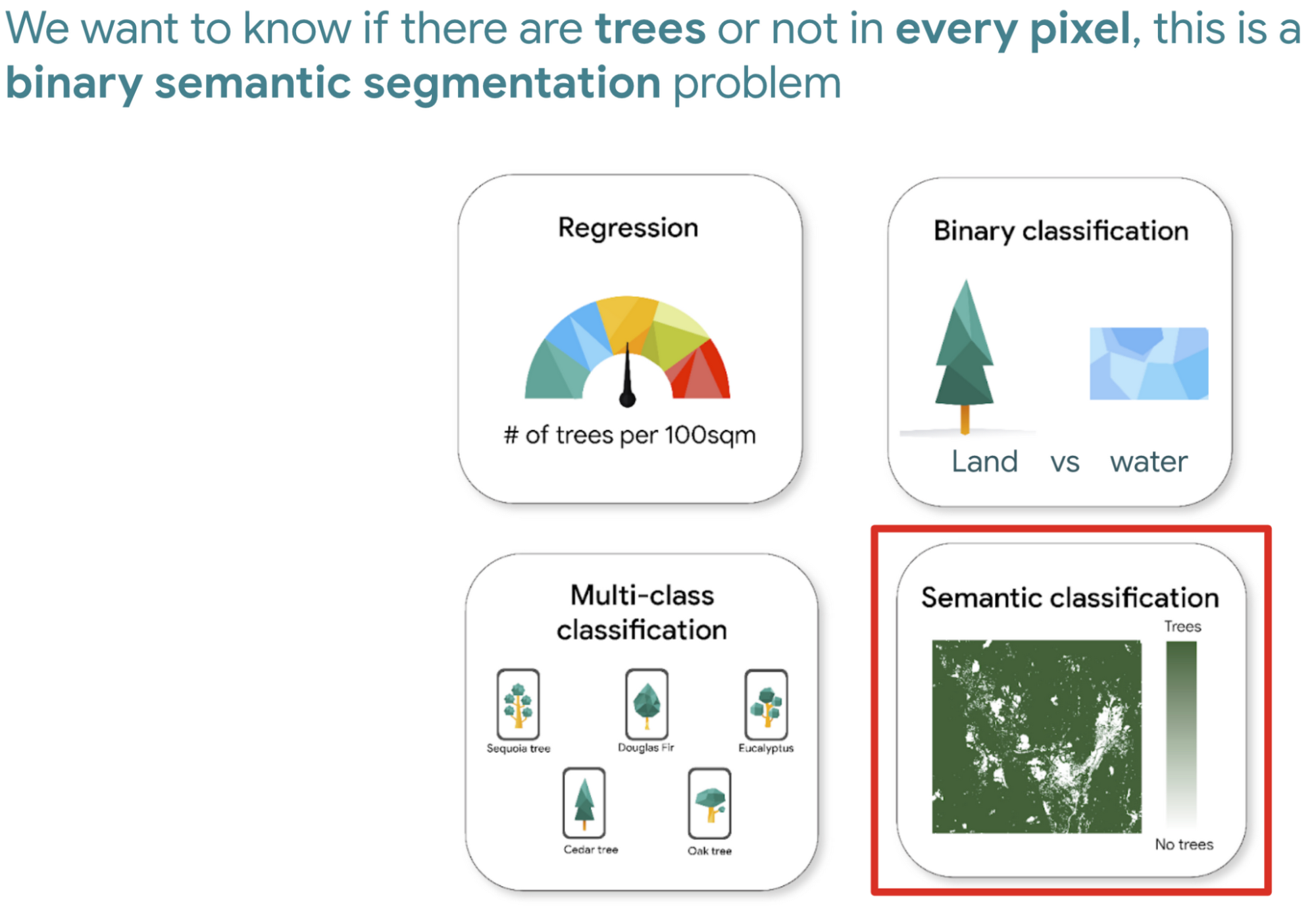

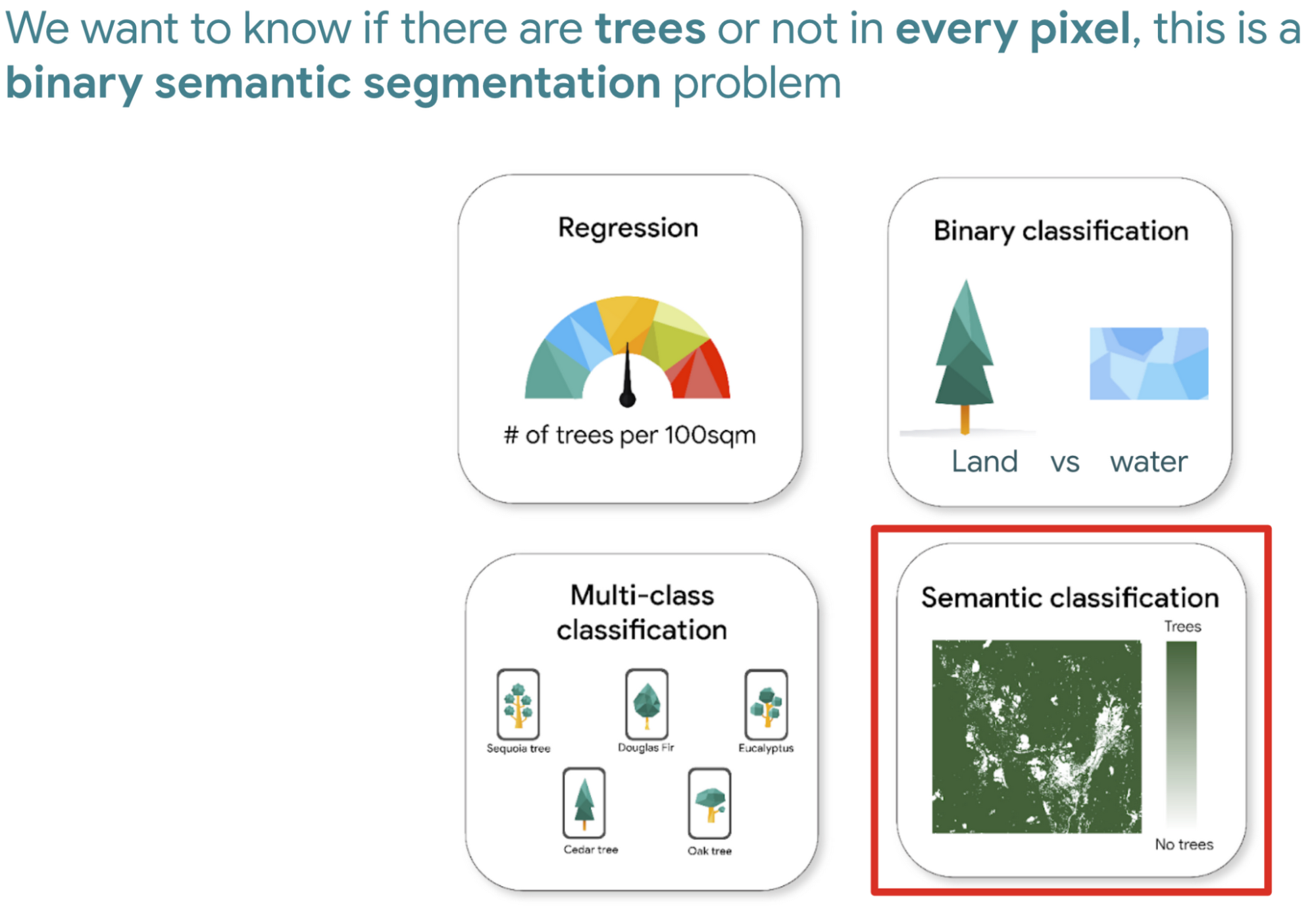

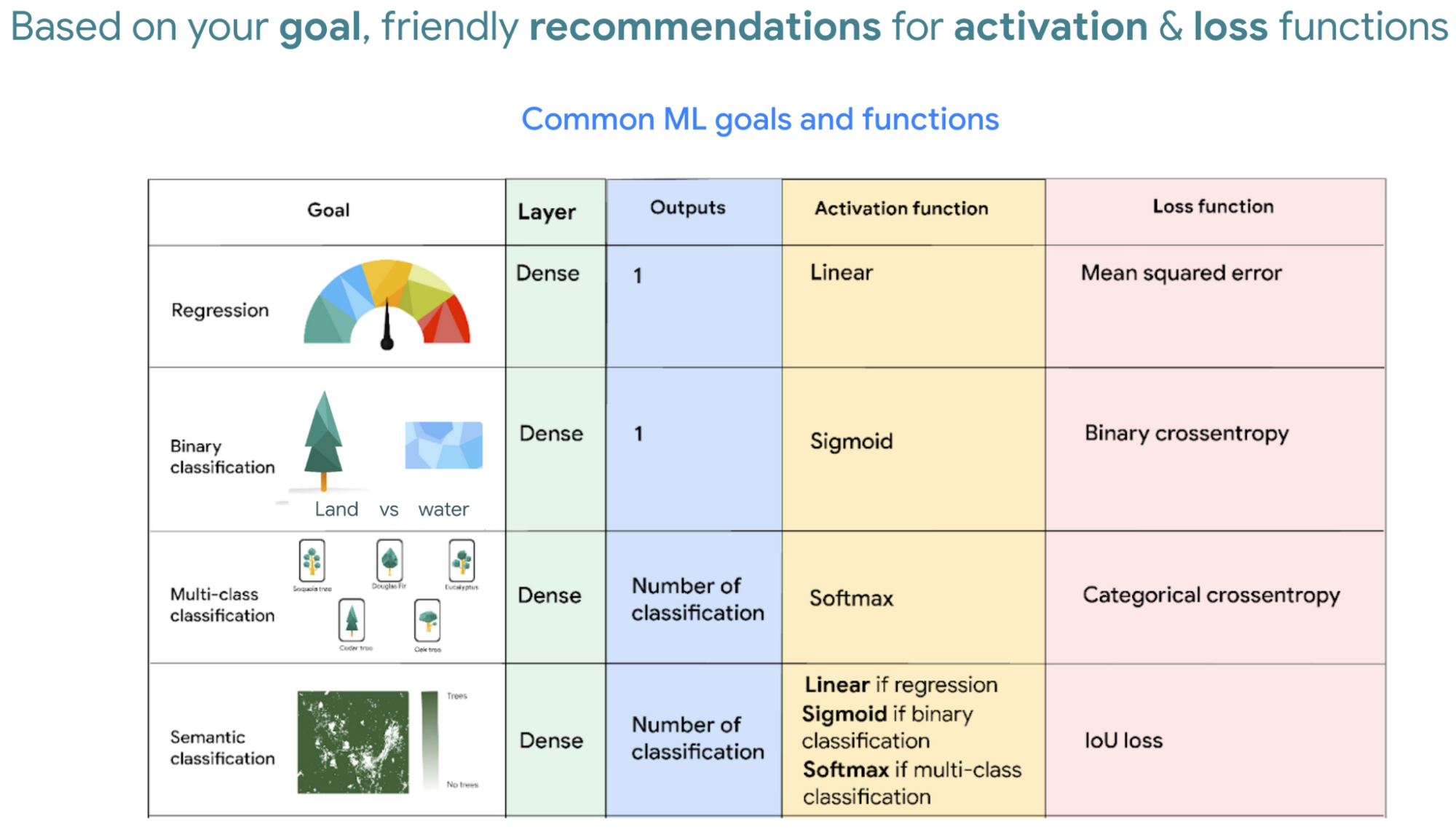

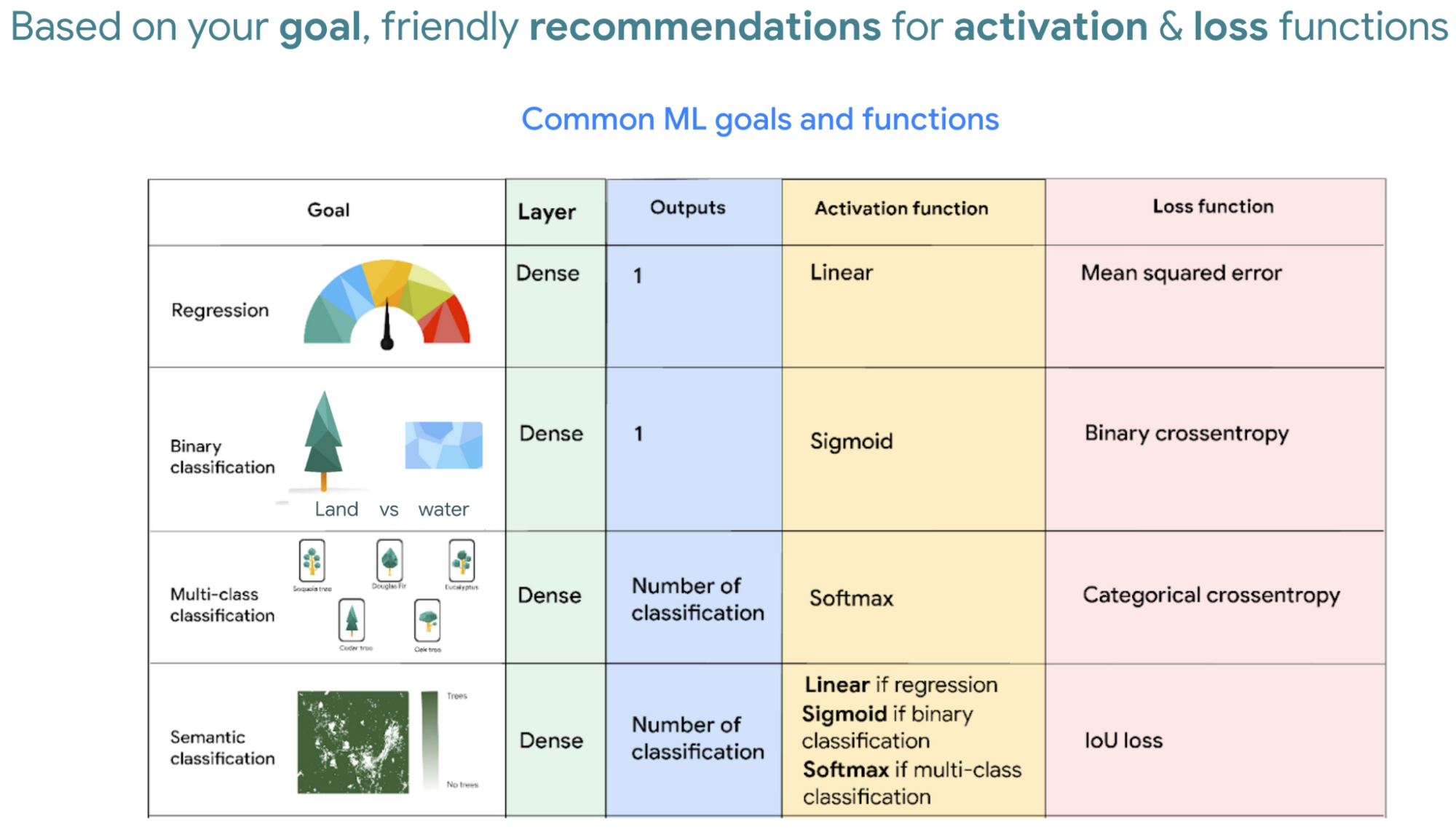

Let’s say we would like to measure deforestation using deep learning. To get started with building a model, we first need a dataset of satellite images with a roughly even number of labels marking where there are trees and where there aren't. Next, we choose a goal; a few common ones are shown here. In our case, we simply want to know whether there are trees or not for every pixel, so this is a binary semantic segmentation problem.
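One quick way to check whether a labeled dataset is roughly even is to measure the share of pixels marked as trees. The sketch below assumes the labels are stored as NumPy masks where 1 means trees and 0 means no trees; the `tree_fraction` helper and the tiny masks are made up for illustration.

```python
import numpy as np


def tree_fraction(label_masks: np.ndarray) -> float:
    """Return the share of pixels labeled as trees (1) across all masks."""
    return float(label_masks.mean())


# Hypothetical labels: four tiny 2x2 masks, 1 = trees, 0 = no trees.
labels = np.array([
    [[1, 1], [0, 0]],
    [[0, 1], [1, 0]],
    [[1, 0], [0, 1]],
    [[0, 0], [1, 1]],
])

fraction = tree_fraction(labels)
print(f"tree pixels: {fraction:.0%}")
```

A value far from 50% would suggest collecting more examples of the underrepresented class before training.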

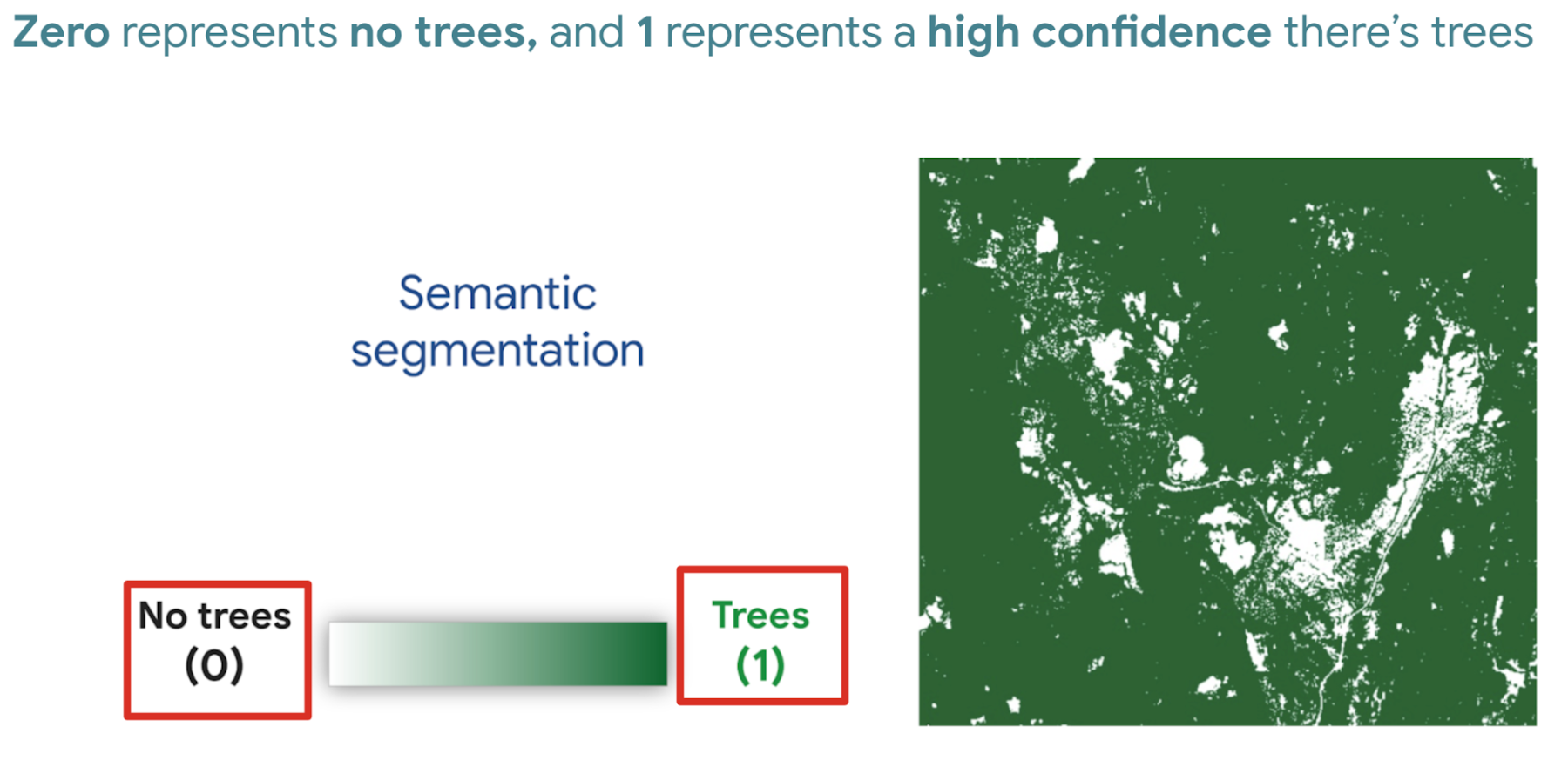

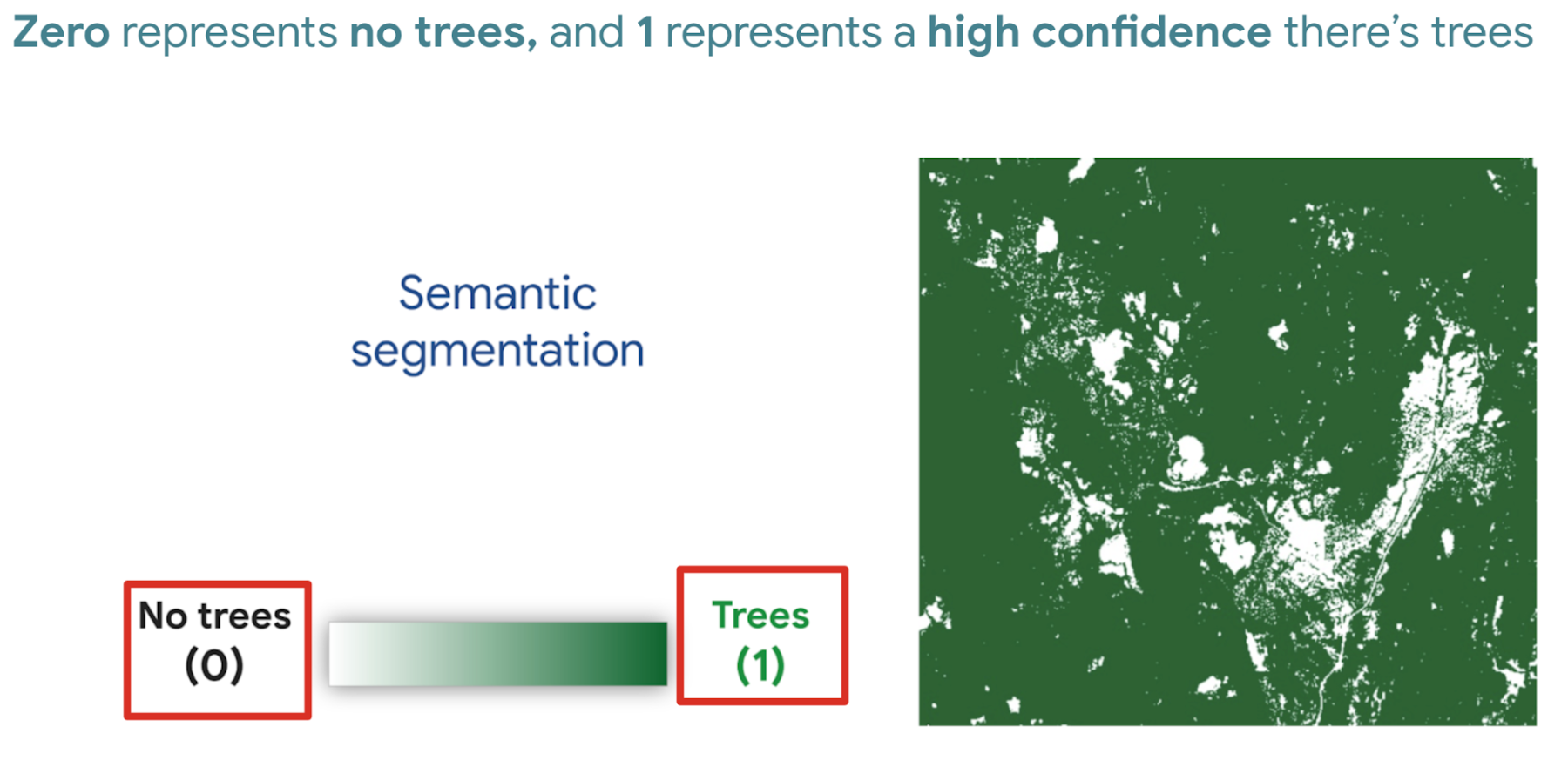

And based on this goal, we expect the output to be the probability of trees for every pixel, as a number between 0 and 1. Zero represents high confidence that there are no trees, and one represents high confidence that there are trees.
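To make the 0-to-1 output concrete, here is a minimal NumPy sketch. It assumes the model's last layer uses a sigmoid activation, which squashes any raw score into that range; the raw scores and the 0.5 cutoff are illustrative choices, not values from our sample.

```python
import numpy as np


def sigmoid(x: np.ndarray) -> np.ndarray:
    """Squash raw scores into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))


# Hypothetical raw scores for a 2x2 patch of pixels.
raw_scores = np.array([[-4.0, 0.0],
                       [2.0, 6.0]])

probabilities = sigmoid(raw_scores)   # per-pixel probability of trees
tree_mask = probabilities >= 0.5      # illustrative cutoff for a yes/no map

print(probabilities.round(3))
print(tree_mask)
```

Keeping the probabilities around, rather than only the yes/no mask, lets you pick a stricter or looser cutoff later without retraining.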

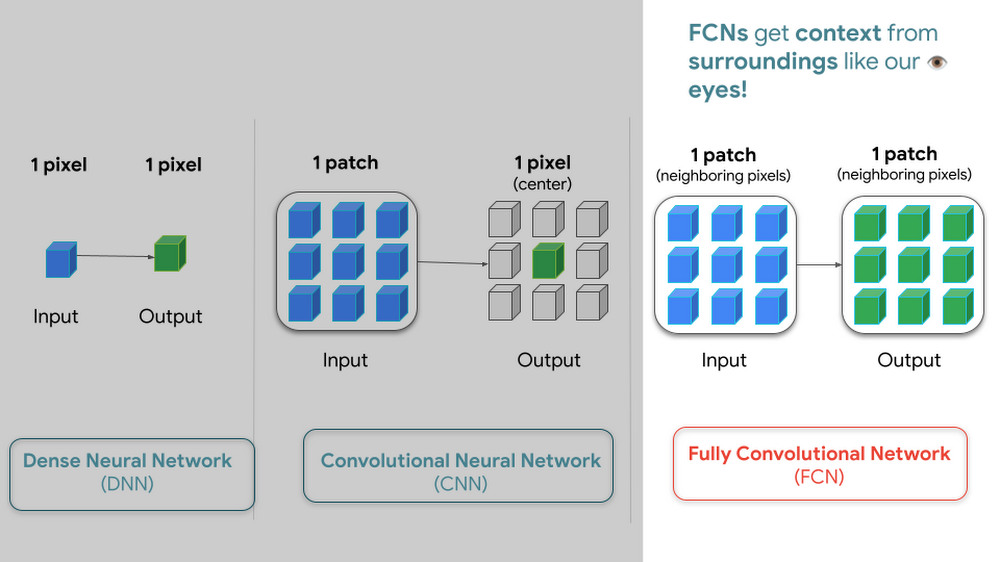

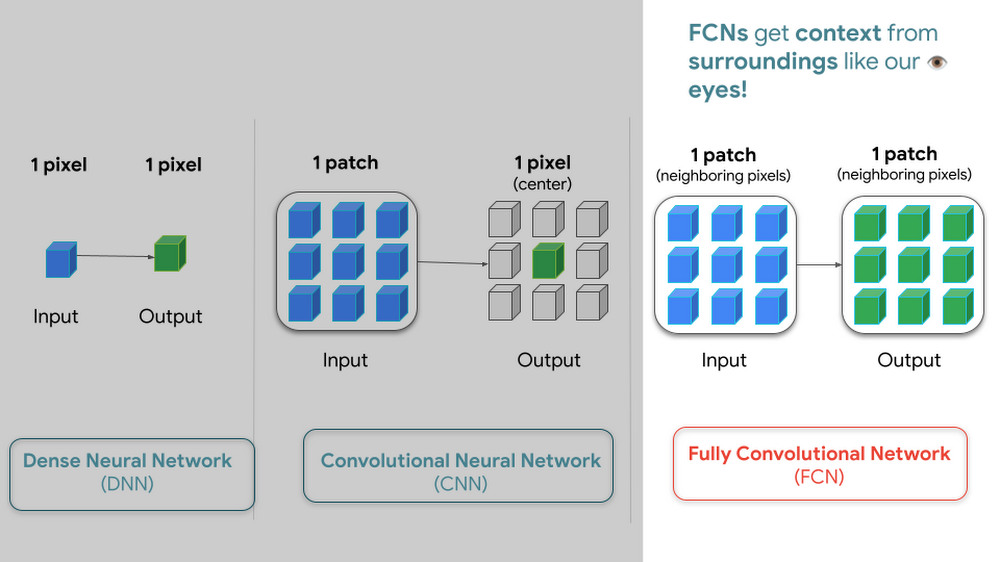

But how do we go from input images to probabilities of trees? There are many ways to approach this problem; here are 3 common ones. My peers and I prefer using fully convolutional networks when building a map with ML predictions.

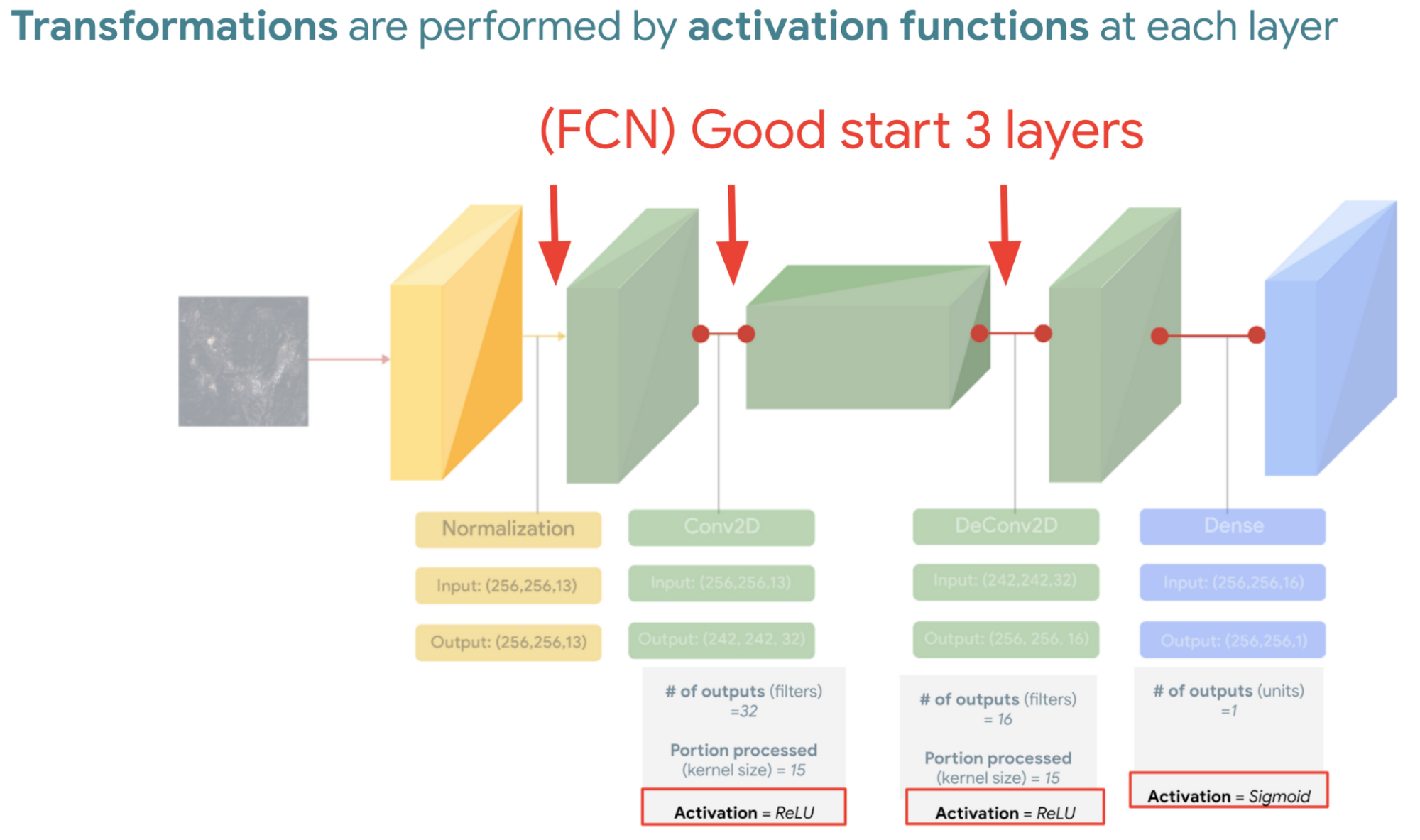

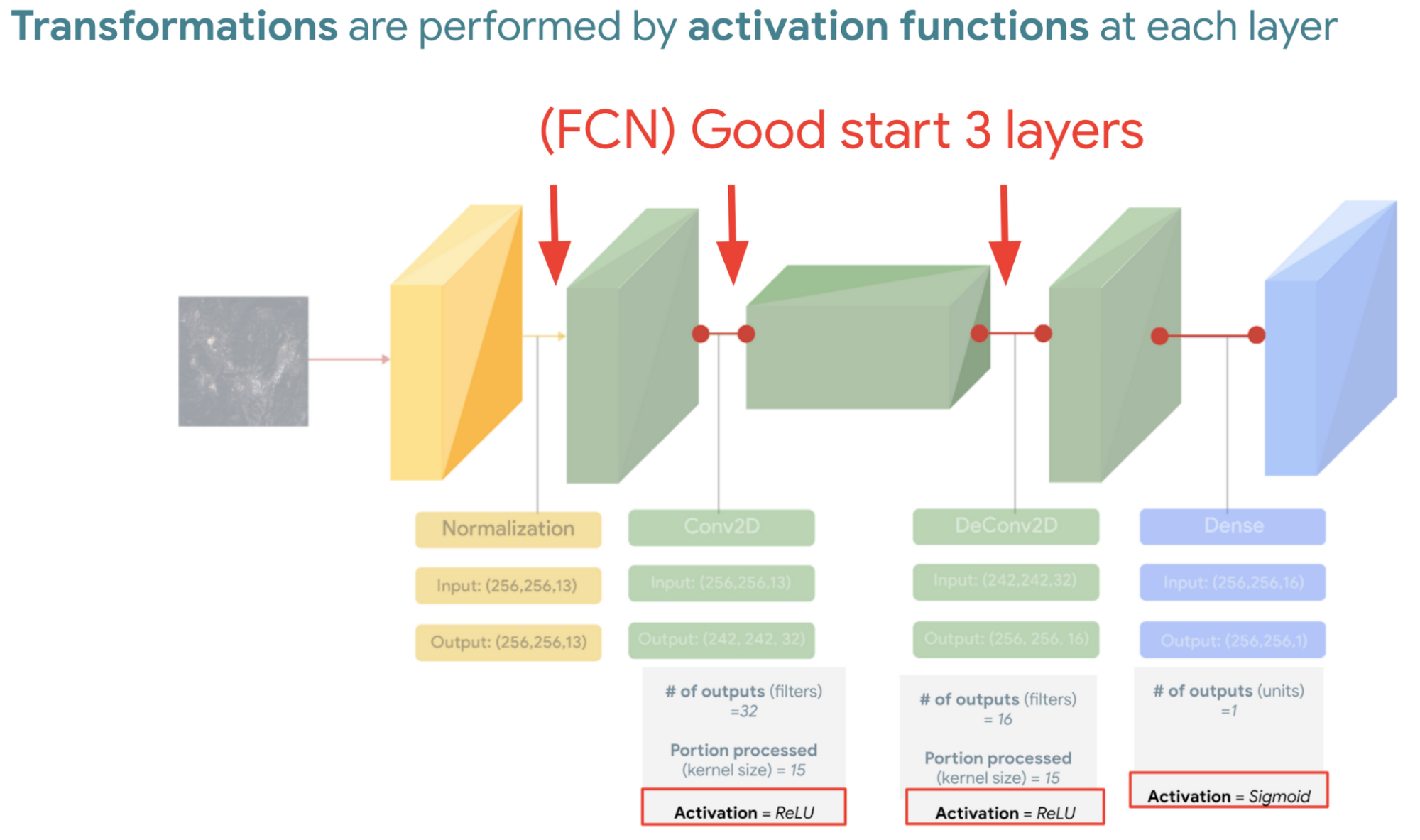

And since a model is a collection of interconnected layers, we must come up with an arrangement of layers that transforms our data inputs into our desired outputs. Each layer, by the way, has something called an activation function, which transforms that layer's output before passing it to the next layer.
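As a rough illustration of layers and activation functions, the sketch below stacks two layers in plain NumPy. Each layer is a 1x1 convolution, meaning the same weights are applied to every pixel, which is the simplest fully convolutional arrangement; real models typically use wider kernels and many more layers. The band count, layer sizes, and random untrained weights are all assumptions for the example.

```python
import numpy as np

rng = np.random.default_rng(seed=0)


def relu(x: np.ndarray) -> np.ndarray:
    return np.maximum(x, 0.0)


def sigmoid(x: np.ndarray) -> np.ndarray:
    return 1.0 / (1.0 + np.exp(-x))


def pointwise_layer(pixels, weights, bias, activation):
    """A 1x1 convolution: the same weights are applied to every pixel."""
    return activation(pixels @ weights + bias)


# Hypothetical sizes: 4 input bands, 8 hidden units, 1 output per pixel.
num_bands, hidden_units = 4, 8
w1, b1 = rng.normal(size=(num_bands, hidden_units)), np.zeros(hidden_units)
w2, b2 = rng.normal(size=(hidden_units, 1)), np.zeros(1)


def predict(image: np.ndarray) -> np.ndarray:
    """Map a (height, width, bands) image to (height, width, 1) probabilities."""
    hidden = pointwise_layer(image, w1, b1, relu)
    return pointwise_layer(hidden, w2, b2, sigmoid)


# Nothing in the layers depends on image size, so any size works.
small = predict(rng.random((16, 16, num_bands)))
large = predict(rng.random((32, 48, num_bands)))
print(small.shape, large.shape)
```

Because the weights are untrained, the outputs are meaningless here; the point is only how data flows through layers and activations.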

FYI, below is a handy dandy table with our recommended activation and loss functions to choose from based on your goal. We hope this saves you time.

We then reach the fourth and last layer. Depending on the goal we chose at the beginning, we also choose an appropriate loss function, which scores how well the model did during training.
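To show what scoring a model looks like, here is a small NumPy sketch of binary cross-entropy, one common loss for a yes/no goal like trees versus no trees. Using this particular loss is an assumption for the example, and the labels and predictions are made up.

```python
import numpy as np


def binary_crossentropy(labels, probabilities, epsilon=1e-7):
    """Average per-pixel penalty; lower means predictions match the labels better."""
    p = np.clip(probabilities, epsilon, 1.0 - epsilon)  # avoid log(0)
    return float(-np.mean(labels * np.log(p) + (1.0 - labels) * np.log(1.0 - p)))


# Hypothetical 2x2 patch: 1 = trees, 0 = no trees.
labels = np.array([[1.0, 0.0],
                   [1.0, 0.0]])

good_predictions = np.array([[0.9, 0.1],
                             [0.8, 0.2]])
bad_predictions = np.array([[0.2, 0.9],
                            [0.1, 0.8]])

good_loss = binary_crossentropy(labels, good_predictions)
bad_loss = binary_crossentropy(labels, bad_predictions)
print(f"good: {good_loss:.3f}  bad: {bad_loss:.3f}")
```

Training nudges the layer weights to push this number down, so confident wrong answers cost more than hesitant ones.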

After choosing layers and functions, you split your data into training and validation datasets. Just remember that all of this work is about experimenting repeatedly until you reach the desired results. Our 8-minute episode covers this overview in more detail.
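The split itself can be sketched in a few lines of NumPy. This assumes the examples fit in memory as arrays; the 80/20 ratio, the seed, and the `split_examples` helper are illustrative choices rather than what our sample uses.

```python
import numpy as np


def split_examples(inputs, labels, validation_fraction=0.2, seed=42):
    """Shuffle examples, then hold out a fraction for validation."""
    rng = np.random.default_rng(seed)
    order = rng.permutation(len(inputs))
    num_validation = int(len(inputs) * validation_fraction)
    validation_idx, training_idx = order[:num_validation], order[num_validation:]
    training = (inputs[training_idx], labels[training_idx])
    validation = (inputs[validation_idx], labels[validation_idx])
    return training, validation


# Hypothetical dataset: 10 images of 2x2 pixels with 3 bands, plus 2x2 label masks.
inputs = np.arange(10 * 2 * 2 * 3, dtype=float).reshape(10, 2, 2, 3)
labels = np.zeros((10, 2, 2))

(train_x, train_y), (val_x, val_y) = split_examples(inputs, labels)
print(len(train_x), "training examples,", len(val_x), "validation examples")
```

The model only learns from the training set; the validation set stays hidden so you can tell whether it generalizes to images it has not seen.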

When to build a custom model outside of Earth Engine?

Now that we have covered what deep learning is, the next step is understanding which tools to use to build our deforestation model. For starters, it’s important to call out that Google Earth Engine is a wonderful tool that helps organizations of all sizes find insights about changes on the planet in order to make a climate positive impact. It has built-in machine learning algorithms (classifiers) that users can quickly spin up with just a basic machine learning background. This is a fantastic place to start when using ML on geospatial data; however, there are multiple situations where you will want to build a custom model instead, such as:

You want to use a popular ML library such as TensorFlow Keras.

You wish to build a state-of-the-art model for a global and accurate land cover map product such as Google’s Dynamic World.

Or because you have so much data to process that you can’t execute it in just one task in Earth Engine (and are trying to figure out hacky ways to export your data).

Whenever you identify with any of these options, you will want to roll up your sleeves and dive into building a custom model, which does require expertise and, of course, working with multiple products. But I have good news: deep learning is a great go-to algorithm.

How to build a model with Google Cloud & Earth Engine?

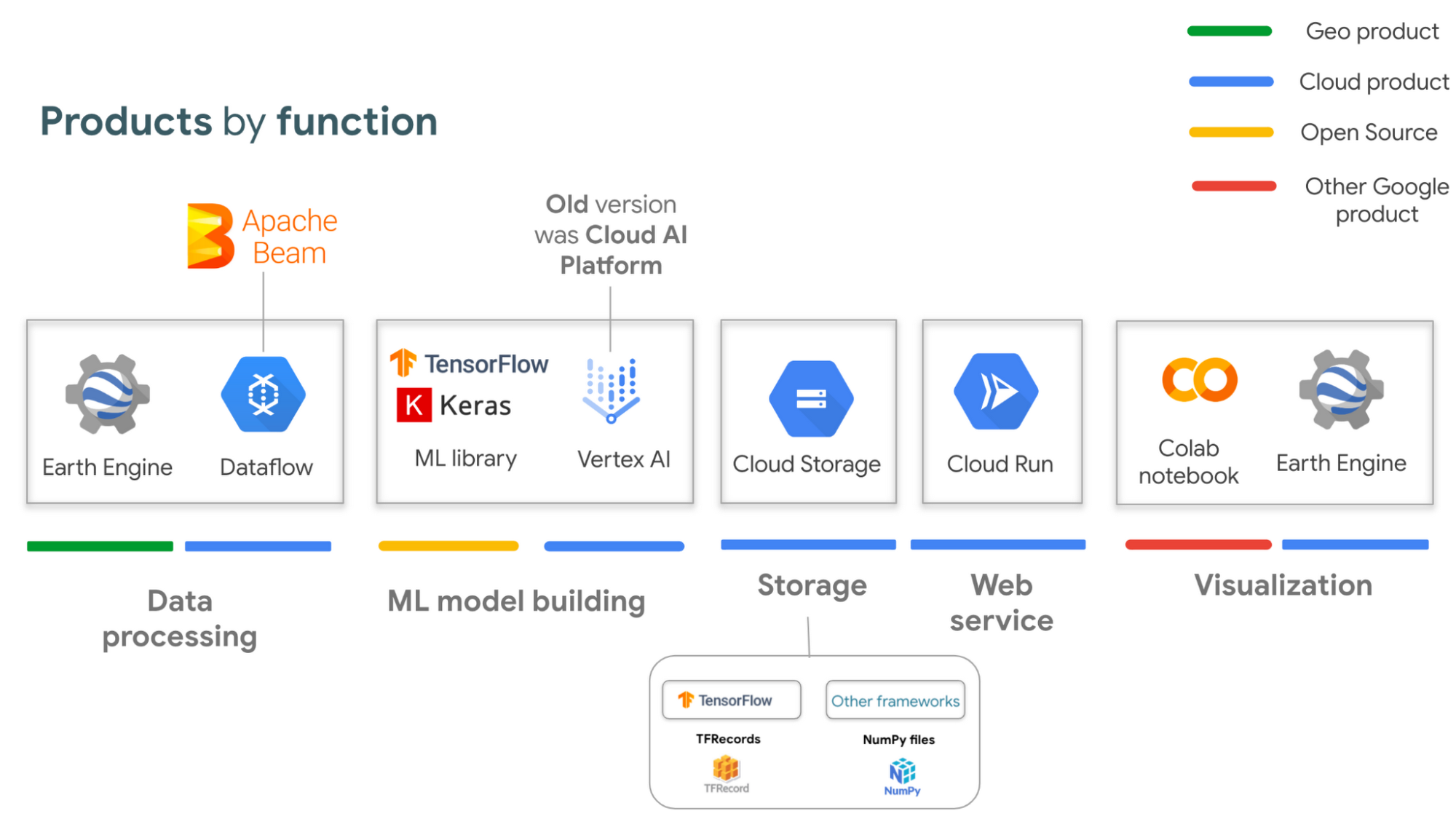

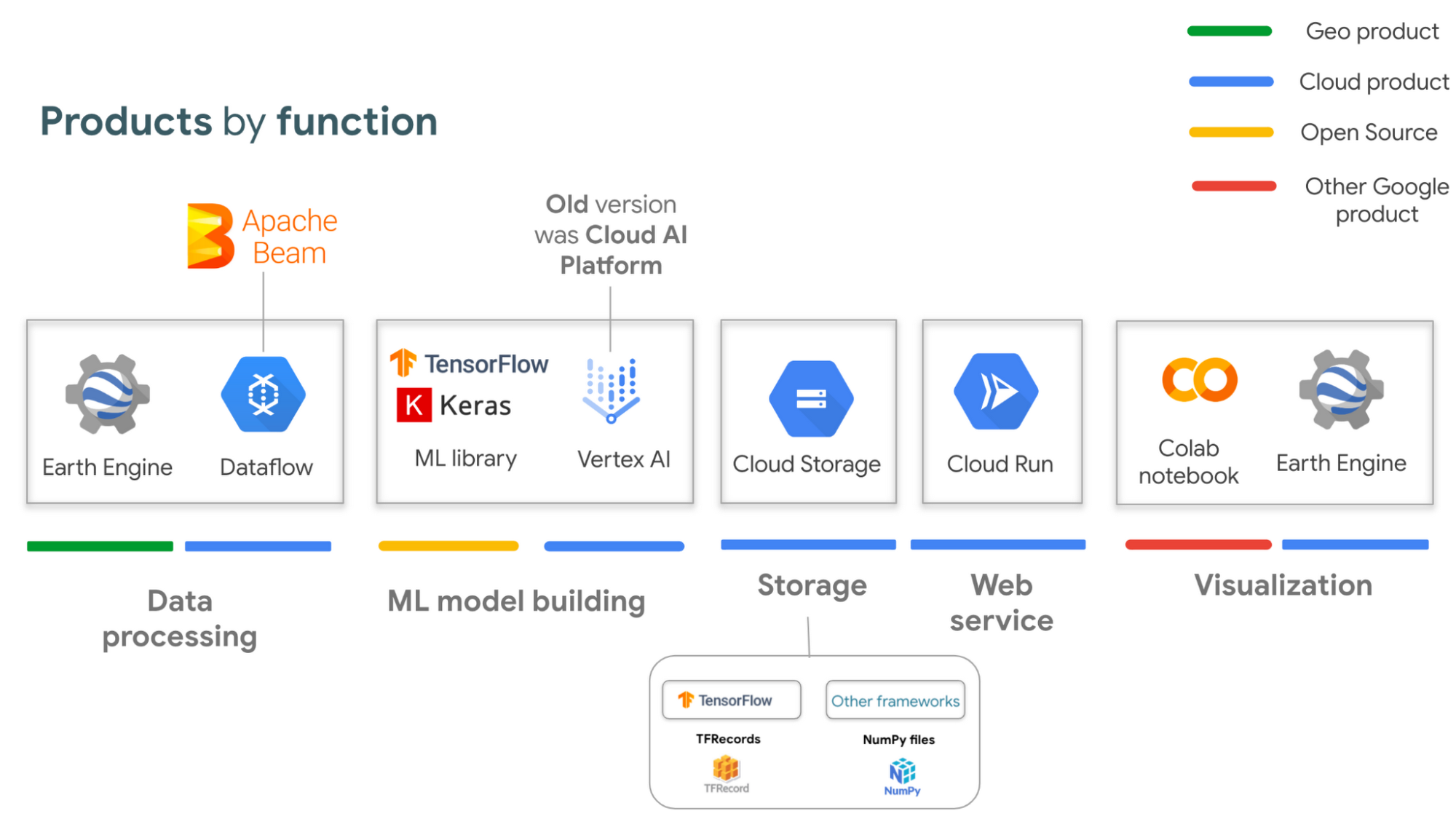

To get started, you will need an account with Google Earth Engine, which is free for non-commercial entities, and a Google Cloud account, which has a free tier for all users who are just getting started. I have broken up the products you would use by function.

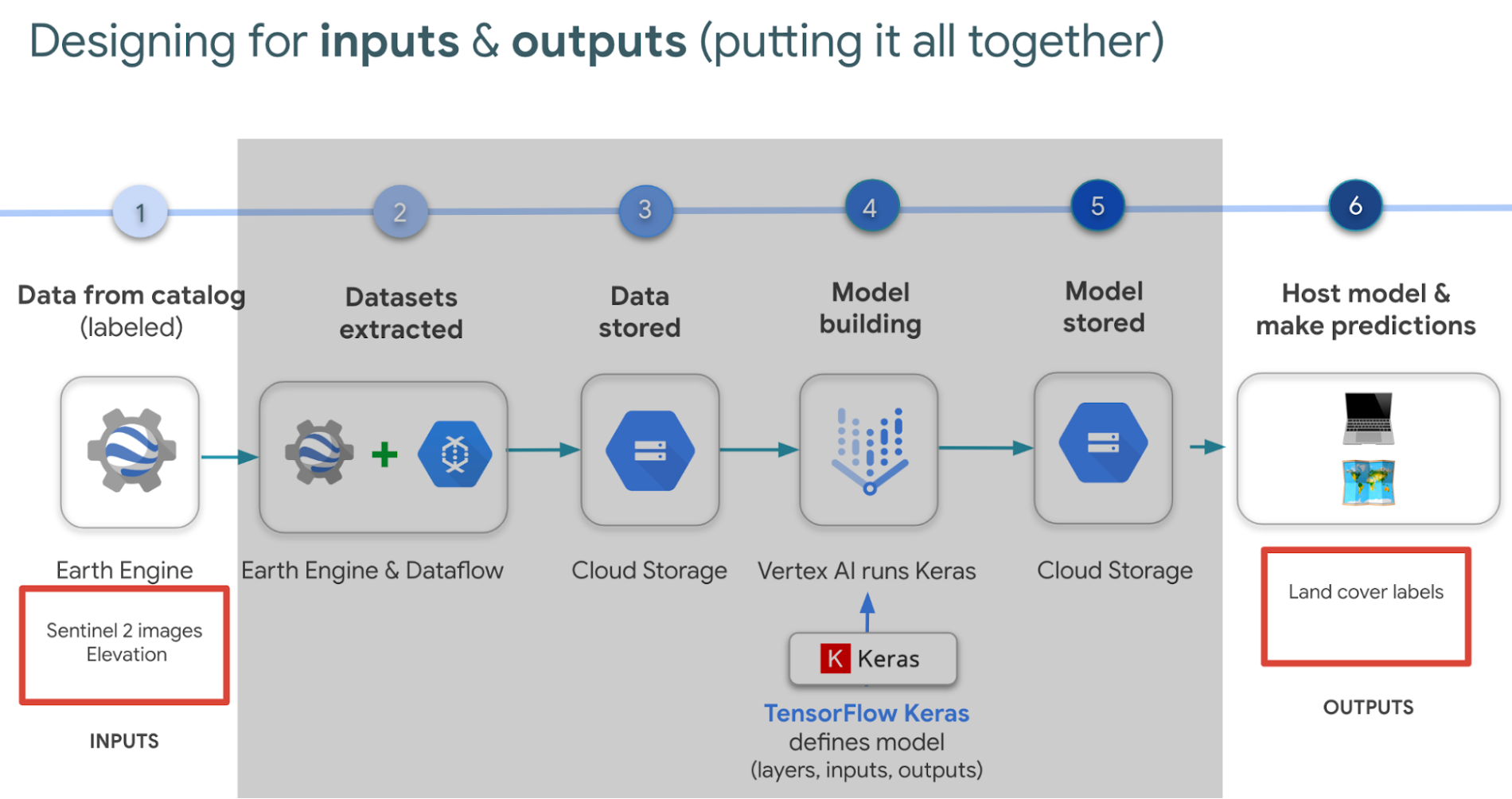

If you are interested in looking deeper into this overview, visit our slides here starting from slide 53 and read the speaker notes. Our code sample also walks through how to integrate with all of these products end to end (just scroll down and click “open in colab”). But here is a quick visual summary. The main place to start is to identify which are the inputs and which are the outputs.

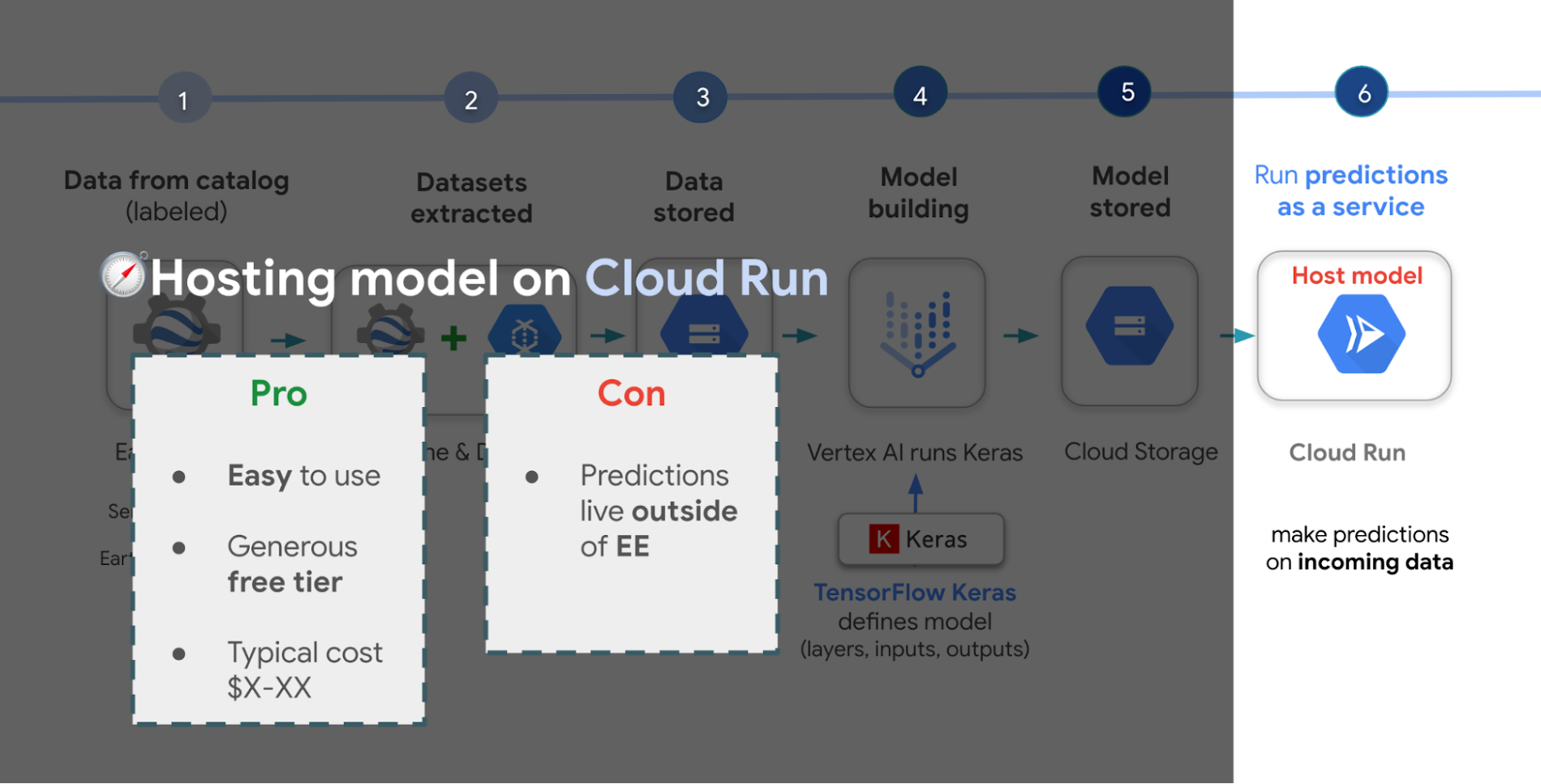

In the latest episodes of our People and Planet AI YouTube series, each less than 10 minutes long, we walk through how to train a model and then host it on a relatively inexpensive web hosting platform called Cloud Run.

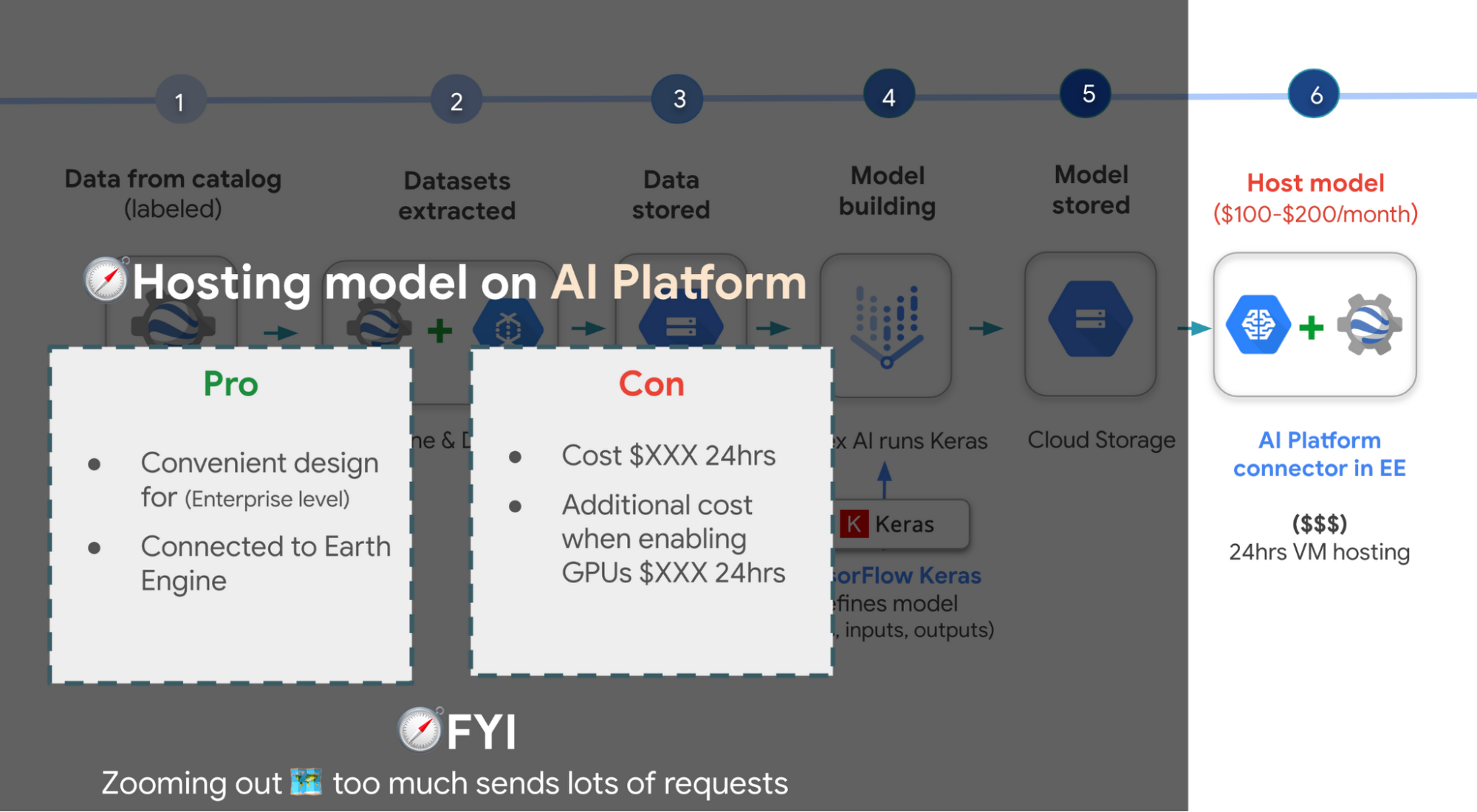

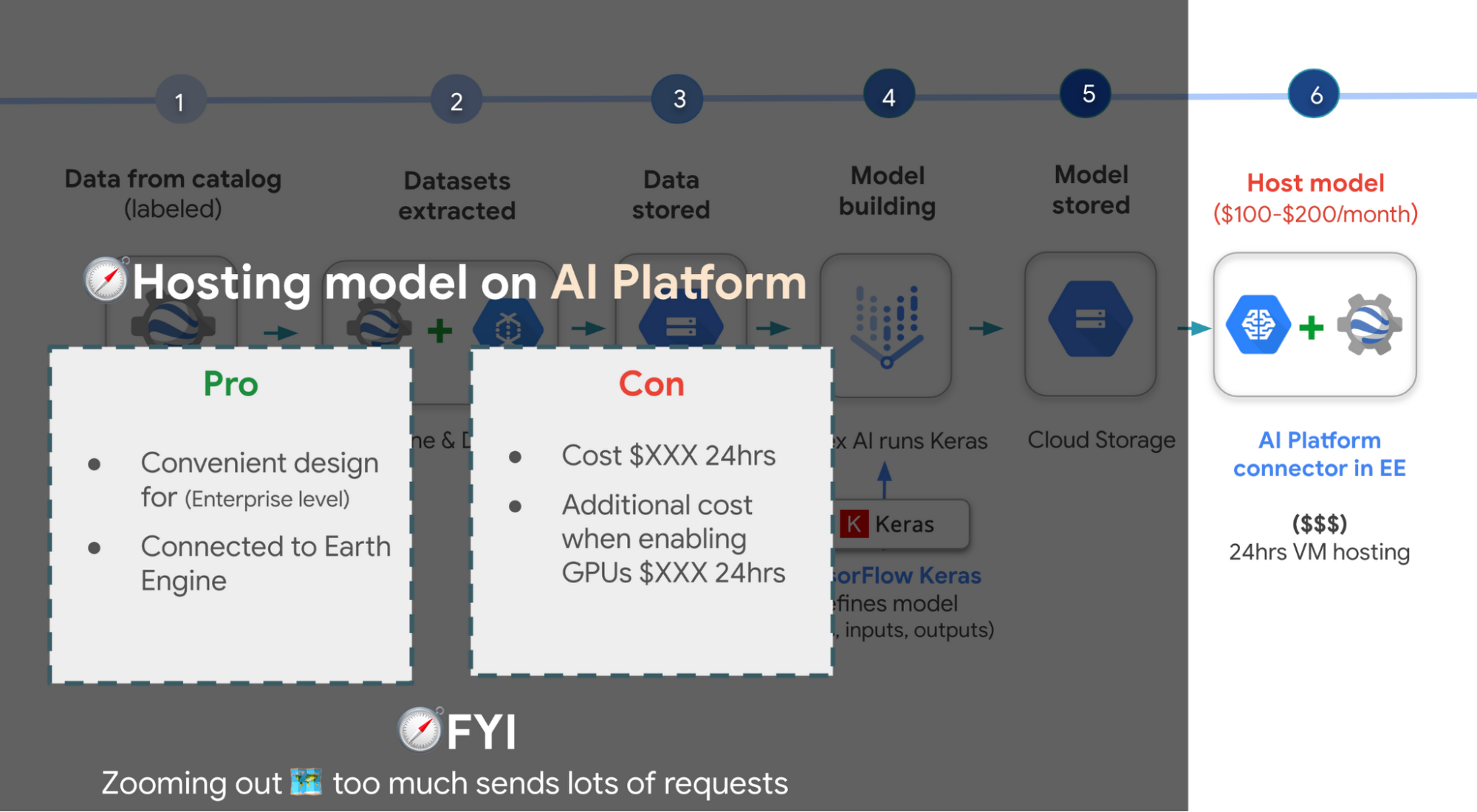

There are a few options presented in the slides; however, the current best practice is to train a model using Vertex AI, which is the recommended ML platform to use moving forward. Do note, though, that Google Earth Engine is currently not integrated with Vertex AI (we are working on this), but it is integrated with its older predecessor, Cloud AI Platform. As such, if you would like to import your model for detecting deforestation back into Earth Engine after training it in Vertex AI, for example, you can host the model in Cloud AI Platform and get predictions. Just note that it’s a paid service that runs 24 hours a day, so it can cost $100 or more a month to host your model to stream predictions. It also currently supports the following model building platforms if you don’t wish to use TensorFlow.

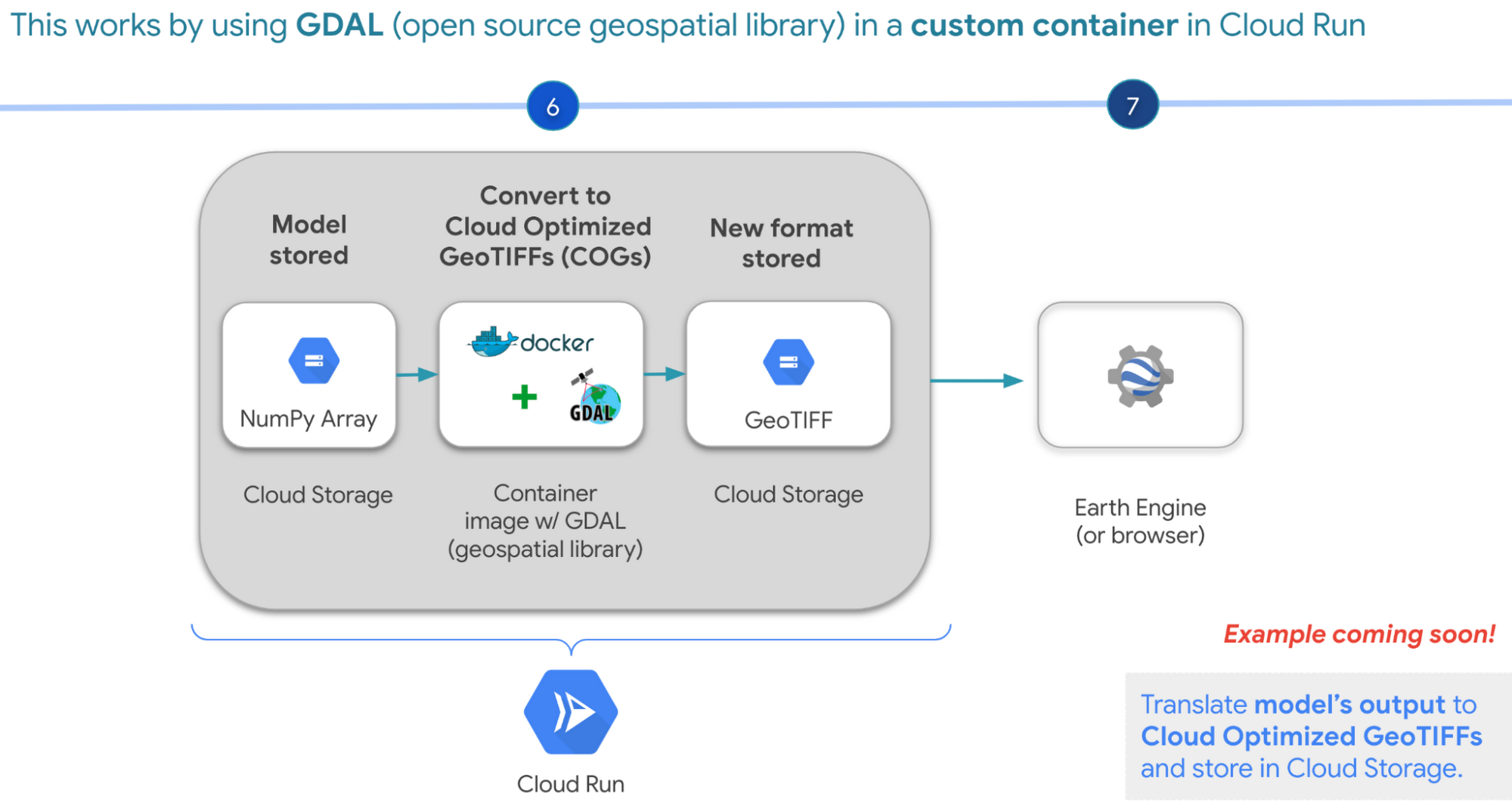

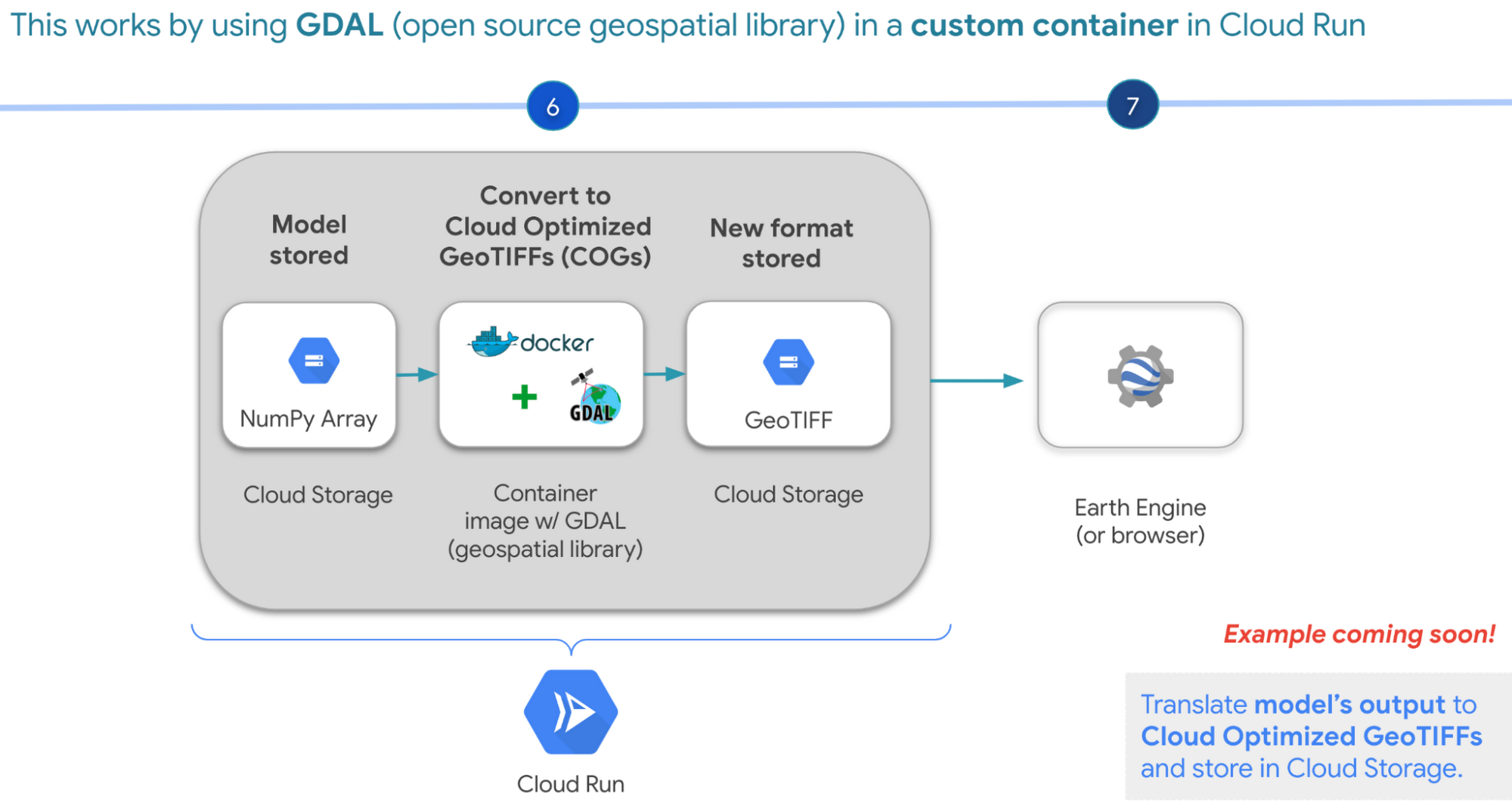

A cheaper alternative, though without the convenience AI Platform offers, is to use Cloud Run to manually translate the model’s output, which is NumPy arrays, into Cloud Optimized GeoTIFFs so you can load it back into Earth Engine. Within this web service you would store the NumPy arrays in a Cloud Storage bucket, then spin up a container image with GDAL, an open source geospatial library, to convert them into Cloud Optimized GeoTIFF files in Cloud Storage. This way you can view predictions from your browser or Earth Engine.
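As a hedged sketch of the final conversion step only, the snippet below builds a `gdal_translate` command that rewrites a GeoTIFF as a Cloud Optimized GeoTIFF. It assumes the predictions have already been written to a regular georeferenced GeoTIFF and that the container has GDAL 3.1 or newer, which ships the `COG` output driver. The file names and helper functions are hypothetical, and this is not necessarily how our sample does it.

```python
import subprocess


def cog_command(source_tiff: str, cog_path: str) -> list:
    """Build the gdal_translate call that rewrites a GeoTIFF as a COG."""
    return [
        "gdal_translate",
        "-of", "COG",                 # output driver, available in GDAL 3.1+
        "-co", "COMPRESS=DEFLATE",    # lossless compression, safe for probabilities
        source_tiff,
        cog_path,
    ]


def convert_to_cog(source_tiff: str, cog_path: str) -> None:
    """Run the conversion; requires the GDAL command line tools on the PATH."""
    subprocess.run(cog_command(source_tiff, cog_path), check=True)


# Hypothetical file names; nothing is executed until convert_to_cog is called.
command = cog_command("predictions.tif", "predictions_cog.tif")
print(" ".join(command))
```

Inside the Cloud Run service you would download the source file from Cloud Storage, call `convert_to_cog`, and upload the result back to the bucket.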

Try it out!

This was a quick overview of deep learning and what Cloud products you can use to solve meaningful environmental challenges like detecting deforestation in extractive supply chains. If you would like to try it out, check out our code sample here (click “open in colab” at the bottom of the screen to view the tutorial in our notebook format or click this shortcut here).