Google Cloud launches from Google I/O 2021

Stephanie Wong

Head of Developer Skills & Community, Google Cloud

And that’s a wrap for Google I/O 2021! Its virtual nature this year surely didn’t stop its viral nature, as we saw over 215,000 registrants, over 235 sessions, 186,565 badges earned, and dozens of workshops and AMAs. Viewers from around the world once again tuned in to learn about the latest launches from Google, including Android, WearOS, Flutter, and TensorFlow. Developers came to sharpen their skills by learning the newest tools, APIs, and improved experiences to help them build.

Google Cloud was a big part of the excitement, unveiling major launches around AI, Google Workspace, and sustainability, including a new unified AI platform, rich collaborative experiences, and breakthroughs in carbon-aware computing. If you couldn’t make it and want a quick list of the best cloud sessions, check out my cloud developer’s guide to I/O. To get to the heart of the Google Cloud announcements from I/O, read on.

Vertex AI

One of the most notable Google Cloud launches was the general availability of Vertex AI. It’s a managed, unified machine learning (ML) platform that allows companies to accelerate the deployment and maintenance of artificial intelligence (AI) models. Google Cloud has been leading with its AI Platform and AutoML products, like AutoML Vision, Tables, and Natural Language, because together they offer an end-to-end ML lifecycle. But we understand that you don’t want separate experiences for the AutoML training path and the custom AI Platform path.

Vertex AI unifies our existing offerings into a single experience for experimentation, versioning, and deploying ML/AI models into production environments. You can seamlessly manage and deploy your models through a new workflow (UI, API, and SDKs) for AI Platform Training, AI Platform Prediction, AutoML Tables, AutoML Vision, AutoML Video Intelligence, AutoML Natural Language, Explainable AI, and Data Labeling. Each of these services is now a feature of Vertex AI, the evolution of AI Platform (unified).

MLOps included

MLOps is an emerging field that is rapidly gaining momentum in the community because it provides an end-to-end machine learning development process for designing, building, and managing reproducible, testable, and evolvable ML-powered software. Vertex AI adds new MLOps features, including:

- Vertex Experiments, which lets you track, analyze, and discover ML experiments for faster model selection.

- Vertex Vizier, which provides optimized values for hyperparameters to maximize models' predictive accuracy.

- Vertex Pipelines, which streamlines building and running ML pipelines to simplify MLOps.

- Explainable AI, which gives you detailed model evaluation metrics and feature attributions so you know how important each input feature is to your prediction.

End-to-end ML lifecycle

You can use Vertex AI to manage the following stages in the ML workflow:

- Define and upload a dataset.

- Train an ML model on your data using either AutoML or custom training on different machine types and GPUs. Evaluate the model and, for custom models, tune hyperparameters.

- Use data labeling jobs that let you request human labeling for custom ML model datasets.

- Upload and store your model in Vertex AI.

- Deploy your trained model and get an endpoint for serving predictions.

- Send prediction requests to your endpoint.

- Specify a prediction traffic split in your endpoint.

- Manage your models and endpoints.
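The traffic split stage can be illustrated with a small sketch. On a Vertex AI endpoint, a traffic split maps each deployed model ID to a percentage of requests, and the percentages must add up to 100. The code below is plain Python, not the Vertex AI SDK: the function names and the model IDs are hypothetical, and it only shows the idea of validating a split and routing requests in proportion to it.

```python
import random
from typing import Dict, Optional


def validate_traffic_split(split: Dict[str, int]) -> None:
    """Raise ValueError unless the shares are 0-100 integers that sum to 100."""
    if not split:
        raise ValueError("traffic split is empty")
    for model_id, pct in split.items():
        if not isinstance(pct, int) or not 0 <= pct <= 100:
            raise ValueError(f"invalid percentage for {model_id}: {pct!r}")
    total = sum(split.values())
    if total != 100:
        raise ValueError(f"percentages sum to {total}, expected 100")


def pick_model(split: Dict[str, int], rng: Optional[random.Random] = None) -> str:
    """Choose a deployed model ID with probability proportional to its share."""
    validate_traffic_split(split)
    rng = rng or random.Random()
    model_ids = list(split)
    weights = [split[model_id] for model_id in model_ids]
    return rng.choices(model_ids, weights=weights, k=1)[0]
```

For example, `pick_model({"model-v1": 90, "model-v2": 10})` returns `"model-v1"` for roughly nine requests out of ten, which is the behavior you would want when gradually rolling out a new model version behind one endpoint.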

To interact with Vertex AI you can use:

- Notebooks prepackaged with JupyterLab and deep learning packages.

- The Google Cloud Console, the UI for working with your ML resources and getting logging and monitoring.

- Cloud client libraries for an optimized developer experience for a set of languages, or the Google API Client Libraries to access the Vertex AI API by using other languages like Dart.

- The REST API for managing jobs, models, endpoints, and predictions.
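As a rough sketch of the REST option, the snippet below builds (but does not send) an online prediction request for an endpoint. The URL shape follows the Vertex AI v1 `endpoints.predict` method; the project, location, endpoint ID, and instance fields are placeholders, and the helper name `build_predict_request` is made up for this example.

```python
import json
from typing import Any, Dict, List, Tuple


def build_predict_request(
    project: str,
    location: str,
    endpoint_id: str,
    instances: List[Dict[str, Any]],
) -> Tuple[str, str]:
    """Return the URL and JSON body for an online prediction request."""
    url = (
        f"https://{location}-aiplatform.googleapis.com/v1/"
        f"projects/{project}/locations/{location}/"
        f"endpoints/{endpoint_id}:predict"
    )
    # The instance schema depends on the deployed model; this is a placeholder.
    body = json.dumps({"instances": instances})
    return url, body
```

To call the API, you would POST the body to the URL with an `Authorization: Bearer <token>` header, where the token is an OAuth 2.0 access token (for local testing, `gcloud auth print-access-token` prints one).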

You get one experience and interface for both AutoML and custom models, with a shared infrastructure, data modeling, UX, and API layer, so you can move quickly between data science and production. You get both the notebook-based development version and a hosted cloud production version. For ML novices, AutoML gives you explainability and automatic provisioning; as you become more experienced, you can manage constraints and make your own fine-grained decisions using the rest of Vertex AI, all from one place.

Where to get started

Listen to the GCP Podcast, where I invite our AI executives to talk about responsible AI and how you can use Vertex AI to implement more inclusive and accurate ML practices. Check out the docs for Vertex AI tutorials on training image data, doing custom training, and bringing in structured data, and get code samples for Python, Java, Node.js and more. And join us for the digital Applied ML Summit at g.co/appliedmlsummit on June 10th for Vertex AI technical tutorials and interactive sessions with leading innovators and Kaggle Grandmasters.

Google Workspace

Smart canvas

Google Workspace announced smart canvas—a new product experience that delivers the next evolution of collaboration for Google Workspace. You’ll see enhancements throughout the rest of the year for apps like Docs, Sheets, and Slides—to make them more flexible, interactive, and intelligent.

Feature highlights

- New interactive building blocks (smart chips, templates, and checklists) will connect people, content, and events into one seamless experience.

- When you @ mention a person in a document, a smart chip shows you additional information like the person’s location, job title, and contact information.

- Checklists are available on web and mobile, and you’ll soon be able to assign checklist action items to other people, which show up in Google Tasks.

- More assisted analysis functionality in our Sheets web experience, with formula suggestions that make it easier for everyone to derive insights from data.

- Present your content to a Google Meet call on the web directly from the Doc, Sheet, or Slide where you’re already working with your team.

- Live translations of captions will be available in Google Meet in five languages.

- Additional control over the Meet experience, including more space to see people and content, plus the ability to pin and unpin content and video feeds. And to help with meeting fatigue, you can now turn off your self-feed entirely.

Integrations

With the recent GA release of AppSheet Automation, you can integrate Google Workspace data sources. Looking ahead, the team is working on additional APIs so you can bring information from third-party tools directly into smart canvas elements like smart chips, checklists, and table templates.

Google Workspace Security

To give admins the controls and capabilities they need to protect their users and organizations against security threats and abuse, Google Workspace introduced new advanced security features.

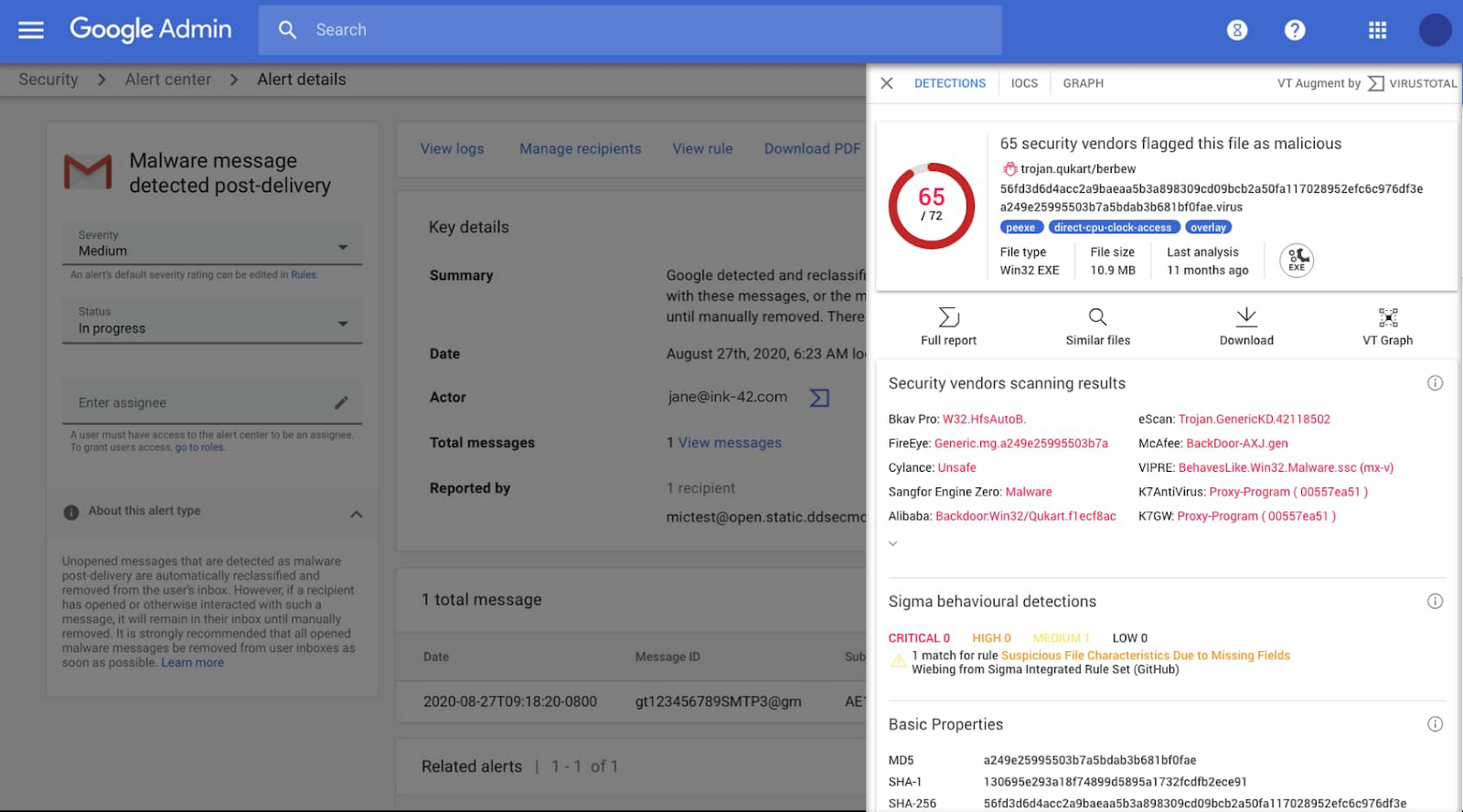

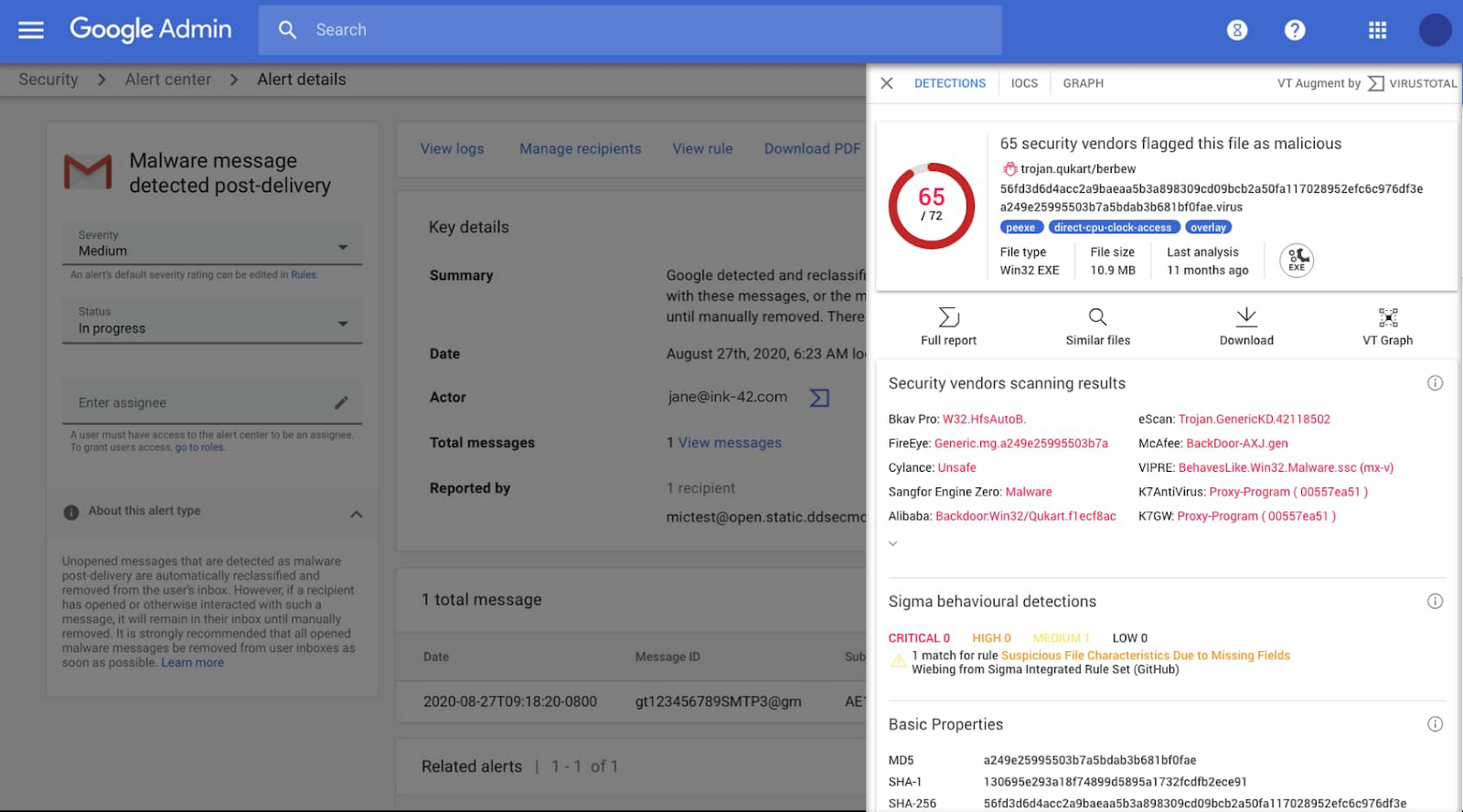

Alert Center

Google Workspace’s Alert Center gives you actionable, real-time alerts and insights about security-related activity in your domain. Now the Alert Center is enriched with VirusTotal threat context and reputation data.

You get a unified view of critical alerts through:

- Indicators of compromise: See threat relationships with other artifacts to map out threat campaigns and pinpoint malicious network infrastructure.

- Threat graph: Visualize threat relationships graphically to make quick and accurate determinations for any alerts you study.

- Multi-angular detections: Get enhanced reputation information via crowdsourcing of YARA, SIGMA, and intrusion detection system rules.

Restricting access to Google Workspace resources

Admins also get enhancements for restricting Google Workspace resource access:

- Block all OAuth 2.0 API access with app access control

- New context-aware access for Google mobile and desktop apps

- Block all third-party API access to Google Workspace and end-user data

As an admin, you can choose to trust, limit, or block access to Google Workspace data to keep users and organizations safe from abuse and security threats. Check out the Google Cloud Security Talks for our breakdowns of new security features and research projects. Read the official blog post to learn about additional features in the new Workspace security bundle.

Sustainability

This was a big year for sustainability at Google. Back in 2007, we were the first major company to become carbon-neutral, and we’ve been matching 100% of our annual electricity use with renewable energy purchases since 2017. And now, we’re building on our progress with a new goal: By 2030, we plan to completely decarbonize our electricity use for every hour of every day. One way we can do this is by adjusting our operations in real time so that we get the most out of the clean energy that’s already available.

Carbon-aware computing

Our newest milestone in carbon-intelligent computing means Google can now shift moveable compute tasks between different data centers, based on regional hourly carbon-free energy availability.

Shifting compute tasks across locations is a logical progression of our first step in carbon-aware computing, which was to shift compute across time. By enabling our data centers to shift flexible tasks to different times of the day, we were able to use more electricity when carbon-free energy sources like solar and wind are plentiful. Now, we’re also able to shift more electricity use to where carbon-free energy is available.

How it works

The amount of computing going on at any given data center varies across the world, increasing or decreasing throughout the day. Our carbon-intelligent platform uses day-ahead predictions of how heavily a given grid will be relying on carbon-intensive energy in order to shift computing across the globe, favoring regions where there’s more carbon-free electricity.
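To make the idea concrete, here is a toy sketch of day-ahead shifting. It is not Google's carbon-intelligent platform: the function name, the region names, and the single capacity number are all assumptions made for illustration. Given a day-ahead forecast of grid carbon intensity per region and hour, it greedily places flexible jobs in the cleanest slots that still have room.

```python
from typing import Dict, List, Tuple

Slot = Tuple[str, int]  # (region, hour index in the forecast)


def schedule_flexible_jobs(
    forecast: Dict[str, List[float]],
    jobs: List[str],
    capacity_per_slot: int,
) -> Dict[str, Slot]:
    """Greedily place each job in the cleanest (region, hour) slot with room left.

    `forecast` maps a region to its predicted carbon intensity for each hour
    (lower is cleaner). All values used with this sketch are hypothetical.
    """
    # Sort every (region, hour) slot from cleanest to dirtiest.
    slots = sorted(
        (intensity, region, hour)
        for region, hours in forecast.items()
        for hour, intensity in enumerate(hours)
    )
    if len(jobs) > len(slots) * capacity_per_slot:
        raise ValueError("not enough capacity for all jobs")

    placement: Dict[str, Slot] = {}
    remaining = iter(jobs)
    for _intensity, region, hour in slots:
        for _ in range(capacity_per_slot):
            job = next(remaining, None)
            if job is None:
                return placement  # every job has been placed
            placement[job] = (region, hour)
    return placement
```

A real scheduler would also have to account for deadlines, data locality, and the cost of moving work, which this sketch deliberately ignores.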

What this means for you

Google's global carbon-intelligent computing platform will increasingly reserve and use hourly compute capacity on the cleanest grids available worldwide for compute jobs, starting with our multimedia processing efforts like YouTube uploads, Photos, and Drive.

As a Google Cloud developer, you can prioritize cleaner grids and maximize the proportion of carbon-free energy that powers your apps by choosing regions based on their carbon-free energy (CFE) scores.
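One simple way to apply this is to pick, among the regions that meet your latency and compliance needs, the one with the highest CFE score. The sketch below assumes you have copied the published CFE percentages into a dictionary yourself; the function name and the region names in the usage example are placeholders, not real regions or scores.

```python
from typing import Dict, Iterable, Optional


def pick_cleanest_region(
    cfe_percent: Dict[str, float],
    allowed: Optional[Iterable[str]] = None,
) -> str:
    """Return the allowed region with the highest CFE percentage."""
    if allowed is None:
        candidates = set(cfe_percent)
    else:
        # Only consider allowed regions that actually have a score.
        candidates = set(allowed) & set(cfe_percent)
    if not candidates:
        raise ValueError("no candidate region has a CFE score")
    # Sorting first makes ties resolve to the alphabetically first region.
    return max(sorted(candidates), key=lambda region: cfe_percent[region])
```

For instance, with hypothetical scores `{"region-a": 54.0, "region-b": 91.0, "region-c": 77.0}` the function returns `"region-b"`, and restricting `allowed` to `["region-a", "region-c"]` returns `"region-c"`.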

To learn more, tune in to the livestream of our carbon-aware computing workshop on June 17 at 8:00 a.m. PT. And for more information on our journey towards 24/7 carbon-free energy by 2030, read CEO Sundar Pichai’s latest blog.

Next-generation geothermal technology

As part of achieving our goal of running our operations on carbon-free energy around the clock, we announced a first-of-its-kind next-generation geothermal power project. As an “always on” carbon-free resource, it will soon begin adding carbon-free energy to the electric grid that serves our data centers and infrastructure throughout Nevada, including our Cloud region in Las Vegas.

Google is partnering with Fervo to develop AI and machine learning that could boost the productivity of next-generation geothermal as a renewable energy source. By using advanced drilling, fiber-optic sensing, and analytics techniques, next-generation geothermal can unlock an entirely new class of resource. The partnership will make geothermal more effective at responding to demand, while also filling in the gaps left by variable renewable energy sources.

How it works

Using fiber-optic cables inside wells, Fervo can gather real-time data on flow, temperature, and performance of the geothermal resource. This data allows Fervo to identify precisely where the best resources exist, making it possible to control flow at various depths. Coupled with AI and machine learning development, these capabilities can increase productivity and unlock flexible geothermal power in a range of new places.

What this means for you

This project brings our data centers and cloud region in Nevada closer to round-the-clock clean energy and sets the stage for next-generation geothermal to play a role as a firm and flexible carbon-free energy source that can increasingly replace carbon-emitting fossil fuels. As we increase the carbon-free energy percentages for our Google Cloud regions, you can directly leverage these advancements to meet your own organizational sustainability goals.

Phew - and those were just the Cloud announcement highlights from Google I/O 2021. We saw exciting launches around developer-centric and unified ML experiences with Vertex AI, a multitude of more flexible and secure Workspace features, and incredible progress towards a carbon-free future at our data centers. There were many more key moments that I encourage you to view on demand, including AI and serverless demo derbies, full stack development on Cloud Run, and workshops on how to solve everyday problems using machine learning. Check out my blog post to learn more and the Google I/O site for access to sessions, AMAs, and more.

Got thoughts about the latest Google Cloud launches? Connect with me online @stephr_wong.