Introducing automated failover for private workloads using Cloud DNS routing policies with health checks

Truptesh Nagesh

Network Specialist, Google Cloud

Paarth Mahajan

Google Cloud Network Specialist

High availability is an important consideration for many customers, and we’re happy to introduce health checking for private workloads in Cloud DNS to help build business continuity/disaster recovery (BC/DR) architectures. Typical BC/DR architectures are built using multi-regional deployments on Google Cloud. In a previous blog post, we showed how highly available global applications can be published using Cloud DNS routing policies. The globally distributed, policy-based DNS configuration provided reliability, but in case of a failure, it required manual intervention to update the geo-location policy configuration. In this blog, we will use Cloud DNS health check support for Internal Load Balancers to automatically fail over to healthy instances.

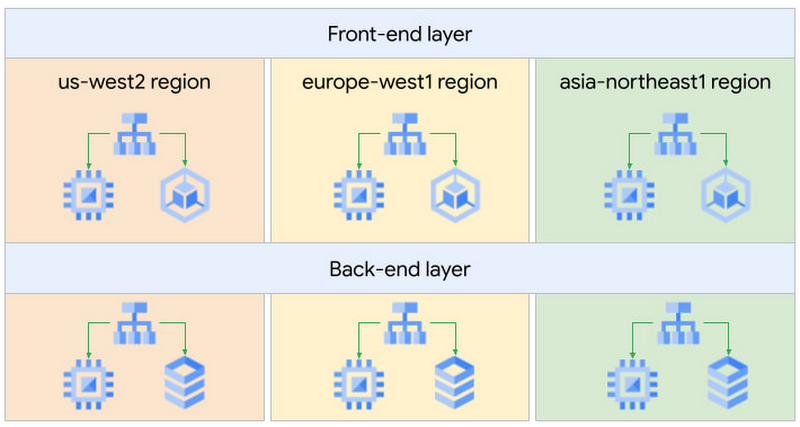

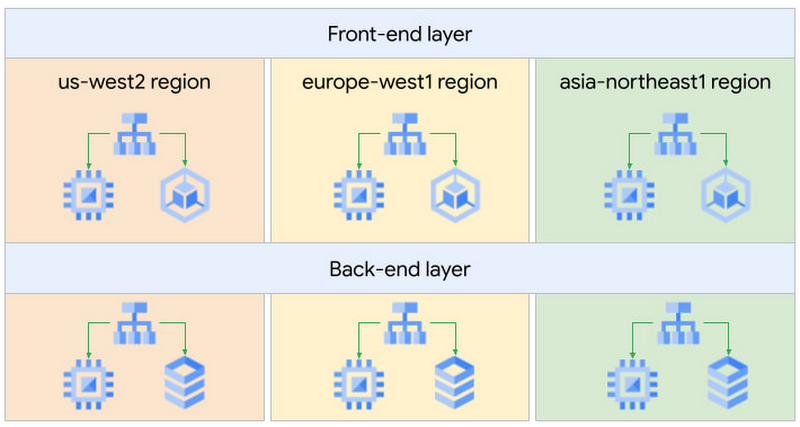

We will use the same setup we used in the previous blog. We have an internal knowledge-sharing web application. It uses a classic two-tier architecture: front-end servers that serve web requests from our engineers, and back-end servers that hold the data for our application.

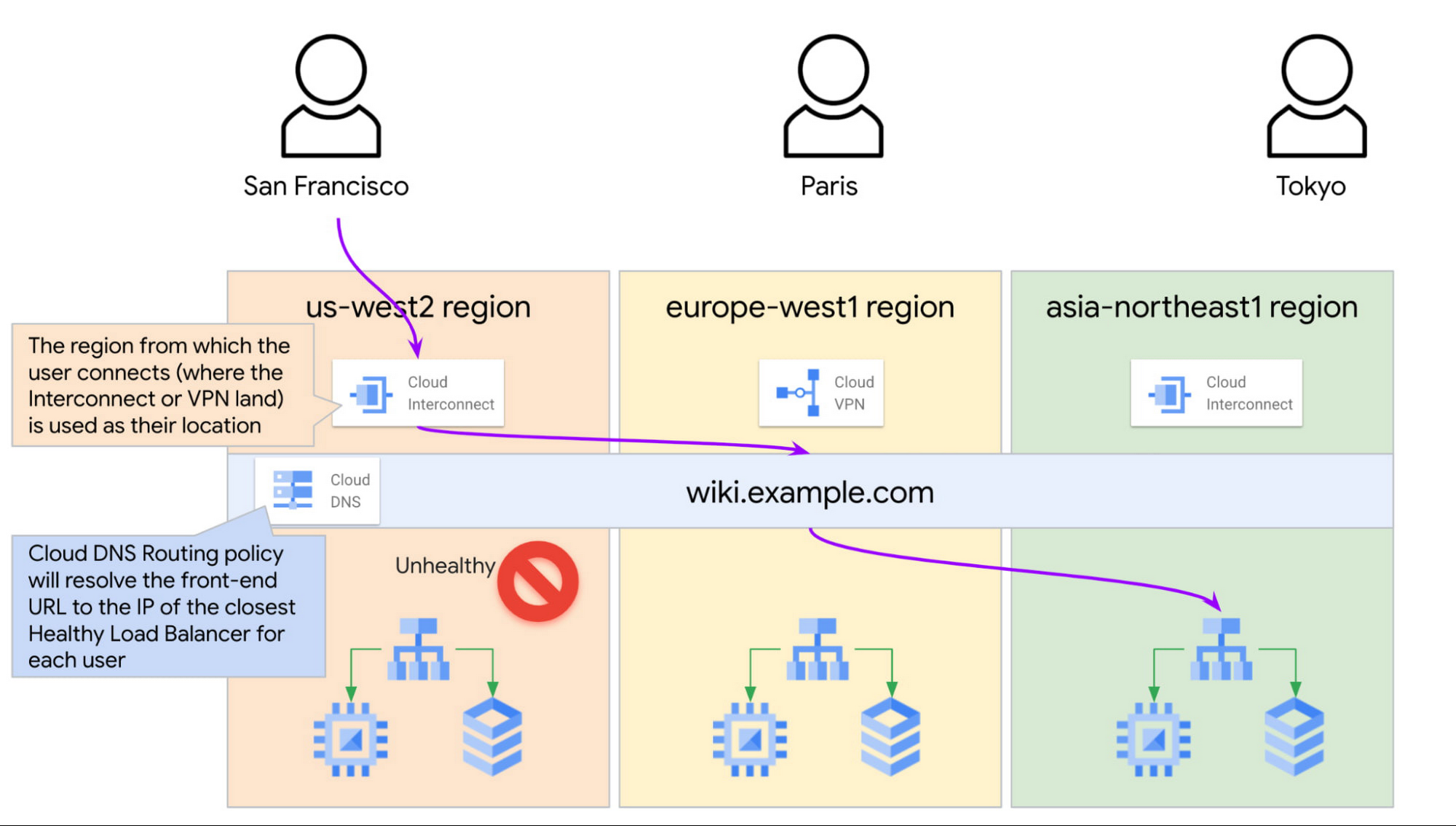

Our San Francisco, Paris, and Tokyo engineers will use this application, so we decided to deploy our servers in three Google Cloud regions for lower latency, better performance, and lower cost.

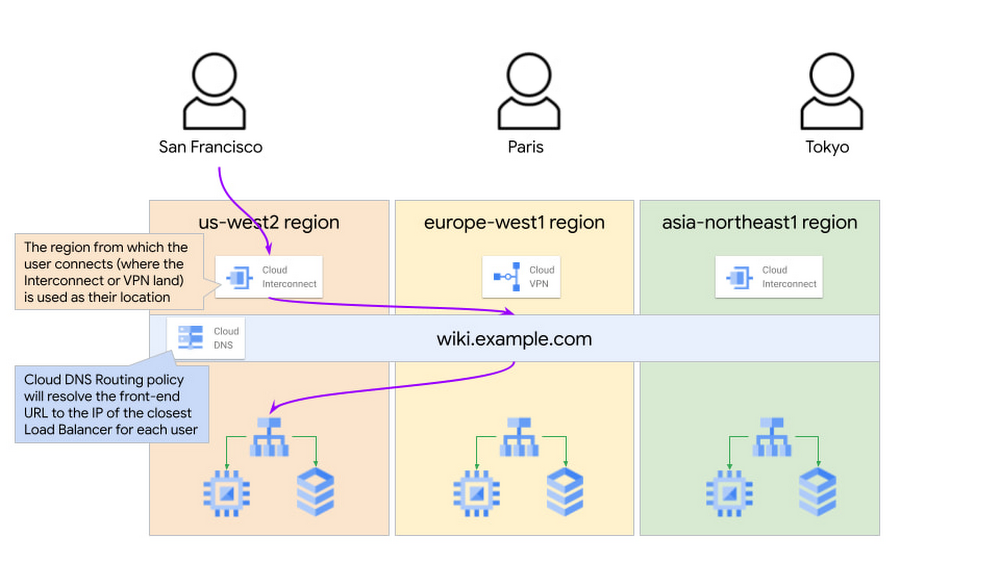

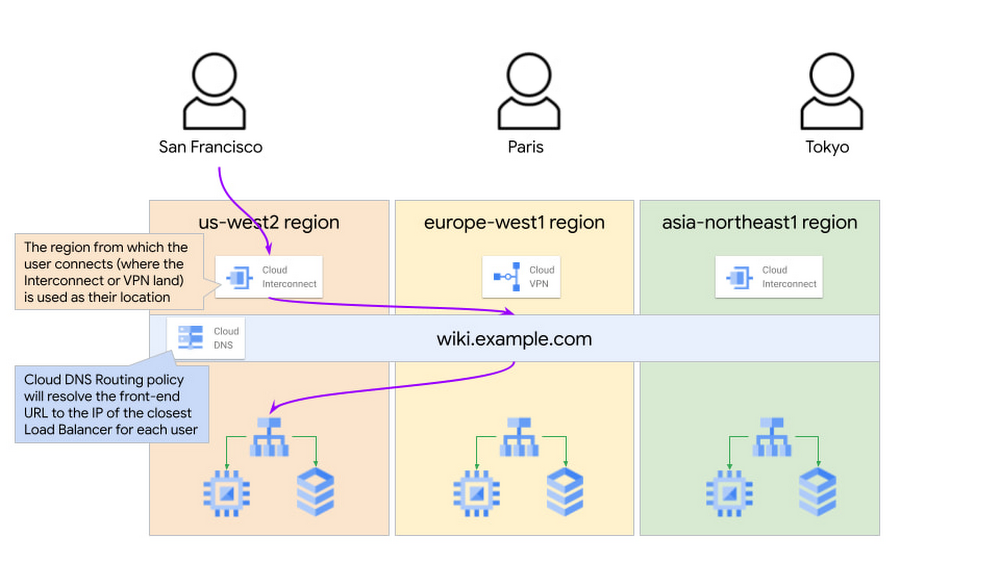

The wiki application is accessible in each region via an Internal Load Balancer (ILB). Engineers use the domain name wiki.example.com to connect to the front-end web app over Interconnect or VPN. The geo-location policy will use the Google Cloud region where the Interconnect or VPN lands as the source for the traffic and look for the closest available endpoint.

With the above setup, if our application in one of the regions goes down, we have to manually update the geo-location policy and remove the affected region from the configuration. Until someone detects the failure and updates the policy, the end users close to that region will not be able to reach the application. Not a great user experience. How can we design this better?

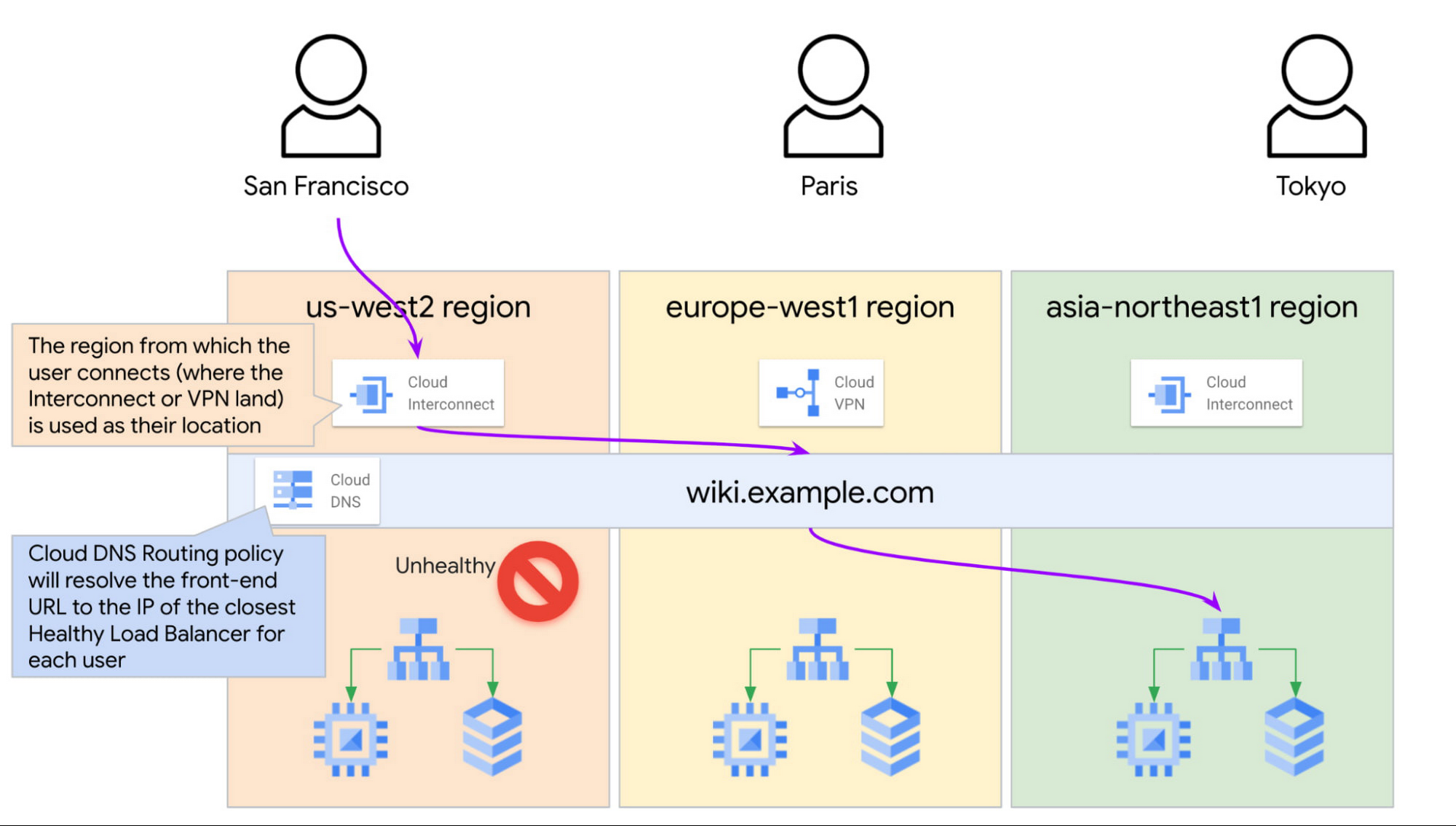

Google Cloud is introducing Cloud DNS health check support for Internal Load Balancers. For an internal TCP/UDP load balancer, we can use the existing health checks for a back-end service, and Cloud DNS will receive direct health signals from the individual back-end instances. This enables automatic failover when the endpoints fail their health checks.

For example, if the US front-end service is unhealthy, Cloud DNS may, depending on latency, return the load balancer IP of the next closest region (in our example, Tokyo’s) to the San Francisco clients.
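To make the failover behavior concrete, here is a minimal toy model of health-aware geo-location routing. It is a sketch, not Cloud DNS's actual algorithm: the region names, IP addresses, and latency figures are made-up assumptions, and returning `None` when every endpoint is down is a simplification of this model.

```python
# Toy model of geo-location routing with health checks.
# Region names, IPs, and latencies are illustrative assumptions only.

# Hypothetical ILB IP address per region.
ENDPOINTS = {
    "us-west1": "10.0.1.10",
    "europe-west1": "10.0.2.10",
    "asia-northeast1": "10.0.3.10",
}

# Assumed round-trip latency (ms) from a source region to each endpoint region.
LATENCY_MS = {
    "us-west1": {"us-west1": 5, "asia-northeast1": 100, "europe-west1": 140},
    "europe-west1": {"europe-west1": 5, "us-west1": 140, "asia-northeast1": 220},
    "asia-northeast1": {"asia-northeast1": 5, "us-west1": 100, "europe-west1": 220},
}


def resolve(source_region, healthy):
    """Return the IP of the lowest-latency healthy endpoint, or None if none is healthy."""
    candidates = [region for region in ENDPOINTS if healthy.get(region, False)]
    if not candidates:
        return None
    best = min(candidates, key=lambda region: LATENCY_MS[source_region][region])
    return ENDPOINTS[best]


all_up = {region: True for region in ENDPOINTS}
us_down = dict(all_up, **{"us-west1": False})

print(resolve("us-west1", all_up))   # local endpoint while everything is healthy
print(resolve("us-west1", us_down))  # next closest healthy region after a failure
```

With the assumed latencies, a query arriving in the US region is answered with the Tokyo endpoint once the US endpoint is marked unhealthy, which mirrors the example above.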

Enabling the health checks for the wiki.example.com record provides us with automatic failover in case of a failure and ensures that Cloud DNS always returns only the healthy endpoints in response to the client queries. This removes manual intervention and significantly improves the failover time.

The Cloud DNS routing policy configuration would look like this:

Creating the Cloud DNS managed zone:
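As a minimal sketch, the `gcloud dns managed-zones create` command for a private zone could be assembled as follows. The zone name, VPC network name, and description are hypothetical placeholders, not values from our deployment.

```python
import shlex


def managed_zone_command(zone_name, dns_name, network, description):
    """Build the gcloud argv for creating a private Cloud DNS managed zone."""
    return [
        "gcloud", "dns", "managed-zones", "create", zone_name,
        f"--description={description}",
        f"--dns-name={dns_name}",
        "--visibility=private",
        f"--networks={network}",
    ]


# Hypothetical names: replace with your own zone, domain, and VPC network.
zone_argv = managed_zone_command(
    zone_name="wiki-private-zone",
    dns_name="wiki.example.com.",
    network="wiki-vpc",
    description="Private zone for the wiki application",
)

# Print a copy-pasteable shell command instead of executing it.
print(shlex.join(zone_argv))
```

Printing the command rather than running it keeps the sketch safe to try without touching a real project.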

Creating the Cloud DNS Record set:
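As a minimal sketch, a geo-location record set with health checking enabled could be assembled like this. The forwarding rule names, regions, zone name, and TTL are hypothetical placeholders. Because health checking requires forwarding rule names, the helper rejects IP address literals.

```python
import ipaddress
import shlex


def is_ip_literal(value):
    """Return True if value parses as an IPv4 or IPv6 address."""
    try:
        ipaddress.ip_address(value)
        return True
    except ValueError:
        return False


def geo_record_set_command(record_name, zone_name, targets, ttl=30):
    """Build the gcloud argv for a geo-routed A record with health checking.

    targets maps a Google Cloud region to an ILB forwarding rule name.
    """
    for region, rule in targets.items():
        if is_ip_literal(rule):
            raise ValueError(
                f"{region}: use a forwarding rule name, not an IP address ({rule})"
            )
    policy_data = ";".join(f"{region}={rule}" for region, rule in targets.items())
    return [
        "gcloud", "dns", "record-sets", "create", record_name,
        f"--zone={zone_name}",
        "--type=A",
        f"--ttl={ttl}",
        "--routing-policy-type=GEO",
        f"--routing-policy-data={policy_data}",
        "--enable-health-checking",
    ]


# Hypothetical forwarding rule names, one per region.
record_argv = geo_record_set_command(
    record_name="wiki.example.com.",
    zone_name="wiki-private-zone",
    targets={
        "us-west1": "wiki-ilb-us",
        "europe-west1": "wiki-ilb-eu",
        "asia-northeast1": "wiki-ilb-asia",
    },
)

# Print a copy-pasteable shell command instead of executing it.
print(shlex.join(record_argv))
```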

For health checking to work, we need to reference the ILB using the ILB forwarding rule name. If we use the ILB IP instead, then Cloud DNS will not check the health of the endpoint.

See the official documentation page for more information on how to configure Cloud DNS routing policies with health checks.

Note: Cloud DNS uses the health checks configured on the load balancers themselves. Users do not need to configure any additional health checks for Cloud DNS. See the official documentation page for information on how to create health checks for GCP Load Balancers.

With this configuration, if we were to lose the application in one region due to an incident, the health checks on the ILB would fail, and Cloud DNS would automatically resolve new user queries to the next closest healthy endpoint.

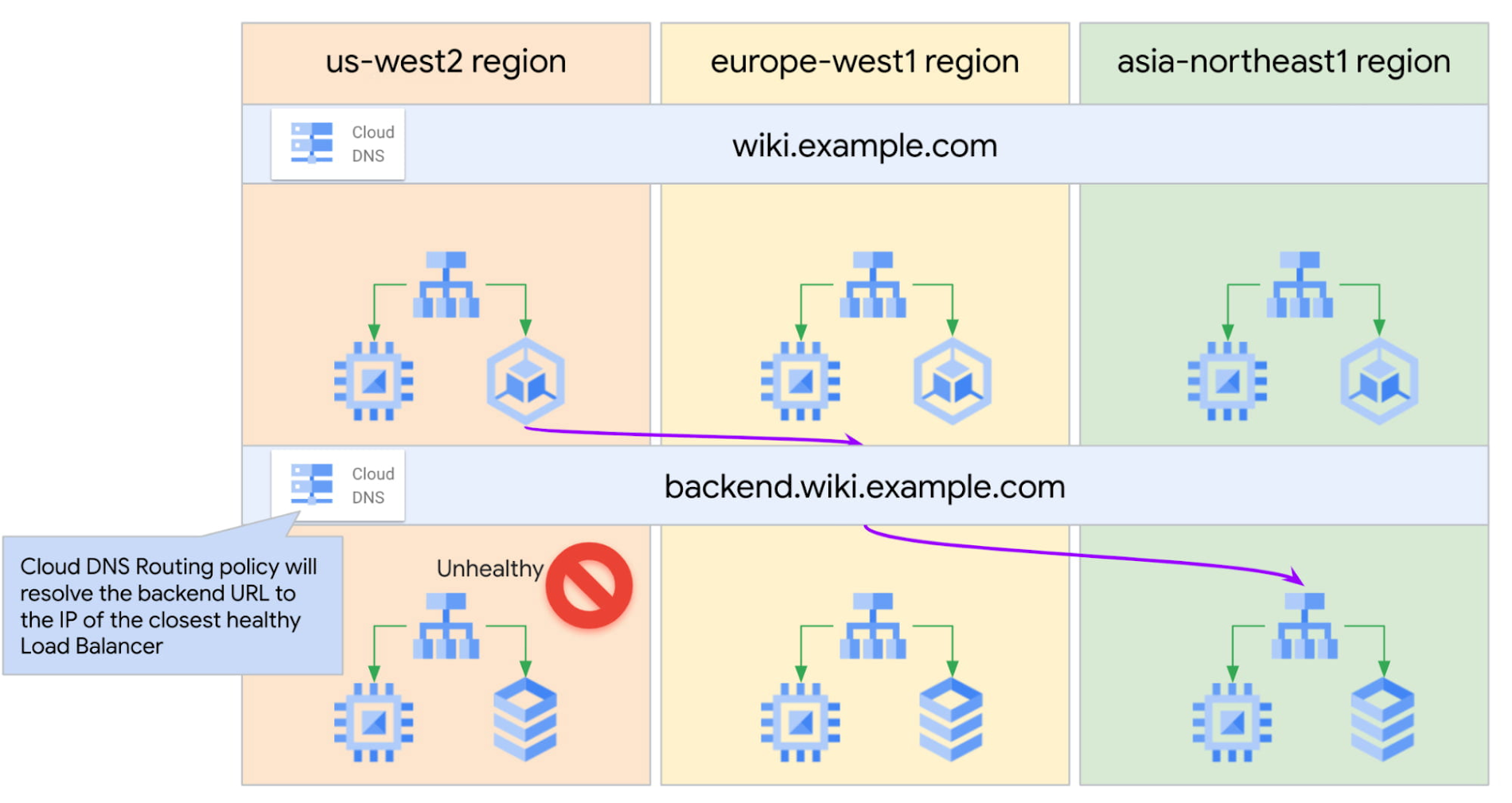

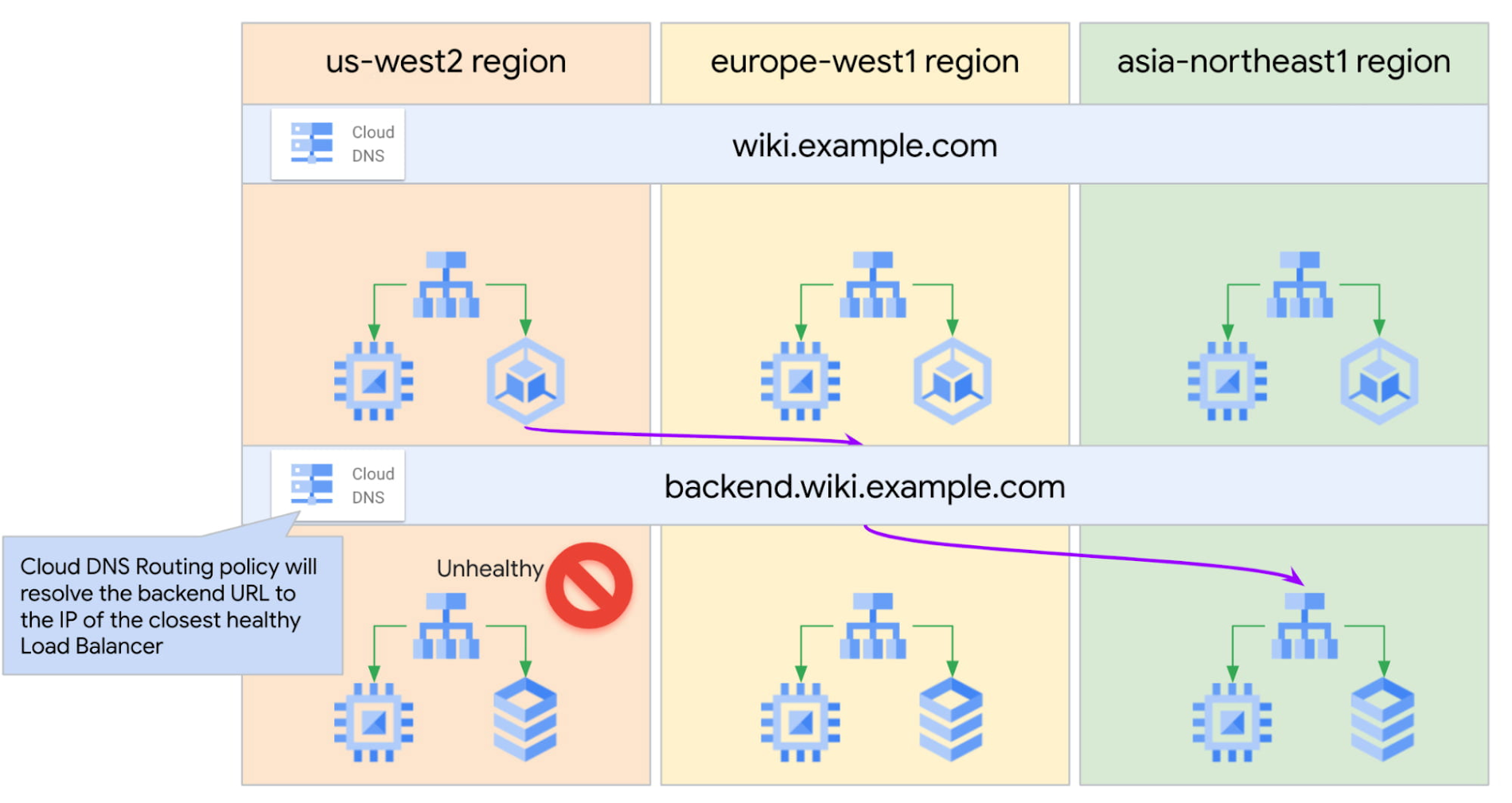

We can expand this configuration to ensure that front-end servers send traffic only to healthy back-end servers in the region closest to them.

We would configure front-end servers to connect to the global hostname backend.wiki.example.com. The Cloud DNS geo-location policy with health checks will use the front-end servers’ GCP region information to resolve this hostname to the closest available healthy back-end tier Internal Load Balancer.

Putting it all together, we have now set up our multi-regional, multi-tiered application with DNS policies that automatically fail over to the healthy endpoint closest to the end user.