How to publish applications to users globally with Cloud DNS routing policies

Aurélien Legrand

Strategic Cloud Engineer

Karthik Balakrishnan

Cloud DNS Product Manager

When building applications that are critical to your business, one key consideration is always high availability. In Google Cloud, we recommend building your strategic applications on a multi-regional architecture. In this article, we will see how Cloud DNS routing policies can help simplify your multi-regional design.

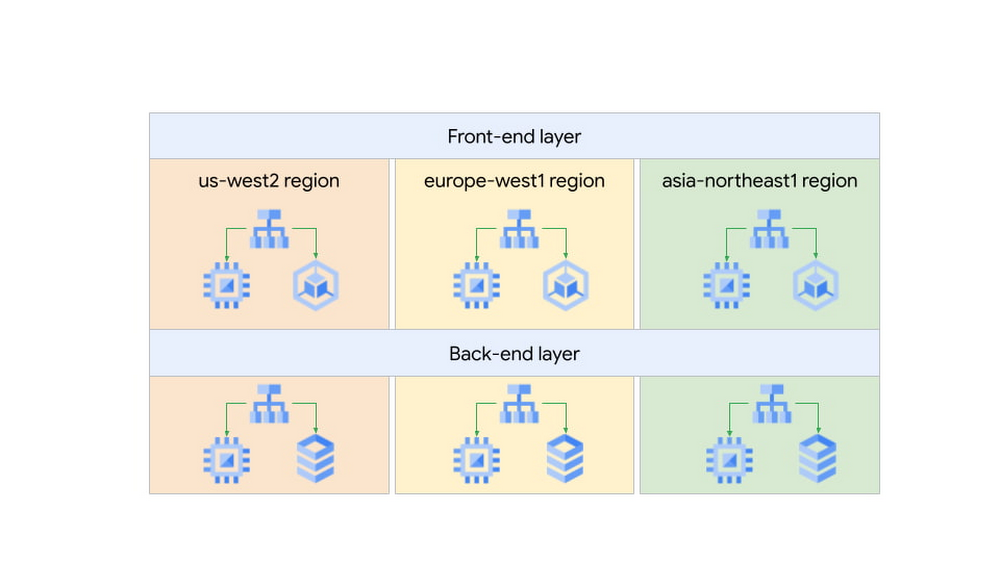

As an example, let’s take a web application that is internal to our company, such as a knowledge-sharing wiki application. It uses a classic 2-tier architecture: front-end servers that serve web requests from our engineers, and back-end servers that hold the data for our application.

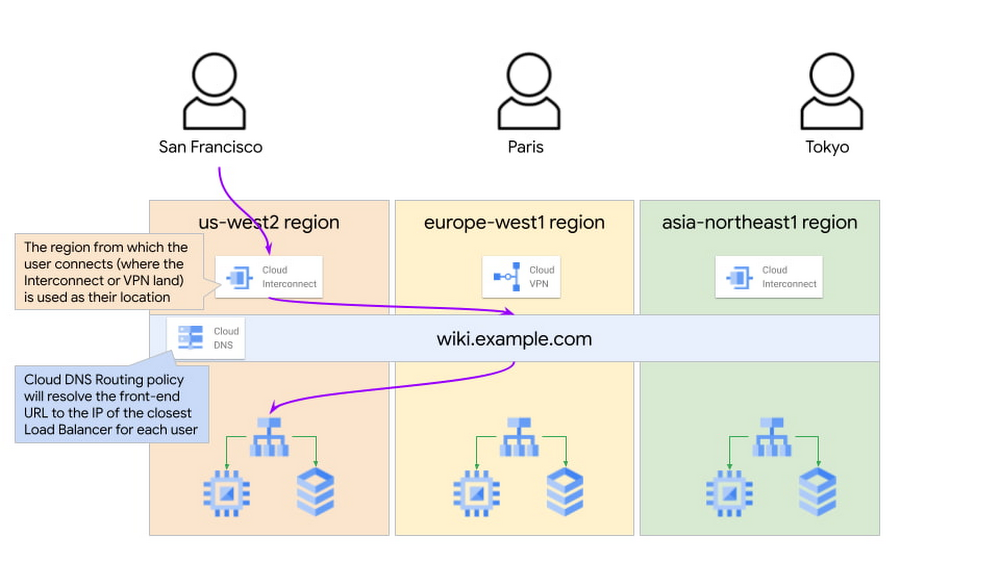

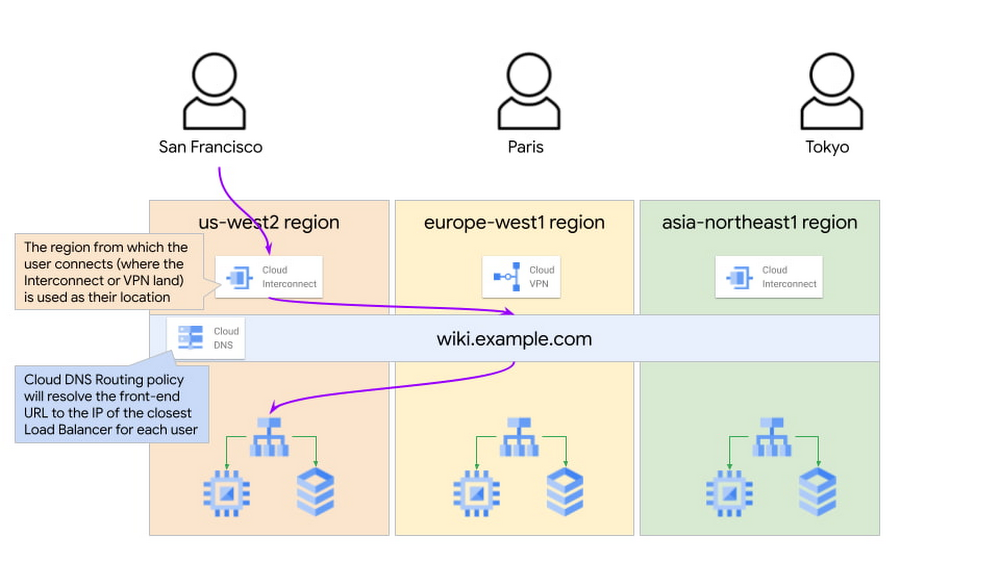

This application is used by our engineers based in the US (San Francisco), Europe (Paris) and Asia (Tokyo), so we decided to deploy our servers in three Google Cloud regions for lower latency, better performance and lower cost.

In each region, the wiki application is exposed via an Internal Load Balancer (ILB), which engineers connect to over an Interconnect or Cloud VPN connection.

Now our challenge is determining how to send users to the closest available front-end server.

Of course, we could use regional hostnames such as `<region>.wiki.example.com`, where `<region>` is US, EU, or ASIA, but this puts the onus on the engineers to choose the correct region and exposes unnecessary complexity to our users. It also means that if the wiki application goes down in a region, users have to manually switch to another region's hostname, which is not very user-friendly!

So how could we design this better?

Using Cloud DNS Policy Manager, we could use a single global hostname such as wiki.example.com and use a geo-location policy to resolve this hostname to the endpoint closest to the end user. The geo-location policy will use the GCP region where the Interconnect or VPN lands as the source for the traffic and look for the closest available endpoint.

For example, we would resolve the hostname for US users to the IP address of the US Internal Load Balancer, as shown in the diagram below:

This allows us to have a simple configuration on the client side and to ensure a great user experience.

The Cloud DNS routing policy configuration would look like this:
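As a minimal sketch, the Python snippet below assembles a `gcloud dns record-sets create` invocation that attaches a geo-location routing policy to `wiki.example.com`. The zone name, the three region names and the Internal Load Balancer IP addresses are assumptions made up for illustration; substitute the values from your own deployment.

```python
import shlex

# Hypothetical values for illustration only: the zone name, the regions and
# the ILB addresses are assumptions, not taken from a real deployment.
ZONE = "wiki-private-zone"
ILB_BY_REGION = {
    "us-west1": "10.128.1.10",
    "europe-west1": "10.132.1.10",
    "asia-northeast1": "10.146.1.10",
}


def geo_policy_command(hostname, zone, ilb_by_region, ttl=30):
    """Build the gcloud argument list for an A record with a GEO routing policy."""
    # --routing-policy-data takes "region=ip" items separated by semicolons.
    policy_data = ";".join(
        f"{region}={ip}" for region, ip in ilb_by_region.items()
    )
    return [
        "gcloud", "dns", "record-sets", "create", hostname,
        f"--zone={zone}",
        "--type=A",
        f"--ttl={ttl}",
        "--routing-policy-type=GEO",
        f"--routing-policy-data={policy_data}",
    ]


command = geo_policy_command("wiki.example.com.", ZONE, ILB_BY_REGION)
# shlex.join quotes the policy data so the semicolons survive a shell.
print(shlex.join(command))
```

The script only prints the command; it does not run it. Keeping the region-to-IP mapping in one dictionary makes it easy to regenerate the record when a region is added or removed.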

See the official documentation page for more information on how to configure Cloud DNS routing policies.

This configuration also helps us improve the reliability of our wiki application: if we were to lose the application in one region due to an incident, we could update the geo-location policy and remove the affected region from the configuration. New users would then resolve to the next closest region, with no action required on the client’s side or the application team’s side.
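The failover behaviour described above can be modelled in a few lines of Python. This is a simplified sketch, not how Cloud DNS is implemented: the proximity table, region names and IP addresses are all hypothetical, and Cloud DNS determines which region is "closest" on its own.

```python
# Hypothetical proximity ranking: for each source region, candidate regions
# ordered from nearest to farthest. This table is an assumption made for the
# sketch; Cloud DNS works out proximity itself.
NEAREST_FIRST = {
    "us-west1": ["us-west1", "asia-northeast1", "europe-west1"],
    "europe-west1": ["europe-west1", "us-west1", "asia-northeast1"],
    "asia-northeast1": ["asia-northeast1", "us-west1", "europe-west1"],
}

# Hypothetical geo-location policy: region -> Internal Load Balancer IP.
POLICY = {
    "us-west1": "10.128.1.10",
    "europe-west1": "10.132.1.10",
    "asia-northeast1": "10.146.1.10",
}


def resolve(source_region, policy):
    """Return the IP of the nearest region that still has a policy entry."""
    for region in NEAREST_FIRST[source_region]:
        if region in policy:
            return policy[region]
    raise LookupError("no region left in the policy")


# Normal operation: European users get the European load balancer.
print(resolve("europe-west1", POLICY))

# Incident in Europe: remove that region from the policy. European users
# now resolve to the next closest region without changing the hostname.
degraded_policy = {r: ip for r, ip in POLICY.items() if r != "europe-west1"}
print(resolve("europe-west1", degraded_policy))
```

Users in the other regions are unaffected by the change, since their own region is still present in the policy.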

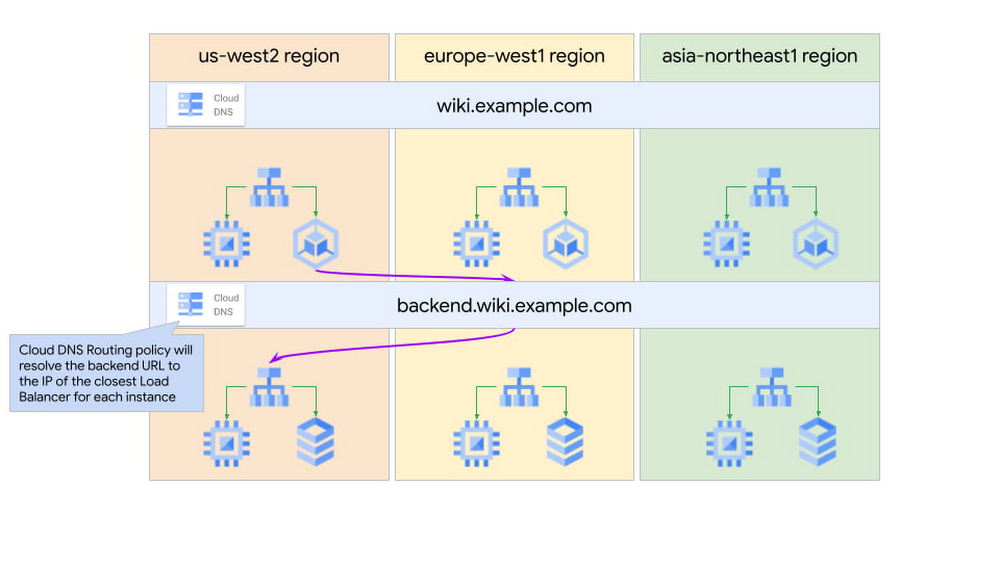

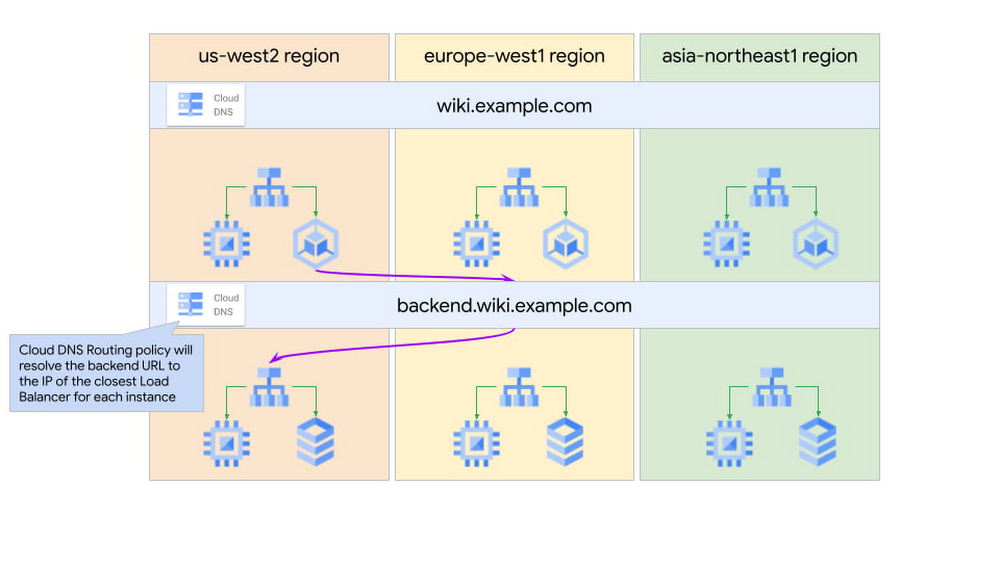

We have seen how this geo-location feature is great for sending users to the closest resource, but it can also be useful for machine-to-machine traffic.

Expanding on our web application example, we would like to ensure that front-end servers all have the same configuration globally and use the back-end servers in the same region.

We would configure front-end servers to connect to the global hostname backend.wiki.example.com. The Cloud DNS geo-location policy will use the front-end servers’ GCP region information to resolve this hostname to the closest available backend tier Internal Load Balancer.
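From the front-end's point of view, this means the back-end address is just one hostname, identical in every region. The sketch below shows one way a front-end could look it up with Python's standard `socket.getaddrinfo`. The port, the `backend_addresses` helper and the stand-in resolver (used so the example runs without access to the private zone) are assumptions for illustration.

```python
import socket

# The same hostname is configured on every front-end, whatever its region.
BACKEND_HOSTNAME = "backend.wiki.example.com"


def backend_addresses(hostname=BACKEND_HOSTNAME, port=443,
                      resolver=socket.getaddrinfo):
    """Resolve the back-end hostname and return its distinct IPs, sorted."""
    results = resolver(hostname, port, type=socket.SOCK_STREAM)
    # Each result is (family, type, proto, canonname, sockaddr);
    # sockaddr[0] is the IP address.
    return sorted({sockaddr[0] for *_, sockaddr in results})


def fake_resolver(host, port, type=0):
    """Stand-in for the answer a front-end in a hypothetical US region gets."""
    return [(socket.AF_INET, socket.SOCK_STREAM, socket.IPPROTO_TCP, "",
             ("10.128.2.10", port))]


# On a real front-end, call backend_addresses() with the default resolver.
print(backend_addresses(resolver=fake_resolver))
```

Because the region-specific answer comes from DNS, the front-end image and configuration can stay the same across all three regions.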

Putting it all together, we now have a multi-regional and multi-tiered application with DNS policies to smartly route users to the closest instance of that application for optimal performance and costs.

In the next few months, we will introduce even smarter capabilities to Cloud DNS routing policies, such as health checks to allow automatic failovers. We look forward to sharing all these exciting new features with you in another blog post.