Incorporating quota regression detection into your release pipeline

Nethaneel Edwards

Senior Software Engineer

On Google Cloud, one way an organization can enforce fairness in how much of a resource is consumed is through quotas. Limiting resource consumption on services is also one way companies can better manage their cloud costs. People often associate quotas with the APIs used to access a resource. Although an endpoint may be able to handle a high number of queries per second (QPS), a quota gives the provider a way to ensure that no single user or customer monopolizes the available capacity. This is where fairness comes into play: quotas let providers set limits scoped per user or per customer, and raise or lower those limits as needed.

Although quota limits address fairness from the resource provider’s point of view (in this case, Google Cloud), you, as the resource consumer, still need a way to ensure that those limits are adhered to and, just as importantly, that you don’t inadvertently violate them. This is especially important in a continuous integration and continuous delivery (CI/CD) environment, where so much of the process is automated. CI/CD is built around automating product releases, and you want every released product to be stable. This brings us to the issue of quota regression.

What is quota regression and how can it occur?

Quota regression refers to an unplanned change in an allocated quota that often results in reduced capacity for resource consumption.

Take, for example, an accounting firm. I have many friends in this sector, and they can never hang out with me during their busy season between January and April. At least, that’s the excuse. During the busy season they have an extraordinarily high caseload, and a low caseload the rest of the year. Let’s assume that these caseloads have a direct impact on the firm’s resource costs on Google Cloud. Since the high caseload only occurs at a particular time of year, it may not be necessary to maintain a high quota at all times. Doing so isn’t financially prudent, since resources are paid for on a per-usage model.

If the accounting firm has an in-house engineering team that has built load tests to ensure the system is functioning as intended, you would expect the load capacity to be increased before the busy season. If the load test runs in an environment separate from the serving one (which it should, for reasons such as security and avoiding unnecessary access grants to data), this is where you might start to see a quota regression. An example is load testing in your non-prod Google Cloud project (e.g. your-project-name-nonprod) and promoting images to your serving project (e.g. your-project-name-prod).

For the load tests to pass, sufficient quota must be allocated to the load testing environment. However, that quota may never have been granted in the serving environment. It could simply be an oversight, where the admin needed to request the additional quota in the serving environment and didn’t, or the quota could have been reverted after a previous busy season without anyone noticing. Whatever the reason, keeping quotas consistent across environments still depends on human intervention. If this is missed, the firm can go into a busy season with passing load tests and still suffer an outage due to a lack of quota in the serving environment.

Why not just use traditional monitoring?

This brings to mind the argument of “Security Monitor vs Security Guard.” Even with monitoring to detect such inconsistencies, alerts can be ignored, and alerts can be late. Alerts work when no automation is tied to the behavior; in the example above, alerts alone may suffice. In the context of CI/CD, however, a deployment that places a higher QPS on its dependencies can easily be promoted from a lower environment to the serving environment, because the load tests pass as long as the lower environment has sufficient quota. That deployment is then pushed to production automatically, and the alerts will likely arrive along with the outage.

The best way to handle these scenarios is to incorporate an automated mechanism that doesn’t just monitor and alert, but also prevents that regressive behavior from being promoted to the serving environment. The last thing you want is new logic that needs more resource quota than has been granted being automatically promoted to prod.

Why not use existing checks in tests? The software engineering discipline offers several types of tests (unit, integration, performance, load, smoke, etc.), none of which address something as complex as cross-environment consistency. Most of them focus on the user and expected behaviors. The only test that really focuses on infrastructure is the load test, but checking for quota regression is not part of what a load test does. You won’t detect a regression that way, since a load test runs in its own environment and is agnostic of where the product will ultimately be served.

In other words, a quota regression test needs to be aware of environments: it needs an expected baseline environment where the load test occurs and an actual serving environment where the product will be deployed. What I am proposing is an environment-aware test included alongside the suite of other tests.
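As a minimal sketch of what “environment-aware” could look like, the Python `unittest` case below compares two named environments instead of exercising a single one. The project names come from the example above; the hard-coded limits, the `ENVIRONMENT_LIMITS` table, and the test name are hypothetical stand-ins for values a real suite would fetch from each project.

```python
import unittest

# Hypothetical effective limits per project, keyed by quota metric. A real
# suite would fetch these from each project instead of hard-coding them.
ENVIRONMENT_LIMITS = {
    "your-project-name-nonprod": {"library.googleapis.com/read_calls": 100},
    "your-project-name-prod": {"library.googleapis.com/read_calls": 100},
}
BASELINE_ENV = "your-project-name-nonprod"  # where the load test runs
SERVING_ENV = "your-project-name-prod"  # where the image is promoted to


class QuotaRegressionTest(unittest.TestCase):
    """Unlike a load test, this test compares two named environments."""

    def test_serving_quota_covers_load_test_quota(self):
        baseline = ENVIRONMENT_LIMITS[BASELINE_ENV]
        serving = ENVIRONMENT_LIMITS[SERVING_ENV]
        for metric, load_test_limit in baseline.items():
            with self.subTest(metric=metric):
                # A metric with no limit in serving is treated as zero capacity.
                self.assertLessEqual(load_test_limit, serving.get(metric, 0))
```

Because it is an ordinary test case, it can run with `python -m unittest` next to the unit and integration tests already in the suite.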

Quota regression testing on Google Cloud

Google Cloud already provides services you can use to incorporate this check. It is more of a systems architecture practice you can adopt than something you need to build from scratch.

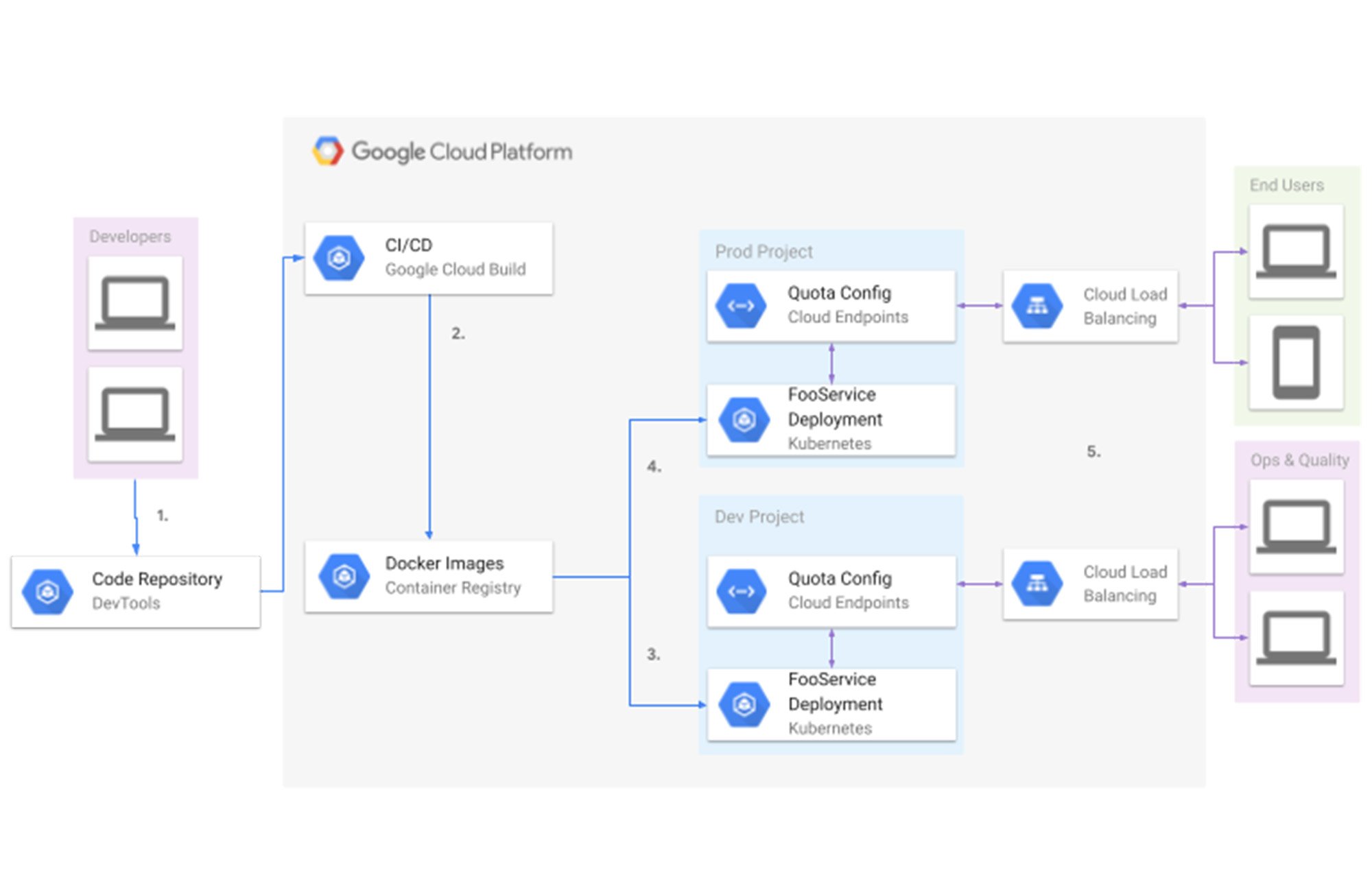

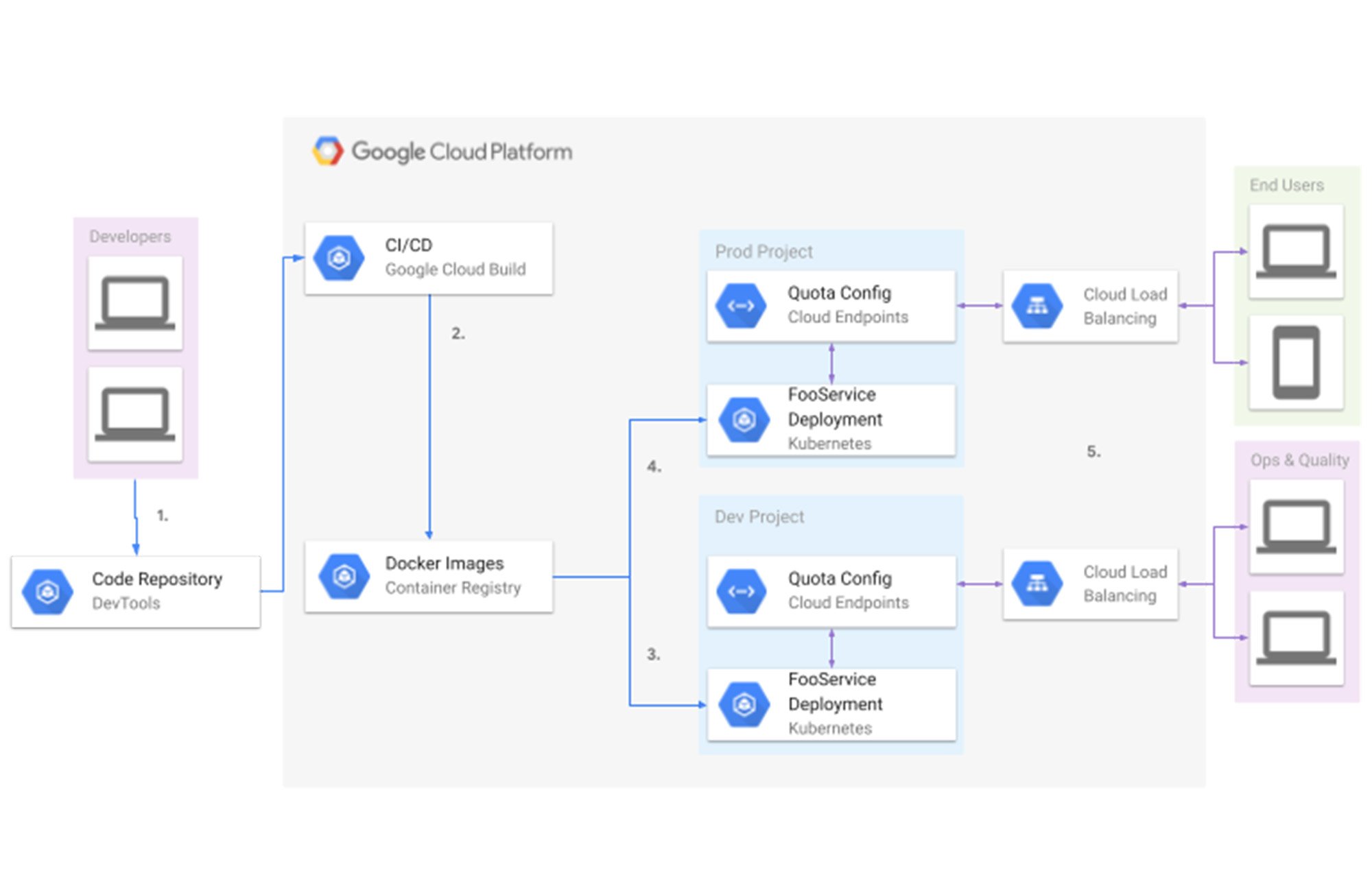

The Service Consumer Management API provides the tools you need to create your own quota regression test. Take, for example, the ConsumerQuotaLimit resource that’s returned by the list API. For the remainder of this discussion, let’s assume an environment setup like the one pictured above.

In the diagram above, we have a simplified deployment pipeline:

1. Developers submit code to a repository.
2. The Cloud Build build and deployment trigger fires.
3. Tests are run.
4. Deployment images are pushed if the prerequisite steps succeed.
5. Images are pushed to their respective environments (in this case, the new build to dev, and the previous dev image to prod).
6. Quotas are defined for the endpoints on deployment.
7. Cloud Load Balancer makes the endpoints available to end users.

Quota limits

With this mental model, let’s home in on the role quotas play in the big picture. Assume we have the following service definition for an endpoint called “FooService”. The service name, metric label, and quota limit value are what we care about for this example.

gRPC Cloud Endpoint Yaml Example

In our definition we’ve established:

- Service name: fooservice.endpoints.my-project-id.cloud.goog
- Metric label: library.googleapis.com/read_calls
- Quota limit: 1

Example commands and output.

Response example (truncated)

In the above response, the most important thing to note is the effective limit for a given service’s metric. The effective limit is the limit actually applied to a resource consumer when enforcing the customer fairness discussed earlier.
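Assuming the list response follows the shape metrics[].consumerQuotaLimits[].quotaBuckets[].effectiveLimit, a small helper can reduce it to one number per quota. This is a sketch, not a client library call: `effective_limits` and the trimmed sample response are hypothetical, the `1/min/{project}` unit is an assumed value, and the helper deliberately skips buckets that carry dimensions (for example, per-region overrides).

```python
import json


def effective_limits(list_response: dict) -> dict:
    """Map (metric, unit) to the effectiveLimit of each undimensioned bucket.

    Assumes the JSON shape of a consumerQuotaMetrics list response:
    metrics[].consumerQuotaLimits[].quotaBuckets[].effectiveLimit.
    """
    limits = {}
    for quota_metric in list_response.get("metrics", []):
        for limit in quota_metric.get("consumerQuotaLimits", []):
            for bucket in limit.get("quotaBuckets", []):
                if bucket.get("dimensions"):
                    continue  # skip per-region or other dimensioned buckets
                # int64 fields are serialized as JSON strings, and a zero
                # value may be omitted entirely, hence the "0" default.
                key = (limit.get("metric"), limit.get("unit"))
                limits[key] = int(bucket.get("effectiveLimit", "0"))
    return limits


# Hypothetical, trimmed response for the FooService read_calls quota.
SAMPLE_RESPONSE = json.loads("""
{"metrics": [{
  "metric": "library.googleapis.com/read_calls",
  "consumerQuotaLimits": [{
    "metric": "library.googleapis.com/read_calls",
    "unit": "1/min/{project}",
    "quotaBuckets": [{"effectiveLimit": "1", "defaultLimit": "1"}]
  }]
}]}
""")

print(effective_limits(SAMPLE_RESPONSE))
# {('library.googleapis.com/read_calls', '1/min/{project}'): 1}
```

Running the same helper against the load test project and the serving project gives two dictionaries with comparable keys.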

Now that we’ve established how to get the effectiveLimit for a quota definition on a resource per project, we can define the assertion of quota consistency as:

Load Test Environment Quota Effective Limit <= Serving Environment Quota Effective Limit
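Expressed in code, the assertion becomes a comparison over every quota the load test environment defines. The sketch below makes two assumptions worth checking against your own responses: that -1 denotes an unlimited quota, and that a quota missing from the serving environment should count as zero capacity. `find_regressions` is a hypothetical name.

```python
UNLIMITED = -1  # assumption: -1 is how an unlimited limit is reported


def _capacity(limit: int) -> float:
    """Treat the unlimited sentinel as infinite so ordinary comparison works."""
    return float("inf") if limit == UNLIMITED else float(limit)


def find_regressions(load_test: dict, serving: dict) -> list:
    """Return (key, load_test_limit, serving_limit) for every violation of
    load_test_limit <= serving_limit. A quota missing from serving is
    treated as zero capacity."""
    regressions = []
    for key, load_test_limit in sorted(load_test.items()):
        serving_limit = serving.get(key, 0)
        if _capacity(load_test_limit) > _capacity(serving_limit):
            regressions.append((key, load_test_limit, serving_limit))
    return regressions


nonprod = {"library.googleapis.com/read_calls": 100}
prod = {"library.googleapis.com/read_calls": 1}
print(find_regressions(nonprod, prod))
# [('library.googleapis.com/read_calls', 100, 1)]
```

Returning every violation, rather than stopping at the first, makes it easier to file all the needed quota increase requests at once.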

With a test like this in place, you can integrate it with a tool such as Cloud Build to block the promotion of your image from the lower environment to your serving environment if the test fails. That keeps the regressive behavior of the new image out of the serving environment, where it would otherwise cause an outage.
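One way to wire this into Cloud Build is a step that exits with a non-zero status on a regression, since a failing step stops the build before any later promotion step runs. In this hypothetical sketch, earlier steps are assumed to have written each environment’s effective limits to a flat JSON file; the file format and the `main` entry point are illustrative only.

```python
import json
import sys

UNLIMITED = -1  # assumption: -1 marks an unlimited quota


def load_limits(path: str) -> dict:
    """Read a hypothetical flat {"<quota>": <effective limit>} JSON file."""
    with open(path) as handle:
        return {key: int(value) for key, value in json.load(handle).items()}


def capacity(limit: int) -> float:
    """Map the unlimited sentinel to infinity so plain comparison works."""
    return float("inf") if limit == UNLIMITED else float(limit)


def main(argv: list) -> int:
    """Return 0 if serving covers the load test, 1 on regression, 2 on misuse."""
    if len(argv) != 3:
        print(f"usage: {argv[0]} LOADTEST_LIMITS.json SERVING_LIMITS.json",
              file=sys.stderr)
        return 2
    load_test, serving = load_limits(argv[1]), load_limits(argv[2])
    regressions = [
        (key, limit, serving.get(key, 0))  # missing in serving -> no capacity
        for key, limit in sorted(load_test.items())
        if capacity(limit) > capacity(serving.get(key, 0))
    ]
    for key, expected, actual in regressions:
        print(f"quota regression on {key}: load test {expected} > serving {actual}")
    # A non-zero exit code fails this Cloud Build step, so the promotion
    # steps that follow it never run.
    return 1 if regressions else 0
```

A script built around this would end with `sys.exit(main(sys.argv))` and be invoked from a build step placed before the image promotion step, with the two file paths as arguments.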

The importance of early detection

It’s not enough to alert on a detected quota regression and block the image promotion to prod; it’s better to raise alarms as early as possible. If resources are lacking when it’s time to promote to production, you’re now faced with the problem of wrangling enough resources in time. That may not be possible within the desired timeline: the resource provider may need to scale up its own resources to handle the increased quota, and that can’t always happen in a day. For example, is the service hosted on Google Kubernetes Engine (GKE)? Even with autoscaling, what if the IP address pool is exhausted? Cloud infrastructure changes, although elastic, are not instant, so production planning needs to account for the time needed to scale.

In summary, quota regression testing is a key component that should be added to the broader practice of handling overload and load balancing in any cloud service, not just Google Cloud. It is important for product stability through the dips and spikes in demand that will inevitably show up in many areas. If you continue to rely on human intervention to keep quotas consistent across your configurations, you all but guarantee that you will eventually have an outage when that consistency isn’t met. For more on working with quotas, check out the documentation.