Deploying a production-grade Helm release on GKE with Terraform

Rob Morgan

Gruntwork Software Engineer

Riley Karson

Google Software Engineer

Editor’s note: Today we hear from Gruntwork, a DevOps service provider specializing in cloud infrastructure automation, about how to automate Kubernetes deployments to GKE with HashiCorp Terraform.

As more organizations look to capitalize on the advantages of Kubernetes, they increasingly use managed platforms like Google Kubernetes Engine (GKE) to offload the work of managing Kubernetes themselves. They manage and deploy workloads with tools like kubectl and Helm, the Kubernetes package manager that repeatably applies common templates, known as charts.

Then there’s HashiCorp Terraform, an infrastructure management and deployment tool that allows you to programmatically configure infrastructure across a variety of providers, including Google Cloud. Terraform lets you deploy GKE clusters reliably and repeatedly, no matter your organization’s scale.

Here at Gruntwork, we find that using Terraform can make it easier to adopt Kubernetes, both on GCP and in other cloud environments. We worked with Google Cloud to build a series of open-source Terraform modules based on Google Cloud Platform (GCP) and Kubernetes best practices that allow you to work with GCP and Kubernetes in a reliable manner.

To get a sense of what the Gruntwork GCP Modules do, first consider what you’d need to do to securely deploy a service on a GKE cluster using Helm:

Prepare a GCP service account with minimal permissions instead of reusing the project-scoped Compute default service account

Provision a service-specific VPC network instead of the project default network

Deploy a GKE private cluster and disable insecure add-ons and legacy Kubernetes features

Add a node pool with autoscaling, auto repair and auto upgrade enabled

Configure kubectl to interact with the cluster

Create a TLS cert to communicate with the Helm server, Tiller

Create a Tiller-specific namespace for Tiller

Deploy Tiller into the Tiller-specific namespace

Only after you’ve done all that will you be able to deploy workloads to Kubernetes using Helm! In addition, to deploy your services using Helm, each of your developers also needs to:

Download a Tiller client cert for Helm

Use Helm to release a Helm chart with your service

That’s quite a daunting list just to release your first Helm chart on GKE, and definitely not a problem that you want to solve from scratch. Our new GKE module automates these steps for you, so you can consistently apply all of these GCP and Kubernetes best practices using Terraform, with a single terraform apply!

To learn more, we’ve included a full, working config in the module's GitHub repo, and we show snippets of that config below. Alternatively, you can open it in Google Cloud Shell with the button below to try it out yourself.

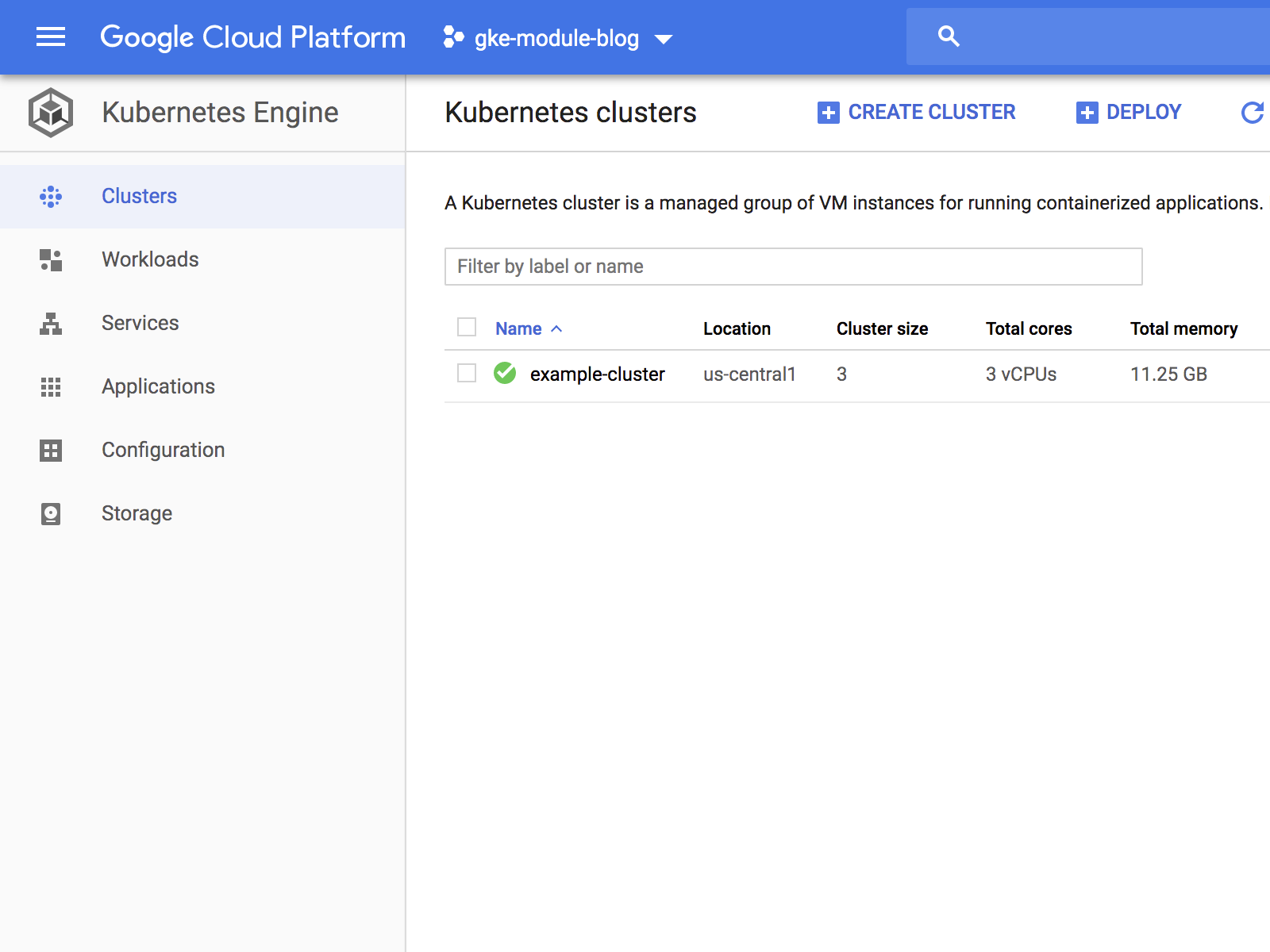

You can use the Cloud Console to verify that the cluster has been deployed correctly:

Next, you can use kubergrunt (a collection of utility scripts compiled to a Go binary for use with Terraform) to deploy Helm’s server component, Tiller, into your cluster. This also releases a chart using Helm, allowing you to view your deployed service on the web.

Finally, you can use Helm to securely release a chart and view its status.

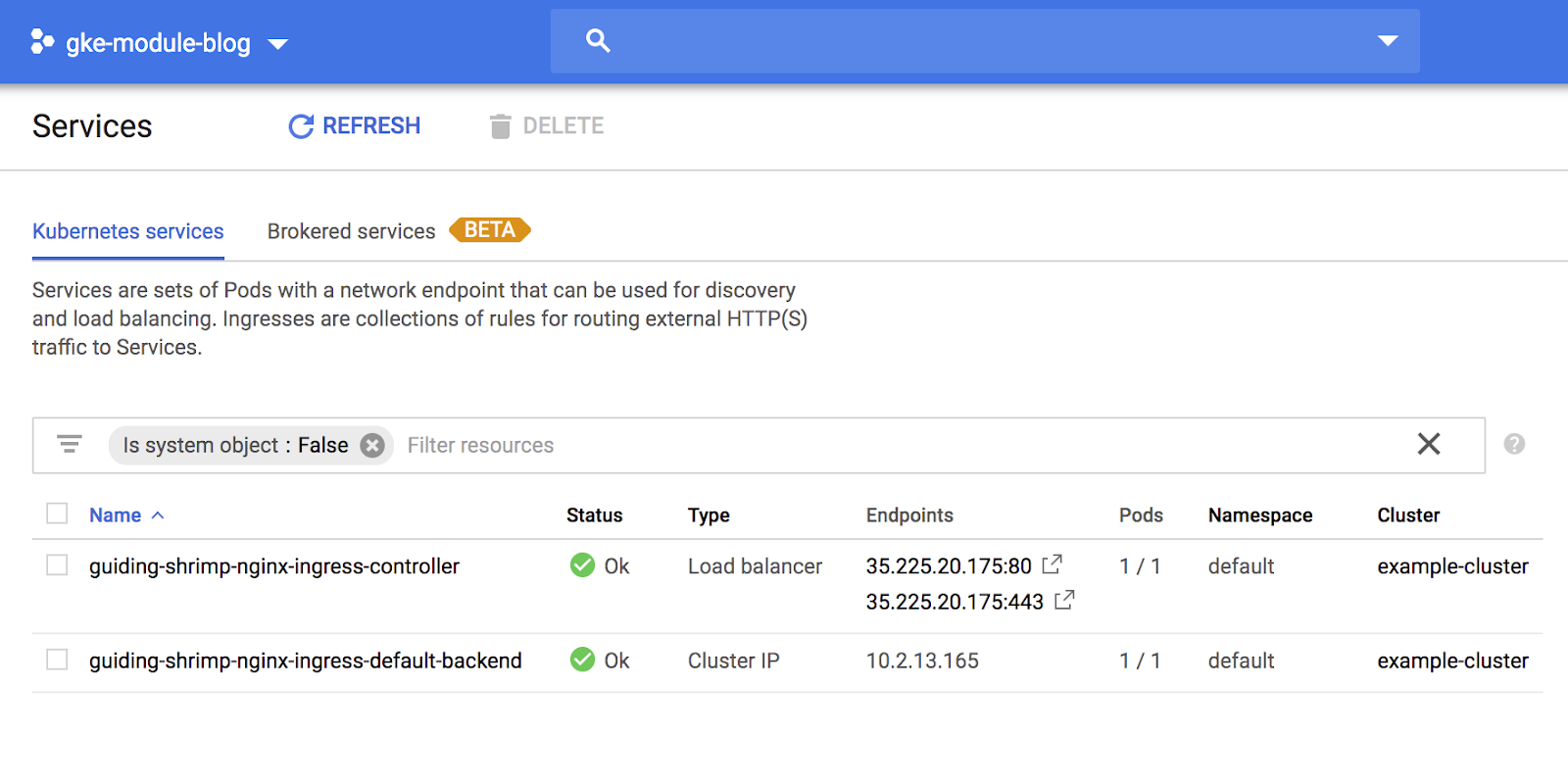

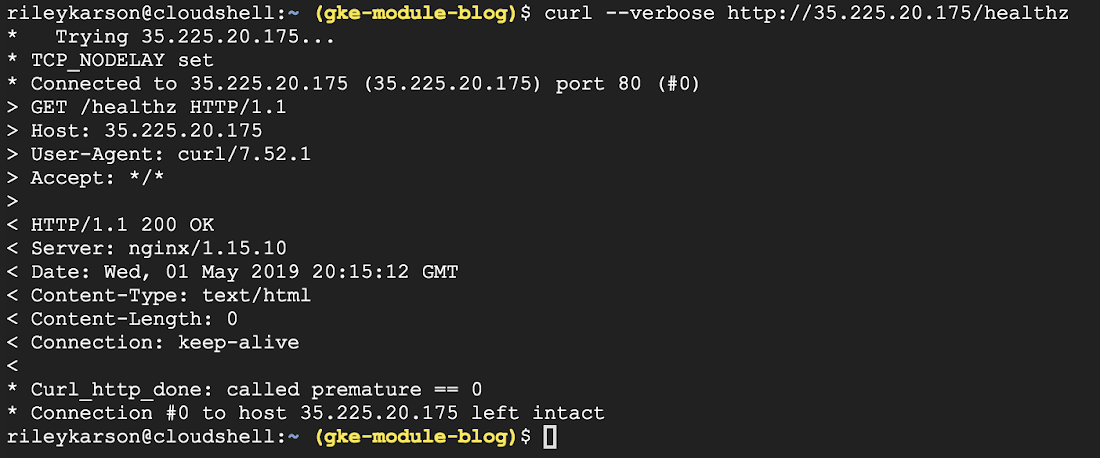

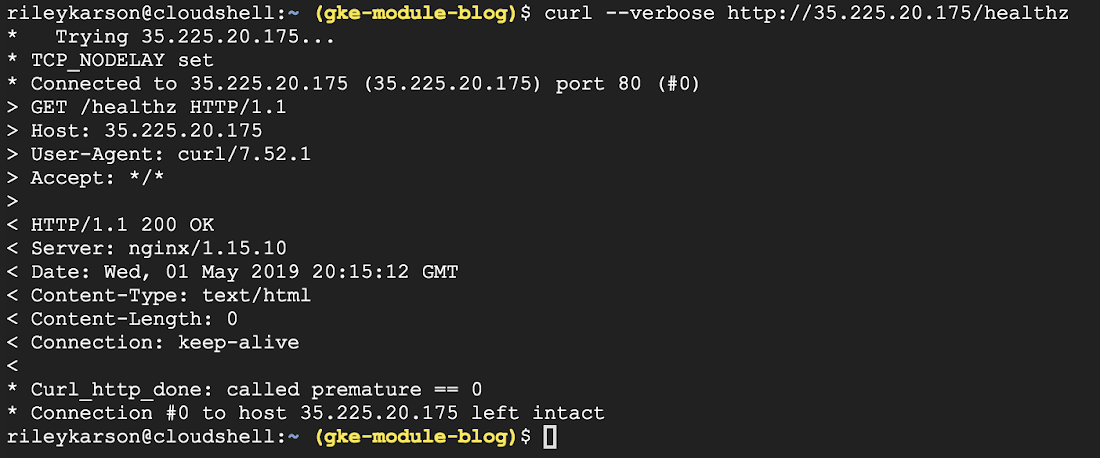

Once that’s finished, you can pull up the service address in the Cloud Console under “Services” and poll the /healthz path for a 200 response.
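If you would rather script that check than refresh a browser, a minimal sketch in Python might look like the following. It is not part of the Gruntwork modules or of kubergrunt. The function names `fetch_status` and `wait_until_healthy`, the retry count, and the delay are all illustrative assumptions, and the URL you pass in is whatever service address the Cloud Console shows for your release.

```python
import time
import urllib.error
import urllib.request
from typing import Callable, Optional


def fetch_status(url: str, timeout: float = 5.0) -> Optional[int]:
    """Return the HTTP status code for url, or None if nothing answered."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        # The server answered, but with an error status (for example 503).
        return err.code
    except (urllib.error.URLError, OSError):
        # Not reachable yet: DNS failure, refused connection, or timeout.
        return None


def wait_until_healthy(
    url: str,
    attempts: int = 30,            # assumed default, tune for your service
    delay_seconds: float = 10.0,   # assumed default, tune for your service
    fetch: Callable[[str], Optional[int]] = fetch_status,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll url until it returns 200 or the attempts run out."""
    for attempt in range(1, attempts + 1):
        if fetch(url) == 200:
            return True
        if attempt < attempts:
            # Wait between attempts, but not after the final one.
            sleep(delay_seconds)
    return False
```

Calling `wait_until_healthy` with the service address followed by `/healthz` returns `True` as soon as the endpoint answers with a 200, and `False` if it never does within the allotted attempts. The `fetch` and `sleep` parameters exist only so the loop can be exercised without a live cluster.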

The Gruntwork GCP modules make production-ready enterprise configuration of GKE clusters simple, allowing you to roll out clusters and workloads following best practices in minutes. The modules are available now: they’re published on the Terraform Module Registry and are available on GitHub under the Apache 2.0 license.

Together with Google Cloud, we plan to continue to broaden the number of GCP services that you can provision with Terraform through our modules, providing Terraform users a familiar workflow across multiple cloud and on-premises environments and reducing the operational complexity of managing GCP infrastructure. If you have any specific feedback on use cases you’d like us to prioritize, please reach out to us at info@gruntwork.io.