Expanding your Bigtable architecture with change streams

Billy Jacobson

Developer Advocate, Cloud Bigtable

Engineers use Bigtable to hold vast amounts of transactional and analytical information as part of their data workflow. We are excited about the release of Bigtable change streams that will enhance these data workflows for event-based architectures and offline processing. In this article, we will cover the new feature and a few example applications that incorporate change streams.

Change streams

Change streams capture mutations made to Bigtable tables and output them in real time. You can access the stream through the Data API, but we recommend the Dataflow connector, which uses the Apache Beam SDK to abstract away the change streams Data API and the complexity of processing partitions. Dataflow is a managed service that provisions and manages resources and helps with the scalability and reliability of stream data processing.

The connector allows you to focus on the business logic instead of having to worry about specific Bigtable details such as correctly tracking partitions over time and other non-functional requirements.

You can enable change streams on your table via the Console, gcloud CLI, Terraform, or the client libraries. Then you can follow our change stream quickstart to get started with development.

Example architectures

Change streams enable you to track changes to data in real time and react quickly. You can more easily automate tasks based on data updates or add new capabilities to your application by using the data in new ways. Here are some example application architectures based on Bigtable use cases that incorporate change streams.

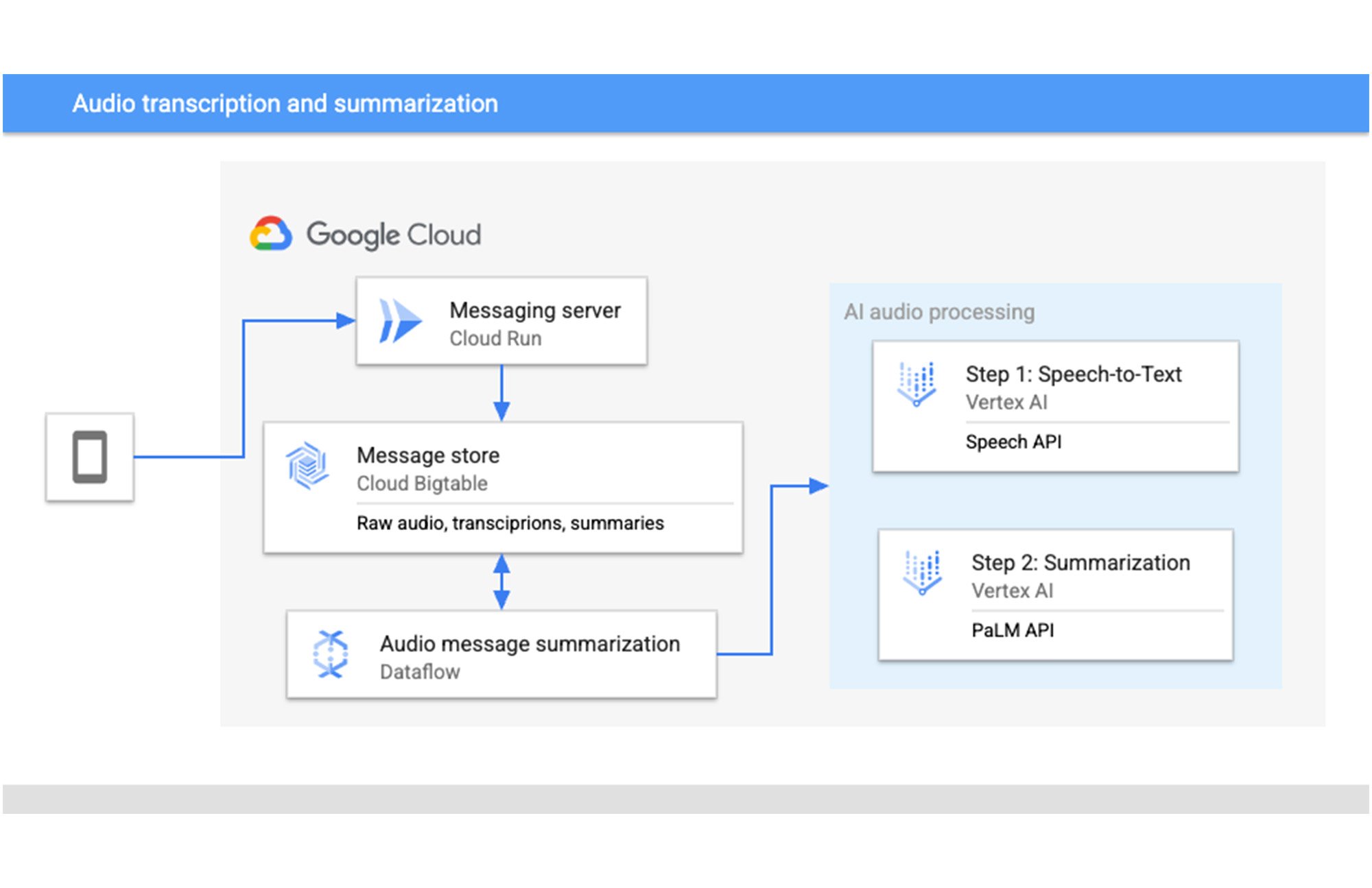

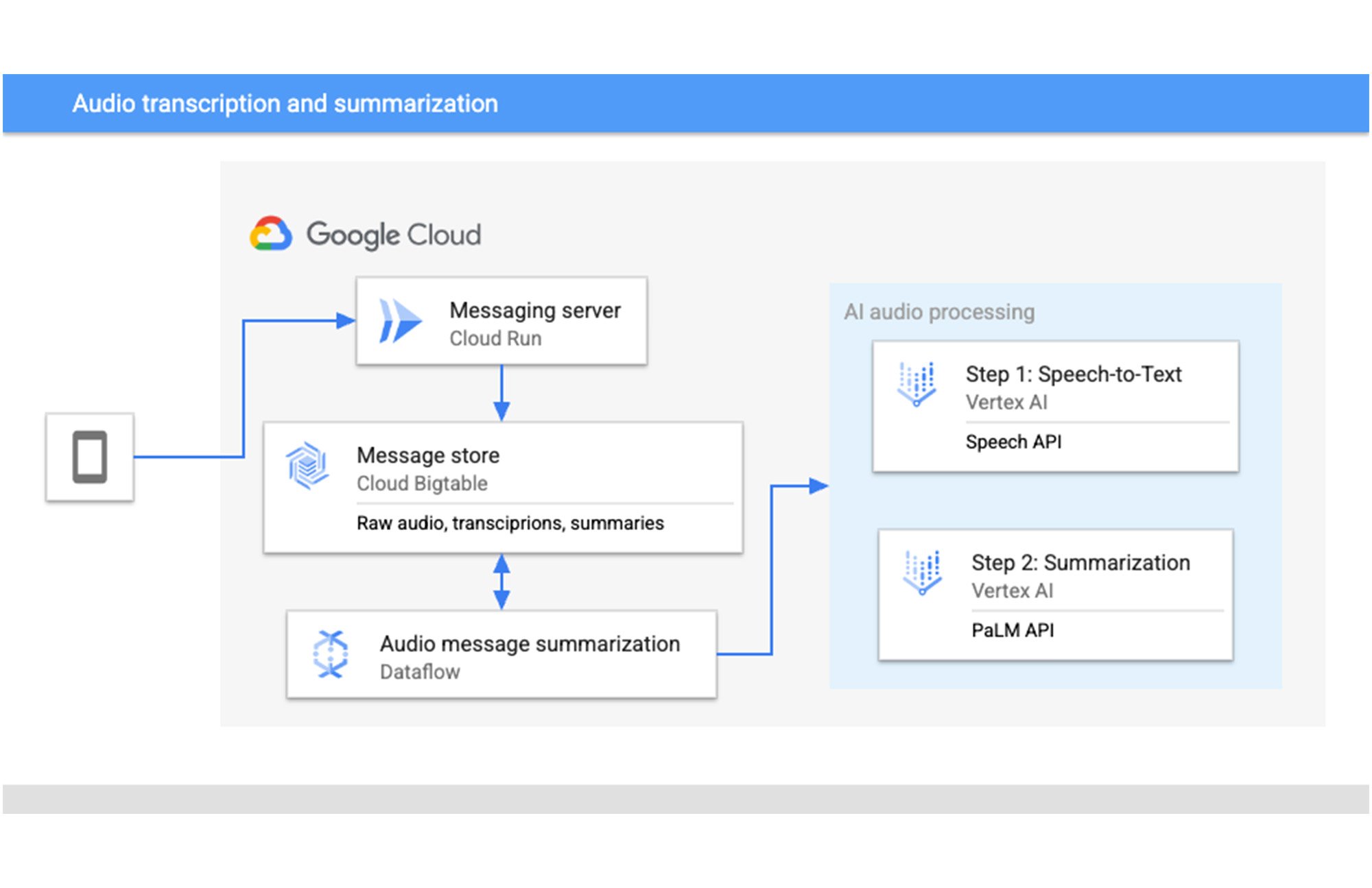

Data enrichment with modern AI

New AI APIs are being developed rapidly and can add great value to your application data. There are APIs for audio, images, translation, and more that can enhance data for your customers. Bigtable change streams give you a clear path to enrich new data as it is added.

Here we are transcribing and summarizing voice messages by using pre-built models available in Vertex AI. We can use Bigtable to store the raw audio file in bytes, and when a new message is added, change streams kick off the AI audio processing. A Dataflow pipeline uses the Speech API to get a transcription of the message and the PaLM API to summarize that transcription. Both results can be written to Bigtable so that users can get the message in whichever form they prefer.
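The enrichment step above can be sketched in plain Python. This is a minimal illustration, not the Dataflow connector's API: `CellChange`, the `message` column family, and the `audio`, `transcript`, and `summary` qualifiers are hypothetical names, and the `transcribe` and `summarize` callables stand in for whatever Speech and PaLM clients your pipeline would actually use.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional


@dataclass(frozen=True)
class CellChange:
    """Hypothetical, simplified view of one cell write seen on the change stream."""
    row_key: str
    family: str
    qualifier: str
    value: bytes


def enrich_voice_message(
    change: CellChange,
    transcribe: Callable[[bytes], str],
    summarize: Callable[[str], str],
) -> Optional[Dict[str, object]]:
    """Return the cells to write back for a new audio message, or None to skip."""
    # React only to raw audio writes. Skipping every other column also means
    # the transcript and summary we write back do not trigger another round.
    if (change.family, change.qualifier) != ("message", "audio"):
        return None
    transcript = transcribe(change.value)
    summary = summarize(transcript)
    return {
        "row_key": change.row_key,
        "cells": {
            ("message", "transcript"): transcript,
            ("message", "summary"): summary,
        },
    }
```

Passing the two models in as callables keeps the function easy to test with stubs, and lets you swap models without touching the change-handling logic.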

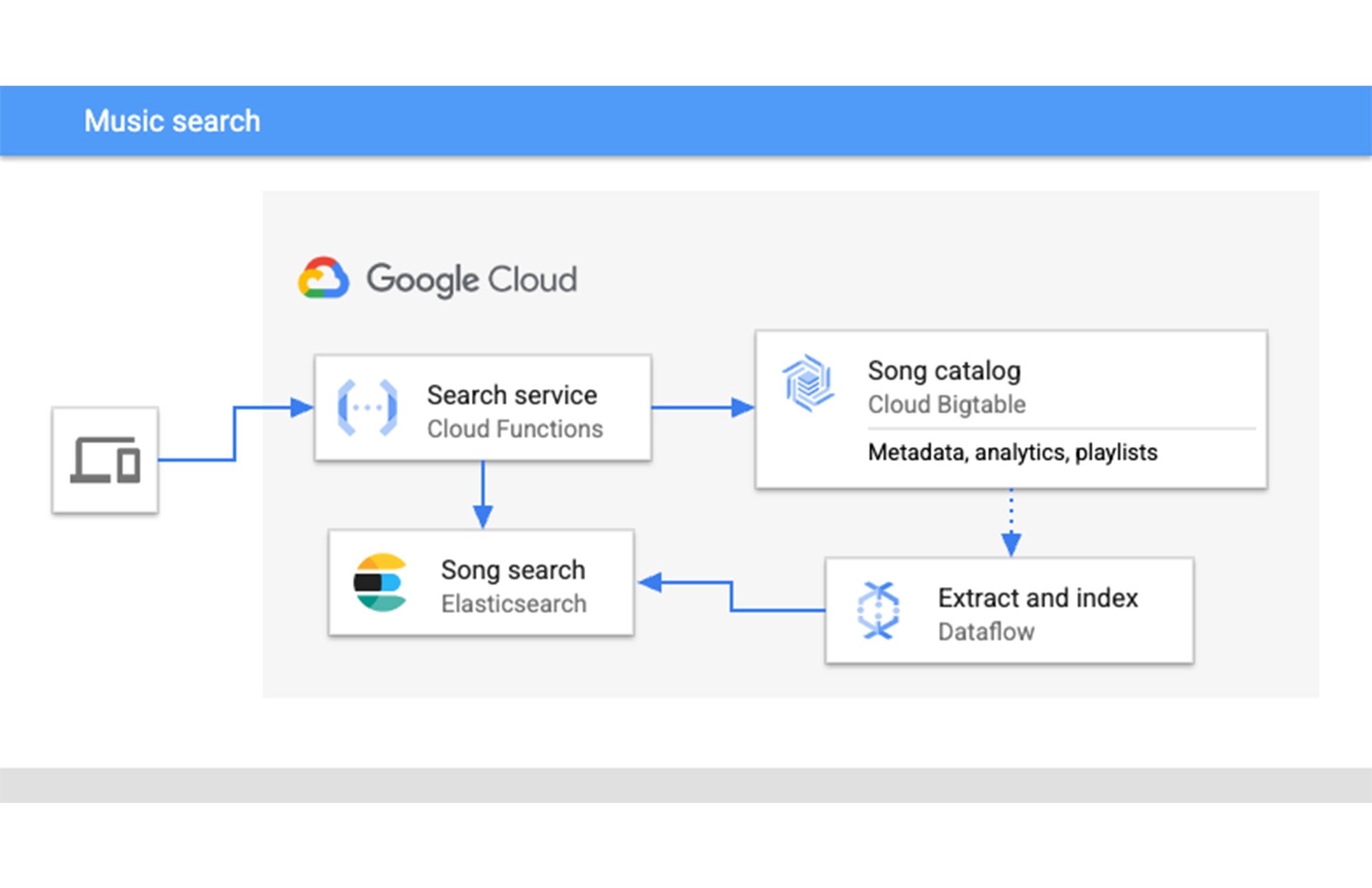

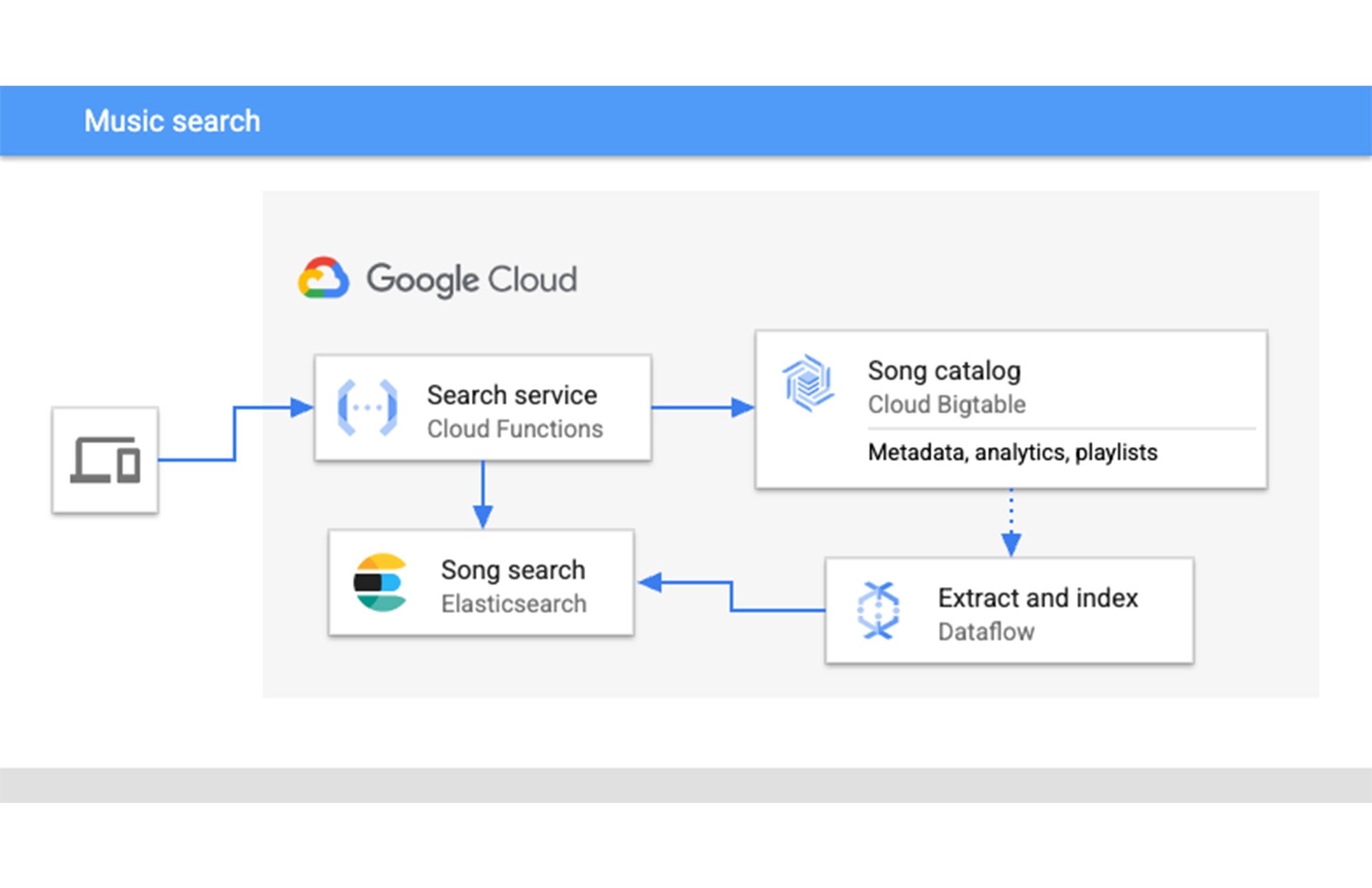

Full-text search and autocomplete

Full-text search and autocomplete are common use cases for many applications, from online retail to streaming media platforms. For this scenario, we have a music platform that is adding full-text search to its music library by indexing the album names, song titles, and artists in Elasticsearch.

When new songs are added, the changes are captured by a pipeline in Dataflow. The pipeline extracts the data to be indexed and writes it to Elasticsearch. This keeps the index up to date, and users can query it via a search service hosted on Cloud Functions.
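The extraction step might look like the following sketch. It assumes that a new song arrives as a row key plus a dictionary of column qualifiers to raw bytes, and that the qualifiers `album`, `title`, and `artist` and the index name `songs` exist; all of these are hypothetical. The returned dictionary loosely follows the action shape accepted by the Elasticsearch Python client's bulk helper, but the function itself has no dependency on that client.

```python
from typing import Dict, Optional

# Assumed column qualifiers that should be searchable.
INDEXED_FIELDS = ("album", "title", "artist")


def to_index_action(
    row_key: str, cells: Dict[str, bytes], index: str = "songs"
) -> Optional[dict]:
    """Build one index action from a changed row, or None if nothing is searchable."""
    # Keep only the searchable columns and decode them from bytes to text.
    source = {
        field: cells[field].decode("utf-8")
        for field in INDEXED_FIELDS
        if field in cells
    }
    if not source:
        return None
    # Reusing the Bigtable row key as the document ID makes a replayed change
    # overwrite the same document instead of creating a duplicate.
    return {"_op_type": "index", "_index": index, "_id": row_key, "_source": source}
```

Because an `index` action replaces the whole document, this sketch assumes each new song is written with all of its searchable columns at once.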

Event-based notifications

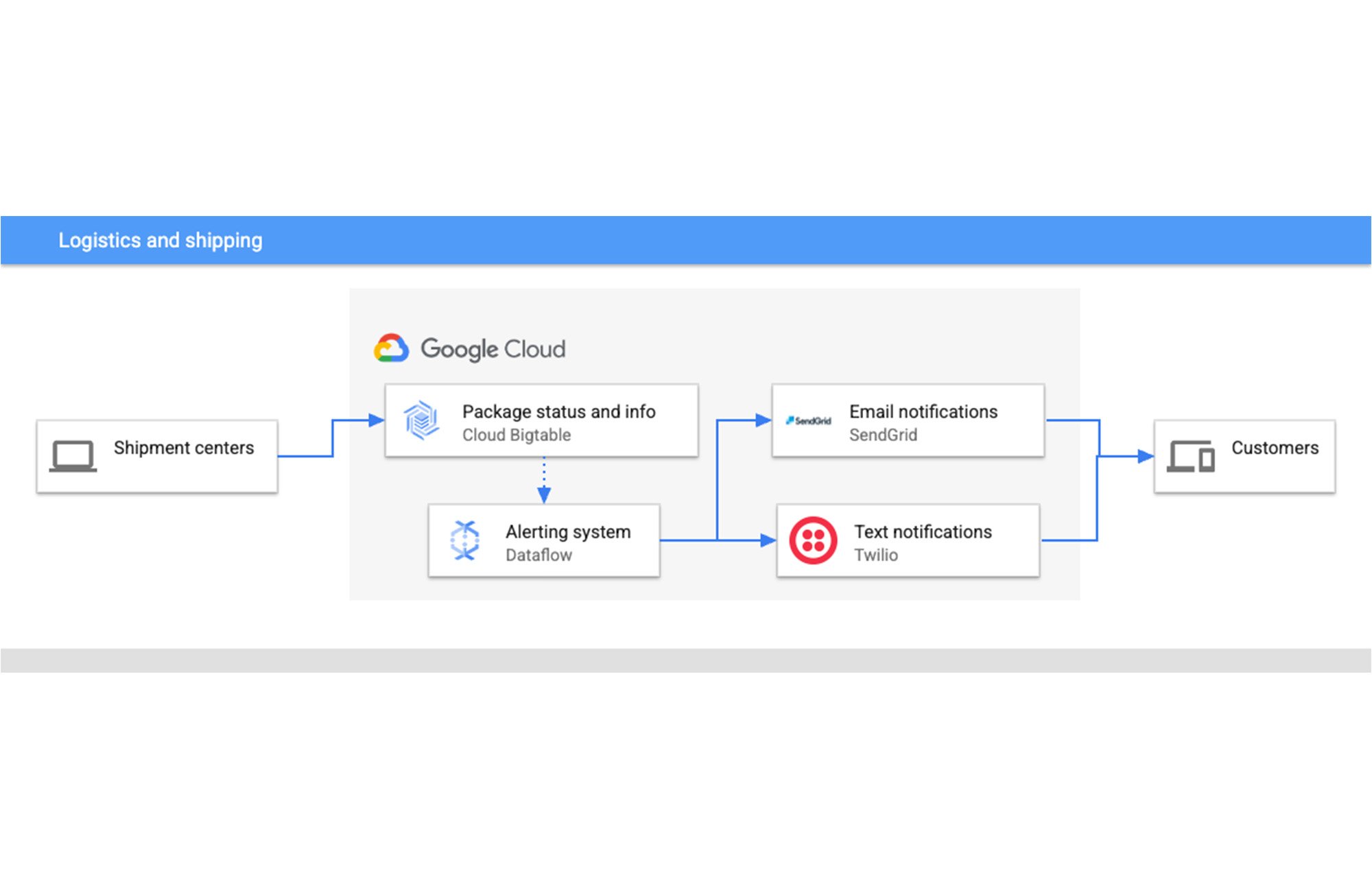

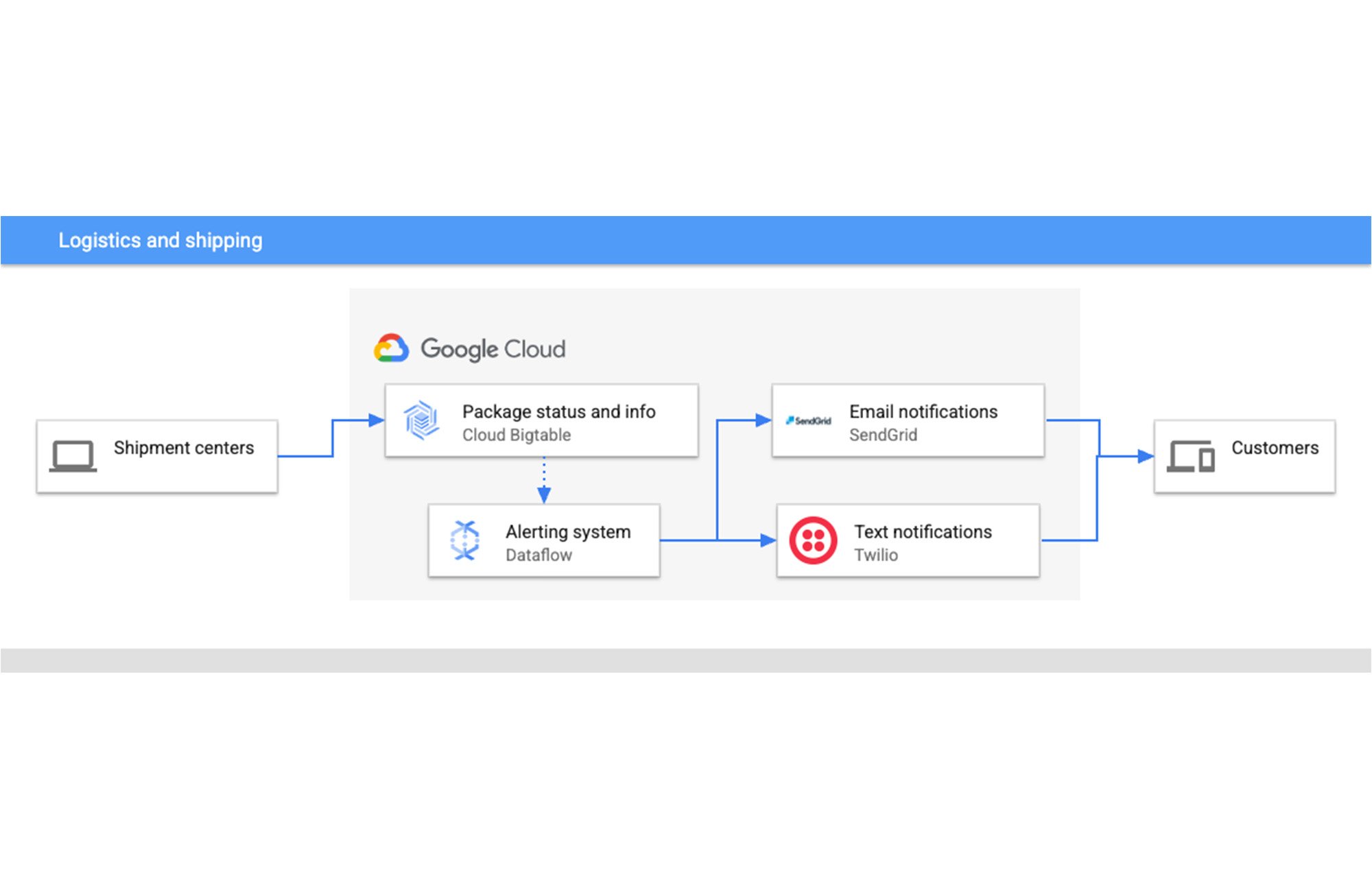

Processing events and alerting customers in real time is a valuable capability for many applications. You can customize your architecture for pop-ups, push notifications, emails, texts, and more. Here is one example of what a logistics and shipping company could do.

Logistics and shipping companies have millions of packages traveling around the world at any given moment. They need to track each package as it arrives at each new shipment center so that it can continue to the next location. You might be awaiting a fresh pair of shoes, or a hospital might need to know when its next shipment of gloves is arriving, so customers can sign up for email or text notifications about their package status.

This is an event-based architecture that works well with Bigtable change streams. Real-time data about the packages comes from the shipment centers and is written to Bigtable. The change stream is captured by our alerting system in Dataflow, which uses APIs like SendGrid for email and Twilio for text notifications.
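The alerting logic can be sketched as a small dispatcher. This is an illustration under stated assumptions: `PackageUpdate`, `Subscription`, and the `email` and `sms` channel names are hypothetical, and the sender callables stand in for real SendGrid or Twilio client calls, which are not shown here.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable


@dataclass(frozen=True)
class PackageUpdate:
    """Hypothetical event derived from a change stream record."""
    package_id: str
    location: str


@dataclass(frozen=True)
class Subscription:
    channel: str  # assumed values: "email" or "sms"
    address: str  # email address or phone number


# A sender takes (address, message) and delivers it through one channel.
Sender = Callable[[str, str], None]


def notify_subscribers(
    update: PackageUpdate,
    subscriptions: Iterable[Subscription],
    senders: Dict[str, Sender],
) -> int:
    """Send one message per subscription and return how many were sent."""
    message = f"Package {update.package_id} arrived at {update.location}."
    sent = 0
    for sub in subscriptions:
        sender = senders.get(sub.channel)
        if sender is None:
            # Unknown channel: skip it rather than fail the whole batch.
            continue
        sender(sub.address, message)
        sent += 1
    return sent
```

Keeping the channel-to-sender mapping in a dictionary means that adding push notifications or pop-ups later is a matter of registering one more sender.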

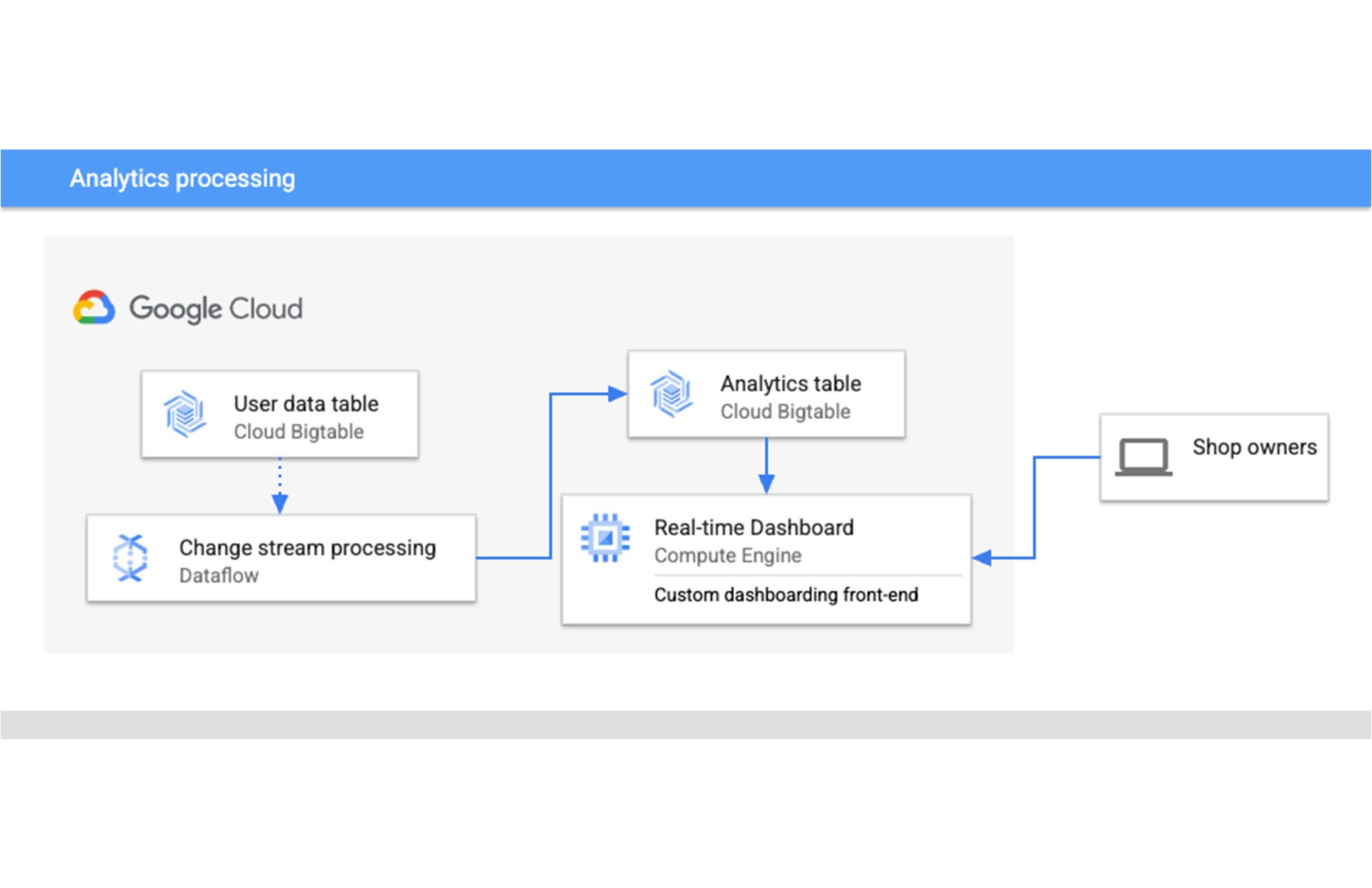

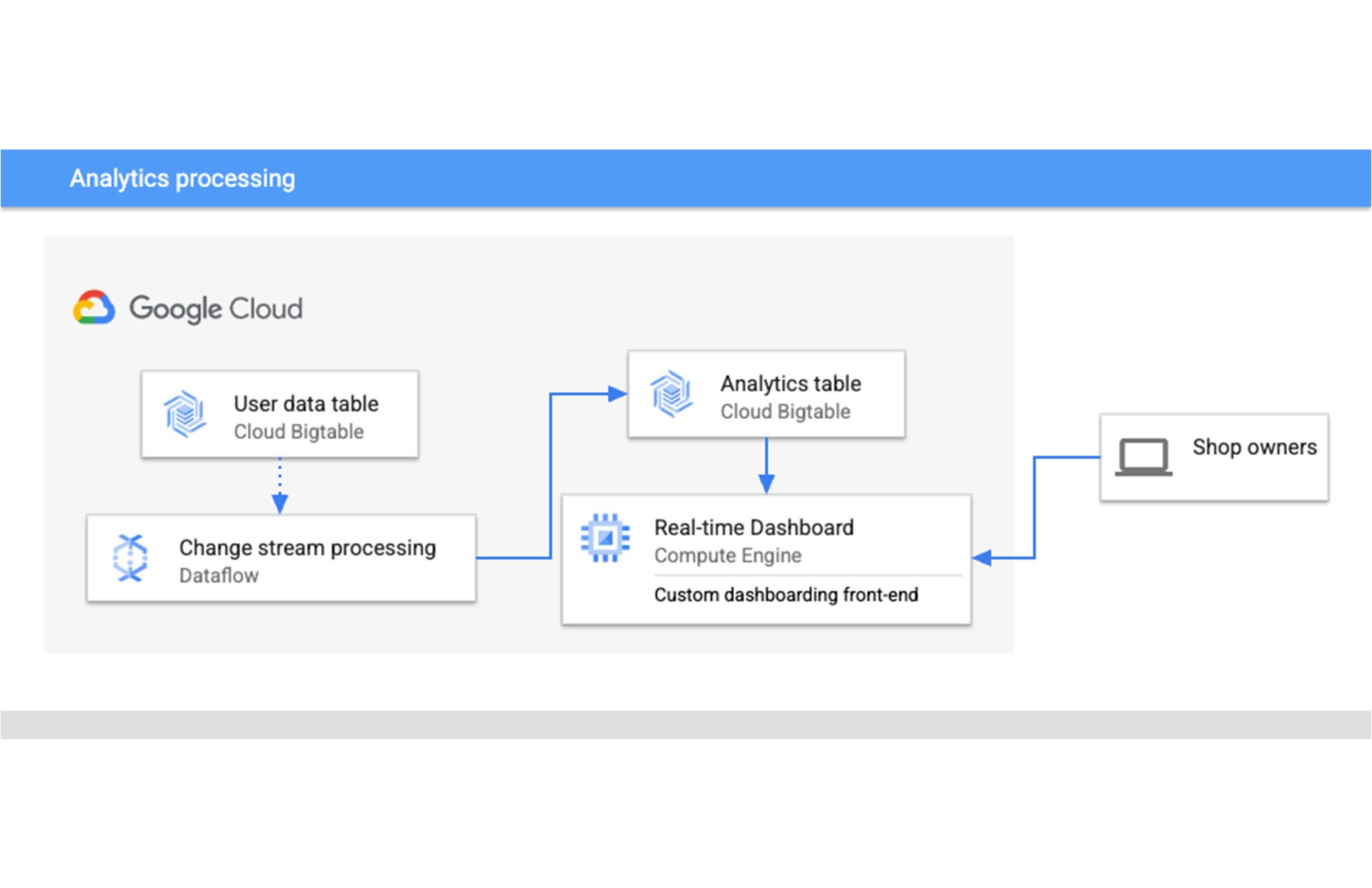

Real-time analytics

For any application using Bigtable, you will likely have heaps of data. Change streams can help you unlock real-time analytics use cases by allowing you to update metrics in small increments as the data arrives, instead of in large, infrequent batch jobs. You could create a windowing scheme with regular intervals, run aggregation queries on the data in each window, and then write the results to another table for analytics and dashboarding.

This architecture shows a company that offers a SaaS platform for online retail and wants to show its customers performance metrics for their online storefronts, such as the number of visitors, conversion rates, abandoned carts, and most viewed items. The company writes that data to Bigtable, and every five minutes it aggregates the data by the dimensions its users would like to slice and dice by, then writes the results to an analytics table. It can build real-time dashboards with libraries like D3.js on top of the analytics table and provide better insights to its users.
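The five-minute windowing described above can be illustrated with a short sketch. In a Dataflow pipeline you would normally rely on Apache Beam's built-in windowing. The plain-Python version below only shows the idea, and the event tuple of `(timestamp_seconds, store_id, event_type)` is an assumed shape.

```python
from collections import Counter
from typing import Dict, Iterable, Tuple

WINDOW_SECONDS = 5 * 60  # five-minute fixed windows, as in the example above

Event = Tuple[int, str, str]       # (timestamp_seconds, store_id, event_type)
WindowKey = Tuple[int, str, str]   # (window_start_seconds, store_id, event_type)


def window_start(timestamp_seconds: int) -> int:
    """Round a timestamp down to the start of its fixed window."""
    return timestamp_seconds - timestamp_seconds % WINDOW_SECONDS


def aggregate(events: Iterable[Event]) -> Dict[WindowKey, int]:
    """Count events per window, store, and event type."""
    counts: Counter = Counter()
    for timestamp_seconds, store_id, event_type in events:
        counts[(window_start(timestamp_seconds), store_id, event_type)] += 1
    return dict(counts)
```

Each key in the result could map to one row in the analytics table, for example by joining the store ID, the window start, and the event type into a row key.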

You can use our tutorial on collecting song analytics data to get started.

Next steps

Now you are familiar with new ways to use Bigtable in event-driven architectures and to manage your data for analytics with change streams. Here are a few next steps for you to learn more and get started with this new feature: