How The Home Depot is teaming up with Google Cloud to delight customers with personalized shopping experiences

Vivek Yadav

Principal Architect, Google Cloud

Jason Rice

Sr. Director Marketing Technology, The Home Depot

The Home Depot, Inc., is the world’s largest home improvement retailer with annual revenue of over $151B. Delighting our customers—whether do-it-yourselfers or professionals—by providing the home improvement products, services, and equipment rentals they need, when they need them, is key to our success.

We operate more than 2,300 stores throughout the United States, Canada, and Mexico. We also have a substantial online presence through HomeDepot.com, which is one of the largest e-commerce platforms in the world in terms of revenue. The site has experienced significant growth in both traffic and revenue since the onset of COVID-19.

Because many of our customers shop at both our brick-and-mortar stores and online, we’ve embarked on a multi-year strategy to offer a shopping experience that seamlessly bridges the physical and digital worlds. To maximize value for the increasing number of online shoppers, we’ve shifted our focus from event marketing to personalized marketing, as we found it to be far more effective in improving the customer experience throughout the sales journey. This led to changing our approach to marketing content, email communications, product recommendations, and the overall website experience.

Challenge: launching a modern marketing strategy using legacy IT

For personalized marketing to be successful, we had to improve our ability to recognize a customer at the point of transaction so we could—among other things—suspend irrelevant and unnecessary advertising. Most of us have experienced the annoyance of receiving ads for something we’ve already purchased, which can degrade our perception of the brand itself. While many online retailers can identify 100% of their customer transactions due to the rich information captured during checkout, most of our transactions flow through physical stores, making this a more difficult problem to solve.

Our legacy IT system, which ran in an on-premises data center and relied on an open-source software framework for processing large datasets, also challenged us because maintaining both the hardware and the software stack required significant resources. When that system was built, personalized marketing was not a priority, so it took several days to process customer transaction data and several weeks to roll out any system changes. Further, managing and maintaining the large cluster base of the software stack presented its own set of issues in terms of quality control and reliability, as did keeping up with open-source community updates for each data processing layer.

Adopting a hybrid approach

As we worked through the challenges of our legacy system, we started thinking about what we wanted our future system to look like. Like many companies, we began with a “build vs. buy” analysis. We looked at several products on the market and determined that while each had its strengths, none offered the complete set of features we needed.

Our project team didn’t think it made sense to build a solution from scratch, nor did we have access to the third-party data we needed. After much consideration, we decided to adopt a solution that combined a complete rewrite of the legacy system with the support of a partner to help with the customer transaction matching process.

Building the foundation on Google Cloud

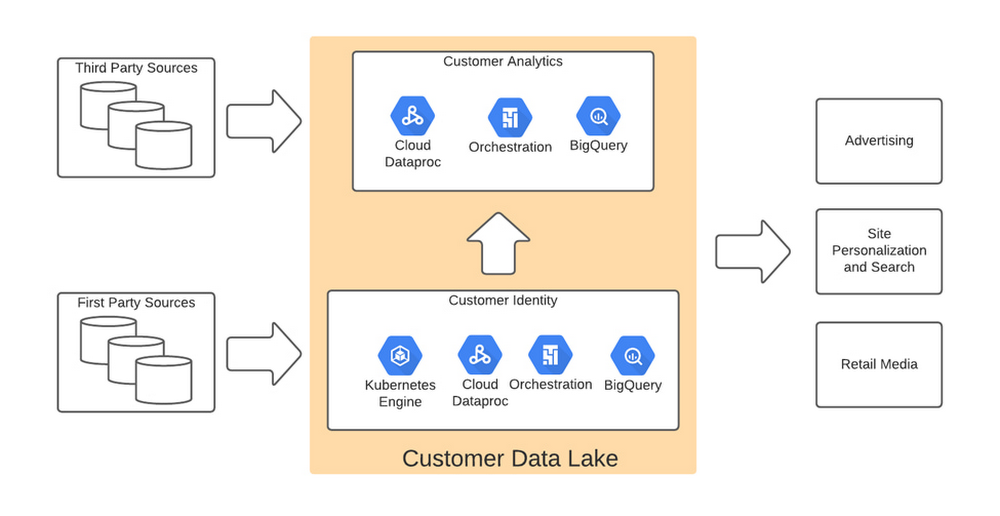

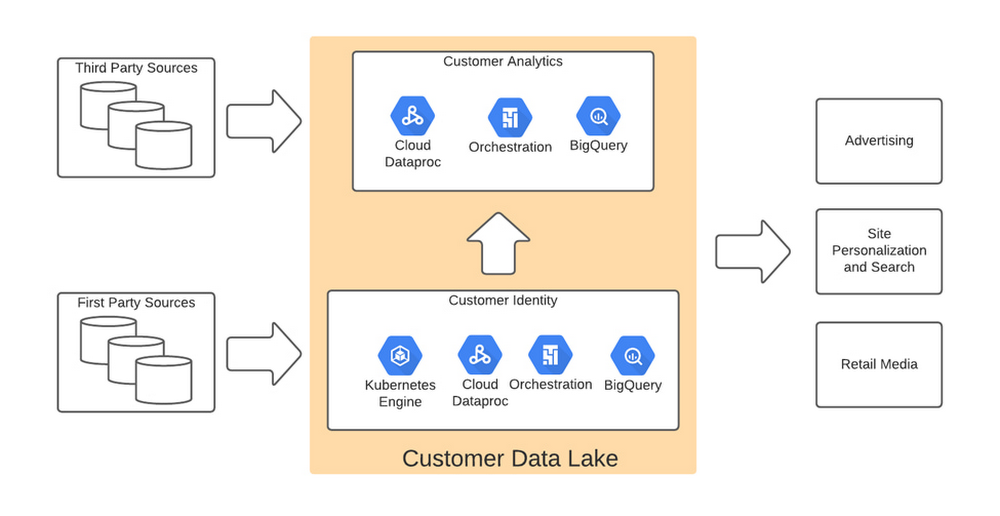

We chose Google Cloud’s data platform, specifically BigQuery, Dataflow, Dataproc, Cloud Storage, and Cloud Composer. Google Cloud empowered us to break down data silos and unify each stage of the data lifecycle, from ingestion, storage, and processing to analysis and insights. Google Cloud offered best-in-class integration with open-source standards and provided the portability and extensibility we needed to make our hybrid solution work well. The open standards of the BigQuery Storage API allowed us to use BigQuery’s fast storage layer from other compute platforms, such as Dataproc.

We used BigQuery combined with Dataflow to integrate our first- and third-party data into an enterprise data and analytics data lake architecture. The system then combined previously siloed data and used BigQuery ML to create complete customer profiles spanning the entire shopping experience, both in-store and online.

Understanding the customer journey with the help of Dataflow and BigQuery

The process of developing customer profiles involves aggregating a number of first- and third-party data sources to create a 360-degree view of the customer based on both their history and intent. It starts with creating a single historical customer profile through data aggregation, deduplication, and enrichment. We used several vendors to help with customer resolution and NCOA (National Change of Address) updates, which allows the profile to be householded and transactions to be properly reconciled to both the individual and the household. This output is then matched to different customer signals to help create an understanding of where the customer is in their journey and how we can help.
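The deduplication, householding, and reconciliation steps can be illustrated with a minimal Python sketch. This is not the production implementation: the record fields, the email-based matching, and the address normalization are all simplifying assumptions made for illustration, and real customer resolution relies on vendor data and far richer matching rules.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class CustomerRecord:
    # Hypothetical fields; real profiles carry many more attributes.
    customer_id: str
    email: str
    address: str


def household_key(address):
    """Normalize an address so trivially different spellings collide."""
    cleaned = address.lower().replace(".", "").replace(",", " ")
    return " ".join(cleaned.split())


def deduplicate(records):
    """Keep the first record seen for each normalized email address."""
    seen = {}
    for rec in records:
        seen.setdefault(rec.email.strip().lower(), rec)
    return list(seen.values())


def build_households(records):
    """Group deduplicated customers by normalized address."""
    households = defaultdict(list)
    for rec in deduplicate(records):
        households[household_key(rec.address)].append(rec.customer_id)
    return dict(households)


def reconcile(transactions, records):
    """Attach a customer and a household to each (txn_id, email) pair."""
    by_email = {r.email.strip().lower(): r for r in deduplicate(records)}
    matched = []
    for txn_id, email in transactions:
        rec = by_email.get(email.strip().lower()) if email else None
        matched.append({
            "txn_id": txn_id,
            "customer_id": rec.customer_id if rec else None,
            "household": household_key(rec.address) if rec else None,
        })
    return matched


def match_rate(matched):
    """Fraction of transactions tied to a household."""
    if not matched:
        return 0.0
    return sum(1 for m in matched if m["household"]) / len(matched)


# Toy data, invented for this example only.
records = [
    CustomerRecord("c1", "Ann@example.com", "12 Oak St., Atlanta"),
    CustomerRecord("c1-dup", "ann@example.com ", "12 Oak St Atlanta"),
    CustomerRecord("c2", "bob@example.com", "12 oak st, atlanta"),
    CustomerRecord("c3", "cy@example.com", "9 Elm Ave, Austin"),
]
transactions = [("t1", "ann@example.com"), ("t2", "BOB@example.com"),
                ("t3", None), ("t4", "cy@example.com")]

households = build_households(records)
matched = reconcile(transactions, records)
```

In this toy data, the duplicate record for the first customer is dropped, two customers share one household, and the transaction with no identifying signal stays unmatched. That last case is why in-store transactions are harder to recognize than online ones.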

The initial implementation used Dataflow, Google’s streaming analytics solution, to load data from Cloud Storage into BigQuery and perform all necessary transformations. We later converted the Dataflow process into BQML (BigQuery Machine Learning), which significantly reduced costs and increased visibility into data jobs. We used Cloud Composer, a fully managed workflow orchestration service, to orchestrate all data operations, and Dataproc and Google Kubernetes Engine to enable special-case data integration so we could quickly pivot and test new campaigns. The architecture diagram shows the overall structure of our solution.
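Orchestration here means running each data operation only after the operations it depends on have finished. Cloud Composer is built on Apache Airflow, but the sketch below deliberately uses only Python's standard library (`graphlib`) to show the dependency-ordering idea. The task names and the dependency graph are hypothetical and are not taken from the actual pipeline.

```python
from graphlib import TopologicalSorter

# Hypothetical task names; each task maps to the set of tasks it depends on.
PIPELINE = {
    "load_gcs_to_bigquery": set(),
    "transform_in_bigquery": {"load_gcs_to_bigquery"},
    "special_case_integration": {"load_gcs_to_bigquery"},
    "publish_customer_profiles": {
        "transform_in_bigquery",
        "special_case_integration",
    },
}


def run_pipeline(graph, runners):
    """Run every task after all of its dependencies; return the order used."""
    order = []
    for task in TopologicalSorter(graph).static_order():
        runners[task]()  # in a real system this would launch a job
        order.append(task)
    return order


# Stand-in runners that only record that the task was invoked.
log = []
runners = {name: (lambda n=name: log.append(n)) for name in PIPELINE}
order = run_pipeline(PIPELINE, runners)
```

The load step always runs first and the publish step always runs last. The two middle steps have no dependency on each other, so a real orchestrator would be free to run them in parallel.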

Taking full advantage of cloud-native technology

In our initial migration to Google Cloud, we moved most of our legacy processes in their original form. However, we quickly learned that this approach didn’t take full advantage of the improved, cloud-native features Google Cloud offered, such as autoscaling of resources, the flexibility to decouple storage from the compute layer, and a wide variety of options for choosing the best tool for the job. We refactored our legacy distributed data processing pipelines, written as Java-based MapReduce and Pig Latin jobs, into Dataflow and BigQuery jobs. This dramatically reduced processing time and made our data pipeline code concise and efficient.

Previously, our legacy system processes ran longer than intended, and data was not used efficiently. Optimizing our code to be cloud-native and leveraging all the capabilities of Google Cloud services resulted in reduced run times. We decreased our data processing window from 3 days to 24 hours, improved resource usage by dramatically reducing the amount of compute we used to process this data, and built a more streamlined system. This in turn reduced cloud costs and provided better insight. For example, Dataflow offers powerful native features to monitor data pipelines, enabling us to be more agile.

Leveraging the flexibility and speed of the cloud to improve outcomes

Today, using a continuous integration/continuous delivery (CI/CD) approach, we can deploy multiple system changes each week to further improve our ability to recognize in-store transactions. Leveraging the combined capabilities of various Google Cloud services (BigQuery, Dataflow, Cloud Composer, Dataproc, and Cloud Storage), we drastically increased our ability to recognize transactions and can now connect over 75% of all transactions to an existing household. Further, the flexible Google Cloud environment coupled with our cloud-native application makes our team more nimble and better able to respond to emerging problems or new opportunities.

Increased speed has led to better outcomes in our ability to match transactions across all sales channels to a customer and thereby improve their experience. Before moving to Google Cloud, it took 48 to 72 hours to match customers to their transactions, but now we can do it in less than 24 hours.

Making marketing more personal—and more efficient

The ability to quickly match customers to transactions has huge implications for our downstream marketing efforts in terms of both cost and effectiveness. By knowing what a customer has purchased, we can turn off ads for products they’ve already bought or offer ads for things that support what they’ve bought recently. This helps us use our marketing dollars much more efficiently and offer an improved customer experience.
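The suppression logic described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the actual marketing system: the `COMPLEMENTS` catalog, the product names, and the `plan_ads` function are all hypothetical.

```python
# Hypothetical catalog: product -> products that complement it.
COMPLEMENTS = {
    "interior paint": ["paint roller", "painter's tape"],
    "cordless drill": ["drill bit set"],
}


def plan_ads(candidate_ads, purchased):
    """Drop ads for items already bought, then add complements not yet owned."""
    owned = set(purchased)
    # Suppress ads for products the customer has already purchased.
    ads = [product for product in candidate_ads if product not in owned]
    # Offer products that support recent purchases, avoiding duplicates.
    for item in purchased:
        for extra in COMPLEMENTS.get(item, []):
            if extra not in owned and extra not in ads:
                ads.append(extra)
    return ads


ads = plan_ads(
    candidate_ads=["cordless drill", "interior paint", "ladder"],
    purchased=["cordless drill"],
)
```

With this toy input, the drill ad is removed because the drill was already bought, and the drill bit set is added as a supporting product. The faster a transaction is matched to a customer, the sooner this kind of filter can take effect.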

Additionally, we can now apply the analytical models developed using BQML and Vertex AI to sort customers into audiences. This allows us to more quickly identify a customer’s current project, such as remodeling a kitchen or finishing a basement, and then personalize their journey by offering them information on products and services that matter most at a given point through our various marketing channels. This provides customers with a more relevant and customized shopping journey that mirrors their individual needs.

Protecting a customer’s privacy

With this improved ability to better understand our customers, we remain committed to respecting their privacy. Google’s cloud solutions provide us with additional capabilities to manage and protect our customers’ data. This way we can provide our customers the more personalized shopping experience they desire while also honoring our privacy commitments.

With flexible Google Cloud technology in place, The Home Depot is well positioned to compete in an industry where customers have many choices. By putting our customers’ needs first, we can stay top of mind whenever the next project comes up.