How to do multi-cluster Kubernetes in the real world—one GKE shop’s approach

Mengliao Wang

Senior Software Developer, Team Lead, Geotab

Editor’s note: Today, we hear from Mengliao Wang, Senior Software Developer, Team Lead at Geotab, a leading provider of fleet management hardware and software solutions. Read on to hear how the company is expanding on their adoption of Google Cloud to deliver new services for their customers by leveraging Google Kubernetes Engine (GKE) multi-cluster features.

Geotab’s customers ask a lot of our platform: They use it to gain insights from vast amounts of telemetry data collected from their fleet vehicles. They rely on it to adhere to strict data privacy requirements. And, because our customers are located all over the world, they need the platform to address their data residency and other jurisdictional processing requirements, which require compute and storage to live within a specific geographic region.

Meanwhile, as a managed service provider, we need a cost-efficient business model — that was certainly a driving factor for adopting containers and GKE. As we started architecting the deployment of multiple clusters to support our customers’ data residency requirements, we determined we also needed to explore approaches to reduce the total operational maintenance and costs of our multi-cluster environment.

In order to meet customers where they are, we moved forward with running GKE clusters in multiple Google Cloud Platform regions. At the same time, we recently began using GKE multi-cluster services, which provides our customers with the security and low latency they need, while giving us cost savings and an easy-to-maintain solution.

Read on to learn more about Geotab, our journey to Google Cloud and GKE, and, more recently, how we deployed multi-cluster Kubernetes using GKE multi-cluster services.

The rise of connected fleet vehicles

“By 2024, 82% of all manufactured vehicles will be equipped with embedded telematics.”—Berg Insight

As a global leader in IoT and connected transportation, Geotab is advancing security, connecting commercial vehicles to the internet, and providing web-based analytics to help customers better manage their fleet vehicles. We connect over 2.5 million vehicles and process billions of data points per day, using data analytics and machine learning to support our customers in several ways. We help them improve productivity, optimize fleets by reducing fuel consumption, enhance driver safety, stay compliant with regulatory changes, and meet sustainability goals. Geotab partners with Original Equipment Manufacturers (OEMs) to help expand customers’ fleet management capabilities through access to the Geotab platform.

Our journey to Google Cloud and GKE

We originally chose Google Cloud as our primary cloud provider as we found it to be the most stable of the cloud providers we tried, with the least amount of unscheduled downtime. End-to-end reliability is an important consideration for our customers’ safety and their confidence in Geotab’s driver-assistance features. Since getting started on our public cloud journey, we’ve leveraged Google Cloud to modernize different aspects of the Geotab platform.

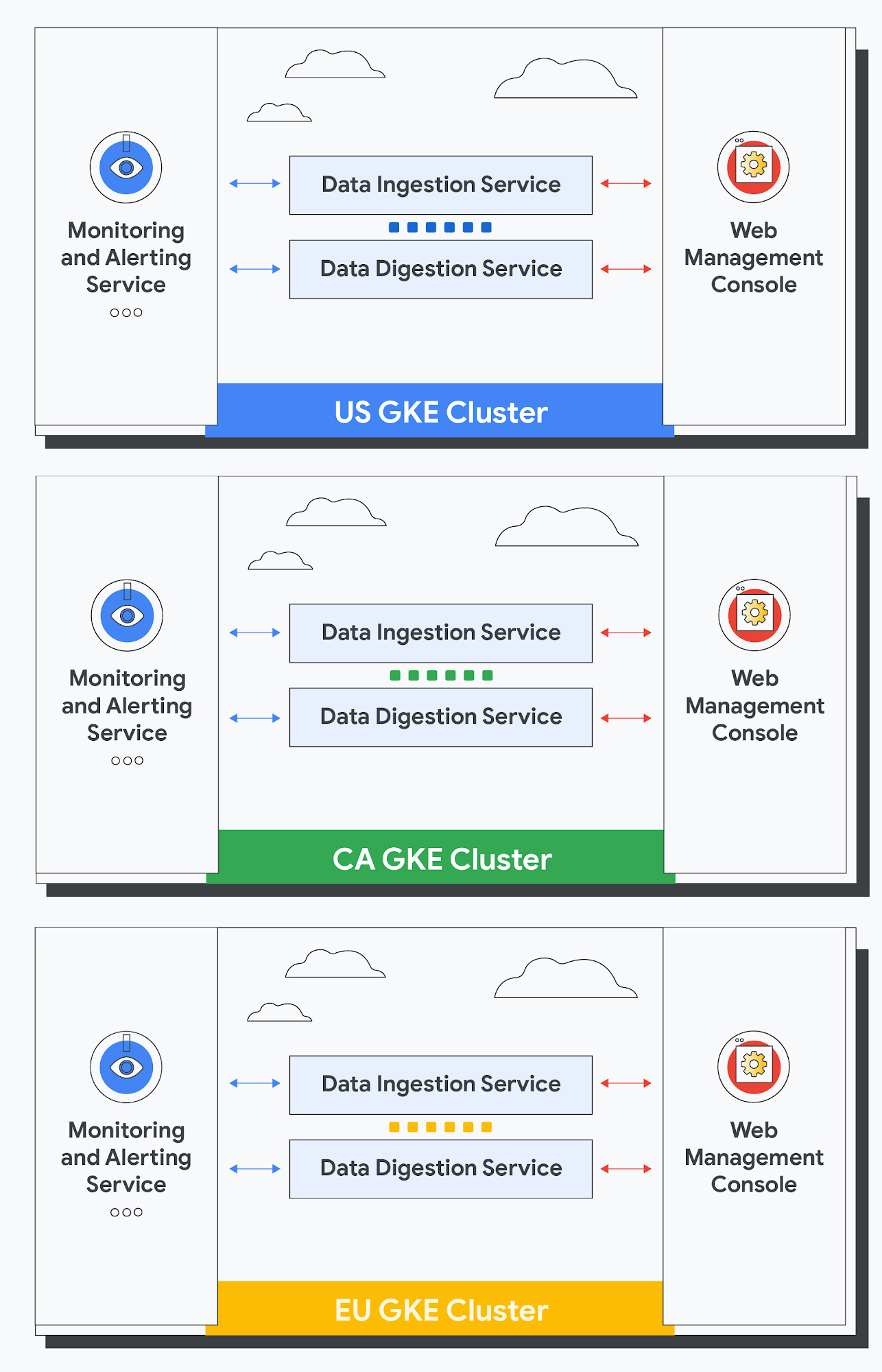

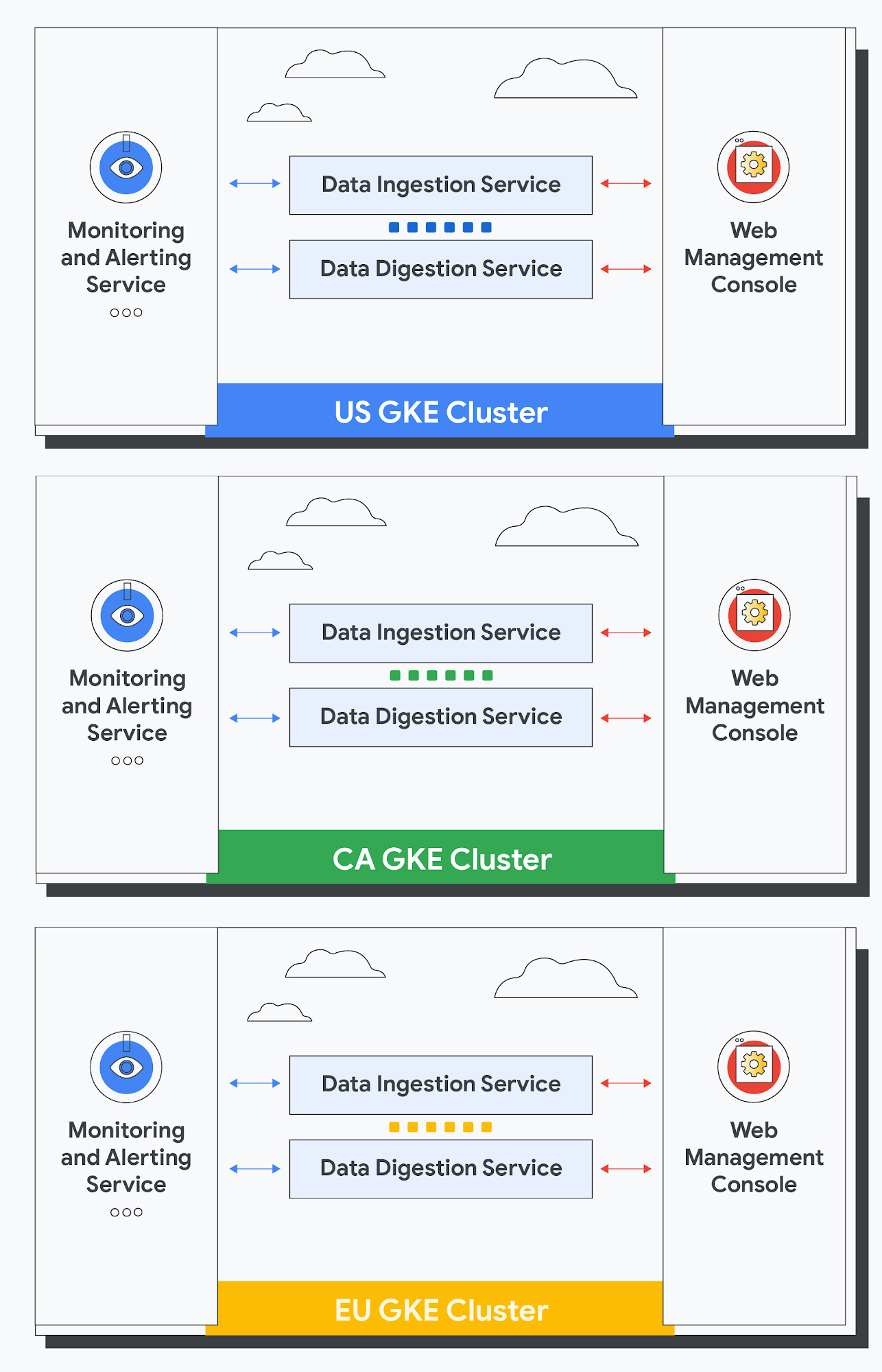

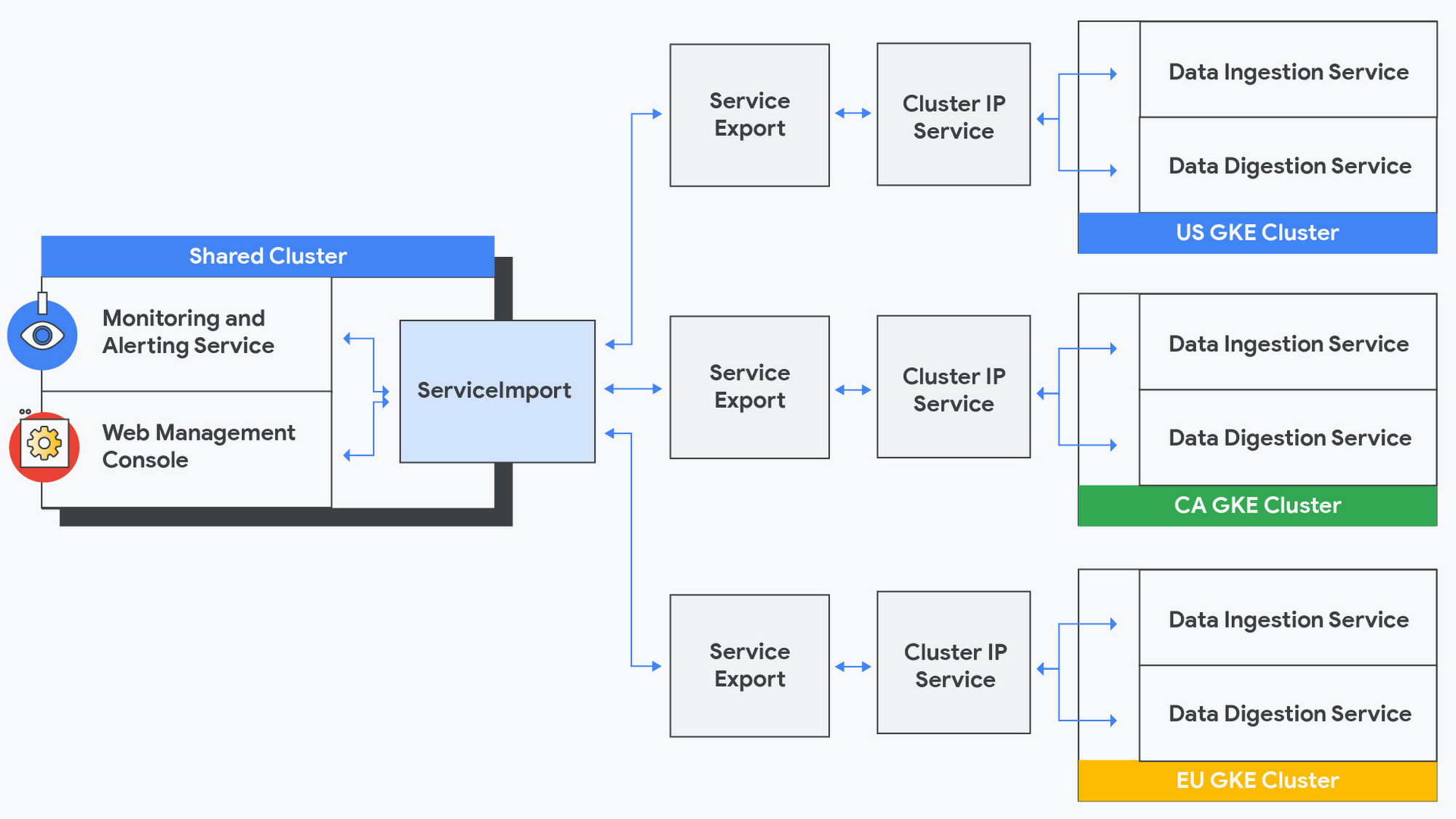

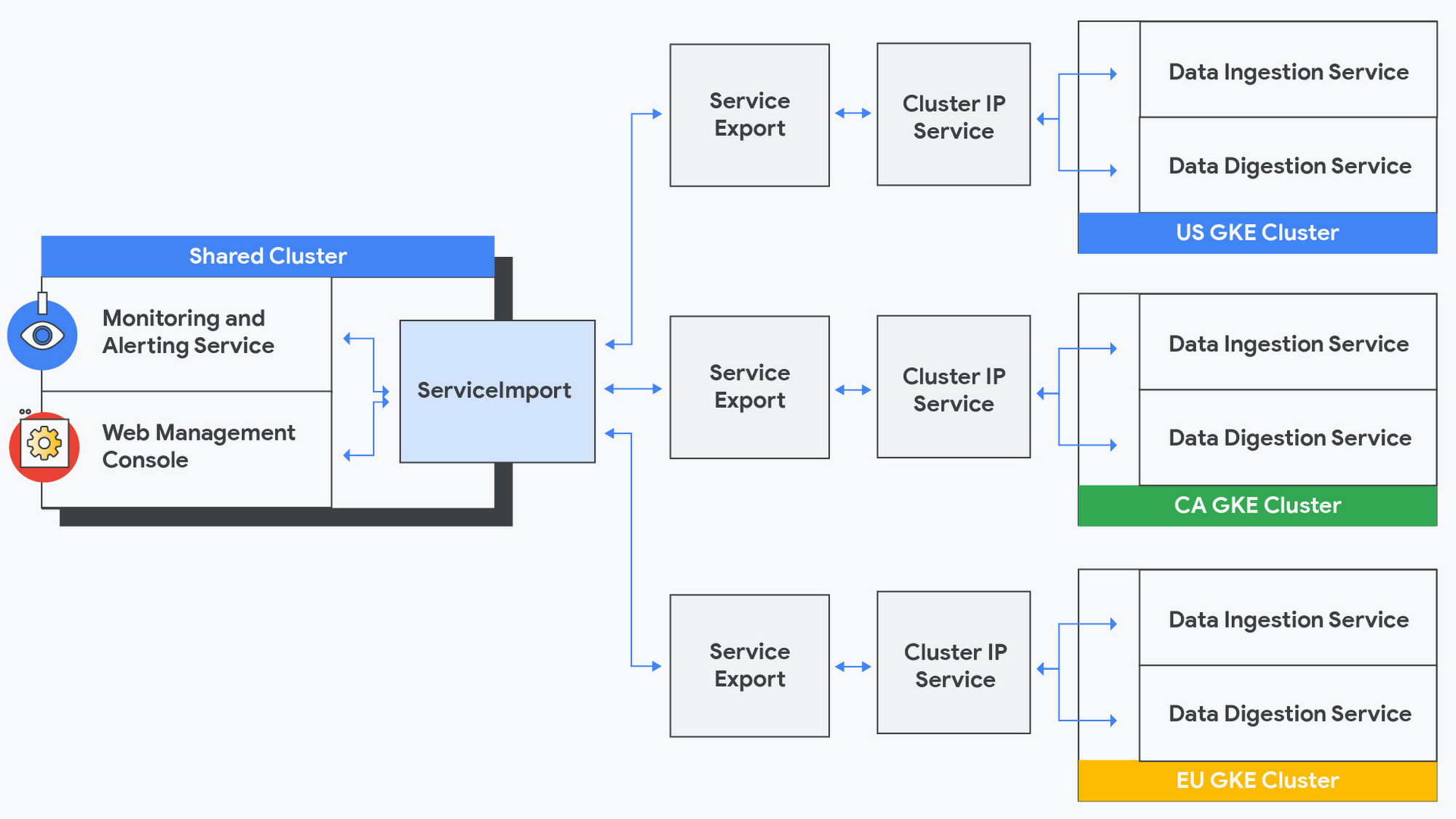

First, we embarked on a multi-milestone and multi-year initiative to modernize the Geotab Data Platform, adopting a container-based architecture using open source technologies; we continue to leverage Google Cloud services to launch innovative solutions that combine analytics and access to massive data volumes for better transportation planning decisions. Today, Geotab Data Platform is built entirely on GKE, with multiple services such as data ingestion, data digestion, data processing, monitoring and alerting, a management console, and several applications. We are now leveraging this modern platform to introduce new Geotab services to our customers.

Exploring multi-cluster Kubernetes

As discussed above, we recently began deploying our GKE clusters into multiple regions, to meet our customers’ performance and data residency requirements. However, not every service that makes up the Geotab platform is created equal…

For example, data digestion and data ingestion services are at the core of the data platform. Data digestion services are the Application Programming Interfaces (APIs), machine learning models, and business intelligence (BI) tools that consume data from the data environment for various data analysis purposes and are served directly to customers. Data ingestion services ingest billions of telematics data records per day from Geotab GO devices and are responsible for persisting them into our data environment.

But when looking to optimize operating costs, we identified several services outside of the data platform that did not process sensitive customer information, such as our monitoring and alerting services. Duplicating these services in multiple regions would raise infrastructure costs and add maintenance complexity and overhead.

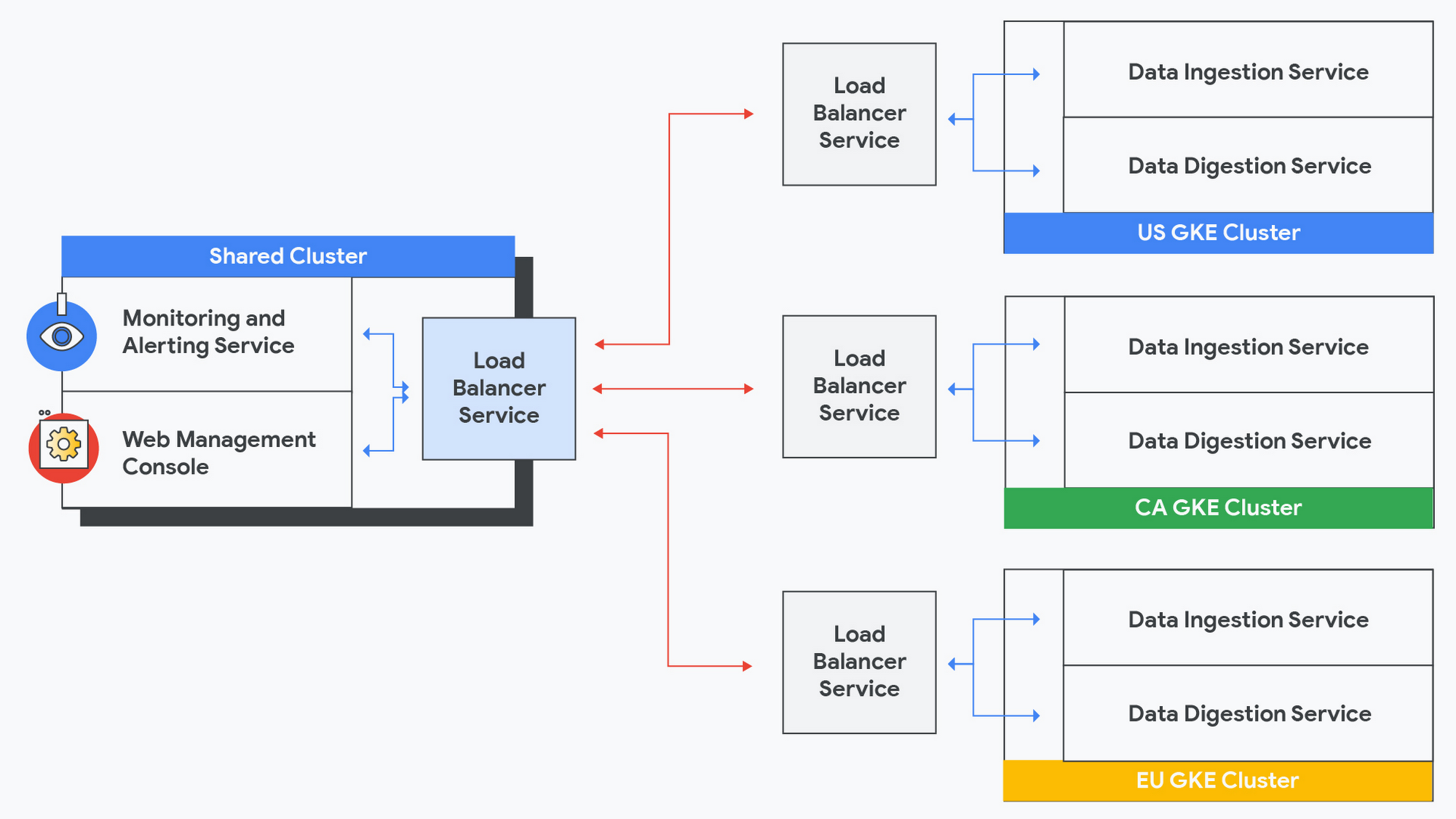

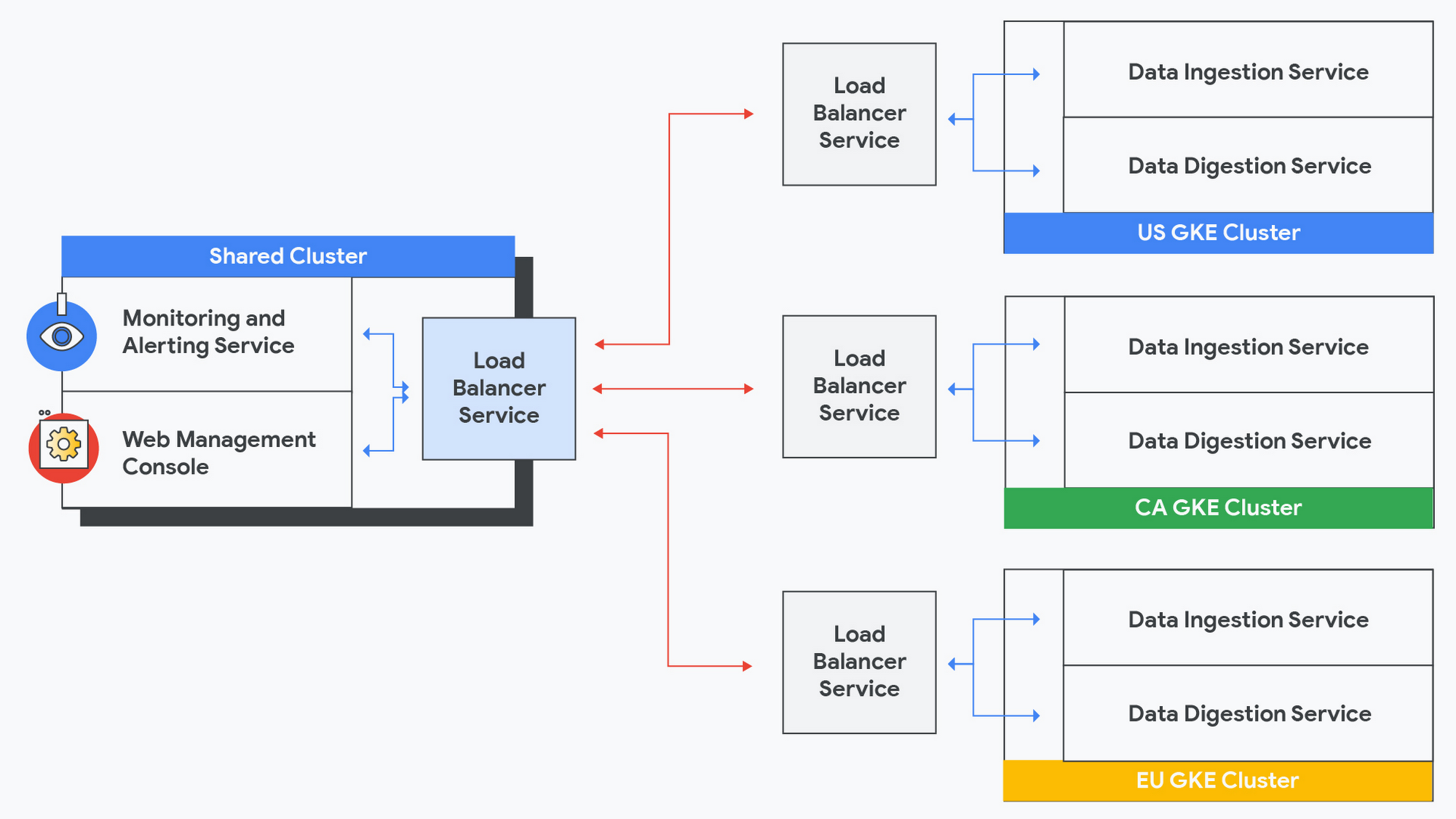

We decided to deploy the services that do not process any customer data as shared services in a dedicated cluster. Not only does this lower the cost for resources, but it also makes it easier to manage from an operational perspective. However, this approach introduced two new challenges:

Services such as data ingestion and data digestion that run in each jurisdiction needed to expose their metrics outside of their cluster to make them available to the shared services (monitoring and alerting / management console) running on the shared cluster, resulting in some security concerns.

Since metrics would not be passing within a cluster subnetwork, they would travel via the public network, resulting in higher latency as well as additional security concerns.

This is where GKE Multi-cluster Services (MCS) came in, solving these concerns without introducing any new architectural components for us to configure and maintain. MCS is a cross-cluster service discovery and invocation mechanism built into GKE that extends the capabilities of the standard Kubernetes Service object. Services that are configured to be exported with MCS are discoverable and accessible across all clusters in a fleet via a virtual IP address, matching the behavior of a ClusterIP Service within a single cluster. With MCS, we do not need to expose public endpoints, and all traffic is routed within the Google network.
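To make the export mechanism a little more concrete, here is a minimal Python sketch of what exporting a service with MCS can look like. It is an illustration only, not Geotab's actual configuration: the helper functions and the `ingestion-metrics` and `data-platform` names are hypothetical. It assumes the `ServiceExport` resource from the `net.gke.io/v1` API group and the `clusterset.local` DNS convention described in the GKE multi-cluster services documentation.

```python
import json


def service_export_manifest(name: str, namespace: str) -> dict:
    """Build a ServiceExport manifest (hypothetical helper).

    The ServiceExport must share its name and namespace with the
    Kubernetes Service that should become visible across the fleet.
    """
    return {
        "apiVersion": "net.gke.io/v1",
        "kind": "ServiceExport",
        "metadata": {"name": name, "namespace": namespace},
    }


def clusterset_dns_name(name: str, namespace: str) -> str:
    """Return the DNS name other fleet clusters use to reach the export."""
    return f"{name}.{namespace}.svc.clusterset.local"


if __name__ == "__main__":
    # Hypothetical names for a metrics endpoint in a regional cluster.
    manifest = service_export_manifest("ingestion-metrics", "data-platform")
    # The JSON output can be saved to a file and applied with kubectl.
    print(json.dumps(manifest, indent=2))
    # A service in the shared cluster would call this name instead of a
    # public endpoint.
    print(clusterset_dns_name("ingestion-metrics", "data-platform"))
```

In a setup like this, a monitoring service in the shared cluster would scrape metrics through the `clusterset.local` name, so nothing needs to be exposed publicly.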

With MCS configured, we get the best of both worlds: services in the shared cluster and in the regionally hosted clusters communicate as if they were all hosted in one cluster! Problem solved!

Reflecting on the journey

Our modernization journey on Google Cloud continues to pay dividends. During the first phase of our journey, we reaped the benefits of being able to scale up our systems with less downtime. With GKE features like MCS, we are able to reduce the time required to roll out new features to our global customers while meeting our business objective of managing operating costs. We look forward to continuing on our multi-cluster journey with Google Cloud and GKE.

Are you interested in learning more about how GKE multi-cluster services can help with your Kubernetes multi-cluster challenges? Check out this guide to configuring multi-cluster services, or reach out to a Google Cloud expert — we’re eager to help!