Discover and invoke services across clusters with GKE multi-cluster services

Emeka Nwafor

Product Manager, GKE

Jeremy Olmsted-Thompson

Staff Software Engineer, GKE

Do you have a Kubernetes application that needs to span multiple clusters? Whether for privacy, scalability, availability, cost management or data sovereignty reasons, it can be hard for platform teams to architect, implement, operate, and maintain applications across cluster boundaries, as Kubernetes’ Service primitive only enables service discovery within the confines of a single Kubernetes cluster.

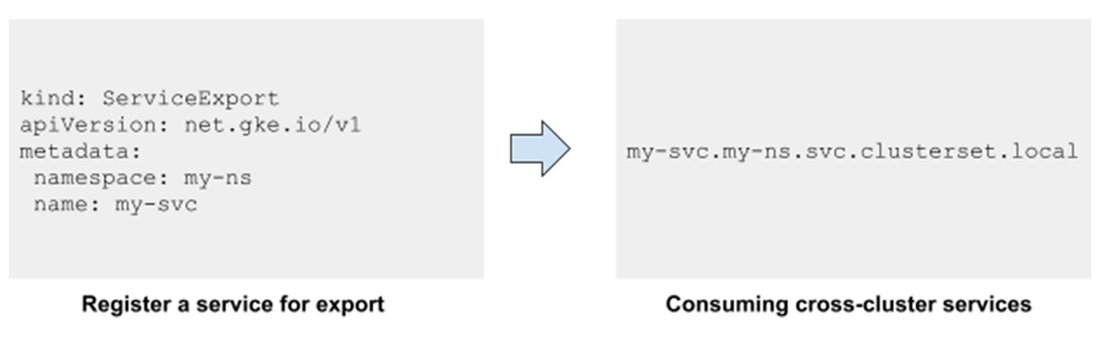

Today, we are announcing the general availability of multi-cluster services (MCS), a Kubernetes-native cross-cluster service discovery and invocation mechanism for Google Kubernetes Engine (GKE), the most scalable managed Kubernetes offering. MCS extends the reach of the Kubernetes Service primitive beyond the cluster boundary, so you can easily build Kubernetes applications that span multiple clusters.

This is especially important for cloud-native applications, which are typically built using containerized microservices. The one constant with microservices is change—microservices are constantly being updated, scaled up, scaled down, and redeployed throughout the lifecycle of an application, and the ability for microservices to discover one another is critical. GKE’s new multi-cluster services capability makes managing cross-cluster microservices-based apps simple.

How does GKE MCS work?

GKE MCS builds on the Service primitive that developers and operators already know, so expanding into multiple clusters stays consistent and intuitive. Services enabled with this feature are discoverable and accessible across clusters through a virtual IP, matching the behavior of a ClusterIP Service within a cluster. Like your existing Services, those configured to use MCS are compatible with community-driven, open APIs, so your workloads remain portable. GKE MCS uses fleets to group clusters and is powered by the same technology as Traffic Director, Google Cloud’s fully managed, enterprise-grade platform for global application networking.
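To make the export-and-discover flow concrete, here is a minimal Python sketch. It rests on assumptions this post does not spell out: that a Service is shared by creating a `ServiceExport` object with the same name and namespace, that GKE serves this kind under `net.gke.io/v1`, and that consumers reach the exported Service at a name ending in `svc.clusterset.local`. Check all three against the current GKE documentation. The `checkout` Service and `store` namespace are hypothetical.

```python
import json

# Assumption: DNS suffix used for multi-cluster Services; verify in the GKE docs.
CLUSTERSET_DOMAIN = "svc.clusterset.local"


def service_export_manifest(name: str, namespace: str) -> dict:
    """Build a ServiceExport manifest for an existing Service.

    The export is assumed to share its name and namespace with the
    Service it makes visible to the other clusters in the fleet.
    """
    return {
        "apiVersion": "net.gke.io/v1",  # assumption: GKE's API group for MCS
        "kind": "ServiceExport",
        "metadata": {"name": name, "namespace": namespace},
    }


def clusterset_dns_name(name: str, namespace: str) -> str:
    """Return the name a client in another cluster would resolve."""
    return f"{name}.{namespace}.{CLUSTERSET_DOMAIN}"


if __name__ == "__main__":
    # Hypothetical Service "checkout" in namespace "store".
    manifest = service_export_manifest("checkout", "store")
    # JSON is valid YAML, so this output can be saved to a file and applied.
    print(json.dumps(manifest, indent=2))
    print(clusterset_dns_name("checkout", "store"))
```

Under these assumptions, the only change in client code is the hostname: a caller that used `checkout.store.svc.cluster.local` inside one cluster would use the clusterset name instead, and would not need to know which cluster serves the request.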

Common MCS use cases

Here is how Mercari, a leading e-commerce company and an early adopter of MCS, describes its plans: “We have been running all our microservices in a single multi-tenant GKE cluster. For our next-generation Kubernetes infrastructure, we are designing multi-region homogeneous and heterogeneous clusters. Seamless inter-cluster east-west communication is a prerequisite and multi-cluster Services promise to deliver. Developers will not need to think about where the service is running. We are very excited at the prospect.” - Vishal Banthia, Engineering Manager, Platform Infra, Mercari

We are excited to see how you use MCS to deploy services that span multiple clusters to deliver solutions optimized for your business needs. Here are some popular use cases we have seen our customers enable with GKE MCS.

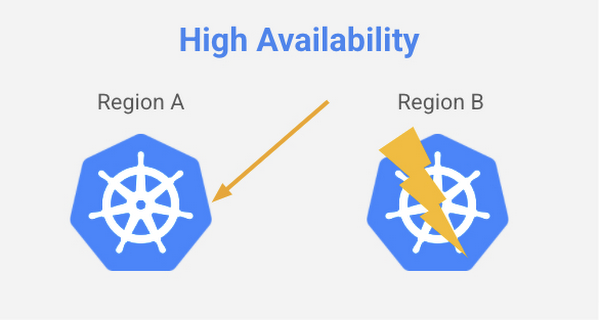

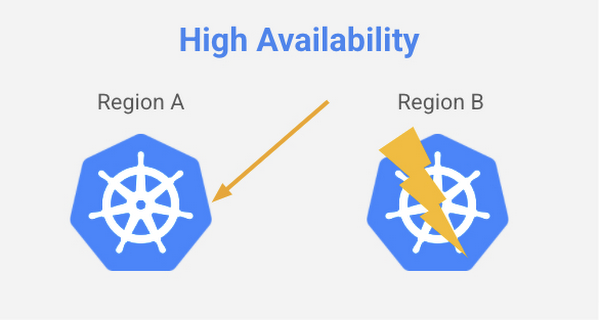

- High availability - Running the same service across clusters in multiple regions provides improved fault tolerance. In the event that a service in one cluster is unavailable, the request can fail over and be served from another cluster (or clusters). With MCS, it’s now possible to manage the communication between services across clusters, to improve the availability and resiliency of your applications to meet service level objectives.

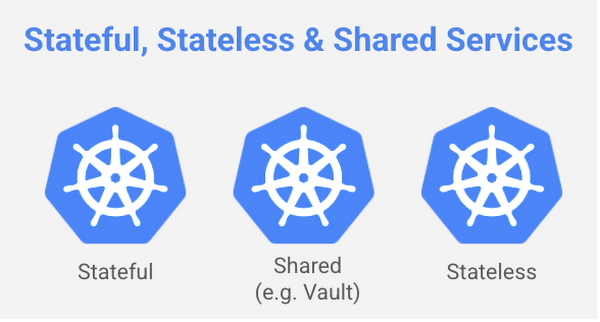

- Stateful and stateless services - Stateful and stateless services have different operational dependencies and complexities and present different operational tradeoffs. Typically, stateless services have less of a dependency on migrating storage, making it easier to scale, upgrade and migrate a workload with high availability. MCS lets you separate an application into separate clusters for stateful and stateless workloads, making them easier to manage.

- Shared services - Increasingly, customers are spinning up separate Kubernetes clusters to get higher availability, better management of stateful and stateless services, and easier compliance with data sovereignty requirements. However, many services such as logging, monitoring (Prometheus), secrets management (Vault), or DNS are often shared amongst all clusters to simplify operations and reduce costs. Instead of each cluster requiring its own local service replica, MCS makes it easy to set up common shared services in a separate cluster that is used by all functional clusters.

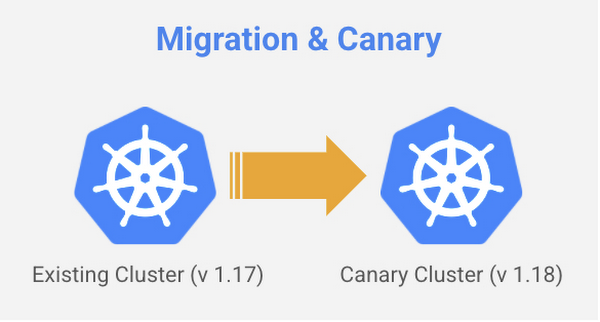

- Migration - Modernizing an existing application into a containerized microservices-based architecture often requires services to be deployed across multiple Kubernetes clusters. MCS provides a mechanism to help bridge the communication between those services, making it easier to migrate your applications—especially when the same service can be deployed in two different clusters and traffic is allowed to shift.

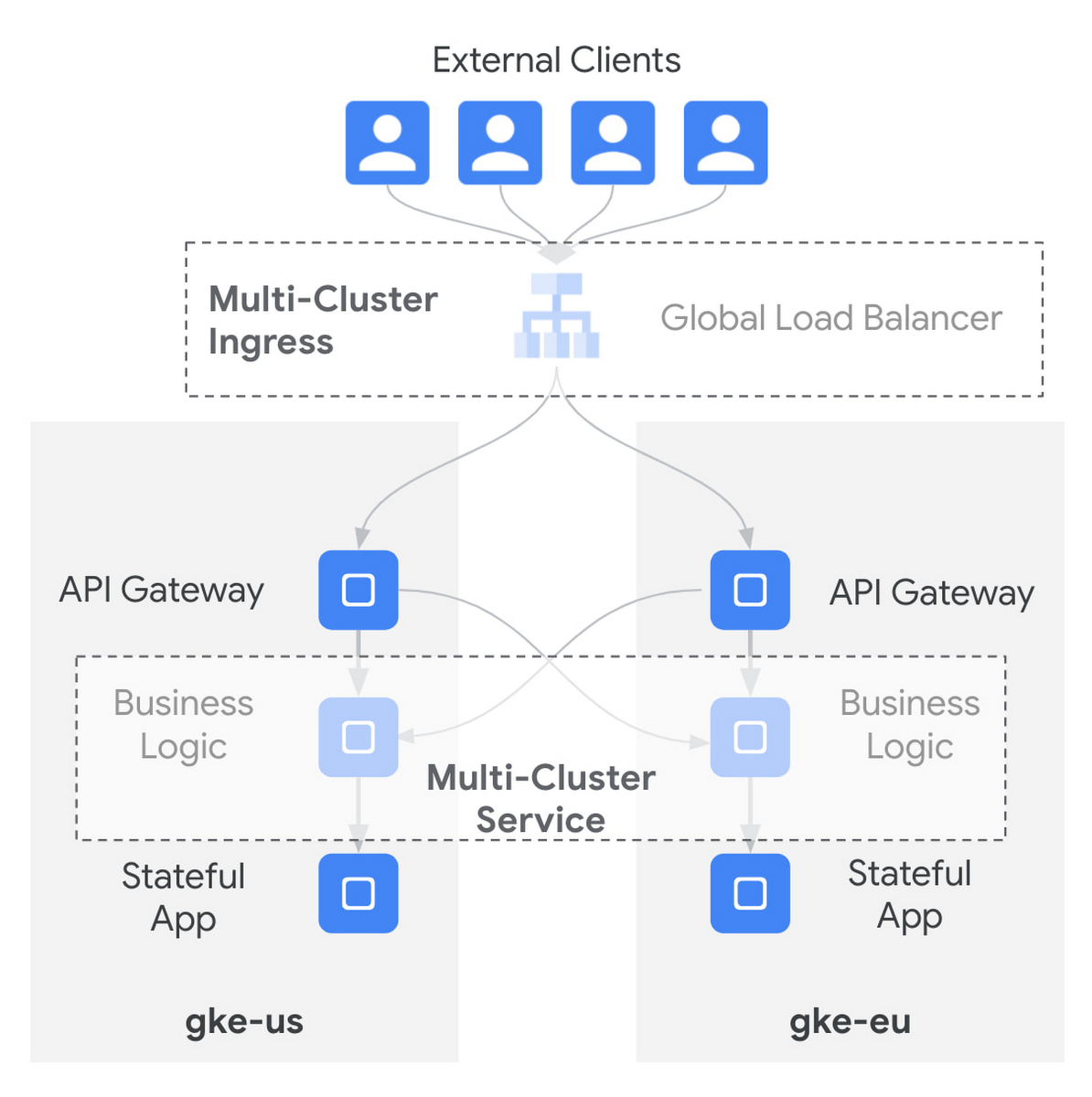

Multi-cluster Services & Multi-cluster Ingress

MCS also complements Multi-cluster Ingress: together they provide multi-cluster load balancing for both east-west and north-south traffic flows. Whether your traffic flows from the internet across clusters, within the VPC between clusters, or both, GKE provides multi-cluster networking that is deeply integrated and native to Kubernetes.

Get started with GKE multi-cluster services today

You can start using multi-cluster services in GKE today and gain the benefits of higher availability, better management of shared services, and easier compliance with data sovereignty requirements for your applications. To learn more, check out this how-to doc.

Thanks to Maulin Patel, Product Manager, for his contributions to this blog post.