Autopilot is now GKE’s default mode of operation — here’s what that means for you

Victor Szalvay

GKE Product Manager

William Denniss

Group Product Manager, Google Kubernetes Engine

Ah, Kubernetes. So powerful and yet so much effort to learn and operate. Everyone wants all the goodness but no one is crazy about all the effort. The infrastructure abstraction and scaling are great, but who wouldn’t love less manual node shaping and less of the endless bin packing for cost optimization?

We introduced Autopilot mode for Google Kubernetes Engine (GKE) in 2021 precisely to address this conundrum. Autopilot is a cluster mode of operation that puts Kubernetes in the hands of mere mortals. Whether you tried Autopilot mode back then or have been waiting to get in on the action, a lot has changed and it’s time for a fresh look. That’s because Autopilot got a big promotion — it’s now officially the default and recommended mode of GKE cluster operation in the cluster creation interface.

Why do we recommend Autopilot?

Simply put, we believe Autopilot is the best cluster mode for most Kubernetes use cases.

This blog post is the first in a series exploring why GKE Autopilot is the recommended mode of operation. Throughout the series, we’ll look at use cases and implementation patterns to help you get the most from Autopilot. In this first post, we cover why Autopilot is recommended from the standpoint of value to our customers.

In a nutshell, Autopilot provides improvements in the following areas:

Faster time to market

Always on reliability

Improved security posture

Lowest total cost of ownership (TCO) for Kubernetes

Let’s take a deeper look at each of these benefits.

Faster time to market

GKE Autopilot streamlines Kubernetes operations and reduces the burden on developers, resulting in faster builds and deployments. But don’t take our word for it: Forrester Research recently analyzed companies using Autopilot and concluded they had a 45% improvement in developer productivity. Teams using Autopilot were able to focus on business-value-generating activities while leaving undifferentiated Kubernetes operations toil to Google.

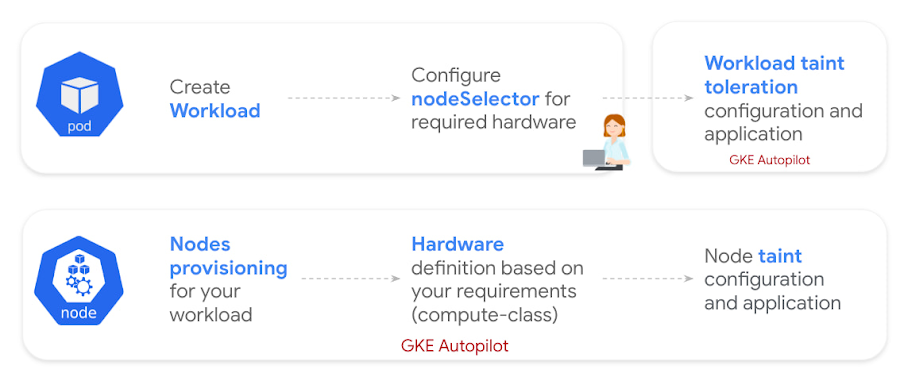

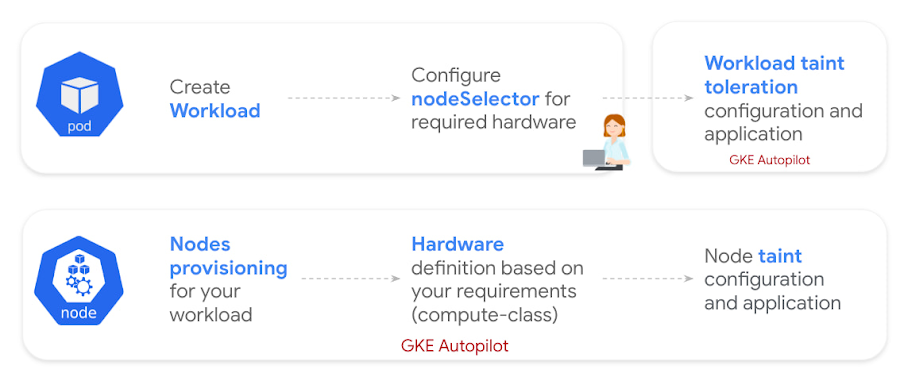

How exactly? Autopilot simplifies the consumption model with compute classes, allowing developers to provision a wide range of resources and target CPU platforms directly in the workload definition (podSpec). Platform teams can confidently leave this to developers, as Autopilot automatically spins up the needed infrastructure and configures the needed taints and tolerations.
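As a rough illustration of selecting a compute class in the workload definition, the sketch below builds a Pod manifest as a Python dictionary and prints it as JSON, which `kubectl apply -f` also accepts. The helper name, the Pod name, the image tag, and the resource values are placeholders chosen for this example. The `cloud.google.com/compute-class` node selector key and the `Balanced` value are assumed here to match the current GKE documentation, so check them against your cluster version.

```python
import json


def pod_with_compute_class(name, image, compute_class, cpu, memory):
    """Build a Pod manifest that asks for a compute class via nodeSelector.

    Hypothetical helper for illustration; not part of any Google library.
    """
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # Assumed label key for Autopilot compute classes.
            "nodeSelector": {"cloud.google.com/compute-class": compute_class},
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Resource requests drive what Autopilot provisions.
                    "resources": {"requests": {"cpu": cpu, "memory": memory}},
                }
            ],
        },
    }


# Placeholder values; substitute your own workload details.
manifest = pod_with_compute_class("web", "nginx:1.25", "Balanced", "500m", "2Gi")
print(json.dumps(manifest, indent=2))
```

The point of the sketch is that the infrastructure choice lives next to the container definition, so a developer does not need a separate node pool request to the platform team.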

No need for deep Kubernetes cluster administration expertise: We also made Autopilot easy for less experienced teams to operate. Autopilot clusters are provisioned with sensible default configurations appropriate for most production use cases. This greatly reduces the Kubernetes learning curve and allows customers who are new to Kubernetes to adopt it with confidence. Autopilot customers are able to deploy containerized applications 2.6x faster than competitive platforms.¹

Reduced overhead of Day 2 operations: We manage your Kubernetes node pools and nodes for you. Let that sink in for a minute: node provisioning, scaling, maintenance, and security are all handled for you by Google SRE. The nodes are still very much there, within your project’s purview; you just don’t need to worry about managing them.

Always on reliability

Workload SLA backed by Google SRE: On top of the awesome SLA that GKE Standard mode provides, Autopilot mode gives you a pod-level (workload-level) SLA backed by Google SRE. Google monitors the entire Autopilot cluster, including the control plane, worker nodes, and core Kubernetes system components, and ensures your pods are always scheduled.

Automatic provisioning and scaling: Autopilot automatically provisions the resources your workloads request, so you don’t have to figure out node size and shape. Autopilot then scales workloads to meet demand using the Kubernetes tools you already know and love, like the Horizontal Pod Autoscaler (HPA) and Vertical Pod Autoscaler (VPA).
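For readers who have not used the HPA before, here is a minimal sketch of a standard `autoscaling/v2` HorizontalPodAutoscaler manifest, built as a Python dictionary and printed as JSON. The helper name, the Deployment name `web`, the replica bounds, and the 70% CPU target are hypothetical values picked for illustration, not recommendations from this post.

```python
import json


def cpu_hpa(deployment, min_replicas, max_replicas, target_cpu_percent):
    """Build an HPA manifest that scales a Deployment on average CPU utilization.

    Hypothetical helper for illustration only.
    """
    return {
        "apiVersion": "autoscaling/v2",
        "kind": "HorizontalPodAutoscaler",
        "metadata": {"name": f"{deployment}-hpa"},
        "spec": {
            "scaleTargetRef": {
                "apiVersion": "apps/v1",
                "kind": "Deployment",
                "name": deployment,
            },
            "minReplicas": min_replicas,
            "maxReplicas": max_replicas,
            "metrics": [
                {
                    "type": "Resource",
                    "resource": {
                        "name": "cpu",
                        # Utilization is measured relative to the Pod's CPU request.
                        "target": {
                            "type": "Utilization",
                            "averageUtilization": target_cpu_percent,
                        },
                    },
                }
            ],
        },
    }


# Placeholder values for a Deployment assumed to be named "web".
hpa = cpu_hpa("web", min_replicas=2, max_replicas=10, target_cpu_percent=70)
print(json.dumps(hpa, indent=2))
```

As replicas are added or removed, Autopilot is described above as provisioning the matching node capacity, so the manifest carries no node-level settings.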

Flexible maintenance options: You retain the flexibility to use maintenance windows and exclusions. When coupled with pod disruption budgets, you can effectively control when and how node maintenance happens to avoid inopportune disruptions.
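To make the pod disruption budget half of this concrete, the sketch below builds a standard `policy/v1` PodDisruptionBudget manifest as a Python dictionary. The helper name, the `app: web` label, and the `minAvailable` value are assumptions for the example. Maintenance windows and exclusions are configured on the cluster itself and are not shown here.

```python
import json


def pod_disruption_budget(name, app_label, min_available):
    """Build a PodDisruptionBudget that keeps a minimum number of Pods running
    during voluntary disruptions such as node maintenance.

    Hypothetical helper for illustration only.
    """
    return {
        "apiVersion": "policy/v1",
        "kind": "PodDisruptionBudget",
        "metadata": {"name": name},
        "spec": {
            # At least this many matching Pods must stay available.
            "minAvailable": min_available,
            "selector": {"matchLabels": {"app": app_label}},
        },
    }


# Placeholder values: protect Pods labeled app=web, keeping at least 2 available.
pdb = pod_disruption_budget("web-pdb", "web", 2)
print(json.dumps(pdb, indent=2))
```

A budget like this limits how many replicas can be evicted at once, while the maintenance window controls when such evictions may begin.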

All of this results in higher uptime and better outcomes for your workloads. And critically, fleet-wide, we see better cluster and node health on Autopilot.

Improved security posture

Let’s face it, Kubernetes security is hard. Platform teams often spend a lot of time creating safe environments for developers to use. Autopilot provides a security-focused version of Kubernetes out of the box, with sensible security settings enabled by default. This reduces possible attack surfaces, minimizing the impact of CVEs and configuration errors.

Hardened default cluster configuration: Autopilot comes out of the box with strong security best practices. This includes many of Google’s recommended practices from the “Hardening your cluster’s security” guide.

While nodes are visible, neither workloads nor users are permitted privileged access to them. There are very few legitimate use cases for root access to nodes and privileged containers on Kubernetes. Autopilot enforces this from the start, while providing exceptions for allowlisted partner workloads.

Shielded Nodes: On by default with GKE Autopilot, Shielded Nodes provide strong, verifiable node identity and integrity to increase the security of GKE nodes.

Workload Identity: Autopilot provides Workload Identity out of the box, which is the recommended way for your workloads running on GKE to access Google Cloud services in a secure and manageable way.

Single tenant: The nodes provisioned by Autopilot remain within your project’s purview, which helps you meet governance requirements while providing more flexibility than multi-tenant architectures.

Lowest TCO for Kubernetes

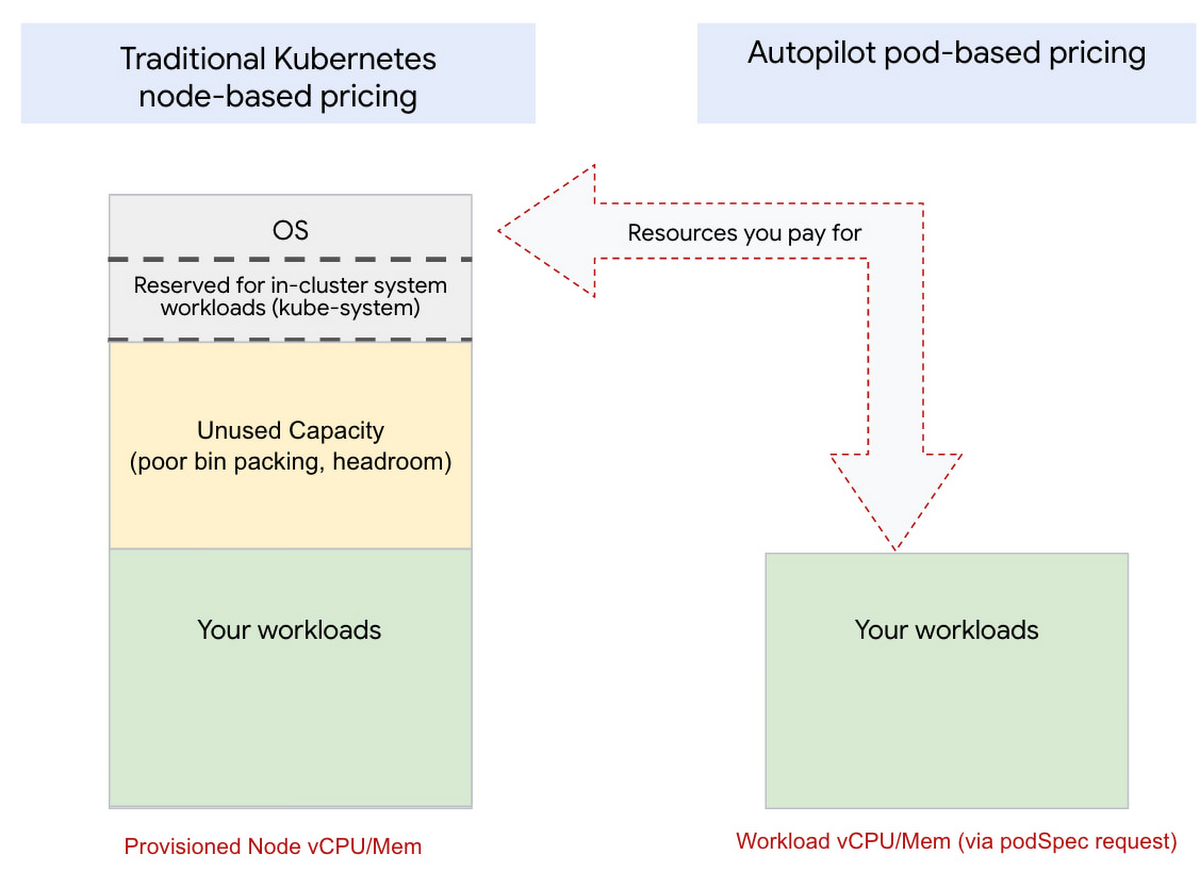

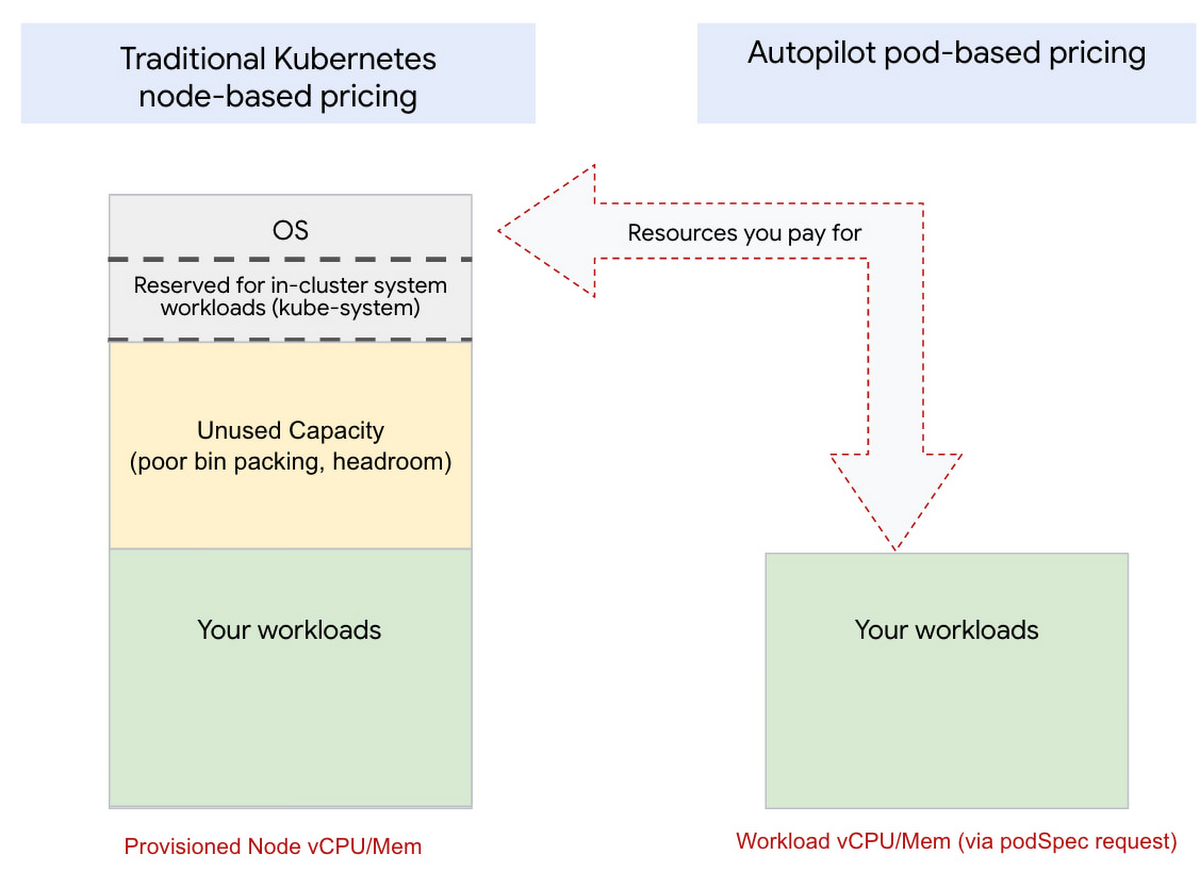

With traditional managed Kubernetes, you pay for all provisioned infrastructure, regardless of utilization. Most customers overprovision clusters for scaling and do not “bin pack” nodes efficiently. This all results in paying for infrastructure you aren’t using.

With Autopilot you only pay for what you use (Pod pricing). Billing is based on the resource requests made in the podSpec and no other infrastructure costs are incurred. This completely eliminates the risk of inefficient bin packing!

Maximized utilization: Traditional managed Kubernetes reserves resources on each node for system workloads, something a customer still pays for. Autopilot also eliminates this waste because you only pay for the workload resource requests, not the entirety of the underlying VM infrastructure.
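The difference between the two billing models comes down to simple arithmetic, sketched below. Every number here is hypothetical: the per-vCPU and per-GiB hourly rates are placeholders rather than real GKE prices, and the same rates are applied to both models purely to isolate the effect of unused capacity. Real pod-based and VM-based rates differ, so treat this as an illustration of the mechanism, not a cost estimate.

```python
from dataclasses import dataclass


@dataclass
class PodRequest:
    """Resource requests for one workload, as declared in its podSpec."""
    vcpu: float
    memory_gib: float
    replicas: int = 1


def pod_based_cost(pods, hours, vcpu_rate, gib_rate):
    """Cost when billing follows the sum of Pod resource requests."""
    total_vcpu = sum(p.vcpu * p.replicas for p in pods)
    total_gib = sum(p.memory_gib * p.replicas for p in pods)
    return hours * (total_vcpu * vcpu_rate + total_gib * gib_rate)


def node_based_cost(node_count, node_vcpu, node_gib, hours, vcpu_rate, gib_rate):
    """Cost when billing follows provisioned nodes, used or not."""
    return hours * node_count * (node_vcpu * vcpu_rate + node_gib * gib_rate)


# Hypothetical workloads: 4 vCPU and 16 GiB requested in total.
pods = [PodRequest(0.5, 2, replicas=4), PodRequest(1, 4, replicas=2)]

# Placeholder rates (NOT real prices), applied to both models for comparison.
VCPU_RATE, GIB_RATE, HOURS = 0.05, 0.005, 100

by_pod = pod_based_cost(pods, HOURS, VCPU_RATE, GIB_RATE)
# Two 4-vCPU / 16-GiB nodes provisioned to leave headroom for scaling.
by_node = node_based_cost(2, 4, 16, HOURS, VCPU_RATE, GIB_RATE)

print(f"pod-based:  {by_pod:.2f}")
print(f"node-based: {by_node:.2f}")
print(f"share of node spend actually requested: {by_pod / by_node:.0%}")
```

In this made-up scenario the cluster is provisioned with twice the requested capacity, so half of the node-based spend covers resources no Pod asked for. Under request-based billing that gap does not appear on the bill.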

Reduced operational cost: Remember that Google does the heavy Day 0 and Day 2 operations around node provisioning, scaling and maintenance, in addition to the existing managed control plane and system resources provided by Standard mode. There’s also a lot less your team needs in terms of specific Kubernetes expertise to get started with Autopilot.

Kubernetes cost optimization often requires continuous effort because workload churn introduces fragmentation in “bin packing”. With Autopilot, you are no longer responsible for bin packing, so the labor overhead associated with bin packing is also eliminated.

According to Forrester Research, teams utilizing Autopilot can save up to 85% on operational costs.

What can I do with Autopilot?

In short, almost anything.

GKE Autopilot has had one guiding principle from the start: Autopilot is GKE. This means that every design decision we made ensured that Autopilot did not diverge from the Kubernetes spec or stray from GKE itself. Autopilot is therefore Kubernetes-compliant and supports most Kubernetes workloads including StatefulSets (with block storage devices), DaemonSets (including key partner workloads from Palo Alto Networks, Datadog, Sysdig and more), and GPUs for AI/ML workloads. It also supports all the goodies you need to run your workloads like Anthos Service Mesh, IP Masquerading, Binary Authorization, OPA/Gatekeeper, Policy Controller, mutating webhooks, Google Managed Prometheus, network tags, and a lot more.

In the next blog post in this series on GKE Autopilot, we’ll explore some use cases Autopilot is handling for our customers and provide clear examples on how to take advantage of the power of Kubernetes, without all the pain. In the meantime, we invite you to get started with GKE Autopilot and attend our Twitter Spaces for a live discussion on GKE Autopilot.

1. Google Developer Experience - Competitive Benchmark Report 2022 by User Research International