Livin’ la vida local: Easier Kubernetes development from your laptop

Balint Pato

Software Engineer

Running applications in containers on top of Kubernetes is all the rage. However, the brave new world of containers isn’t always kind to application developers who are used to a fast local developer experience. With Kubernetes, there can be lots of differences between how you develop and run an application locally vs. in a Kubernetes cluster.

Here at Google, we want to speed up your workflow and reduce these differences, so you can enjoy a great local developer experience. To that end, we made some recent contributions to the open-source projects for Minikube, which lets you run Kubernetes workloads locally, and Skaffold, a command line tool for continuous development on Kubernetes. We think these updates will make a big difference in your day-to-day life as a Kubernetes developer.

Run GPU workloads on minikube

As machine learning (ML) and other compute-intensive applications become more popular, there’s an increasing need for hardware accelerators like GPUs to speed up these workloads. If you have containerized ML workloads, for instance, you can use GPUs on Google Kubernetes Engine (GKE), which provides a production-ready environment.

However, there hasn’t been a local Kubernetes development environment that supports GPU workloads. That means that to develop and test GPU apps, you either have to use a local environment that doesn’t resemble your production GKE environment, or you have to use a remote environment, which increases the latency of the feedback cycle. Neither option is great, since developing on an environment that differs from production can result in subtle bugs and reduced productivity.

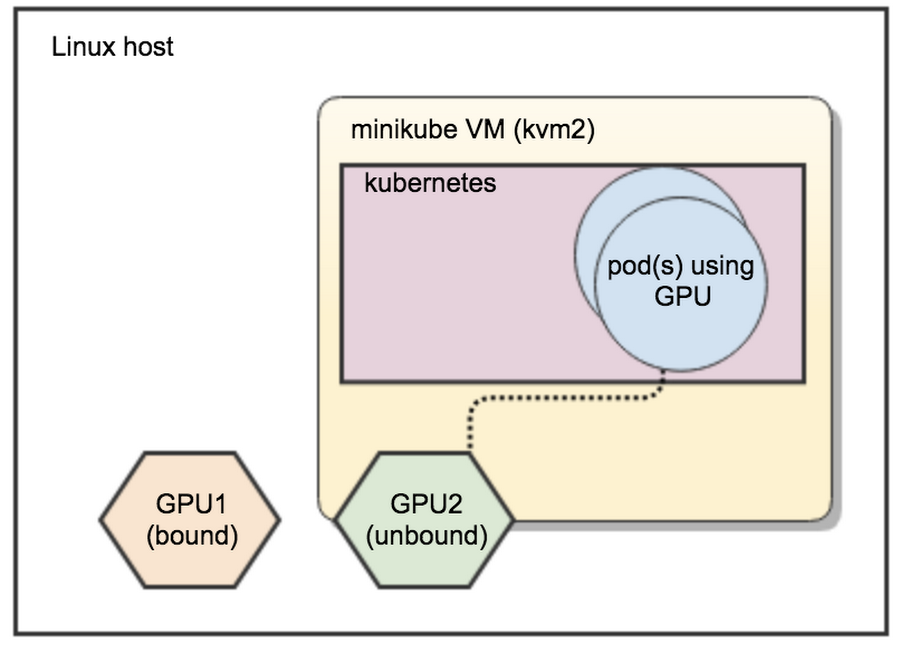

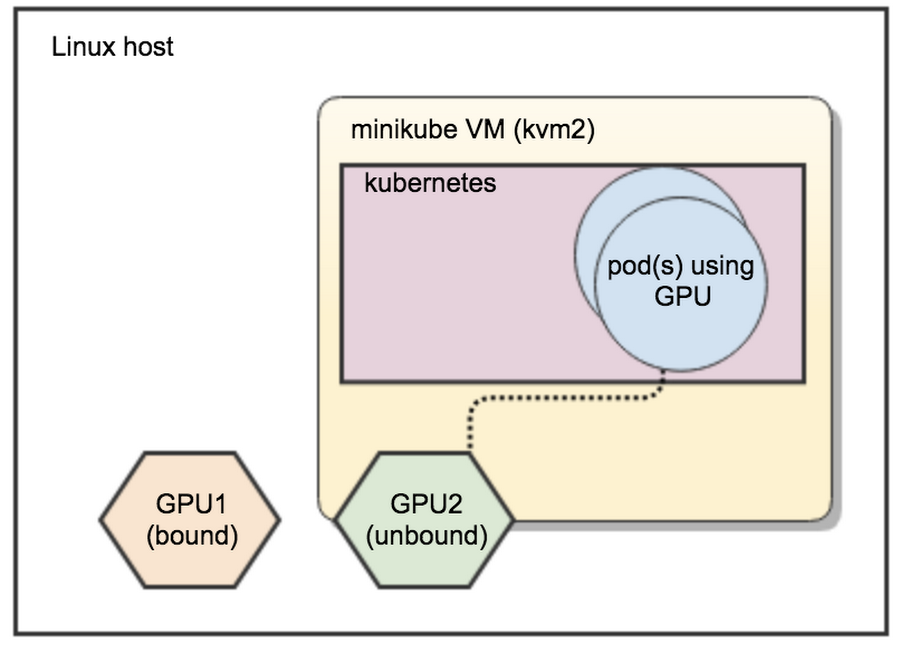

This is where Minikube can help. Minikube is the de facto standard for running Kubernetes workloads locally. It runs a single-node Kubernetes cluster inside a VM on your developer machine. This past summer, we added GPU support to minikube, improving the developer experience of creating GPU workloads on Kubernetes.

Now, you can pass through a spare GPU from your workstation to this VM and run GPU workloads on minikube. Currently, this integration only works on Linux and has some hardware requirements. Learn more about the requirements and find detailed instructions on how to set this up in the minikube documentation.
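Once a GPU is passed through, a workload still has to ask for it. The following is a minimal sketch of what that request might look like, written as a Python helper that prints a Pod manifest as JSON. It assumes an NVIDIA GPU exposed under the `nvidia.com/gpu` resource name, which this article does not state; the pod name and image are hypothetical placeholders.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a Pod manifest whose single container requests `gpus` GPUs."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Assumption: the GPU is advertised as "nvidia.com/gpu".
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


# Hypothetical pod name and image, used only for illustration.
manifest = gpu_pod_manifest("gpu-test", "example.com/my-ml-image:latest")
print(json.dumps(manifest, indent=2))
```

Because kubectl accepts JSON manifests as well as YAML, the printed output could be piped into `kubectl apply -f -` against a minikube cluster that has been set up for GPU workloads.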

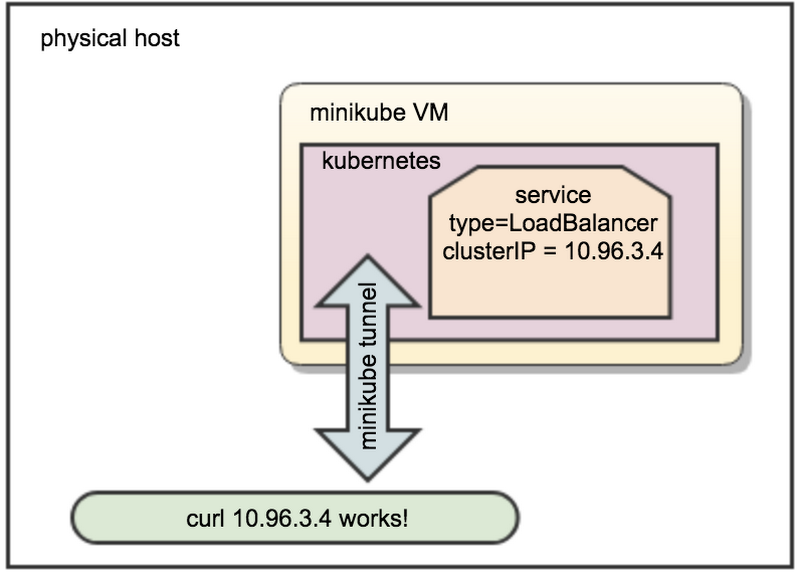

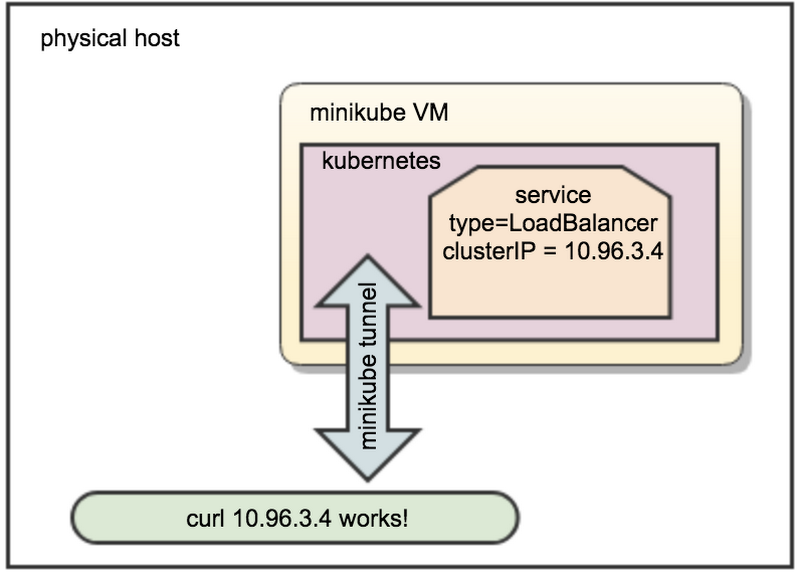

Run services of type=LoadBalancer on minikube

If you plan to deploy your application to a cloud platform, you probably want to create some services with the Kubernetes LoadBalancer type, which creates a load balancer and an external IP. For example, in GKE, the platform provisions the necessary infrastructure, including firewall rules and an external endpoint, and updates the service status with the external IP in the External-IP field. However, until recently, if you tried to do this in minikube, you would be faced with a never-resolving External-IP: kubectl get svc nginx would keep reporting the external IP as pending.

Minikube now supports emulating the behavior of services with the LoadBalancer type through the minikube tunnel command. The command currently runs as a separate daemon and creates a network route to the minikube VM, which makes the ClusterIP reachable from the host machine. It then copies the ClusterIP to the External-IP field, so the service no longer stays pending.

You’ve been asking for this feature for a long time, and unlike other local container development environments, it works for as many load balancing services as you like. See the documentation for more details and try it out!
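To make the difference between a pending and a resolved service concrete, here is a small sketch that reads the external IP from a Service object shaped like the output of `kubectl get svc nginx -o json`. The helper name `external_ip` and both sample objects, including the IP address, are made up for illustration and are not part of minikube.

```python
from typing import Optional


def external_ip(service: dict) -> Optional[str]:
    """Return the first external IP of a Service, or None while it is pending."""
    ingress = service.get("status", {}).get("loadBalancer", {}).get("ingress") or []
    for entry in ingress:
        if "ip" in entry:
            return entry["ip"]
    return None


# Illustrative Service objects; the address is invented for this sketch.
pending = {
    "spec": {"type": "LoadBalancer", "clusterIP": "10.0.0.10"},
    "status": {"loadBalancer": {}},
}
resolved = {
    "spec": {"type": "LoadBalancer", "clusterIP": "10.0.0.10"},
    # With the emulation running, the ClusterIP shows up as the external IP.
    "status": {"loadBalancer": {"ingress": [{"ip": "10.0.0.10"}]}},
}

print(external_ip(pending))
print(external_ip(resolved))
```

The first object represents a service without any load balancer emulation, so no address is found. The second represents the state described above, where the external IP equals the ClusterIP.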

Build and deploy Java projects with Jib and Skaffold

Java developers draw from a vast ecosystem of tooling and libraries that make developing in Java a breeze. However, effectively containerizing Java apps on Kubernetes can be difficult. Build times can be slow. Containers can be heavy. Making a change and having your application server reload with that new change applied is not a simple process.

We announced Jib a few months ago. Jib containerizes your Java applications with zero configuration. With Jib, you don’t need to install Docker, run a Docker daemon, or even write a Dockerfile. Jib leverages information from your build system to containerize your applications efficiently and enable fast rebuilds. Jib is available as a plugin for Maven or Gradle, so you can use it with the build system you are familiar with. Just apply the plugin and run your build, and you’ll have your container available in no time.

Jib is now available as a builder in Skaffold. You can use Skaffold with a Maven or Gradle project configured with the Jib plugin. Skaffold uses Jib to containerize your JVM-based applications and deploy them to a Kubernetes cluster when it detects a change. No more tedious steps to redeploy your application for every change you make. You can now focus on what you really care about—writing code. To get started, simply add jibMaven or jibGradle to your artifact in your skaffold.yaml. See the Skaffold repository for an example.

We’re excited for you to try out Skaffold and Jib to help you improve your Java on Kubernetes development workflow. We are building out more integrations and welcome your feedback!

Sync files to your pods with Skaffold

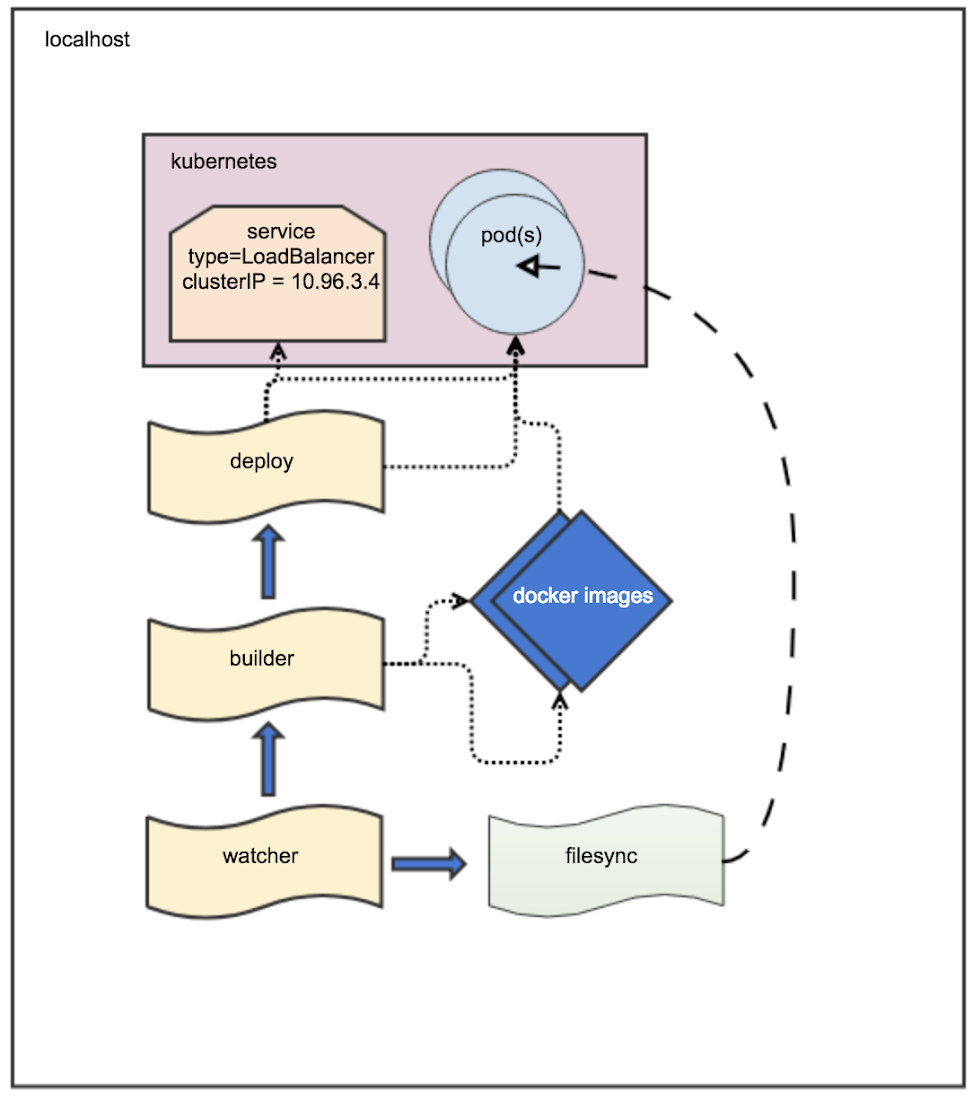

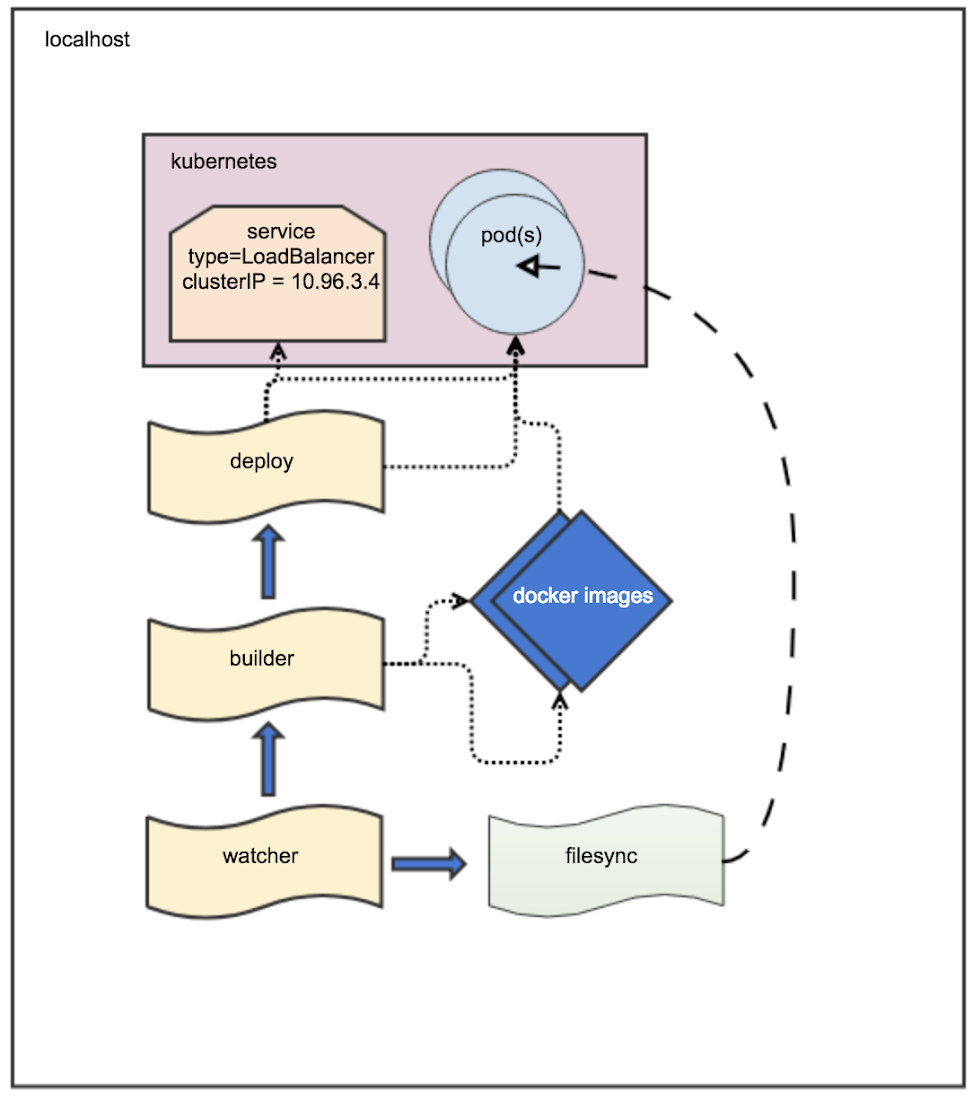

With even one change to a file, Skaffold rebuilds the images that depend on that file, pushes them to a registry, and then redeploys the relevant parts of your Kubernetes application. For most projects, immediate rebuild and redeploy is the quickest way to see the effects of local changes to your code.

However, what if you’re working on a simple web application and you modify just one HTML file? Though a rebuild and redeploy would incorporate this change correctly, it’s no longer the fastest solution. Instead, it would be much more efficient if Skaffold could simply inject the new version of the file into an already running container. The change would be picked up immediately, and the engineer could quickly see the effect of the modification.

The Skaffold file sync feature solves this problem. For each image, you can specify which files can be synced directly into a running container. Then, when you modify these files, Skaffold copies them directly into the running container rather than kicking off a full rebuild and redeploy. With Skaffold’s file sync feature, you can enjoy even faster development!
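The decision described above can be sketched in a few lines. This is not Skaffold’s actual implementation, only an illustration of the idea: if every changed file matches a pattern marked as syncable, copy the files; otherwise fall back to a rebuild. The function name `plan_action` and the patterns are hypothetical, and the all-or-nothing rule is an assumption.

```python
from fnmatch import fnmatchcase
from typing import Iterable, List


def plan_action(changed_files: Iterable[str], sync_patterns: List[str]) -> str:
    """Return "sync" if every changed file matches a sync pattern, else "rebuild"."""
    files = list(changed_files)
    # Note: with fnmatchcase, "*" also matches "/" so "*.html" covers subdirectories.
    syncable = all(
        any(fnmatchcase(path, pattern) for pattern in sync_patterns)
        for path in files
    )
    # An empty change set is treated conservatively as "rebuild" in this sketch.
    return "sync" if files and syncable else "rebuild"


patterns = ["*.html", "*.css"]  # hypothetical syncable file types
print(plan_action(["static/index.html"], patterns))
print(plan_action(["static/index.html", "Main.java"], patterns))
```

In this sketch, a change to an HTML file alone is handled by copying, while touching a Java source file in the same batch triggers the full rebuild and redeploy path.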

Give all of Skaffold’s new features a try.

Making local development for Kubernetes awesome

Almost all great apps get their start on a developer’s laptop. Here at Google Cloud, we have a great group of people dedicated to making local development for Kubernetes applications awesome. Here’s a big shout-out to everyone on the team who contributed to this article: Rohit Agarwal, Priya Wadhwa, Appu Goundan, Q Chen, Brian de Alwis, Kim Lewandowski, Ahmet Alp Balkan, David Gageot, Vic Iglesias and Don McCasland. And if you have other ideas about how to improve the local Kubernetes app development process, let us know!