Supercharging Vertex AI with Colab Enterprise and MLOps for generative AI

Nenshad Bardoliwalla

Director, Product Management, Vertex AI

Warren Barkley

Senior Director, Product Management, Google Cloud

Three years ago, we launched Vertex AI with the goal of providing the best AI/ML platform for accelerating AI workloads — one platform, every ML tool an organization needs. A lot has changed since then, especially this year, with Vertex AI adding support for generative AI, including developer-friendly products for building apps for common gen AI use cases, and access to over 100 large models from Google, open-source contributors, and third parties.

While this has significantly broadened the range of Vertex AI users, who now include many developers working with AI for the first time, data science and machine learning engineering remain core to our focus. With that in mind, we’re excited to announce several new products for Vertex AI that help data scientists work more productively and collaboratively, and help organizations better manage MLOps.

Launching today in public preview, with general availability (GA) planned for September, Colab Enterprise is a managed service that combines the ease of use of Google’s Colab notebooks with enterprise-level security and compliance capabilities. With Colab Enterprise, data scientists can collaborate to accelerate AI workflows, with access to the full range of Vertex AI platform capabilities, integration with BigQuery for direct data access, and even code completion and generation.

We’re also expanding Vertex AI’s open source support with the announcement of Ray on Vertex AI to efficiently scale AI workloads. On top of that, we’re continuing to advance MLOps for gen AI with tuning across modalities, model evaluation, and a new version of Vertex AI Feature Store with embeddings support. With these capabilities, customers can leverage the features they need across the entire AI/ML workflow, from prototyping and experimentation to deploying and managing models in production. Let’s take a closer look at these announcements and how they can help organizations advance their AI practice.

Bringing Colab to Google Cloud for better collaboration and productivity

Customizing existing foundation models or developing new ones involves significant experimentation, collaboration, and iteration. Data scientists typically do this work in notebooks, often in isolation on a local laptop without proper security, governance, or access to the right hardware for AI/ML work.

Developed by Google Research and boasting over 7 million monthly active users, Colab is a popular cloud-based Jupyter notebook that runs in a browser, can execute Python code, facilitates sharing of projects and insights, and supports charts, images, HTML, LaTeX, and more. With 67% of Fortune 100 companies already using Colab, we’re pleased to offer these powerful capabilities now combined with the enterprise-ready security, data controls, and reliability of Google Cloud.

Data scientists can get up and running quickly with zero configuration required. Powered by Vertex AI, Colab Enterprise provides access to everything the Vertex AI platform has to offer, from Model Garden and a range of tuning tools, to flexible compute resources and machine types, to data science and MLOps tooling.

Colab Enterprise also powers a notebook experience for BigQuery Studio, a new unified, collaborative workspace that makes it easy to discover, explore, analyze, and run predictions on data in BigQuery. Users can start a notebook in BigQuery to explore and prepare data, then open that same notebook in Vertex AI to continue their work with specialized AI infrastructure and tooling. Teams can directly access data wherever they’re working, and because notebooks can be shared across team members and environments, Colab Enterprise can effectively eliminate the boundaries between data and AI workloads.

Working more flexibly with expanded open-source support

To help data science teams work in their preferred ways, Vertex AI supports a range of open-source frameworks such as TensorFlow, PyTorch, scikit-learn, and XGBoost. Today, we’re pleased to add support for Ray, an open-source unified compute framework to scale AI and Python workloads.

Ray on Vertex AI provides managed, enterprise-grade security and increased productivity alongside cost and operational efficiency. With integrations across Vertex AI products like Colab Enterprise, Training, Predictions, and MLOps features, it’s easy to implement Ray on Vertex AI within existing workflows.

To see how Colab Enterprise and Ray on Vertex AI work together, consider a data scientist who starts in Vertex AI’s Model Garden, where they can select from a wide range of open-source models such as Llama 2, Dolly, Falcon, Stable Diffusion, and more. With one click, these models can be opened in a Colab Enterprise notebook, ready for experimentation, tuning, and prototyping. With built-in support for reinforcement learning alongside highly scalable, performant compute, Ray on Vertex AI is well suited to training open gen AI models and getting the most out of your compute resources. Teams can create a Ray cluster and connect it to a Colab Enterprise notebook to scale a training job, then deploy the resulting model from the same notebook to a fully managed, autoscaling endpoint.
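The scale-out step in this workflow follows a fan-out/fan-in pattern: independent training trials are dispatched to workers and their results gathered. The sketch below shows that pattern locally, using Python’s `concurrent.futures` as a stand-in for a Ray cluster. In Ray the analogous building blocks are `@ray.remote` tasks and `ray.get`, but no Ray or Vertex AI API is called here, and `train_trial` is a hypothetical placeholder for a real training function.

```python
from concurrent.futures import ThreadPoolExecutor


def train_trial(learning_rate: float) -> tuple[float, float]:
    """Hypothetical stand-in for one training run.

    Returns (learning_rate, validation_loss). The "loss" is a toy quadratic
    with its minimum at 0.01, used only so trials have something to compare.
    """
    loss = (learning_rate - 0.01) ** 2
    return learning_rate, loss


def sweep(learning_rates: list[float], max_workers: int = 4) -> tuple[float, float]:
    """Fan trials out to a worker pool, gather results, and return the best trial."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Fan-out: each learning rate becomes an independent task.
        results = list(pool.map(train_trial, learning_rates))
    # Fan-in: keep the trial with the lowest validation loss.
    return min(results, key=lambda result: result[1])


if __name__ == "__main__":
    best_lr, best_loss = sweep([0.1, 0.03, 0.01, 0.003])
    print(f"best learning rate: {best_lr} (loss {best_loss})")
```

On a Ray cluster the worker pool spans machines rather than threads, which is what allows the same pattern to scale a training job beyond a single notebook VM.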

The ease of use of Ray on Vertex AI is already exciting customers.

“The supply chain industry has faced a number of challenges from dramatic increases in order volumes to goods shortages to changes in consumer buying patterns. It’s important that businesses are not only data-driven, but AI-driven as well. Through Ray on Vertex, it is easier to scale up the training procedure and train machine and reinforcement learning agents to optimize across a variety of warehouse operating conditions,” said Matthew Haley, Lead AI Scientist, and Murat Cubuktepe, Senior AI Scientist, at materials handling, software, and services firm Dematic. “With these improvements made possible by Vertex AI, we can help our clients save costs while navigating supply chain disruptions.”

Implementing comprehensive MLOps in the age of gen AI

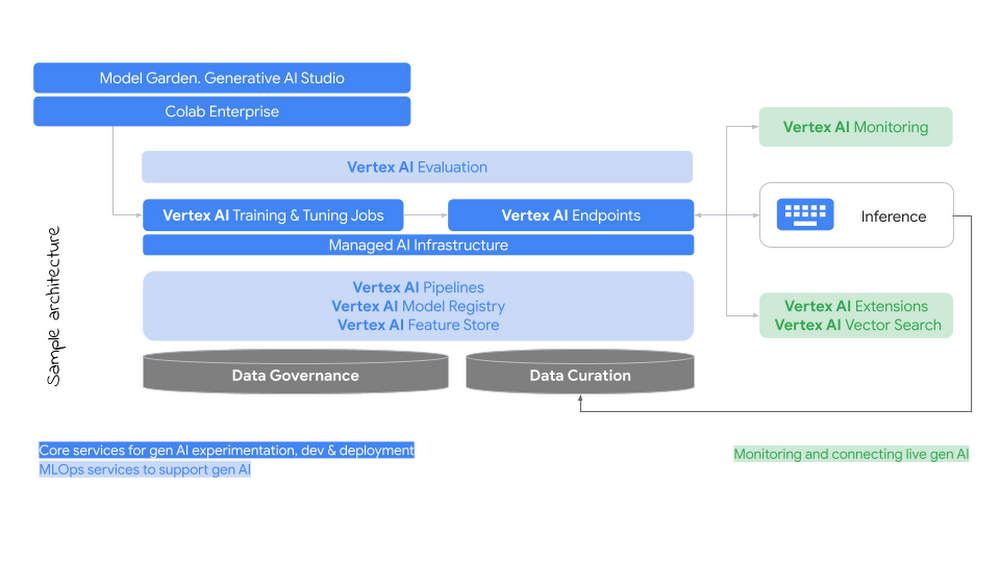

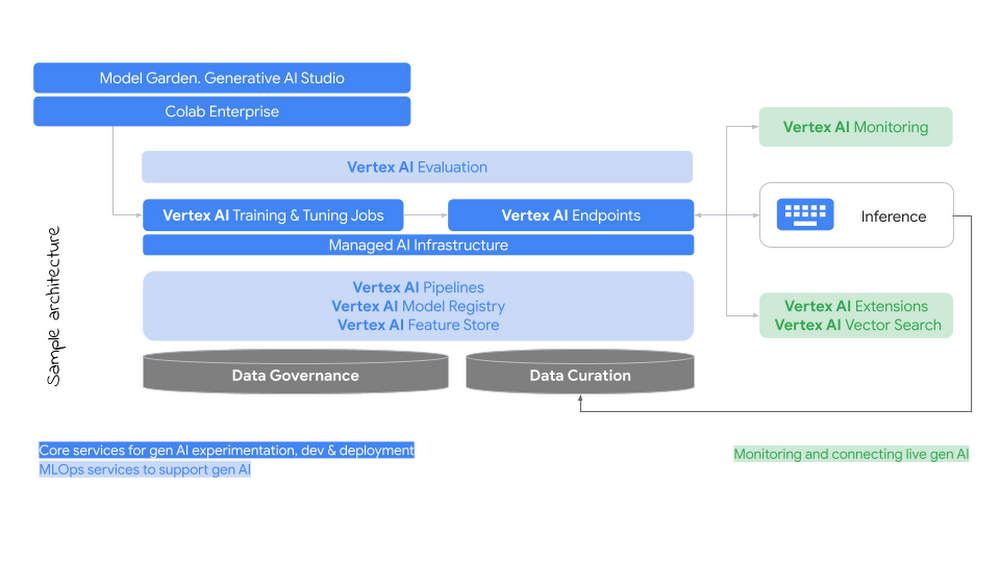

Customers frequently ask us how gen AI changes MLOps requirements. We believe there is no need to throw out existing MLOps investments. Many of the same best practices and requirements remain, including customizing models with enterprise data, managing models in a central repository, orchestrating workflows through pipelines, deploying models in production using endpoints or batch processing, and monitoring models in production.

That said, there are areas where organizations may need to update their MLOps strategy, as gen AI introduces new challenges, including:

Managing AI Infrastructure: Gen AI increases AI infrastructure requirements for training, tuning, and serving as model sizes grow.

Customizing with new techniques: There are now more ways to customize these multi-task models using various techniques across prompt engineering, supervised tuning, and reinforcement learning with human feedback (RLHF).

Managing new types of artifacts: Gen AI introduces new governance requirements associated with prompts, tuning pipelines, and embeddings.

Monitoring generated output: For production use, it’s essential to continuously monitor generated output for safety and recitation using responsible AI features.

Curating and connecting to enterprise data: For many use cases, models need fresh and relevant data from your internal systems and data corpus. Grounding on facts with citations becomes important to increase confidence in results.

Evaluating performance: Evaluating generated content, or how well a model performs a task, involves nuances that go far beyond those of predictive ML.
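Of the customization techniques listed above, prompt engineering is the only one that leaves model weights unchanged. A common form is few-shot prompting, where worked examples are placed in the prompt ahead of the new input. The sketch below assembles such a prompt; the `Input:`/`Output:` layout and the sentiment task are illustrative assumptions, not a format Vertex AI requires.

```python
def build_few_shot_prompt(instruction: str,
                          examples: list[tuple[str, str]],
                          query: str) -> str:
    """Assemble a few-shot prompt: instruction, worked examples, then the new input."""
    parts = [instruction.strip(), ""]
    for example_input, example_output in examples:
        parts.append(f"Input: {example_input}")
        parts.append(f"Output: {example_output}")
        parts.append("")  # blank line between examples
    # Leave the final "Output:" open so the model completes it.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


if __name__ == "__main__":
    prompt = build_few_shot_prompt(
        "Classify the sentiment of each product review as positive or negative.",
        [("Arrived quickly and works great.", "positive"),
         ("Broke after two days.", "negative")],
        "The fabric feels cheap.",
    )
    print(prompt)
```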

Enterprise-readiness is at the core of our approach to gen AI, and we’ve developed a new MLOps Framework for predictive and gen AI to help you navigate these challenges.

Vertex AI draws on two decades of experience running advanced AI/ML workloads at scale, and many of its existing capabilities are well suited to the needs of gen AI. A variety of hardware choices, including both GPUs and TPUs, let teams select AI Infrastructure optimized for large models, helping them achieve their preferred balance of price and performance. Vertex AI Pipelines supports orchestrating and executing tuning and RLHF pipelines. Model Registry can manage the lifecycle of gen AI models alongside predictive models. Together, these capabilities let data scientists share and reuse new artifacts, and even version control and deploy them through a CI/CD process.
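At its core, pipeline orchestration means running steps in dependency order. The sketch below models a hypothetical tuning pipeline (prepare data, tune, evaluate, register, deploy) as a dependency graph and derives an execution order with Python’s standard-library `graphlib`. The step names are assumptions for illustration, and this is not the Vertex AI Pipelines or Kubeflow Pipelines API.

```python
from graphlib import TopologicalSorter

# Hypothetical tuning pipeline: each step maps to the set of steps it depends on.
PIPELINE: dict[str, set[str]] = {
    "prepare_data": set(),
    "tune_model": {"prepare_data"},
    "evaluate_model": {"tune_model", "prepare_data"},
    "register_model": {"evaluate_model"},
    "deploy_endpoint": {"register_model"},
}


def execution_order(dag: dict[str, set[str]]) -> list[str]:
    """Return an order in which every step runs after all of its dependencies.

    Raises graphlib.CycleError if the dependencies contain a cycle.
    """
    return list(TopologicalSorter(dag).static_order())


if __name__ == "__main__":
    for step in execution_order(PIPELINE):
        print(step)
```

A production orchestrator adds what this sketch omits: running independent steps in parallel, caching step outputs, and tracking the artifacts each step produces so they can be versioned and promoted through CI/CD.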

Newer capabilities also provide a strong foundation for effectively using gen AI in production. Google Cloud offers the only curated collection of models from a hyperscale cloud provider that includes open-source, first-party, and third-party models. The platform supports a variety of tuning methods, including supervised tuning and RLHF; includes built-in security, safety, and bias features, all rooted in Google’s Responsible AI Principles; and offers newly announced extensions that connect models to external data sources and capabilities.
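Supervised tuning, the first of the tuning methods mentioned above, starts from a dataset of prompt/response pairs. The sketch below serializes such a dataset as JSON Lines, assuming the `input_text`/`output_text` field names that Google documented for PaLM 2 text-model supervised tuning; check the current Vertex AI documentation for the exact schema before relying on it. The example pairs and the `tuning_data.jsonl` file name are hypothetical.

```python
import json
from pathlib import Path


def to_jsonl(pairs: list[tuple[str, str]]) -> str:
    """Serialize (prompt, response) pairs as JSON Lines, one example per line.

    The input_text/output_text field names are an assumed schema (see above).
    """
    lines = []
    for prompt, response in pairs:
        # Reject blank examples before anything is written to disk.
        if not prompt.strip() or not response.strip():
            raise ValueError("each example needs a non-empty prompt and response")
        record = {"input_text": prompt, "output_text": response}
        lines.append(json.dumps(record, ensure_ascii=False))
    return "".join(line + "\n" for line in lines)


def write_tuning_dataset(pairs: list[tuple[str, str]], path: Path) -> int:
    """Write the dataset to `path` and return the number of examples written."""
    path.write_text(to_jsonl(pairs), encoding="utf-8")
    return len(pairs)


if __name__ == "__main__":
    examples = [
        ("Summarize: The order shipped two days late because of a carrier delay.",
         "Order delayed two days by the carrier."),
        ("Summarize: The customer asked to change the delivery address.",
         "Customer requested an address change."),
    ]
    count = write_tuning_dataset(examples, Path("tuning_data.jsonl"))  # hypothetical file name
    print(f"wrote {count} examples")
```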

Today, we are introducing new features that further enhance MLOps for gen AI, including:

Tuning across modalities: We’re making supervised tuning generally available for PaLM 2 Text and bringing RLHF into public preview. We’re also introducing a new tuning method for Imagen called Style Tuning, so enterprises can create images aligned to their specific brand guidelines or other creative needs, with as few as 10 reference images required.

Model evaluation: Two new features promote continuous iteration and improvement by letting organizations systematically evaluate the quality of models: Automatic Metrics, where a model is evaluated based on a defined task and “ground truth” dataset; and Automatic Side by Side, which uses a large model to evaluate the output of multiple models being tested, helping to augment human evaluation at scale.

Feature Store with support for embeddings: We’re introducing the next generation of Vertex AI Feature Store, now built on BigQuery, to help you avoid data duplication and preserve data access policies. The new Feature Store natively supports the vector embedding data type, simplifying the infrastructure required to store, manage, and retrieve unstructured data in real time.
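Native embedding support means a feature store can both look up an entity’s embedding by ID and find the entities whose embeddings are most similar to a query vector. The in-memory sketch below shows both operations using brute-force cosine similarity. It illustrates the idea only and is not the Vertex AI Feature Store API; the class, entity IDs, and vectors are hypothetical, and a production system would use an approximate nearest-neighbor index rather than a linear scan.

```python
import math


def _cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class EmbeddingStore:
    """Toy in-memory store mapping entity IDs to fixed-size embedding vectors."""

    def __init__(self, dim: int) -> None:
        self.dim = dim
        self._vectors: dict[str, list[float]] = {}

    def put(self, entity_id: str, vector: list[float]) -> None:
        """Insert or overwrite an entity's embedding after checking its dimension."""
        if len(vector) != self.dim:
            raise ValueError(f"expected {self.dim} dimensions, got {len(vector)}")
        self._vectors[entity_id] = list(vector)

    def get(self, entity_id: str) -> list[float]:
        """Online lookup of a single entity's embedding by ID."""
        return self._vectors[entity_id]

    def nearest(self, query: list[float], k: int = 1) -> list[tuple[str, float]]:
        """Return the k entities most cosine-similar to `query` (linear scan)."""
        scored = [(eid, _cosine(query, vec)) for eid, vec in self._vectors.items()]
        scored.sort(key=lambda item: item[1], reverse=True)
        return scored[:k]


if __name__ == "__main__":
    store = EmbeddingStore(dim=3)
    store.put("customer_1", [0.9, 0.1, 0.0])
    store.put("customer_2", [0.0, 0.2, 0.95])
    print(store.nearest([1.0, 0.0, 0.0], k=1))
```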

“Vertex AI Feature Store’s new capabilities present a major operational win for our team,” said Gabriele Lanaro, Senior Machine Learning Engineer, at ecommerce company Wayfair. “We expect improvements across the board in areas such as:

Inference and Training - Native BigQuery operations are fast and intuitive to use, which will not only simplify our MLOps pipeline but also allow our data scientists to experiment smoothly.

Feature Sharing - By using BigQuery datasets with familiar permission management, we can better catalog our data with our existing tools.

Embeddings Support - We leverage custom vector embeddings generated by our in-house algorithms for many applications, such as encoding customer behavior for scam detection. It’s great to see vectors become first-class citizens in the Vertex AI Feature Store. Reducing the number of systems we need to maintain frees up our MLOps for more tasks, helping our teams ship models into production faster.”
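The two model evaluation features announced above, Automatic Metrics and Automatic Side by Side, can be illustrated with small stand-ins. In the sketch below, a token-overlap F1 score (similar in spirit to ROUGE-1) compares each output against a ground-truth reference, and a win-rate tally aggregates pairwise verdicts of the kind an autorater model would return. These are illustrative choices, not the specific metrics or interfaces Vertex AI uses; `prefer_shorter` is a hypothetical toy judge standing in for a call to a large model.

```python
from collections import Counter
from typing import Callable


def token_f1(prediction: str, reference: str) -> float:
    """Token-overlap F1 between a model output and its ground-truth reference."""
    pred_tokens = prediction.lower().split()
    ref_tokens = reference.lower().split()
    if not pred_tokens or not ref_tokens:
        # Both empty counts as a match; only one empty counts as a miss.
        return float(pred_tokens == ref_tokens)
    overlap = sum((Counter(pred_tokens) & Counter(ref_tokens)).values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(ref_tokens)
    return 2 * precision * recall / (precision + recall)


def mean_token_f1(predictions: list[str], references: list[str]) -> float:
    """Automatic-metrics style: average score over a ground-truth dataset."""
    if not predictions or len(predictions) != len(references):
        raise ValueError("need equal, non-empty lists of predictions and references")
    return sum(token_f1(p, r) for p, r in zip(predictions, references)) / len(predictions)


def prefer_shorter(prompt: str, a: str, b: str) -> str:
    """Toy judge for demonstration only; a real autorater would assess quality."""
    if len(a) < len(b):
        return "A"
    if len(b) < len(a):
        return "B"
    return "TIE"


def win_rate(prompts: list[str], outputs_a: list[str], outputs_b: list[str],
             judge: Callable[[str, str, str], str]) -> float:
    """Side-by-side style: fraction of prompts where the judge prefers model A.

    `judge(prompt, a, b)` returns "A", "B", or "TIE"; ties count as half a win.
    """
    if not prompts or not (len(prompts) == len(outputs_a) == len(outputs_b)):
        raise ValueError("need equal, non-empty lists of prompts and outputs")
    score = 0.0
    for prompt, a, b in zip(prompts, outputs_a, outputs_b):
        verdict = judge(prompt, a, b)
        if verdict == "A":
            score += 1.0
        elif verdict == "TIE":
            score += 0.5
    return score / len(prompts)


if __name__ == "__main__":
    print(mean_token_f1(["order shipped monday"], ["the order shipped on monday"]))
    print(win_rate(["p1", "p2"], ["ab", "abc"], ["abc", "abc"], prefer_shorter))
```

Reference-based metrics only work when a "ground truth" answer exists; pairwise judging is the fallback for open-ended generation, which is why the two approaches complement each other.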

MLOps for Gen AI - Reference Architecture

As AI becomes more deeply embedded in every enterprise, with more employees tackling AI use cases across the organization, your AI platform is increasingly vital. We aim to meet the pace of today's innovation with the capabilities needed for enterprise-ready AI.

To learn more and get started with Colab Enterprise, visit the documentation. To learn more about managing gen AI, check out the new AI Readiness Quick Check tool based on Google’s AI Adoption Framework.