New Vertex AI Feature Store built with BigQuery, ready for predictive and generative AI

Raiyaan Serang

Senior Product Manager, Vertex AI

Alex Martin

Product Manager, Vertex AI

Vertex AI is Google Cloud’s machine learning (ML) platform for training and deploying ML models and AI applications: a one-stop destination for data engineering, data science, and ML engineering workflows. The new Vertex AI Feature Store, now in public preview, supports these workflows. Feature Store is now fully powered by your organization’s existing BigQuery infrastructure and supports both predictive and generative AI workloads at any scale. We are also adding new real-time serving options for low-latency feature lookups. Newly added vector retrieval functionality integrates directly with BigQuery to bring native embeddings support to your feature store.

What is a feature store?

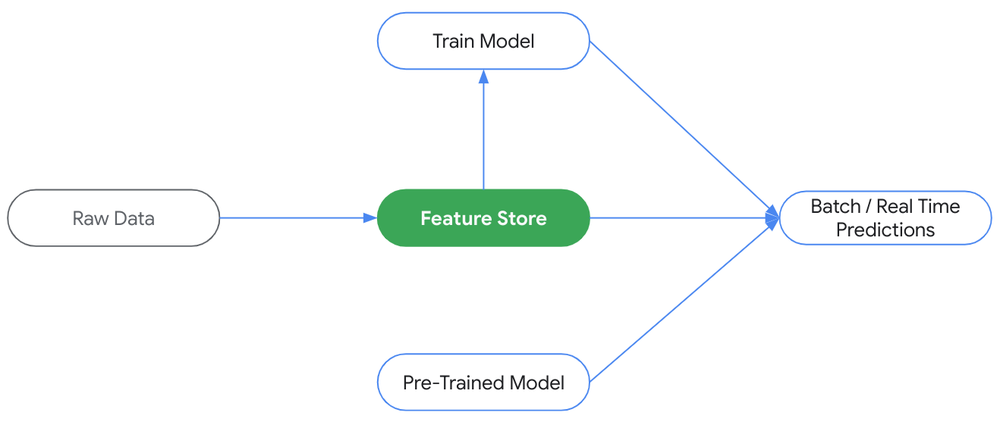

A feature store is a centralized repository for the management and processing of machine learning inputs, also known as features. Feature stores are an integral part of an end-to-end MLOps framework. They enable data scientists and machine learning practitioners to reduce the cycle time of AI/ML deployments by organizing ML features across the entire organization. Feature stores make it easy and efficient to create, store, share, discover, and serve data for ML applications.
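To make the idea concrete, here is a minimal, hypothetical Python sketch of what "create, store, share, discover, and serve" can look like in miniature. It is not the Vertex AI Feature Store API: `ToyFeatureStore`, `FeatureDefinition`, and the example feature are invented for illustration. The offline history stands in for the role BigQuery plays as the offline store, and the latest-value map stands in for the online serving layer.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Any


@dataclass
class FeatureDefinition:
    """Registry metadata that makes a feature discoverable and shareable."""
    name: str
    description: str
    owner: str


@dataclass
class ToyFeatureStore:
    """Hypothetical in-memory stand-in for a centralized feature store.

    The offline store keeps every timestamped value (used for training);
    the online store keeps only the latest value per entity (used for serving).
    """
    registry: dict[str, FeatureDefinition] = field(default_factory=dict)
    offline: list[tuple[str, str, datetime, Any]] = field(default_factory=list)
    online: dict[tuple[str, str], tuple[datetime, Any]] = field(default_factory=dict)

    def register(self, feature: FeatureDefinition) -> None:
        self.registry[feature.name] = feature

    def discover(self, keyword: str) -> list[str]:
        # Case-insensitive keyword search over feature names and descriptions.
        kw = keyword.lower()
        return sorted(
            name for name, f in self.registry.items()
            if kw in name.lower() or kw in f.description.lower()
        )

    def write(self, entity_id: str, feature: str, ts: datetime, value: Any) -> None:
        if feature not in self.registry:
            raise KeyError(f"unregistered feature: {feature}")
        # Every value is appended to the offline history.
        self.offline.append((entity_id, feature, ts, value))
        # The online copy is replaced only by a value at least as recent.
        key = (entity_id, feature)
        current = self.online.get(key)
        if current is None or ts >= current[0]:
            self.online[key] = (ts, value)

    def serve(self, entity_id: str, features: list[str]) -> dict[str, Any]:
        # Online path: latest value for each requested feature.
        return {f: self.online[(entity_id, f)][1] for f in features}

    def training_rows(self, feature: str) -> list[tuple[str, datetime, Any]]:
        # Offline path: full history, ordered by entity and timestamp.
        rows = [(e, ts, v) for e, f, ts, v in self.offline if f == feature]
        return sorted(rows, key=lambda row: (row[0], row[1]))


store = ToyFeatureStore()
store.register(FeatureDefinition(
    "avg_order_value_30d", "Average order value over the last 30 days", "growth-team"))
store.write("user_1", "avg_order_value_30d", datetime(2023, 8, 1), 42.0)
store.write("user_1", "avg_order_value_30d", datetime(2023, 8, 2), 45.5)

print(store.discover("order"))                          # ['avg_order_value_30d']
print(store.serve("user_1", ["avg_order_value_30d"]))  # {'avg_order_value_30d': 45.5}
print(len(store.training_rows("avg_order_value_30d")))  # 2
```

Keeping both views behind one registry is the design point: teams discover and reuse the same definition, and training and serving read from stores that are fed by the same writes.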

Feature stores are at the heart of machine learning

Most ML teams adopt feature stores to address three key problems:

- Lack of feature reuse and sharing - Multiple data science teams re-create the same features, leading to redundant work, unnecessary storage and compute expenses, and lost productivity.

- Offline-online feature inconsistency - Train-serve skew is a common reason why production models don’t perform as well as they did in training. The skew often occurs when data is handled differently in the training pipeline than in the serving pipeline.

- Real-time data serving is challenging - Serving feature values at scale with low latency and high availability can be difficult and expensive. Often, multiple teams need to be involved to build and maintain complex infrastructure.
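The train-serve skew described above can be illustrated with a small, hypothetical Python sketch: the serving pipeline re-implements a feature and silently drops a transform that the training pipeline applied. The feature (`log_purchases`) and helper names are invented for illustration and are not part of any Vertex AI API. Reusing one shared definition in both paths, which is the kind of reuse a feature store encourages, removes the gap.

```python
import math


def log_purchases(raw_count: int) -> float:
    """Hypothetical shared feature definition: log-scaled purchase count."""
    return math.log1p(raw_count)


# Skewed setup: each pipeline implements the feature on its own.
def training_feature(raw_count: int) -> float:
    return math.log1p(raw_count)


def serving_feature(raw_count: int) -> float:
    # Bug: the serving re-implementation forgot the log transform.
    return float(raw_count)


def max_skew(train_fn, serve_fn, samples: list[int]) -> float:
    """Largest absolute gap between training-time and serving-time values."""
    return max(abs(train_fn(x) - serve_fn(x)) for x in samples)


samples = [0, 1, 10, 100]
print(max_skew(training_feature, serving_feature, samples))  # nonzero: pipelines disagree
print(max_skew(log_purchases, log_purchases, samples))       # 0.0
```

A model trained on values near 4.6 for a heavy buyer would see 100.0 for the same user at serving time, which is exactly the kind of silent mismatch that degrades production performance.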

Feature stores help address these problems by bringing discoverability and observability to the experience, providing governance and guardrails for data usage in training and prediction, and managing online serving databases for scalable and efficient serving of ML data.

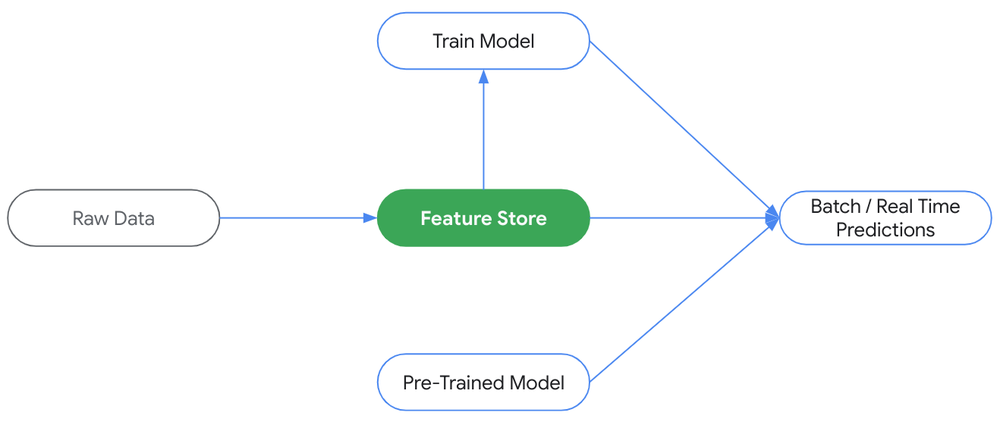

Introducing the next-generation Vertex AI Feature Store

The new and improved Vertex AI Feature Store is poised to bring a more streamlined and compelling experience for our users across three main areas:

- Built on BigQuery - BigQuery can now be an organization’s offline store, letting enterprises bring the feature store experience to existing BigQuery infrastructure, avoid data duplication, and save on costs. Organizations can also now use the full power and flexibility of BigQuery SQL to retrieve and modify features, along with the data access and governance controls they’re already used to.

- Low-latency serving - Forget about orchestrating complex online architectures and instead leverage fully managed, high-performance real-time serving infrastructure that scales with demand. With 99% of requests served within 2 milliseconds (according to internal benchmarks), Feature Store supports real-time user experiences with new optimized online serving in addition to Bigtable online serving. Organizations won’t have to worry about cluster configuration or data sync conflicts to serve BigQuery data of any size at any QPS.

- Ready for both predictive & generative AI - Store vector embeddings in BigQuery and easily deploy them for real-time serving, like any other Feature Store feature. Leverage BigQuery’s massively scalable infrastructure to perform similarity search at scale during model training, experimentation, and batch workloads. For real-time retrieval of similar items, organizations can use native embeddings methods in the Feature Store API, with no need to set up additional infrastructure or copy data to a specialized database. Similar values can be retrieved in real time with extremely low latency at any scale and QPS.
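As a rough illustration of what "similarity search" over stored embeddings means, here is a minimal Python sketch of exhaustive (brute-force) cosine-similarity retrieval. It is an assumption-laden toy, not how Vertex AI Feature Store or BigQuery implement retrieval: the item IDs and three-dimensional vectors are made up, and production systems commonly rely on approximate nearest-neighbor indexes rather than scanning every vector.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k_similar(query: list[float],
                  embeddings: dict[str, list[float]],
                  k: int) -> list[tuple[str, float]]:
    """Score every stored embedding against the query and keep the best k."""
    scored = [(item_id, cosine_similarity(query, vec))
              for item_id, vec in embeddings.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]


# Hypothetical embeddings keyed by item ID.
embeddings = {
    "item_a": [1.0, 0.0, 0.0],
    "item_b": [0.9, 0.1, 0.0],
    "item_c": [0.0, 1.0, 0.0],
}

for item_id, score in top_k_similar([1.0, 0.0, 0.0], embeddings, k=2):
    print(item_id, round(score, 3))
```

The brute-force scan costs one similarity computation per stored vector per query, which is fine for batch experimentation on small data but is the reason managed, indexed retrieval matters for real-time serving at high QPS.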

Vertex AI Feature Store now places BigQuery at the center of the experience

Initial customer reactions

The Feature Store team worked hand-in-hand with many Google Cloud customers to incorporate feedback and improve the product experience, bringing simplicity, speed, and embeddings support to customers like Wayfair and Shopify:

“Vertex Feature Store’s new capabilities present a major operational win for our team. We expect improvements across the board in areas such as:

- Inference and Training - Native BigQuery operations are fast and intuitive to use, which will not only simplify our MLOps pipeline but also allow our data scientists to experiment seamlessly.

- Feature Sharing - By using BigQuery datasets with familiar permission management, we can better catalog our data with our existing tools.

- Embeddings Support - We leverage custom vector embeddings generated by our in-house algorithms for many applications, such as encoding customer behavior for scam detection. It’s great to see vectors become first-class citizens in the Vertex AI Feature Store. Reducing the number of systems we need to maintain frees up our MLOps for more tasks, helping our teams ship models into production faster.”

- Gabriele Lanaro, Senior Machine Learning Engineer, Wayfair

"Feature Store 2.0 is a significant improvement in design. The reconfiguration of BigQuery as the offline store is a key change, eliminating the need for data duplication. The speed of the low latency online store ensures quick online inference for machine learning models that will unlock new live and responsive tools for our merchants. These improvements are a testament to our partnership with Google Cloud, and we're excited to see how these continued improvements can provide our merchants with an unfair advantage in the future."

- Marc-Antoine Bélanger, Senior Data Developer, Shopify

What’s next

We’re thrilled to expand access so that more customers can try the new Feature Store, and we look forward to incorporating more feedback on our path to General Availability. To get started, please refer to our official Public Preview documentation.

Learn more

Curious about the vision for the new Feature Store? Check out our session at Next ’23 and read more about the Vertex AI announcements made at Google Cloud Next ’23.