Get to know Cloud TPUs

Yufeng Guo

Developer Advocate, Google Cloud Platform

Editor’s note: Yufeng Guo is a Developer Advocate who focuses on AI technologies.

No matter what type of workload we’re running, we’re always looking for more computational power. With general-purpose processors, we’re quickly coming up against a hard limit: the laws of physics. At the same time, the things we’re trying to accomplish with machine learning keep getting more complex and compute-intensive.

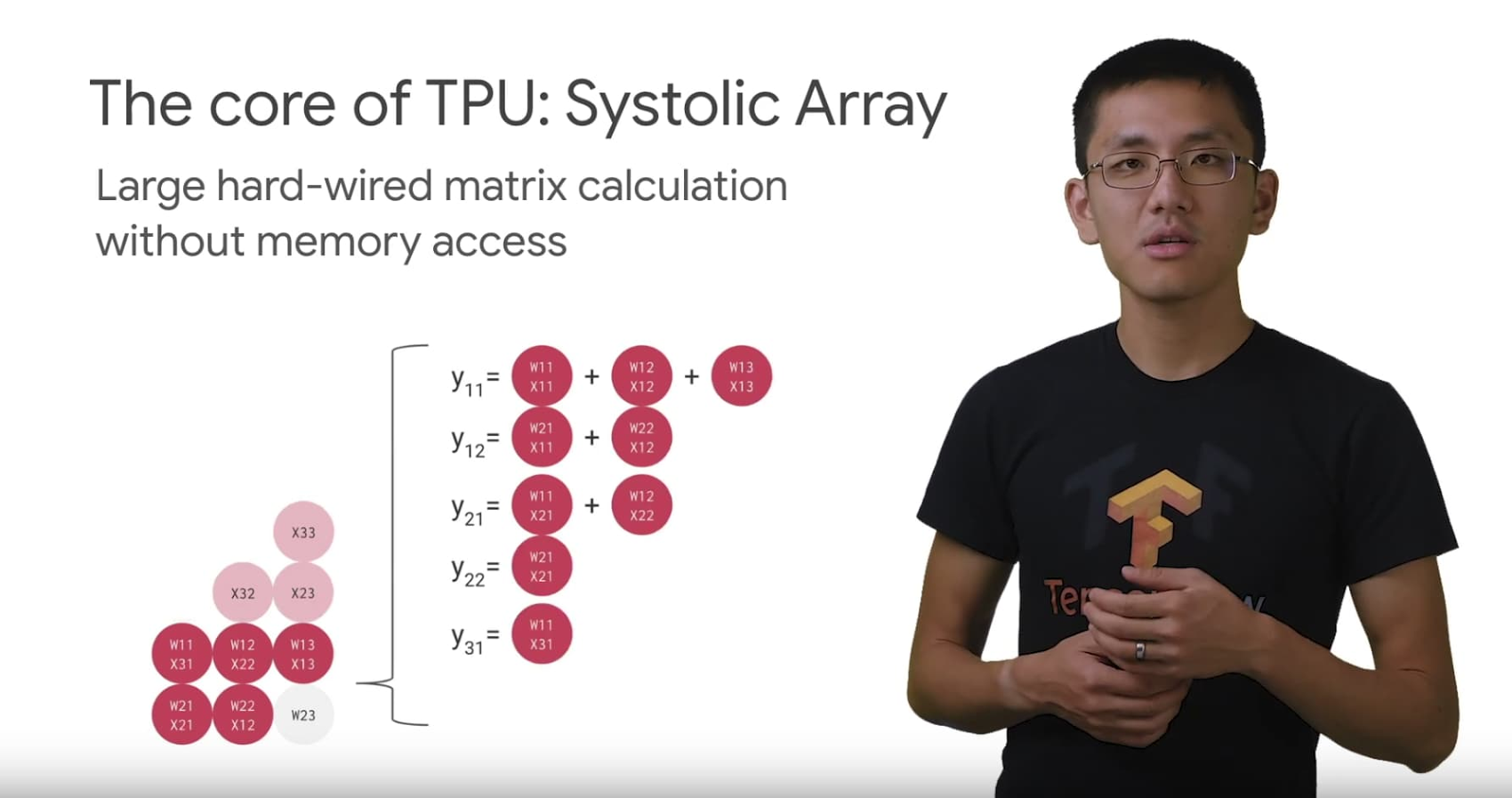

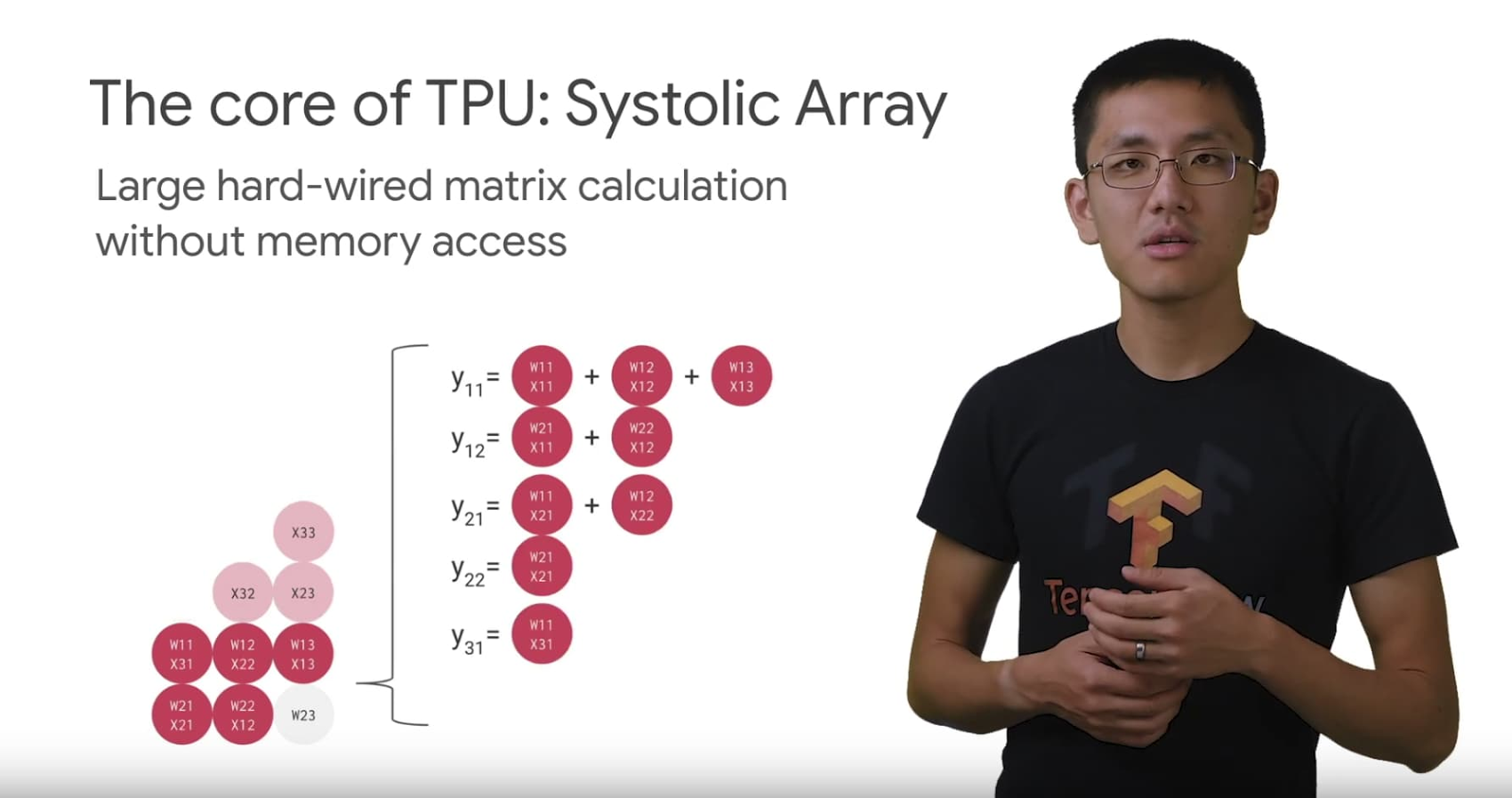

This need for more specialized computational power is what led to the creation of the first tensor processing unit (TPU). Google purpose-built TPUs to tackle our own machine learning workloads, and now they’re available to Google Cloud customers. The more we understand about TPUs, and why they were built the way they are, the better we can design machine learning architectures and software systems to tackle even more complex problems in the future.

In this two-part video series, I look at the origins of the custom AI chip and what makes the TPU so specialized for your AI challenges.

First, dive into the history and hardware of TPUs. You’ll learn why Google created an application-specific integrated circuit (ASIC) for AI workloads like Translate, Photos, and even Search. You’ll also learn about the TPU architecture and how it differs from CPUs and GPUs.

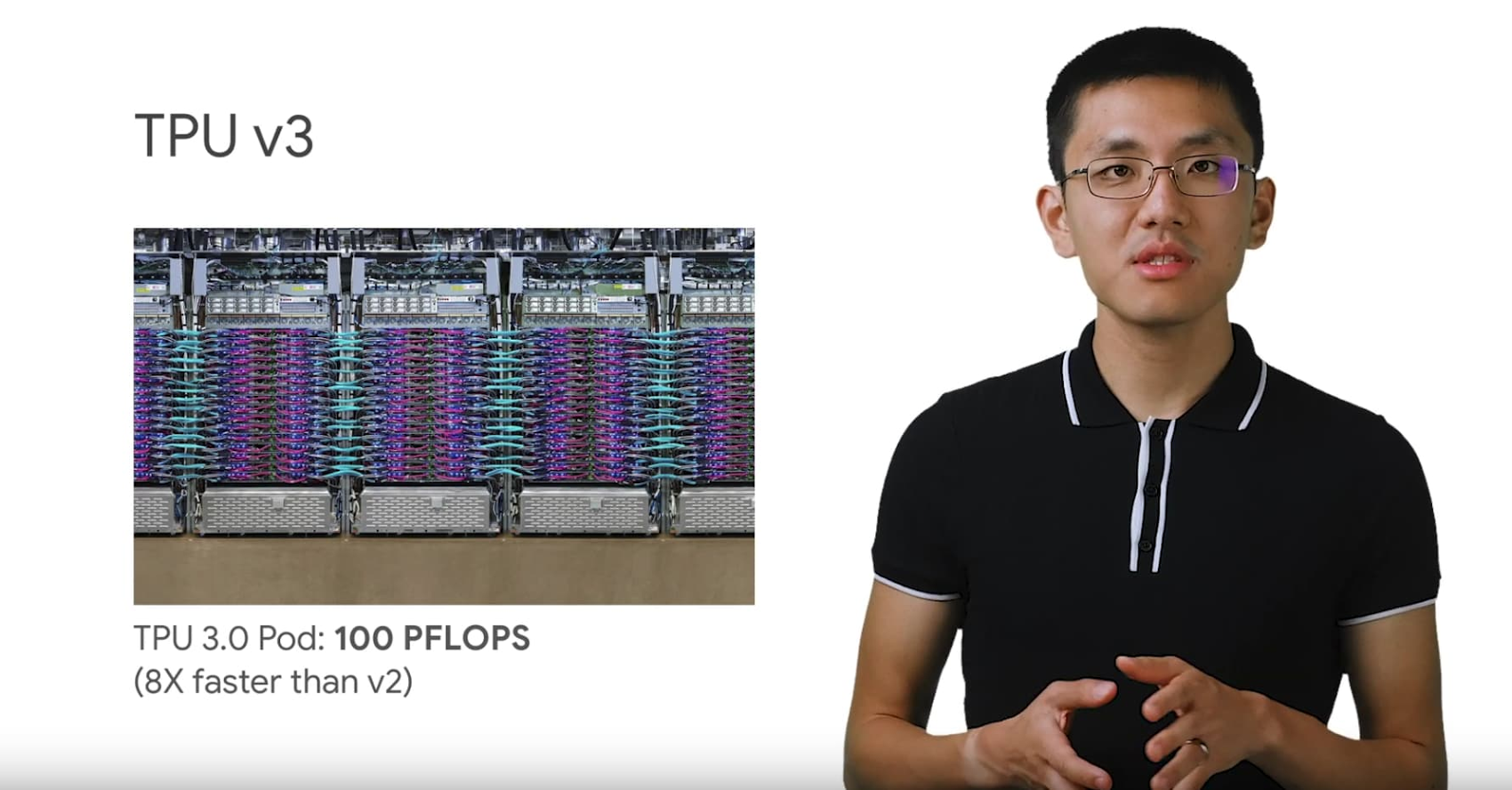

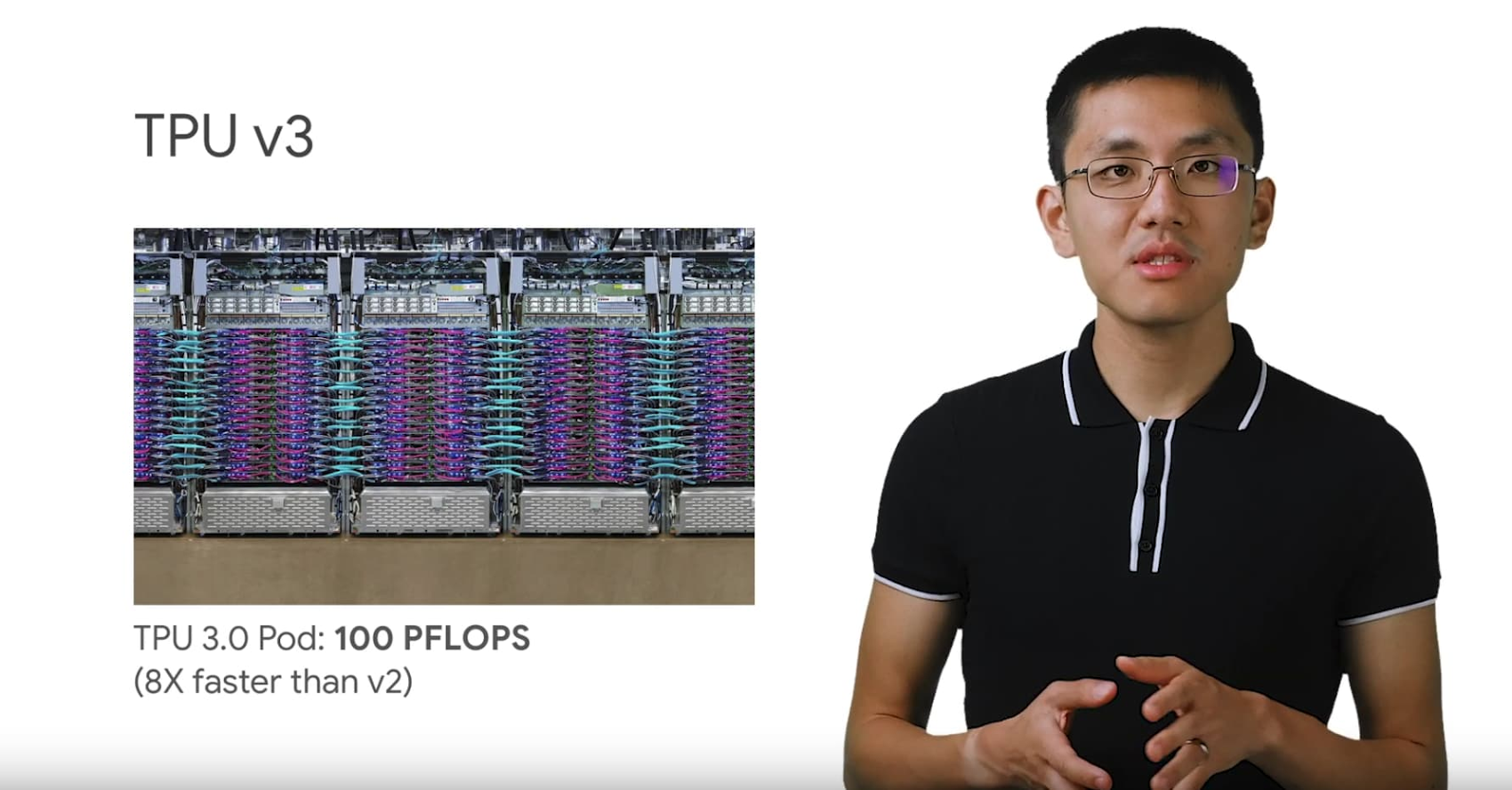

Next, dive into the TPU v2 and v3, both publicly available through Google Cloud. Learn more about their performance capabilities, such as connecting thousands of them together in the form of Cloud TPU Pods. This video also covers basic benchmark examples and new usability improvements with TensorFlow 2.0 and the Keras API.

Learn more about Cloud TPUs and pricing at the website, and check out the documentation to see how to get started. You can also join our Kaggle community and the second competition using TPUs, which challenges you to identify toxic comments across multiple languages. For more information about AI in general, check out the AI Adventures series on YouTube.