Getting started with Cloud TPUs: An overview of online resources

The Google Cloud team

The foundation for machine learning is infrastructure that’s powerful enough to swiftly perform complex and intensive data computation. But for data scientists, ML practitioners, and researchers, building on-premises systems that enable this kind of work can be prohibitively costly and time-consuming. As a result, many turn to providers like Google Cloud because it’s simpler and more cost-effective to access that infrastructure in the cloud.

The infrastructure that underpins Google Cloud was built to push the boundaries of what’s possible with machine learning—after all, we use it to apply ML to many of our own popular products, from Street View to Inbox Smart Reply to voice search. As a result, we’re always thinking of ways we can accelerate machine learning and make it more accessible and usable.

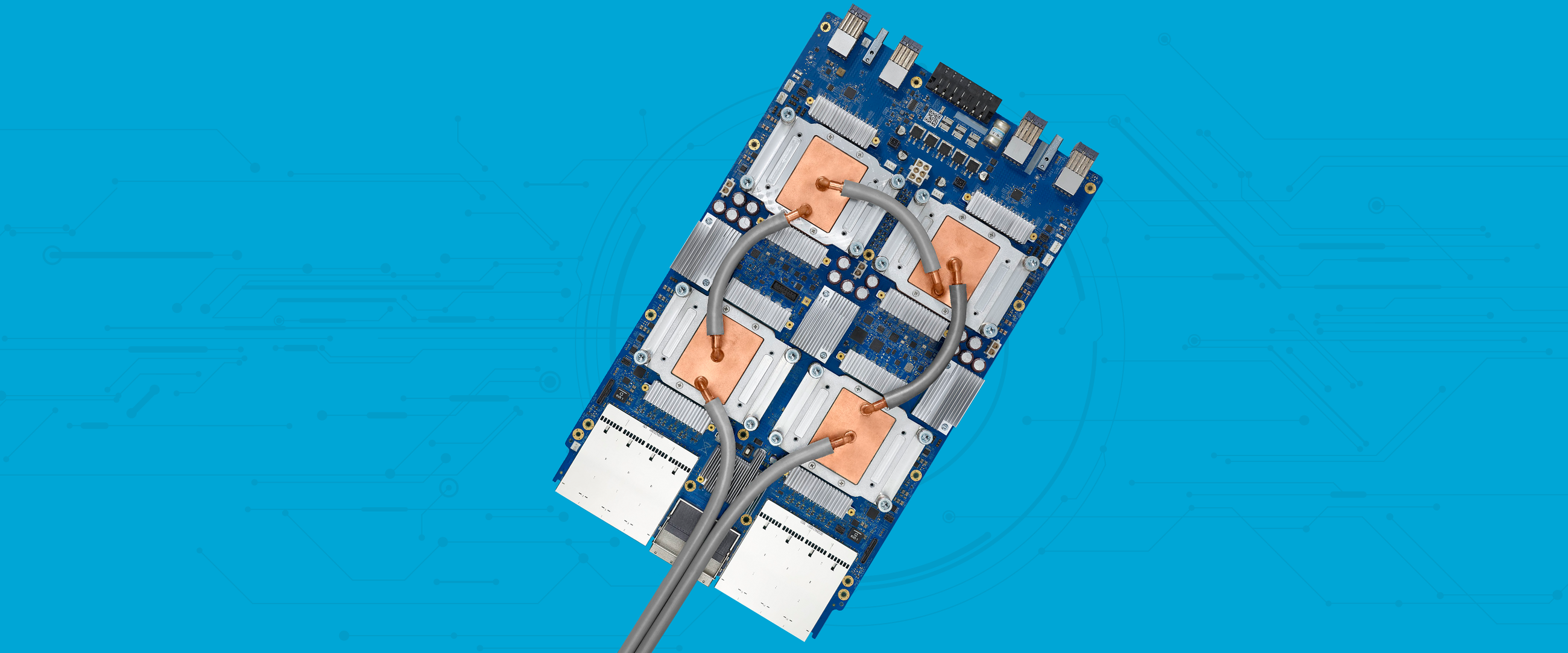

One way we’ve done this is by designing our very own custom machine learning accelerators, ASIC chips we call tensor processing units, or TPUs. In 2017 we made TPUs available to our Google Cloud customers for their ML workloads, and since then, we’ve introduced preemptible pricing, made them available for services like Cloud Machine Learning Engine and Kubernetes Engine, and introduced our TPU Pods.

While we’ve heard from many organizations that they’re excited by what’s possible with TPUs, we’ve also heard from some that are unsure of how to get started. Here’s an overview of everything you might want to know about TPUs—what they are, how you might apply them, and where to go to get started.

I want a technical deep dive on TPUs

To give users a closer look inside our TPUs, in 2017 we published an in-depth overview of them, based on our in-datacenter performance analysis whitepaper.

At Next ’18, “Programming ML Supercomputers: A Deep Dive on Cloud TPUs” covered the programming abstractions that allow you to run your models on CPUs, GPUs, and TPUs, from single devices up to entire Cloud TPU Pods. “Accelerating machine learning with Google Cloud TPUs,” from the O’Reilly AI Conference in September, also takes you through a technical deep dive on TPUs, as well as how to program them.

Finally, you can learn more about what makes TPUs fine-tuned for deep learning, and about hyperparameter tuning using TPUs in Cloud ML Engine.

I want to know how fast TPUs are, and what they might cost

In December, we published the MLPerf 0.5 benchmark results, which measure performance for training workloads across cloud providers and on-premises hardware platforms. The findings demonstrated that a full Cloud TPU v2 Pod can deliver, in 7.9 minutes of training time, the same result that would take a single state-of-the-art GPU 26 hours.

From a cost perspective, the results also revealed that a full Cloud TPU v2 Pod can cost 38% less than training the same model to the same accuracy on an n1-standard-64 Google Cloud VM with eight V100 GPUs attached, and can complete the training task 27 times faster. We also shared more on why we think Google Cloud is the ideal platform to train machine learning models at any scale.
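To put those figures side by side, here is a small back-of-the-envelope sketch in Python. It uses only the numbers quoted above (7.9 minutes versus 26 hours, 38% lower cost, 27 times faster). The function names are ours, and the hourly-rate ratio is a derived illustration that assumes cost is simply rate multiplied by time; it is not a published price.

```python
def speedup(baseline_minutes: float, accelerated_minutes: float) -> float:
    """Return how many times faster the accelerated run is than the baseline."""
    return baseline_minutes / accelerated_minutes


def implied_hourly_rate_ratio(cost_fraction: float, time_speedup: float) -> float:
    """Return the pod-to-VM hourly rate ratio implied by the quoted figures.

    Assumption: cost = hourly_rate * time, so
    rate_pod / rate_vm = (cost_pod / cost_vm) * (time_vm / time_pod).
    """
    return cost_fraction * time_speedup


# Full Cloud TPU v2 Pod (7.9 minutes) versus a single GPU (26 hours).
single_gpu_minutes = 26 * 60
pod_minutes = 7.9
pod_vs_single_gpu = speedup(single_gpu_minutes, pod_minutes)

# Pod versus the eight-GPU VM: 38% lower total cost, 27 times faster.
cost_fraction = 1 - 0.38
rate_ratio = implied_hourly_rate_ratio(cost_fraction, 27)

print(f"Pod vs. single GPU: about {pod_vs_single_gpu:.0f}x faster")
print(f"Implied pod hourly rate: about {rate_ratio:.1f}x the eight-GPU VM rate")
```

Note that the first comparison sets a full Pod against a single GPU, so it describes time to result rather than per-chip performance. The second shows that a higher hourly rate can still yield a lower total bill when the job finishes much sooner.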

I want to understand the value of adopting TPUs for my business

The Next ’18 session “Transforming Your Business with Cloud TPUs” can help you identify business opportunities to pursue with Cloud TPUs across a variety of application domains, including image classification, object detection, machine translation, language modeling, speech recognition, and more.

One example of a business already using TPUs is eBay. Visual search is an important way eBay customers quickly find what they’re looking for. But with more than a billion product listings, eBay has found that training a large-scale visual search model is no easy task. As a result, they turned to Cloud TPUs. You can learn more by reading their blog or watching their presentation at Next ’18.

I want to quickly get started with TPUs

The Cloud TPU Quickstart sets you up to start using TPUs to accelerate specific TensorFlow machine learning workloads on Compute Engine, GKE, and Cloud ML Engine. You can also take advantage of our open source reference models and tools for Cloud TPUs. Or you can try out this Cloud TPU self-paced lab.

I want to meet up with Google engineers and others in the AI community to learn more

If you’re located in the San Francisco Bay Area, our AI Huddles provide a monthly, in-person place where you can find talks, workshops, tutorials, and hands-on labs for applying ML on GCP. At our November AI Huddle, for example, ML technical lead Lak Lakshmanan shared how to train state-of-the-art image and text classification models on TPUs. You can see a list of our upcoming huddles here.

Want to keep learning? Visit our website, read our documentation, or give us feedback.