Unified data and ML: 5 ways to use BigQuery and Vertex AI together

Sara Robinson

Staff Developer Relations Engineer

Shana Matthews

Cloud AI Product Marketing

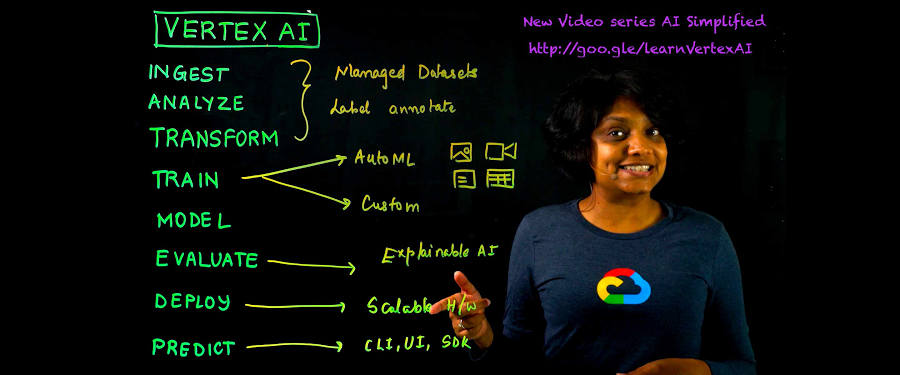

Are you storing your data in BigQuery and interested in using that data to train and deploy models? Or maybe you’re already building ML workflows in Vertex AI, but looking to do more complex analysis of your model’s predictions? In this post, we’ll show you five integrations between Vertex AI and BigQuery, so you can store and ingest your data; build, train and deploy your ML models; and manage models at scale with built-in MLOps, all within one platform. Let’s get started!

April 2022 update: You can now register and manage BigQuery ML models with Vertex AI Model Registry, a central repository to manage and govern the lifecycle of your ML models. This lets you easily deploy your BigQuery ML models to Vertex AI for real-time predictions. Learn more in this video about “ML Ops in BigQuery using Vertex AI.”

Import BigQuery data into Vertex AI

If you’re using Google Cloud, chances are you have some data stored in BigQuery. When you’re ready to use this data to train a machine learning model, you can import your BigQuery data directly into Vertex AI with a few steps in the console.

You can also do this with the Vertex AI SDK:
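A minimal sketch of that SDK call, assuming the `google-cloud-aiplatform` package is installed and your environment is already authenticated. The project, region, dataset, and table names are placeholders, and `bq_uri` and `create_tabular_dataset` are hypothetical helpers written for this example, not part of the SDK.

```python
def bq_uri(project: str, dataset: str, table: str) -> str:
    """Build the bq:// URI that Vertex AI uses to reference a BigQuery table."""
    return f"bq://{project}.{dataset}.{table}"


def create_tabular_dataset(project: str, location: str,
                           display_name: str, source_uri: str):
    """Create a Vertex AI tabular dataset that points at a BigQuery table."""
    # Imported lazily so the URI helper above works without the SDK installed.
    from google.cloud import aiplatform

    aiplatform.init(project=project, location=location)
    return aiplatform.TabularDataset.create(
        display_name=display_name,
        bq_source=source_uri,  # the data stays in BigQuery; only the reference is stored
    )


# Placeholder identifiers: replace with your own project, dataset, and table.
SOURCE_URI = bq_uri("my-project", "sales", "orders")
print(SOURCE_URI)
```

Calling `create_tabular_dataset("my-project", "us-central1", "orders", SOURCE_URI)` would then register the dataset in Vertex AI.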

Notice that you didn’t need to export your BigQuery data and re-import it into Vertex AI. Thanks to this integration, you can seamlessly connect your BigQuery data to Vertex AI without moving your data out of the cloud.

Access BigQuery public datasets

This dataset integration between Vertex AI and BigQuery means that in addition to connecting your company’s own BigQuery datasets to Vertex AI, you can also utilize the 200+ publicly available datasets in BigQuery to train your own ML models. BigQuery’s public datasets cover a range of topics, including geographic, census, weather, sports, programming, healthcare, news, and more.

You can use this data on its own to experiment with training models in Vertex AI, or to augment your existing data. For example, maybe you’re building a demand forecasting model and find that weather impacts demand for your product; you can join BigQuery’s public weather dataset with your organization’s sales data to train your forecasting model in Vertex AI.

Below, you’ll see an example of importing the public weather data from last year to train a weather forecasting model:
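As a rough sketch, the import could look like the following in the Vertex AI SDK. It assumes the weather data comes from the NOAA GSOD public dataset and that its yearly tables follow a `gsodYYYY` naming pattern; check the table name in the BigQuery console before relying on it. The helper names and the display name are hypothetical.

```python
PUBLIC_PROJECT = "bigquery-public-data"
WEATHER_DATASET = "noaa_gsod"  # assumption: NOAA Global Surface Summary of the Day


def weather_table_uri(year: int) -> str:
    """Return the bq:// URI for one yearly weather table (assumed gsodYYYY naming)."""
    if not 1000 <= year <= 9999:
        raise ValueError("year must have four digits")
    return f"bq://{PUBLIC_PROJECT}.{WEATHER_DATASET}.gsod{year}"


def import_weather_dataset(project: str, location: str, year: int):
    """Create a Vertex AI tabular dataset backed by a public weather table."""
    # Imported lazily so the URI helper works without the SDK installed.
    from google.cloud import aiplatform

    # `project` is your own project; the data itself lives in the public project.
    aiplatform.init(project=project, location=location)
    return aiplatform.TabularDataset.create(
        display_name=f"noaa-gsod-{year}",
        bq_source=weather_table_uri(year),
    )


print(weather_table_uri(2021))
```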

Access BigQuery data from Vertex AI Workbench notebooks

Data scientists often work in a notebook environment to do exploratory data analysis, create visualizations, and perform feature engineering. Within a managed Workbench notebook instance in Vertex AI, you can directly access your BigQuery data with a SQL query, or download it as a pandas DataFrame for analysis in Python.

Below, you’ll see how you can run a SQL query on a public London bikeshare dataset, then download the results of that query as a pandas DataFrame to use in your notebook:
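One way to sketch this in a notebook cell is with the BigQuery Python client. The example assumes the `google-cloud-bigquery` and `pandas` packages are available and that the notebook has default credentials. The query itself (hires per start station) is an illustrative choice, and both helper functions are hypothetical.

```python
def top_stations_sql(limit: int = 10) -> str:
    """SQL that counts hires per start station in the public London bikeshare table."""
    if limit < 1:
        raise ValueError("limit must be at least 1")
    return (
        "SELECT start_station_name, COUNT(*) AS num_hires\n"
        "FROM `bigquery-public-data.london_bicycles.cycle_hire`\n"
        "GROUP BY start_station_name\n"
        "ORDER BY num_hires DESC\n"
        f"LIMIT {int(limit)}"
    )


def query_to_dataframe(sql: str):
    """Run a query and download the result as a pandas DataFrame."""
    # Imported lazily so the SQL helper works without the client installed.
    from google.cloud import bigquery

    client = bigquery.Client()  # uses the notebook's default project and credentials
    return client.query(sql).to_dataframe()


print(top_stations_sql(5))
```

In a notebook, `df = query_to_dataframe(top_stations_sql(5))` gives you a DataFrame you can plot or pass to feature engineering code. The `%%bigquery` cell magic from the same client library is an alternative if you prefer to write the SQL directly in a cell.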

Analyze test prediction data in BigQuery

That covers how to use BigQuery data for training models in Vertex AI. Next, we’ll look at integrations between Vertex AI and BigQuery for exporting model predictions.

When you train a model in Vertex AI using AutoML, Vertex AI will split your data into training, test, and validation sets, and evaluate how your model performs on the test data. You also have the option to export your model’s test predictions to BigQuery so you can analyze them in more detail:
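If you train through the SDK instead of the console, the same export option can be sketched as below. This assumes an AutoML tabular classification job (the prediction type is an assumption for illustration) and the `google-cloud-aiplatform` package. `export_options` and `train_with_export` are hypothetical helpers, and the destination URI is left as a parameter because its exact expected format should be checked against the SDK reference.

```python
def export_options(destination_uri: str) -> dict:
    """Keyword arguments that ask AutoML to export test predictions to BigQuery."""
    if not destination_uri.startswith("bq://"):
        raise ValueError("destination_uri must start with bq://")
    return {
        "export_evaluated_data_items": True,
        "export_evaluated_data_items_bigquery_destination_uri": destination_uri,
    }


def train_with_export(dataset, target_column: str, destination_uri: str):
    """Train an AutoML tabular model and export its test predictions."""
    # Imported lazily so export_options works without the SDK installed.
    from google.cloud import aiplatform

    job = aiplatform.AutoMLTabularTrainingJob(
        display_name="automl-with-test-export",  # placeholder name
        optimization_prediction_type="classification",  # assumption for this sketch
    )
    return job.run(
        dataset=dataset,  # an aiplatform.TabularDataset
        target_column=target_column,
        **export_options(destination_uri),
    )
```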

Then, when training completes, you can examine your test data and run queries on test predictions. This can help determine areas where your model didn’t perform as well, so you can take steps to improve your data next time you train your model.

Export Vertex AI batch prediction results

When you have a trained model that you’re ready to use in production, there are a few options for getting predictions on that model with Vertex AI:

Deploy your model to an endpoint for online prediction

Export your model assets for on-device prediction

Run a batch prediction job on your model

If you have a large number of examples to send to your model for prediction and latency is less of a concern, batch prediction is a great choice. When creating a batch prediction job in Vertex AI, you can specify BigQuery tables as both the source and the destination: one BigQuery table holds the input data you want predictions on, and Vertex AI writes the prediction results to a separate BigQuery table.
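A hedged sketch of such a job with the Vertex AI SDK follows, assuming the `google-cloud-aiplatform` package and an already trained model. The resource name, table URIs, and helper functions are placeholders invented for this example. Some model types may need extra arguments, such as a machine type, that are omitted here.

```python
def batch_predict_kwargs(source_table_uri: str, destination_prefix: str) -> dict:
    """Keyword arguments for a batch prediction that reads from and writes to BigQuery."""
    for uri in (source_table_uri, destination_prefix):
        if not uri.startswith("bq://"):
            raise ValueError(f"expected a bq:// URI, got {uri!r}")
    return {
        "bigquery_source": source_table_uri,
        "bigquery_destination_prefix": destination_prefix,
        "instances_format": "bigquery",
        "predictions_format": "bigquery",
    }


def run_batch_prediction(model_resource_name: str, job_display_name: str,
                         source_table_uri: str, destination_prefix: str):
    """Start a batch prediction job for an existing Vertex AI model."""
    # Imported lazily so the kwargs helper works without the SDK installed.
    from google.cloud import aiplatform

    model = aiplatform.Model(model_resource_name)
    return model.batch_predict(
        job_display_name=job_display_name,
        **batch_predict_kwargs(source_table_uri, destination_prefix),
    )


# Placeholder URIs: an input table and the dataset that will receive the results.
print(batch_predict_kwargs("bq://my-project.sales.to_score", "bq://my-project.predictions"))
```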

With these integrations, you can access BigQuery data, and build and train models. From there Vertex AI helps you:

Take these models into production

Automate and repeat your model workflows with managed pipelines

Manage your models’ performance and reliability over time

Track lineage and artifacts of your models for easy-to-manage governance

Apply explainability to evaluate feature attributions

What’s next?

Ready to start using your BigQuery data for model training and prediction in Vertex AI? Check out these resources:

Codelab: Intro to Vertex AI Workbench

Documentation: Vertex AI batch predictions

Video Series: AI Simplified: Vertex AI

GitHub: Example Notebooks

Training: Vertex AI: Qwik Start

Are there other BigQuery and Vertex AI integrations you’d like to see? Let Sara know on Twitter at @SRobTweets.