Build, deploy, and scale ML models faster with Vertex AI’s new training features

Winston Chiang

Product Manager

Nathan Li

Software Engineer

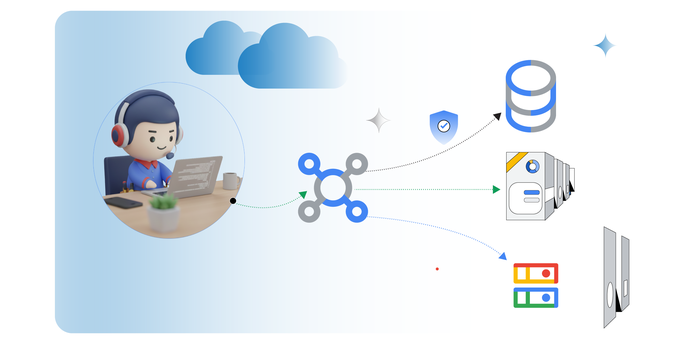

Vertex AI includes over a dozen powerful MLOps tools in one unified interface, so you can build, deploy, and scale ML models faster. We’re constantly updating these tools, and we recently enhanced Vertex AI Training with an improved Local Mode to speed up your debugging process and Auto-Container Packaging to simplify cloud job submissions. In this article, we’ll look at these updates, and how you can use them to accelerate your model training workflow.

Debugging is an inherently repetitive process of small, iterative code changes.

Vertex AI Training is a managed cloud environment that spins up VMs, loads dependencies, brings in data, executes code, and tears down the cluster for you. That’s a lot of overhead to test simple code changes, which can greatly slow down your debugging process. Before submitting a cloud job, it’s common for developers to first test code locally.

Now, with Vertex AI Training’s improved Local Mode, you can iterate and test your work locally on a small sample data set without waiting for the full Cloud VM lifecycle. This is a friendly and fast way to debug code before running it at cloud scale.

By leveraging the environment consistency made possible by Docker containers, Local Mode lets you submit your code as a local run that is processed in an environment similar to the one that executes a cloud job. This results in greater reliability and reproducibility. You can debug simple runtime errors faster because you do not need to submit the job to the cloud and wait for the VM cluster lifecycle overhead. Once you have set up the environment, you can launch a local run with a single gcloud command.
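
As a minimal sketch of what launching a local run might look like, the Python snippet below assembles a `gcloud ai custom-jobs local-run` invocation and prints it as a dry run instead of executing it. The base image, working directory, script name, and output image name are placeholder assumptions and do not come from this article; verify the flag names against the local mode documentation for your gcloud version.

```python
import shlex


def build_local_run_command(executor_image_uri, local_package_path, script, output_image_uri):
    """Assemble a gcloud local-run invocation as an argument list (nothing is executed)."""
    return [
        "gcloud", "ai", "custom-jobs", "local-run",
        f"--executor-image-uri={executor_image_uri}",  # base image the training code runs on
        f"--local-package-path={local_package_path}",  # directory containing your training code
        f"--script={script}",                          # entry point, relative to the package path
        f"--output-image-uri={output_image_uri}",      # name for the image that gets built locally
    ]


# Placeholder values, assumed for illustration only.
local_run_cmd = build_local_run_command(
    executor_image_uri="BASE_IMAGE_URI",
    local_package_path="./trainer",
    script="task.py",
    output_image_uri="my-local-training-image",
)

# Dry run: print the command so it can be reviewed or pasted into a terminal.
print(shlex.join(local_run_cmd))
```

To actually launch the run, pass the list to `subprocess.run(local_run_cmd, check=True)` on a machine with Docker and the gcloud CLI installed.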

Once you are ready to run your code at cloud scale, Auto-Container Packaging simplifies the cloud job submission process. To run a training application, you need to upload your code and any dependencies. Previously this process took three steps:

1. Build the Docker container locally.
2. Push the built container to a container repository.
3. Create a Vertex AI Training job that runs the container.

With Auto-Container Packaging, that three-step process collapses into a single create step: one gcloud command builds the container, pushes it, and creates the training job.
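
The single create step could be sketched as follows. This Python snippet only assembles and prints a `gcloud ai custom-jobs create` invocation whose worker pool spec points at local code (`local-package-path` and `script`) rather than at a prebuilt container. The region, job name, machine type, base image, and paths are placeholder assumptions and do not come from this article; check the exact worker pool spec keys on the Create a Custom Job documentation page.

```python
import shlex


def build_create_command(region, display_name, machine_type, replica_count,
                         executor_image_uri, local_package_path, script):
    """Assemble a gcloud custom-job create invocation that packages local code (nothing is executed)."""
    # The worker pool spec is a single comma-separated key=value string.
    worker_pool_spec = ",".join([
        f"machine-type={machine_type}",
        f"replica-count={replica_count}",
        f"executor-image-uri={executor_image_uri}",  # base image to build on
        f"local-package-path={local_package_path}",  # local directory with training code
        f"script={script}",                          # entry point inside that directory
    ])
    return [
        "gcloud", "ai", "custom-jobs", "create",
        f"--region={region}",
        f"--display-name={display_name}",
        f"--worker-pool-spec={worker_pool_spec}",
    ]


# Placeholder values, assumed for illustration only.
create_cmd = build_create_command(
    region="us-central1",
    display_name="my-training-job",
    machine_type="n1-standard-4",
    replica_count=1,
    executor_image_uri="BASE_IMAGE_URI",
    local_package_path="./trainer",
    script="task.py",
)

# Dry run: print the command instead of submitting a job.
print(shlex.join(create_cmd))
```

Keeping the same `local_package_path` and `script` values as in a local run means the code you debugged locally is the code that gets packaged for the cloud job.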

Additionally, even if you are not familiar with Docker, Auto-Container Packaging lets you take advantage of the consistency and reproducibility benefits of containerization.

These new Vertex AI Training features further simplify and speed up your model training workflow. Local Mode helps you iterate faster on small code changes and quickly debug runtime errors. Auto-Container Packaging reduces the number of steps it takes to submit your local Python code as a scaled-up cloud job.

You can try this codelab to gain hands-on experience with these features.

To learn more about the improved local mode, visit our local mode documentation guide.

Auto-Container Packaging documentation can be found on the Create a Custom Job documentation page under “gcloud.”

- To learn about Vertex AI, check out this blog post from our developer advocates.