Why AI: Can new tech help security solve toil, threat overload, and the talent gap?

Seth Rosenblatt

Security Editor, Google Cloud

Adam Greenberg

Content Marketing Manager, Mandiant

How AI can take the pain out of 3 of security’s most deeply-rooted problems

As the security industry has gotten better at recognizing the signals that need to be turned into alerts, the number of alerts has grown. For a weary security analyst, the tinny, metallic “ping” of yet another alert demanding attention can be almost trauma-inducing. Each of these alerts could be the first sign of a major security incident, but relatively few of them are.

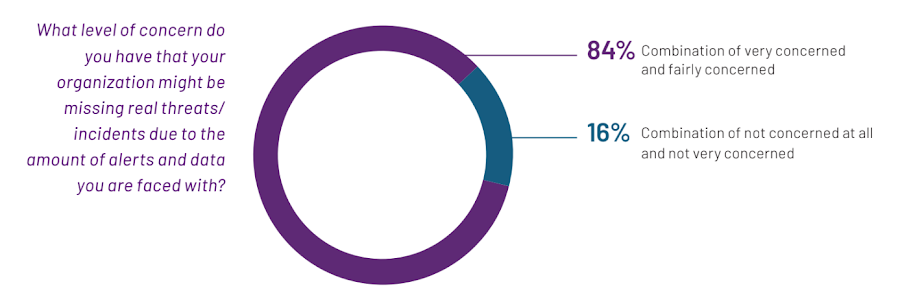

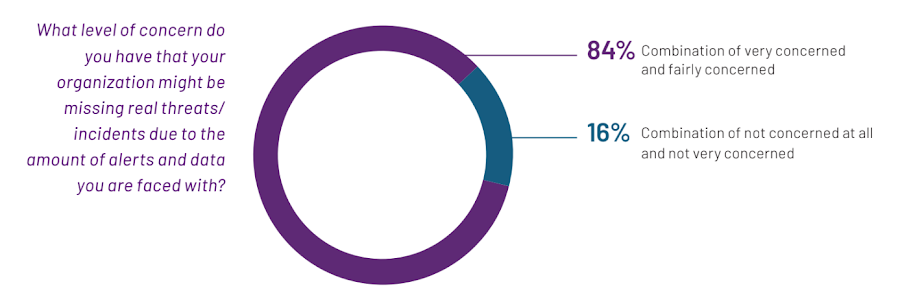

Alert fatigue makes it harder to discern the most serious threats from lesser threats and false positives. According to Mandiant’s 2023 Global Perspectives on Threat Intelligence survey, 84% of respondents said that they are “fairly concerned” or “very concerned” that their organization might be missing real threats or incidents because of the volume of alerts and data that they must respond to and analyze.

In the same survey, 69% of respondents said that they believe their IT employees “feel overwhelmed” by incoming alerts and data. Broken down by region, that figure jumped to 84% among North American respondents.

A majority of respondents said that they are “fairly concerned” or “very concerned” that their organization might be missing real threats or incidents because of the volume of alerts and data that they must respond to and analyze. Source: 2023 Global Perspectives on Threat Intelligence, Mandiant.

Alert fatigue has long been a struggle in cybersecurity, and it is part of a larger problem we call “threat overload.” Threat overload, together with the widening security talent gap and the manual nature of most security operations tasks, which creates vast amounts of human toil, is notorious for burning out security teams. These problems also keep security operations centers from optimizing their work and shifting from a largely reactive posture to a more proactive one.

In conversations with board members, business leaders, CIOs, CISOs, and leading security professionals, security experts at Google Cloud and Mandiant have consistently heard the same thing: There is an urgent need for technology that simplifies complex issues for people with less security expertise and provides guidance on how best to act. We believe that machine learning (ML) and artificial intelligence (AI) tools can significantly lighten the burden of three of security’s thornier problems, and possibly even eliminate them.

Withered by toil

Two of those thorny problems, excess threats and a lack of trained experts to address them, are hard to solve but not necessarily complicated. Toil, however, is a messy root ball that can strangle the efforts of even the best-resourced organizations.

Security operations have long been caught in toil’s grip. As defined in Google’s Site Reliability Engineering book, toil is “the kind of work tied to running a production service that tends to be manual, repetitive, automatable, tactical, and that scales linearly as a service grows.”


“Toil can also smother skilled security experts before they’ve had a chance to bloom into the kind of dedicated engineers who can help close the talent gap,” wrote Google Cloud’s Iman Ghanizada, global head of Autonomic Security Operations, and Anton Chuvakin, security solution strategist.

“If you’re a security analyst, you may realize that sifting through toil is one of the more significant and burdensome elements of your role,” they wrote. “For some analysts, their entire workload fits the SRE definition of ‘toil.’”

Security leaders tell us that tools are among the biggest sources of toil, so part of Google Cloud’s mission is to use AI in ways that help teams get more out of the tools they have, and in some cases get rid of them altogether. AI can help solve these problems by supporting teams in managing multiple environments, implementing security design and capabilities, and generating security controls, effectively helping systems secure themselves.

Confronting the swarm of threats

At the top of this post, we described alert fatigue as part of a bigger problem: threat overload. Threat overload is the idea that there are too many threats for organizations to track them all. Nor should they try to. One of the best ways to free up resources and make security operations more efficient has been to use threat intelligence to identify the threats that matter most and protect the business against them.

When an organization ingests threat information and scans for vulnerabilities, it’s important to be able to prioritize responses. Intelligence is only useful if an organization can properly apply it, and many organizations struggle to do so. AI can help identify an organization’s vulnerabilities, determine whether threat actors are exploiting those vulnerabilities in attacks, and decide how best to prioritize the organization’s defensive response. Ultimately, AI can help reduce an organization’s exposure and keep incidents from escalating into full-blown breaches.

Mind the talent gap

Although organizations often look to technology and automation to solve cybersecurity problems, there is still a human component to security. Yet finding people with the right skills for those jobs is a challenge, and one that seems to grow more complex as the attack surface, and consequently the threat landscape, continues to evolve.

Because demand for skilled cybersecurity workers is so high, organizations often lack the budget, security tooling, or institutional maturity to attract and retain them. Many skilled cybersecurity workers also don’t want to deal with the high pressure and stress that come with working at less security-savvy organizations.


While efforts are underway to train more security experts, especially people from more diverse backgrounds, adopting embedded AI and ML technologies to automate mundane, repetitive tasks can help fill hiring gaps in resource-constrained security operations centers.

For example, generative AI can pair analysts with security-specific large language models (LLMs) that complete toilsome tasks at breakneck speed and then turn the results into actionable next steps. Using AI in this way can help close the skills gap and expand the hiring pool, since AI can make sense of complex problems and interpret the results for staff with fewer technical skills.

AI in security is already here — and growing

Google Cloud and Mandiant have been incorporating ML and other automation into their solutions and services for years. We already use AI to help security teams reduce alert fatigue, support malware analysis, improve security operations center efficiency, and ultimately do more with less. Generative AI can provide a leap beyond how we apply AI and ML today.

Recent advancements, including in LLMs and other generative AI technologies, will further support efforts to improve security across the IT industry, notably by using frontline intelligence to help organizations combat the threats that matter most. AI isn’t an immediate silver bullet for security’s biggest pains. Still, the possibilities for AI in security, especially around security-focused LLMs such as the Security AI Workbench we announced this week at the RSA Conference, open up powerful use cases and broad opportunities for AI to help security teams be even more effective.