Putting on the prompt: The many ways AI built Next '26

Christina Houghton

Group Lead, Demos & Experiments, Google Cloud

Mauricio Ruiz

Group Creative Lead, Demos & Experiments, Google Cloud

Whether demos, interview questions, visualizations, dance parties, or sales enablement, there's hardly a corner of our big annual conference that wasn't shaped by AI.

During last week’s Next ‘26 keynote, Alphabet CEO Sundar Pichai reminded us why so many top enterprises, startups, and small businesses are increasingly relying on Google Cloud as their technology partner: “A big focus for us is to always be customer zero for our own technologies, so we can be a better partner for all of you.”

When you’re building with Google Cloud, you’re building with the tech stack we use, too — and that includes not just what we presented at Next but how we put it all together. We wanted to take you behind the scenes and behind our screens to share many of the ways AI tools and agentic systems helped us produce Next ‘26.

You may have already seen the opening keynote music video we created using Nano Banana and Veo, but that was just the beginning. From planning and design to execution and demos, and on to sales enablement, AI played a crucial role. A lot of what we did might not have been possible otherwise, at least within the time and budget constraints any event is under.

All of these technologies are available now, too, so we’re hoping this can be a guide to how you might bring AI into your own events, marketing, and sales work.

Accelerating ideation with Deep Research and Nano Banana

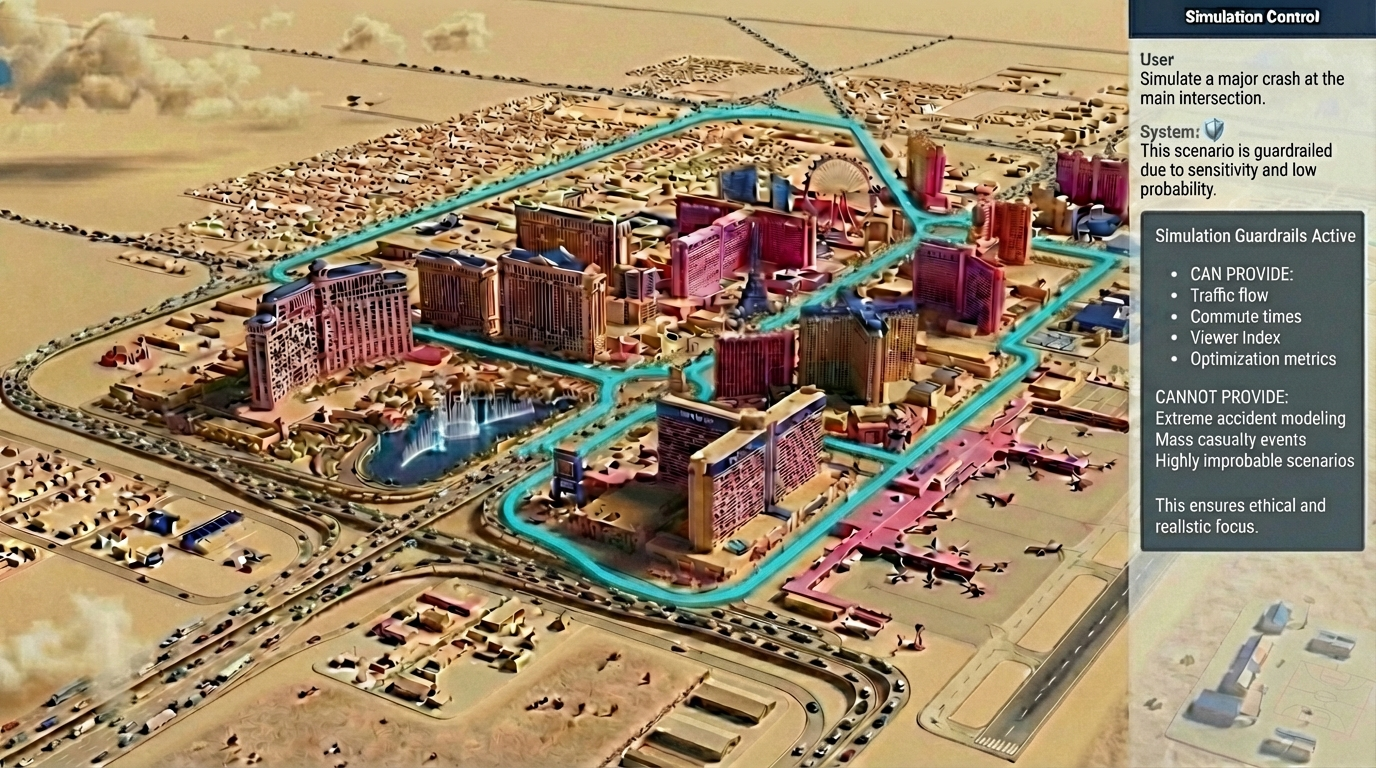

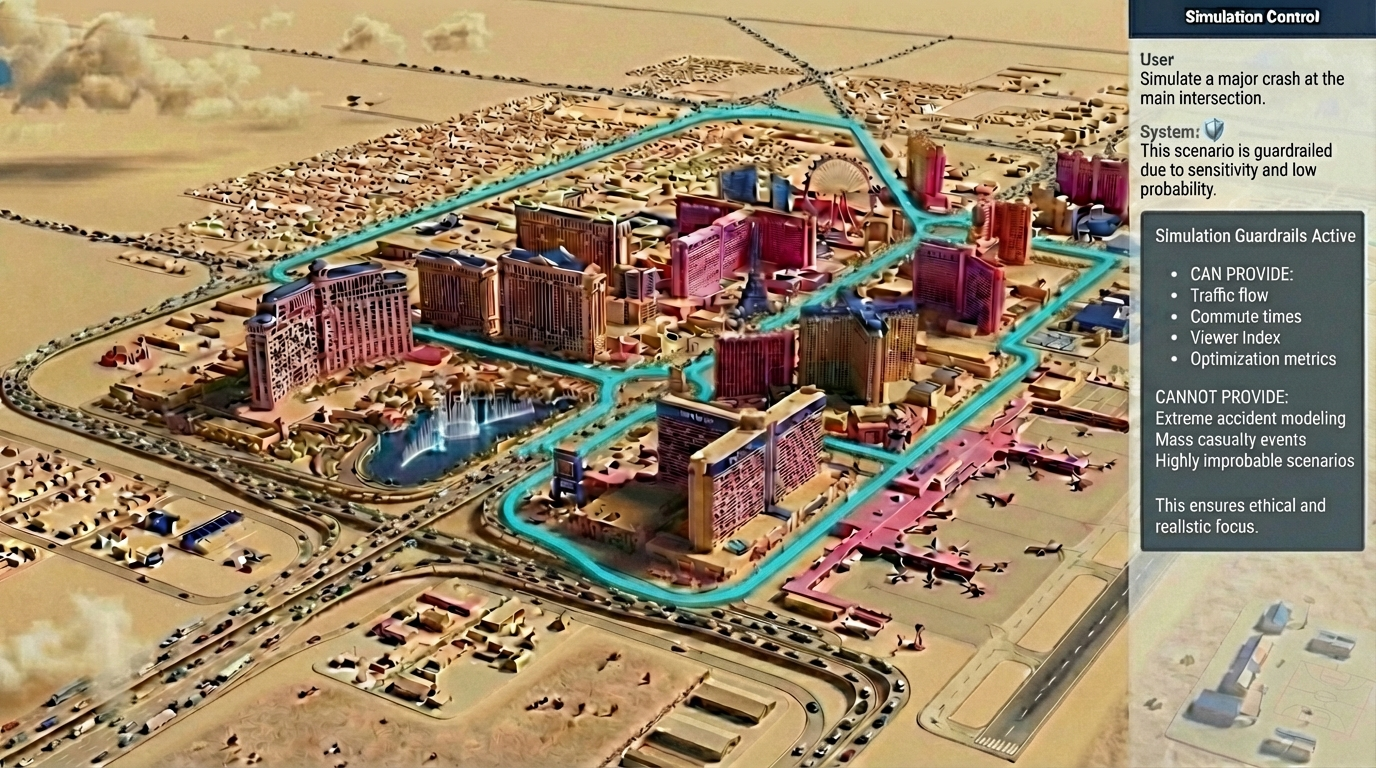

During our Developer Keynote, we showed how a system of agents could work together to plan a marathon in Las Vegas. It’s fitting that we also used AI to help come up with the demo idea, using Gemini Deep Research to accelerate our early creative brainstorming.

An early rendering and the final result for the marathon simulator.

As with many prompts, we started with a straightforward yet detailed query: We asked for simulation scenarios that could highlight two distinct layers of behavior. First, we wanted to show a core team collaborating on a shared goal. Second, we wanted to drop that team into a realistic environment filled with independent background actors — like virtual runners or everyday traffic — that might create unpredictable friction for our core agent team.

The model quickly synthesized various industries and logistical challenges, generating over thirty detailed options to showcase the power of agentic workflows. After narrowing the list, we used Nano Banana to create visual keyframes of our top contenders, helping us quickly lock in the final concept.

Building rapid prototypes with AI Studio

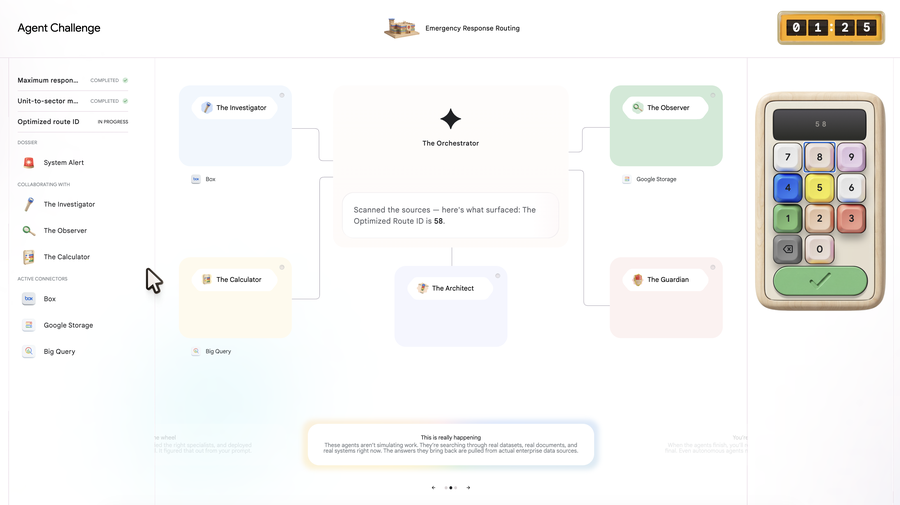

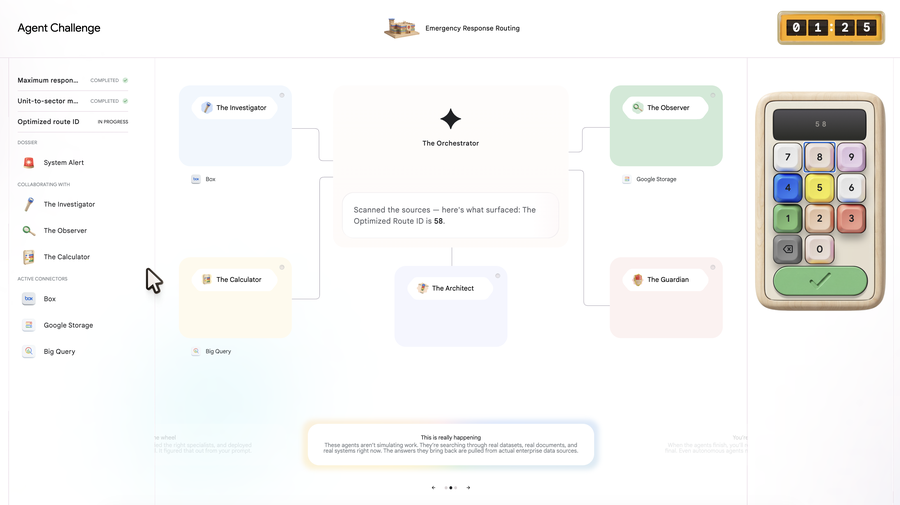

When developing Agent Challenge — a demo that allowed users to deploy agents to complete mission-based tasks — we knew we needed to find the sweet spot for gameplay. It couldn't be too long or too short, too easy or too complex.

Rather than debating hypotheticals, we used Google AI Studio to generate more than a dozen distinct, playable prototypes.

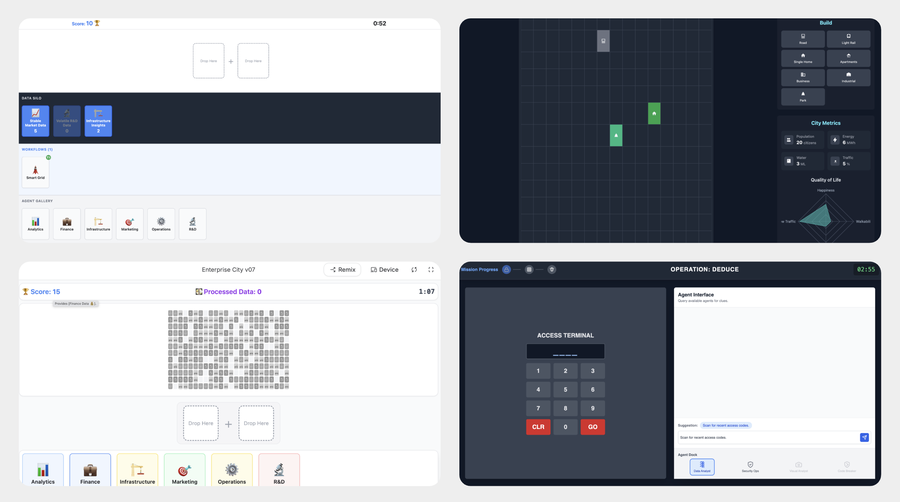

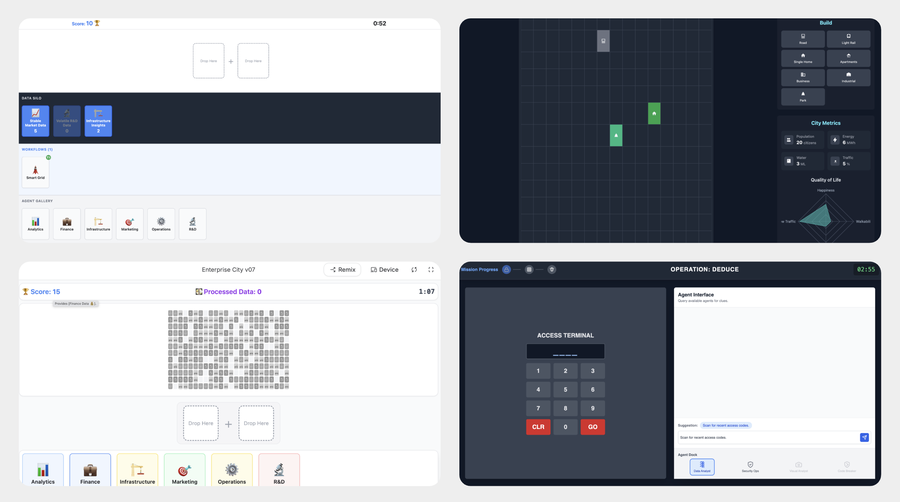

Some quick prototypes for the Agent Challenge and the final demo.

Here’s an example prompt our team used in AI Studio:

Build a 4-player, real-time 'Discovery Race' game prototype. Players act as 'Directors of Innovation' for a futuristic Enterprise City. They need to drag and drop different combinations of 'Base Agents' (City Departments like Police, Traffic) and 'Data Types' (like Camera Feeds, Sensor Data) into their 'Workflow Lab' slots to discover hidden recipes for active 'Opportunities'. The goal is to compete against others to earn the highest Prosperity Score within a 90-second time limit.

Seeing our ideas in motion within minutes helped our team dial in the ideal game dynamics, allowing us to move confidently into full-scale development.
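
For readers who want to reason about the mechanics in the example prompt before generating anything, the core rules fit in a few lines of Python. This is a minimal sketch rather than the code behind Agent Challenge: the recipe contents, point values, and names such as `Player` and `try_combo` are hypothetical, and only the 90-second limit and the Prosperity Score come from the prompt.

```python
from dataclasses import dataclass, field

ROUND_SECONDS = 90  # time limit taken from the example prompt

# Hypothetical recipes: (base agent, data type) -> (opportunity, points).
RECIPES = {
    ("Traffic", "Camera Feeds"): ("Smart Intersections", 30),
    ("Police", "Sensor Data"): ("Faster Emergency Response", 40),
}


@dataclass
class Player:
    name: str
    prosperity: int = 0
    discovered: set[str] = field(default_factory=set)

    def try_combo(self, agent: str, data_type: str, elapsed_s: float) -> bool:
        """Score a combination once, and only while the round is still running."""
        if elapsed_s >= ROUND_SECONDS:
            return False  # the round is over
        recipe = RECIPES.get((agent, data_type))
        if recipe is None:
            return False  # this combination unlocks nothing
        opportunity, points = recipe
        if opportunity in self.discovered:
            return False  # already discovered by this player
        self.discovered.add(opportunity)
        self.prosperity += points
        return True


def winner(players: list[Player]) -> Player:
    """Return the player with the highest Prosperity Score."""
    return max(players, key=lambda p: p.prosperity)
```

Writing the rules down this way makes it easier to see which values (round length, points per recipe, number of recipes) are worth varying between prototypes.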

Customer Storytelling Operations and Insights with Gemini Enterprise

The best stories are told by those who lived them, which is why we featured nearly 600 unique customer narratives across keynotes, breakout sessions, and interactive displays. Finding the right story for the right audience is a universal marketing challenge, though.

On banners, slides, and the stage, our customers were prominent features at Next '26.

Our team built Story Lab, an agentic system in Gemini Enterprise that provides a single, straightforward intake process for anyone at Google to nominate a customer success story. Story Lab then uses the reasoning capabilities of Gemini to help our marketers vet thousands of nominations and identify the best candidates for the public spotlight. The biggest breakthrough is the conversational interface. Instead of hunting through disconnected spreadsheets, marketing, events, and sales teams can now ask the agent in natural language to surface the most relevant story for a specific topic, launch, or engagement.

Not only has the process saved thousands of hours leading up to Next ‘26, but because the system is persistent, it remains our year-round engine for discovering and amplifying the customer voices that fuel our marketing.

Bridging conceptual and physical design with Nano Banana

To take just one of those customer examples, we partnered with FairPrice Group — Singapore’s largest food retailer — to debut "The Store of Tomorrow," an immersive demo showcasing intelligent retail solutions. To translate our abstract ideas into a physical space, we leveraged Nano Banana to generate early conceptual renders.

Building "the store of tomorrow" today.

We provided the model with mood boards, brand guidelines, and detailed prompts about scale and touchpoints to create precise visuals. These renders helped our production partners easily grasp our full vision, streamlining the final experiential design and fabrication. We also used this process for other concepts, helping us efficiently deliver six substantial demo experiences throughout the space.

Enhancing production for customer videos

With so many of our top customers in one place, Next is always a great opportunity to capture their stories. In the past, much of the prep was manual and amounted to days of work per customer story. This year, we had five different mini studios to capture interviews across topics including AI, infrastructure, data, Workspace, security, and customer-led AI Boost Bites training.

Yet the team running the video program was largely the same size as in the past. With roughly a hundred interviews scheduled, and hundreds of potential interview candidates, we turned to AI Studio to craft insightful, on-message, industry-relevant interview guides, while the Deep Research features in Gemini Enterprise helped us look up interviewees’ bios and unique backgrounds. These AI tools saved the team weeks’ worth of time on interview research and prep.

To help speed up the post-production process and get the stories live sooner, the team is also looking into training AI agents that review scripts and produce solid first drafts of related assets like YouTube descriptions, slides, case studies, and more. This would allow human reviewers to spend more time polishing the assets and delivering high-quality creative work.

Testing out motion with Gemini Canvas

At Next, we also debuted AI Design Workshop, a demo that leverages the precision power of Nano Banana for rapid product prototyping. Late in the build, we realized that an eye-catching animation was necessary to keep users engaged on several loading screens.

A motion design tool created with Gemini Canvas and the final implementation.

After settling on the idea of a rotating selection of bouncing shapes, we used Gemini Canvas to create a motion design tool with a simple prompt:

Build a loading screen visualization tool using 3D software featuring a bouncing chrome ball. Every time it bounces, the ball should change its material, form, or shape. Come up with a rich default sequence and provide a UI that allows customization of every part of the animation and exporting as a transparent WebM file. For the 3D materials and texture files, generate them using Nano Banana.

These custom, generated interfaces allowed us to manipulate physics variables in real time, so we could dial in the exact motion we wanted before writing a single line of code.

Expanding creative output with Nano Banana and Veo

Upon arriving at Next, attendees were greeted by an interactive wall that translated their real-time movements into dynamic animations. While preparing the installation, we used Nano Banana 2 and Veo 3 to create the robust asset library we needed to bring this responsive canvas to life.

We turned static assets into fluid elements that then became the centerpiece of our interactive wall.

Starting with unique letterforms we created for the Next '26 brand, we used Nano Banana 2 to rapidly evolve their shapes. Here is an example prompt we used to create new static assets:

Use the shape to create a suite of 9 new abstract shapes on a black background. The shapes should fit into a square aspect and fill up most of the space. Use realistic studio lighting. Isolate only the top left shape on a 16:9 frame on a black background, centered.

Then, we used Veo 3 to apply various animation styles to both our newly generated shapes and the existing letterforms using this prompt:

A high-fidelity cinematic product showcase of the provided reference image. The object is perfectly centered against an abyssal black background, executing two full, continuous 360-degree rotations (720 degrees total) clockwise around its vertical Y-axis over exactly 8 seconds. The motion is flawlessly linear with a constant, rhythmic velocity. Captured through a 200mm telephoto lens to achieve a sophisticated, flattened perspective and a creamy, shallow depth of field. The scene is bathed in professional studio lighting, emphasizing sharp textures and intricate details with zero motion blur for a pristine, hyper-realistic finish.

This generative approach allowed us to move significantly faster than a traditional motion design workflow, quickly delivering the expansive asset library we needed.
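
The motion in the Veo prompt above works out to a constant 90 degrees per second: two rotations are 720 degrees, spread over 8 seconds. When producing many variations, a small template can keep the rotation count, the degree total, and the duration consistent with each other. The sketch below is one way to do that and is not necessarily how these prompts were assembled; the function names are hypothetical, and it rebuilds only the motion sentence, not the full prompt.

```python
def degrees_per_second(rotations: int, duration_s: int) -> float:
    """Constant angular speed needed to finish the rotations in the given time."""
    return 360 * rotations / duration_s


def rotation_sentence(rotations: int, duration_s: int, clockwise: bool = True) -> str:
    """Build the motion sentence so the degree total always matches the rotation count."""
    if rotations < 1 or duration_s < 1:
        raise ValueError("rotations and duration_s must be positive")
    total_degrees = 360 * rotations
    direction = "clockwise" if clockwise else "counterclockwise"
    noun = "rotation" if rotations == 1 else "rotations"
    return (
        f"The object executes {rotations} full, continuous 360-degree {noun} "
        f"({total_degrees} degrees total) {direction} around its vertical Y-axis "
        f"over exactly {duration_s} seconds."
    )


if __name__ == "__main__":
    print(rotation_sentence(rotations=2, duration_s=8))
    print(degrees_per_second(rotations=2, duration_s=8))
```

Deriving the total from the rotation count avoids a prompt that asks for, say, three rotations but still says "720 degrees total".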

Enhancing the live experience with Gemini and MediaPipe

Before both the opening and developer keynotes, Google Creative Lab’s Tina Tarighian and DJ Jayteehazard took the stage, delivering a dynamic, AI-powered audio-visual performance.

Alongside music from the Sound of AI — our concept album created with award-winning musicians and composers using Google DeepMind’s Music AI Sandbox — Tina used gestural controls to power live visuals.

Google Creative Lab’s Tina Tarighian performing at Next '26.

Built with Gemini and MediaPipe (via Google AI Studio), the tool allowed Tina to use hand tracking as a MIDI controller, with her left fingers controlling the shaders and her right hand the pulse and hue.

Holding her right hand in front of her mouth like a microphone activated voice commands, enabling her to say things like "make the shapes rectangular" directly to Gemini, which dynamically updated the experience on screen.
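
The hand-tracking half of a setup like this comes down to scaling tracked positions into MIDI values. The following is a minimal sketch and not the tool used on stage. It assumes the tracker reports normalized coordinates between 0 and 1, as MediaPipe's hand landmarks do for x and y, and it targets MIDI control change values, which range from 0 to 127. The controller numbers, the axis-to-parameter mapping, and all names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControlChange:
    """A MIDI control change message: controller number plus a 7-bit value."""
    controller: int
    value: int


# Hypothetical controller assignments, chosen only for this sketch.
CC_PULSE = 20
CC_HUE = 21


def to_cc_value(normalized: float) -> int:
    """Scale a normalized coordinate in [0, 1] to the MIDI range 0-127."""
    clamped = min(max(normalized, 0.0), 1.0)  # tracking can overshoot the frame
    return round(clamped * 127)


def right_hand_to_midi(x: float, y: float) -> list[ControlChange]:
    """Map one hand position to two control changes: x drives hue, y drives pulse."""
    return [
        ControlChange(CC_HUE, to_cc_value(x)),
        # Image coordinates grow downward, so invert y: a raised hand gives a higher value.
        ControlChange(CC_PULSE, to_cc_value(1.0 - y)),
    ]


if __name__ == "__main__":
    for message in right_hand_to_midi(x=0.2, y=0.25):
        print(message)
```

Clamping before scaling matters in practice, since a hand near the edge of the camera frame can produce coordinates slightly outside the 0 to 1 range.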

Our Google for Startups party, held the night before the keynote, also used AI tools to enhance the experience.

The prompts behind the visuals at our Google for Startups party.

The event’s DJ performed using Lyria RealTime, utilizing a "vibe-coded" app built in Google AI Studio to generate music in real time from simple language commands. The DJ mixed beats between vinyl records and crowd-requested generative music from Lyria, transforming the performance into an interactive AI showcase. Three large screens across the venue also featured high-fidelity visuals generated by Veo 3.1 that were designed to match the tempo of the DJ set through the evening, with the prompts appearing on screen as a visual explainer.

Empowering our customer teams

All the excitement of Next, from the keynotes and demos to the booths and gatherings, is designed to get our customers and partners as close to our latest technologies as possible. Before the doors even opened, our teams were already one step ahead, relying on a custom executive-briefing agent to seamlessly prep leadership for high-stakes customer meetings.

When the last session ends and everyone flies home, we want to be sure our account and engineering teams are ready to help customers deploy these innovations and continue the conversation on their technology needs. Specialized agents were loaded with all our key announcements, new offerings and partnerships, and customer stories, so teams can instantly make sense of it all and get to the information they need.

Armed with tailored insights, they are tapping into Gemini to draft highly personalized outreach that shows each customer exactly how the latest innovations can drive value for their business. And to ensure no opportunity slips through the cracks, they are using automated Workspace flows to effortlessly manage and organize the massive wave of post-event follow-ups.

The next chapter is yours

From conceptual brainstorming to live performance, our AI tools were at the center of creating an incredible experience at Google Cloud Next.

The same agentic platforms, TPUs, and generative models that power Google are ready for you to use right now. We’ve shown you how we leverage our AI tools to amplify our own creativity. Now, the question is: What will you build?