PyTorch on Google Cloud: How to train PyTorch models on AI Platform

Rajesh Thallam

Machine Learning Specialist, Cloud Customer Engineer

Vaibhav Singh

Group Product Manager

PyTorch is an open source machine learning and deep learning library, primarily developed by Facebook, used in a widening range of use cases for automating machine learning tasks at scale, such as image recognition, natural language processing, translation, and recommender systems. PyTorch has been used predominantly in research, and in recent years it has gained tremendous traction in industry as well due to its ease of use and deployment.

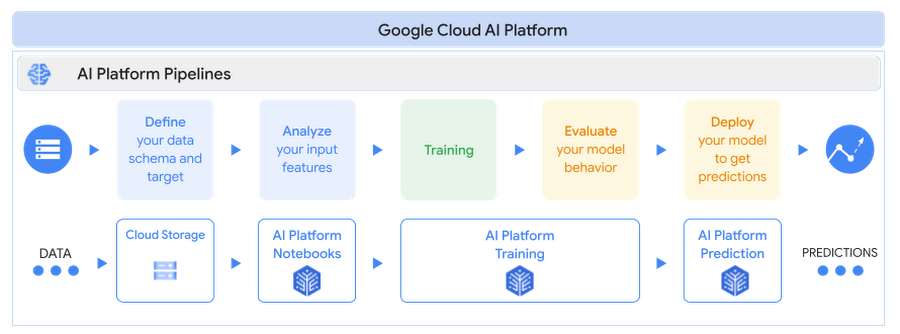

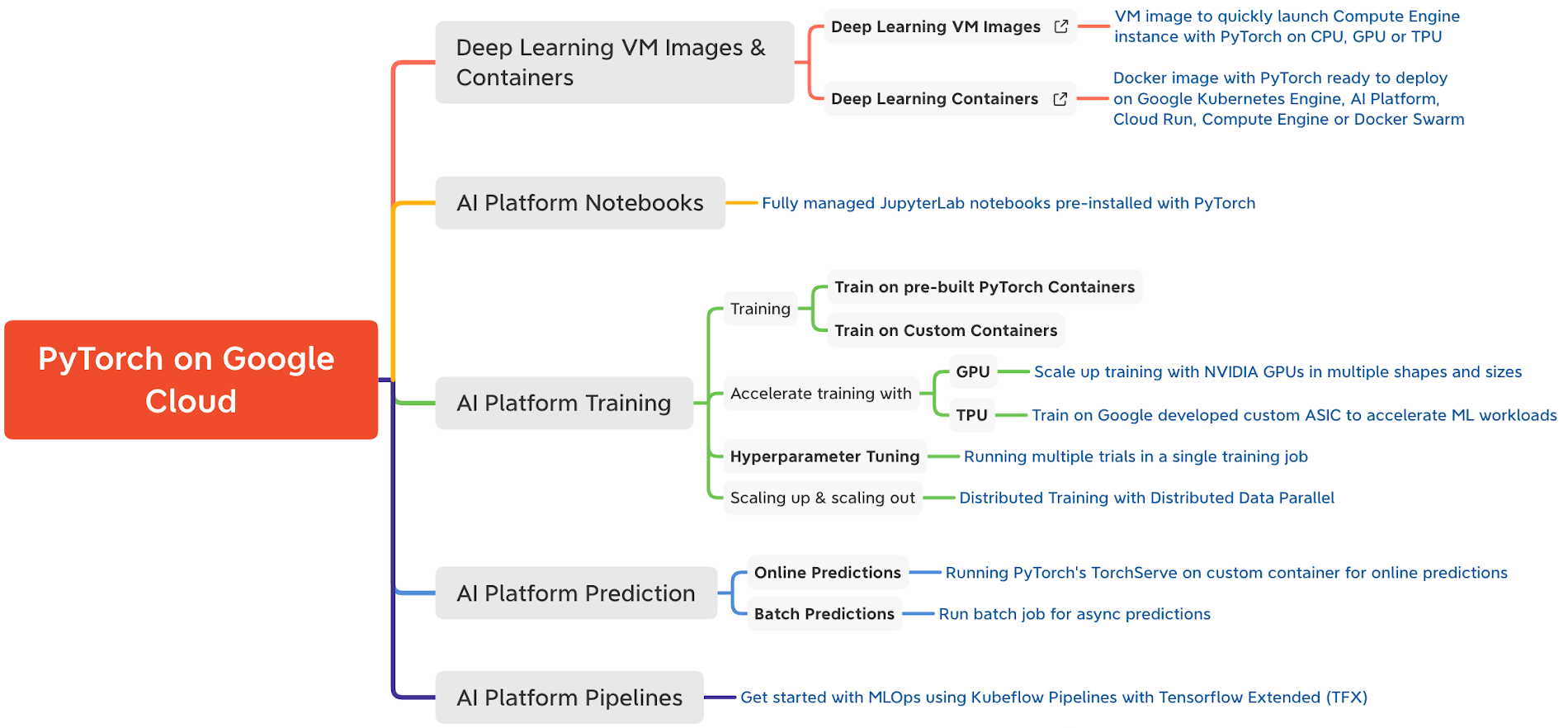

Google Cloud AI Platform is a fully managed end-to-end platform for data science and machine learning on Google Cloud. Leveraging Google's expertise in AI, AI Platform offers a flexible, scalable and reliable platform to run your machine learning workloads. AI Platform has built-in support for PyTorch through Deep Learning Containers that are performance optimized, compatibility tested and ready to deploy.

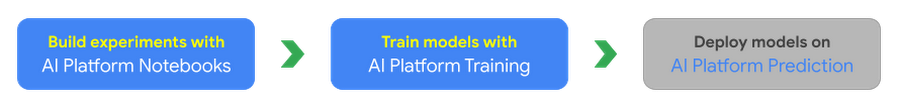

In this new series of blog posts, PyTorch on Google Cloud, we aim to share how to build, train and deploy PyTorch models at scale and how to create reproducible machine learning pipelines on Google Cloud.

Why PyTorch on Google Cloud AI Platform?

Cloud AI Platform provides flexible and scalable hardware and secured infrastructure to train and deploy PyTorch based deep learning models.

- Flexibility: AI Platform Notebooks and AI Platform Training give you the flexibility to design your compute resources to match any workload while the platform manages the bulk of the dependencies, networking and monitoring under the hood. Spend your time building models, not worrying about infrastructure.

- Scalability: Run your experiments with AI Platform Notebooks using pre-built PyTorch containers or custom containers and scale your code with high availability using AI Platform Training by training models on GPUs or TPUs.

- Security: AI Platform leverages the same global scale technical infrastructure designed to provide security through the entire information processing lifecycle at Google.

- Support: AI Platform collaborates closely with PyTorch and NVIDIA to ensure top-notch compatibility between AI Platform and NVIDIA GPUs including PyTorch framework support.

Here is a quick reference of support for PyTorch on Google Cloud

In this post, we will cover:

- Setting up a PyTorch development environment on JupyterLab notebooks with AI Platform Notebooks

- Building a sentiment classification model using PyTorch and training on AI Platform Training

You can find the accompanying code for this blog post on the GitHub repository and the Jupyter Notebook.

Let’s get started!

Use case and dataset

In this article, we will fine-tune a transformer model (BERT-base) from the Hugging Face Transformers library for a sentiment analysis task using PyTorch. BERT (Bidirectional Encoder Representations from Transformers) is a Transformer model pre-trained on a large corpus of unlabeled text in a self-supervised fashion. We will begin experimentation with the IMDB sentiment classification dataset on AI Platform Notebooks. We recommend using an AI Platform Notebooks instance with limited compute for development and experimentation purposes. Once we are satisfied with the local experiment on the notebook, we show how you can submit the same Jupyter notebook to the AI Platform Training service to scale the training with bigger GPU shapes. The AI Platform Training service optimizes the training pipeline by spinning up infrastructure for the training job and spinning it down after the training is complete, without you having to manage the infrastructure.

In upcoming posts, we will show how you can deploy and serve these PyTorch models on AI Platform Prediction service.

Creating a development environment on AI Platform Notebooks

We will be working with JupyterLab notebooks as a development environment on AI Platform Notebooks. Before you begin, you must set up a project on Google Cloud Platform with the AI Platform Notebooks API enabled.

Please note that you will be charged when you create an AI Platform Notebooks instance. You pay only for the time your notebook instance is up and running. You can stop the instance, which saves your work and charges only for the boot disk storage until you restart it. Please delete the instance after you are done.

You can create an AI Platform Notebooks instance:

- Using the pre-built PyTorch image from AI Platform Deep Learning VM (DLVM) Image or

- Using a custom container with your own packages

Creating a Notebook instance with the pre-built PyTorch DLVM image

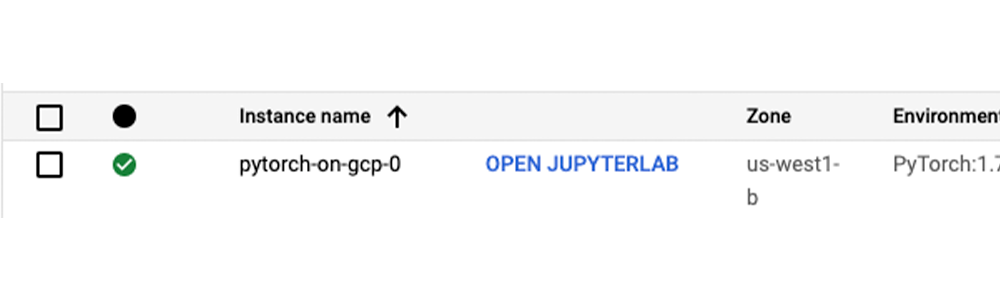

AI Platform Notebooks instances are AI Platform Deep Learning VM Image instances with JupyterLab notebook environments enabled and ready for use. AI Platform Notebooks offers a PyTorch image family supporting multiple PyTorch versions. You can create a new notebook instance from Google Cloud Console or the command line interface (CLI). We will use the gcloud CLI to create the Notebook instance with an NVIDIA Tesla T4 GPU. From Cloud Shell or any terminal where Cloud SDK is installed, run the gcloud notebooks instances create command to create a new notebook instance.

To interact with the new notebook instance, go to the AI Platform Notebooks page in the Google Cloud Console and click the “OPEN JUPYTERLAB” link next to the new instance, which becomes active when it’s ready to use.
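As a sketch of the instance creation step described above, the following Python snippet assembles a gcloud notebooks instances create command and prints it in a form you can paste into a terminal. The instance name, image family, machine type, zone and disk size are assumptions chosen for illustration, and notebook_create_command is a hypothetical helper; check the flags against the current gcloud reference before running.

```python
import shlex


def notebook_create_command(
    instance_name="example-instance",            # assumed name
    image_family="pytorch-1-7-cu110-notebooks",  # assumed PyTorch DLVM image family
    location="us-central1-a",                    # assumed zone
):
    """Build the gcloud command that creates a notebook instance with one T4 GPU."""
    return [
        "gcloud", "notebooks", "instances", "create", instance_name,
        "--vm-image-project=deeplearning-platform-release",
        f"--vm-image-family={image_family}",
        "--machine-type=n1-standard-4",
        f"--location={location}",
        "--boot-disk-size=100",
        "--accelerator-core-count=1",
        "--accelerator-type=NVIDIA_TESLA_T4",
        "--install-gpu-driver",
        "--network=default",
    ]


command = notebook_create_command()
# Print a shell-ready version of the command for review.
print(shlex.join(command))
# To create the instance from Python instead of a terminal:
#   import subprocess; subprocess.run(command, check=True)
```

Building the command as a list keeps each flag visible and avoids shell quoting issues if you later run it with subprocess.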

Most of the libraries needed for experimenting with PyTorch have already been installed on the new instance with the pre-built PyTorch DLVM image. To install additional dependencies, run %pip install <package-name> from the notebook cells. For the sentiment classification use case, we will be installing additional packages such as Hugging Face transformers and datasets libraries.

Notebook instance with custom container

An alternative to installing dependencies with pip in the Notebook instance is to package the dependencies inside a Docker container image derived from AI Platform Deep Learning Container images and create a custom container. You can use this custom container for creating AI Platform Notebooks instances or AI Platform Training jobs. Here is an example to create a Notebook instance using a custom container.

1. Create a Dockerfile with one of the AI Platform Deep Learning Container images as base image (here we are using PyTorch 1.7 GPU image) and run/install packages or frameworks you need. For the sentiment classification use case include transformers and datasets.

2. Build the image from the Dockerfile using Cloud Build from a terminal or Cloud Shell, and note the image location gcr.io/{project_id}/{image_name}.

3. Create a notebook instance with the custom image created in step #2 using the command line.
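The three steps above can be sketched as follows. The Dockerfile text, the exact base image tag, the project, image and instance names, and the custom_notebook_commands helper are all assumptions for illustration; the snippet only prints the commands so you can review them before running anything.

```python
import shlex

# Step 1: Dockerfile contents (save this text as a file named "Dockerfile").
# The base image tag is an assumed PyTorch 1.7 GPU Deep Learning Container.
DOCKERFILE = """\
FROM gcr.io/deeplearning-platform-release/pytorch-gpu.1-7
RUN pip install transformers datasets
"""


def custom_notebook_commands(project_id, image_name, instance_name,
                             location="us-central1-a"):
    """Return the Cloud Build command and the notebook creation command."""
    image_uri = f"gcr.io/{project_id}/{image_name}"
    # Step 2: build the image with Cloud Build and push it to the registry.
    build = ["gcloud", "builds", "submit", "--tag", image_uri, "."]
    # Step 3: create a notebook instance that runs the custom container.
    create = [
        "gcloud", "notebooks", "instances", "create", instance_name,
        f"--container-repository={image_uri}",
        "--container-tag=latest",
        "--machine-type=n1-standard-4",
        f"--location={location}",
    ]
    return [build, create]


# Hypothetical project, image and instance names.
commands = custom_notebook_commands("my-project", "pytorch-custom", "example-instance")
for command in commands:
    print(shlex.join(command))
```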

Training a PyTorch model on AI Platform Training

After creating the AI Platform Notebooks instance, you can start with your experiments. Let’s look into the model specifics for the use case.

The model specifics

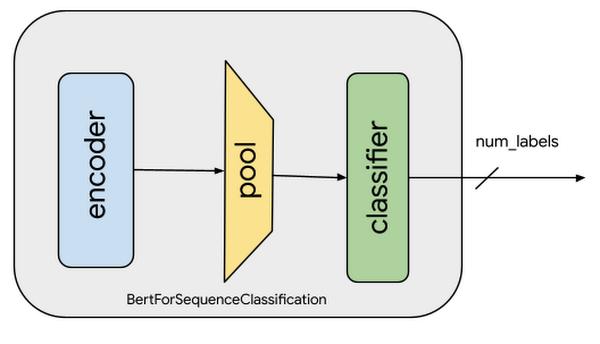

To analyze the sentiment of movie reviews in the IMDB dataset, we will fine-tune a pre-trained BERT model from Hugging Face. Fine-tuning involves taking a model that has already been trained for a given task and then tweaking the model for another similar task. Specifically, the tweaking involves replicating all the layers in the pre-trained model, including weights and parameters, except the output layer, and then adding a new output classifier layer that predicts labels for the current task. The final step is to train the output layer from scratch, while the parameters of the pre-trained layers are either frozen or updated only slightly with a small learning rate. This allows learning from the pre-trained representations and "fine-tuning" the higher-order feature representations to be more relevant for the concrete task, such as analyzing sentiments in this case.
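For contrast with the unfrozen setup used later in this post, here is a minimal PyTorch sketch of the frozen variant of fine-tuning. It uses a toy two-layer network as a stand-in for the pre-trained layers rather than BERT itself; the layer sizes, learning rate and random data are assumptions chosen only to show which weights move during a training step.

```python
import torch
from torch import nn

torch.manual_seed(0)

# Toy stand-ins (assumptions): "encoder" plays the role of the pre-trained
# layers, "head" is the newly added output classifier.
encoder = nn.Sequential(nn.Linear(16, 8), nn.ReLU())
head = nn.Linear(8, 2)
model = nn.Sequential(encoder, head)

# Freeze the pre-trained layers so that only the new head receives updates.
for param in encoder.parameters():
    param.requires_grad = False

trainable = [name for name, p in model.named_parameters() if p.requires_grad]
print("trainable parameters:", trainable)

# Hand only the trainable parameters to the optimizer.
optimizer = torch.optim.AdamW(
    [p for p in model.parameters() if p.requires_grad], lr=1e-3
)

# One training step on random data, just to observe which weights change.
inputs = torch.randn(4, 16)
labels = torch.tensor([0, 1, 0, 1])
encoder_before = encoder[0].weight.detach().clone()
head_before = head.weight.detach().clone()

loss = nn.functional.cross_entropy(model(inputs), labels)
loss.backward()
optimizer.step()

encoder_unchanged = torch.equal(encoder_before, encoder[0].weight.detach())
head_changed = not torch.equal(head_before, head.weight.detach())
print("encoder unchanged:", encoder_unchanged, "| head changed:", head_changed)
```

Skipping the freezing loop and lowering the learning rate gives the other variant, in which every layer is updated slightly.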

For the sentiment analysis scenario here, the pre-trained BERT model already encodes a lot of information about the language, as the model was trained on a large corpus of English data in a self-supervised fashion. Now we only need to slightly tune its weights, using its outputs as features for the sentiment classification task. This means quicker development iteration on a much smaller dataset, instead of training a task-specific Natural Language Processing (NLP) model from scratch with a larger training dataset.

For training the sentiment classification model, we will:

- Preprocess and transform (tokenize) the reviews data

- Load the pre-trained BERT model and add the sequence classification head for sentiment analysis

- Fine-tune the BERT model for sentence classification
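The three steps above could be sketched roughly as follows with the Hugging Face transformers and datasets libraries. This is a sketch under stated assumptions, not the code from the accompanying notebook: the checkpoint name bert-base-cased, the maximum sequence length, the batch size, the number of epochs, the weight decay and the function names are illustrative choices. The heavy libraries are imported inside fine_tune so the two small helpers can be tried on their own.

```python
import numpy as np


def tokenize_batch(tokenizer, batch, max_length=128):
    """Step 1: tokenize a batch of reviews into fixed-length model inputs."""
    return tokenizer(
        batch["text"], padding="max_length", truncation=True, max_length=max_length
    )


def compute_metrics(eval_pred):
    """Compute accuracy from the (logits, labels) pair produced at evaluation."""
    logits, labels = eval_pred
    predictions = np.argmax(logits, axis=-1)
    return {"accuracy": float((predictions == labels).mean())}


def fine_tune(model_name="bert-base-cased", output_dir="./finetuned-bert"):
    """Steps 2 and 3: load BERT with a classification head and fine-tune it."""
    # Imported here so the helpers above work without these packages installed.
    from datasets import load_dataset
    from transformers import (
        AutoModelForSequenceClassification,
        AutoTokenizer,
        Trainer,
        TrainingArguments,
    )

    tokenizer = AutoTokenizer.from_pretrained(model_name)
    dataset = load_dataset("imdb").map(
        lambda batch: tokenize_batch(tokenizer, batch), batched=True
    )

    # Adds a freshly initialized two-label classification head on the encoder.
    # The encoder weights stay trainable (nothing is frozen here).
    model = AutoModelForSequenceClassification.from_pretrained(
        model_name, num_labels=2
    )

    training_args = TrainingArguments(
        output_dir=output_dir,
        learning_rate=2e-5,              # small rate to preserve pre-trained weights
        per_device_train_batch_size=16,  # assumed value
        num_train_epochs=1,              # assumed value
        weight_decay=0.01,               # assumed value
    )
    trainer = Trainer(
        model=model,
        args=training_args,
        train_dataset=dataset["train"],
        eval_dataset=dataset["test"],
        compute_metrics=compute_metrics,
    )
    trainer.train()
    return trainer.evaluate()
```

Calling fine_tune() downloads the dataset and the checkpoint, so run it on an instance with a GPU and network access.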

In this setup, notice that the encoder (also referred to as the base model) weights are not frozen. This is why a very small learning rate (2e-5) is chosen, to avoid losing the pre-trained representations. The learning rate and other hyperparameters are captured in the TrainingArguments object. During training, we capture only the accuracy metric. You can modify the compute_metrics function to capture and report other metrics.

We will explore integration with Cloud AI Platform Hyperparameter Tuning Service in the next post of this series.

Training the model on Cloud AI Platform

While you can do local experimentation on your AI Platform Notebooks instance, larger datasets or models often require a vertically scaled compute resource or horizontally distributed training. The most effective way to do this is to use the AI Platform Training service. AI Platform Training creates the designated compute resources required for the task, performs the training task, and deletes the compute resources once the training job is finished.

Before running the training application with AI Platform Training, the training application code with required dependencies must be packaged and uploaded into a Google Cloud Storage bucket that your Google Cloud project can access. There are two ways to package the application and run on AI Platform Training:

- Package the application and Python dependencies manually using Python setuptools

- Use custom containers to package dependencies using Docker containers

You can structure your training code in any way you prefer. Please refer to the GitHub repository or Jupyter Notebook for our recommended approach on structuring training code.

Using Python packaging to build manually

For this sentiment classification task, we have to package the training code with standard Python dependencies (transformers, datasets and tqdm) in the setup.py file. The find_packages() function inside setup.py discovers the training code's Python packages so that they are included in the distribution.

Now, you can submit the training job to Cloud AI Platform Training using the gcloud command from Cloud Shell or a terminal with the Cloud SDK installed. The gcloud ai-platform jobs submit training command stages the training application on a GCS bucket and submits the training job. We are attaching 2 NVIDIA Tesla T4 GPUs to the training job to accelerate the training.
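A hedged sketch of the job submission described above follows. The job name, bucket path, region, machine type, container image URI, the trainer package layout (./trainer with a trainer.task module) and the --model-name argument passed to the training code are assumptions for illustration; submit_training_command is a hypothetical helper that only builds the command.

```python
import shlex


def submit_training_command(job_name, job_dir, region="us-central1"):
    """Build a gcloud command that submits a packaged PyTorch training job."""
    return [
        "gcloud", "ai-platform", "jobs", "submit", "training", job_name,
        f"--region={region}",
        # Assumed pre-built PyTorch 1.7 GPU training container.
        "--master-image-uri=gcr.io/cloud-ml-public/training/pytorch-gpu.1-7",
        "--scale-tier=CUSTOM",
        "--master-machine-type=n1-standard-8",
        # Attach 2 NVIDIA Tesla T4 GPUs to the training job.
        "--master-accelerator=type=nvidia-tesla-t4,count=2",
        f"--job-dir={job_dir}",
        "--package-path=./trainer",    # assumed package directory
        "--module-name=trainer.task",  # assumed entry-point module
        "--",                          # arguments after this go to the trainer
        "--model-name=finetuned-bert-classifier",  # hypothetical trainer argument
    ]


# Hypothetical job name and bucket path.
command = submit_training_command(
    "pytorch_bert_job_001", "gs://my-bucket/pytorch-bert/job-dir"
)
print(shlex.join(command))
```

Everything before the bare `--` configures the job itself, while everything after it is forwarded to the training module.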

Training with custom containers

To create a training job with a custom container, you have to define a Dockerfile to install the dependencies required for the training job. Then, you build and test your Docker image locally to verify it before using it with AI Platform Training.

Before submitting the training job, you need to push the image to Google Cloud Container Registry and then submit the training job to Cloud AI Platform Training using the gcloud ai-platform jobs submit training command.
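The build, push and submit sequence described above might look like the sketch below. The project, image and job names, the region, the machine configuration and the --model-name trainer argument are assumptions, and custom_container_training_commands is a hypothetical helper that returns the commands in the order you would run them.

```python
import shlex


def custom_container_training_commands(project_id, image_name, job_name,
                                       region="us-central1"):
    """Return the docker and gcloud commands for a custom container job."""
    image_uri = f"gcr.io/{project_id}/{image_name}"
    return [
        # Build the training image locally from the Dockerfile.
        ["docker", "build", "-f", "Dockerfile", "-t", image_uri, "."],
        # Push the image to Container Registry.
        ["docker", "push", image_uri],
        # Submit the training job that runs the pushed image.
        [
            "gcloud", "ai-platform", "jobs", "submit", "training", job_name,
            f"--region={region}",
            f"--master-image-uri={image_uri}",
            "--scale-tier=CUSTOM",
            "--master-machine-type=n1-standard-8",
            "--master-accelerator=type=nvidia-tesla-t4,count=2",
            "--",
            "--model-name=finetuned-bert-classifier",  # hypothetical trainer argument
        ],
    ]


# Hypothetical project, image and job names.
steps = custom_container_training_commands(
    "my-project", "pytorch-bert-train", "pytorch_bert_custom_001"
)
for step in steps:
    print(shlex.join(step))
```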

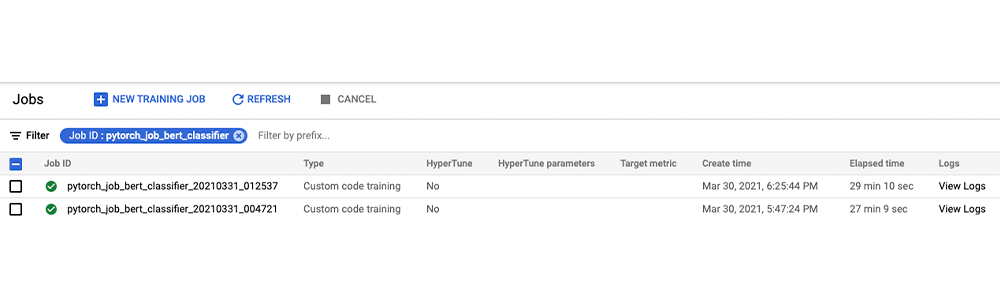

Once the job is submitted, you can monitor the status and progress of the training job either in Google Cloud Console or with gcloud commands such as gcloud ai-platform jobs describe and gcloud ai-platform jobs stream-logs.
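As a small sketch of command-line monitoring, the snippet below builds the two gcloud commands commonly used for this: one that describes the job (including its state) and one that streams its logs. The job name and the monitoring_commands helper are hypothetical.

```python
import shlex


def monitoring_commands(job_name):
    """Return gcloud commands for checking job status and streaming logs."""
    return {
        # Shows job details such as its current state.
        "describe": ["gcloud", "ai-platform", "jobs", "describe", job_name],
        # Streams the job's logs to the terminal while it runs.
        "stream_logs": ["gcloud", "ai-platform", "jobs", "stream-logs", job_name],
    }


# Hypothetical job name; use the name you submitted the job with.
commands = monitoring_commands("pytorch_bert_job_001")
for label, command in commands.items():
    print(f"{label}: {shlex.join(command)}")
```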

You can also monitor the job status and view the job logs from the Google AI Platform Jobs console.

Let’s run prediction calls on the trained model locally with a few examples (refer to the notebook for the complete code). The next post in this series will show you how to deploy this model on AI Platform Prediction service.

Cleaning up the Notebook environment

After you are done experimenting, you can either stop or delete the AI Platform Notebooks instance. Delete the instance to prevent any further charges. If you want to save your work, you can choose to stop the instance instead.

What’s next?

In this article, we explored Cloud AI Platform Notebooks as a fully customizable IDE for PyTorch model development. We then trained the model on Cloud AI Platform Training service, a fully managed service for training machine learning models at scale.

References

- Introduction to AI Platform Notebooks

- Getting started with PyTorch | AI Platform Training

- Configuring distributed training for PyTorch | AI Platform Training

- GitHub repository with code and accompanying notebook

In the next installments of this series, we will examine hyperparameter tuning on Cloud AI Platform and deploying PyTorch models on AI Platform Prediction service. We encourage you to explore the Cloud AI Platform features we have examined.

Stay tuned. Thank you for reading! Have a question or want to chat? Find authors here - Rajesh [Twitter | LinkedIn] and Vaibhav [LinkedIn].

Thanks to Amy Unruh and Karl Weinmeister for helping and reviewing the post.