Build a platform with KRM: Part 1 - What’s in a platform?

Megan O'Keefe

Senior Staff Developer Advocate

This is the first post in a multi-part series on building developer platforms with the Kubernetes Resource Model (KRM).

In today’s digital world, it’s more important than ever for organizations to quickly develop and land features, scale up, recover fast during outages, and do all this in a secure, compliant way. If you’re a developer, system admin, or security admin, you know that it takes a lot to make all that happen, including a culture of collaboration and trust between engineering and ops teams.

But building culture isn’t just about communication and shared values; it’s also about tools. When application developers have the tools and agency to code, with enough abstraction to focus on building features, they can build fast without getting bogged down in infrastructure. When security admins have streamlined processes for creating and auditing policies, engineering teams can keep building without waiting for security reviews. And when service operators have powerful, cross-environment automation at their disposal, they can support a growing business with new engineering teams without having to add more IT staff. Said another way: to deliver high-quality code fast and safely, you need a good developer platform.

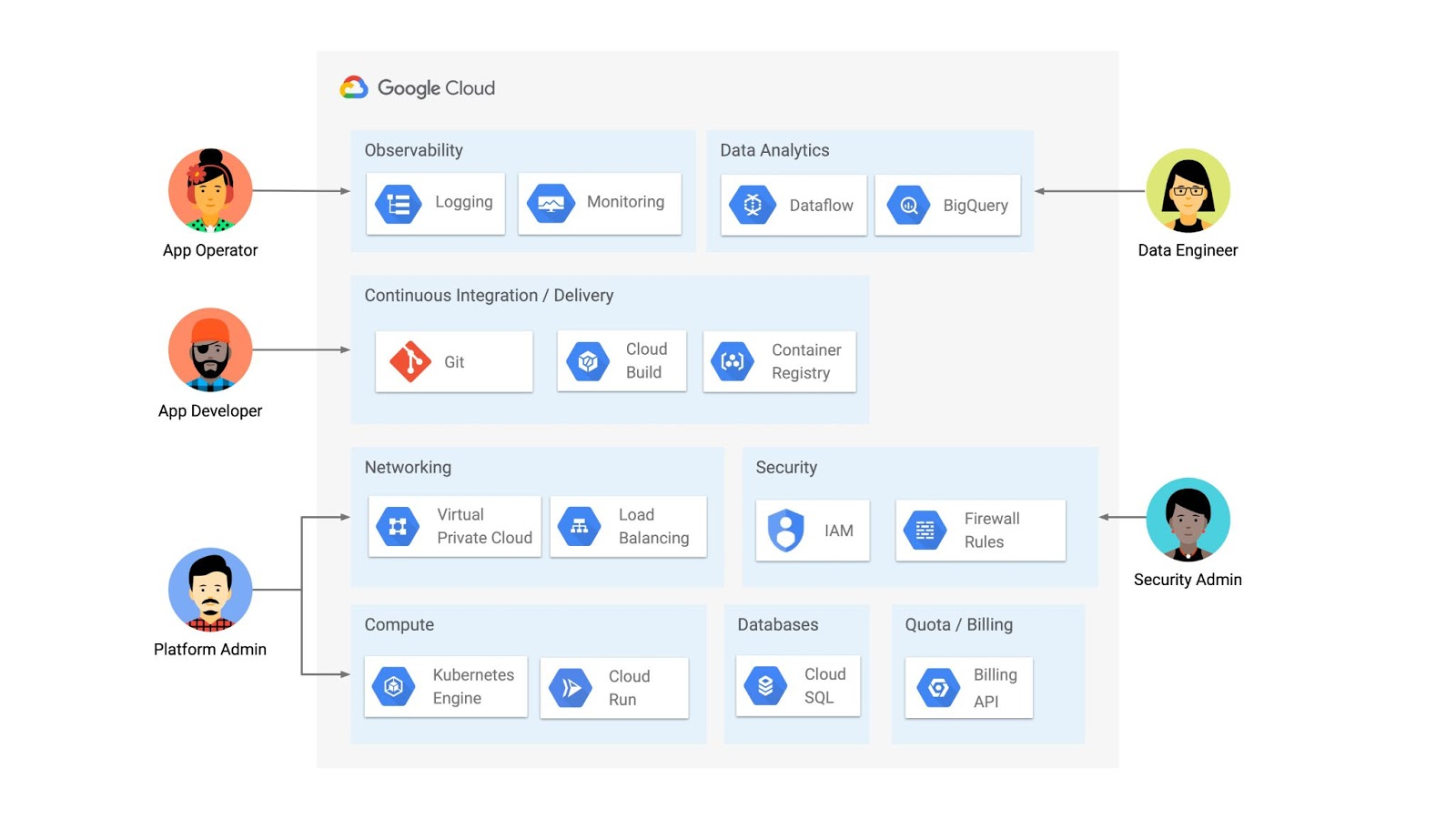

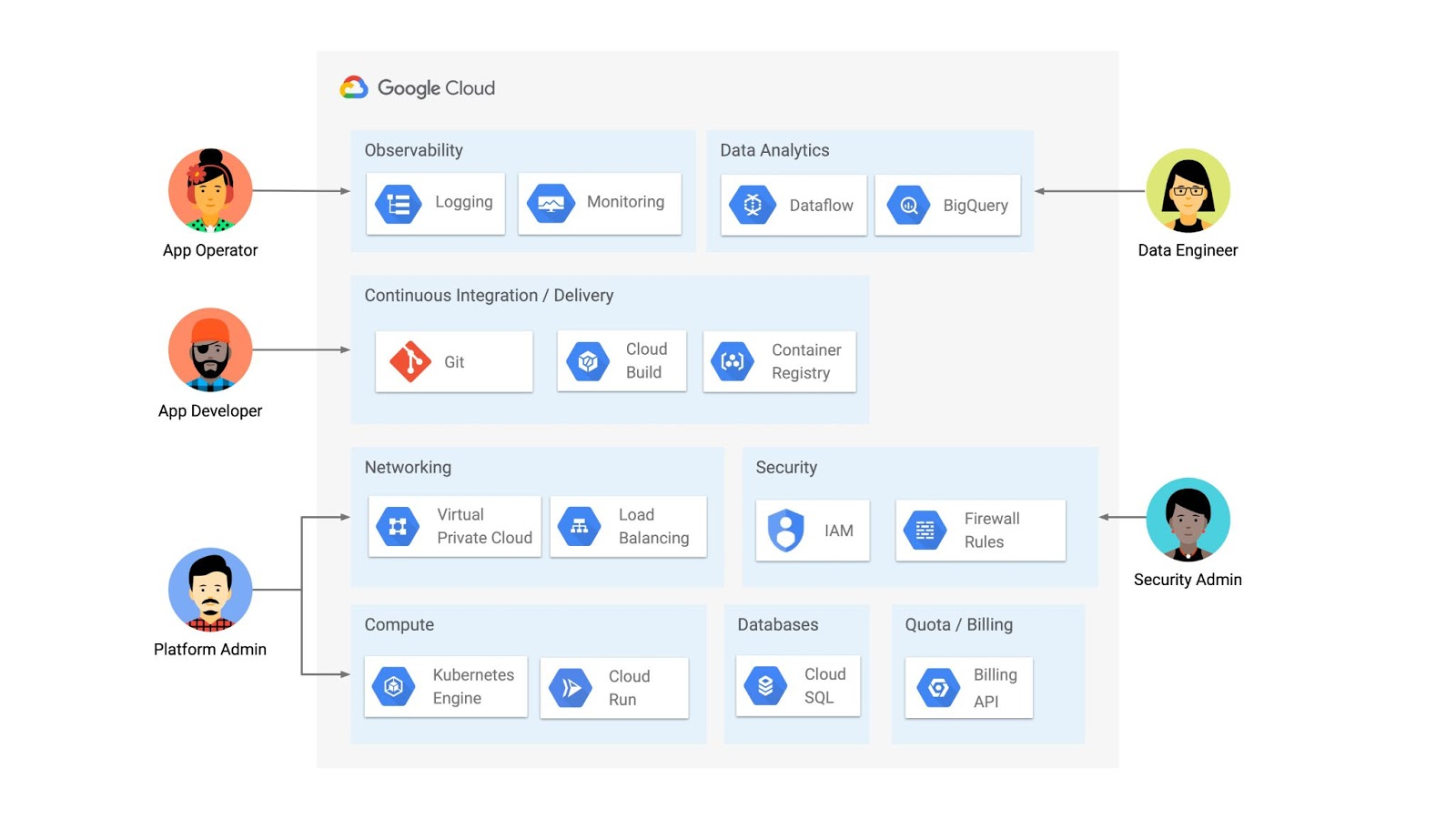

What is a platform? It’s the layers of technology that make software delivery possible, from Git repositories and test servers, to firewall rules and CI/CD pipelines, to specialized tools for analytics and monitoring, to the production infrastructure that runs the software itself.

An organization’s platform needs depend on a variety of factors, such as industry vertical, size, and security requirements. Some organizations can get by with a fully managed Platform as a Service (PaaS) like Google App Engine, and others prefer to build their platform in-house. At Google Cloud, we serve lots of customers who fall somewhere in the middle: they want more customization (and less lock-in) than an all-in-one PaaS provides, but they have neither the time nor the resources to build their own platform from scratch. These customers may come to Google Cloud with established tech preferences and goals. For example, they may want to adopt Serverless but not Service Mesh, or vice versa. An organization in this category might turn to a provider like Google Cloud to use a combination of hosted infrastructure and services, as shown in the diagram below.

But a platform isn’t just a combination of products. It’s the APIs, UIs, and command-line tools you use to interact with those products, the integrations and glue between them, and the configuration that allows you to create environments in a repeatable way. If you’ve ever tried to interact with lots of resources at once, or manage them on behalf of engineering teams, you know that there’s a lot to keep track of. So what else goes into a platform?

For starters, a platform should be human-friendly, with abstractions that depend on the user. In the diagram above, for example, the app developer focuses on writing and committing source code. Any lower-level infrastructure access can be limited to what they care about: for instance, spinning up a development environment. A platform should also be scalable: it should be possible to “stamp out” additional resources in an automated, repeatable way. A platform should be extensible, allowing an org to add new products to that diagram as their business and technology needs evolve. Finally, a platform needs to be secure and compliant with industry- and location-specific regulations.

So how do you get from a collection of infrastructure to a well-abstracted, scalable, extensible, secure platform?

You’ll see that one product icon in that diagram is Google Kubernetes Engine (GKE), a container orchestration tool based on the open-source Kubernetes project. While Kubernetes is first and foremost a “compute” tool, that’s not all it can do.

Kubernetes is unique because of its declarative design, allowing developers to declare their intent and let the Kubernetes control plane take action to “make it so.” The Kubernetes Resource Model (KRM) is the declarative format you use to talk to the Kubernetes API. Often, KRM is expressed as YAML, like the file shown below.
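As a minimal sketch of what such a file can look like, here is a Deployment that declares three replicas of a “helloworld” container. Only the name and the replica count come from the text; the labels, the sample image path, and the port are placeholder assumptions you would swap for your own.

```yaml
# Declares intent: keep three copies of the "helloworld" container running.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: helloworld
spec:
  replicas: 3                 # desired number of Pods
  selector:
    matchLabels:
      app: helloworld         # must match the Pod template labels below
  template:
    metadata:
      labels:
        app: helloworld
    spec:
      containers:
      - name: helloworld
        # Assumed sample image; replace with your own container image.
        image: gcr.io/google-samples/hello-app:1.0
        ports:
        - containerPort: 8080 # assumed port for the sample image
```

Nothing in the file says how to create or restart Pods. It only states the desired end state, and the control plane works out the steps.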

If you’ve ever run “kubectl apply” on a Deployment resource like the one above, you know that Kubernetes takes care of deploying the containers inside Pods and scheduling them onto Nodes in your cluster. And you know that if you try to manually delete the Pods, the Kubernetes control plane will bring them back up, because it still knows about your intent: that you want three copies of your “helloworld” container. The job of Kubernetes is to reconcile your intent with the running state of its resources, not just once, but continuously.

So how does this relate to platforms, and to the other products in that diagram? The answer is that deploying and scaling containers is only the beginning of what the Kubernetes control plane can do. While Kubernetes has a core set of APIs, it is also extensible, allowing developers and providers to build Kubernetes controllers for their own resources, even resources that live outside of the cluster. In fact, nearly every Google Cloud product in the diagram above, from Cloud SQL to IAM to Firewall Rules, can be managed with Kubernetes-style YAML. This allows organizations to simplify the management of those different platform pieces, using one configuration language and one reconciliation engine. And because KRM is based on OpenAPI, platform builders can create abstractions over KRM for application developers, and build tools and UIs on top.
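To make this concrete, here is a rough sketch of a Cloud SQL instance declared as a Kubernetes resource. It assumes the Config Connector add-on is installed on the cluster, which the text above does not name explicitly. The instance name, region, database version, and machine tier are all placeholder values.

```yaml
# Assumes Config Connector is installed; it provides the SQLInstance kind.
apiVersion: sql.cnrm.cloud.google.com/v1beta1
kind: SQLInstance
metadata:
  name: helloworld-db           # placeholder instance name
spec:
  databaseVersion: POSTGRES_13  # placeholder database version
  region: us-central1           # placeholder region
  settings:
    tier: db-custom-1-3840      # placeholder machine tier
```

The resource lives in Google Cloud rather than in the cluster, but it is applied and reconciled the same way as a Deployment.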

Further, because KRM is typically expressed in a YAML file, users can store their KRM in Git and sync it down to multiple clusters at once, allowing for easy scaling, as well as repeatability, reliability, and increased control. With KRM tools, you can make sure that your security policies are always present on your clusters, even if they get manually deleted.
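One way to wire a cluster to a Git repository is a sync resource like the sketch below. It assumes Config Sync is installed on each cluster, which is one possible tool and not necessarily the only one this series has in mind. The repository URL, branch, and directory are hypothetical.

```yaml
# Assumes Config Sync is installed; apply the same resource to every cluster
# that should pull its configuration from the repository.
apiVersion: configsync.gke.io/v1beta1
kind: RootSync
metadata:
  name: root-sync
  namespace: config-management-system
spec:
  sourceFormat: unstructured
  git:
    repo: https://github.com/example-org/platform-config  # hypothetical repo
    branch: main                                          # hypothetical branch
    dir: clusters/prod                                    # hypothetical directory
    auth: none                                            # assumes a public repo
```

Because the sync runs continuously, a policy that someone deletes by hand is re-created from the copy in Git.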

In short, Kubernetes is not just the “compute” block in a platform diagram; it can also be the powerful declarative control plane that manages large swaths of your platform. Ultimately, KRM can get you several big steps closer to a developer platform that helps you deliver software quickly and securely.

The rest of this series will use concrete examples, with accompanying demos, to show you how to build a platform with the Kubernetes Resource Model. Head over to the GitHub repository to follow Part 1 - Setup, which will spin up a sample GKE environment in your Google Cloud project.

And stay tuned for Part 2, where we’ll dive into how the Kubernetes Resource Model works.