Auditing GKE Clusters across the entire organization

Mikołaj Stefaniak

Strategic Cloud Engineer, Google Cloud

Abdel Sghiouar

Senior Cloud Developer Advocate

Introduction

Companies moving to the cloud and running containers are often looking for elasticity. The ability to scale up or down as needed means paying only for the resources used. Automation allows engineers to focus on applications rather than on infrastructure. These are key features of cloud native, managed container orchestration platforms like Google Kubernetes Engine (GKE).

GKE clusters leverage Google Cloud to achieve best-in-class security and scalability. They come with two modes of operation and many advanced features. In Autopilot mode, clusters use more automation to reduce operational cost, at the price of fewer configuration options. For use cases where you need more flexibility, Standard mode offers greater control and more configuration options. Irrespective of the selected mode of operation, there are always recommended GKE-specific features and best practices to adopt. The official product documentation describes these best practices comprehensively.

But how do you ensure that your clusters follow them? Did you consider configuring the Google Groups for RBAC feature to make Kubernetes user management easier? Or did you remember to enable NodeLocal DNSCache on Standard GKE clusters to improve DNS lookup times?

Lapses in GKE cluster configuration may reduce scalability or security. Over time, this may erode the benefits of using the cloud and a managed Kubernetes platform. Keeping an eye on cluster configuration is therefore an important task. There are many solutions to enforce policies for resources inside a cluster, but only a few address the clusters themselves. Organizations that have adopted the infrastructure-as-code approach may apply controls there. Yet this requires change validation processes and code coverage of the entire infrastructure. Creating GKE-specific policies also takes time and product expertise. And even then, there is often a need to check the configuration of running clusters (e.g., for auditing purposes).

Automating cluster checks

GKE Policy Automation is a tool that checks all clusters in your Google Cloud organization. It comes with a comprehensive library of codified cluster configuration policies, which follow the best practices and recommendations of Google's product and Professional Services teams. Both the tool and the policy library are free and released as an open source project on GitHub. The solution does not need any modifications to the clusters to operate. It is simple and secure to use, as it relies on read-only access to cluster data via Google Cloud APIs.

You can use GKE Policy Automation to run a one-time manual check, or run it in an automated, serverless way for continuous verification. The second approach discovers your clusters and regularly checks whether they comply with the defined policies.

After identifying the clusters, the tool pulls their configuration using the Kubernetes Engine API. Future releases of the tool will support more data inputs to cover additional cluster validation use cases, such as checking scalability limits.

The GKE Policy Automation engine evaluates the gathered data against a set of codified policies. By default, the policies come from Google's GitHub repository, but users can specify their own repositories. This is useful for adding custom policies, or when access to public repositories is not allowed.
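The following Python sketch makes the idea of a codified policy more concrete by evaluating cluster data against checks. It is not the tool's implementation or its policy format. The two checks are hypothetical stand-ins for the NodeLocal DNSCache and Google Groups for RBAC practices mentioned earlier. They read the `addonsConfig.dnsCacheConfig.enabled` and `authenticatorGroupsConfig.enabled` fields of a cluster as returned by the Kubernetes Engine API.

```python
from typing import Any, Callable, Dict, List

# A cluster is a dict shaped like the Kubernetes Engine API Cluster resource;
# only the fields used by the checks below need to be present.
Cluster = Dict[str, Any]


def _get(data: Dict[str, Any], path: str, default: Any = None) -> Any:
    """Follow a dotted path such as 'addonsConfig.dnsCacheConfig.enabled'."""
    current: Any = data
    for key in path.split("."):
        if not isinstance(current, dict) or key not in current:
            return default
        current = current[key]
    return current


# HYPOTHETICAL Python stand-ins for two codified best-practice policies.
POLICIES: Dict[str, Callable[[Cluster], bool]] = {
    "nodelocal_dns_cache": lambda c: bool(_get(c, "addonsConfig.dnsCacheConfig.enabled", False)),
    "google_groups_for_rbac": lambda c: bool(_get(c, "authenticatorGroupsConfig.enabled", False)),
}


def evaluate(cluster: Cluster) -> List[str]:
    """Return the names of the policies the cluster violates, sorted for stable output."""
    return sorted(name for name, check in POLICIES.items() if not check(cluster))


if __name__ == "__main__":
    demo = {"name": "demo-cluster", "addonsConfig": {"dnsCacheConfig": {"enabled": True}}}
    print(evaluate(demo))  # ['google_groups_for_rbac']
```

Each check is a small predicate over API data, which keeps data gathering separate from policy evaluation. This separation is what allows policies to be kept in their own repository.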

The tool supports several ways of storing the policy check results. Besides console output, it can save the results in JSON format to Cloud Storage or publish them to Pub/Sub. Although these are good cloud integration patterns, they require further processing of the JSON data. We recommend using the GKE Policy Automation integration with Security Command Center (SCC) instead.
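If you do choose the JSON outputs, some post-processing is needed. The following minimal sketch counts failed checks per cluster. It assumes a hypothetical result shape: a JSON list of objects with `cluster`, `policy` and `valid` keys. Check the actual output format of your tool version before adapting it.

```python
import json
from collections import Counter
from typing import Dict


def violations_per_cluster(raw_json: str) -> Dict[str, int]:
    """Count failed policy checks per cluster.

    HYPOTHETICAL input shape: [{"cluster": str, "policy": str, "valid": bool}, ...]
    """
    records = json.loads(raw_json)
    return dict(Counter(r["cluster"] for r in records if not r["valid"]))


if __name__ == "__main__":
    sample = json.dumps([
        {"cluster": "prod-1", "policy": "nodelocal_dns_cache", "valid": False},
        {"cluster": "prod-1", "policy": "google_groups_for_rbac", "valid": True},
        {"cluster": "dev-1", "policy": "nodelocal_dns_cache", "valid": False},
    ])
    print(violations_per_cluster(sample))  # {'prod-1': 1, 'dev-1': 1}
```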

Security Command Center is Google Cloud's centralized vulnerability and threat reporting service. GKE Policy Automation registers itself there as an additional source of findings. Then, for each cluster evaluation, the tool creates new findings or updates existing ones. As a result, all SCC features, such as finding visualization and management, apply to the cluster check results. The cluster check findings are also subject to the configured SCC notifications.

In the next sections, we show how to run GKE Policy Automation in a serverless way, using its cluster discovery mechanism and Security Command Center integration.

Continuous cluster evaluation

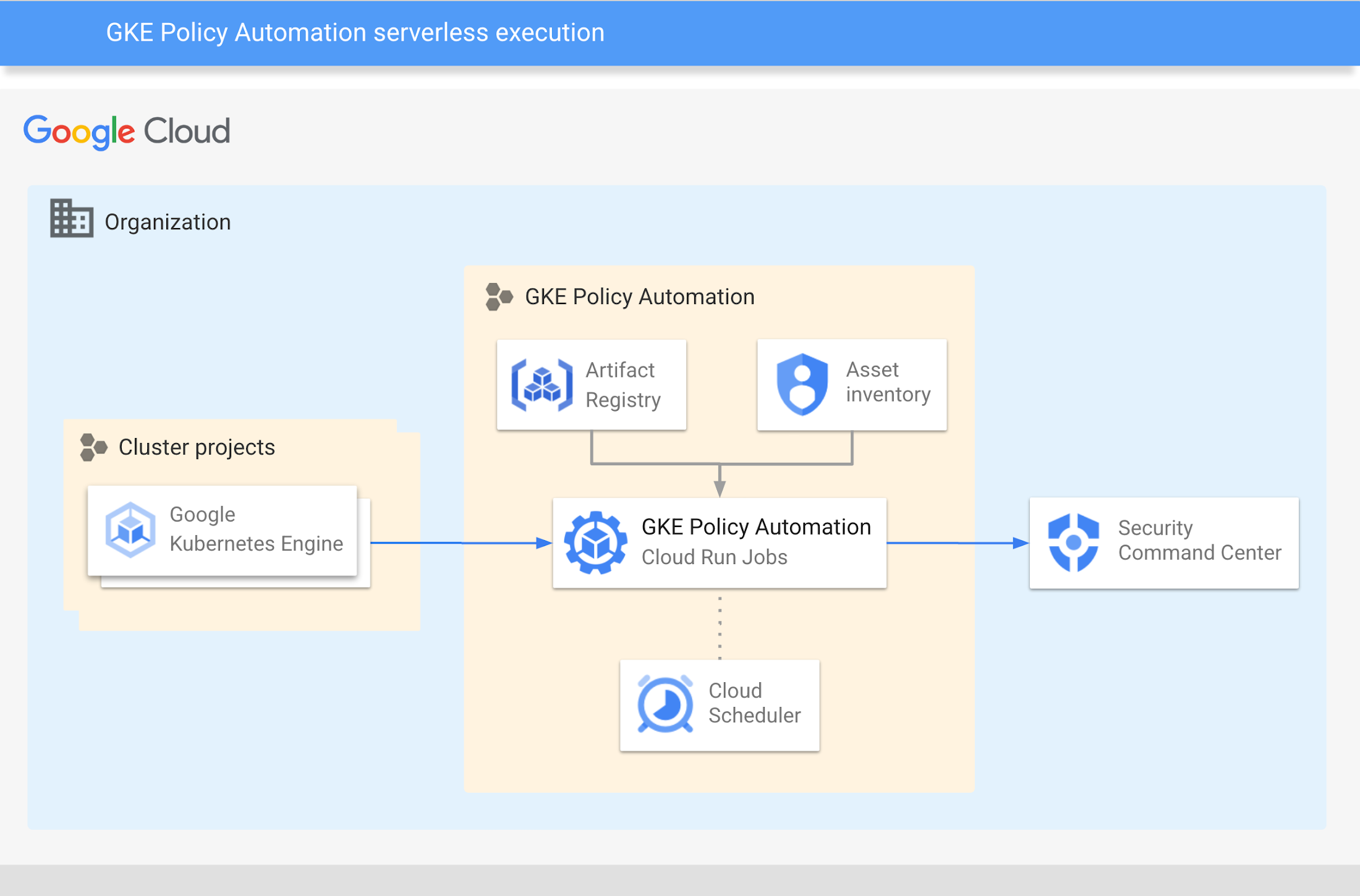

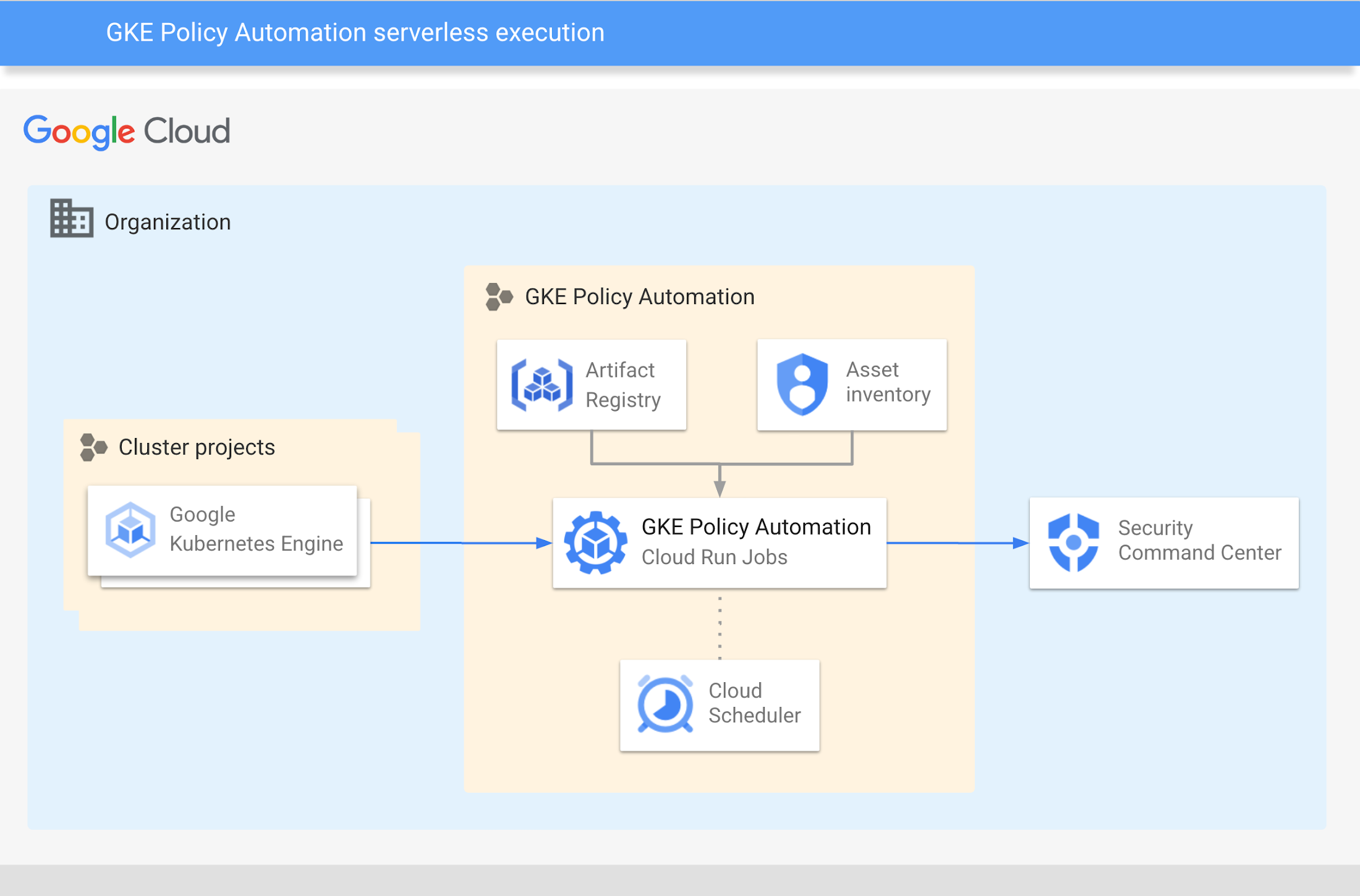

GKE Policy Automation comes with sample Terraform code that creates the infrastructure for serverless operation. The architecture diagram in this section shows the overall architecture of this solution.

The serverless GKE Policy Automation solution uses a containerized version of the tool.

The Cloud Run Jobs service executes the container as a job that performs the cluster checks. The job runs at configurable intervals, triggered by Cloud Scheduler.

The solution discovers GKE clusters running in your organization using Cloud Asset Inventory.
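Cloud Asset Inventory identifies a cluster by a full resource name. The Kubernetes Engine API addresses the same cluster as `projects/PROJECT/locations/LOCATION/clusters/NAME`. The following Python sketch illustrates one discovery step, converting the former into the latter. It is not the tool's own code. It assumes asset names of the form `//container.googleapis.com/projects/PROJECT/locations/LOCATION/clusters/NAME`, and as a precaution it also accepts `zones` in place of `locations`.

```python
import re
from typing import Optional

# Matches names such as
# //container.googleapis.com/projects/my-proj/locations/europe-west1/clusters/prod
# Accepting "zones" as well as "locations" is a defensive ASSUMPTION.
_CAI_CLUSTER = re.compile(
    r"^//container\.googleapis\.com/projects/(?P<project>[^/]+)"
    r"/(?:locations|zones)/(?P<location>[^/]+)/clusters/(?P<cluster>[^/]+)$"
)


def to_gke_api_name(asset_name: str) -> Optional[str]:
    """Convert a Cloud Asset Inventory cluster name to a Kubernetes Engine API v1 name."""
    match = _CAI_CLUSTER.match(asset_name)
    if match is None:
        return None  # not a GKE cluster asset (e.g. a node pool or another resource type)
    return "projects/{project}/locations/{location}/clusters/{cluster}".format(**match.groupdict())


if __name__ == "__main__":
    print(to_gke_api_name("//container.googleapis.com/projects/p1/locations/europe-west1/clusters/prod"))
```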

On each run, the solution gathers cluster data and evaluates the configuration policies against it. By default, the policies come from Google's GitHub repository, but user-specified repositories can be used instead.

Finally, the tool sends the evaluation results to Security Command Center as findings.

GKE Policy Automation container images are available in the GitHub Container Registry. To run containers with Cloud Run, the images have to be either built in Google Cloud or copied there. The provided Terraform code provisions an Artifact Registry repository for this purpose. The following sections of this post describe how to copy the GKE Policy Automation image to Google Cloud.

Prerequisites

An existing Google Cloud project for GKE Policy Automation resources

Terraform version 1.3 or later

The operator will also need sufficient IAM roles to create the necessary resources:

roles/editor role or equivalent on the GKE Policy Automation project

roles/iam.securityAdmin role or equivalent on the Google Cloud organization, to set IAM policies for Asset Inventory or Security Command Center

Adjusting variables

The Terraform code needs inputs to provision the desired infrastructure. In our scenario, GKE Policy Automation will check clusters in the entire organization. A dedicated IAM service account will be created to run the tool. The account will need the following IAM roles at the Google Cloud organization level:

roles/cloudasset.viewer to detect running GKE clusters

roles/container.clusterViewer to get the GKE cluster configuration

roles/securitycenter.sourcesAdmin to register the tool as an SCC source

roles/securitycenter.findingsEditor to create findings in SCC

The .tfvars file below provides all of the necessary inputs to create the above role bindings. Remember to adjust the project_id, region and organization values accordingly.

The tool can also be used to check clusters in a given folder or project only. This is useful when granting organization-wide permissions is not an option. In that case, the discovery parameters in the above example need to be adjusted. Please refer to the input variables README documentation for more details.
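Restricting discovery this way corresponds to the scope of a Cloud Asset Inventory search. That scope takes the form `organizations/NUMBER`, `folders/NUMBER` or `projects/ID_OR_NUMBER`. The helper below is a hypothetical sketch of building and validating such a scope string. The actual Terraform variable names are described in the README.

```python
VALID_SCOPES = ("organizations", "folders", "projects")


def asset_search_scope(kind: str, identifier: str) -> str:
    """Build a Cloud Asset Inventory search scope such as 'folders/123456'."""
    if kind not in VALID_SCOPES:
        raise ValueError(f"unsupported scope kind: {kind!r}")
    if not identifier or "/" in identifier:
        raise ValueError(f"invalid identifier: {identifier!r}")
    # Organizations and folders are addressed by number; projects by ID or number.
    if kind != "projects" and not identifier.isdigit():
        raise ValueError(f"{kind} must be addressed by a numeric ID")
    return f"{kind}/{identifier}"


if __name__ == "__main__":
    print(asset_search_scope("folders", "123456789"))
```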

Besides the infrastructure, we need to configure the tool itself. The repository provides an example config.yaml file, with the following content:

Terraform will populate the variable values in the config.yaml file above and store it in Secret Manager. The secret will then be mounted as a volume in the Cloud Run job container. Full documentation of the tool's configuration file is available in the GKE Policy Automation user guide.
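Terraform's `templatefile` function interpolates `${name}` placeholders. For simple cases, Python's `string.Template` uses the same placeholder syntax, so the substitution step can be sketched as follows. The two-line template is hypothetical and is not the tool's configuration schema. Unlike Terraform templates, `string.Template` supports no directives such as loops or conditionals.

```python
from string import Template

# HYPOTHETICAL template: placeholder keys only, not GKE Policy Automation's schema.
CONFIG_TEMPLATE = Template('organization: "${organization}"\nregion: "${region}"\n')


def render_config(**values: str) -> str:
    """Fill in every placeholder; substitute() raises KeyError if one is missing."""
    return CONFIG_TEMPLATE.substitute(**values)


if __name__ == "__main__":
    print(render_config(organization="123456789012", region="europe-west2"))
```

Using `substitute` rather than `safe_substitute` makes a missing value fail loudly. This is similar to the way Terraform rejects a template that references an undefined variable.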

Both configuration files should be saved in a terraform subdirectory in the GKE Policy Automation folder.

Running Terraform

Initialize Terraform by running:

terraform init

Create and inspect the plan by running:

terraform plan -out tfplan

Apply the plan by running:

terraform apply tfplan

Copying container image

The steps below describe how to copy the GKE Policy Automation container image from GitHub to Google Artifact Registry.

1. Set the environment variables. Remember to adjust the values accordingly.

2. Pull the latest image

3. Log in to Artifact Registry

4. Tag the container image

5. Push the container image
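As a hedged sketch of steps 2 to 5, the Python helper below assembles plausible commands as argument lists. You can print them or pass them to `subprocess.run`. The function parameters stand in for the environment variables of step 1. The sketch assumes the following:

- The source image is `ghcr.io/google/gke-policy-automation:latest`.
- The target image name is a placeholder.
- Docker authenticates to Artifact Registry via `gcloud auth configure-docker`.

Artifact Registry addresses Docker images as `REGION-docker.pkg.dev/PROJECT/REPOSITORY/IMAGE:TAG`.

```python
import shlex
from typing import List

# ASSUMPTION: source image location; confirm it in the GKE Policy Automation README.
SOURCE_IMAGE = "ghcr.io/google/gke-policy-automation:latest"


def copy_image_commands(region: str, project_id: str, repository: str,
                        image: str = "gke-policy-automation",
                        tag: str = "latest") -> List[List[str]]:
    """Return the pull, authenticate, tag and push commands as argument lists."""
    registry_host = f"{region}-docker.pkg.dev"
    target = f"{registry_host}/{project_id}/{repository}/{image}:{tag}"
    return [
        ["docker", "pull", SOURCE_IMAGE],                       # 2. pull the image
        ["gcloud", "auth", "configure-docker", registry_host],  # 3. authenticate Docker
        ["docker", "tag", SOURCE_IMAGE, target],                # 4. tag for Artifact Registry
        ["docker", "push", target],                             # 5. push the image
    ]


if __name__ == "__main__":
    # Placeholder values; use your own region, project and repository names.
    for command in copy_image_commands("europe-west2", "my-project", "gke-policy-automation"):
        print(shlex.join(command))
```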

Creating Cloud Run job

At the time of writing, Google's Terraform provider does not yet support Cloud Run Jobs.

Therefore, the Cloud Run job has to be created manually. The command below uses the gcloud command-line tool and the environment variables defined earlier.

Observing the results

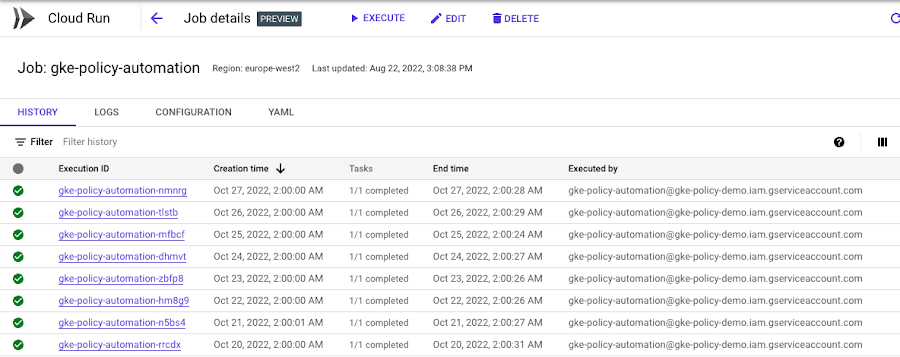

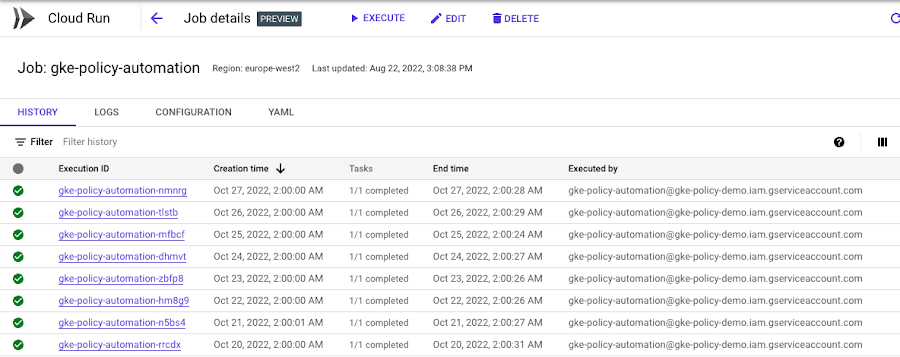

The configured Cloud Scheduler job runs GKE Policy Automation once per day. To observe results immediately, we advise running the job manually. Successful Cloud Run job executions can be viewed in the Cloud Console, as in the example screenshot in this section.

Security Command Center Integration

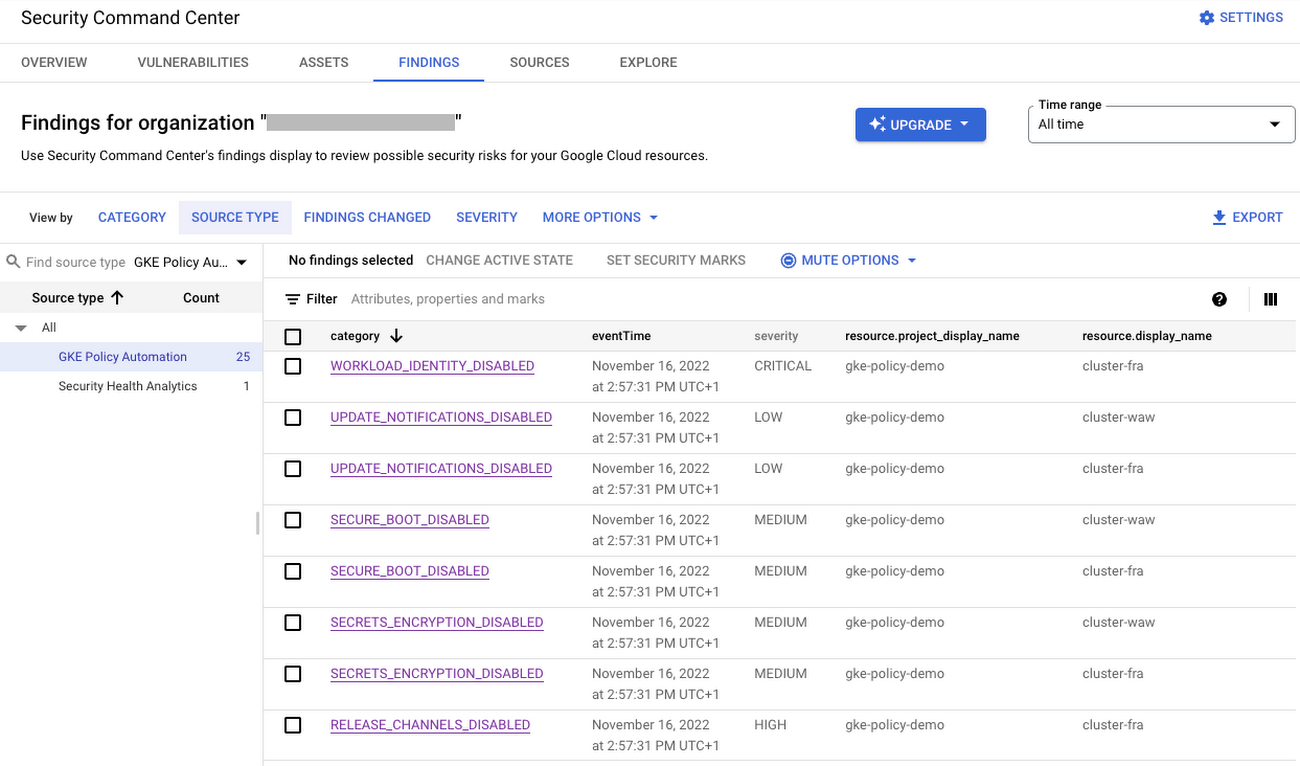

Once the GKE Policy Automation job runs successfully, it produces findings in Security Command Center for the discovered GKE clusters. You can view them in the SCC Findings view, as shown in the screenshot in this section. Findings are also available via the SCC API and are subject to the configured SCC Pub/Sub notifications. This makes it possible to leverage existing SCC integrations, e.g., with the systems used by your Security Operations Center teams.
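Security Command Center publishes notifications to Pub/Sub as JSON messages that contain the notification config name and the finding. The following minimal sketch shows how a downstream consumer might decode the base64 payload of such a message. It keeps only a few standard finding fields (`resourceName`, `category`, `state`). The category value in the demo is hypothetical.

```python
import base64
import json
from typing import Any, Dict


def summarize_scc_notification(b64_data: str) -> Dict[str, Any]:
    """Decode an SCC Pub/Sub notification payload and keep a few finding fields."""
    # Push subscriptions and the REST API deliver the message body base64-encoded.
    message = json.loads(base64.b64decode(b64_data))
    finding = message.get("finding", {})
    return {
        "resource": finding.get("resourceName"),
        "category": finding.get("category"),
        "state": finding.get("state"),
    }


if __name__ == "__main__":
    # Synthetic message for illustration; the category value is HYPOTHETICAL.
    body = {
        "notificationConfigName": "organizations/123/notificationConfigs/gke-findings",
        "finding": {
            "resourceName": "//container.googleapis.com/projects/p/locations/europe-west2/clusters/prod",
            "category": "EXAMPLE_CATEGORY",
            "state": "ACTIVE",
        },
    }
    encoded = base64.b64encode(json.dumps(body).encode("utf-8")).decode("ascii")
    print(summarize_scc_notification(encoded))
```

With the Python Pub/Sub client library, `message.data` already arrives as decoded bytes, so only the `json.loads` step is needed there.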

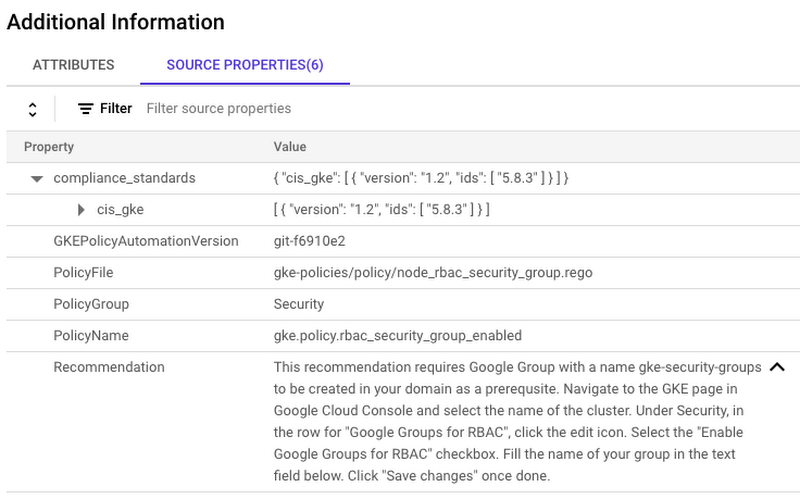

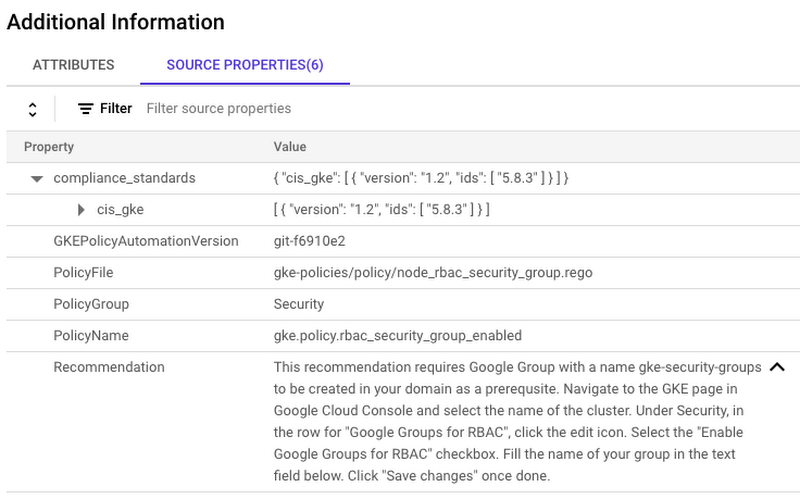

Selecting a specific finding opens the detailed finding view, which shows all finding attributes. The Source Properties tab additionally shows properties specific to GKE Policy Automation. These include:

Evaluated policy file name

Recommended actions and external documentation reference

Mapping to the Center for Internet Security (CIS) Benchmark for GKE, for security-related findings

The example screenshot in this section shows the source properties of a GKE Policy Automation finding in SCC.

GKE Policy Automation updates existing SCC findings during each run. A finding is set to inactive when the cluster starts complying with the corresponding policy. Likewise, an existing inactive finding is set back to active when the cluster violates the policy again.
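The state handling described above can be sketched as a pure function over (cluster, policy) pairs. This is a simplified model, not the tool's code. It assumes that a finding is only created once a violation is observed, and it uses SCC's ACTIVE and INACTIVE finding states.

```python
from typing import Dict, Tuple

Key = Tuple[str, str]  # (cluster, policy)


def reconcile(findings: Dict[Key, str], results: Dict[Key, bool]) -> Dict[Key, str]:
    """Apply one evaluation run to existing finding states.

    results:  (cluster, policy) -> True if the cluster complies with the policy.
    findings: (cluster, policy) -> "ACTIVE" or "INACTIVE".
    """
    updated = dict(findings)
    for key, compliant in results.items():
        if not compliant:
            updated[key] = "ACTIVE"    # new violation, or an inactive finding re-activated
        elif key in updated:
            updated[key] = "INACTIVE"  # policy now valid: deactivate rather than delete
    return updated


if __name__ == "__main__":
    before = {("prod", "dns_cache"): "ACTIVE"}
    print(reconcile(before, {("prod", "dns_cache"): True, ("dev", "dns_cache"): False}))
```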

When some policies are not relevant for certain clusters, we recommend using the Security Command Center mute feature. For example, if the Binary Authorization policy is not relevant for development clusters, you can create a mute rule that matches the development project identifier.
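Mute rules select findings with an SCC filter expression. The sketch below builds a filter that matches a single finding category in a set of development projects. The category value and project names are hypothetical. Check the attribute names `category` and `resource.project_display_name` against the SCC filtering documentation before use.

```python
from typing import Iterable


def _quote(value: str) -> str:
    """Quote a value as a filter string literal, escaping backslashes and quotes."""
    return '"' + value.replace("\\", "\\\\").replace('"', '\\"') + '"'


def mute_filter(category: str, dev_projects: Iterable[str]) -> str:
    """Build a filter matching one finding category in the given projects."""
    projects = list(dev_projects)
    if not projects:
        raise ValueError("at least one project is required")
    project_clause = " OR ".join(
        f"resource.project_display_name={_quote(p)}" for p in projects
    )
    return f"category={_quote(category)} AND ({project_clause})"


if __name__ == "__main__":
    # HYPOTHETICAL category and project names.
    print(mute_filter("EXAMPLE_BINARY_AUTHORIZATION_CATEGORY", ["dev-project-1", "dev-project-2"]))
```

You can supply the resulting string when creating a mute rule, either in the Console or with the `gcloud scc muteconfigs create` command.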

Summary

In this article, we have shown how to establish GKE cluster governance for a Google Cloud organization using GKE Policy Automation, an open source tool created by the Google Professional Services team. Combined with Google Cloud serverless services such as Cloud Run Jobs, the tool lets you build a fully automated solution. Its Security Command Center integration lets you process GKE policy evaluation results in a unified, cloud native way.