Cloud Run for Anthos brings eventing to your Kubernetes microservices

Mete Atamel

Cloud Developer Advocate

Bryan Zimmerman

Product Manager

Building microservices on Google Kubernetes Engine (GKE) provides you with maximum flexibility to build your applications, while still benefiting from the scale and toolset that Google Cloud has to offer. But with great flexibility comes great responsibility. Orchestrating microservices can be difficult, requiring non-trivial implementation, customization, and maintenance of messaging systems.

Cloud Run for Anthos now includes an events feature that allows you to easily build event-driven systems on Google Cloud. Now in beta, the events feature takes on the implementation and management of eventing infrastructure, so you don’t have to.

With events in Cloud Run for Anthos, you get:

The ability to trigger a service on your GKE cluster without exposing a public HTTP endpoint

Support for Google Cloud Storage, Cloud Scheduler, Pub/Sub, and 60+ Google services through Cloud Audit Logs

Custom events generated by your code to signal between services through a standardized eventing infrastructure

A consistent developer experience, as all events, regardless of the source, follow the CloudEvents standard

You can use events for Cloud Run for Anthos for a number of exciting use cases, including:

Use a Cloud Storage event to trigger a data processing pipeline, creating a loosely coupled system with minimal effort.

Use a BigQuery audit log event to initiate a process each time a data load completes, loosely coupling services through the data they write.

Use a Cloud Scheduler event to trigger a batch job. This allows you to focus on what the job does rather than on how it is scheduled.

Use custom events to signal directly between microservices, leveraging the same standardized infrastructure for any asynchronous coordination of services.

How it works

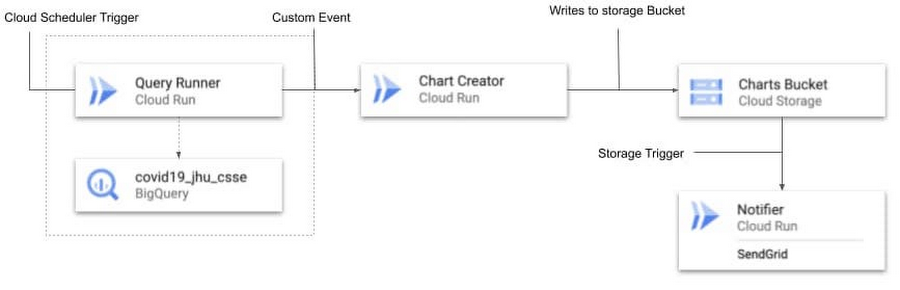

Cloud Run for Anthos lets you run serverless workloads on Kubernetes, leveraging the power of GKE. This new events feature is no different, offering standardized infrastructure to manage the flow of events, letting you focus on what you do best: building great applications. The solution is based on open-source primitives (Knative), avoiding vendor lock-in while still providing the convenience of a Google-managed solution.

Let’s see events in action. This demo app builds a BigQuery processing pipeline to query a dataset on a schedule, create charts out of the data, and then notify users about the new charts via SendGrid. You can find the demo on GitHub.

Notice that the services in this example do not communicate directly with each other. Instead, we use events on Cloud Run for Anthos to ‘wire up’ the coordination between them.

Let’s break this demo down further.

Step 1 - Create the trigger for Query Runner: First, create a trigger that targets the Query Runner service and fires on a Cloud Scheduler job.

Step 2 - Handle the event in your code: In our example we need details provided in the trigger. These are delivered via the HTTP headers and body of the request and can easily be unmarshalled using the CloudEvents SDK and libraries. In this example, we use C#:

Read the event using the CloudEvents SDK:
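As a language-neutral illustration of what the SDK does at this step, here is a minimal sketch in Python rather than C#, using only the standard library. It assumes the event arrives in the binary content mode of the CloudEvents HTTP binding, where each context attribute travels as a `ce-` prefixed header and the payload is the request body. The function name `read_cloud_event`, the sample source, and the sample event type are assumptions made for illustration, not values taken from the demo.

```python
import json

# Context attributes that every CloudEvents 1.0 event must carry.
REQUIRED_ATTRIBUTES = ("id", "source", "specversion", "type")


def read_cloud_event(headers, body):
    """Build an event dict from a binary-mode CloudEvents HTTP request.

    `headers` maps HTTP header names to values; `body` is the raw
    request body as bytes.
    """
    # HTTP header names are case-insensitive, so normalize before matching.
    lowered = {name.lower(): value for name, value in headers.items()}

    # Each "ce-<name>" header maps to the context attribute "<name>".
    attributes = {
        name[len("ce-"):]: value
        for name, value in lowered.items()
        if name.startswith("ce-")
    }

    missing = [a for a in REQUIRED_ATTRIBUTES if a not in attributes]
    if missing:
        raise ValueError(f"missing CloudEvents attributes: {missing}")

    # The body is the event data; decode it as JSON when declared as such.
    content_type = lowered.get("content-type", "")
    if body and content_type.startswith("application/json"):
        data = json.loads(body)
    else:
        data = body

    return {"attributes": attributes, "data": data}


# Hypothetical request, shaped like a Cloud Scheduler event delivery.
sample_headers = {
    "Ce-Id": "1234",
    "Ce-Source": "//cloudscheduler.googleapis.com/example",
    "Ce-Specversion": "1.0",
    "Ce-Type": "google.cloud.scheduler.job.v1.executed",  # assumed type name
    "Content-Type": "application/json",
}
event = read_cloud_event(sample_headers, b'{"customData": "VUs="}')
print(event["attributes"]["type"])
```

In a real service, an SDK would usually handle this parsing along with the structured content mode, which this sketch leaves out.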

Parse the CloudEvent data using the Google Events library for C#:
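The parsing step can be sketched in the same spirit. The Python below is a rough stand-in for the typed C# library, and it assumes that a Cloud Scheduler event body is JSON with the job's payload stored as a base64 string under a `customData` field. That field name, the helper `parse_scheduler_data`, and the sample value are assumptions to check against the event schema you actually receive.

```python
import base64
import json


def parse_scheduler_data(body):
    """Return the decoded custom payload of a scheduler event body."""
    payload = json.loads(body)
    # Assumption: the job's payload is a base64 string under "customData".
    encoded = payload.get("customData", "")
    return base64.b64decode(encoded).decode("utf-8")


# Hypothetical body: "VUs=" is the base64 encoding of "UK".
country = parse_scheduler_data(b'{"customData": "VUs="}')
print(country)  # prints "UK"
```

A typed library gives you the same value through a generated class instead of dictionary lookups, which catches schema mistakes earlier.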

Step 3 - Signal the Chart Creator with a custom event: Using custom events, we can easily signal a downstream service without having to maintain a backend. In this example we raise an event of type dev.knative.samples.querycompleted.
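Raising a custom event amounts to an HTTP POST that carries the CloudEvents attributes. The Python sketch below, again standard library only, builds such a request in binary content mode. Only the event type comes from the example above. The broker URL parameter, the source value, the payload fields, and both function names are hypothetical.

```python
import json
import urllib.request
import uuid

EVENT_TYPE = "dev.knative.samples.querycompleted"


def build_custom_event(source, data):
    """Return (headers, body) for a binary-mode CloudEvent."""
    headers = {
        "ce-specversion": "1.0",
        "ce-id": str(uuid.uuid4()),  # must be unique per event and source
        "ce-source": source,
        "ce-type": EVENT_TYPE,
        "content-type": "application/json",
    }
    body = json.dumps(data).encode("utf-8")
    return headers, body


def send_custom_event(broker_url, source, data):
    """POST the event to an event broker; `broker_url` is hypothetical."""
    headers, body = build_custom_event(source, data)
    request = urllib.request.Request(
        broker_url, data=body, headers=headers, method="POST"
    )
    with urllib.request.urlopen(request) as response:
        return response.status


# Build (but do not send) an event with a hypothetical source and payload.
headers, body = build_custom_event(
    "urn:example:query-runner",
    {"datasetId": "example_dataset"},
)
print(headers["ce-type"])
```

Keeping the build step separate from the send step makes the event easy to inspect or test without a running broker.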

Then we create a trigger for the Chart Creator service that fires when that custom event occurs. In this example we use the following gcloud command to create the trigger:
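Whatever the exact command, the trigger's job is to match incoming events by their attributes and forward the matches to the target service. The Python sketch below shows that matching in simplified form, assuming exact-match filtering on CloudEvent attributes in the style of Knative triggers. The function `trigger_matches` and the second sample event type are hypothetical.

```python
def trigger_matches(filter_attributes, event_attributes):
    """True when every filter attribute equals the event's attribute."""
    return all(
        event_attributes.get(name) == expected
        for name, expected in filter_attributes.items()
    )


# Filter for the Chart Creator trigger: match on the custom event type.
chart_creator_filter = {"type": "dev.knative.samples.querycompleted"}

query_completed = {
    "type": "dev.knative.samples.querycompleted",
    "source": "urn:example:query-runner",  # hypothetical source
}
unrelated = {
    "type": "com.example.other",  # hypothetical event type
    "source": "urn:example:other",
}

print(trigger_matches(chart_creator_filter, query_completed))  # prints True
print(trigger_matches(chart_creator_filter, unrelated))        # prints False
```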

Step 4 - Signal the notifier service based on a Cloud Storage event: We can trigger the notifier service once the charts have been written to Cloud Storage by simply creating a Cloud Storage trigger.
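On the receiving side, the notifier only needs to know which object was written. A minimal Python sketch, assuming the event body is JSON that describes the Cloud Storage object with `bucket` and `name` fields. The helper `describe_new_chart` and the sample bucket and object names are hypothetical.

```python
import json


def describe_new_chart(body):
    """Turn a storage event body into a short notification message."""
    payload = json.loads(body)
    bucket = payload["bucket"]  # bucket that received the object
    name = payload["name"]      # object path within the bucket
    return f"New chart available: gs://{bucket}/{name}"


# Hypothetical event body for a chart written by the Chart Creator.
sample_body = b'{"bucket": "example-charts", "name": "chart-uk.png"}'
print(describe_new_chart(sample_body))
# prints "New chart available: gs://example-charts/chart-uk.png"
```

The resulting message could then be handed to whatever email client the notifier uses, such as the SendGrid integration mentioned earlier.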

And there you have it! From this example you can see how events for Cloud Run for Anthos make it easy to build a standardized event-based architecture without having to manage the underlying infrastructure. To learn more and get started, you can:

Get started with Events for Cloud Run for Anthos

Follow along with our demo in our Qwiklab

View our recorded talk at Next 2020