Best practices for SAP app server autoscaling on Google Cloud

Riley Harrington

Solution Manager, SAP Strategy & Architecture, Google Cloud

Mike Altarace

Strategic Cloud Engineer

In most large SAP environments, there is a predictable and well-known daily variation in app server workloads. The timing and rate of workload changes are generally consistent and rarely change, making these workloads great candidates to benefit from the elastic nature of cloud infrastructure. Adding and removing VMs to match the workload cycle can speed up task processing during busy times, while saving cost when resources are not needed.

In this article, we will explore two options for autoscaling SAP app servers, discuss the pros and cons of each, and walk through a sample deployment.

The two common approaches for scaling an SAP app server on Google Cloud Platform (GCP) are:

- Utilization-based autoscaling: Generic VMs are added to the SAP environment as usage increases (e.g. by measuring CPU utilization).

- Schedule-based scaling: Previously configured VMs are started and stopped in tandem with workload cycles.

Utilization-based autoscaling

GCP offers a robust VM autoscaling platform that scales the VM landscape up and down based on CPU or load balancer usage, Stackdriver metrics, or a combination of these. The core GCP elements needed to establish autoscaling are:

- Instance template: An SAP app server baseline VM definition that gets stamped into running VMs on a scale-up event.

- Managed Instance Group (MIG): A collection of definitions on how and when to scale the VMs defined by the instance template. It includes the VM shape, the zones to launch in, autoscaling rules, minimum and maximum instance counts, and more.

In utilization-based autoscaling, each SAP app server function (for example, Dialog, Batch) has its own separate instance template and instance group so it can scale up and down independently. How SAP systems integrate newly created VMs—by performing logon group assignments and monitoring, for example—differs based on how the system is configured, so we won’t discuss it in this article.

Here are some of the benefits and challenges of utilization-based autoscaling.

Pros

- When done right, this approach makes the most efficient use of resources. Scale-up takes place only when new resources are needed, and scale-down occurs when they are not.

- Each SAP component scales up or down independently. For example, batch workers are scaled at a different rate and size than dialog workers.

- Since there is only a single instance template per component, upgrades and patches are easier to execute.

Cons

- Instances are not automatically added to the non-default SAP logon group.

- Instances are not automatically monitored by SAP Solution Manager.

Implementing utilization-based autoscaling

To implement utilization-based autoscaling, first we need a baseline image of each SAP component.

Starting with a valid app server dialog VM, remove all hostname references from config/profile files and replace them with a templated variable, like $HOSTNAME. A startup script will need to replace this variable with the actual hostname when each new VM boots. Next, take a snapshot of all disks.
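As a rough illustration of the hostname substitution step, here is a minimal Python sketch of what the startup script might do. The profile lines and the `render_profile` helper are hypothetical and only stand in for whatever config/profile files your system actually uses; the article does not prescribe a specific script.

```python
import socket

# The templated variable named above; any unique token would work.
PLACEHOLDER = "$HOSTNAME"


def render_profile(template_text: str, hostname: str) -> str:
    """Return the profile text with every placeholder replaced by the hostname."""
    return template_text.replace(PLACEHOLDER, hostname)


# Hypothetical profile fragment, as it would look inside the baseline image.
sample_profile = (
    "SAPLOCALHOST = $HOSTNAME\n"
    "icm/host_name_full = $HOSTNAME.example.internal\n"
)

# Rendering with a fixed name so the result is easy to inspect.
rendered = render_profile(sample_profile, "dialog-vm-1")
print(rendered)

if __name__ == "__main__":
    # On a freshly created VM, the startup script would use the real hostname.
    print(render_profile(sample_profile, socket.gethostname()))
```

A plain string replacement is used here rather than a template engine so that the placeholder is also replaced when it is embedded in a longer token.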

In this example, we assume there are three disks: boot, pdssd (which holds the /usr/sap folder), and swap.

Once they’re ready, we create an image out of each snapshot.

Once we have an image of each VM, we can create the instance template.

Now, we can create the MIG that contains a health check and an autoscaling policy.

Once completed, the MIG runs the first dialog instance and begins measuring the CPU utilization. As you can see from the “target-cpu-utilization” flag on the bottom line, in this example the MIG adds dialog instances when average CPU usage rises above 60% and removes them when it falls below 60%.
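To build intuition for how a utilization target translates into instance counts, the following Python sketch models target-based scaling in its simplest form: pick the smallest group size that would bring average utilization back to the target, within the configured minimum and maximum. This is a simplified model under stated assumptions, not the actual algorithm of the GCP autoscaler, which also applies smoothing and cool-down behavior that the sketch ignores. The function name and parameters are illustrative.

```python
import math


def recommended_size(current_size: int, avg_utilization: float,
                     target_utilization: float,
                     min_size: int, max_size: int) -> int:
    """Estimate the group size that brings average utilization to the target."""
    # Total load expressed in "fully busy instance" units, divided by the
    # share of each instance we are willing to use.
    needed = math.ceil(current_size * avg_utilization / target_utilization)
    # Never go outside the configured bounds of the instance group.
    return max(min_size, min(max_size, needed))


# Three dialog instances running at 90% CPU with a 60% target.
print(recommended_size(3, 0.90, 0.60, min_size=1, max_size=10))
# Four instances idling at 20% CPU with the same target.
print(recommended_size(4, 0.20, 0.60, min_size=1, max_size=10))
```

In this model, a lower target leaves more headroom per instance at the price of running more VMs for the same load.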

Memory-based scaling

SAP app server load can also scale very well based on memory usage. Thanks to the flexibility of GCP autoscaling, we can easily modify our example to use memory usage as the scale trigger. (Note: Memory usage in a VM is not exposed to the hypervisor, so we will need to install the Stackdriver agent before we create our boot disk snapshot.)

In this case, we’ll set the scale trigger to 50% by executing the following gcloud command, which uses the Stackdriver memory usage metric “agent.googleapis.com/memory/percent_used”.

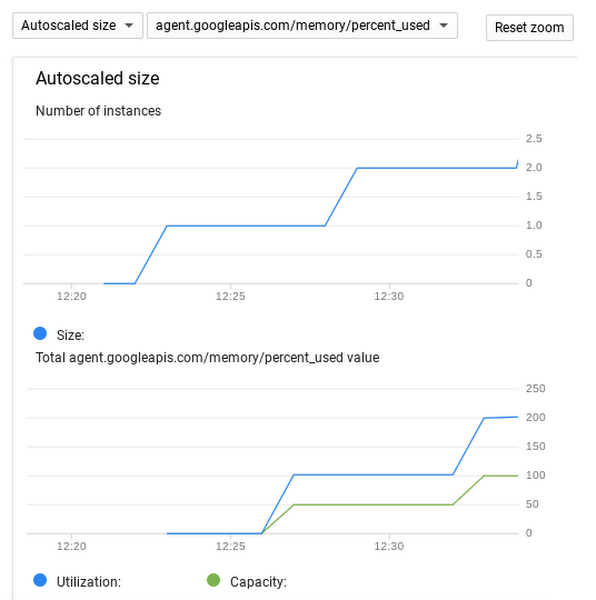

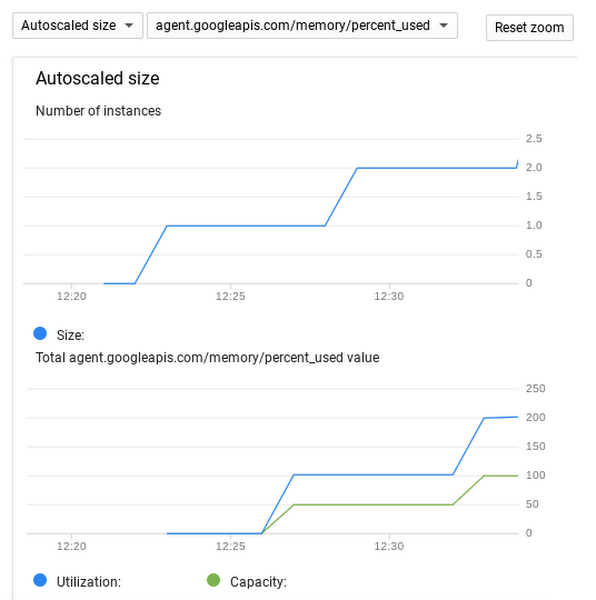

To see the progression of your scale events, simply go to the “Monitoring” tab of your instance group in the GCP Console.

Next-level scaling

You can further optimize scaling by using Stackdriver custom metrics to base it on the actual SAP job load rather than CPU load. Using the SAP workload as the indicator for autoscaling gives you a more graceful VM shutdown, and won’t interrupt jobs that might have low CPU usage.
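The idea of scaling on SAP job load can be sketched in a few lines of Python. Everything here is hypothetical: the `AppServer` record, the `active_jobs` count, and the jobs-per-instance capacity are assumptions made for illustration, and how you would collect these values from SAP and publish them as a custom metric is outside the scope of this sketch.

```python
import math
from dataclasses import dataclass


@dataclass
class AppServer:
    name: str
    active_jobs: int  # hypothetical: SAP jobs currently running on this VM


def instances_needed(queued_jobs: int, jobs_per_instance: int,
                     min_size: int, max_size: int) -> int:
    """Size the group from job load instead of CPU load."""
    needed = math.ceil(queued_jobs / jobs_per_instance)
    return max(min_size, min(max_size, needed))


def safe_to_remove(servers: list[AppServer]) -> list[str]:
    """Only instances with no running jobs are candidates for shutdown."""
    return [s.name for s in servers if s.active_jobs == 0]


fleet = [AppServer("batch-1", 4), AppServer("batch-2", 0), AppServer("batch-3", 1)]
print(instances_needed(queued_jobs=25, jobs_per_instance=10, min_size=1, max_size=6))
print(safe_to_remove(fleet))
```

Note that an instance running a single long job may show low CPU usage, yet it never appears in the removal list, which is the graceful-shutdown property described above.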

Schedule-based autoscaling

Schedule-based autoscaling works best when your SAP app server workloads follow a known and recurring pattern.

In this example, we create and configure a fully functioning app server cluster, sized to service peak workload, with all VMs up and registered with the correct SAP logon groups. The VMs are then stopped, but not terminated, until the next work schedule. Right before the known work is scheduled to start, Cloud Scheduler revives the VMs, bringing the cluster to full capacity. At a set time when work is expected to complete, Cloud Scheduler stops the VMs again.

Here are some of the benefits and challenges of schedule-based autoscaling.

Pros

- It is a simple environment to configure and maintain.

- It delivers predictable usage and cost.

- Desired SAP logon groups are preconfigured in cluster VMs.

Cons

- Scale events are fixed across the cluster, which creates a rigid scale up/down cycle.

- Any change in workload start or end time requires schedule modifications.

- All VMs come up and turn down at the same time regardless of usage, which can lead to suboptimal resource usage.

- Stopped or suspended VMs still incur storage cost.

- Maintenance and upgrades are required for each VM.

Implementing schedule-based autoscaling

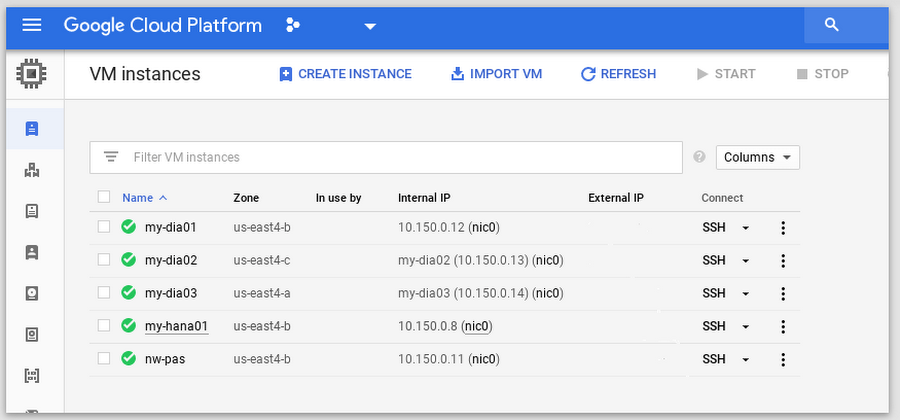

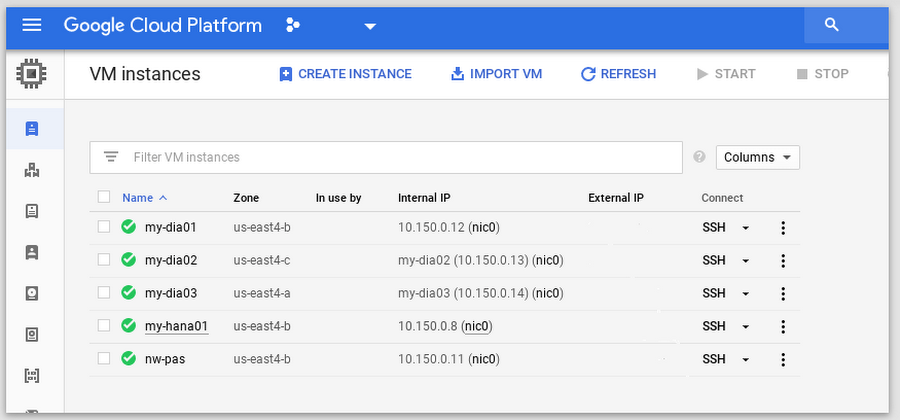

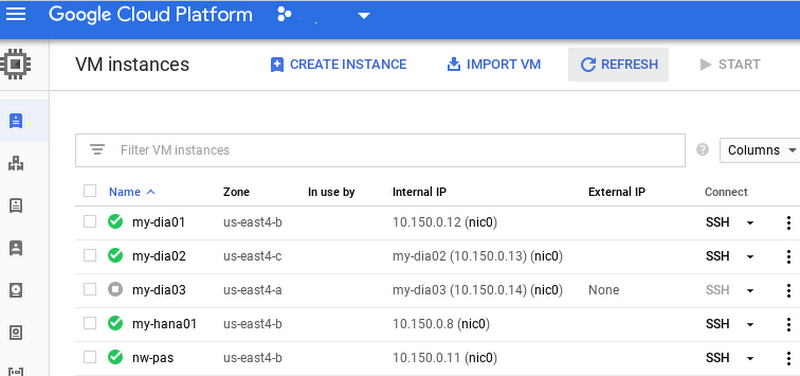

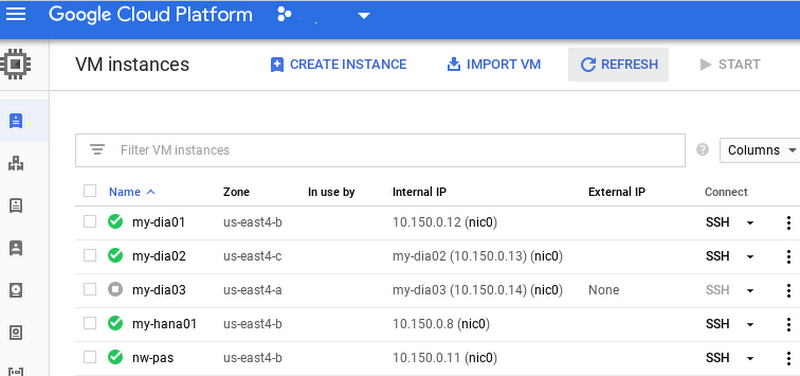

The first step in our schedule-based autoscaling example is to build and configure the app server cluster using the GCP SAP NetWeaver deployment guides. The resulting environment contains a HANA instance, a primary application server instance, and three dialog instances.

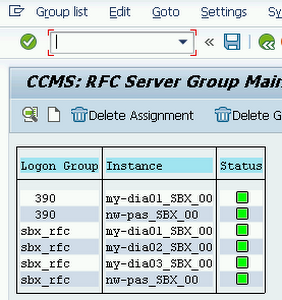

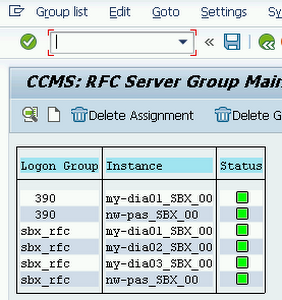

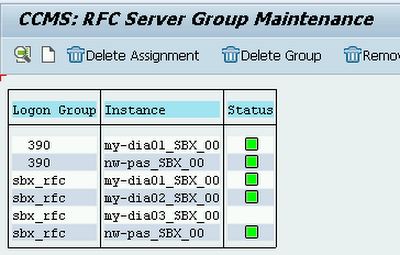

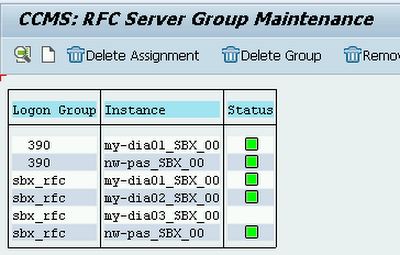

If we issue the RZ12 transaction code in the SAP UI, we can observe the VMs joining the cluster.

The next step is to label the dialog VMs to include them in the scaling events. In our example, we add the label “nwscale” to all of the instances that will be scheduled to scale up and down.

Following along with the Cloud Scheduler for VM walkthrough, we clone the Git repo, deploy the Cloud Functions that start and stop VMs, and create a Pub/Sub topic for scale-up and scale-down events.

Now we can test to see if our function can stop one of our dialog instances.

Based on the label we created earlier, we base64-encode the message that contains the zone and resource we are operating on.
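The encoding step can be reproduced with the Python standard library. This sketch assumes the message is a small JSON object with `zone` and `label` keys, which is an assumption about the payload format expected by the start/stop functions; check the walkthrough you are following for the exact field names.

```python
import base64
import json


def encode_payload(zone: str, label: str) -> str:
    """Serialize the scale message to JSON and base64-encode it."""
    # Assumed message shape: {"zone": ..., "label": ...}
    message = json.dumps({"zone": zone, "label": label})
    return base64.b64encode(message.encode("utf-8")).decode("ascii")


payload = encode_payload("us-east4-a", "nwscale=true")
print(payload)

# Decoding the payload recovers the original message, which is a quick
# way to double-check what will be sent to the function.
print(json.loads(base64.b64decode(payload)))
```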

Then we use the payload to call the Cloud Function and stop the dialog VMs in the us-east4-a zone that are labeled with “nwscale=true”.

As we can see, the labeled dialog instance in us-east4-a stops.

The results of the SAP RZ12 transaction code also show us that the instance is marked in the SAP UI as unavailable, but is still part of the SAP logon group, ready for when it starts up again later.

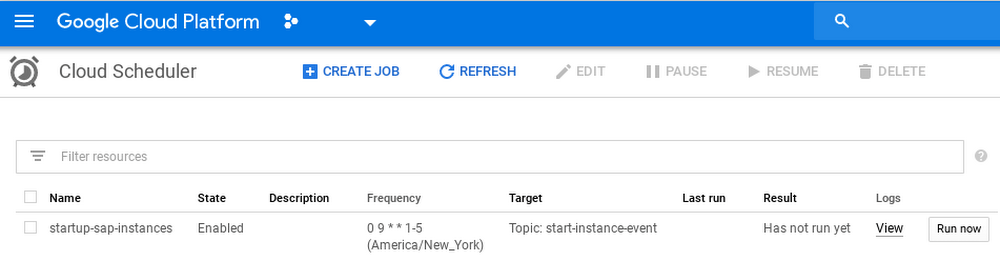

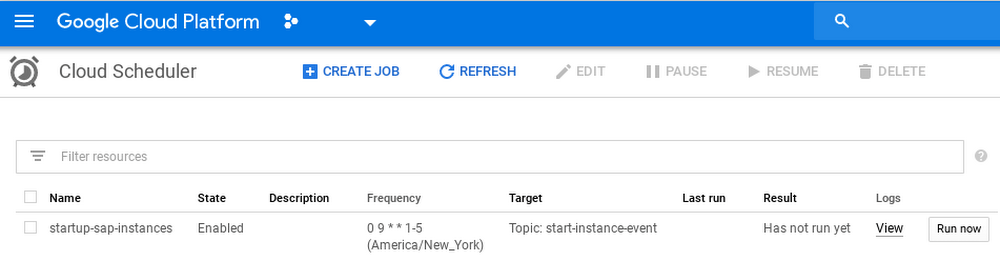

Now that the initial setup is complete, we can create a Cloud Scheduler cron job to start and stop the instances. For our example, we’ll scale up all labeled instances every weekday at 9 AM ET.
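A weekday 9 AM schedule is commonly written in cron syntax as `0 9 * * 1-5`, with the time zone set on the job itself. The Python sketch below is a small checker for confirming that an expression fires when you expect it to. It is an illustrative helper, not part of Cloud Scheduler, and it only handles `*`, comma lists, and ranges; step values and cron's special rule for combining day-of-month with day-of-week are not implemented.

```python
from datetime import datetime

# Minute 0, hour 9, any day of month, any month, Monday through Friday.
SCALE_UP_CRON = "0 9 * * 1-5"


def _field_matches(field: str, value: int) -> bool:
    """Match one cron field made of '*', single values, lists, or ranges."""
    if field == "*":
        return True
    for part in field.split(","):
        if "-" in part:
            low, high = (int(x) for x in part.split("-"))
            if low <= value <= high:
                return True
        elif int(part) == value:
            return True
    return False


def cron_matches(expr: str, when: datetime) -> bool:
    """Return True if the five-field cron expression fires at this minute."""
    minute, hour, day, month, weekday = expr.split()
    cron_weekday = when.isoweekday() % 7  # cron counts 0 = Sunday ... 6 = Saturday
    return (_field_matches(minute, when.minute)
            and _field_matches(hour, when.hour)
            and _field_matches(day, when.day)
            and _field_matches(month, when.month)
            and _field_matches(weekday, cron_weekday))


# Times are interpreted in the job's configured time zone.
print(cron_matches(SCALE_UP_CRON, datetime(2024, 1, 1, 9, 0)))  # a Monday
print(cron_matches(SCALE_UP_CRON, datetime(2024, 1, 6, 9, 0)))  # a Saturday
```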

We can confirm the schedule has been created through the Cloud Scheduler console.

To complete the system, just use the same process to create start/stop schedules for all remaining zones.

The next level: SAP event-based scaling

Since the SAP platform is capable of directly managing infrastructure, we can further improve our schedule-based autoscaling implementation by using SAP event-based scaling and allowing the SAP admin to define and control the VM landscape. An SAP External Command (SM69) executes a gcloud command that publishes scale messages to Pub/Sub. The external command can then be invoked either from a custom ABAP program or by calling a function module like SXPG_COMMAND_EXECUTE.

Other considerations

When implementing autoscaling in your environment, there are a couple of other things to keep in mind.

Remove application instances gracefully

Scale down does not necessarily drain app server instances before shutting them down, so using the SAP web-based UI instead of rich clients (SAPGUI/NWBC) can limit user disruption.

Monitoring autoscaled instances

SAP Solution Manager requires instances to be added in advance for monitoring purposes. Schedule-based instances can be added as part of their initial configuration, and make debugging easier since they persist after work is done.

Conclusion

There are many benefits of autoscaling in an SAP app server environment. Depending on the particulars of your environment, utilization-based or schedule-based autoscaling can expand your VMs when you need them, and contract them when you don’t, providing cost and resource savings along the way. In this article, we looked at some of the pros and cons of each approach and walked through the deployment steps for each method. We look forward to hearing how it works for you.

To learn more about SAP solutions on Google Cloud, visit our website.