Supercharge your Cloud NAT: Introducing new Cloud NAT features

Tracy Jiang

Product Manager

Ghaleb Al-Habian

Customer Engineer, Networking Specialist

Introduction

Cloud NAT is our fully managed, proxy-less Network Address Translation offering. It is implemented entirely at the Andromeda SDN layer, offering superior performance and effortless scale. With it, Compute Engine and Google Kubernetes Engine (GKE) workloads can access internet resources in a scalable and secure manner, without external IPs that would expose those workloads to outside access.

Cloud NAT essentially works by “stretching” external IP addresses across many instances. It does so by dividing the available source ports per external IP equally across all in-scope instances. Through the use of IP auto-allocation we automate the process of expanding external IP addresses allocated to your NAT gateway.

Although this approach is adequate for the majority of customer workloads, monitoring best practices recommend confirming that every instance has enough external ports to communicate out to the internet.

We are thrilled to announce new Cloud NAT features that improve its scalability, flexibility and performance!

Cloud NAT Dynamic Port Allocation

Cloud NAT Customized TCP TIME_WAIT

Cloud NAT Rules

More ports when you need them: Introducing Dynamic Port Allocation

One of the main best practices of monitoring Cloud NAT is keeping an eye on the availability of ports for your workloads. This is acutely important when your workloads open many parallel connections to the same destination on the internet.

Before Dynamic Port Allocation (DPA), all instances had to get equal amounts of port allocations behind the same NAT gateway.

As you can probably imagine, this works well when connections to external endpoints are evenly spread out across all workloads. However, if certain nodes need to open many connections externally while others don’t, you end up needing to increase the port allocation (using the minimum ports per node configuration) for all nodes and wasting valuable external IP resources.

For example, a 4,000-instance deployment behind a NAT gateway with a 1,024 ports-per-node configuration would need 64 external IP addresses allocated to the NAT gateway. Now say one of these nodes occasionally needs to burst to 4,096 ports. You would have to raise that configuration for all nodes and end up allocating 254 external IP addresses (almost a /24’s worth of external IPs). While that one node now has the ports it needs, the other 3,999 nodes each hold 4,096 ports that they don’t really require and that sit mostly idle.
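The arithmetic behind those two figures can be reproduced with a short sketch. It assumes each external IP offers 64,512 usable source ports (65,536 minus the first 1,024 well-known ports), a figure not stated in the text above, and it simply divides total port demand by that number. The function name `external_ips_needed` is hypothetical and used only for illustration.

```python
import math

# Assumption (not stated in the text): each external IP offers 64,512 usable
# source ports, i.e. 65,536 minus the first 1,024 well-known ports.
USABLE_PORTS_PER_IP = 65_536 - 1_024


def external_ips_needed(instances: int, ports_per_instance: int) -> int:
    """Estimate external IPs for a static, equal per-instance port allocation.

    This divides aggregate port demand by the ports one IP provides; it does
    not model how individual instances are packed onto individual IPs.
    """
    total_ports = instances * ports_per_instance
    return math.ceil(total_ports / USABLE_PORTS_PER_IP)


print(external_ips_needed(4_000, 1_024))  # 64
print(external_ips_needed(4_000, 4_096))  # 254
```

Quadrupling the per-node setting roughly quadruples the IP count, even though only one node needed the extra ports.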

DPA solves this problem elegantly: it allows you to configure both a “Minimum ports per VM” and a “Maximum ports per VM” for a NAT gateway. When a node starts using up ports and gets close to exhausting its “Minimum ports per VM” configuration, DPA automatically allocates more ports to that node, and only that node, doubling its current port count each time. It keeps allocating more ports as necessary, up to the configured “Maximum ports per VM” setting. Conversely, when the node releases more than half of its connections, the NAT gateway deallocates the extra ports until the node is back at the configured “Minimum ports per VM”.
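The doubling behavior described above can be illustrated with a simplified model. This is a sketch, not the gateway's actual algorithm: the function name `allocation_for_demand` is hypothetical, it grows the allocation only once demand exceeds it (the text says growth starts when a node gets "close to" exhaustion, with no exact threshold given), and it ignores the timing of scale-down.

```python
def allocation_for_demand(ports_in_use: int, min_ports: int, max_ports: int) -> int:
    """Return the port allocation a node would settle at for a given demand.

    Simplified model: start at the minimum and double until the allocation
    covers the demand or reaches the configured maximum.
    """
    allocation = min_ports
    while allocation < ports_in_use and allocation < max_ports:
        allocation = min(allocation * 2, max_ports)
    return allocation


# Minimum 1,024 and maximum 4,096 ports per VM, as in the running example.
for demand in (500, 1_500, 3_000, 10_000):
    print(demand, allocation_for_demand(demand, 1_024, 4_096))
# 500 1024
# 1500 2048
# 3000 4096
# 10000 4096
```

In this model, a node whose demand exceeds the maximum stays capped at the maximum, so the “Maximum ports per VM” setting still needs to cover the largest burst you expect.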

Coming back to our earlier example: with DPA, allocating just one extra external IP, for a total of 65 external IP addresses, allows 15 nodes to burst to 4,096 ports simultaneously while the rest of your deployment stays at 1,024 ports. That is a significant improvement over the 254 external IP addresses previously required!
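One plausible reading of where the figure of 15 comes from is the number of nodes at the maximum setting that a single external IP can serve. The sketch below assumes 64,512 usable source ports per external IP, which is not stated in the text, and the helper name `nodes_at_max_per_ip` is hypothetical.

```python
# Assumption (not stated in the text): 64,512 usable source ports per
# external IP, i.e. 65,536 minus the first 1,024 well-known ports.
USABLE_PORTS_PER_IP = 65_536 - 1_024


def nodes_at_max_per_ip(max_ports_per_vm: int) -> int:
    """Number of nodes that one external IP can serve at the maximum setting."""
    return USABLE_PORTS_PER_IP // max_ports_per_vm


print(nodes_at_max_per_ip(4_096))  # 15
```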

Customize that TCP TIME_WAIT timeout

The TCP TIME_WAIT timeout, previously fixed at 120 seconds, is now configurable per NAT gateway. A shorter timeout lets connections close out faster and increases the rate at which NAT source ports can be reused for new connections.
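To get a feel for the effect on port reuse, here is a rough back-of-the-envelope model rather than a description of Cloud NAT internals. It assumes that each source port used toward a given destination is unavailable for that destination for the connection's lifetime plus the TIME_WAIT timeout. The function name, the 30-second value, and the model itself are illustrative assumptions.

```python
def max_new_connections_per_second(
    ports: int, time_wait_s: float, connection_lifetime_s: float = 0.0
) -> float:
    """Rough upper bound on sustained new connections to a single destination.

    Simplified model: every port is busy for the connection's lifetime and is
    then held for the TIME_WAIT timeout before it can serve that destination
    again.
    """
    return ports / (connection_lifetime_s + time_wait_s)


# 1,024 ports with the former fixed 120 s timeout vs. a hypothetical 30 s one.
print(round(max_new_connections_per_second(1_024, 120), 1))  # 8.5
print(round(max_new_connections_per_second(1_024, 30), 1))   # 34.1
```

Under this model the sustainable connection rate scales inversely with the timeout, which is why a shorter TIME_WAIT helps workloads that open many short-lived connections to the same endpoint.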

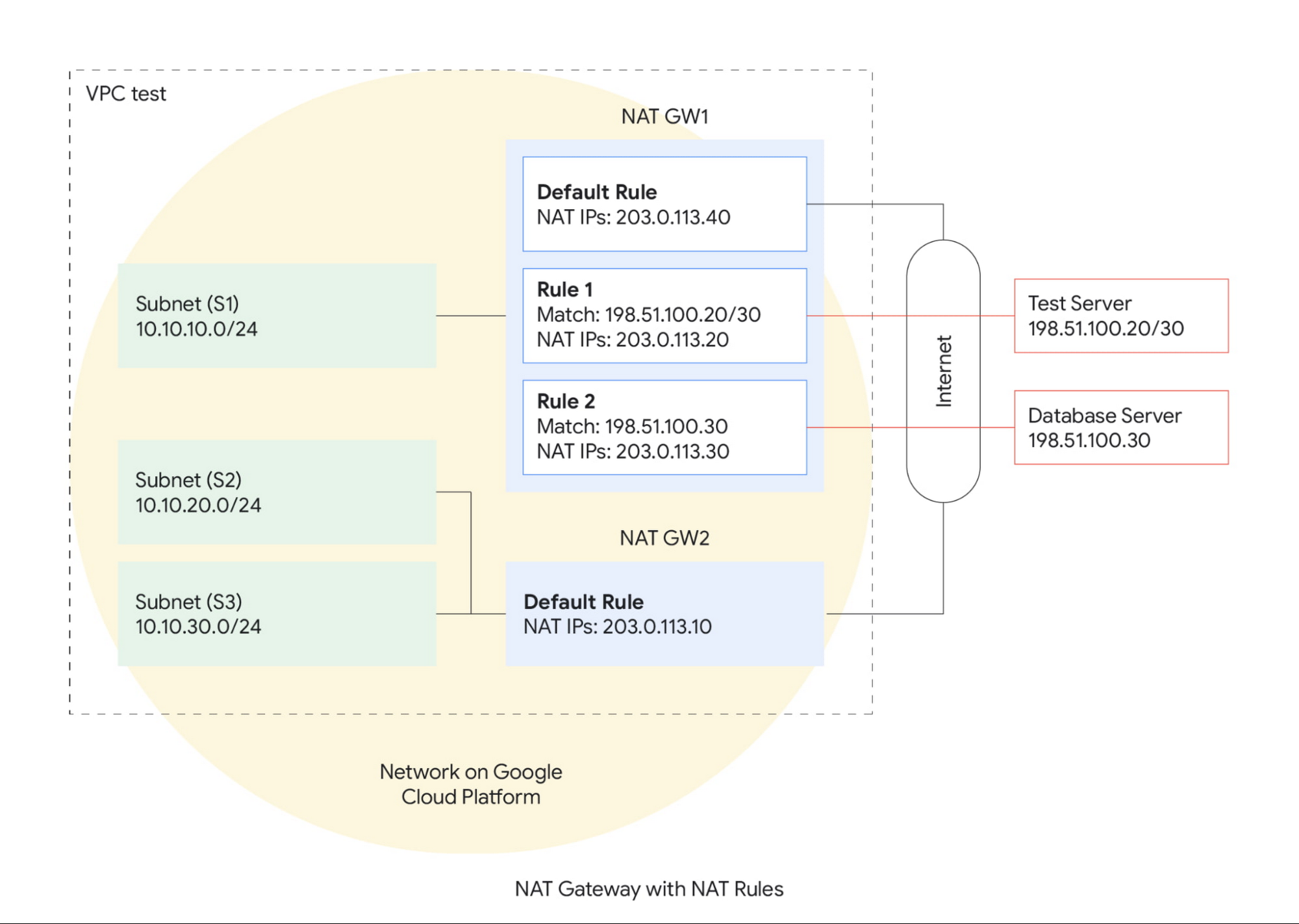

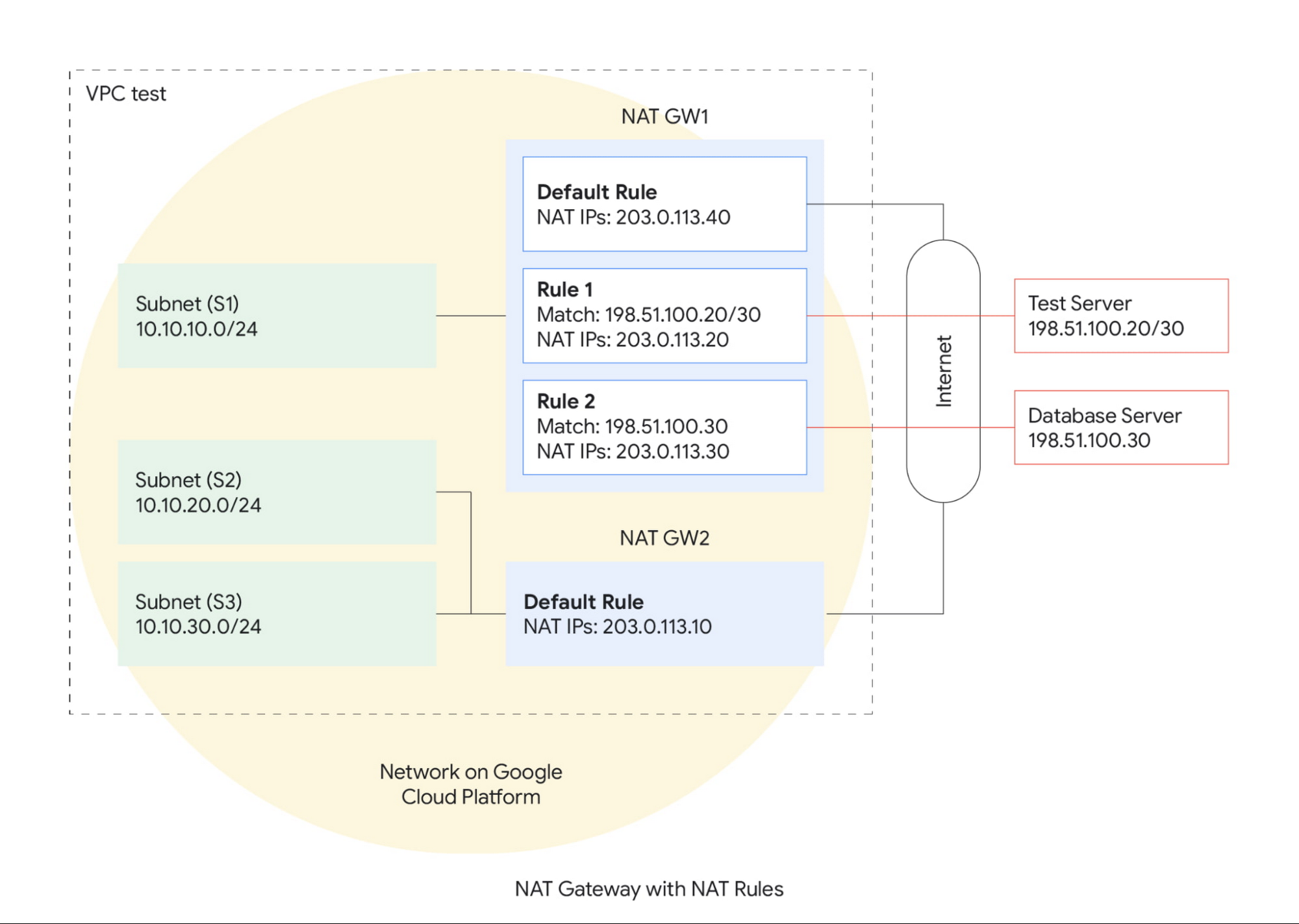

Pick your source IP based on destination with NAT Rules

A common way our customers use Cloud NAT is to configure their NAT gateway in manual IP allocation mode and add or remove reserved external IP addresses, so that outbound traffic originates from predictable IP addresses.

One of the key reasons to use manual IP allocation mode is to let third-party systems allowlist your source IP addresses. Think of payment processor endpoints, restricted source repositories, and rate-limited APIs as examples. Cloud NAT is a great way to stretch the same predictable set of external IP addresses over many instances and GKE nodes.

Unfortunately, up until now, a Cloud NAT gateway imposed the same set of manual IP addresses for all destinations on the internet. If your instances needed more ports for other destinations on the internet, you then had to manually allocate more IP addresses to the NAT gateway and inform all your external partners of the new set of IP addresses so they could add these addresses into their allowlist. Cloud NAT Rules now have you covered!

NAT Rules enable innovative use-cases in Cloud NAT, for example:

You can configure a NAT gateway that uses a small set of predefined IP addresses only for certain destinations that need IP allowlisting, while using another, larger set for all other destinations. This allows you to scale both sets independently (and scale up the allowlisting set only when needed).

Destination IP addresses that belong to low-priority endpoints (and can hence tolerate some connection drops due to port exhaustion) can have a smaller set of external IP addresses allocated to them. This ensures that such endpoints can never starve the port allocations for higher-priority destinations.
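Conceptually, the first use case above amounts to a lookup from destination to source IP pool. The sketch below illustrates only that idea; it is not the Cloud NAT configuration syntax. The rule table, the function name `source_pool_for`, and all addresses (taken from documentation ranges) are hypothetical.

```python
import ipaddress

# Hypothetical rule table: (destination CIDR, source IP pool). All addresses
# come from documentation ranges and are purely illustrative.
RULES = [
    # Partner endpoints that allowlist a small, stable set of source IPs.
    (ipaddress.ip_network("198.51.100.0/24"), ["203.0.113.10", "203.0.113.11"]),
]

# Larger pool used for every destination that matches no rule.
DEFAULT_POOL = ["203.0.113.20", "203.0.113.21", "203.0.113.22", "203.0.113.23"]


def source_pool_for(destination: str) -> list[str]:
    """Return the source IP pool used for traffic to the given destination."""
    dest = ipaddress.ip_address(destination)
    for network, pool in RULES:
        if dest in network:
            return pool
    return DEFAULT_POOL


print(source_pool_for("198.51.100.7"))  # the small allowlisted pool
print(source_pool_for("192.0.2.1"))     # the default pool
```

Because the two pools are separate, growing `DEFAULT_POOL` to add ports for general internet traffic leaves the allowlisted set untouched, so partners do not need to update their allowlists.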

Moreover, you can combine DPA with NAT Rules for even more scalability! When both features are configured on a NAT gateway, Cloud NAT will intelligently manage port allocations separately for both default and rule-based destinations and scale them both up/down separately.

We hope you are as excited about these new NAT capabilities as we are! Feel free to take them for a spin: try out the NAT Rules codelab, see more examples of NAT Rules configurations, and review the Cloud NAT Dynamic Port Allocation documentation. If you haven’t already, explore how to enable Cloud NAT for your Compute Engine and GKE workloads.