Picture this: How media companies can render faster — for less — with cloud-based NFS caching

Brennan Doyle

Solutions Architect

Tom Taylor

Founder, Gunpowder Technologies

Content creators are by nature unique. So it should come as no surprise that content creation companies also have unique needs — especially when it comes to building out the infrastructure they need to deliver their work on time, and on budget.

Gunpowder works with all manner of creators — advertising agencies, Visual Effects (VFX) studios, media, and even individual artists — to help them configure the compute, storage and networking capacity that powers their ideas, both on-premises and in the cloud. As a Google Cloud partner, we recently started using an open-source NFS caching system called knfsd with several of our clients, and found that it can help solve two high-level challenges that creative companies face:

Harnessing excess capacity: Whether it’s as a long-term solution, as part of a migration, or scaling with cloud resources to accommodate a short-term deadline, creatives can augment on-prem compute and storage with a hybrid cloud configuration.

Controlling costs: Media companies can save on both storage and compute by chasing lower-cost, ephemeral compute resources in the cloud, minimizing storage outlays, and keeping network changes to a minimum.

To illustrate, read on for examples from some of the creative companies we work with, plus a behind-the-scenes look at how the virtual caching appliance works, and how to start using it as part of your own content creation workflow.

Bursting to the cloud with Refik Anadol, media artist with a global conscience

Earlier this year, renowned Turkish media artist Refik Anadol was invited to present his work at the World Economic Forum, a.k.a. Davos. Refik envisioned an AI-generated art installation that looked at the plight of coral reefs under climate change, utilizing approximately 100 million coral images as raw data.

Refik had just three weeks to produce the piece, but the servers in his Los Angeles studio would have needed at least six weeks to complete the render. Refik turned to Gunpowder to help him meet his deadline. We identified low-cost Spot VM capacity at Google Cloud’s Oregon data center (which has the added benefit of being powered by 100% carbon-free energy), and set up knfsd to cache the data there. Refik used up to 250 T4 GPUs to render the final piece, “Artificial Realities: Coral Installation,” delivering it in time for the event, all on sustainably powered resources.

Controlling costs at cloud-first VFX studio House of Parliament

In the past year alone, House of Parliament has completed nine Super Bowl commercials and created all of the visual effects for the music video for Taylor Swift’s song “Lavender Haze,” among other projects.

House of Parliament is 100% in Google Cloud, but which cloud region it runs in depends on the day. Demand for compute fluctuates with the project at hand, so the studio is always chasing the region with available capacity at the lowest Spot price (Spot VMs are up to 91% cheaper than regular instances). Knfsd helps with that, too. The caching appliance sits in front of the studio’s main file system in us-west2 (Los Angeles), and the render node VMs connect to the cache as they work through their daily jobs. This way, the render nodes can work off House of Parliament’s full storage array without provisioning storage in the region or copying data there, either of which would incur additional costs and slow down the workflow.
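The “chase the cheapest region with capacity” decision can be sketched as a simple filter-then-minimize step. This is a minimal illustration, not a Google Cloud API: the `RegionOffer` type, the region names, and the prices and GPU counts are all hypothetical, and in practice those inputs would come from your own pricing and capacity checks.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegionOffer:
    region: str                     # hypothetical region label
    spot_price_per_gpu_hour: float  # hypothetical USD figure, not real pricing
    available_gpus: int             # hypothetical capacity snapshot


def pick_region(offers, gpus_needed):
    """Return the cheapest region that can supply gpus_needed, or None."""
    eligible = [o for o in offers if o.available_gpus >= gpus_needed]
    if not eligible:
        return None
    return min(eligible, key=lambda o: o.spot_price_per_gpu_hour).region


offers = [
    RegionOffer("region-a", 0.14, 40),
    RegionOffer("region-b", 0.11, 8),
    RegionOffer("region-c", 0.12, 64),
]
print(pick_region(offers, 32))  # region-c: region-b is cheaper but too small
```

Because the render nodes read through a cache rather than from storage provisioned in the chosen region, the storage side does not have to change when this choice flips from one day to the next.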

Caching solution overview — the geeky stuff

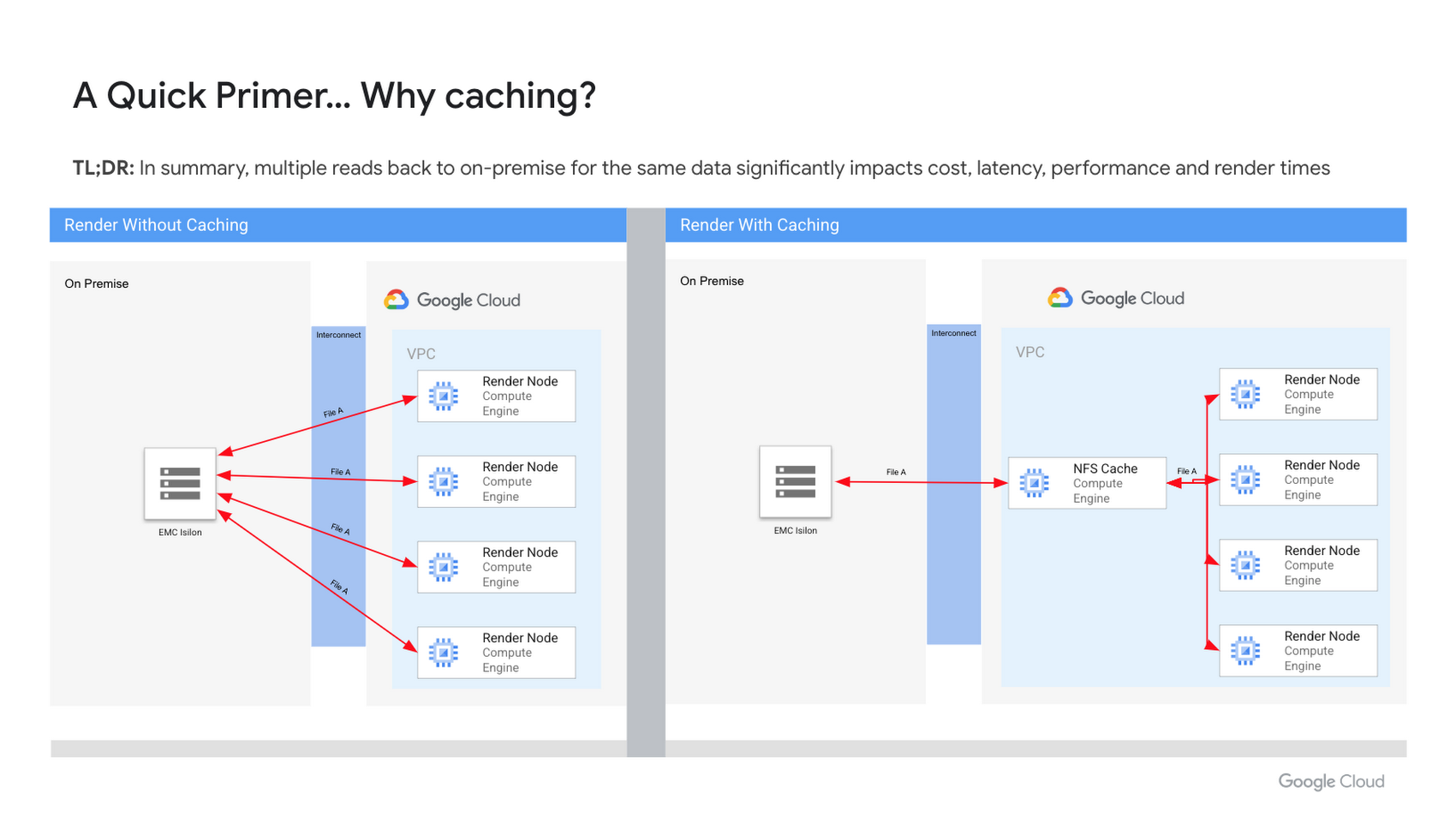

The cloud caching solution is relatively simple. Before, when customers wanted to use the cloud to scale existing compute, ‘render workers’ running in Google Cloud mounted and read files directly from on-prem NFS file servers. To minimize latency and optimize data transfer, customers provisioned a high-bandwidth pipe between their on-prem data center and Google Cloud, using Cloud VPN or Dedicated Interconnect. While this solution certainly works, it can suffer from network latency, and the extra requests put a strain on customers’ on-prem NFS storage arrays.

Now, instead of going back to the on-prem data center for their data, the remote workers first check whether the data they need is available from the cloud-based knfsd virtual caching appliance. This dramatically cuts down on the number of calls the render workers make back to the on-prem file servers, which lets customers downsize their VPN or Dedicated Interconnect connections, improves throughput, and reduces I/O to and from the on-prem file servers.
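To make the before-and-after concrete, here is a toy simulation that counts how many reads reach the origin filer when many render workers read the same assets. It is a sketch under simplifying assumptions (an in-memory dict stands in for the filer, the cache never evicts, and the `Origin`/`ReadThroughCache` names and file paths are hypothetical); it models the read-through idea, not knfsd’s kernel implementation.

```python
class Origin:
    """Stand-in for the on-prem NFS filer; counts requests that cross the WAN."""

    def __init__(self, files):
        self.files = files
        self.requests = 0

    def read(self, path):
        self.requests += 1
        return self.files[path]


class ReadThroughCache:
    """Serves repeated reads locally; only misses go back to the origin."""

    def __init__(self, origin):
        self.origin = origin
        self.store = {}

    def read(self, path):
        if path not in self.store:
            self.store[path] = self.origin.read(path)
        return self.store[path]


def run_render(reader, workers, assets):
    # Every worker reads every asset once, as render nodes sharing a shot might.
    for _ in range(workers):
        for path in assets:
            reader.read(path)


assets = [f"/shots/sh010/tex_{i}.exr" for i in range(50)]
files = {p: b"..." for p in assets}

direct = Origin(files)
run_render(direct, workers=100, assets=assets)

cached_origin = Origin(files)
run_render(ReadThroughCache(cached_origin), workers=100, assets=assets)

print(direct.requests, cached_origin.requests)  # 5000 50
```

With 100 workers sharing 50 files, direct mounts send 5,000 reads to the filer, while the cache sends only the 50 first-time misses. Real savings depend on how much the workers’ reads overlap and whether the working set fits in the cache.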

Knfsd builds on two existing pieces of the Linux kernel: the kernel NFS server (installed via the nfs-kernel-server package, the standard Linux NFS server), which supports NFS re-exporting; and FS-Cache (with its cachefilesd userspace daemon), which provides a persistent on-disk cache for network filesystems. It works by mounting NFS exports from a source NFS filer (typically located on-prem) and re-exporting the mount points to downstream NFS clients (typically in Google Cloud). By re-exporting, the solution provides two layers of caching:

Level 1: The standard block cache of the operating system, residing in RAM.

Level 2: FS-Cache, a Linux kernel facility that caches data from network filesystems locally on disk.

When the volume of data exceeds available RAM (L1), FS-Cache keeps it cached on disk (L2), making it possible to cache terabytes of data. By leveraging Local SSDs, a single cache node can serve up to 9 TB of cached data at blazingly fast speeds.
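The two-level lookup can be modeled as a small RAM tier in front of a larger disk tier, with the origin filer behind both. This is a simplified sketch: capacities are counted in “slots” rather than bytes, and both tiers use plain LRU eviction as a stand-in. The real page cache and FS-Cache (whose cachefilesd daemon culls based on disk-space thresholds) have their own policies, and the `TwoTierCache` class and `fetch` callback are hypothetical names.

```python
from collections import OrderedDict


class TwoTierCache:
    """Toy L1 (RAM) + L2 (local disk) cache in front of an origin filer."""

    def __init__(self, ram_slots, disk_slots, fetch):
        self.l1 = OrderedDict()  # models the OS page cache in RAM
        self.l2 = OrderedDict()  # models FS-Cache on local SSD
        self.ram_slots = ram_slots
        self.disk_slots = disk_slots
        self.fetch = fetch       # callable that reads from the origin filer
        self.stats = {"l1": 0, "l2": 0, "origin": 0}

    @staticmethod
    def _put(tier, slots, key, value):
        # Insert as most recently used; evict the least recently used entry.
        tier[key] = value
        tier.move_to_end(key)
        if len(tier) > slots:
            tier.popitem(last=False)

    def read(self, key):
        if key in self.l1:
            self.l1.move_to_end(key)
            self.stats["l1"] += 1
            return self.l1[key]
        if key in self.l2:
            self.l2.move_to_end(key)
            self.stats["l2"] += 1
            value = self.l2[key]
        else:
            self.stats["origin"] += 1
            value = self.fetch(key)
            # Data fetched from the origin is persisted on disk first...
            self._put(self.l2, self.disk_slots, key, value)
        # ...and every miss in RAM repopulates the RAM tier.
        self._put(self.l1, self.ram_slots, key, value)
        return value


cache = TwoTierCache(ram_slots=2, disk_slots=4, fetch=lambda k: f"data:{k}")
for key in ["a", "b", "c", "d", "a", "a"]:
    cache.read(key)
print(cache.stats)  # {'l1': 1, 'l2': 1, 'origin': 4}
```

Here “a” has already fallen out of the two-slot RAM tier by the time it is re-read, but it is still on “disk,” so the origin is not contacted again. That is the property that lets a cache node hold far more than its RAM.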

Caching appliance FTW

Implementing an NFS cache like knfsd brings all sorts of benefits. Let’s take a look at six of them:

1. Dramatically scale storage speeds

In the customer stories above, we discussed how NFS caching was used to accelerate access to files.

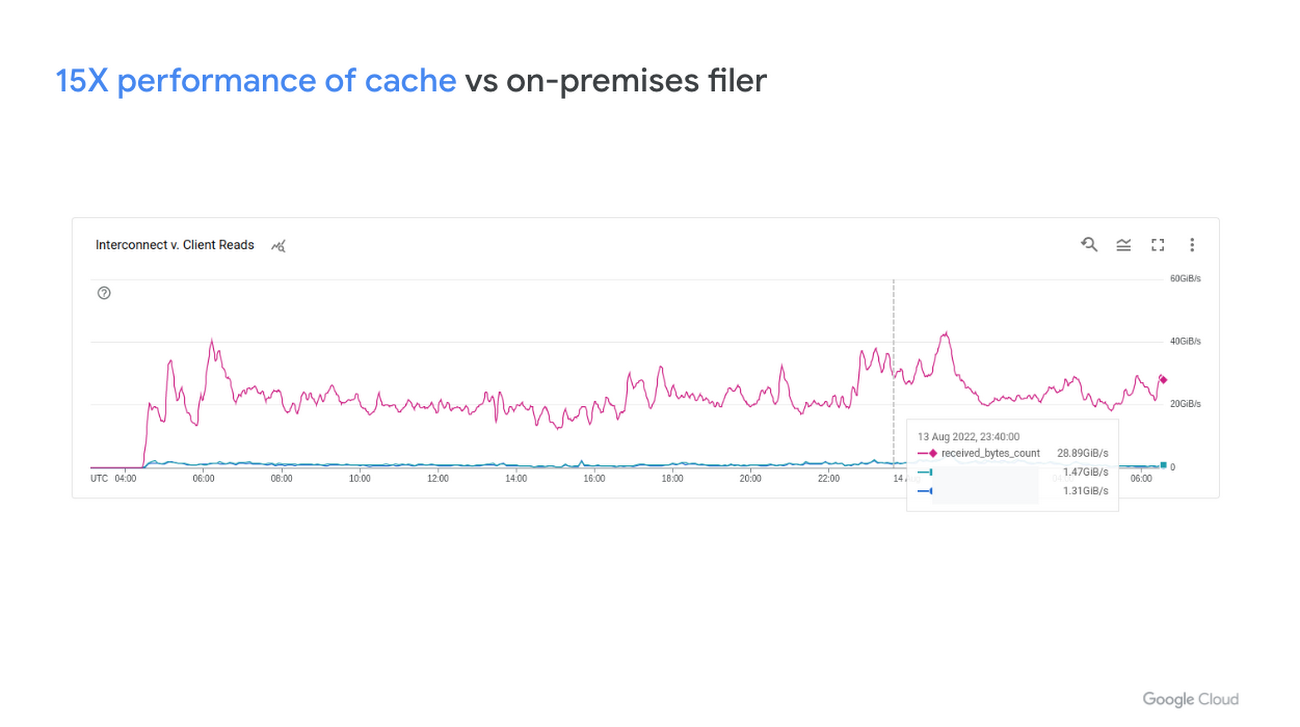

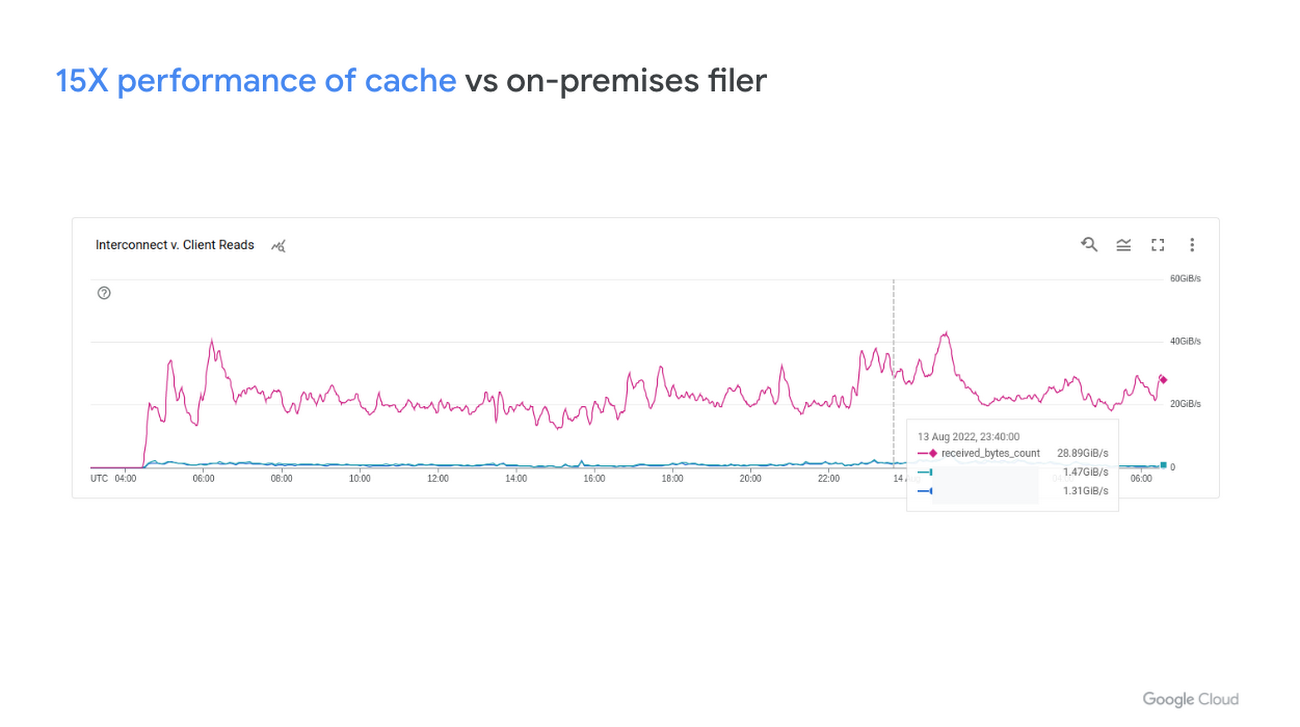

For the on-prem filer to cloud-based cache pattern (e.g., Refik Anadol), we’ve seen as much as a 15X performance improvement from leveraging an NFS cache.

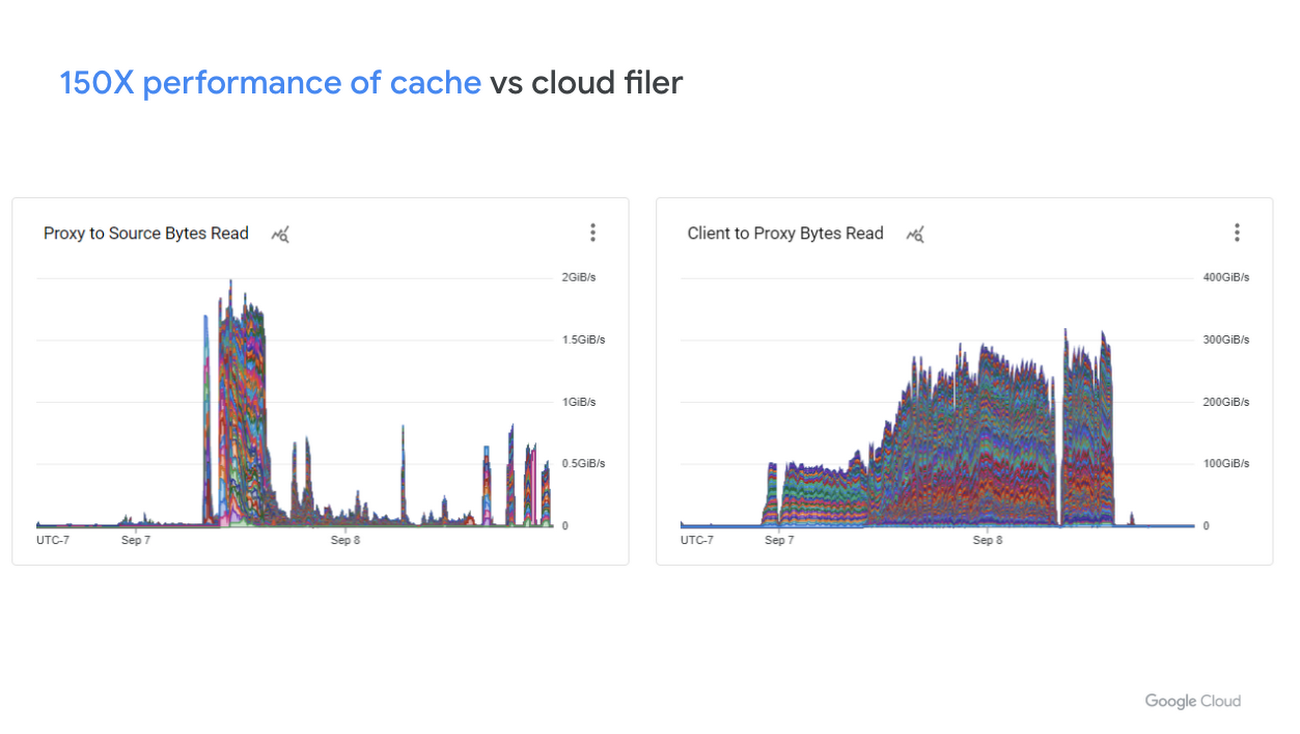

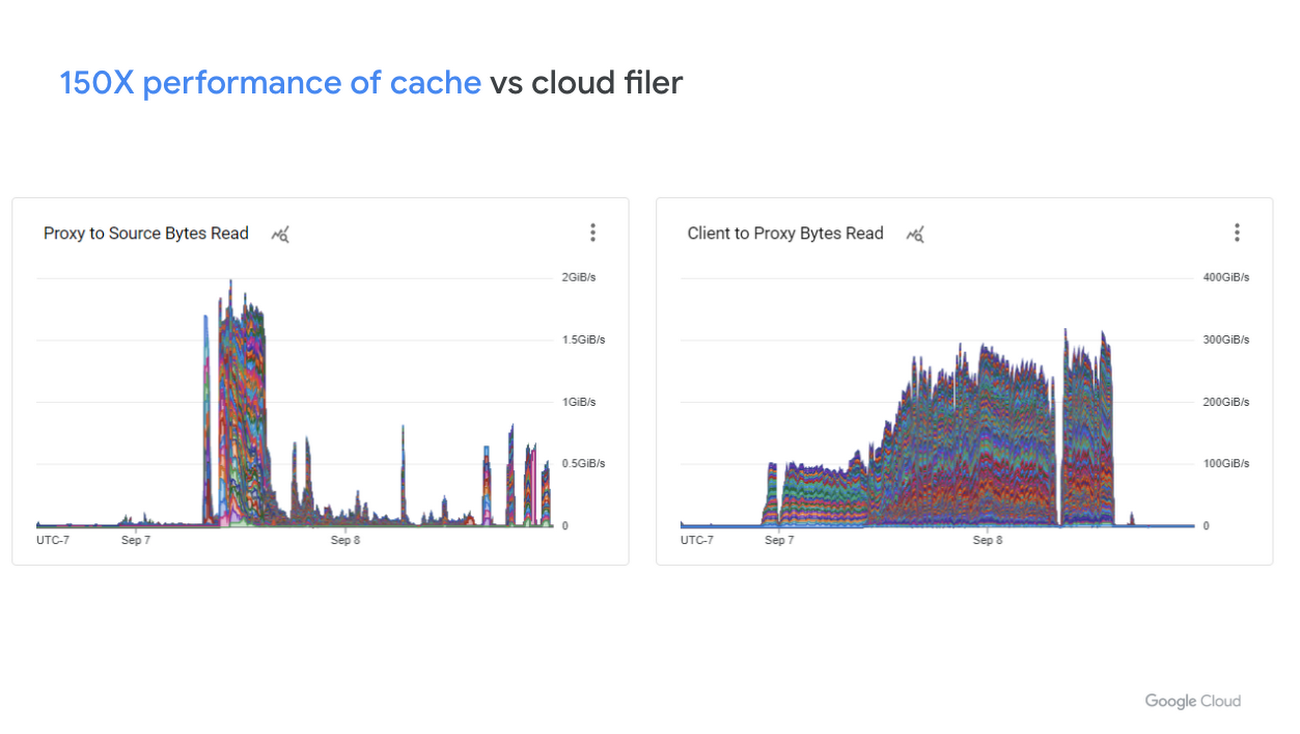

For the cloud-based filer to cloud-based cache pattern (e.g., House of Parliament), with a conservatively provisioned filer, we’ve seen as much as a 150X performance improvement from leveraging an NFS cache.

2. Stop overprovisioning steady-state storage

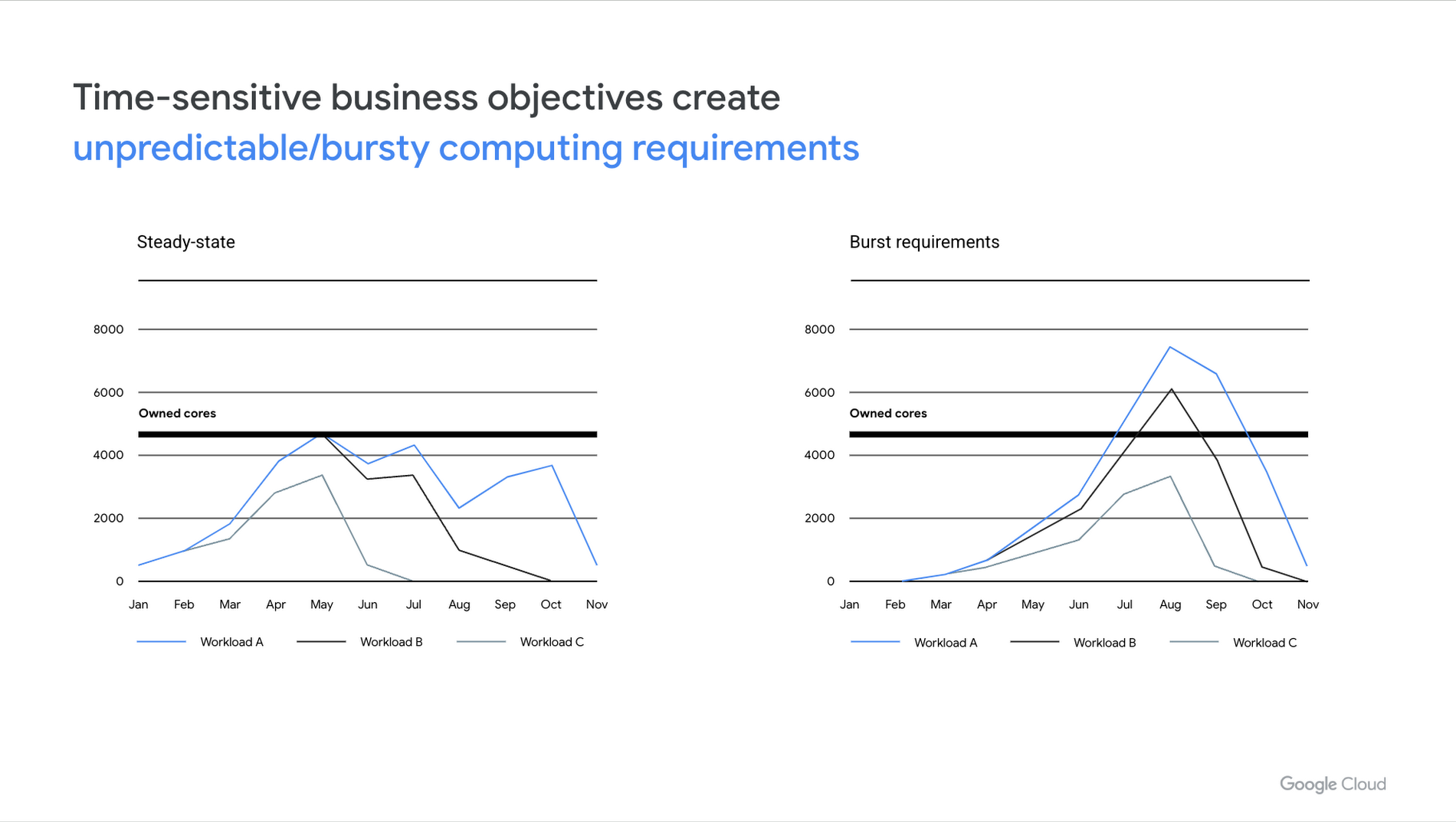

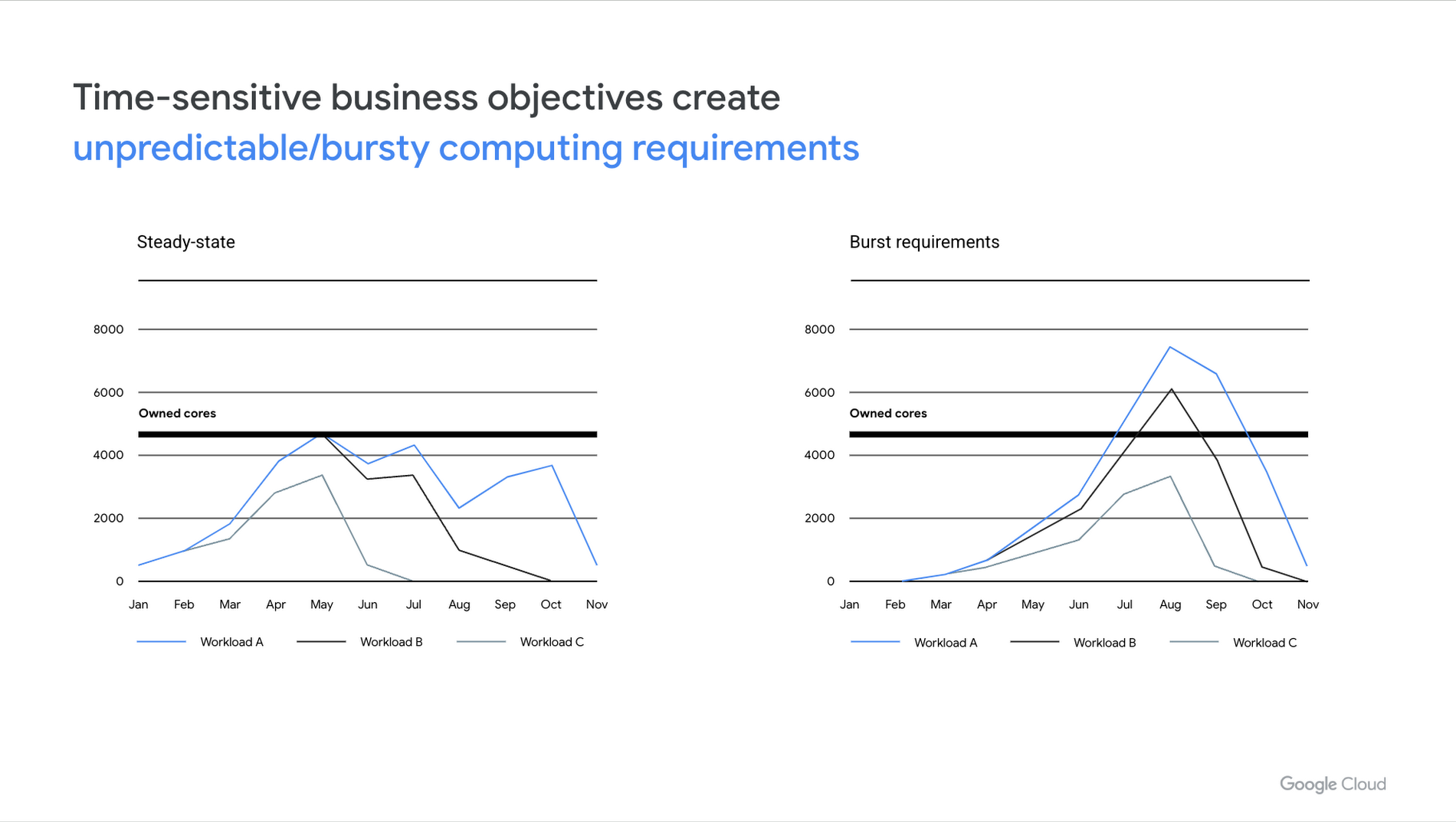

Traditionally, content creators have been forced to plan very carefully how many resources (physical machines) they need to get a project done, and to match capital expenditures to their compute and storage needs. Even if you get this right at the start of a project, production needs seem to change constantly, and playing this resource management game is challenging, especially when you’re balancing multiple projects.

Cloud makes it much easier to scale compute to match these shifting requirements. And with NFS caching, you can easily scale storage size and performance to match as well, without having to provision more storage or perform large data migrations.

3. Save money on compute

NFS caching increases data throughput, which decreases render times and overall job costs. It can also offset the added latency of distance, enabling jobs to move to remote data centers with more low-priced Spot VM capacity. This works because many of our customers build checkpointing into their render jobs, making their workloads fault tolerant and therefore suitable for Spot VMs, which can be preempted at any time.
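Checkpointing is what makes preemptible capacity safe to use: a job records which frames are finished so that a replacement VM can skip them. The sketch below shows one minimal way to do that with a JSON file. The `render_frame` function, the checkpoint format, and the `preempt_after` switch (used only to simulate a preemption) are hypothetical, and this is not how any particular render manager implements it.

```python
import json
import os
import tempfile


def render_frame(frame):
    """Hypothetical placeholder for the real per-frame render call."""
    return f"frame_{frame:04d}.exr"


def _save(path, done):
    # Write to a temp file, then atomically replace, so a preemption
    # mid-write never leaves a corrupt checkpoint behind.
    tmp = path + ".tmp"
    with open(tmp, "w") as f:
        json.dump(sorted(done), f)
    os.replace(tmp, path)


def render_with_checkpoints(frames, checkpoint_path, preempt_after=None):
    """Render frames, recording each finished one so a restart can resume.

    preempt_after simulates a Spot preemption after that many new frames.
    Returns the number of frames rendered in this run.
    """
    done = set()
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as f:
            done = set(json.load(f))
    rendered_now = 0
    for frame in frames:
        if frame in done:
            continue  # finished before the previous VM was preempted
        if preempt_after is not None and rendered_now == preempt_after:
            raise RuntimeError("simulated Spot VM preemption")
        render_frame(frame)
        done.add(frame)
        rendered_now += 1
        _save(checkpoint_path, done)
    return rendered_now


ckpt = os.path.join(tempfile.mkdtemp(), "job.json")
try:
    render_with_checkpoints(range(1, 11), ckpt, preempt_after=6)
except RuntimeError:
    pass  # the VM went away after frames 1-6
print(render_with_checkpoints(range(1, 11), ckpt))  # 4: only frames 7-10 remain
```

A preemption costs at most the frame in progress rather than the whole job, which is why such jobs can follow the cheapest Spot capacity.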

4. Save money on networking

A lot of workloads reuse files many times over, across any number of processes. By moving the data to the cloud once and then accessing it repeatedly from the NFS cache, we reduce the amount of data that needs to traverse a VPN or Dedicated Interconnect. This lets us rightsize the network and provision a smaller connection.
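A back-of-the-envelope calculation shows why reuse matters for rightsizing. This sketch assumes the whole dataset is re-read on every pass and that, with a cache, it crosses the link only once. The job size, pass count, and deadline are hypothetical, and the estimate ignores protocol overhead, writes, and any re-fetches caused by cache evictions.

```python
def required_gbps(dataset_tb, passes, window_hours, cached):
    """Average link bandwidth (Gbit/s) needed to move a job's reads in time.

    Without a cache, every pass re-reads the dataset over the WAN; with a
    cloud-side cache, the dataset is assumed to cross the link once.
    """
    crossings = 1 if cached else passes
    bits = dataset_tb * 1e12 * 8 * crossings
    return bits / (window_hours * 3600) / 1e9


# Hypothetical job: 20 TB of assets, read 10 times, 48-hour deadline.
print(round(required_gbps(20, 10, 48, cached=False), 2))  # 9.26
print(round(required_gbps(20, 10, 48, cached=True), 2))   # 0.93
```

Under these assumptions, the required link shrinks in proportion to how many times the data is reused.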

5. Maintain artist productivity

When a storage system is overloaded, artists can’t do their work. By offloading IOPS and throughput to the caches, we keep these systems running as intended, along with their most important stakeholders: our customers’ employees.

6. Fit into existing pipelines

By fully leveraging native NFS, knfsd integrates seamlessly with existing workflows. You don’t need to install any additional software on the render node VMs. For reads, you don’t need to modify paths or filenames when moving jobs between data centers; everything is accessed the same way. And when writing new files, the system writes all data directly back to the source-of-truth file server.
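The write path described above is a write-through pattern: writes land on the source of truth, and the cache never holds the only copy. The toy model below shows that idea, with a dict standing in for the source filer and stale cached copies dropped on write. It is a hypothetical illustration (the `WriteThroughCache` class and paths are made up) and does not model the kernel NFS client’s attribute caching, which is what governs coherency in a real re-export setup.

```python
class WriteThroughCache:
    """Toy write-through layer: reads are cached, writes go to the source."""

    def __init__(self, origin):
        self.origin = origin  # dict standing in for the source-of-truth filer
        self.cache = {}

    def read(self, path):
        if path not in self.cache:
            self.cache[path] = self.origin[path]
        return self.cache[path]

    def write(self, path, data):
        self.origin[path] = data    # persist on the source of truth first
        self.cache.pop(path, None)  # drop any stale cached copy


path = "/proj/shot010/comp_v001.exr"
filer = {path: b"v1"}
layer = WriteThroughCache(filer)
layer.read(path)          # warms the cache with b"v1"
layer.write(path, b"v2")  # goes straight to the filer
print(filer[path], layer.read(path))  # b'v2' b'v2'
```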

Looking ahead

Today, a lot of creative companies still look longingly at the cloud as a way to complement their on-prem compute resources. But between steep learning curves, engineering resource constraints, and concerns about wide area network latency and bandwidth costs, using the cloud can seem out of reach.

At Gunpowder Technologies, we focus on removing these technical and resource barriers for our customers, so they can get past the tech and get back to delivering on their full creative potential. Knfsd is available as an open source repository, so if you have the engineering expertise and resources, you can integrate it into a custom pipeline yourself.

But if you’d appreciate leaning on our years of experience and letting us do the heavy lifting for you, reach out to info@gunpowder.tech to discuss how we can tailor a solution for you.

We’re excited to collaborate on these use cases with you. To learn more about how to deploy and manage the open source NFS caching system, see the single node tutorial or the Terraform-based deployment scripts hosted on GitHub. Or, for an overview and demo of the solution, reach out to your Google Cloud sales team or to Gunpowder.tech.