How to use Google Cloud to find and protect PII

Karim Wadie

Strategic Cloud Engineer

Nikhiel Sawhney

Head of EMEA Security Practice

BigQuery is a leading data warehouse solution in the market today, and is valued by customers who need to gather insights and run advanced analytics on their data. Many common BigQuery use cases involve storing and processing Personally Identifiable Information (PII), data that needs to be protected within Google Cloud from unauthorized and malicious access.

Too often, the process of finding and identifying PII in BigQuery data relies on manual PII discovery and duplication of that data. One common way to do this is by taking an extract of columns used for PII and copying them into a separate table with restricted access. However, creating unnecessary copies of this data and processing it manually to identify PII increases the risks of failure and subsequent security events.

In addition, the security of PII is often mandated by multiple regulations, and failure to apply appropriate safeguards may result in heavy penalties. To address this issue, customers need solutions that 1) identify PII in BigQuery and 2) automatically implement access control on that data to prevent unauthorized access and misuse, all without having to duplicate it.

This post describes a solution developed by Google Professional Services that uses Google Cloud DLP to inspect and classify sensitive data, and then uses those findings to automatically tag and protect data in BigQuery tables.

BigQuery Auto Tagging solution overview

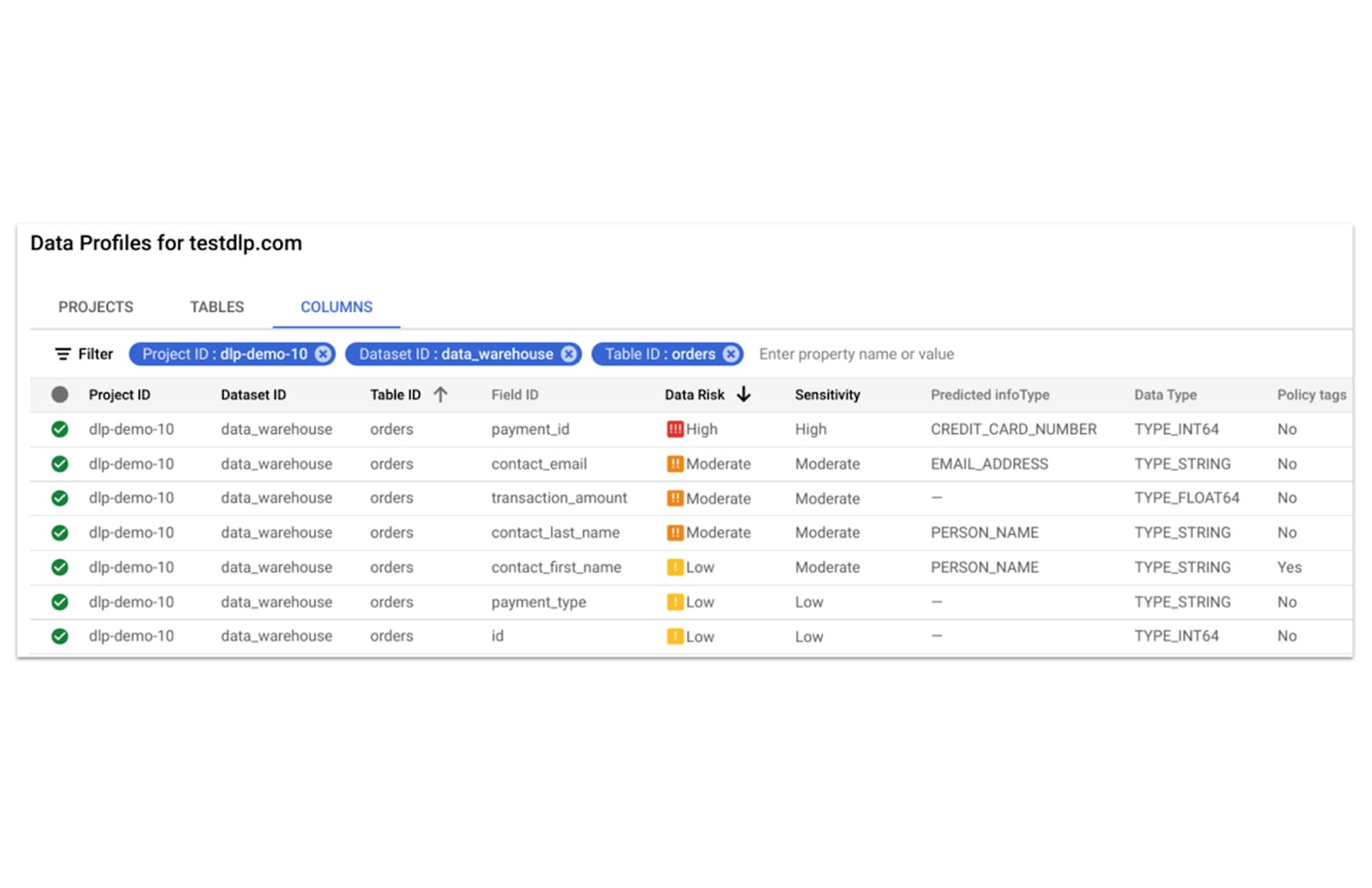

Automatic DLP can help to identify sensitive data, such as PII, in BigQuery. Organizations can use Automatic DLP to search across their entire BigQuery data warehouse for tables that contain sensitive data fields and report detailed findings in the console (see Figure 1), in Data Studio, and in a structured format (such as a BigQuery results table). Newly created and updated tables are discovered, scanned, and classified automatically in the background, without a user needing to invoke or schedule a scan. This way you have an ongoing view into your sensitive data.

In this post, we show how a new open source solution called BigQuery Auto Tagging Solution addresses our second goal: automating access control on data. This solution sits as a layer on top of Automatic DLP and automatically enforces column-level access controls to restrict access to specific sensitive data types, based on user-defined data classification taxonomies (such as high confidentiality or low confidentiality) and domains (such as Marketing, Finance, or ERP System). This minimizes the risk of unrestricted access to PII and ensures that only one copy of the data is maintained, with appropriate access control applied down to the column level.

The code for this solution is available on GitHub at GoogleCloudPlatform/bq-pii-classifier. Please note that while Google does not maintain this code, you can reach out to your Sales Representative to get in contact with our Professional Services team for guidance on how to implement it.

BigQuery and Data Catalog Policy Tags (now Dataplex) have some limitations that you should be aware of before implementing this solution to ensure that it will work for your organization:

Taxonomies and Policy Tags are not shared across regions: If you have data in multiple regions, you will need to create or replicate your taxonomy in each region where you want to apply policy tags.

Maximum number of 40 taxonomies per project: If you require different taxonomies for different business domains, or replicate taxonomies to support multiple Cloud regions, each of those counts against this quota.

Maximum number of 100 policy tags per taxonomy: Cloud DLP supports up to 150 infoTypes for classification. However, a single policy taxonomy can only hold up to 100 policy tags, including any nested categories. If you need to support more than 100 data types, you may need to split them across more than one taxonomy.
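To see how these limits interact, the following is a minimal planning sketch, not part of the solution itself. It assumes one taxonomy per region and domain, splits a flat list of policy tags into chunks of at most 100, and checks the total against the 40-taxonomy quota. The function and class names are hypothetical, the sketch ignores nested categories (which also count toward the 100-tag limit), and the limits are the ones quoted above, so check them against current quotas.

```python
from dataclasses import dataclass

# Limits as quoted in this post; verify against current quotas before relying on them.
MAX_TAXONOMIES_PER_PROJECT = 40
MAX_POLICY_TAGS_PER_TAXONOMY = 100


@dataclass(frozen=True)
class TaxonomyPlan:
    region: str
    domain: str
    part: int           # 1-based index when a domain's tags are split
    policy_tags: tuple  # tag names assigned to this taxonomy


def plan_taxonomies(regions, domains, policy_tags):
    """Return one TaxonomyPlan per (region, domain, chunk of tags)."""
    chunks = [
        tuple(policy_tags[i:i + MAX_POLICY_TAGS_PER_TAXONOMY])
        for i in range(0, len(policy_tags), MAX_POLICY_TAGS_PER_TAXONOMY)
    ]
    plans = [
        TaxonomyPlan(region, domain, part, chunk)
        for region in regions
        for domain in domains
        for part, chunk in enumerate(chunks, start=1)
    ]
    if len(plans) > MAX_TAXONOMIES_PER_PROJECT:
        raise ValueError(
            f"{len(plans)} taxonomies needed, "
            f"limit is {MAX_TAXONOMIES_PER_PROJECT} per project"
        )
    return plans


# Hypothetical inputs: 2 regions, 3 domains, 150 tags (one per infoType).
tags = [f"info_type_{n}" for n in range(150)]
plans = plan_taxonomies(["eu", "us"], ["marketing", "finance", "erp"], tags)
print(len(plans))  # 2 regions * 3 domains * 2 chunks = 12
```

Running a plan like this before deployment shows early whether the number of regions and domains fits in a single project or needs to be spread across several.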

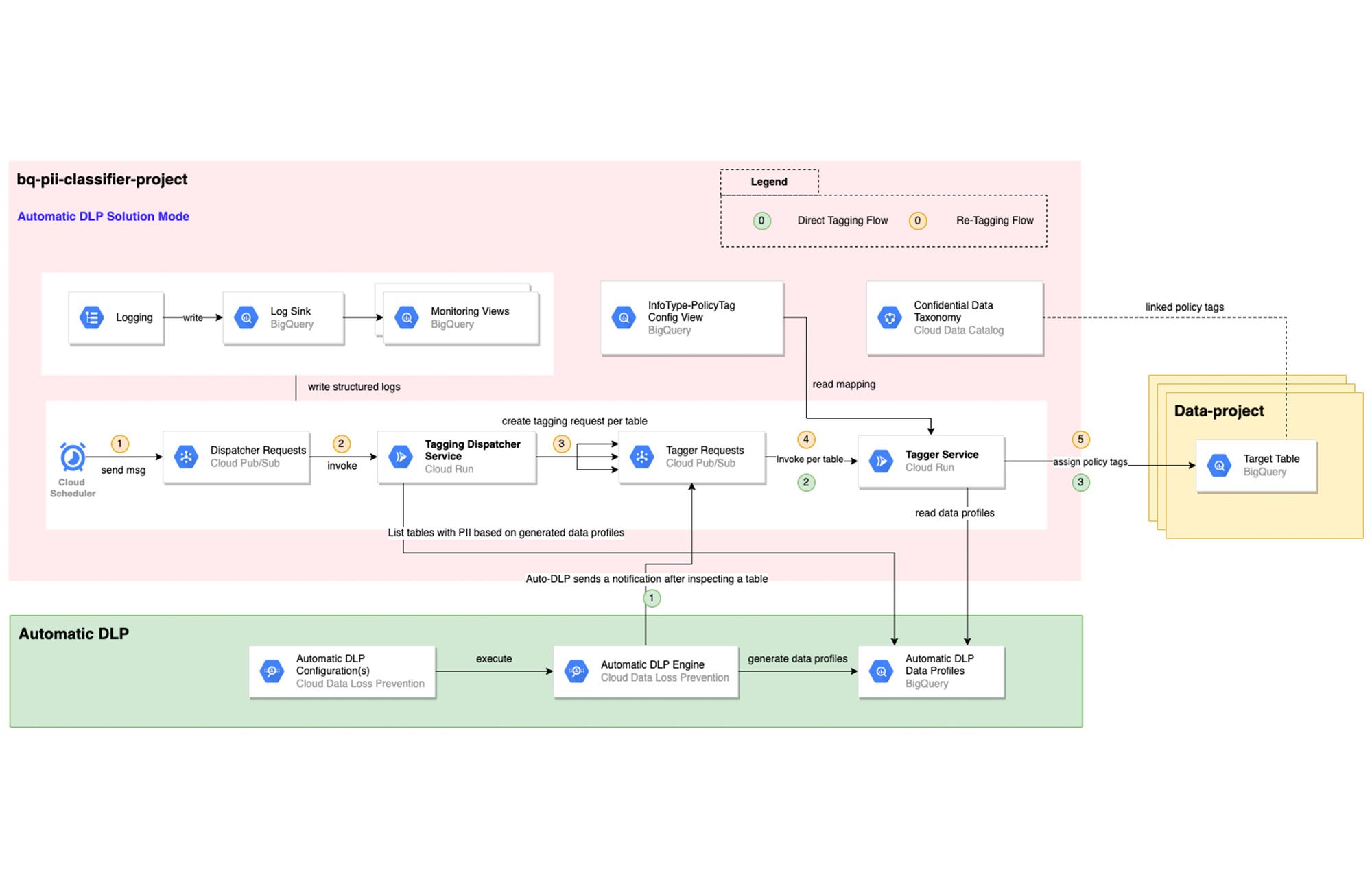

High-level overview of the solution

The solution is composed mainly of the following components: Dispatcher Requests topic, Dispatcher service, BigQuery Policy Tagger Requests topic, and BigQuery Policy Tagger service and logging components.

The Dispatcher service is a Cloud Run service that expects a BigQuery scope expressed as inclusion and exclusion lists of projects, datasets, and tables. It queries Automatic DLP data profiles to check whether the in-scope tables have data profiles generated. For each table that does, it publishes one request to the “BigQuery Policy Tagger Requests” Pub/Sub topic. This topic enables rate limiting of BigQuery column tagging operations and applies automatic retries with backoff.
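The scope filtering and one-request-per-table fan-out can be sketched roughly as follows. This is an illustration only: the `Scope` fields, the message shape, and the function names are assumptions rather than the project's actual payload, and the data profile lookup and Pub/Sub publishing are left out. Exclusions are assumed to take precedence over inclusions.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Scope:
    """Hypothetical scope shape: inclusion and exclusion lists."""
    include_projects: list = field(default_factory=list)
    include_datasets: list = field(default_factory=list)  # "project.dataset"
    include_tables: list = field(default_factory=list)    # "project.dataset.table"
    exclude_datasets: list = field(default_factory=list)
    exclude_tables: list = field(default_factory=list)


def in_scope(table, scope):
    """Return True if a "project.dataset.table" ID is included and not excluded."""
    project, dataset, _ = table.split(".")
    dataset_id = f"{project}.{dataset}"
    if table in scope.exclude_tables or dataset_id in scope.exclude_datasets:
        return False
    return (
        table in scope.include_tables
        or dataset_id in scope.include_datasets
        or project in scope.include_projects
    )


def build_tagging_requests(profiled_tables, scope):
    """Build one JSON message per in-scope table that already has a data profile."""
    return [
        json.dumps({"table": table})
        for table in sorted(profiled_tables)
        if in_scope(table, scope)
    ]


# Hypothetical table IDs standing in for tables that have data profiles.
scope = Scope(include_projects=["proj-a"], exclude_datasets=["proj-a.staging"])
profiled = {"proj-a.sales.orders", "proj-a.staging.tmp", "proj-b.hr.people"}
for message in build_tagging_requests(profiled, scope):
    print(message)  # {"table": "proj-a.sales.orders"}
```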

The “BigQuery Policy Tagger” service is also a Cloud Run service. It receives the DLP scan results for a BigQuery table, determines the final InfoType of each column, and applies the appropriate policy tag as defined in the InfoType-to-policy-tag mapping. Only one InfoType is selected per column, and the service assigns the policy tag associated with it.
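As a rough illustration of the tagging decision, the sketch below picks one InfoType per column and looks up its policy tag. The selection rule is an assumption made for the example (highest finding count wins, ties broken alphabetically); the post does not specify how the solution chooses. The function names, policy tag names, and finding counts are also hypothetical.

```python
def pick_info_type(findings):
    """Pick one InfoType for a column from {info_type: finding_count}.

    Assumption: highest count wins, ties broken alphabetically for determinism.
    """
    if not findings:
        return None
    return min(findings, key=lambda name: (-findings[name], name))


def assign_policy_tags(column_findings, info_type_to_tag):
    """Map each column to a policy tag, or None when no mapping applies."""
    result = {}
    for column, findings in column_findings.items():
        info_type = pick_info_type(findings)
        result[column] = info_type_to_tag.get(info_type)
    return result


# Hypothetical InfoType-to-policy-tag mapping and per-column findings.
mapping = {
    "EMAIL_ADDRESS": "high_confidentiality/email",
    "PHONE_NUMBER": "high_confidentiality/phone",
}
columns = {
    "contact": {"EMAIL_ADDRESS": 90, "PHONE_NUMBER": 10},
    "notes": {},
    "code": {"STREET_ADDRESS": 5},
}
print(assign_policy_tags(columns, mapping))
# {'contact': 'high_confidentiality/email', 'notes': None, 'code': None}
```

Columns with no findings, or with an InfoType that has no entry in the mapping, are left untagged in this sketch.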

Lastly, all Cloud Run services maintain structured logs that are exported by a log sink to BigQuery. There are multiple BigQuery views that help with monitoring and debugging Cloud Run call chains and tagging actions on columns.

Deployment options

After deploying the solution, it can be used in two different ways:

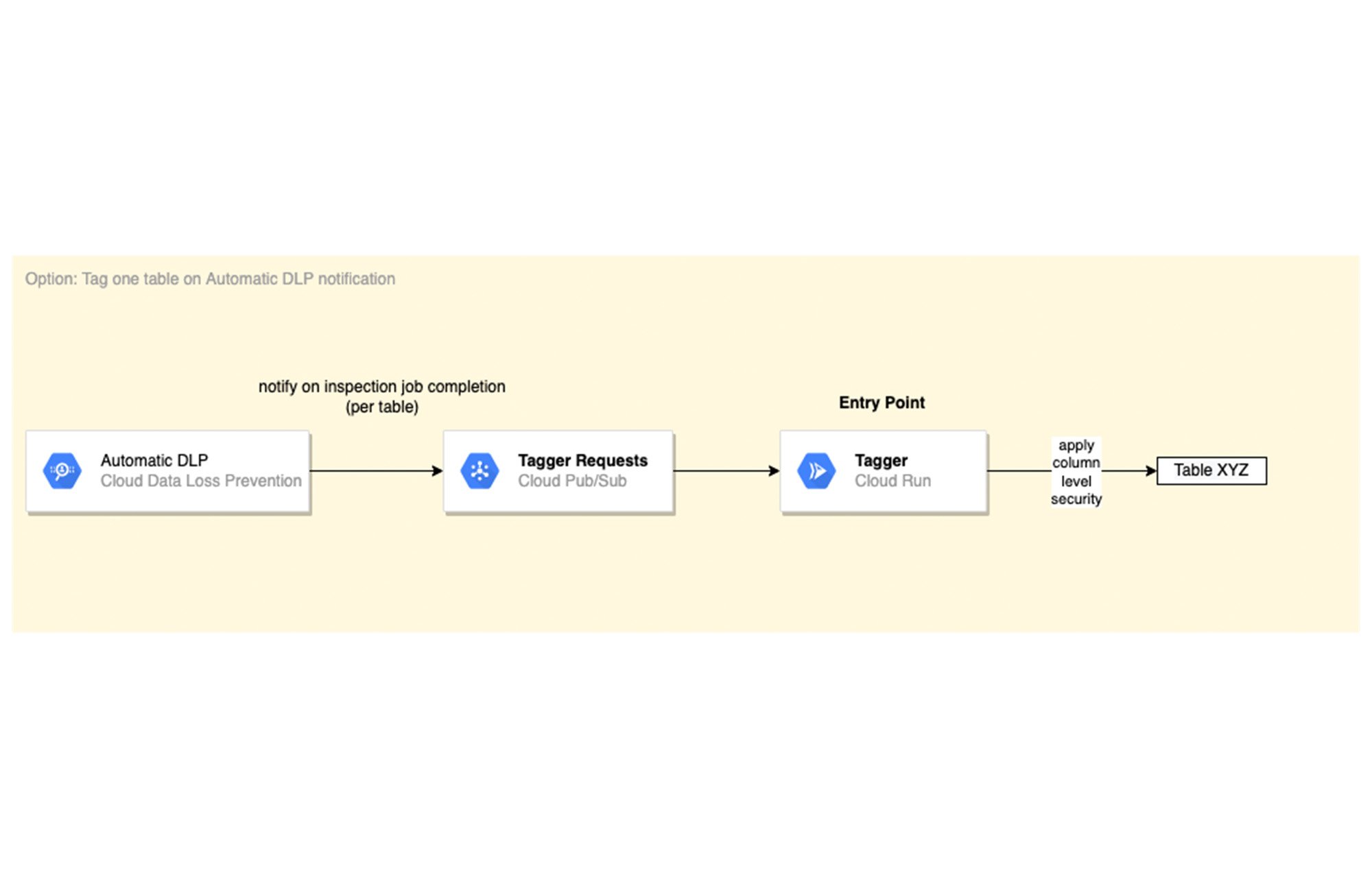

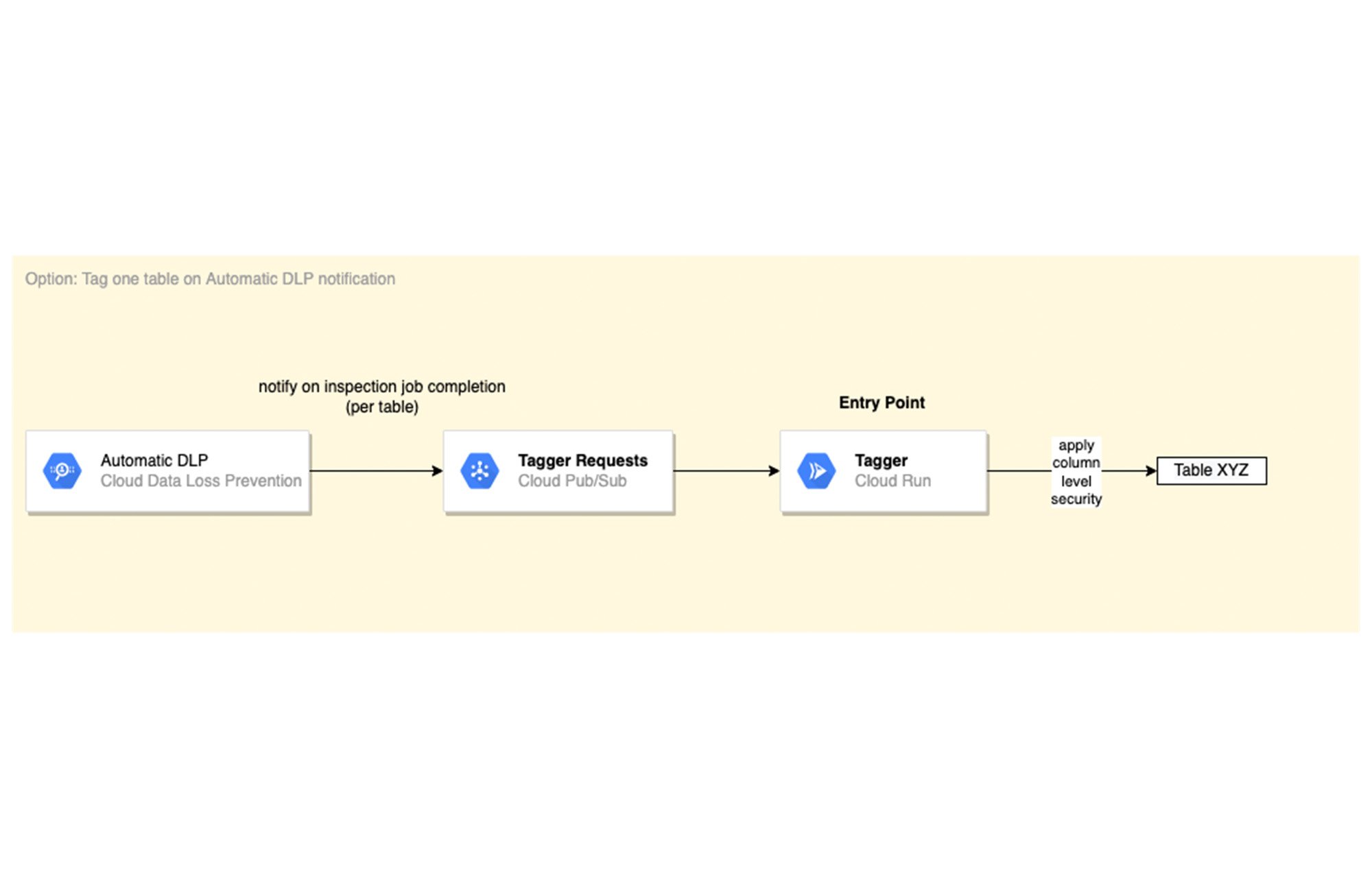

[Option 1] Automatic DLP-triggered immediate tagging:

Automatic DLP is configured to send a Pub/Sub notification on each inspection job completion. The Pub/Sub notification includes the resource name and it triggers the Tagger service directly.

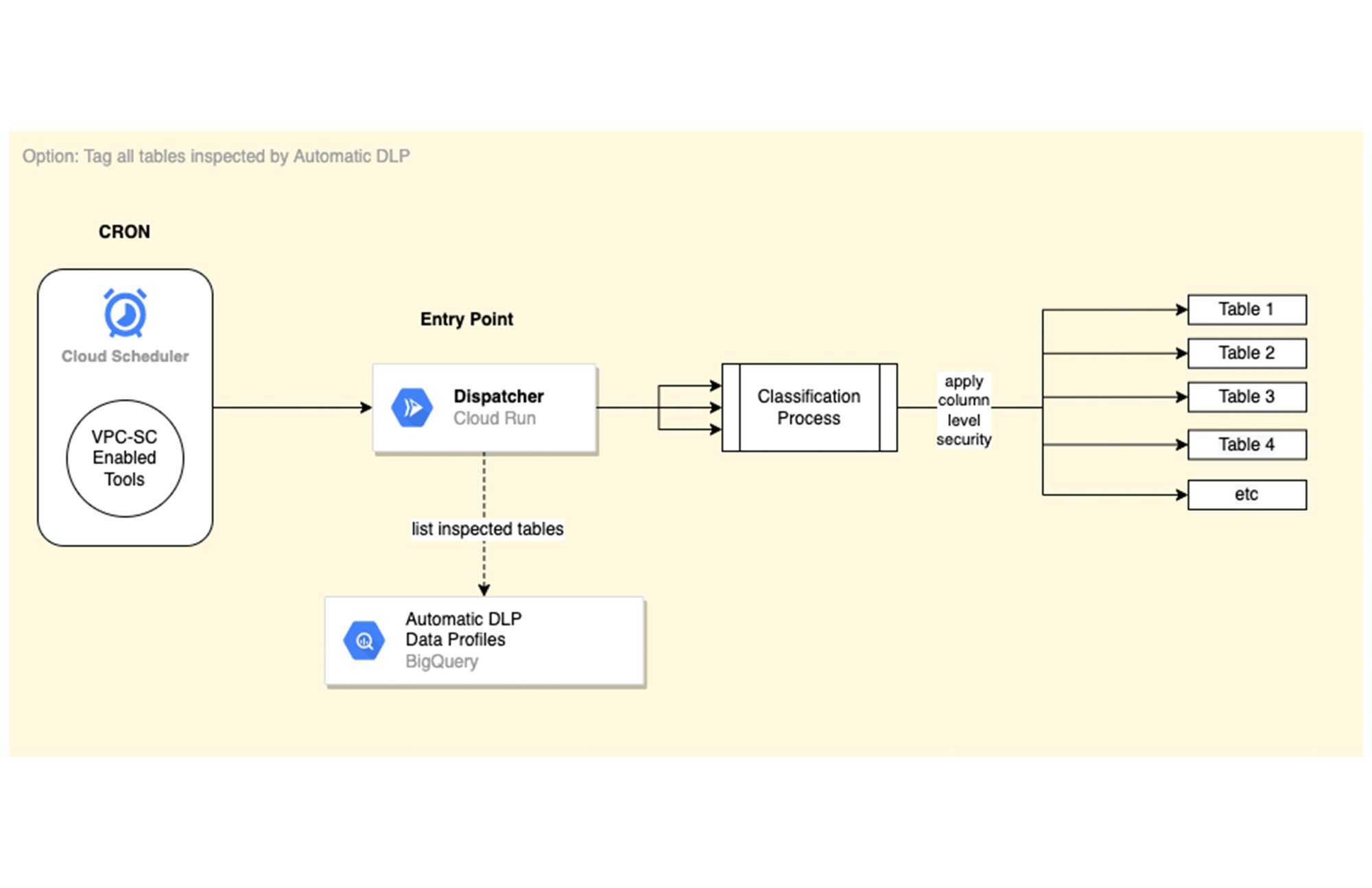

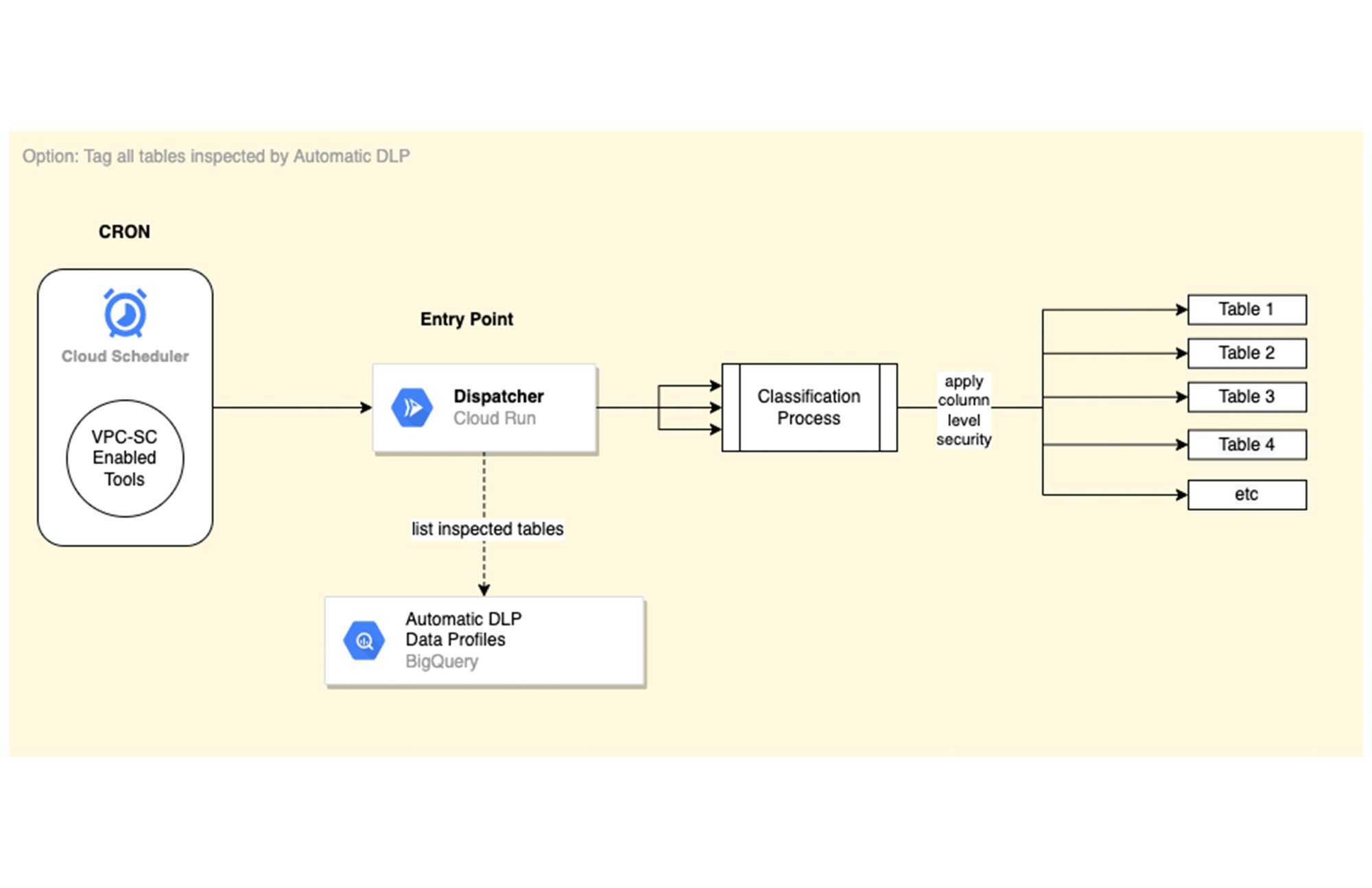

[Option 2] Scheduled tagging:

In this scenario, the Dispatcher service is invoked on a schedule with a payload representing a BigQuery scope. It lists the tables that Automatic DLP has inspected and creates one tagging request per table. You could use Cloud Scheduler (or any orchestration tool) to invoke the Dispatcher service. If the solution is deployed within a VPC-SC perimeter, use a scheduler that supports VPC-SC (such as Composer or a custom application).

In addition, more than one Cloud Scheduler job or trigger can be defined to group projects, datasets, and tables that share the same tagging schedule (such as daily or monthly).
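Grouping scopes by schedule could be expressed as plain data before creating the actual scheduler jobs. The sketch below is illustrative only: the group names, cron expressions, scope fields, and dispatcher URL are all hypothetical, and it only builds trigger definitions rather than calling any scheduler API.

```python
import json

# Hypothetical groups: each schedule carries the BigQuery scope it should tag.
SCHEDULE_GROUPS = {
    "daily": ("0 2 * * *", {"include_datasets": ["proj-a.sales"]}),
    "monthly": ("0 3 1 * *", {"include_projects": ["proj-archive"]}),
}


def build_trigger_definitions(groups, dispatcher_url):
    """Return one HTTP trigger definition per schedule group."""
    return [
        {
            "name": f"tagging-{name}",
            "schedule": cron,
            "method": "POST",
            "url": dispatcher_url,
            "body": json.dumps(scope, sort_keys=True),
        }
        for name, (cron, scope) in groups.items()
    ]


# The URL is a placeholder, not a real Dispatcher endpoint.
triggers = build_trigger_definitions(SCHEDULE_GROUPS, "https://dispatcher.example.com")
for trigger in triggers:
    print(trigger["name"], trigger["schedule"], trigger["body"])
```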

To learn more about Automatic DLP, see our webpage. To learn more about the BQ classifier, see the open source project on GitHub: GoogleCloudPlatform/bq-pii-classifier and get started today!