Exploring container security: running and connecting to HashiCorp Vault on Kubernetes

Seth Vargo

Distinguished Software Engineer

If you’re serious about security, you need a secrets management tool that provides a single source of secrets, credentials, and other sensitive information for your organization. HashiCorp Vault is a popular open-source tool that does just that. Vault operates in a client-server model: a central cluster of Vault servers stores and maintains secret data, and clients access that data through the API, CLI, or web interface. Vault encrypts all data in transit with TLS 1.2+ and at rest with 256-bit AES-GCM, and it can be upgraded to be FIPS 140-2 compliant.

In addition to being a static key-value store for information, Vault can also generate Google Cloud service accounts, provide pass-through encryption to Cloud KMS, and generate dynamic credentials like database passwords, TLS certificates, and SSH keys. These properties make Vault a popular secrets management choice for containerized workloads, especially on Kubernetes. Instead of sharing credentials across pods and services, Vault allows each service to authenticate individually and request its own unique credentials. Furthermore, secrets have a limited scope and lifetime, and they can be revoked early in the event of a data breach.

Running Vault on Kubernetes

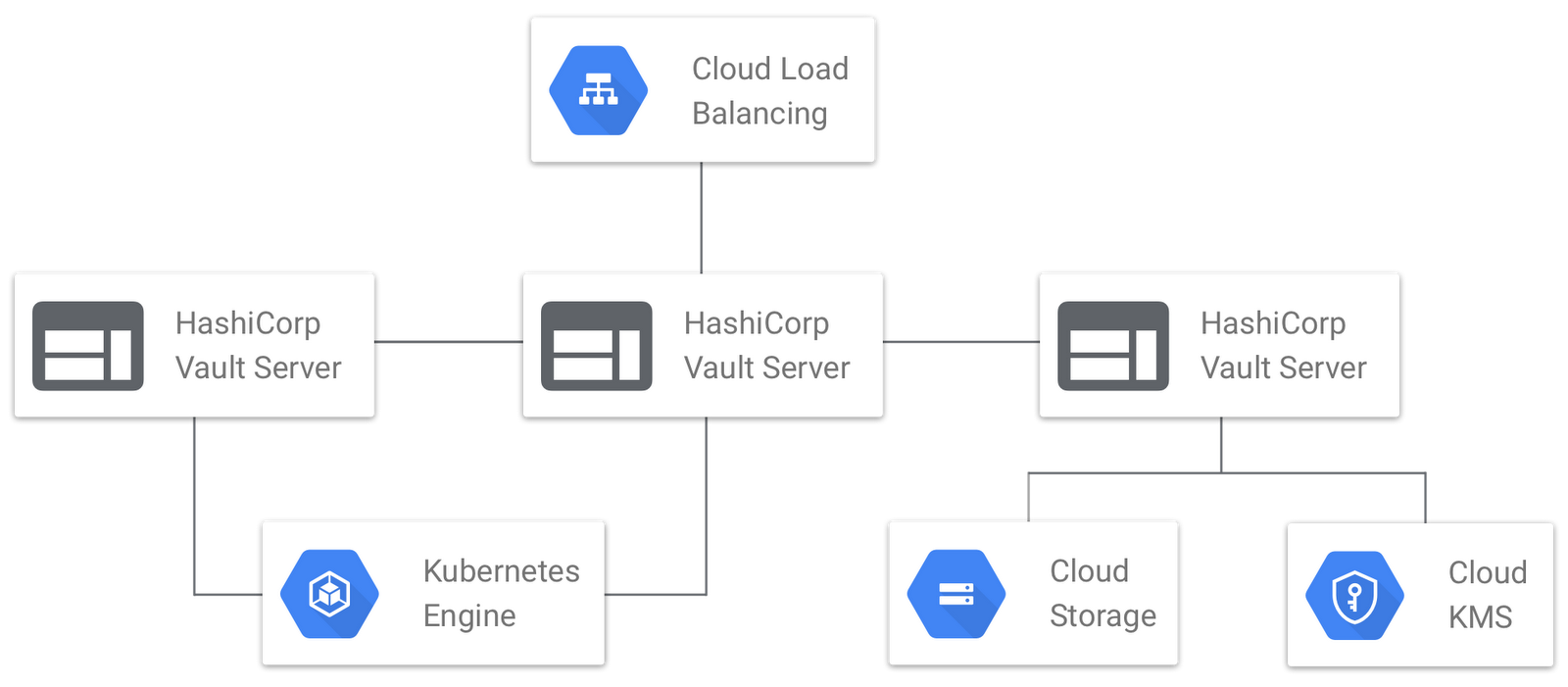

While you can run Vault on any platform, many users prefer to run Vault on Kubernetes because Kubernetes handles much of the operational work automatically. The diagram above shows a sample architecture for running Vault on Kubernetes.

If you choose to run Vault on Kubernetes on Google Cloud, here are some best practices to consider.

Choose a dedicated Kubernetes cluster - Always run Vault in a dedicated Kubernetes cluster, in a dedicated Google Cloud project, to which access is tightly controlled. No other pods or services should run in this cluster, and access to the cluster and project should be restricted to Vault administrators and security teams.

Use Cloud Storage or Cloud Spanner - Use the Cloud Storage backend or Cloud Spanner backend for durable storage of Vault's data. Both backends provide at least five nines of availability and support running Vault in High Availability mode. Both backends encrypt all data at rest. Cloud Storage can optionally use a customer-managed or customer-supplied encryption key. Cloud Spanner can perform bulk data transformations in a single transaction, making it a better fit for Vault Enterprise customers.

Auto-Unseal with Cloud KMS - Automatically initialize and unseal new Vault servers with Cloud KMS. A sidecar container initializes Vault, encrypts the unseal keys and initial root token with Cloud KMS, and persists the encrypted keys in the storage backend. When new Vault servers join the cluster, they are automatically started and unsealed with these keys. Combined with built-in Kubernetes semantics, this provides a highly available service with very little operational overhead.

Load balance at L4 - Delegate TLS termination and request management to Vault by using a regional load balancer (L4) in Google Cloud instead of a global load balancer (L7). This gives Vault full control over the TLS configuration and aids in using client certificates as a means for authentication.

Do not expose Vault via Kubernetes - Even though Vault is running in Kubernetes, do not expose Vault through Kubernetes service discovery. Instead, clients should connect to Vault via an IP/DNS address. Not only does this simplify the TLS configuration, but it makes Kubernetes an implementation detail, making it easier to perform upgrades.
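The auto-unseal practice above boils down to a small decision loop in the sidecar. The following Python is a minimal sketch of that loop's logic only, not the sidecar's actual code. It assumes the sidecar polls Vault's `sys/health` endpoint and reads the `initialized` and `sealed` fields from the JSON response; the function name `next_action` and the action strings are hypothetical, and the Cloud KMS and storage calls are left out.

```python
def next_action(health: dict) -> str:
    """Decide what an auto-unseal sidecar should do next.

    `health` is assumed to be the parsed JSON body of Vault's
    /v1/sys/health endpoint, which reports `initialized` and `sealed`.
    Missing fields are treated pessimistically (uninitialized, sealed).
    """
    if not health.get("initialized", False):
        # Brand-new cluster: initialize Vault, encrypt the unseal keys
        # and initial root token with Cloud KMS, persist the ciphertext.
        return "initialize"
    if health.get("sealed", True):
        # New or restarted server in an existing cluster: fetch the
        # encrypted keys, decrypt them with Cloud KMS, submit to Vault.
        return "unseal"
    # Initialized and unsealed: nothing to do until the next poll.
    return "wait"
```

Because the function is pure, the same check can run on every poll without side effects; only the `initialize` and `unseal` branches would need credentials for Cloud KMS and the storage backend.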

For a step-by-step tutorial for setting up Vault on Google Cloud, please see this repository on GitHub. For a one-command set of Terraform configurations to deploy Vault on Google Cloud, please see this repository instead.

Connecting to Vault from Kubernetes

It is common for pods and services running in Kubernetes to need access to secrets like database passwords or API keys. While Kubernetes offers built-in secrets functionality, it has some considerable drawbacks: most notably, Kubernetes secrets are not encrypted by default, and there is no concept of rotation or revocation built into the system. For this reason, many organizations use Vault to store and manage access to secrets in Kubernetes.

Recall from the previous section that we do not recommend exposing Vault via standard Kubernetes methods like service discovery. To clients, Vault is just a service that exists at an IP or DNS address. Connecting to Vault from within a Kubernetes pod or service does not require that Vault itself be running under Kubernetes.

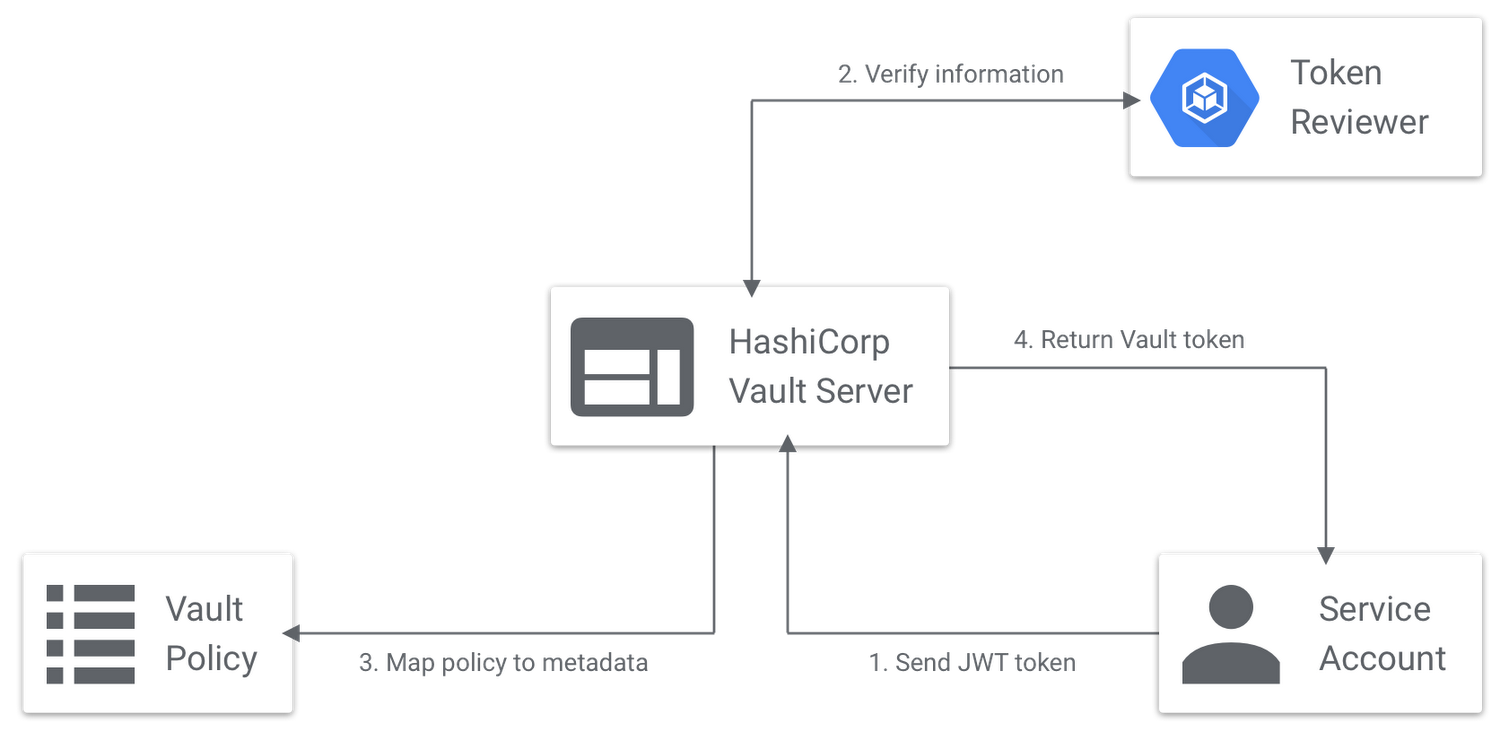

Vault acts as an identity broker, mapping credentials from third-party systems to policy and access internally. Users or machines supply information that is validated by Vault. Vault then uses metadata to assign policies to a token. For example, a user may provide a set of LDAP credentials to Vault. Vault validates those credentials against the LDAP server and maps the user's group membership in LDAP to policies in Vault.

In the case of Kubernetes, pods and services use the Vault Kubernetes auth method to authenticate by submitting their Service Account token to Vault. Vault verifies this token against the Kubernetes TokenReview API. Upon success, that pod's identity is mapped to policy and permissions in Vault.
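For applications that perform this flow themselves, the exchange is a single HTTP request. The sketch below uses only the Python standard library and rests on several assumptions: the Kubernetes auth method is mounted at its default path (`auth/kubernetes`), the pod's Service Account token is at the default mount location, and the Vault role name is one you have configured. The function names `build_login_request` and `login` are illustrative, not part of any Vault client library.

```python
import json
import urllib.request

# Default path where Kubernetes mounts a pod's Service Account token.
SA_TOKEN_PATH = "/var/run/secrets/kubernetes.io/serviceaccount/token"


def build_login_request(vault_addr: str, role: str, jwt: str) -> urllib.request.Request:
    """Build the login request for Vault's Kubernetes auth method.

    Assumes the auth method is mounted at the default path `kubernetes`.
    """
    body = json.dumps({"role": role, "jwt": jwt}).encode("utf-8")
    return urllib.request.Request(
        url=f"{vault_addr.rstrip('/')}/v1/auth/kubernetes/login",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def login(vault_addr: str, role: str, token_path: str = SA_TOKEN_PATH) -> str:
    """Exchange the pod's Service Account token for a Vault token."""
    with open(token_path, encoding="utf-8") as f:
        jwt = f.read().strip()
    # Vault validates the JWT with Kubernetes before answering.
    with urllib.request.urlopen(build_login_request(vault_addr, role, jwt)) as resp:
        payload = json.load(resp)
    # On success the Vault token is returned under auth.client_token.
    return payload["auth"]["client_token"]
```

A pod would call something like `login("https://vault.example.com:8200", "my-app")`, where both the address and the role name are placeholders. TLS verification uses Python's defaults, so a Vault server with a private CA would need a custom SSL context.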

While it is possible to build this integration directly into your application, there is a helpful open source init container that performs the Vault-Kubernetes auth flow for you. We recommend using this init container: it performs the auth flow automatically and saves the resulting authenticated Vault token in an in-memory volume mount, where other applications and services in the pod can read it.
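Once the init container has written the token, an application container only needs to read that file and send the token with each request. The following is a rough sketch under stated assumptions: the token file location is whatever path your pod spec mounts the shared in-memory volume at (passed in here as `token_file`, with no default because the path is deployment-specific), and the secret path is a placeholder. The helper names are hypothetical.

```python
import json
import urllib.request


def build_read_request(vault_addr: str, secret_path: str, token: str) -> urllib.request.Request:
    """Build an authenticated read request for a path in Vault's HTTP API."""
    return urllib.request.Request(
        url=f"{vault_addr.rstrip('/')}/v1/{secret_path.lstrip('/')}",
        # Vault accepts the client token in the X-Vault-Token header.
        headers={"X-Vault-Token": token},
        method="GET",
    )


def read_secret(vault_addr: str, secret_path: str, token_file: str) -> dict:
    """Read a secret using the token saved by the init container."""
    with open(token_file, encoding="utf-8") as f:
        token = f.read().strip()
    with urllib.request.urlopen(build_read_request(vault_addr, secret_path, token)) as resp:
        # Vault wraps the result in a top-level "data" object. For a
        # version 2 key-value mount, the values sit one level deeper,
        # under data["data"].
        return json.load(resp)["data"]
```

Reading the token from the file on each call, rather than caching it at startup, keeps the application working if a sidecar later refreshes the token in the shared volume.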

Vault + Kubernetes, working together

We are very excited to see Vault continue to gain adoption in the Kubernetes community, and we are committed to making Vault users successful on Google Cloud. For a deeper dive into these materials, see our Using Vault to Secure Kubernetes talk from Google Cloud NEXT. Be sure to follow us on Twitter if you have any questions or comments!