Megatrends drive cloud adoption—and improve security for all

Phil Venables

VP, TI Security & CISO, Google Cloud

Editor's note: This blog was updated on December 14, 2023, to add AI as the ninth cloud security megatrend.

We are often asked if the cloud is more secure than on-premise infrastructure. The quick answer is that, in general, it is. The complete answer is more nuanced and is grounded in a series of cloud security “megatrends” that drive technological innovation and improve the overall security posture of cloud providers and customers.

An on-prem environment can, with a lot of effort, have the same default level of security as a reputable cloud provider’s infrastructure. Conversely, a weak cloud configuration can give rise to many security issues. But in general, the base security of the cloud coupled with a suitably protected customer configuration is stronger than most on-prem environments.

Google Cloud’s baseline security architecture adheres to Zero Trust principles — the idea that every network, device, person, and service is untrusted until it proves itself. It also relies on defense in depth, with multiple layers of controls and capabilities to protect against the impact of configuration errors and attacks.

At Google Cloud, we prioritize security by design and have a team of security engineers who work continuously to deliver secure products and customer controls. We also take advantage of industry megatrends that increase cloud security further, outpacing the security of on-prem infrastructure.

These nine megatrends compound the security advantages of the cloud compared with on-prem environments (or at least those that are not part of a distributed or trusted partner cloud). IT decision-makers should pay close attention to these megatrends because they are not transient issues to be ignored once the new year rolls around: they guide the development of cloud security and technology, and will continue to do so for the foreseeable future.

Let’s look at these megatrends in more depth.

Economy of scale: Decreasing marginal cost of security

Public clouds are of sufficient scale to implement levels of security and resilience that few organizations have previously constructed. At Google, we run a global network and build our own systems, networks, storage, and software stacks. We equip this infrastructure with a level of default security that has not been seen before: Titan security chips that assure a secure boot, pervasive data-in-transit and data-at-rest encryption, and confidential computing nodes that encrypt data even while it’s in use.

We prioritize security, of course, but prioritizing security becomes easier and cheaper because the cost of an individual control at such scale decreases per unit of deployment. As the scale increases, the unit cost of control goes down. As the unit cost goes down, it becomes cheaper to put those increasing baseline controls everywhere.

Finally, where there is necessary incremental cost to support specific configurations, enhanced security features, and services to support customer security operations and updates, then even the per-unit cost of that will decrease. It may be chargeable but it is still a lower cost than on-prem services whose economics are going in the other direction. Cloud is, therefore, the strategic epitome of raising the security baseline by reducing the cost of control. The measurable level of security can’t help but increase.

Shared fate: The flywheel of cloud expansion

The long-standing shared responsibility model is conceptually correct. The cloud provider offers a secure base infrastructure (security of the cloud) and the customer configures their services on that in a secure way (security in the cloud). But, if the shared responsibility model is used more to allocate responsibility when incidents occur and less as a means of understanding mutual collective responsibility, then we are not living up to mutual expectations or responsibility.

Taking a broader view of a “shared responsibility” model, we should use such a model to create a mutually beneficial shared fate. We’re in this together. We know that if our customers are not secure, then we as cloud providers are collectively not successful. This shared fate extends beyond just Google Cloud and our customers—it affects all the clouds because a trust issue in one impacts the trust in all. If that trust issue makes the cloud "look bad," then current and potential future customers might shy away from the cloud, which ultimately puts them in a less-secure position.

This is why our security mission is a triad of Secure the Cloud (not only Google Cloud), Secure the Customer (shared fate) and Secure the Planet (and beyond).

Further, “shared fate” goes beyond just the reality of shared consequences. We view this as a philosophy of deeply caring about customer security, which gives rise over time to elements like:

- Secure-by-default configurations. Our default configurations ensure security basics have been enabled and that all customers start from a high security baseline, even if some customers change that later.

- Secure blueprints. Highly opinionated configurations for assemblies of products and services in secure-by-default ways, with actual configuration code, so customers can more easily bootstrap a secure cloud environment.

- Secure policy hierarchies. Setting policy intent at one level in an application environment should automatically configure down the stack so there are no surprises or additional toil in lower-level security settings.

- Consistent availability of advanced security features. Providing advanced features across a product suite, and making them available for new products at launch, is part of the balancing act between faster launches and the need for security consistency across the platform. We reduce the risks customers face by consistently providing advanced security features.

- High assurance attestation of controls. We provide this through compliance certifications, audit content, regulatory compliance support, and configuration transparency for ratings and insurance coverage from partners such as our Risk Protection Program.
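The idea behind secure policy hierarchies can be sketched in a few lines of Python. This is an illustration only, not how any particular cloud implements it: the `Node` class, the setting names, and the rule that an ancestor's setting always wins are assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Node:
    """One level in a hypothetical resource hierarchy (org, folder, project)."""
    name: str
    parent: Optional["Node"] = None
    policy: dict = field(default_factory=dict)  # settings declared at this level

    def effective_policy(self) -> dict:
        """Merge policies from the root down; ancestors win on conflicts."""
        inherited = self.parent.effective_policy() if self.parent else {}
        # Local settings fill gaps but cannot override what an ancestor set.
        return {**self.policy, **inherited}


# Hypothetical hierarchy: intent is set once at the top.
org = Node("org", policy={"require_encryption": True, "public_access": False})
team = Node("team-folder", parent=org, policy={"log_retention_days": 90})
project = Node("project", parent=team, policy={"public_access": True})

print(project.effective_policy())
```

Here the project's attempt to enable public access is overridden by the organization-level setting, so the lower level inherits the intended baseline without extra configuration. Real policy systems typically offer richer override rules than this simple "ancestor wins" merge.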

Shared fate drives a flywheel of cloud adoption. Visibility into the presence of strong default controls and transparency into their operation increase customer confidence, which in turn drives more workloads coming onto cloud. The presence of and potential for more sensitive workloads in turn inspires the development of even stronger default protections that benefit customers.

Healthy competition: The race to the top

The pace and extent of security feature enhancement to products is accelerating across the industry. This massive, global-scale competition to keep increasing security in tandem with agility and productivity is a benefit to all.

For the first time in history, we have companies with vast resources working hard to deliver better security, as well as more precise and consistent ways of helping customers manage security. While some are ahead of others, perhaps sustainably so, what is consistent is that cloud will always lead on-prem environments, which have less of a competitive impetus to provide progressively better security.

On-prem may not ever go away completely, but cloud competition drives security innovation in a way that on-prem hasn’t and won’t.

Cloud as the digital immune system: Benefit for the many from the needs of the few(er)

Security improvements in the cloud happen for several reasons:

- The cloud provider’s large number of security researchers and engineers postulate a need for an improvement based on a deep theoretical and practical knowledge of attacks.

- A cloud provider with significant visibility on the global threat landscape applies knowledge of threat actors and their evolving attack tactics to drive not just specific new countermeasures but also means of defeating whole classes of attacks.

- A cloud provider deploys red teams and world-leading vulnerability researchers to constantly probe for weaknesses that are then mitigated across the platform.

- The cloud provider’s software engineers often incorporate and curate open-source software and often support the community to drive improvements for the benefit of all.

- The cloud provider embraces vulnerability discovery and bug bounty programs to attract many of the world’s best independent security researchers.

- And, perhaps most importantly, the cloud provider partners with many of its customer security teams, who have a deep understanding of their own security needs, to drive security enhancements and new features across the platform.

This is a vast, global forcing function of security enhancements which, given the other megatrends, is applied relatively quickly and cost-effectively.

If the customer’s organization cannot apply this level of resources, and realistically even some of the biggest organizations can’t, then an optimal security strategy is to embrace every security feature update the cloud provides to protect networks, systems, and data. It’s like tapping into a global digital immune system.

Software-defined infrastructure: Continuous controls monitoring vs. policy intent

One source of the cloud’s comparative advantage over on-prem is that it is a software-defined infrastructure. This is a particular advantage for security because configuration in the cloud is inherently declarative and programmatic. It also means that configuration code can be overlaid with embedded policy intent (policy-as-code and controls-as-code).

The customer validates their configuration by analysis, and then can continuously assure that configuration corresponds to reality. They can model changes and apply them with less operating risk, permitting phased-in changes and experiments. As a result, they can take more aggressive stances to apply tighter controls with less reliability risk. This means they can easily add more controls to their environment and update it continuously.

In practical terms, software-defined infrastructure can be vital to cloud security because it can help you verify that the configuration an IT team is using exactly corresponds to its specific security requirements. Policy-as-code and controls-as-code can help prevent breaches that occur due to a control not being deployed when it should have been.
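A minimal Python sketch shows what policy-as-code and continuous assurance might look like. The resource names, the settings, and the single "everything must be encrypted" rule are hypothetical, and the observed state stands in for whatever an inventory scan would report.

```python
# Declared (intended) configuration, as it might live in version control.
DECLARED = {
    "bucket-logs": {"encrypted": True, "public": False},
    "bucket-site": {"encrypted": True, "public": True},
}

# Observed configuration, as a hypothetical inventory scan might report it.
OBSERVED = {
    "bucket-logs": {"encrypted": True, "public": True},  # drifted from intent
    "bucket-site": {"encrypted": True, "public": True},
}


def policy_violations(config):
    """Controls-as-code: every resource must be encrypted."""
    return sorted(name for name, s in config.items() if not s.get("encrypted"))


def drift(declared, observed):
    """Return {resource: {setting: (declared, observed)}} for every mismatch."""
    report = {}
    for name, want in declared.items():
        have = observed.get(name, {})
        diffs = {k: (v, have.get(k)) for k, v in want.items() if have.get(k) != v}
        if diffs:
            report[name] = diffs
    return report


print(policy_violations(DECLARED))  # validate the intended configuration
print(drift(DECLARED, OBSERVED))    # check that reality matches intent
```

The first check validates the declared configuration by analysis before it is applied. The second compares it against observed state, and could be run on a schedule to flag a control that was declared but is no longer in effect.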

Software-defined infrastructure is another example of where cloud security aligns fully with business and technology agility.

The BeyondProd model and SLSA framework are prime examples of how our software-defined infrastructure has helped improve cloud security. BeyondProd and the BeyondCorp framework apply Zero Trust principles to protecting cloud services. Just as not all users are in the same physical location or using the same devices, developers do not all deploy code to the same environment. BeyondProd enables microservices to run securely with granular controls in public clouds, private clouds, and third-party hosted services.

The SLSA framework applies this approach to the complex nature of modern software development and deployment. Developed in collaboration with the Open Source Security Foundation, the SLSA framework formalizes criteria for software supply chain integrity. That’s no small hill to climb, given that today’s software is made up of code, binaries, networked APIs and their assorted configuration files.

Managing security in a software-defined infrastructure means the customer can intrinsically deliver continuous controls monitoring and constant inventory assurance, and can operate at an “efficient frontier” of a highly secure environment without incurring significant operating risks.

Increasing deployment velocity

Cloud providers use a continuous integration/continuous deployment model. This is a necessity for enabling innovation through frequent improvements, including security updates supported by a consistent version of products everywhere, as well as achieving reliability at scale.

Cloud security and other mechanisms are API based and uniform across products, which enables the management of configuration in programmatic ways—also known as configuration-as-code. When configuration-as-code is combined with the overall nature of cloud being a software-defined infrastructure, it enables customers to implement CI/CD approaches for software deployment and configuration to enable consistency in their use of the cloud.

This automation and increased velocity decrease the time customers spend waiting for fixes and features to be applied. That includes the speed of deploying security features and updates, and permits fast roll-back for any reason. Ultimately, this means that the customer can move even faster yet with demonstrably less risk: having your cake and eating it too, as it were. Overall, we find deployment velocity to be a critical tool for strong security.

Simplicity: Cloud as an abstraction machine

A common concern about moving to the cloud is that it’s too complex. Admittedly, starting from scratch and learning all the features the cloud offers may seem daunting. Yet even today’s feature-rich cloud offerings are much simpler than prior on-prem environments—which are far less robust.

The perception of complexity comes from people being exposed to the scope of the whole platform, despite more abstraction of the underlying platform configuration. In on-prem environments, there are large teams of network engineers, system administrators, system programmers, software developers, security engineering teams, storage admins, and many more roles and teams. Each has their own domain or silo to operate in.

That loose-flying collection of technologies, with its myriad configuration options and incompatibilities, requires a degree of artisanal engineering that represents more complexity and less security and resilience than customers will encounter in the cloud.

Cloud is only going to get simpler because the market rewards the cloud providers for abstraction and autonomic operations. In turn, this permits more scale and more use, creating a relentless hunt for abstraction. Like our digital immune system analogy, the customer should see the cloud as an abstraction pattern-generating machine: It takes the best operational innovations from tens of thousands of customers, and assimilates them for the benefit of everyone.

The increased simplicity and abstraction permit more explicit assertion of security policy in more precise and expressive ways applied in the right context. Simply put, simplicity removes more potential surprise—and security issues are often rooted in surprise.

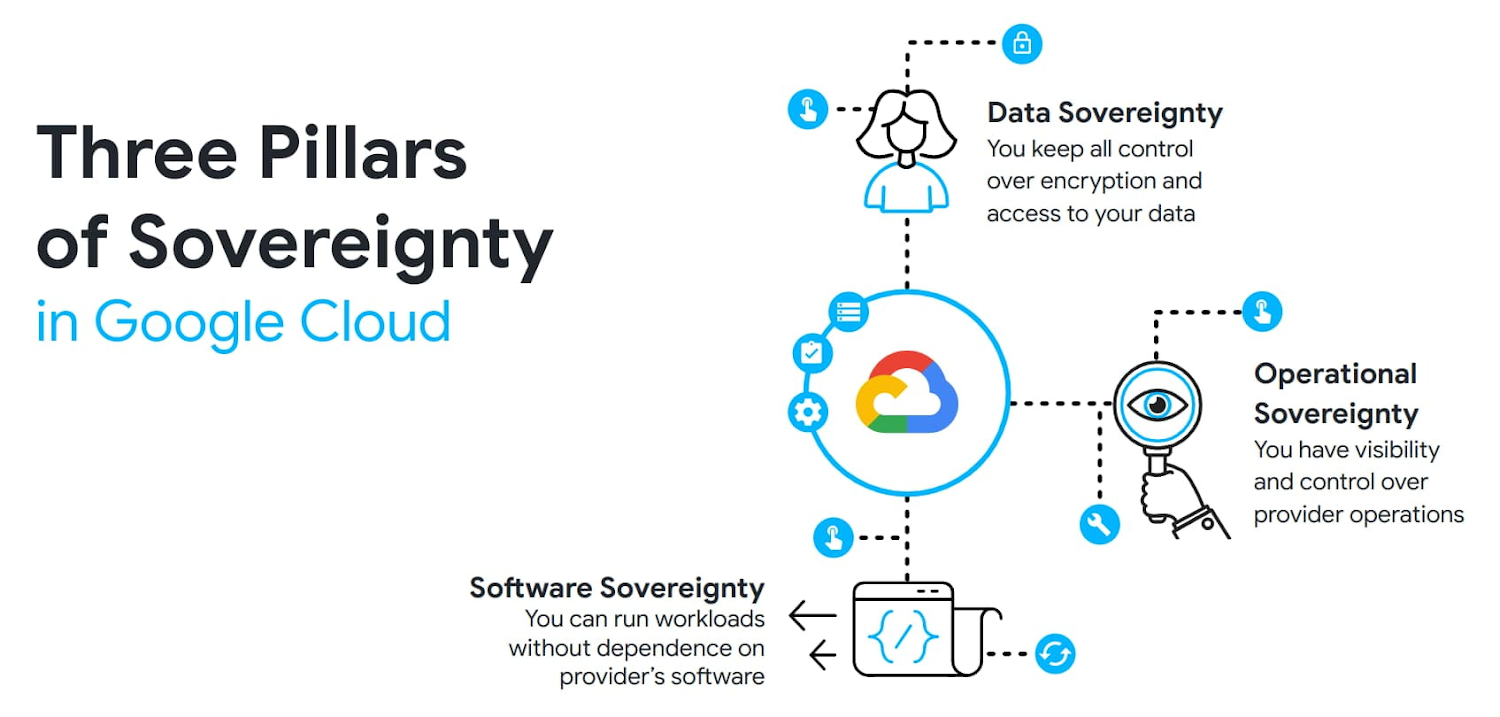

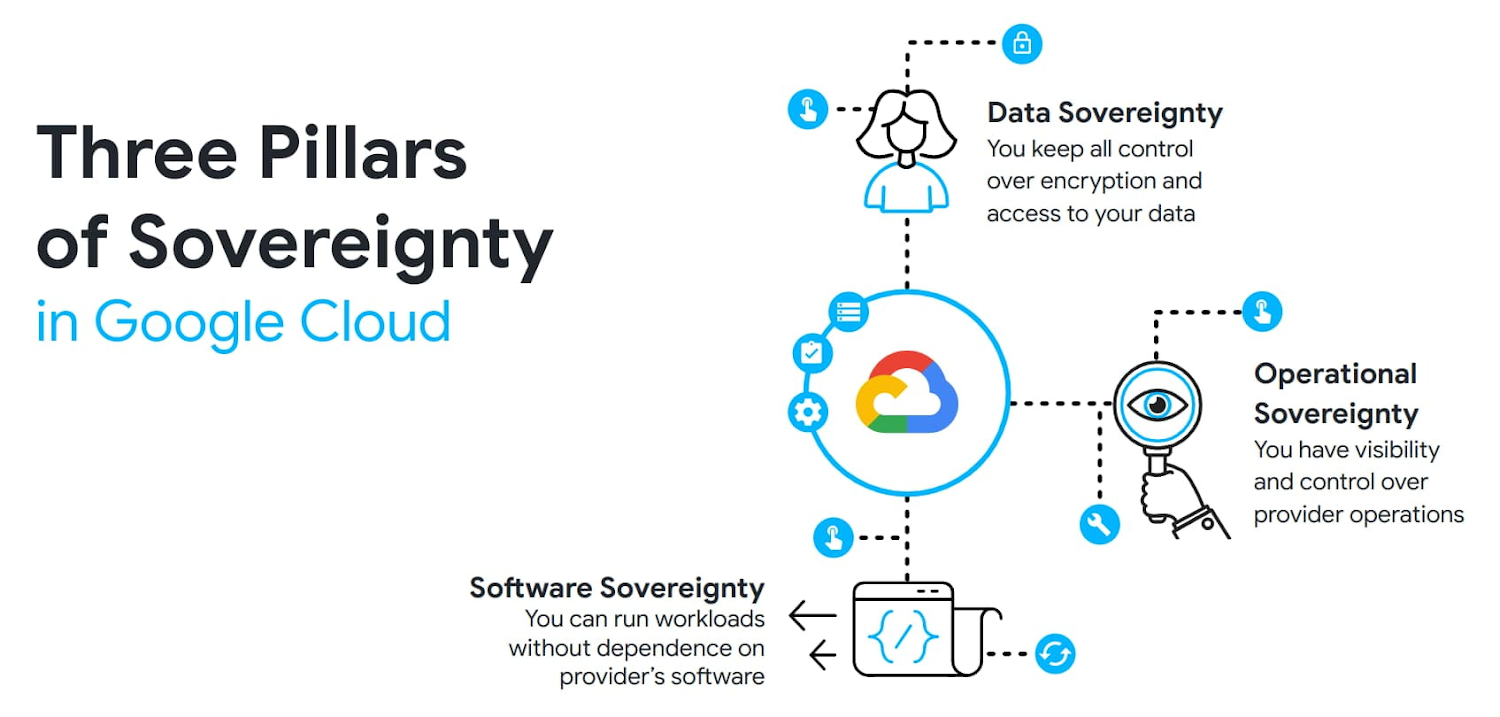

Empowering digital sovereignty with cloud security

Digital sovereignty is an organization’s intention to retain control over its data and how that data is stored, processed, and managed when using third-party services, including cloud providers.

Organizations should feel — and actually have — control over their data. When controls have been designed well, they should encourage organizations to use the cloud and benefit from all that the cloud offers.

We see the control of encryption as vital to addressing sovereignty concerns, and have engineered leading encryption solutions that enable customers to maintain control of who can access their cloud data.

We take an expansive view of sovereignty requirements encompassing data, operations, and technology considerations.

- Data sovereignty provides customers with a mechanism to prevent the provider from accessing their data, approving access only for specific provider behaviors that customers think are necessary. Examples of customer controls provided by Google Cloud include storing and managing encryption keys outside the cloud, giving customers the power to only grant access to these keys based on detailed access justifications, and protecting data-in-use. With these features, the customer is the ultimate arbiter of access to their data.

- Operational sovereignty provides customers with assurances that the people working at a cloud provider cannot compromise customer workloads. The customer benefits from the scale of a multi-tenant environment while preserving control similar to a traditional on-prem environment. Examples of these controls include restricting the deployment of new resources to specific provider regions, and limiting support personnel access based on predefined attributes such as citizenship or a particular geographic location.

- Software sovereignty provides customers with assurances that they can control the availability of their workloads and run them wherever they want, without being dependent on or locked-in to a single cloud provider. Software sovereignty includes the ability to survive events that require providers to quickly change where their workloads are deployed and what level of outside connection is allowed.
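Two of these controls, key release based on access justifications and region restrictions on new resources, can be sketched as simple customer-side policy checks. Everything below is an assumption made for illustration: the justification labels, the function names, and the chosen regions are not taken from any provider API.

```python
from dataclasses import dataclass

# Hypothetical customer-defined policy; labels are illustrative only.
ALLOWED_JUSTIFICATIONS = {"customer_initiated_support"}
ALLOWED_REGIONS = {"europe-west3", "europe-west4"}


@dataclass(frozen=True)
class KeyRequest:
    requester: str
    justification: str


def approve_key_access(req: KeyRequest) -> bool:
    """Data sovereignty: release the externally held key only for approved reasons."""
    return req.justification in ALLOWED_JUSTIFICATIONS


def approve_deployment(region: str) -> bool:
    """Operational sovereignty: allow new resources only in approved regions."""
    return region in ALLOWED_REGIONS


print(approve_key_access(KeyRequest("support-engineer", "customer_initiated_support")))
print(approve_key_access(KeyRequest("batch-job", "unspecified")))
print(approve_deployment("europe-west3"))
print(approve_deployment("us-central1"))
```

In this sketch the customer's policy, not the provider's, is the final check. A request without an approved justification never receives the key, and a deployment outside the approved regions is refused.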

The global footprint of many cloud providers means that cloud can more easily meet national or regional deployment needs. By engaging with customers and policymakers across these pillars, we can provide solutions that address their requirements, while optimizing for additional considerations like functionality, cost, infrastructure consistency, and developer experience.

While designing a digital sovereignty strategy that balances control and innovation can be challenging, this overall approach also provides a means for organizations (and groups of organizations that make up a sector or national critical infrastructure) to manage concentration risks. They can do this either by relying on the increased regional and zonal isolation mechanisms in the cloud, or through improved means of configuring resilient multicloud services. This is also why the commitment to open source and open standards is so important.

Innovating to address digital sovereignty requirements is important to advance digital transformation and technological creativity, and to join in the benefits of the cloud.

Artificial intelligence: Augmentation to help manage threats, reduce toil, and scale talent

What started as pattern recognition evolved into machine learning, and that has since become a whole range of artificial intelligence tools including generative AI and foundation models. AI has been the sleeper megatrend, first slowly and now rapidly becoming an integral part of what we do, broadly in IT and especially in security, where the challenge of stopping threats has required creative adaptation. Like cloud computing or any new technology, AI changes the risk landscape.

For all of its uses, AI is assuredly a cloud security megatrend that can increasingly fuel and accelerate all the other megatrends. While we also expect to see AI help attackers, AI should give defenders an advantage because AI is good at amplifying capability based on data — and defenders have more data.

For example, Google Cloud recently launched Duet AI for Security Operations, a generative AI tool that helps security teams detect, investigate, and respond to threats — including by analyzing large amounts of data in seconds, reducing time-consuming manual reviews, and improving response time.

In the long run, we believe that AI can help cloud security more than on-premises security, and can tap into the stronger virtuous circle of security improvement. Since generative AI models broadly become more useful when they’re trained on larger sets of data, there’s an immediate scaling problem that gen AI faces if it can only be trained from on-premises data. Clouds simply can provide more data to train a foundation model on, and security-focused gen AI will become significantly better when it relies on not just “big data” but “massively huge data available in the cloud.”

Security gen AI foundation models such as Google Cloud’s fit squarely in the flywheel of security innovation. They depend on cloud technology, and they can also make cloud security better. Like navigating the ocean on a sailboat when there’s no wind, you could theoretically do it on-premises, but doing so would make it much harder to use and impossible to rapidly improve.

Generative AI has the potential to revolutionize how we use technology, especially in cybersecurity. We are hopeful that it can significantly lighten the burden of, and possibly even eliminate, three of security’s thornier problems: toil, threat overload, and the talent gap.

Enabling progress in AI means focusing on the opportunities it presents, the responsibilities we bear as we develop it, and securing AI from malicious use and hacking.

The bottom line is that cloud computing megatrends will propel security forward faster, for less cost and less effort than any other security initiative. With the help of these megatrends, the advantage of cloud security over on-prem is inevitable.