Inside the eDreams ODIGEO Data Mesh — a platform engineering view

Carlos Saona

Chief Architect, eDreams ODIGEO

Luis Velasco

Data and AI Specialist

Editor’s note: Headquartered in Barcelona, eDreams ODIGEO (or eDO for short) is one of the biggest online travel companies in the world, serving more than 20 million customers in 44 countries. Read on to learn about how they modernized their legacy data warehouse environment to a data mesh built on BigQuery.

Data analytics has always been an essential part of eDO's culture. To cope with exponential business growth and the shift to a customer-centered approach, eDO evolved its data architecture significantly over the years, transitioning from a centralized data warehouse to a modern data mesh architecture powered by BigQuery. In this blog post, a companion to a technical customer case study, we cover key insights from eDO’s data mesh journey.

Evolution of data platform architecture at eDO

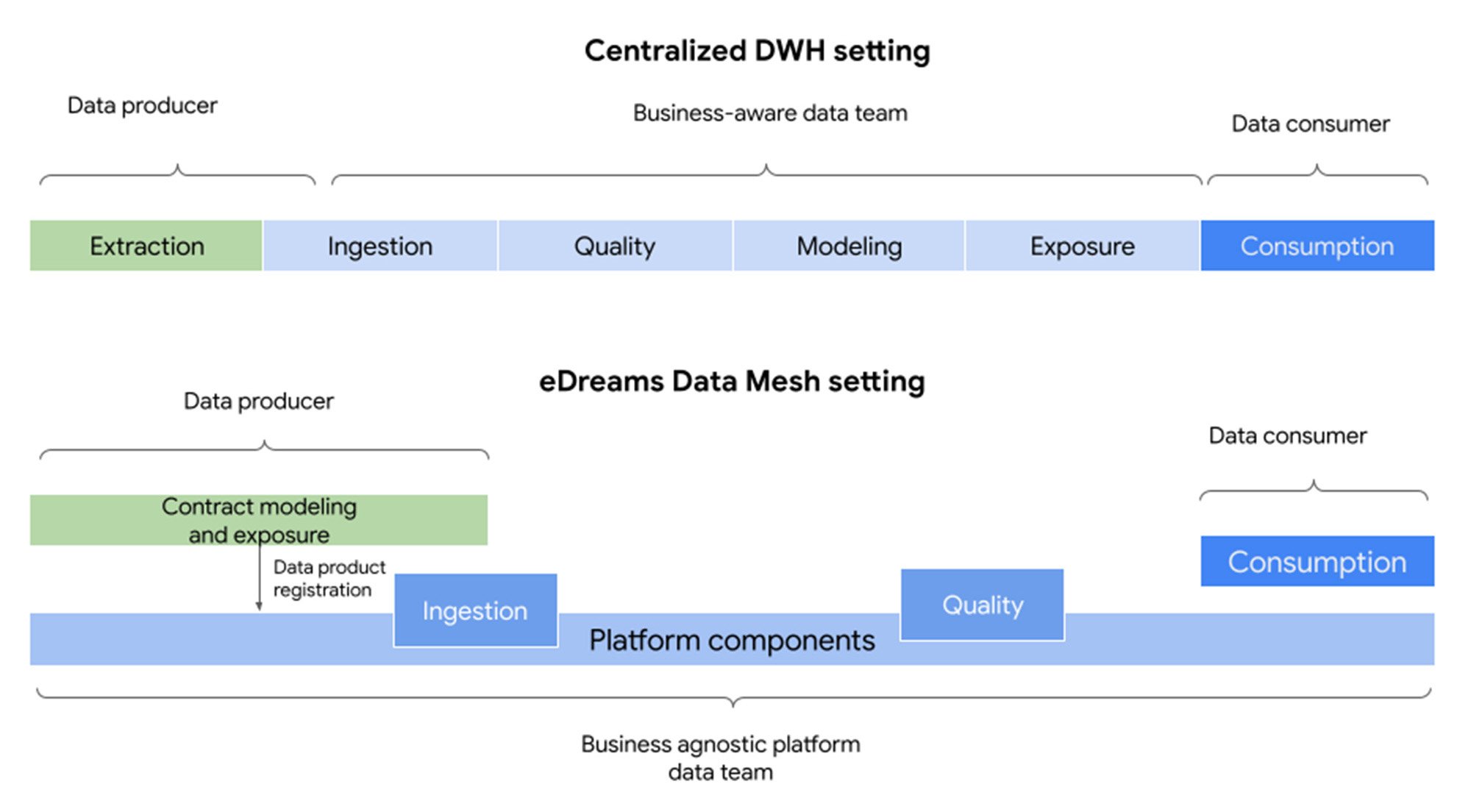

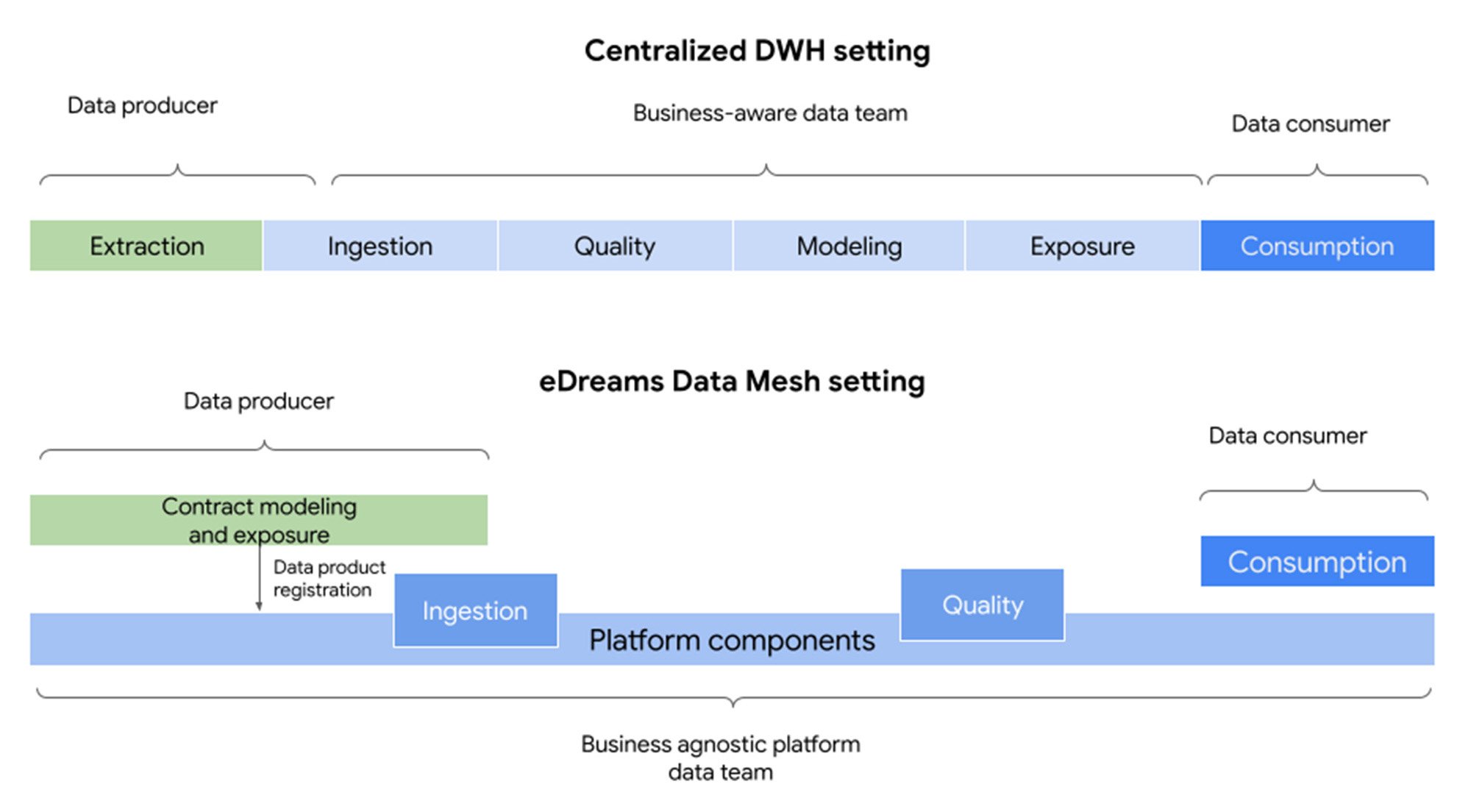

Initially, eDO had a centralized data team that managed a data warehouse. Data was readily available and trusted. However, as the company grew, adding new data and adapting to increasing complexity became a bottleneck. Some analytics teams built their own pipelines and silos, which improved data availability and team autonomy but introduced challenges with data trust, quality, and ownership.

As teams and complexity grew, data silos became intolerable. AI and data science needs only made the need for a unified data model more pressing. In 2019, building a centralized model with a data lake would have been the natural next step, but it would have been at odds with the distributed e-commerce platform, which is organized around business domains and autonomous teams — not to mention the pressure of data changes imposed by its very high development velocity.

Instead, eDO embraced the data mesh paradigm, deploying a business-agnostic data team and a distributed data architecture aligned with the platform and teams. The data team no longer “owns” data, but instead focuses on building self-service components for producers and consumers.

The eDO data mesh and data products architecture

In the end, eDO implemented a business-agnostic data platform that operates in self-service mode. Like a traditional centralized data team, this approach isolates development teams from data infrastructure concerns. Unlike a traditional centralized data team, it also isolates the data platform team from any business knowledge, which prevents the data team from becoming overloaded by the ever-increasing functional complexity of the system.

The biggest challenges for adopting data mesh are cultural, organizational, and ownership-related. However, engineering challenges are also significant. Data mesh systems must be able to handle large volumes of data, link and join datasets from different business domains efficiently, manage multiple versions of datasets, and ensure that documentation is available and trustworthy. Additionally, these systems must minimize maintenance costs and scale independently of the number of teams and domains using them.

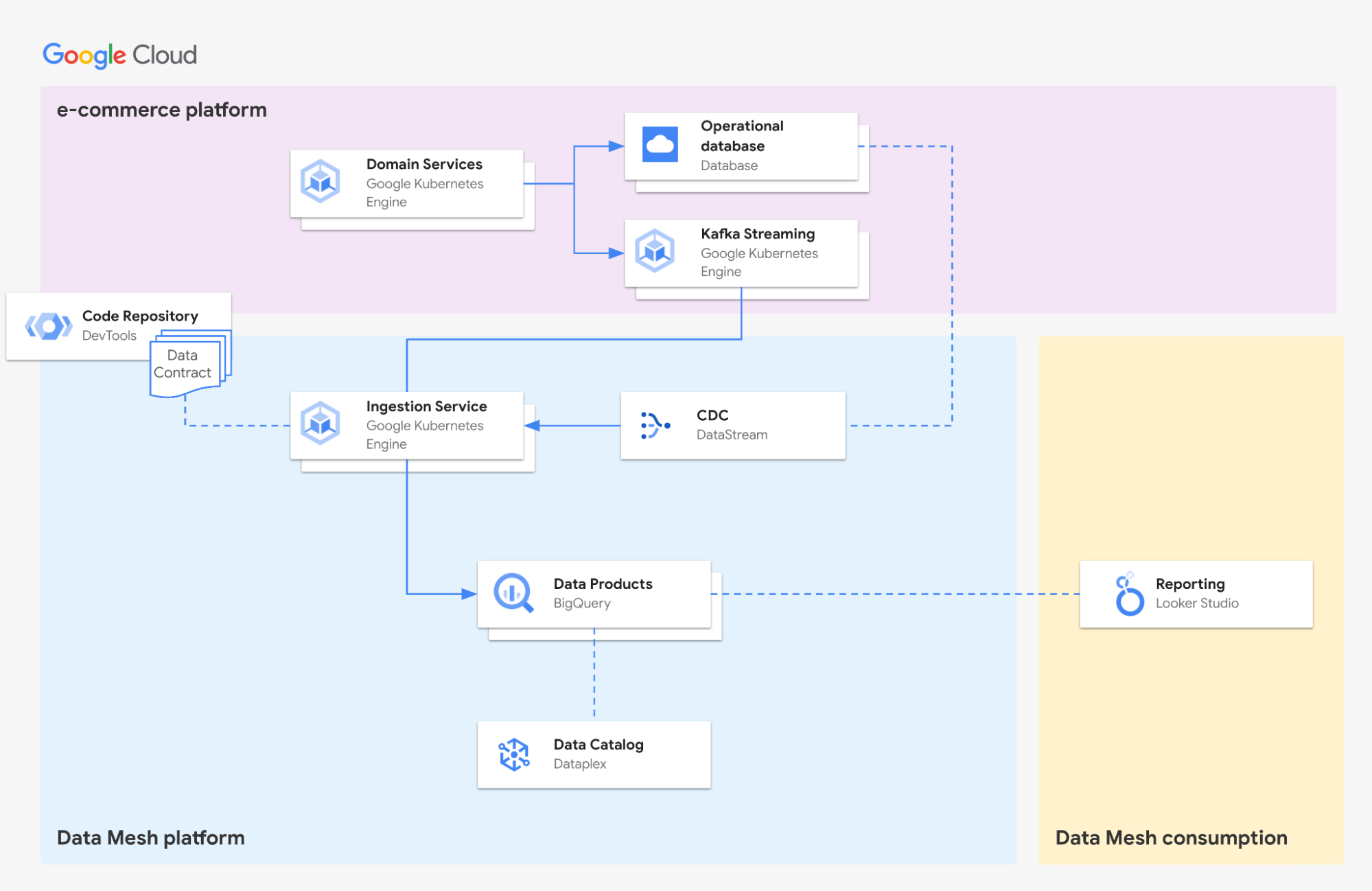

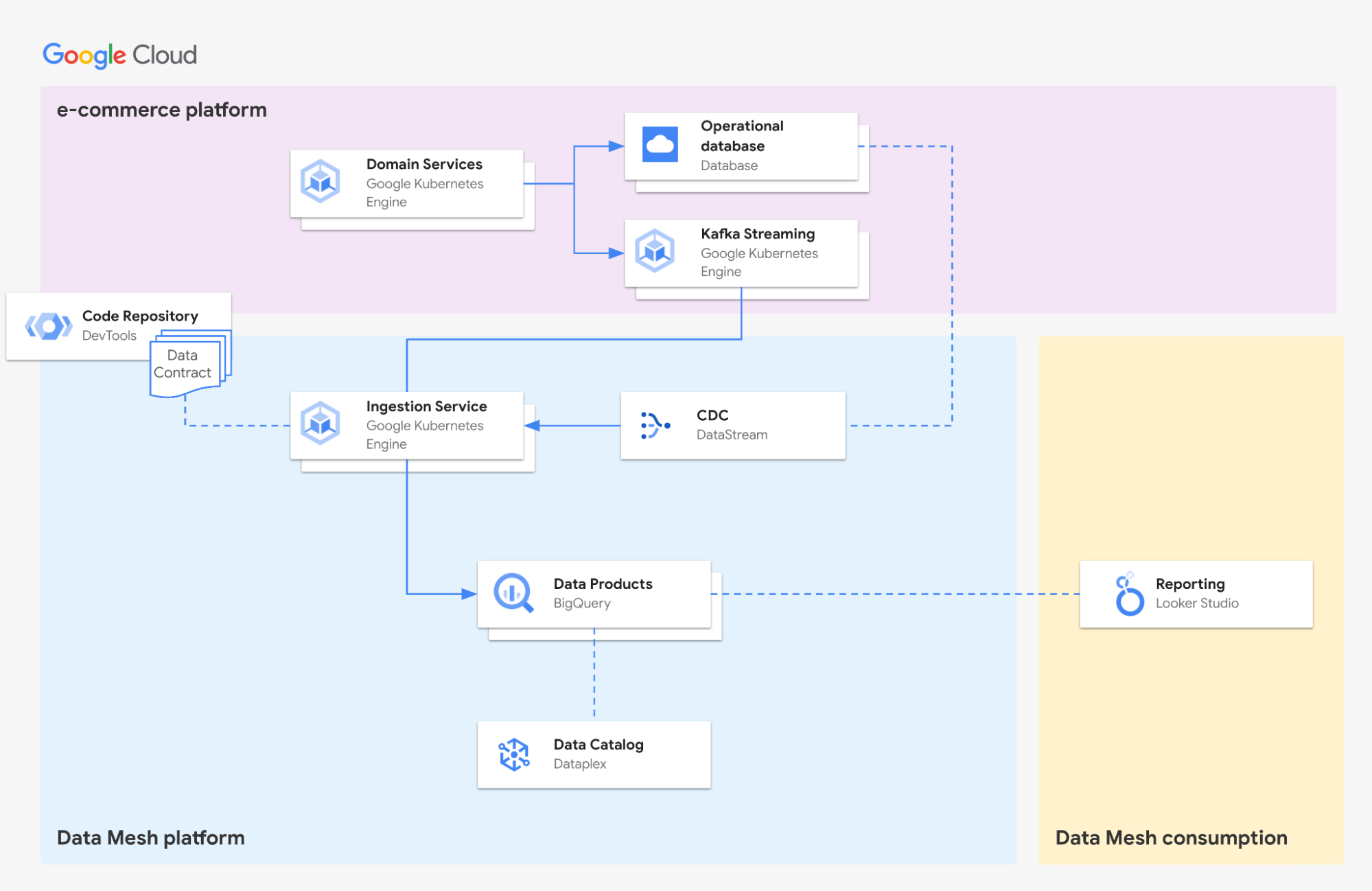

To build its data mesh, eDO chose a number of Google Cloud products, with BigQuery at the heart of the architecture. These technologies were chosen for their serverless nature, support for standard protocols, and ease of integration with the existing e-commerce platform.

One key enabler of this architecture is the deployment of data contracts. These contracts are:

- Owned exclusively by a single microservice and its team

- Implemented declaratively using Avro and YAML files

- Documented in the Avro schema

- Managed in the source code repository of the owning microservice

Declarative contracts enable the automation of data ingestion and quality checks without requiring knowledge of business domains. Exclusive ownership of contracts by microservices and human-readable documentation in a git repository help producers take ownership of their contracts.
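To make the idea concrete, here is a minimal sketch of how a declarative contract can drive a generic quality check with no domain knowledge. It is not eDO's implementation: the `BookingCreated` record, its namespace and fields, and the `matches` and `validate` helpers are all hypothetical, and the check covers only a small subset of Avro (flat records, a few primitive types, and unions of them), using nothing beyond the Python standard library.

```python
import json

# Hypothetical contract: an Avro record schema (Avro schemas are JSON).
# The record name, namespace, and fields are invented for illustration.
CONTRACT = json.loads("""
{
  "type": "record",
  "name": "BookingCreated",
  "namespace": "com.example.booking",
  "doc": "Emitted once when a customer completes a booking.",
  "fields": [
    {"name": "booking_id", "type": "string",
     "doc": "Unique booking identifier."},
    {"name": "amount_cents", "type": "long",
     "doc": "Total price in cents."},
    {"name": "promo_code", "type": ["null", "string"], "default": null,
     "doc": "Optional promotion code."}
  ]
}
""")

# Python checks for a small subset of Avro primitive types.
# Integer ranges (32-bit int vs. 64-bit long) are not enforced here.
PRIMITIVE_CHECKS = {
    "null": lambda v: v is None,
    "boolean": lambda v: isinstance(v, bool),
    "string": lambda v: isinstance(v, str),
    "int": lambda v: isinstance(v, int) and not isinstance(v, bool),
    "long": lambda v: isinstance(v, int) and not isinstance(v, bool),
    "double": lambda v: isinstance(v, float),
}


def matches(avro_type, value):
    """Return True if value conforms to a primitive type or a union of them."""
    if isinstance(avro_type, list):  # Avro union, e.g. ["null", "string"]
        return any(matches(t, value) for t in avro_type)
    if not isinstance(avro_type, str):  # nested/complex types: out of scope
        return False
    check = PRIMITIVE_CHECKS.get(avro_type)
    return check is not None and check(value)


def validate(record, schema):
    """Return a list of violations; an empty list means the record conforms."""
    errors = []
    for field in schema["fields"]:
        name = field["name"]
        if name not in record:
            # A field may be omitted only if the contract declares a default.
            if "default" not in field:
                errors.append(f"missing required field: {name}")
            continue
        if not matches(field["type"], record[name]):
            errors.append(f"bad type for field: {name}")
    return errors
```

Calling `validate({"booking_id": "b-1", "amount_cents": 1999}, CONTRACT)` returns an empty list, while `validate({"amount_cents": "x"}, CONTRACT)` reports the missing `booking_id` and the wrongly typed `amount_cents`. The checker reads everything it needs from the schema, so the same code could serve any domain's contract. That is the property that lets a business-agnostic platform team automate checks on data it does not own.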

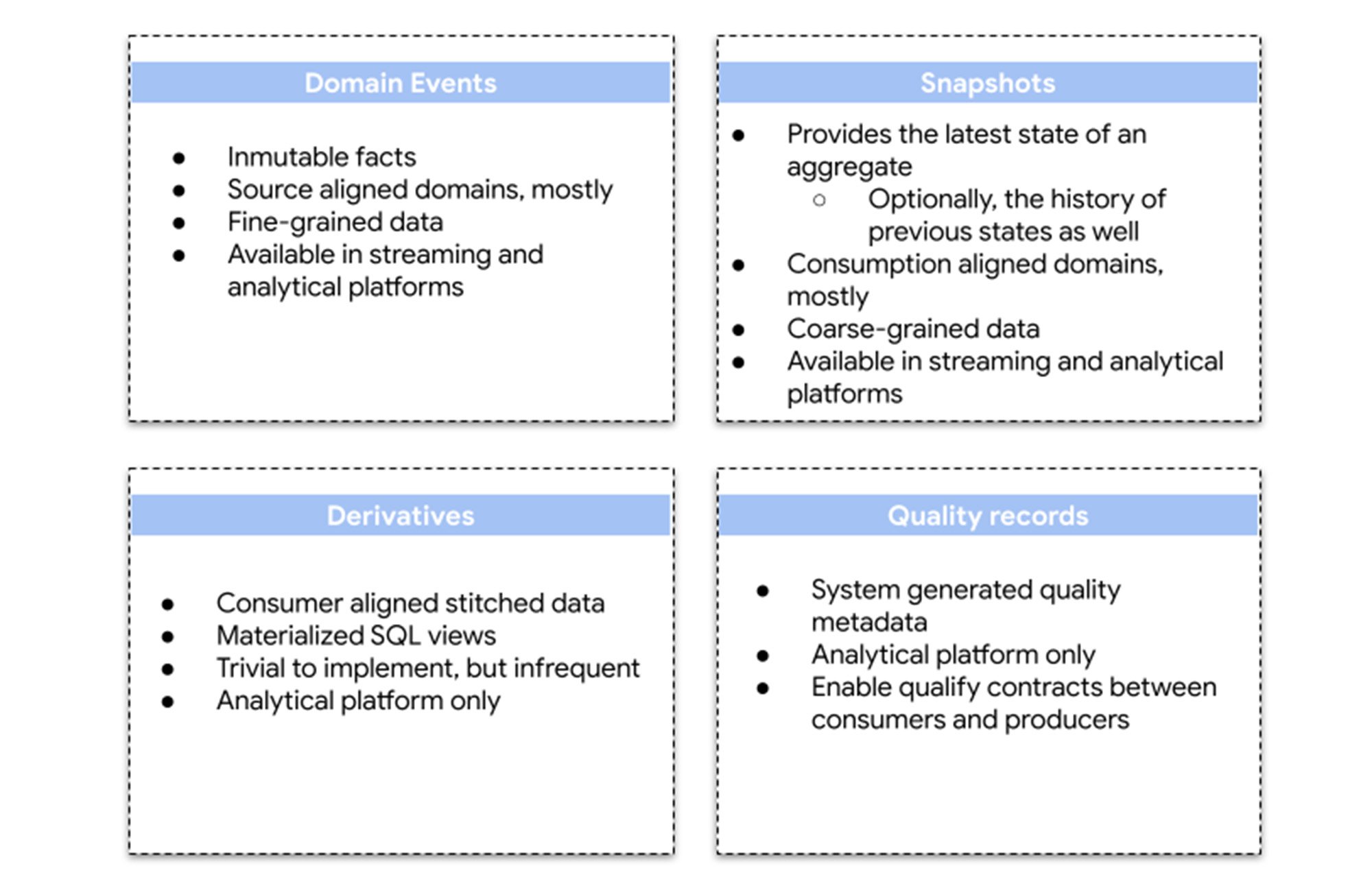

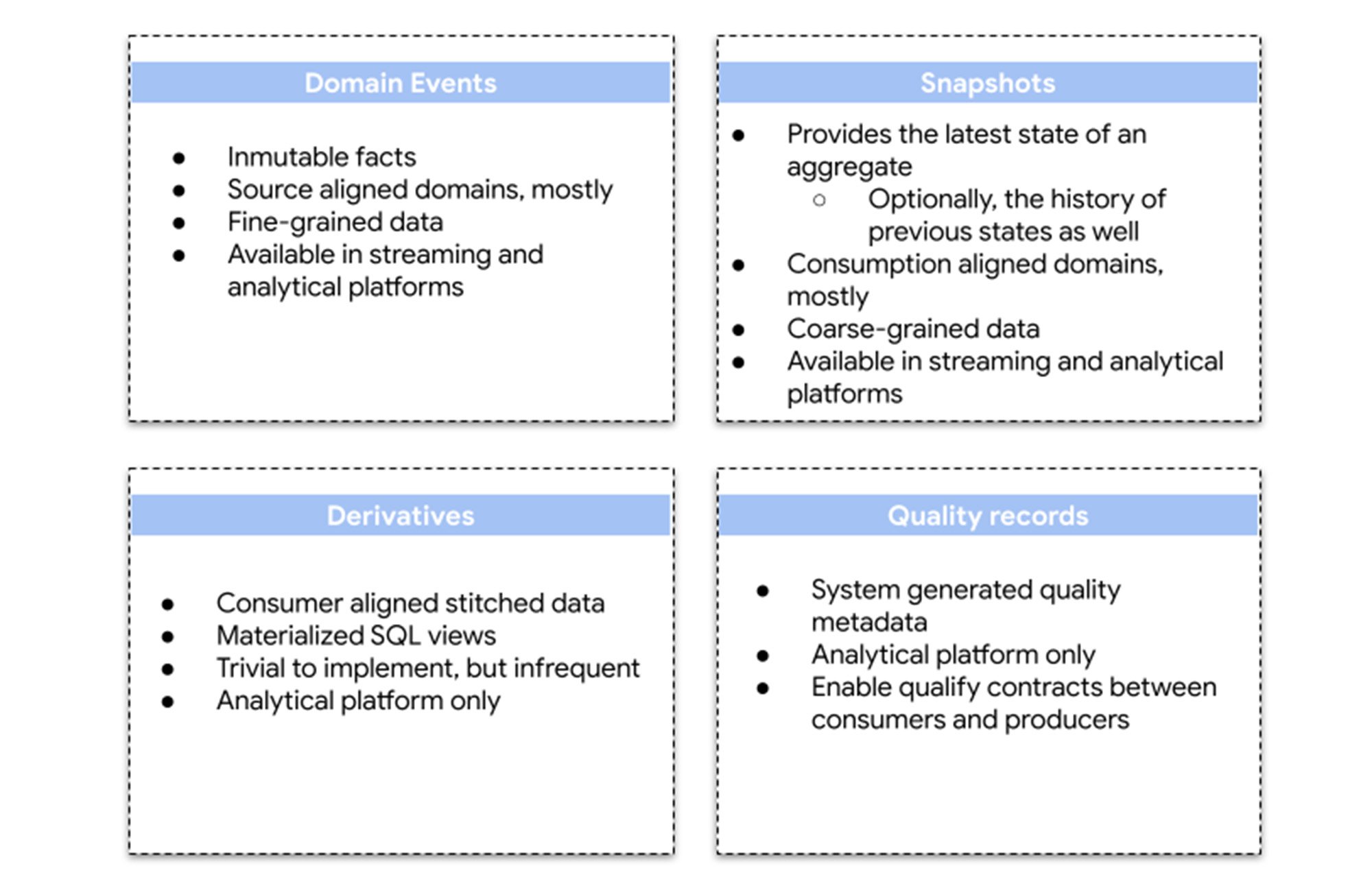

Finally, when it comes to data architecture, the platform has four types of data products: domain events, snapshots, derivatives, and quality records. Each type serves different access patterns and needs.

Lessons learned and the future of the platform

eDO has learned a lot in the four years since the project started and has many improvements and new features planned for the near future.

Lessons learned:

- Hand-pick consumer stakeholders who are willing and fit to be early adopters.

- Make onboarding optional.

- Foster feedback loops with data producers.

- Data quality is variable and should be agreed upon between producers and consumers, rather than dictated by a central team.

- Plan for iterative creation of data products, driven by real consumption.

- Data backlogs are part of teams' product backlogs.

- Include modeling of data contracts in your federated governance, as it is the hardest and riskiest part.

- Treat data mesh adoption as a challenging engineering project for both domain and platform teams.

- Documentation should be embedded into data contracts, and tailored to readers who are knowledgeable about the domain of the contract.

Work on improving the data mesh continues! Some of the areas include:

- Improved data-quality SLAs - working on ways to measure data accuracy and consistency, which will enable better SLAs between data consumers and producers.

- Self-motivated decommissions - experimenting with a new "Data Maturity Assessment" framework to encourage analytical teams to migrate their data silos to the data mesh.

- Third-party-aligned data contracts - creating different pipelines or types of data contracts for data that comes from third-party providers.

- Faster data timeliness - working on reducing the latency of getting data from the streaming platform to BigQuery.

- Multi-layered documentation - deploying additional levels of documentation to document not only data contracts, but also concepts and processes of business domains.

Conclusion

Data mesh is a promising, fresh approach to data management that can help organizations overcome the challenges of traditional centralized data architectures. By following the lessons learned from eDO's experience, we hope organizations can successfully implement their own data mesh initiatives.

Want to learn more about deploying Data Mesh in Google Cloud? See this technical white paper today.