Tesla V100 GPUs are now generally available

Chris Kleban

Group Product Manager, Google Cloud

We are pleased to bring our NVIDIA V100 graphics processing units (GPUs) with NVLink to general availability. These GPUs offer serious power for complex computational workloads. Our V100 offering, together with our K80, P100 and P4 GPUs, are all great for speeding up many CUDA-powered compute and HPC workloads. The V100 GPU stands out in particular for machine learning workloads. Each V100 GPU has 640 tensor cores and offers up to 125 TFLOPS of mixed precision ML performance. This means that you can get up to a petaFLOP of ML performance in a single virtual machine (VM), making for a very powerful processing tool for ML training and inference workloads. In addition, for the most demanding workloads, we offer our high-speed NVLink network between GPUs at up to 300GB/s and optional local SSD for fast disk I/O.

Since our beta launch, we’ve improved our overall V100 GPU offering by making it easier, faster, and cheaper to use, along with adding flexibility. Our V100 GPUs are accessible via our infrastructure services, Google Compute Engine and Kubernetes Engine, as well as our managed services, Cloud Machine Learning Engine and Dataproc.

We are excited to see what you’ll do with these GPUs. One V100 customer that uses both Compute and Kubernetes Engine is OpenAI, which works on developing and promoting safe artificial general intelligence. They use our V100 and P100 GPUs, together with preemptible CPU-only virtual machines (VMs), to run large reinforcement learning jobs to train AI to play cooperatively in multiplayer games and prepare for The International Dota 2 competition.

At OpenAI, we run large-scale reinforcement learning jobs using 100,000+ preemptible CPU cores and 1,000+ GPUs. Google Cloud Platform's support has been extremely helpful—they put effort into understanding the cloud architecture of our application, provided design recommendations and tuned their platform to support our use cases better.

Szymon Sidor, Member of Technical Staff, OpenAI

Here’s what we’ve added to the V100 GPU offering during the beta.

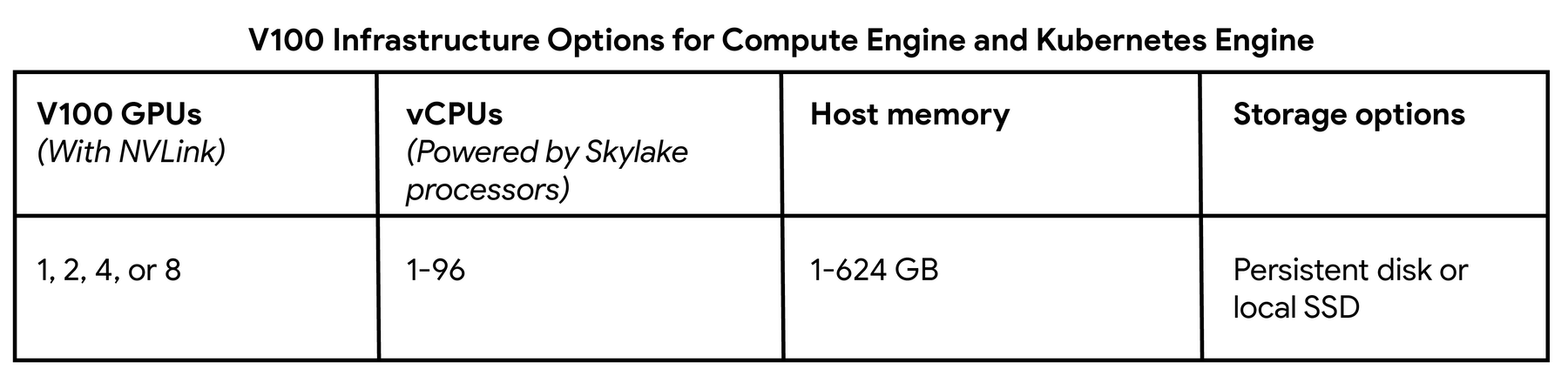

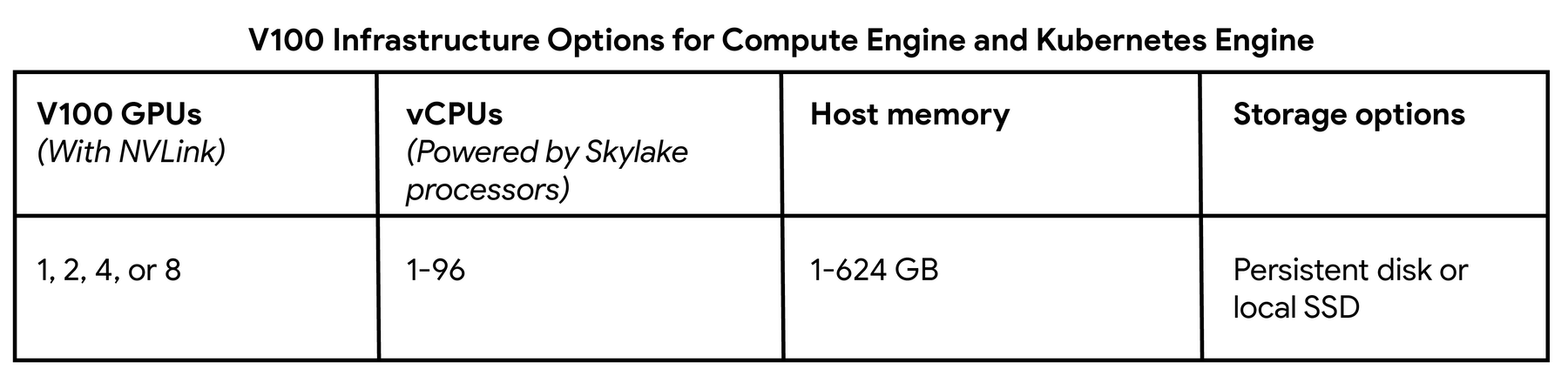

First, we increased flexibility by adding support for attaching two or four V100s to a VM, which means there’s now support for attaching one, two, four or eight V100s per VM. This is important as it allows you to match your workload to the optimal amount of GPU power. This plus our custom VM feature allow you to create a VM shape with the CPU, memory, storage and V100 GPU performance that meets your needs.

Second, we made it easier to get started with GPUs on Compute Engine for ML and other compute workloads by offering new performance- and workload-optimized pre-configured operating system images. Simply create a VM with a GPU, choose one of these with pre-installed libraries and get started. These images work for all GPU platforms—K80, P100, P4 and V100— and come in three flavors: TensorFlow, PyTorch, and Base. All three of them include the latest versions of the NVIDIA tools they depend on: CUDA 9.2, CuDNN 7.2 and NCCL 2.2, and are a good fit for ML workloads. The base image can also be used for other compute workloads, like HPC, that come with preconfigured GPU drivers. Using these images brings you the performance optimizations for using GPUs on GCP, and in particular really helps when using multiple V100 GPUs with NVLink for deep learning workloads. To find out more and to get started, check out our image documentation.

Third, we lowered our preemptible GPU pricing for all GPU platforms, including for V100s, to 70% off on-demand pricing. This provides any organization with an extremely low-cost compute offering and eliminates having to deal with the fluctuating prices of an auction system. This is critical if you are doing ML or HPC work with a limited budget but are flexible enough to handle the limitations that come with preemptible VMs, like max life of 24 hours.

Want to learn more? Visit our GPU product page for general information and pricing. Or visit our GPU documentation to find out location availability and how to get started.