Protecting your Kubernetes deployments with Policy Controller

Jamie Duncan

Specialist Customer Engineer, Google Cloud

John Murray

Product Manager, Google Cloud

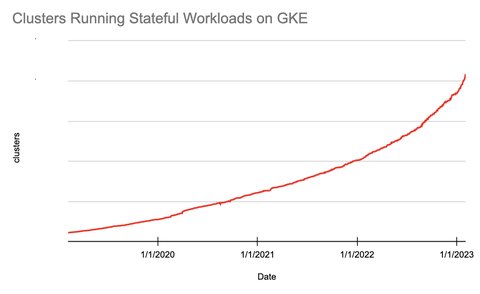

In November, the Kubernetes project disclosed a vulnerability that every Kubernetes administrator or adopter should be aware of. The vulnerability, known as CVE-2020-8554, stems from default permissions that allow users to create objects that can act as a “Man in the Middle” and potentially intercept sensitive data. If you use a Google Cloud managed solution such as Anthos or Google Kubernetes Engine (GKE), you can easily and effectively mitigate this vulnerability. In this blog, we’ll show you how.

First let’s talk about the vulnerability.

Who is vulnerable: CVE-2020-8554 affects all multi-tenant Kubernetes clusters. In Kubernetes, a multi-tenant cluster is a single cluster shared by multiple users who require isolation from each other.

What can happen: This vulnerability does not, by itself, give an attacker permission to create a Kubernetes Service. However, an attacker who has already obtained permission to create a ClusterIP Service and set its externalIPs field, or to patch the status of a LoadBalancer Service, might be able to intercept network traffic originating from other Pods in the cluster.

To address this vulnerability, you can use Policy Controller or Open Policy Agent (OPA) Gatekeeper to implement constraints that mitigate it. The rest of this blog shows how to do this with Policy Controller, a component of Anthos Config Management (ACM).

Using Policy Controller to mitigate exposure

There are multiple ways to use OPA Gatekeeper to create Kubernetes policies that mitigate this issue. The example from the CVE uses a list of allowed IP addresses for the externalIPs field of a Service and denies any request that attempts to use an IP address outside that list.
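The core of that policy is a set difference: the external IPs a Service requests, minus the allowed ones. If anything is left over, the request is denied. The Python sketch below is a hypothetical, simplified model of that check for illustration only. Gatekeeper and Policy Controller express the real logic in Rego inside a Constraint Template, and the function names and message text here are our own.

```python
from typing import Any


def forbidden_external_ips(service: dict[str, Any], allowed_ips: set[str]) -> set[str]:
    """Return the external IPs on a Service that are not in the allow-list.

    Hypothetical helper: a simplified model of the policy, not Gatekeeper code.
    """
    # Only Service objects carry spec.externalIPs; anything else passes.
    if service.get("kind") != "Service":
        return set()
    spec = service.get("spec") or {}
    requested = set(spec.get("externalIPs") or [])
    # Requested IPs minus allowed IPs; any leftover address is a violation.
    return requested - allowed_ips


def admit(service: dict[str, Any], allowed_ips: set[str]) -> tuple[bool, str]:
    """Allow the request only when every requested external IP is allowed."""
    forbidden = forbidden_external_ips(service, allowed_ips)
    if forbidden:
        return False, f"service has forbidden external IPs: {sorted(forbidden)}"
    return True, ""


if __name__ == "__main__":
    allowed = {"203.0.113.0"}  # the example allow-list used in this post
    ok_svc = {"kind": "Service", "spec": {"externalIPs": ["203.0.113.0"]}}
    bad_svc = {"kind": "Service", "spec": {"externalIPs": ["1.1.1.1"]}}
    print(admit(ok_svc, allowed))   # (True, '')
    print(admit(bad_svc, allowed))  # (False, "service has forbidden external IPs: ['1.1.1.1']")
```

Modeling the check as a set difference means a Service that requests several addresses is rejected if even one of them falls outside the list, and a Service with no externalIPs at all is admitted.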

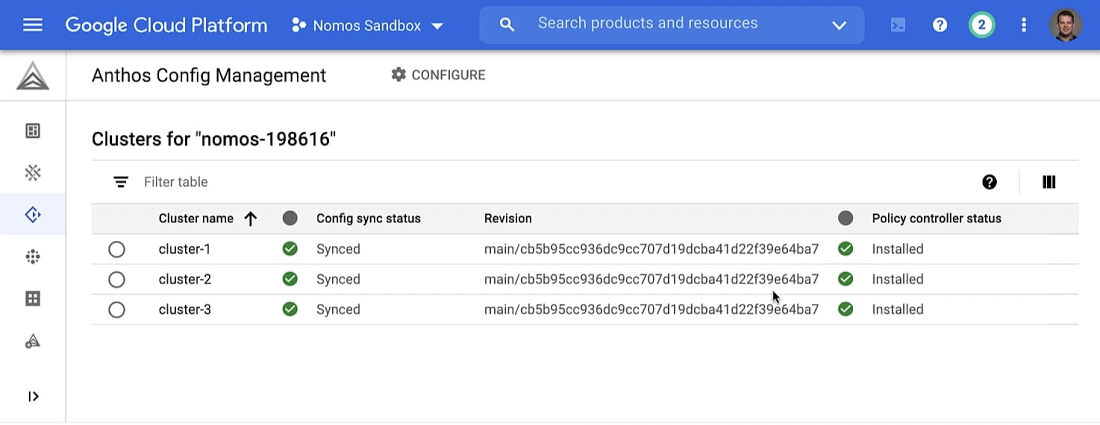

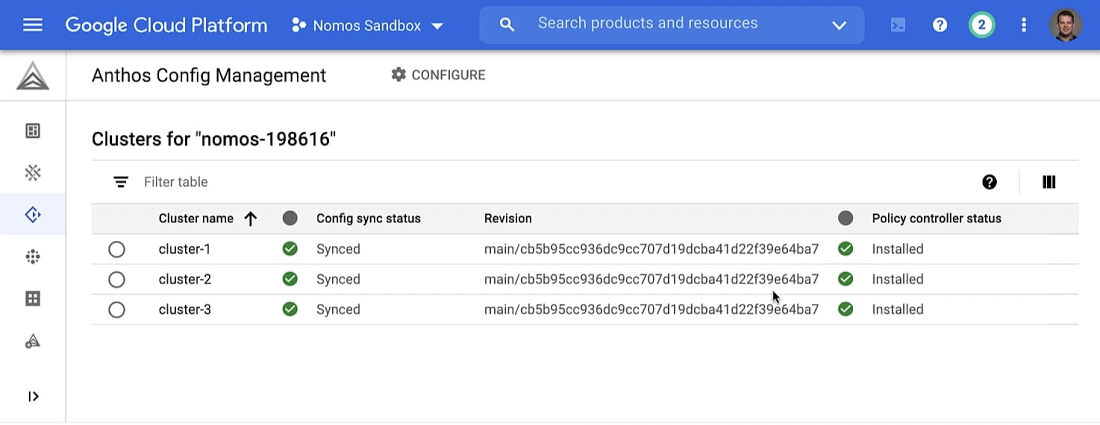

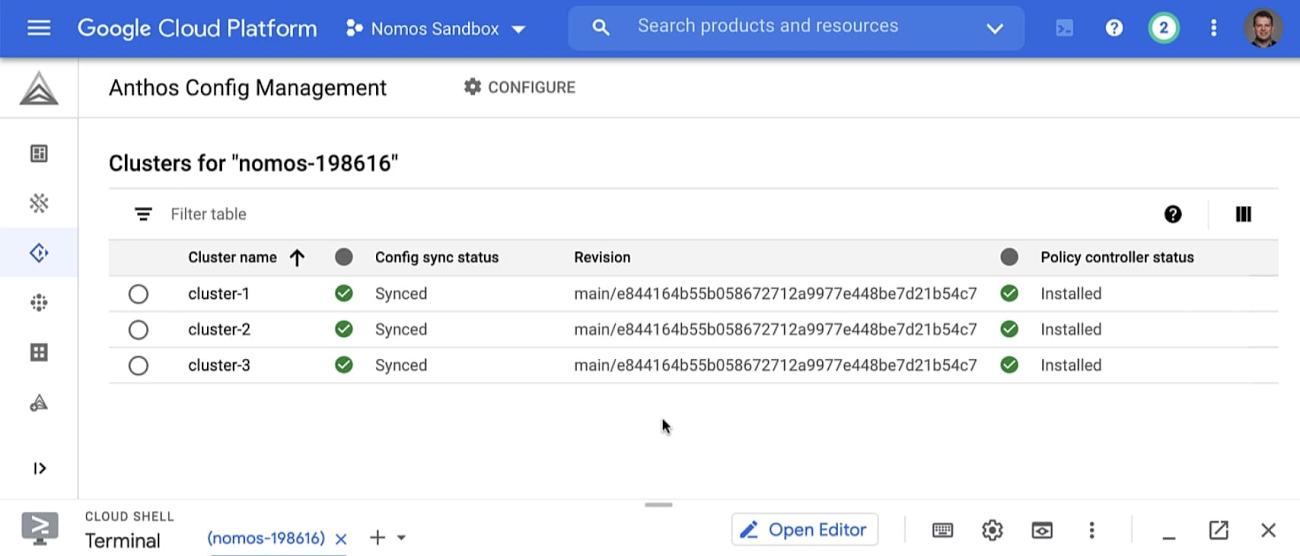

We begin this example with three Anthos clusters synchronized to the same ACM git repository. In the following screenshot, cluster-1, cluster-2, and cluster-3 have their cluster configurations synchronized by ACM with the main branch and are pulling from the latest commit. You can find more information about how ACM deploys policies across multiple clusters in the ACM documentation.

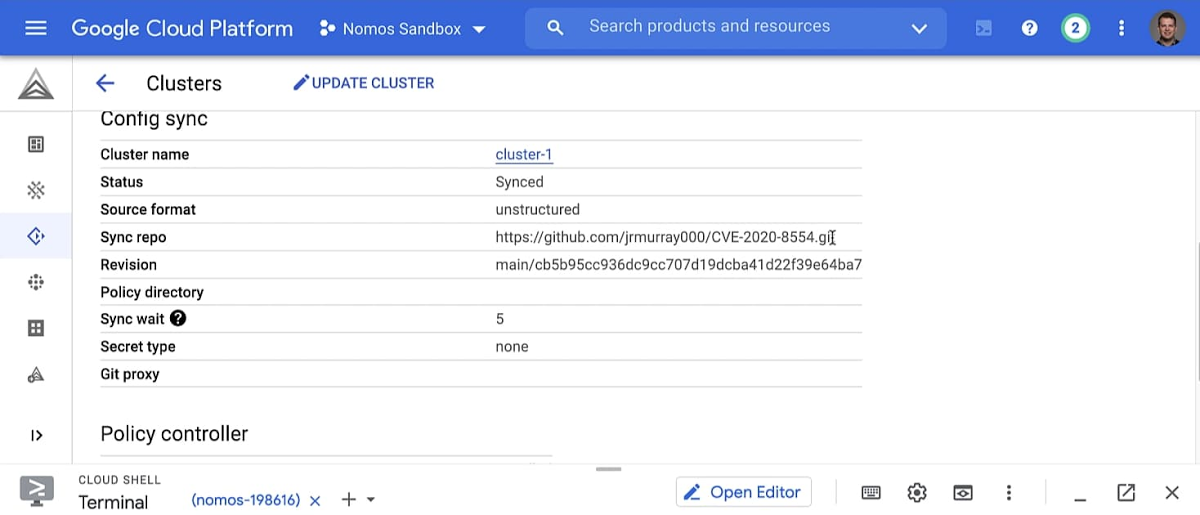

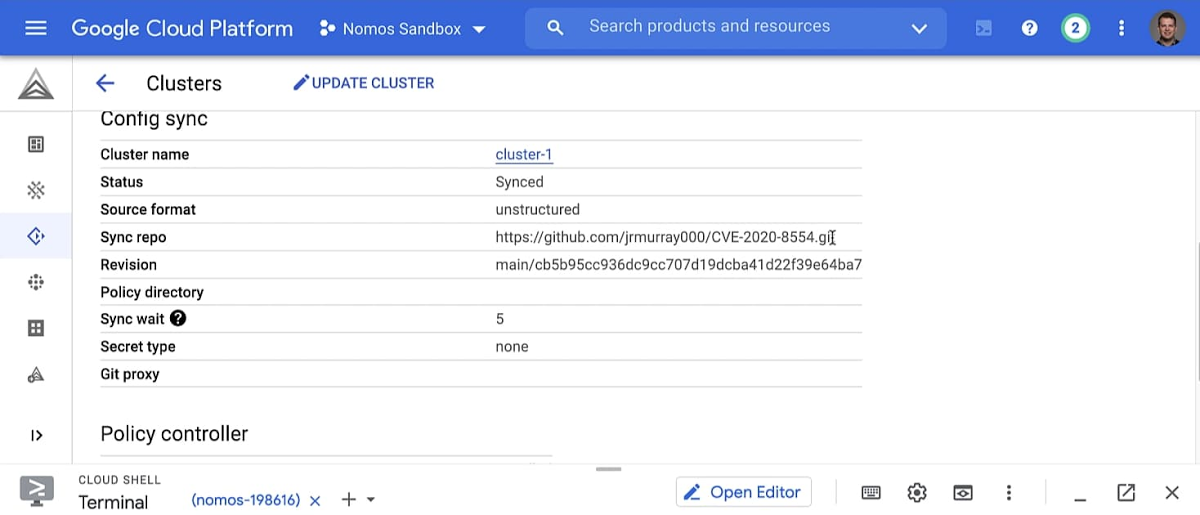

Drilling down into each cluster, you can find more information about the repository and the cluster’s ACM configuration, including the URL of the example Git repository.

Each Policy Controller Constraint is an instance of a Constraint Template, which holds the policy logic. Policy Controller ships with a robust default library of Constraint Templates that Google frequently extends and updates. The example we’re using comes from a separate upstream repository maintained by the OPA Gatekeeper project. Google Cloud is adding an officially supported version of this Template to the Policy Controller library as part of the upcoming 1.6.1 release of Anthos Config Management, but the Template can be applied directly to clusters running earlier versions. The Constraint Template we’re using takes a list of allowed IP addresses that can be used in the externalIPs field of Kubernetes Services. Our example list has one address, “203.0.113.0”.
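For reference, a Constraint built from the upstream template might look like the manifest this Python sketch emits. We are assuming the template's kind is `K8sExternalIPs` and its parameter is `allowedIPs`, as in the OPA Gatekeeper library; verify both against the template version you actually install. The constraint name `external-ips` is hypothetical.

```python
import json


def external_ip_constraint(name: str, allowed_ips: list[str]) -> dict:
    """Build a Gatekeeper Constraint that limits Service externalIPs.

    Assumption: kind K8sExternalIPs and parameter allowedIPs come from the
    upstream externalip ConstraintTemplate; check your installed version.
    """
    return {
        "apiVersion": "constraints.gatekeeper.sh/v1beta1",
        "kind": "K8sExternalIPs",  # assumed kind from the upstream template
        "metadata": {"name": name},
        "spec": {
            # Apply the constraint only to Service objects in the core API group.
            "match": {"kinds": [{"apiGroups": [""], "kinds": ["Service"]}]},
            # Assumed parameter name from the upstream template.
            "parameters": {"allowedIPs": allowed_ips},
        },
    }


if __name__ == "__main__":
    # Hypothetical constraint name; the single allowed address matches this post.
    constraint = external_ip_constraint("external-ips", ["203.0.113.0"])
    print(json.dumps(constraint, indent=2))
```

JSON output is valid input for `kubectl apply -f`. You can convert it to YAML if your repository stores manifests as YAML.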

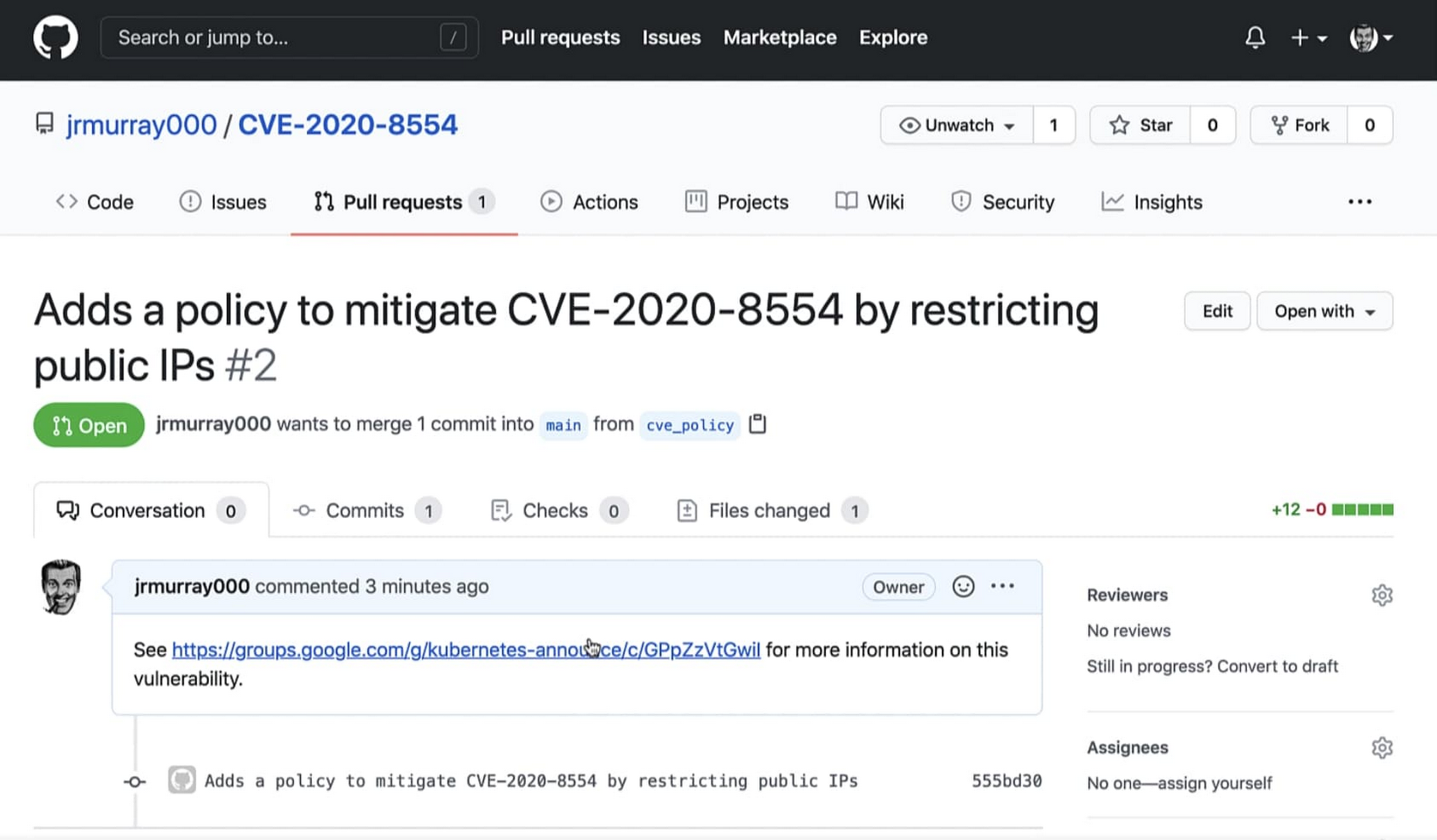

The Constraint and Template files are committed to a new branch of the repository. Once the changes are reviewed and merged into the main branch of the ACM repository, all of our clusters will be updated with the new policy.

With the files created, we’ll go through the normal GitHub pull request process and merge the commit.

Approving and merging the request adds the new policy to your ACM repository. Each cluster continually checks for changes to the ACM repository. When a new update is detected, ACM applies the changes automatically and protects them against drift.
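The sync-and-correct behavior described above follows the general reconcile-loop pattern. The toy Python model below is not ACM's implementation. It only illustrates the idea that each cluster compares the desired state from the repository with its live state, applies the differences, and overwrites drift. The `Cluster` class and the commit label are hypothetical. Unlike this toy, which treats every live object as its own, real tooling only prunes objects it manages.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class Cluster:
    """Toy model (hypothetical) of a cluster syncing config from a Git repo."""

    name: str
    synced_commit: str = ""
    live_objects: dict[str, dict] = field(default_factory=dict)

    def reconcile(self, commit: str, desired: dict[str, dict]) -> list[str]:
        """Make live state match the repo; return the names of changed objects."""
        changed = []
        for obj_name, obj in desired.items():
            # Create missing objects and overwrite any that drifted.
            if self.live_objects.get(obj_name) != obj:
                self.live_objects[obj_name] = copy.deepcopy(obj)
                changed.append(obj_name)
        for obj_name in list(self.live_objects):
            # Remove objects no longer declared in the repository.
            if obj_name not in desired:
                del self.live_objects[obj_name]
                changed.append(obj_name)
        self.synced_commit = commit
        return changed


if __name__ == "__main__":
    desired = {"external-ips": {"kind": "K8sExternalIPs", "allowedIPs": ["203.0.113.0"]}}
    clusters = [Cluster(f"cluster-{i}") for i in (1, 2, 3)]
    for cluster in clusters:
        cluster.reconcile("policy-commit", desired)  # hypothetical commit label
    # Simulate drift: someone edits the policy directly on cluster-2.
    clusters[1].live_objects["external-ips"] = {"kind": "K8sExternalIPs", "allowedIPs": ["0.0.0.0"]}
    print(clusters[1].reconcile("policy-commit", desired))  # ['external-ips']
```

Because each pass compares full desired state against live state, a manual edit on one cluster is simply overwritten on the next reconcile rather than needing special handling.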

Within a few seconds, each cluster will synchronize to the latest commit that contains the new policy.

Each cluster has integrated the new policy constraint by synchronizing with its ACM repository’s latest commit. In our example, this is the git commit ID beginning with “e84416”. Now it’s time to test the policy.

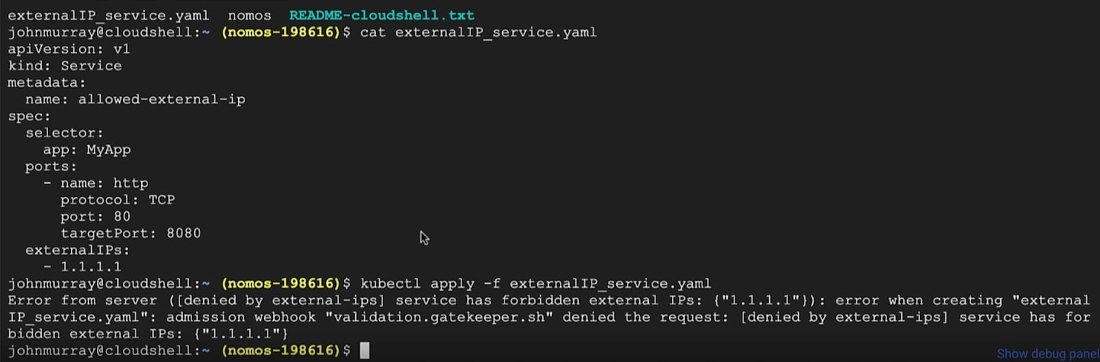

We’ll attempt to create a Service in one of our Anthos clusters with an external IP outside the allowed addresses in our Constraint. In the test, we attempt to create a Service with an externalIPs value of “1.1.1.1”.

When we attempt to create this Service, Policy Controller validates it before it is created and denies the request because it violates the Constraint we just put in place.
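Under the hood, Policy Controller runs as a validating admission webhook. The API server sends it an `AdmissionReview` request (shown here in the `admission.k8s.io/v1` form) and refuses to persist the object if the response has `allowed: false`. The Python sketch below is a hypothetical stand-in for that webhook, useful only for seeing the request and response shape. Policy Controller evaluates the Constraint's Rego rather than this code, and the test Service name is made up.

```python
import json
import uuid
from typing import Any

# Hypothetical allow-list mirroring the example Constraint.
ALLOWED_IPS = {"203.0.113.0"}


def review(admission_review: dict[str, Any]) -> dict[str, Any]:
    """Answer an AdmissionReview for a Service request (toy webhook logic)."""
    request = admission_review["request"]
    # The response must echo the request's uid.
    response: dict[str, Any] = {"uid": request["uid"], "allowed": True}
    kind = request.get("kind") or {}
    # Only core-group Services are checked; everything else is admitted.
    if kind.get("group") == "" and kind.get("kind") == "Service":
        spec = (request.get("object") or {}).get("spec") or {}
        forbidden = sorted(set(spec.get("externalIPs") or []) - ALLOWED_IPS)
        if forbidden:
            # A denied request carries a Status explaining why.
            response["allowed"] = False
            response["status"] = {
                "code": 403,
                "message": f"service has forbidden external IPs: {forbidden}",
            }
    return {"apiVersion": "admission.k8s.io/v1", "kind": "AdmissionReview", "response": response}


if __name__ == "__main__":
    # Hypothetical test Service requesting the disallowed address 1.1.1.1.
    test_service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": "test-service"},
        "spec": {"ports": [{"port": 80}], "externalIPs": ["1.1.1.1"]},
    }
    incoming = {
        "apiVersion": "admission.k8s.io/v1",
        "kind": "AdmissionReview",
        "request": {
            "uid": str(uuid.uuid4()),
            "kind": {"group": "", "version": "v1", "kind": "Service"},
            "operation": "CREATE",
            "object": test_service,
        },
    }
    print(json.dumps(review(incoming)["response"], indent=2))
```

Because the check runs at admission time, the rejected Service is never written to the cluster, so there is nothing to clean up afterward.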

Taking action and next steps

Every Kubernetes cluster is potentially vulnerable to CVE-2020-8554. With Policy Controller, or OPA Gatekeeper on GKE, you can effectively mitigate this vulnerability at scale. Admission controllers like Policy Controller are a fundamental design element of any secure Kubernetes deployment. Additional security best practices can be found in the Google Cloud Security Command Center.

For more details about Google Cloud’s response to CVE-2020-8554 please visit our Security Bulletins for GKE, Anthos On-Prem, and Anthos on AWS.