ShapeMask: High-performance, large-scale instance segmentation with Cloud TPUs

Weicheng Kuo

Software Engineer, Robotics at Google

Omkar Pathak

Hardware Engineer, Cloud TPUs

Many of us take the ability to see the world for granted, but the everyday task of identifying objects of various shapes, colors, and sizes is a challenging feat for a computer. Yet this capability is critical for a range of applications, from medical image analysis to photo editing.

As part of our ongoing effort to create software that can perform useful visual tasks, we’ve developed a new image segmentation model called ShapeMask that combines high accuracy with high scalability. In this post we’ll look at what exactly ShapeMask is, what its advantages are, and how you can get started with it.

An overview of ShapeMask

The task that ShapeMask performs is called “instance segmentation,” which involves identifying and tracing the boundaries of specific instances of various objects in a visual scene. For example, in a cityscape image that contains several cars, ShapeMask can be used to highlight each car with a different color. Each of these highlighted areas is called a “mask.”

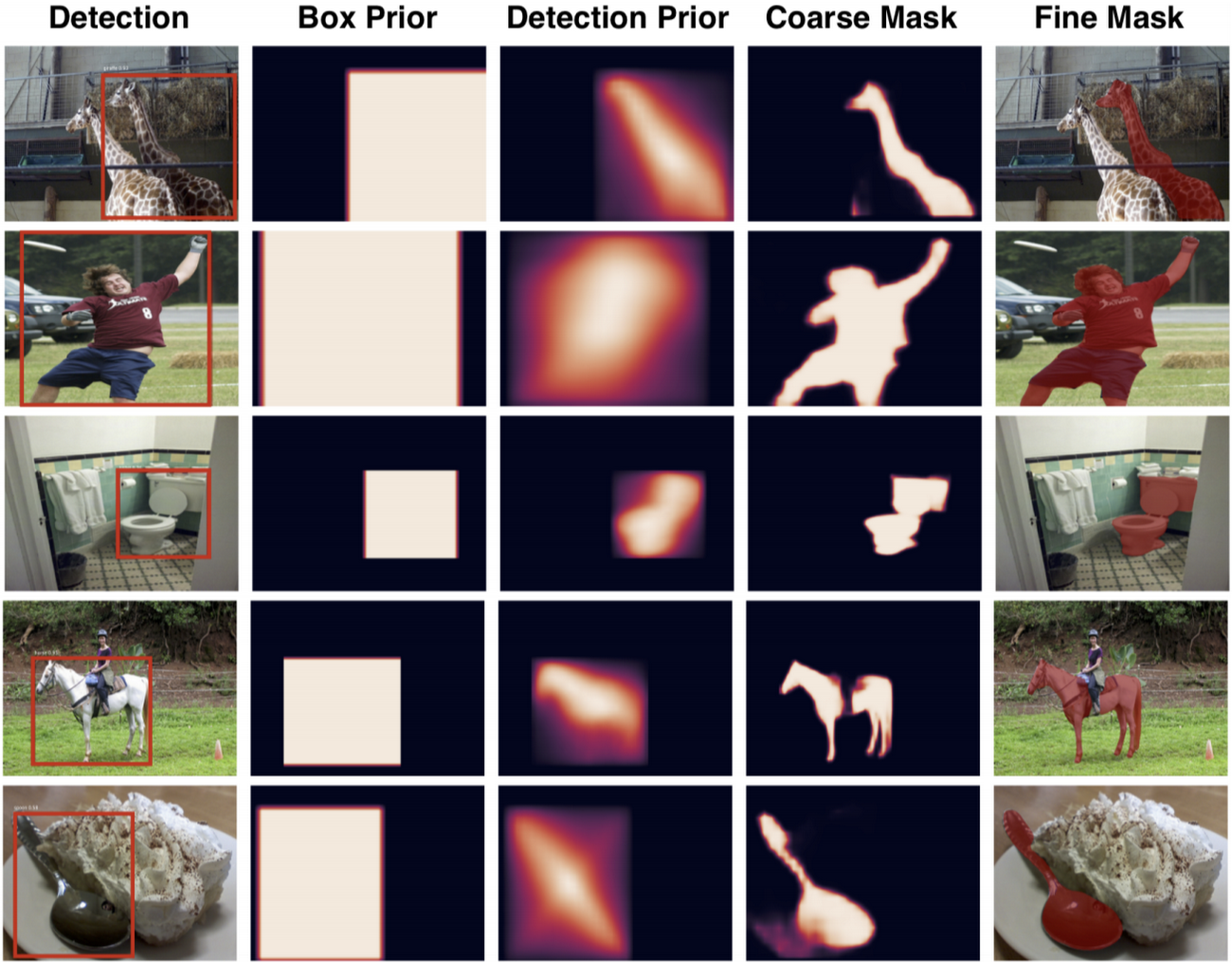
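To make the idea of a mask concrete, here is a minimal NumPy sketch of how per-instance masks might be stored and drawn over an image. The function name `colorize_instances`, the array layout (one boolean mask per instance), and the toy data are assumptions chosen for illustration; they are not taken from the ShapeMask code.

```python
import numpy as np


def colorize_instances(image, masks, alpha=0.5, seed=0):
    """Blend a distinct random color into the pixels of each instance.

    image: (H, W, 3) uint8 array.
    masks: (N, H, W) boolean array, one binary mask per detected instance.
    """
    rng = np.random.default_rng(seed)
    out = image.astype(np.float32)
    for mask in masks:
        # One color per instance, so two cars get two different highlights.
        color = rng.integers(0, 256, size=3).astype(np.float32)
        out[mask] = (1 - alpha) * out[mask] + alpha * color
    return out.astype(np.uint8)


# Toy scene: a black 4x6 image containing two non-overlapping "objects".
image = np.zeros((4, 6, 3), dtype=np.uint8)
masks = np.zeros((2, 4, 6), dtype=bool)
masks[0, 1:3, 0:2] = True  # instance 0 covers 4 pixels
masks[1, 1:3, 3:6] = True  # instance 1 covers 6 pixels
overlay = colorize_instances(image, masks)
```

Keeping one mask per instance, rather than a single label map per class, is what distinguishes instance segmentation from semantic segmentation: two cars are the same class but get separate masks.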

ShapeMask builds on a well-known object detection model called RetinaNet (this Cloud TPU tutorial has more information), which can detect the location and size of various objects in an image but does not produce object masks. ShapeMask first locates objects using RetinaNet, then gradually refines the shapes of these detected objects by grouping pixels that have a similar appearance. This new approach allows ShapeMask to create accurate masks. We have made a well-optimized implementation of the ShapeMask model available open-source here.
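The box-to-mask idea can be illustrated with a deliberately simplified toy. The sketch below is not the ShapeMask algorithm, which uses learned features inside a neural network. It only shows the general principle of starting from a detection box and keeping the pixels whose features resemble the object. The function `refine_box_to_mask`, the distance threshold, and the use of the box center as a reference are all assumptions made for illustration.

```python
import numpy as np


def refine_box_to_mask(features, box, threshold=0.5):
    """Toy refinement: keep pixels in the box that resemble its center.

    features: (H, W, C) float array of per-pixel features (assumed given).
    box: (y0, x0, y1, x1) detection box with half-open bounds.
    """
    y0, x0, y1, x1 = box
    crop = features[y0:y1, x0:x1]
    h, w = crop.shape[:2]
    # Assumption: the central part of the box mostly belongs to the object.
    center = crop[h // 4 : h - h // 4, w // 4 : w - w // 4]
    prototype = center.reshape(-1, crop.shape[-1]).mean(axis=0)
    # Group pixels by similarity to the prototype feature.
    distance = np.linalg.norm(crop - prototype, axis=-1)
    mask = np.zeros(features.shape[:2], dtype=bool)
    mask[y0:y1, x0:x1] = distance < threshold
    return mask


# Toy input: a 4x4 "object" with feature value 1.0 on a 0.0 background.
features = np.zeros((8, 8, 1), dtype=np.float32)
features[2:6, 2:6] = 1.0
loose_box = (1, 1, 7, 7)  # box is one pixel larger than the object per side
mask = refine_box_to_mask(features, loose_box)
```

In this toy case the loose 6x6 box shrinks to the exact 4x4 object, which mirrors the progression described above from a coarse box to a tight mask.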

A highly scalable solution

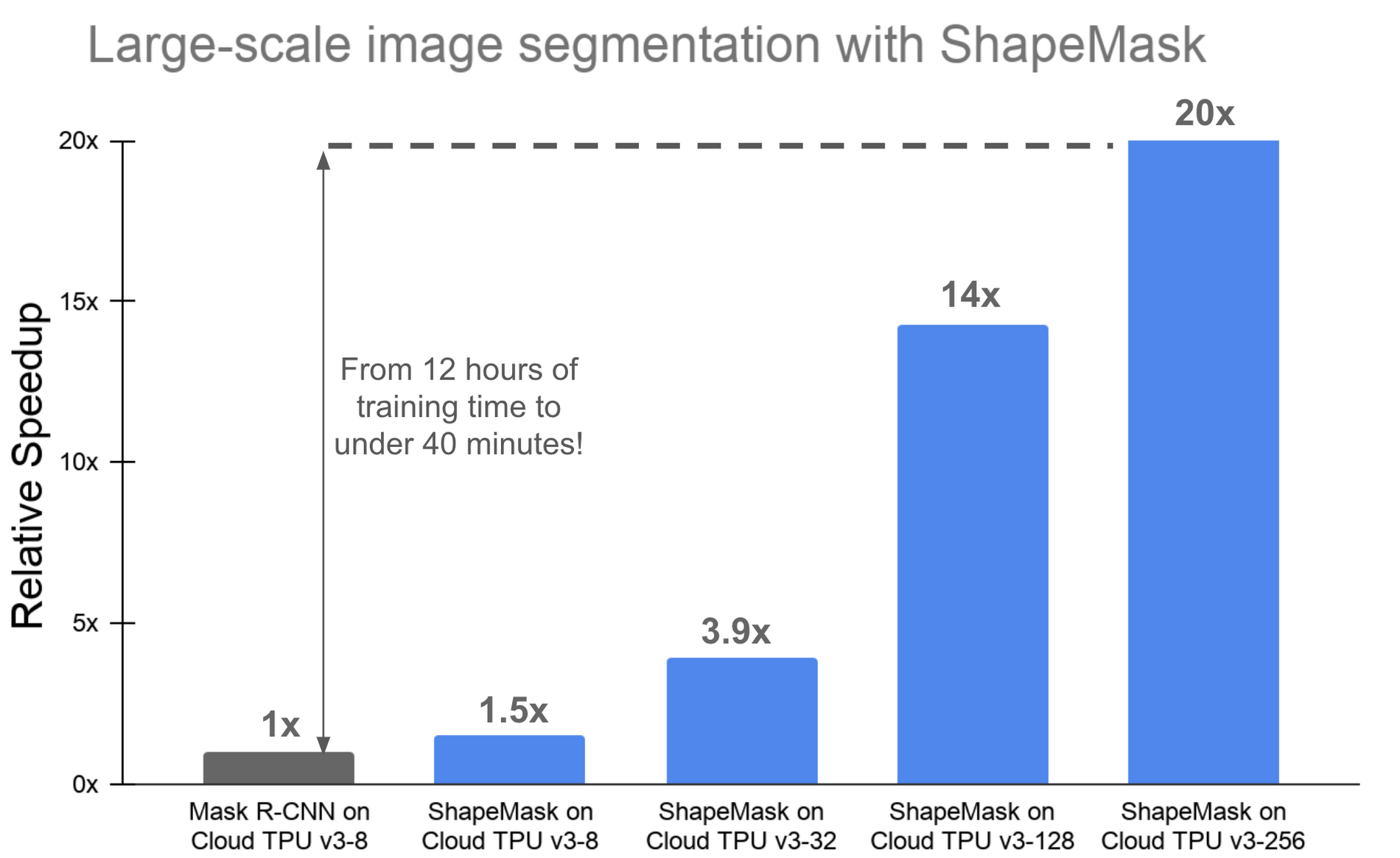

Many businesses depend on automated image segmentation to enable a broad set of applications. These businesses often work with large, frequently changing datasets, and their researchers and engineers need to experiment with a variety of ML model architectures. To iterate quickly on large, realistic datasets, they need to be able to scale up the training of their image segmentation models. One advantage ShapeMask has over other image segmentation models is that it can train efficiently at large batch sizes, which makes it possible to distribute ShapeMask training across a large number of ML accelerators.

Cloud TPUs are designed to enable exactly the type of scaling that ShapeMask uses. For example, a machine perception engineer can experiment with ShapeMask on a small dataset on a single Cloud TPU v3 device—which has eight cores—and then use the same code to quickly train the same ShapeMask model on a much larger dataset using a “slice” of a Cloud TPU Pod with 32, 128, or 256 cores. Without any code changes, ShapeMask can scale to a batch size of 2048, while still achieving an accuracy of 34.7 mask mAP (more on mAP here).
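The scaling arithmetic is easy to check. The sketch below assumes plain data parallelism, where the global batch is split evenly across cores; the helper `per_core_batch_size` is a hypothetical name, not part of any Cloud TPU API.

```python
def per_core_batch_size(global_batch_size, num_cores):
    """Return the number of examples each core processes per step."""
    if global_batch_size % num_cores != 0:
        raise ValueError("global batch size must divide evenly across cores")
    return global_batch_size // num_cores


# A single Cloud TPU v3 device (8 cores) with a global batch size of 64.
single_device = per_core_batch_size(64, 8)

# Pod slices of the sizes mentioned above, with a global batch size of 2048.
slice_sizes = {cores: per_core_batch_size(2048, cores) for cores in (32, 128, 256)}
print(single_device, slice_sizes)
```

Under this assumption, a batch of 2048 on a 256-core slice puts the same 8 examples on each core as a batch of 64 on a single 8-core device, which is why a model that tolerates large global batch sizes can spread across many accelerators.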

With a 256-core Cloud TPU v3 slice, the ShapeMask model can be trained on the standard COCO image segmentation dataset in just under 40 minutes—that’s a big improvement from waiting hours to train ShapeMask (or a comparable Mask R-CNN model) on a single Cloud TPU device.

High accuracy, too

For customers who require the absolute highest image segmentation accuracy, the ShapeMask model can be trained to an accuracy of 38 mask mAP on a Cloud TPU v3 device with a batch size of 64. For comparison, our reference implementation of Mask R-CNN trains to an accuracy of 37.3 mask mAP on a Cloud TPU v3 device with a batch size of 64 and requires about 200 more minutes than ShapeMask to train.
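Mask mAP is built on the intersection-over-union (IoU) between a predicted mask and a ground-truth mask; COCO-style evaluation then averages precision over IoU thresholds from 0.5 to 0.95. The sketch below computes only the mask IoU for a single pair, not the full mAP metric, and the function name `mask_iou` is our own.

```python
import numpy as np


def mask_iou(pred, truth):
    """Intersection-over-union of two binary masks of the same shape."""
    pred = np.asarray(pred, dtype=bool)
    truth = np.asarray(truth, dtype=bool)
    union = np.logical_or(pred, truth).sum()
    if union == 0:
        # Both masks are empty; treat the overlap as zero by convention here.
        return 0.0
    intersection = np.logical_and(pred, truth).sum()
    return float(intersection / union)


# Toy masks: two 2x4 bands that share one row.
pred = np.zeros((4, 4), dtype=bool)
truth = np.zeros((4, 4), dtype=bool)
pred[0:2, :] = True   # 8 pixels
truth[1:3, :] = True  # 8 pixels, 4 shared with pred
iou = mask_iou(pred, truth)  # 4 shared / 12 total = 1/3
```

A prediction counts as correct at a given threshold only if its IoU with a ground-truth mask meets that threshold, so the toy pair above would fail even the loosest COCO threshold of 0.5.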

All the statistics mentioned above were collected using TensorFlow version 1.14. While we expect you to get similar results, your results may vary. More information about ShapeMask and its performance is available in this methodology doc.

Getting started—in your browser or in a Google Cloud project

It’s easy to start experimenting with ShapeMask right in your browser using a free Cloud TPU in Colab. (Open the “Runtime” menu, choose “Change Runtime Type,” and select “Python 3” and “TPU.”)
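Once the runtime type is changed, a quick check can confirm that the notebook sees a TPU. This sketch assumes the `COLAB_TPU_ADDR` environment variable that Colab TPU runtimes exposed in the TensorFlow 1.x era; newer runtimes may not set it. The helper `colab_tpu_address` is a hypothetical name.

```python
import os


def colab_tpu_address(environ=None):
    """Return the gRPC address of the Colab TPU, or None if none is attached.

    Assumption: the runtime sets COLAB_TPU_ADDR to "host:port".
    """
    environ = os.environ if environ is None else environ
    address = environ.get("COLAB_TPU_ADDR")
    return f"grpc://{address}" if address else None


tpu_address = colab_tpu_address()
if tpu_address is None:
    print("No TPU found; check the runtime type.")
else:
    print("TPU available at", tpu_address)
```

Passing a dictionary as `environ` makes the helper easy to try outside Colab, for example `colab_tpu_address({"COLAB_TPU_ADDR": "10.0.0.2:8470"})`.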

You can also get started with a well-optimized, open-source implementation of ShapeMask in your own Google Cloud projects by following this ShapeMask tutorial.

If you’re new to Cloud TPUs, you can get familiar with them by following our quickstart guide. Cloud TPU Pods are currently available in beta, and we encourage you to contact a Google Cloud Sales representative to evaluate them.

Acknowledgements

Thanks to everyone who contributed to this post and who helped develop this new capability, including Tsung-Yi Lin, Anelia Angelova, Pengchong Jin, Zak Stone, Wes Wahlin, Adam Kerin, Vishal Mishra, David Shevitz, and Allen Wang.