What you will build

In this tutorial, you will download an exported custom TensorFlow Lite model that was created with AutoML Vision Edge. You will then run a pre-made Android app that uses the model to identify images of flowers.

Objectives

In this introductory, end-to-end walkthrough, you will use code to do the following:

- Run a pre-trained model in an Android app using the TFLite interpreter.

Before you begin

Train an AutoML Vision Edge model

Before you can deploy a model to an edge device, you must train and export a TF Lite model from AutoML Vision Edge by following the Edge device model quickstart.

After completing the quickstart, you should have the exported trained model files: a TF Lite file, a label file, and a metadata file.

Install TensorFlow

Before starting the tutorial, you need to install the following software:

- Install TensorFlow version 1.7

- Install PILLOW

If you have a working Python installation, run the following commands to download and install this software:

    pip install --upgrade "tensorflow==1.7.*"
    pip install PILLOW

See the official TensorFlow documentation if you have problems with this process.

Clone the Git repository

From the command line, clone the Git repository with the following command:

    git clone https://github.com/googlecodelabs/tensorflow-for-poets-2

Change to the directory of your local clone of the repository (the tensorflow-for-poets-2 directory). Run all of the following code samples from this directory:

    cd tensorflow-for-poets-2

Set up the Android app

Install Android Studio

If necessary, install Android Studio 3.0+ locally.

Abrir o projeto com o Android Studio

Abra um projeto com o Android Studio seguindo estas etapas:

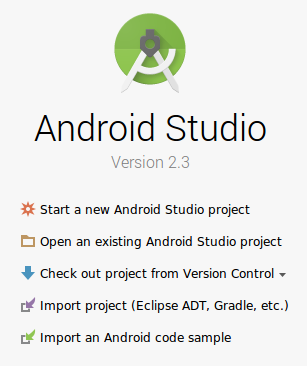

Abra o Android Studio

.

Depois que ele for carregado, selecione

.

Depois que ele for carregado, selecione  "Abrir um projeto do Android Studio atual" nesta janela pop-up:

"Abrir um projeto do Android Studio atual" nesta janela pop-up:

No seletor de arquivos, escolha

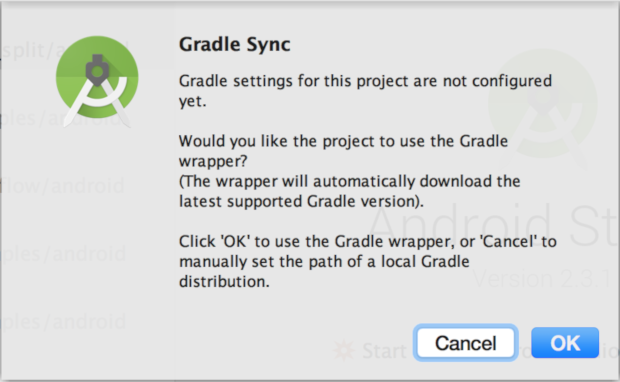

tensorflow-for-poets-2/android/tfliteno diretório de trabalho.Na primeira vez que você abrir o projeto, verá uma janela pop-up "Sincronização do Gradle" perguntando sobre o uso do wrapper do Gradle. Selecione "OK".

Test run the app

The app can run on either a real Android device or in the Android Studio Emulator.

Set up an Android device

You can't load the app from Android Studio onto your phone unless you enable "developer mode" and "USB debugging".

To complete this one-time setup, follow these instructions.

Configurar o emulador com acesso à câmera (opcional)

Se você optar por usar um emulador em vez de um dispositivo Android real, o Android Studio facilitará a configuração do emulador.

Como este app usa a câmera, configure a câmera do emulador para usar a câmera do computador, e não o padrão de teste padrão.

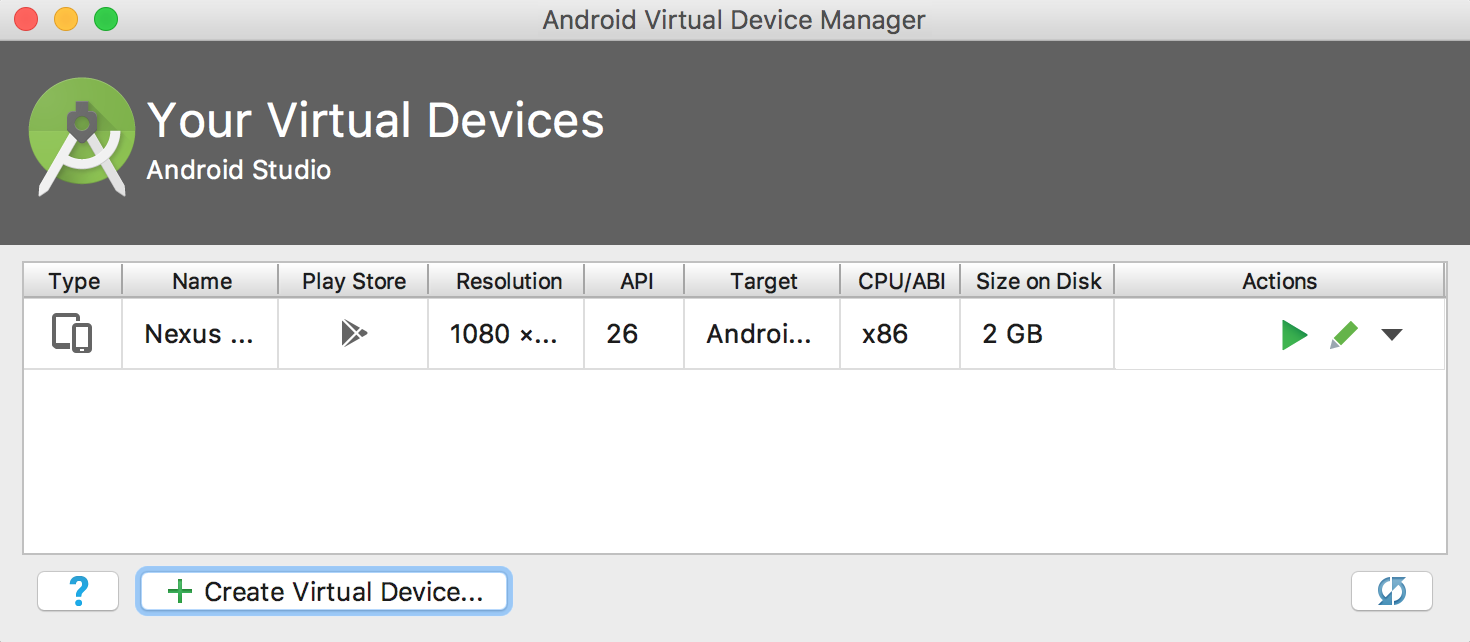

Para configurar a câmera do emulador, você precisa criar um novo dispositivo no Gerenciador do dispositivo virtual Android, um serviço que pode ser acessado com este botão![]() .

Na página principal do Gerenciador, selecione "Criar dispositivo virtual":

.

Na página principal do Gerenciador, selecione "Criar dispositivo virtual":

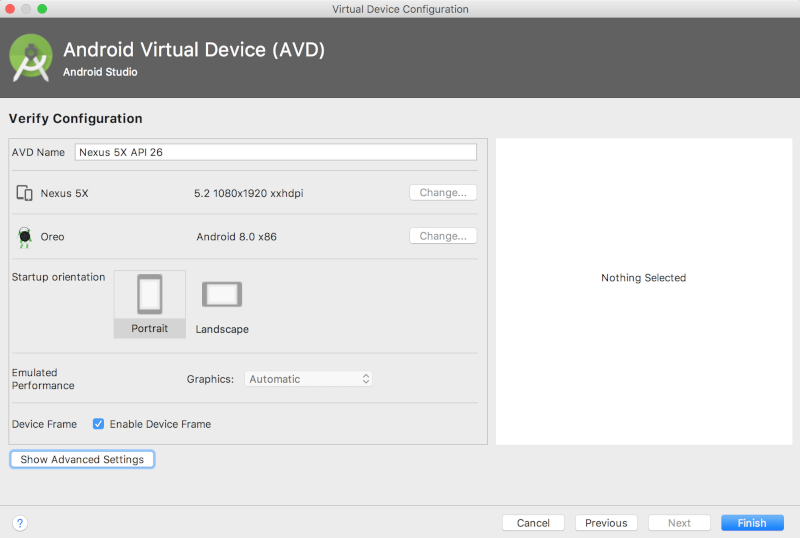

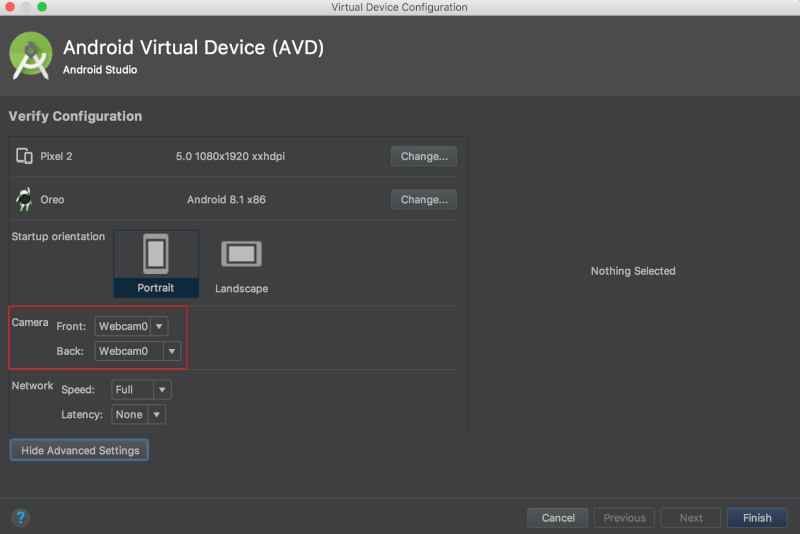

Em seguida, na página "Verificar configuração", que é a última da configuração do dispositivo virtual, selecione "Mostrar configurações avançadas":

Com as configurações avançadas exibidas, defina as duas fontes de câmera para usar a webcam do computador host:

Executar o app original

Antes de fazer qualquer alteração no app, execute a versão que vem com o repositório.

Para iniciar o processo de criação e instalação, execute uma sincronização com o Gradle.

![]()

Depois de executar uma sincronização com o Gradle, selecione ![]() .

.

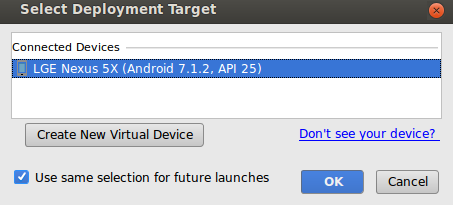

Depois de selecionar o botão de reprodução, selecione o dispositivo neste pop-up:

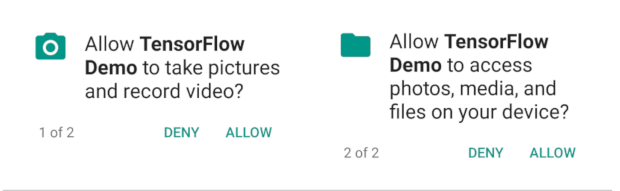

Depois de selecionar seu dispositivo, você precisa permitir que a demonstração do Tensorflow acesse sua câmera e seus arquivos:

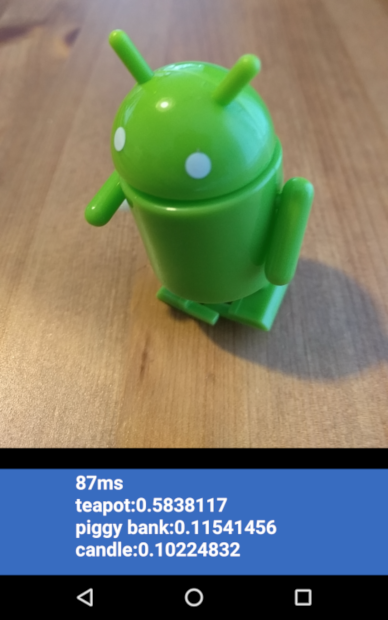

Agora que o app está instalado, clique no ícone ![]() para abri-lo. Esta versão do app usa a MobileNet padrão, pré-treinada nas 1.000 categorias do ImageNet.

para abri-lo. Esta versão do app usa a MobileNet padrão, pré-treinada nas 1.000 categorias do ImageNet.

Ela será mais ou menos assim:

Run the customized app

The default app setup classifies images into one of the 1,000 ImageNet classes using the standard MobileNet, without any retraining.

Now modify the app so that it uses a model created by AutoML Vision Edge for your custom image categories.

Add your model files to the project

The demo project is configured to look for a graph.lite file and a labels.txt file in the android/tflite/app/src/main/assets/ directory.

Replace the two original files with your versions using the following commands:

    cp [Downloads]/model.tflite android/tflite/app/src/main/assets/graph.lite
    cp [Downloads]/dict.txt android/tflite/app/src/main/assets/labels.txt

Modify the app

This app uses a float model, while the model created by AutoML Vision Edge is quantized. You will make a few code changes so that the app can use the quantized model.

Change the data type of labelProbArray and filterLabelProbArray from float to byte, both in the class member definitions and in the ImageClassifier initializer.

    private byte[][] labelProbArray = null;
    private byte[][] filterLabelProbArray = null;

    labelProbArray = new byte[1][labelList.size()];
    filterLabelProbArray = new byte[FILTER_STAGES][labelList.size()];

Allocate imgData based on the int8 type, in the ImageClassifier initializer.

    imgData =
        ByteBuffer.allocateDirect(
            DIM_BATCH_SIZE * DIM_IMG_SIZE_X * DIM_IMG_SIZE_Y * DIM_PIXEL_SIZE);
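The size difference between the float and quantized input buffers can be sketched in plain Java. This is only an illustration: the constant values below (batch size 1, a 224 x 224 RGB input) are assumptions, and the class and method names are hypothetical. The real constants live in ImageClassifier.java.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;

/** Sketch: input buffer sizes for a float model versus a quantized model. */
final class InputBufferSizing {
    // Assumed values for illustration only; check ImageClassifier.java for the real ones.
    static final int DIM_BATCH_SIZE = 1;
    static final int DIM_PIXEL_SIZE = 3;   // one value per RGB channel
    static final int DIM_IMG_SIZE_X = 224; // assumed input width
    static final int DIM_IMG_SIZE_Y = 224; // assumed input height

    /** Allocates a direct buffer using the given number of bytes per channel value. */
    static ByteBuffer allocateInput(int bytesPerChannel) {
        ByteBuffer buffer = ByteBuffer.allocateDirect(
            bytesPerChannel * DIM_BATCH_SIZE * DIM_IMG_SIZE_X * DIM_IMG_SIZE_Y * DIM_PIXEL_SIZE);
        buffer.order(ByteOrder.nativeOrder());
        return buffer;
    }

    public static void main(String[] args) {
        // A float model needs 4 bytes per channel value, a quantized model needs 1.
        System.out.println("float input bytes: " + allocateInput(4).capacity());
        System.out.println("quantized input bytes: " + allocateInput(1).capacity());
    }
}
```

With these assumed dimensions the quantized buffer is a quarter of the size of the float buffer, which is why the factor of 4 disappears from the allocation.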

Cast the data type of labelProbArray from int8 to float in printTopKLabels():

    private String printTopKLabels() {
      // Keep only the RESULTS_TO_SHOW highest-scoring labels in the queue.
      for (int i = 0; i < labelList.size(); ++i) {
        sortedLabels.add(
            new AbstractMap.SimpleEntry<>(labelList.get(i), (float)labelProbArray[0][i]));
        if (sortedLabels.size() > RESULTS_TO_SHOW) {
          sortedLabels.poll();
        }
      }
      // Drain the queue, prepending each entry to the output text.
      String textToShow = "";
      final int size = sortedLabels.size();
      for (int i = 0; i < size; ++i) {
        Map.Entry<String, Float> label = sortedLabels.poll();
        textToShow = String.format("\n%s: %4.2f",label.getKey(),label.getValue()) + textToShow;
      }
      return textToShow;
    }
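One detail worth keeping in mind with the cast in printTopKLabels(): Java's byte type is signed, so a plain (float) cast turns any stored value above 127 into a negative number. The sketch below shows one possible way to read such a byte as an unsigned score. It assumes the model output is an unsigned 8-bit value with a scale of 1/255 and a zero point of 0, which you would need to confirm against your exported model. The class and method names are hypothetical and not part of the demo app.

```java
/** Sketch: reading a quantized (uint8) score that is stored in a signed Java byte. */
final class QuantizedScore {
    /** Interprets the byte as an unsigned value in the range 0..255. */
    static int toUnsigned(byte raw) {
        return raw & 0xff;
    }

    /** Maps the unsigned value to 0.0..1.0, assuming scale 1/255 and zero point 0. */
    static float toProbability(byte raw) {
        return toUnsigned(raw) / 255.0f;
    }

    public static void main(String[] args) {
        byte raw = (byte) 200;                  // held as -56 in a signed byte
        System.out.println((float) raw);        // plain cast keeps the sign
        System.out.println(toProbability(raw)); // unsigned value scaled to 0..1
    }
}
```

Because the ranking only compares scores, a plain cast still orders values below 128 correctly, but the unsigned form keeps both the ordering and the displayed numbers consistent across the whole 0..255 range.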

Run the app

In Android Studio, run a Gradle sync so that the build system can find your files.

After running a Gradle sync, select the play button to start the build and install process as before.

You can hold the power and volume-down buttons together to take a screenshot.

Test the updated app by pointing the camera at different pictures of flowers to see whether they are classified correctly.

How does it work?

Now that the app is running, examine the TensorFlow Lite specific code.

TensorFlow-Android AAR

This app uses a pre-compiled TFLite Android Archive (AAR). This AAR is hosted on jcenter.

The following lines in the module's build.gradle file include the newest version of the AAR, from the TensorFlow Bintray Maven repository, in the project.

    repositories {
        maven {
            url 'https://google.bintray.com/tensorflow'
        }
    }

    dependencies {
        // ...
        compile 'org.tensorflow:tensorflow-lite:+'
    }

Use the following block to instruct the Android Asset Packaging Tool not to compress .lite or .tflite assets.

This is important because the .lite file will be memory-mapped, and memory mapping does not work when the file is compressed.

    android {
        aaptOptions {
            noCompress "tflite"
            noCompress "lite"
        }
    }

Using the TFLite Java API

The code that interfaces with TFLite is all contained in ImageClassifier.java.

Setup

The first block of interest is the constructor for ImageClassifier:

    ImageClassifier(Activity activity) throws IOException {
        tflite = new Interpreter(loadModelFile(activity));
        labelList = loadLabelList(activity);
        imgData =
            ByteBuffer.allocateDirect(
                4 * DIM_BATCH_SIZE * DIM_IMG_SIZE_X * DIM_IMG_SIZE_Y * DIM_PIXEL_SIZE);
        imgData.order(ByteOrder.nativeOrder());
        labelProbArray = new float[1][labelList.size()];
        Log.d(TAG, "Created a Tensorflow Lite Image Classifier.");
    }

A few lines are of particular interest.

The following line creates the TFLite interpreter:

    tflite = new Interpreter(loadModelFile(activity));

This line instantiates a TFLite interpreter. The interpreter works similarly to a tf.Session (for those familiar with TensorFlow outside of TFLite).

You pass the interpreter a MappedByteBuffer that contains the model.

The local function loadModelFile creates a MappedByteBuffer containing the activity's graph.lite asset file.
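To make the memory-mapping step more concrete, here is a minimal sketch that maps an ordinary file into a read-only MappedByteBuffer using only the standard library. It is not the app's loadModelFile: on Android the model comes from the app's assets, while this sketch assumes a plain file path, and the class and method names are hypothetical.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

/** Sketch: memory-mapping a model file from a regular path (not an Android asset). */
final class ModelFileMapper {
    /** Maps the whole file read-only; the mapping stays valid after the channel closes. */
    static MappedByteBuffer mapReadOnly(Path modelPath) {
        try (FileChannel channel = FileChannel.open(modelPath, StandardOpenOption.READ)) {
            return channel.map(FileChannel.MapMode.READ_ONLY, 0, channel.size());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Writes placeholder bytes to a temp file, maps it, and returns the mapped size. */
    static int mapPlaceholderFile(int byteCount) {
        try {
            Path temp = Files.createTempFile("placeholder-model", ".lite");
            temp.toFile().deleteOnExit();
            Files.write(temp, new byte[byteCount]); // stand-in for real model bytes
            return mapReadOnly(temp).capacity();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("mapped bytes: " + mapPlaceholderFile(16));
    }
}
```

Mapping the file avoids copying its contents onto the Java heap, which is also why the asset has to be stored uncompressed in the APK.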

The following lines create the input data buffer:

    imgData = ByteBuffer.allocateDirect(
        4 * DIM_BATCH_SIZE * DIM_IMG_SIZE_X * DIM_IMG_SIZE_Y * DIM_PIXEL_SIZE);

This byte buffer is sized to contain the image data once it has been converted to float.

The interpreter can accept float arrays directly as input, but the ByteBuffer is more efficient because it avoids extra copies in the interpreter.

The following lines load the label list and create the output buffer:

    labelList = loadLabelList(activity);
    //...
    labelProbArray = new float[1][labelList.size()];

The output buffer is a float array with one element for each label, where the model will write the output probabilities.
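The relationship between the label file and the output array can be sketched as follows. This assumes the label file holds exactly one label per line, in the same order as the model's outputs. The class and method names are hypothetical, and the app's own loadLabelList reads from its assets rather than from an arbitrary Reader.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.Reader;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;

/** Sketch: loading labels and sizing the output array to match. */
final class LabelLoading {
    /** Reads one label per line, preserving order so indices match model outputs. */
    static List<String> readLabels(Reader source) {
        List<String> labels = new ArrayList<>();
        try (BufferedReader reader = new BufferedReader(source)) {
            String line;
            while ((line = reader.readLine()) != null) {
                labels.add(line);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return labels;
    }

    /** Creates a [1][labelCount] output array: one batch entry, one score per label. */
    static float[][] createOutput(List<String> labels) {
        return new float[1][labels.size()];
    }

    public static void main(String[] args) {
        List<String> labels = readLabels(new StringReader("daisy\nrose\ntulip\n"));
        float[][] output = createOutput(labels);
        System.out.println(labels.size() + " labels, " + output[0].length + " output slots");
    }
}
```

Keeping the array length tied to the label count means that index i in the output always refers to label i in the list.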

Run the model

The second block of interest is the classifyFrame method.

This method takes a Bitmap as input, runs the model, and returns the text to print in the app.

    String classifyFrame(Bitmap bitmap) {
        // ...
        convertBitmapToByteBuffer(bitmap);
        // ...
        tflite.run(imgData, labelProbArray);
        // ...
        String textToShow = printTopKLabels();
        // ...
    }

This method does three things. First, it converts and copies the input Bitmap into the imgData ByteBuffer for input to the model.

Then it calls the interpreter's run method, passing the input buffer and the output array as arguments. The interpreter sets the values in the output array to the probability calculated for each class. Finally, it calls printTopKLabels() to build the text that is shown in the app.

The input and output nodes are defined by the arguments to the toco conversion step that created the .lite model file earlier.
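The final step, selecting the highest-scoring labels from the output array, can be sketched on its own with a bounded priority queue, similar in spirit to what printTopKLabels() does. This version is a standalone illustration with hypothetical names. It takes float scores directly and returns the entries instead of formatting text.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.PriorityQueue;

/** Sketch: keeping the k best (label, score) pairs with a min-heap. */
final class TopKLabels {
    /** Returns up to k entries, ordered from highest to lowest score. */
    static List<Map.Entry<String, Float>> topK(List<String> labels, float[] scores, int k) {
        // Min-heap on score: the weakest candidate is always at the head.
        PriorityQueue<Map.Entry<String, Float>> queue =
            new PriorityQueue<>(k + 1, (a, b) -> Float.compare(a.getValue(), b.getValue()));
        for (int i = 0; i < labels.size(); ++i) {
            queue.add(new AbstractMap.SimpleEntry<>(labels.get(i), Float.valueOf(scores[i])));
            if (queue.size() > k) {
                queue.poll(); // drop the current lowest score
            }
        }
        // Polling yields ascending scores, so insert at the front to reverse the order.
        List<Map.Entry<String, Float>> best = new ArrayList<>();
        while (!queue.isEmpty()) {
            best.add(0, queue.poll());
        }
        return best;
    }

    public static void main(String[] args) {
        List<String> labels = Arrays.asList("daisy", "rose", "tulip");
        float[] scores = {0.1f, 0.7f, 0.2f};
        for (Map.Entry<String, Float> entry : topK(labels, scores, 2)) {
            System.out.println(entry.getKey() + ": " + entry.getValue());
        }
    }
}
```

Capping the queue at k entries keeps the work proportional to the number of labels times log k, instead of sorting every score on each frame.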

What's next

You have now completed a walkthrough of an Android flower classification app that uses an Edge model. You tested an image classification app before modifying it and getting sample annotations. You then examined the TensorFlow Lite specific code to understand the underlying functionality.

- Learn more about TFLite from the official documentation and the code repository.

- Try the quantized version of this demo app to see a more efficient model in a smaller package.

- Try other TFLite-ready models, including a speech hotword detector and an on-device version of smart reply.

- Learn more about TensorFlow in general with the TensorFlow getting started documentation.