Bring Your Own IP addresses: the secret to Bitly’s shortened cloud migration

Shailesh Shukla

Vice President and General Manager, Networking, Google Cloud

Editor’s note: The cutover method described in this post is no longer supported. Visit the documentation for the latest on BYOIP.

Enterprises want to move their applications to the cloud, but worry about having to swap their IP addresses for ones from their cloud provider. We hear from our customers that managing the migration of IP addresses can be one of the most challenging aspects of a cloud migration for network administrators.

Here at Google Cloud, we now allow you to Bring Your Own IP (BYOIP) addresses to Google’s network infrastructure across all 24 of our regions, making us the first cloud provider to offer this feature globally. By bringing over your own IP addresses, you can accelerate your migration while minimizing downtime and significantly reduce your networking infrastructure costs. See the launch video.

With Google Cloud, your BYOIP prefixes can be broken into blocks as small as 16 addresses (/28), can be distributed to any region, and can also be used for global load balancers, creating more flexibility with the resources you already have. You can also advertise the IP addresses you bring to Google Cloud globally, to all peers.
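To make the /28 granularity concrete, here is a minimal sketch of the address arithmetic using Python's standard `ipaddress` module. It assumes a hypothetical /24 prefix, `203.0.113.0/24`, which is reserved for documentation (RFC 5737), and it only computes sub-blocks locally; it does not call any Google Cloud API or provision anything.

```python
import ipaddress

# Hypothetical prefix: 203.0.113.0/24 is a documentation-only range
# (RFC 5737). Substitute the block you actually own.
byoip_prefix = ipaddress.ip_network("203.0.113.0/24")

# Split the prefix into /28 sub-blocks, the smallest size mentioned above.
blocks = list(byoip_prefix.subnets(new_prefix=28))

for block in blocks:
    # Each /28 spans 2**(32 - 28) = 16 addresses.
    print(block, block.num_addresses)

print(len(blocks), "blocks of", blocks[0].num_addresses, "addresses each")
```

A /24 therefore yields sixteen /28 blocks, from `203.0.113.0/28` through `203.0.113.240/28`, each of which could in principle be placed in a different region.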

Already, BYOIP is helping accelerate enterprises’ migration to Google Cloud. Bitly is a link management platform that empowers businesses of every size to embed short, branded call-to-action links in their communications. Founded in 2008 and based in New York City, Bitly shortens billions of links a year and is used by tens of thousands of enterprise customers and small businesses around the world, helping them to maximize the impact of every digital initiative.

Bitly had long wanted to move to the cloud and build a multi-region architecture, but until we introduced BYOIP, no cloud provider offered what they needed. They required this feature for two main reasons:

Hard-coded IPs delaying migration. Bitly customers with custom domains point their DNS records directly at Bitly’s IP addresses. Without BYOIP, every one of those customers would have had to update their DNS entries from Bitly IP addresses to Google IP addresses, a very disruptive change for them, and Bitly’s migration could not be complete until every customer had made it.

Costs of legacy networking infrastructure. Without BYOIP, Bitly would also have had to maintain a network edge in a separate colocation facility to house legacy IP addresses still tied to the facility, continuing to incur that cost until all the IP addresses were brought to Google Cloud.

Bitly began migrating to Google Cloud about a year ago, starting with their backend. Since then, they’ve worked with us to develop BYOIP and accelerate their migration into our cloud. In doing so, they also minimized downtime and significantly reduced the networking infrastructure costs of maintaining their public IP addresses in their on-premises data centers.

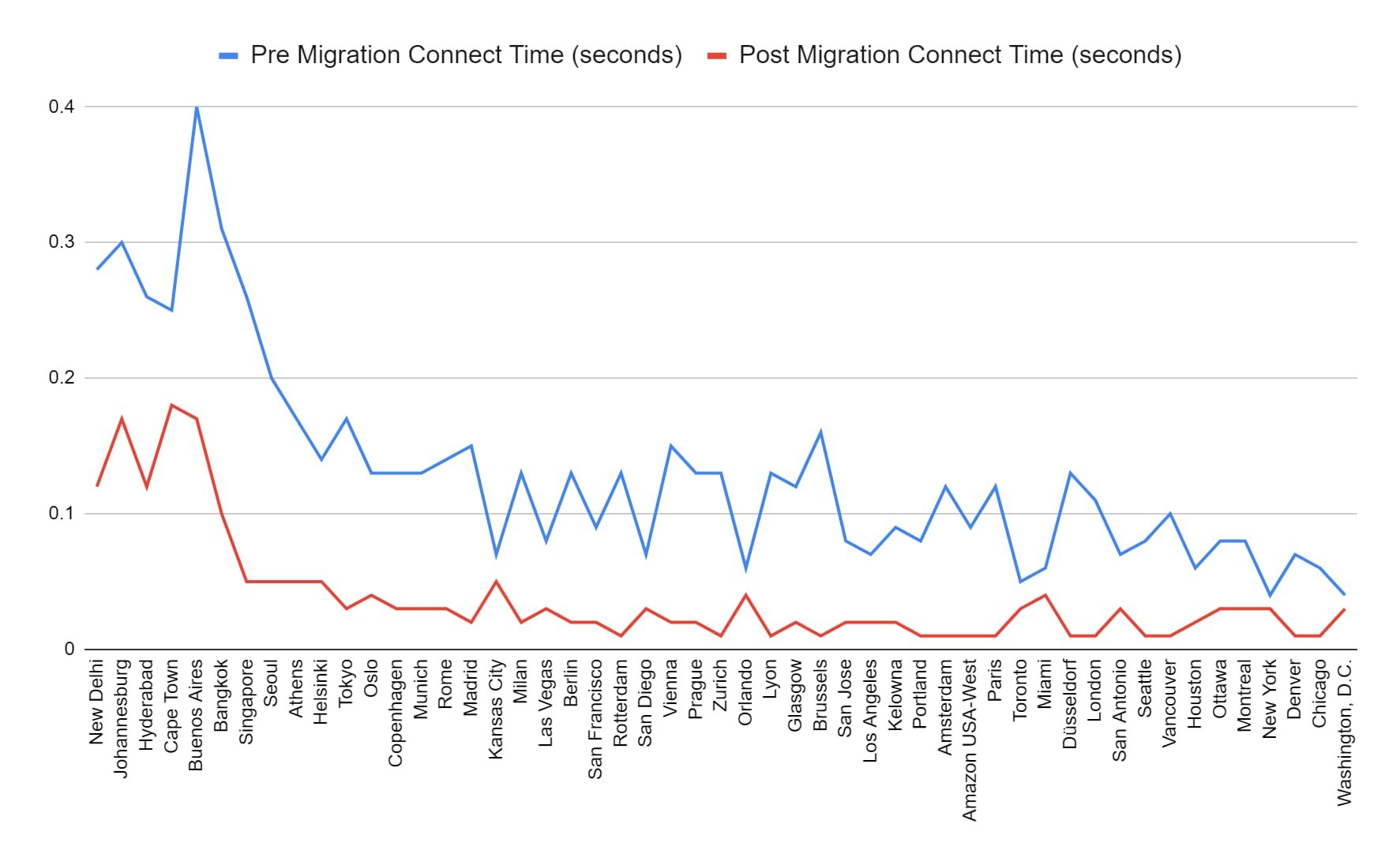

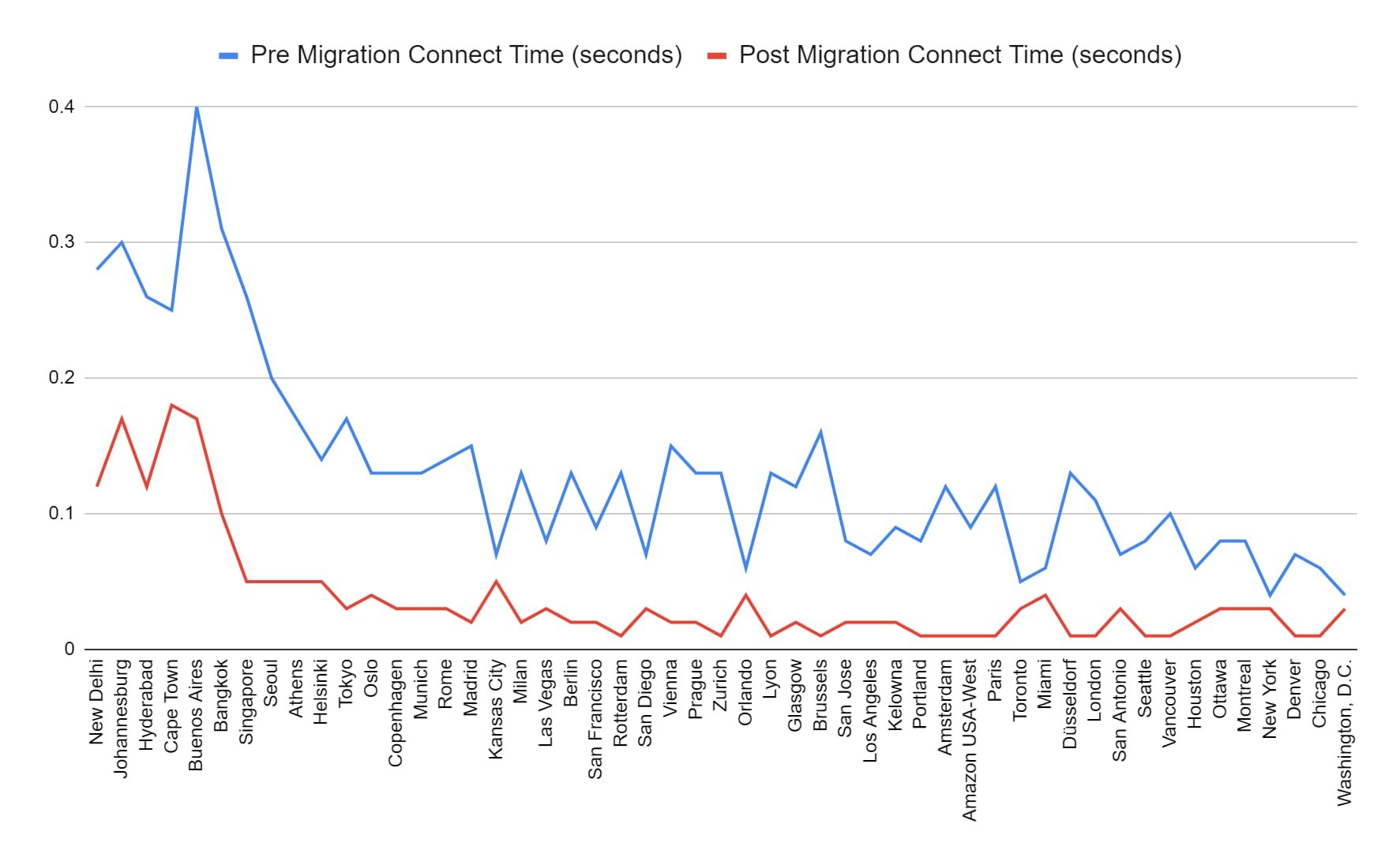

By moving to Google Cloud, Bitly saw another significant benefit: improved performance from Google’s global network infrastructure, including up to a 50% decrease in client-to-service latency across resolve time, connection time, and download time.

“By bringing our own IP addresses to Google Cloud, we moved our applications without requiring our customers to change their IP address whitelists, minimizing risk, downtime, and toil during migration.” - Russell Holbrook, VP of Engineering, Bitly

Let’s connect

BYOIP is just the latest example of how we’re working to give you the right options to connect your business to Google Cloud. Let us know how you plan to use this new networking feature and what capabilities you’d like in the future. You can learn more about GCP’s cloud networking portfolio online and reach us at gcp-networking@google.com.