| title | description | author | tags | date_published |
|---|---|---|---|---|
| Ingress with NGINX controller on Google Kubernetes Engine | Learn how to deploy the NGINX Ingress Controller on Google Kubernetes Engine using Helm. | ameer00 | Google Kubernetes Engine, Kubernetes, Ingress, NGINX, NGINX Ingress Controller, Helm | 2018-02-12 |

Ameer Abbas | Solutions Architect | Google

Contributed by Google employees.

This guide explains how to deploy the NGINX Ingress Controller on Google Kubernetes Engine.

This tutorial shows examples of public and private GKE clusters.

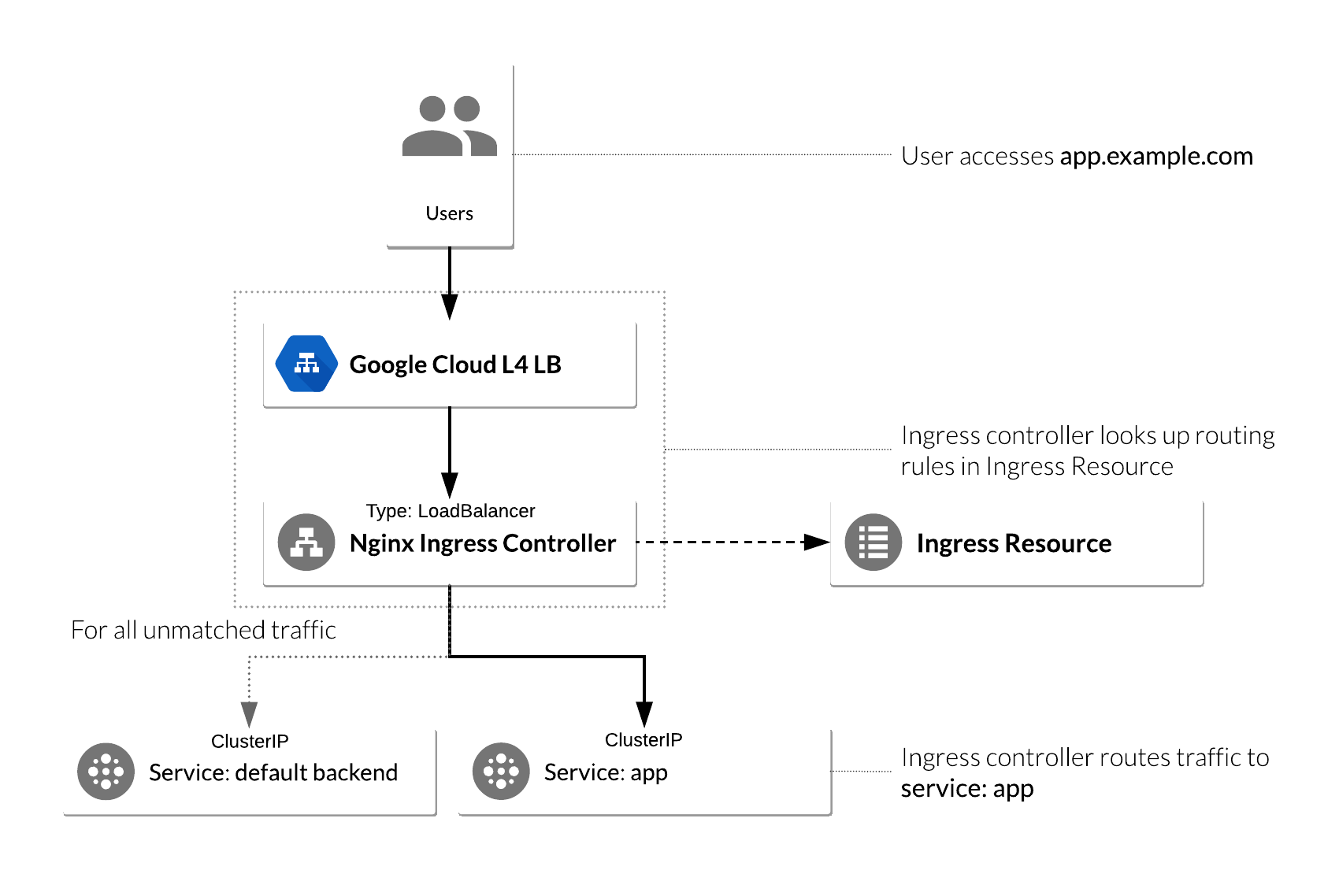

In Kubernetes, Ingress allows external users and client applications access to HTTP services. Ingress consists of two components. Ingress Resource is a collection of rules for the inbound traffic to reach Services. These are Layer 7 (L7) rules that allow hostnames (and optionally paths) to be directed to specific Services in Kubernetes. The second component is the Ingress Controller which acts upon the rules set by the Ingress Resource, typically through an HTTP or L7 load balancer. It is vital that both pieces are properly configured to route traffic from an outside client to a Kubernetes Service.

NGINX is a popular choice for an Ingress Controller for a variety of features:

- WebSocket, which allows you to load balance WebSocket applications.

- SSL Services, which allows you to load balance HTTPS applications.

- Rewrites, which allows you to rewrite the URI of a request before sending it to the application.

- Session Persistence (NGINX Plus only), which guarantees that all the requests from the same client are always passed to the same backend container.

- Support for JWTs (NGINX Plus only), which allows NGINX Plus to authenticate requests by validating JSON Web Tokens (JWTs).

The following diagram shows the architecture described above:

This tutorial illustrates how to set up a Deployment in Kubernetes with an Ingress Resource using NGINX as the Ingress Controller to route and load-balance traffic from external clients to the Deployment.

This tutorial explains how to accomplish the following:

- Create a Kubernetes Deployment.

- Deploy NGINX Ingress Controller with Helm.

- Set up an Ingress Resource object for the Deployment.

- Deploy a simple Kubernetes web application Deployment.

- Deploy NGINX Ingress Controller using the stable Helm chart.

- Deploy an Ingress Resource for the application that uses NGINX Ingress as the controller.

- Test NGINX Ingress functionality by accessing the Google Cloud L4 (TCP/UDP) load balancer frontend IP address and ensuring that it can reach the web application.

This tutorial uses billable components of Google Cloud, including the following:

- Google Kubernetes Engine

- Cloud Load Balancing

Use the pricing calculator to generate a cost estimate based on your projected usage.

- Create a public Google Kubernetes Engine cluster:

      gcloud container clusters create gke-public \
        --zone=us-central1-f \
        --enable-ip-alias \
        --num-nodes=2

In order to provide external connectivity to GKE private clusters, you need to create a Cloud NAT gateway.

- Create and reserve an external IP address for the NAT gateway:

      gcloud compute addresses create us-east1-nat-ip \
        --region=us-east1

- Create a Cloud NAT gateway for the private GKE cluster:

      gcloud compute routers create rtr-us-east1 \
        --network=default \
        --region us-east1

      gcloud compute routers nats create nat-gw-us-east1 \
        --router=rtr-us-east1 \
        --region us-east1 \
        --nat-external-ip-pool=us-east1-nat-ip \
        --nat-all-subnet-ip-ranges \
        --enable-logging

For private GKE clusters with a private API server endpoint, you must specify an authorized list of source IP addresses from which you will access the private GKE cluster. In this tutorial, you use Cloud Shell.

- Get the public IP address of your Cloud Shell session:

      export CLOUDSHELL_IP=$(dig +short myip.opendns.com @resolver1.opendns.com)

  Note: The Cloud Shell public IP address might change if your session is interrupted and you open a new Cloud Shell session.

- Create a firewall rule that allows Pod-to-Pod and Pod-to-API server communication:

      gcloud compute firewall-rules create all-pods-and-master-ipv4-cidrs \
        --network default \
        --allow all \
        --direction INGRESS \
        --source-ranges 10.0.0.0/8,172.16.2.0/28

- Create a private GKE cluster:

      gcloud container clusters create gke-private \
        --zone=us-east1-b \
        --num-nodes "2" \
        --enable-ip-alias \
        --enable-private-nodes \
        --master-ipv4-cidr=172.16.2.0/28 \
        --enable-master-authorized-networks \
        --master-authorized-networks $CLOUDSHELL_IP/32
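Because `--master-authorized-networks` receives whatever `dig` returned, it can help to sanity-check the value first. The following is a minimal sketch, not part of the original tutorial: `is_ipv4` is a hypothetical helper that only checks the dotted-quad shape and the octet range.

```shell
# Sketch: check that a string looks like a dotted-quad IPv4 address.
# is_ipv4 is a hypothetical helper, not part of gcloud or Cloud Shell.
is_ipv4() {
  # Reject empty input and anything containing characters other than digits and dots.
  case "$1" in
    '' | *[!0-9.]*) return 1 ;;
  esac
  # Require exactly four dot-separated groups of one to three digits.
  printf '%s\n' "$1" | grep -Eq '^([0-9]{1,3}\.){3}[0-9]{1,3}$' || return 1
  # Split on dots and reject octets above 255.
  old_ifs=$IFS
  IFS=.
  set -- $1
  IFS=$old_ifs
  for octet in "$@"; do
    [ "$octet" -le 255 ] || return 1
  done
  return 0
}

# Usage (assumes CLOUDSHELL_IP was exported as in the step above):
#   is_ipv4 "$CLOUDSHELL_IP" || echo "unexpected value: $CLOUDSHELL_IP" >&2
```

If the check fails, for example because the DNS lookup returned nothing, rerun the `dig` command before creating the cluster.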

- Connect to both clusters to generate entries in the kubeconfig file:

      gcloud container clusters get-credentials gke-public --zone us-central1-f
      gcloud container clusters get-credentials gke-private --zone us-east1-b

  You use the kubeconfig file to authenticate to clusters by creating a user and context for each cluster. After you generate entries in the kubeconfig file, you can quickly switch context between clusters.
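Switching between the two clusters can be sketched as follows. The contexts that `get-credentials` writes are named `gke_PROJECT_LOCATION_CLUSTER`; `gke_context_name` is a hypothetical helper, and `my-project` is a placeholder for your own project ID.

```shell
# Sketch: build the kubeconfig context name that
# `gcloud container clusters get-credentials` generates for a GKE cluster.
# gke_context_name is a hypothetical helper; arguments are project, location, cluster.
gke_context_name() {
  printf 'gke_%s_%s_%s\n' "$1" "$2" "$3"
}

# Usage ("my-project" is a placeholder project ID):
#   kubectl config get-contexts
#   kubectl config use-context "$(gke_context_name my-project us-central1-f gke-public)"
#   kubectl config use-context "$(gke_context_name my-project us-east1-b gke-private)"
```

Running `kubectl config current-context` afterwards shows which cluster subsequent commands will target.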

The following steps are identical for both the public and private GKE cluster. Ensure that you are using the correct cluster context before proceeding.

Helm is a tool that streamlines installing and managing Kubernetes applications and resources. Think of it like apt, yum, or homebrew for Kubernetes. The use of Helm charts is recommended, because they are maintained and typically kept up to date by the Kubernetes community.

Helm 3 comes pre-installed in Cloud Shell.

Verify the version of the Helm client in Cloud Shell:

helm version

The output should look like this:

version.BuildInfo{Version:"v3.5.0", GitCommit:"fe51cd1e31e6a202cba7dead9552a6d418ded79a", GitTreeState:"clean", GoVersion:"go1.15.6"}

Ensure that the version is v3.x.y.

You can install the helm client in Cloud Shell by following the instructions here.
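The version check can also be scripted. This is a small sketch, assuming the short version string printed by `helm version --short` (for example `v3.5.0+gfe51cd1`); `is_helm3` is a hypothetical helper name.

```shell
# Sketch: succeed only if a Helm version string starts with "v3.".
# is_helm3 is a hypothetical helper, not a Helm command.
is_helm3() {
  case "$1" in
    v3.*) return 0 ;;
    *)    return 1 ;;
  esac
}

# Usage:
#   is_helm3 "$(helm version --short)" || echo "Helm 3 is required" >&2
```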

You can deploy a simple web-based application from the Google Cloud repository. You use this application as the backend for the Ingress.

- From Cloud Shell, run the following command:

      kubectl create deployment hello-app --image=gcr.io/google-samples/hello-app:1.0

  This gives the following output:

      deployment.apps/hello-app created

- Expose the `hello-app` Deployment as a Service:

      kubectl expose deployment hello-app --port=8080 --target-port=8080

  This gives the following output:

      service/hello-app exposed

Kubernetes allows administrators to bring their own Ingress Controllers instead of using the cloud provider's built-in offering.

The NGINX controller, deployed as a Service, must be exposed for external access. This is done using Service `type: LoadBalancer` on the NGINX controller Service. On Google Kubernetes Engine, this creates a Google Cloud Network (TCP/IP) load balancer with the NGINX controller Service as a backend. Google Cloud also creates the appropriate firewall rules within the Service's VPC network to allow web HTTP(S) traffic to the load balancer frontend IP address.

Here is a basic flow of the NGINX ingress solution on Google Kubernetes Engine.

- Before you deploy the NGINX Ingress Helm chart to the GKE cluster, add the `ingress-nginx` Helm repository in Cloud Shell:

      helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginx
      helm repo update

- Deploy an NGINX controller Deployment and Service by running the following command:

      helm install nginx-ingress ingress-nginx/ingress-nginx

- Verify that the `nginx-ingress-ingress-nginx-controller` Deployment and Service are deployed to the GKE cluster:

      kubectl get deployment nginx-ingress-ingress-nginx-controller
      kubectl get service nginx-ingress-ingress-nginx-controller

  The output should look like this:

      # Deployment
      NAME                                     READY   UP-TO-DATE   AVAILABLE   AGE
      nginx-ingress-ingress-nginx-controller   1/1     1            1           13m

      # Service
      NAME                                     TYPE           CLUSTER-IP    EXTERNAL-IP   PORT(S)                      AGE
      nginx-ingress-ingress-nginx-controller   LoadBalancer   10.7.255.93   <pending>     80:30381/TCP,443:32105/TCP   13m

- Wait a few moments while the Google Cloud L4 load balancer gets deployed, and then confirm that the `nginx-ingress-ingress-nginx-controller` Service has an external IP address associated with it:

      kubectl get service nginx-ingress-ingress-nginx-controller

  You may need to run this command a few times until an `EXTERNAL-IP` value is present. You should see the following:

      NAME                                     TYPE           CLUSTER-IP    EXTERNAL-IP     PORT(S)                      AGE
      nginx-ingress-ingress-nginx-controller   LoadBalancer   10.7.255.93   34.122.88.204   80:30381/TCP,443:32105/TCP   13m

- Export the `EXTERNAL-IP` of the NGINX ingress controller in a variable to be used later:

      export NGINX_INGRESS_IP=$(kubectl get service nginx-ingress-ingress-nginx-controller -ojson | jq -r '.status.loadBalancer.ingress[].ip')

- Ensure that you have the correct IP address value stored in the `$NGINX_INGRESS_IP` variable:

      echo $NGINX_INGRESS_IP

  The output should look like this, though the IP address may differ:

      34.122.88.204
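Instead of rerunning `kubectl get service` by hand until the external IP address appears, the wait can be scripted. The following is a rough sketch under stated assumptions: `wait_for_output` is a hypothetical helper that reruns any command until it prints something, and the usage line reads the address with kubectl's `-o jsonpath` output option rather than `jq`.

```shell
# Sketch: rerun a command until it prints non-empty output, or give up.
# wait_for_output is a hypothetical helper.
# Arguments: ATTEMPTS DELAY_SECONDS COMMAND [ARGS...]
wait_for_output() {
  attempts=$1
  delay=$2
  shift 2
  i=0
  while [ "$i" -lt "$attempts" ]; do
    # Treat a failing command the same as empty output and retry.
    output=$("$@" 2>/dev/null) || output=""
    if [ -n "$output" ]; then
      printf '%s\n' "$output"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  return 1
}

# Usage (30 attempts, 10 seconds apart):
#   export NGINX_INGRESS_IP=$(wait_for_output 30 10 \
#     kubectl get service nginx-ingress-ingress-nginx-controller \
#     -o jsonpath='{.status.loadBalancer.ingress[0].ip}')
```

The attempt count and delay are arbitrary choices; adjust them to how long the load balancer typically takes to provision in your project.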

An Ingress Resource object is a collection of L7 rules for routing inbound traffic to Kubernetes Services. Multiple rules can be defined in one Ingress Resource, or they can be split up into multiple Ingress Resource manifests. The Ingress Resource also determines which controller to use to serve traffic. This can be set with an annotation, `kubernetes.io/ingress.class`, in the metadata section of the Ingress Resource. For the NGINX controller, use the value `nginx`:

    annotations:
      kubernetes.io/ingress.class: nginx

On Google Kubernetes Engine, if no annotation is defined under the metadata section, the Ingress Resource uses the Google Cloud GCLB L7 load balancer to serve traffic. This method can also be forced by setting the annotation's value to `gce`:

    annotations:
      kubernetes.io/ingress.class: gce

Deploying multiple Ingress controllers of different types (for example, both `nginx` and `gce`) and not specifying a class annotation will result in all controllers fighting to satisfy the Ingress, and all of them racing to update the Ingress status field in confusing ways. For more information, see Multiple Ingress controllers.

- Create a simple Ingress Resource YAML file that uses the NGINX Ingress Controller and has one path rule defined:

      cat <<EOF > ingress-resource.yaml
      apiVersion: networking.k8s.io/v1
      kind: Ingress
      metadata:
        name: ingress-resource
        annotations:
          kubernetes.io/ingress.class: "nginx"
          nginx.ingress.kubernetes.io/ssl-redirect: "false"
      spec:
        rules:
        - host: "$NGINX_INGRESS_IP.nip.io"
          http:
            paths:
            - pathType: Prefix
              path: "/hello"
              backend:
                service:
                  name: hello-app
                  port:
                    number: 8080
      EOF

  The `kind: Ingress` line dictates that this is an Ingress Resource object. This Ingress Resource defines an inbound L7 rule for path `/hello` to service `hello-app` on port 8080.

  The `host` specification of the `Ingress` resource should match the FQDN of the Service. The NGINX Ingress Controller requires the use of a Fully Qualified Domain Name (FQDN) in that line, so you can't use the contents of the `$NGINX_INGRESS_IP` variable directly. Services such as nip.io return an IP address for a hostname with an embedded IP address (that is, querying `[IP_ADDRESS].nip.io` returns `[IP_ADDRESS]`), so you can use that instead. In production, you can replace the `host` specification in the `Ingress` resource with your real FQDN for the Service.

- Apply the configuration:

      kubectl apply -f ingress-resource.yaml

- Verify that the Ingress Resource has been created:

      kubectl get ingress ingress-resource

  The IP address for the Ingress Resource will not be defined right away, so you may need to wait a few moments for the `ADDRESS` field to get populated. The IP address should match the contents of the `$NGINX_INGRESS_IP` variable.

  The output should look like the following:

      NAME               CLASS    HOSTS                  ADDRESS   PORTS   AGE
      ingress-resource   <none>   34.122.88.204.nip.io             80      10s

  Note that the `HOSTS` value in the output is set to the FQDN specified in the `host` entry of the YAML file.
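The `networking.k8s.io/v1` API also provides a `spec.ingressClassName` field, which Kubernetes has favored over the `kubernetes.io/ingress.class` annotation since version 1.18. The sketch below is an alternative to the manifest above, not a replacement the tutorial prescribes. It assumes the Helm chart created an IngressClass named `nginx`, which you can confirm with `kubectl get ingressclass`; `render_ingress` is a hypothetical helper.

```shell
# Sketch: print an Ingress manifest for the given IP address, selecting the
# controller with spec.ingressClassName instead of the class annotation.
# render_ingress is a hypothetical helper; its only argument is the IP address.
render_ingress() {
  cat <<EOF
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: ingress-resource
  annotations:
    nginx.ingress.kubernetes.io/ssl-redirect: "false"
spec:
  ingressClassName: nginx   # assumes an IngressClass named "nginx" exists
  rules:
  - host: "$1.nip.io"
    http:
      paths:
      - pathType: Prefix
        path: "/hello"
        backend:
          service:
            name: hello-app
            port:
              number: 8080
EOF
}

# Usage:
#   kubectl get ingressclass
#   render_ingress "$NGINX_INGRESS_IP" | kubectl apply -f -
```

With this variant, the `CLASS` column of `kubectl get ingress` should show the class name rather than `<none>`.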

You should now be able to access the web application by going to the following URL, substituting the value of the $NGINX_INGRESS_IP variable:

http://$NGINX_INGRESS_IP.nip.io/hello

You can also access the hello-app using the curl command in Cloud Shell.

curl http://$NGINX_INGRESS_IP.nip.io/hello

The output should look like the following.

Hello, world!

Version: 1.0.0

Hostname: hello-app-7bfb5ff469-q5wpr
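For a scripted check, the response body can be tested for the greeting shown above. This is a minimal sketch; `is_hello_response` is a hypothetical helper that reads the body on standard input.

```shell
# Sketch: succeed only if the text on stdin contains the hello-app greeting.
# is_hello_response is a hypothetical helper.
is_hello_response() {
  grep -q 'Hello, world!'
}

# Usage (curl's -f flag makes HTTP errors such as 404 fail the pipeline input):
#   curl -fsS "http://$NGINX_INGRESS_IP.nip.io/hello" | is_hello_response \
#     && echo "Ingress is routing to hello-app"
```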

From Cloud Shell, run the following commands:

- Delete the Ingress Resource object:

      kubectl delete -f ingress-resource.yaml

  You should see the following:

      ingress.networking.k8s.io "ingress-resource" deleted

- Delete the NGINX Ingress Helm chart:

      helm del nginx-ingress

  You should see the following:

      release "nginx-ingress" uninstalled

- Delete the app:

      kubectl delete service hello-app
      kubectl delete deployment hello-app

  You should see the following:

      service "hello-app" deleted
      deployment.apps "hello-app" deleted

- Delete the Google Kubernetes Engine clusters:

      gcloud container clusters delete gke-public --zone=us-central1-f --async
      gcloud container clusters delete gke-private --zone=us-east1-b

- Delete the `ingress-resource.yaml` file:

      rm ingress-resource.yaml
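The steps above don't remove the Cloud NAT gateway, Cloud Router, reserved IP address, or firewall rule created for the private cluster. The following sketch prints the matching delete commands so that you can review them first. The resource names are the ones used earlier in this tutorial; `print_network_cleanup` is a hypothetical helper, and `--quiet` skips gcloud's confirmation prompts.

```shell
# Sketch: print (not run) the commands that delete the networking resources
# created for the private cluster. print_network_cleanup is a hypothetical helper.
print_network_cleanup() {
  region=us-east1
  cat <<EOF
gcloud compute routers nats delete nat-gw-us-east1 --router=rtr-us-east1 --region=$region --quiet
gcloud compute routers delete rtr-us-east1 --region=$region --quiet
gcloud compute addresses delete us-east1-nat-ip --region=$region --quiet
gcloud compute firewall-rules delete all-pods-and-master-ipv4-cidrs --quiet
EOF
}

# Usage:
#   print_network_cleanup        # review the commands
#   print_network_cleanup | sh   # run them
```

The NAT gateway is deleted before the reserved address because an address that is still in use can't be released.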