Preparing PostgreSQL for migration with Database Migration Service

Gabe Weiss

Developer Advocate

Last November, we made relational database migration easier for MySQL users with our public preview of Database Migration Service (DMS). Today, we’ve officially made the product generally available and brought the same easy-to-use migration functionality to PostgreSQL.

The thing I’ve appreciated the most about diving deep with DMS has been that it just works. Once you get your source instance and database(s) prepared, and establish the connectivity between source and destination, doing the migration is all handled. When it's all finished, cutting over to using your Cloud SQL instance as your application's primary database is as simple as clicking a button in the DMS UI.

Not to downplay the difficulty in database prep, or connectivity. I wrote a thorough blog post walking through the various connectivity options for DMS in great detail. Network topology can be incredibly complicated, and connecting two machines securely through the internet while serving an application with thousands or millions of users is anything but simple.

Today, I want to take a moment with you to cover preparing your source PostgreSQL instance and database(s) for migration using DMS and some gotchas I found so you don’t have to.

I’ll start by saying, the documentation and in-product UI guidance are both excellent for DMS. If you’re familiar with setting up replication infrastructure for PostgreSQL, you’re probably good to jump in, and check in on the documentation if needed. Having said that, it’s documentation, so here I’ll try to pull together in one place everything you need to prepare your source PostgreSQL instance and database(s).

Step one: be sure your source instance version is supported. The current list of supported versions can be found on the documentation page I linked above.

Next up is a schema piece: DMS doesn’t support migrating tables that don’t have a primary key. Starting a migration against a database that has tables without primary keys will still succeed, and those tables will still be created on the destination, but their data won’t be brought over. So if you want to bring the data over from a table that doesn’t have a primary key, you have a few options:

- You’ll have to add a primary key prior to starting the migration.

- You’ll need to bring the data over yourself after the initial migration. Keep in mind, of course, that if you bring the data over yourself, even if you maintain the connection, DMS won’t replicate data for that table moving forward.

- You can export the table from the source instance and import it into the new instance.

- Finally, you can create a table with the same schema as the one that doesn’t have a primary key, give it a primary key (use a sequence generator to autogenerate the key), and copy the source data into it. Then do the migration. As part of the migration, DMS will create the non-PK table; it just won’t copy the data over. Then you can copy the data from the migrated primary key table into it, and finally delete the primary key table once you’ve verified the data. It sounds complicated, but as long as any new rows inserted into the non-primary key table also go into the primary key copy, it ensures that table’s data is captured at the same point of migration as the rest of your data. If you’re worried about the data in that table changing during the migration, you can copy the data over right before promoting the destination instance to minimize that window.
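To see which tables are affected before you start, a catalog query along these lines can help. This is a minimal sketch, not something taken from the DMS documentation: it lists ordinary tables with no primary key constraint, then walks through the first and last options from the list above against a hypothetical table, public.events. The table name, the events_pk copy, and the migration_id column are all made up for illustration.

```sql
-- List ordinary tables that have no primary key constraint.
-- System schemas are skipped; pglogical's own schema is skipped too (my choice).
SELECT n.nspname AS schema_name,
       c.relname AS table_name
FROM pg_class c
JOIN pg_namespace n ON n.oid = c.relnamespace
WHERE c.relkind = 'r'
  AND n.nspname NOT IN ('information_schema', 'pglogical')
  AND n.nspname NOT LIKE 'pg\_%'
  AND NOT EXISTS (
        SELECT 1
        FROM pg_constraint con
        WHERE con.conrelid = c.oid
          AND con.contype = 'p'
      )
ORDER BY 1, 2;

-- First option: add a surrogate key in place (hypothetical table).
-- ALTER TABLE public.events ADD COLUMN id bigserial PRIMARY KEY;

-- Last option: build a keyed copy on the source before migrating.
CREATE TABLE public.events_pk (LIKE public.events INCLUDING DEFAULTS);
ALTER TABLE public.events_pk ADD COLUMN migration_id bigserial PRIMARY KEY;

-- migration_id is the last column, so it is filled from its sequence default.
INSERT INTO public.events_pk SELECT * FROM public.events;

-- Later, on the destination, copy back into the empty non-PK table using an
-- explicit column list that leaves out migration_id, verify, then:
-- DROP TABLE public.events_pk;
```

Adding a column with a sequence default rewrites the table, so on a large, busy table the keyed copy may be the gentler route.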

DMS relies on pglogical for the migration work. This means that the pglogical extension has to be installed on each of the databases you want to migrate. Instructions for installing pglogical on your instance and database(s) can be found here. If you’re running on Linux, the repo’s installation page is helpful. To be sure I took one for the team, I decided to see how bad it might be to migrate a PostgreSQL database installed with Homebrew from macOS to Cloud SQL. Turns out, shockingly not too bad! Installing pglogical from source:

1) Clone GitHub repo

2) Run make

2a) Get compilation error because postgres.h not found

3) Find where Homebrew installed Postgres, find include folder, add all include folders to C_INCLUDE_PATH

4) Run make again, built!

5) Run sudo make install because pglogical documentation said I might need it (side note: don’t pre-optimize!)

5a) Fails without any good messages

6) Run make install

7) Great success! Can’t quite test success yet, since now the instance and database(s) have to be configured to use pglogical and replication.
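One thing you can check at this point is whether make install put the extension files where the server looks for them. A small sketch, assuming you can already connect with psql: pg_available_extensions lists every extension whose control file the server can find, whether or not it has been created in a database yet.

```sql
-- A row here means the server can see pglogical's control file.
-- installed_version stays NULL until CREATE EXTENSION is run in this database.
SELECT name, default_version, installed_version
FROM pg_available_extensions
WHERE name = 'pglogical';
```

No row at all suggests the files landed in a different PostgreSQL installation than the one you are connected to.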

The next piece is pretty straightforward if you’ve done replication in PostgreSQL before. There are some configuration variables on the instance you need to set in order for the replication to succeed. There are two main ways to change these values. You can either adjust them while the instance is running with ALTER SYSTEM SET <variable> TO <value>; calls, or you can change them in the configuration file, postgresql.conf. Either way, you’ll need to restart the instance for the changes to take effect.

If you want to change it in the configuration file, but don’t know where it lives, generally it lives in the data directory for the database. If you only have the credentials to log in to your database but don’t know where it lives, you can run SHOW data_directory once connected to the database and it’ll give you the location of the data directory.
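For reference, here is a short sketch of the lookups you can run from a psql session. SHOW config_file is an addition of mine for the case where the configuration file does not sit in the data directory; the pg_settings query shows the current value of each variable this post deals with.

```sql
-- Where the data directory and the active configuration file live.
SHOW data_directory;
SHOW config_file;

-- Current values of the replication-related settings.
-- context = 'postmaster' means a change only takes effect after a restart.
SELECT name, setting, context
FROM pg_settings
WHERE name IN ('wal_level',
               'max_replication_slots',
               'max_wal_senders',
               'max_worker_processes',
               'shared_preload_libraries')
ORDER BY name;
```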

The variables you need to set are:

wal_level = logical # Needs to be set to logical

max_replication_slots = n # Number varies, see here for details

max_wal_senders = n # Should be max_replication_slots plus the number of actively connected replicas.

max_worker_processes = n # Should be set to the number of databases being replicated

shared_preload_libraries = pglogical

Note that the shared_preload_libraries variable is a comma-delimited list. Before you set it, check whether other libraries are already being preloaded and include them in the new value; otherwise you could unload libraries your setup requires and cause issues with the database.
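As a sketch of the ALTER SYSTEM route: check what is already preloaded, then set the list with the existing entries kept. The pg_stat_statements entry is purely hypothetical, standing in for whatever SHOW returns on your instance.

```sql
-- See what is preloaded today so nothing gets dropped by accident.
SHOW shared_preload_libraries;

-- Turn on logical decoding.
ALTER SYSTEM SET wal_level TO 'logical';

-- Keep the existing library (hypothetical here) and add pglogical.
-- Each library is passed as its own list item rather than as one
-- string with a comma inside it.
ALTER SYSTEM SET shared_preload_libraries TO 'pg_stat_statements', 'pglogical';
```

The numeric settings follow the same ALTER SYSTEM SET <variable> TO <value>; form, and all of them still need a restart to take effect.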

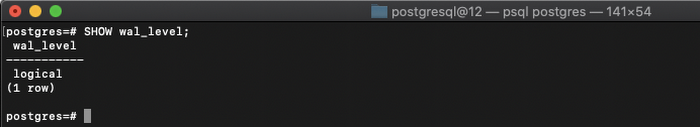

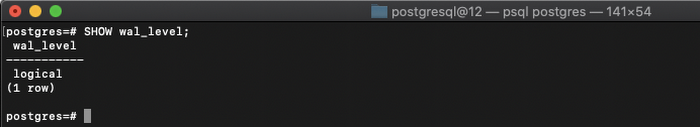

To verify a value, run SHOW <variable>, e.g. SHOW wal_level should show logical.

Quick example time:

Note that these numbers are for the DMS load only. If you already have these values set for other reasons, you need to take that into account. For example, if you’ve got max_worker_processes set to 8 to handle higher parallel querying, then you may want to add more on top to accommodate the replication to avoid impacting performance.

Case 1: You’re just doing a migration and immediately promoting the Cloud SQL instance. There aren’t any other replicas set up on the source, and you only have a single database you’re migrating over. Then you’d want to set the values to:

# Technically we only need 1 for Cloud SQL subscriber and the default is

# set to 10, so you could just leave it alone. This is just illustrating

# that you could set it lower without any issues

max_replication_slots = 3

# Equal to max_replication_slots + 1 because we’ll only have one

# replica connected to the source instance

max_wal_senders = 4

# Technically we only need 1 here because we’re only bringing over

# one database, but always a good practice to have one as a buffer

# just in case there’s an issue so it doesn’t rely on

# only the one process.

max_worker_processes = 2

Case 2: Your on-prem instance is already set up with 5 replication slots to handle other replication you have in place, and there are 4 databases you want to migrate to the Cloud. Then you’d want to set the variables up like:

# 5 for existing subscribers + 4 for the source databases, since pglogical

# requires 1 slot for each database

max_replication_slots = 9

# Equal to max_replication_slots + 6 because say we have 5 existing replicas,

# and we’ll be adding one more replica for DMS doing the migration

max_wal_senders = 15

# 4 databases we’re migrating, plus an extra as a buffer just in case

max_worker_processes = 5
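If you are not sure what your starting numbers are, the two catalog views below give a baseline, and the last query simply spells out the Case 2 arithmetic. This is a sketch for orientation only; the constants are the ones from Case 2, not recommendations.

```sql
-- Replication slots that already exist on the source.
SELECT count(*) AS existing_slots FROM pg_replication_slots;

-- Replicas currently connected to the source.
SELECT count(*) AS connected_replicas FROM pg_stat_replication;

-- Case 2 worked through: 5 existing slots, 4 databases, 5 replicas + DMS.
SELECT 5 + 4             AS max_replication_slots,  -- 9
       (5 + 4) + (5 + 1) AS max_wal_senders,        -- 15
       4 + 1             AS max_worker_processes;   -- 5
```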

Once you have your variables all set, now’s the time to restart your PostgreSQL instance, whether you changed them in the config file or with ALTER SYSTEM.

You can verify it worked by logging into the instance and running CREATE EXTENSION pglogical on one of the databases you’re planning on replicating over. Once that works, you’ll need to connect to every database you want to be replicating and run that command on each one. And while you’re there on each database, you need to grant certain privileges to the user that you specified in the Define a source step when creating the migration. These grants need to happen on each database you’re replicating as well as the postgres database:

-- on all schemas (aside from the information schema and schemas starting with "pg_") on each database to migrate, including pglogical

GRANT USAGE ON SCHEMA <SCHEMA> TO <USER>;

-- on all databases to get replication information from source databases.

GRANT SELECT ON ALL TABLES IN SCHEMA pglogical TO <USER>;

-- on all schemas (aside from the information schema and schemas starting with "pg_") on each database to migrate, including pglogical

GRANT SELECT ON ALL TABLES IN SCHEMA <SCHEMA> TO <USER>;

-- on all schemas (aside from the information schema and schemas starting with "pg_") on each database to migrate, including pglogical

GRANT SELECT ON ALL SEQUENCES IN SCHEMA <SCHEMA> TO <USER>;

-- We’re not handling it in this blog post, but if you happen to be trying to replicate

-- from RDS, it would be GRANT rds_replication TO <USER>.

ALTER USER <USER> WITH REPLICATION;
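Running those grants by hand for every schema gets tedious, so here is one way the whole step could be scripted for a single database. Treat it as a sketch under assumptions: dms_user is a hypothetical name for your migration user, and you are connected as a role that is allowed to grant on every schema. Run it once per database being migrated and once on the postgres database.

```sql
-- Create the extension first so the pglogical schema exists for the loop.
CREATE EXTENSION IF NOT EXISTS pglogical;

DO $$
DECLARE
  s text;
  migration_user constant text := 'dms_user';  -- hypothetical user name
BEGIN
  -- Every schema except information_schema and the pg_* schemas.
  FOR s IN
    SELECT nspname
    FROM pg_namespace
    WHERE nspname <> 'information_schema'
      AND nspname NOT LIKE 'pg\_%'
  LOOP
    EXECUTE format('GRANT USAGE ON SCHEMA %I TO %I', s, migration_user);
    EXECUTE format('GRANT SELECT ON ALL TABLES IN SCHEMA %I TO %I', s, migration_user);
    EXECUTE format('GRANT SELECT ON ALL SEQUENCES IN SCHEMA %I TO %I', s, migration_user);
  END LOOP;
END
$$;

-- Role-level attribute, so this only needs to run once per instance.
ALTER USER dms_user WITH REPLICATION;
```

The %I placeholders quote schema and role names as identifiers, which keeps the loop safe for mixed-case or oddly named schemas.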

If your source database is earlier than version 9.6, there’s an extra step to follow because before that, PostgreSQL didn’t have replication delay monitoring by default. This is needed because DMS uses this to be able to watch if replication lag becomes too high. I’m not going to cover it in detail here since all versions before 9.6 are currently end of life, but if you need to do this, there’s information on what you need to do here.

Congratulations! Your PostgreSQL instance and database(s) are fully configured and ready for DMS! Another nicety of DMS is that when you’re all configured and ready to go, there’s a connectivity/configuration test in the UI that will tell you if everything is configured correctly or not before you hit the final “do it” button.

As I mentioned, I cover a lot of the nitty-gritty details around connectivity between your source database and the Cloud SQL instance in the blog post I linked at the top of this post. It covers MySQL, so I’ll add a pitfall I ran into with PostgreSQL here before I leave you.

Be sure to remember, if you haven’t already, to enable your database to listen for and accept connections from non-localhost locations. There are two pieces to this. First, you need to change the listen_addresses variable in your postgresql.conf file. It defaults to localhost, which might work depending on how you’re managing connection to the database from your application, but won’t work for the migration. Second, you need to modify the pg_hba.conf file to grant your migration user access to your local database from the Cloud. If you miss either of these, DMS is really good about giving you clear error messages from the PostgreSQL instance telling you that you messed up. Ask me how I know.
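Both pieces can be inspected from a psql session. The sketch below rests on a few assumptions: '*' listens on every interface, which you may well want to narrow to specific addresses; the pg_hba.conf line in the comment uses a hypothetical user and a documentation-range IP; and the pg_hba_file_rules view needs PostgreSQL 10 or later.

```sql
-- What the server listens on now, and where pg_hba.conf lives.
SHOW listen_addresses;
SHOW hba_file;

-- Listen on all interfaces (takes effect after a restart).
ALTER SYSTEM SET listen_addresses TO '*';

-- A pg_hba.conf entry of roughly this shape admits the migration user
-- (hypothetical user name and source address):
--   host  all  dms_user  203.0.113.10/32  md5

-- pg_hba.conf edits only need a reload, not a restart.
SELECT pg_reload_conf();

-- PostgreSQL 10+: confirm the file parses; error is NULL for valid lines.
SELECT line_number, type, database, user_name, address, auth_method, error
FROM pg_hba_file_rules;
```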

And there we have it. Everything in one place to get you ready to go bringing your PostgreSQL database into Cloud SQL. If you have any questions, suggestions or complaints, please reach out to me on Twitter, my DMs are open! Thanks for reading.